Thinking out loud

Where we share the insights, questions, and observations that shape our approach.

REPAIR Act and State Laws: What automotive OEMs must prepare for

Right to Repair is becoming a key issue in the U.S., with the REPAIR Act (H.R. 906) at the center. This proposed federal law would require OEMs to give vehicle owners and independent repair shops access to vehicle-generated data and critical repair tools.

The goal? Protect consumer choice and promote fair competition in the automotive repair market, preventing manufacturers from monopolizing repairs.

For OEMs, it means growing pressure to open up data and tools that were once tightly controlled. The Act could fundamentally change how repairs are managed, forcing companies to rethink their business models to avoid risks and stay competitive.

We’ll walk you through the REPAIR Act’s key provisions and practical steps automotive OEMs can take to adapt early and avoid compliance risks.

What’s inside the REPAIR Act (H.R. 906)

The REPAIR Act (H.R. 906), also known as the Right to Equitable and Professional Auto Industry Repair Act, aims to give consumers and independent repair shops access to vehicle data, tools, and parts that are crucial for repairs and maintenance.

Its goal is to level the playing field between manufacturers and independent repairers while protecting consumer choice. This could mean significant changes in how OEMs manage vehicle data and repair services.

REPAIR Act timeline – where are we now

The REPAIR Act (H.R. 906) was introduced in February 2023 and forwarded to the full committee in November 2023.

As of January 3, 2025, the bill had not moved beyond the full committee stage, and it was marked "dead" because the 118th Congress ended before it could pass. But the message remains clear - Right to Repair isn’t going away. The growing momentum behind repair access and data rights is reshaping the conversation.

REPAIR Act provisions

Which obligations would the REPAIR Act place on manufacturers?

1) Access to vehicle-generated data

- Direct data access: OEMs would be required to provide vehicle owners and their repairers with real-time, wireless access to vehicle-generated data. This includes diagnostics, service, and operational data.

- Standardized access platform: OEMs must develop a common platform for accessing telematics data to provide consistent and easy access across all vehicle models.

2) Standardized repair information and tools

- Fair access: Critical repair manuals, tools, software, and other resources must be made available to consumers and independent repair shops at fair and reasonable costs.

- No barriers: OEMs cannot restrict access to essential repair information. The aim is to prevent them from monopolizing repair services.

3) Ban on OEM part restrictions

- Aftermarket options: The Act prohibits manufacturers from requiring the use of OEM parts for non-warranty repairs. Consumers can choose aftermarket parts and independent service providers.

- Fair competition: This provision supports competition by allowing aftermarket parts manufacturers to offer compatible alternatives without interference.

4) Cybersecurity and data protection

- Security standards: The National Highway Traffic Safety Administration (NHTSA) would set standards to balance data access with cybersecurity.

- Safe access: OEMs can apply cryptographic protections for telematics systems and over-the-air (OTA) updates, provided they do not block legal access to data for independent repairers and vehicle owners.

These provisions go beyond theory and will directly affect how OEMs handle repairs and manage data access. Even more challenging? The existing patchwork of state laws that already demand similar access makes compliance tricky.

Complex regulatory landscape: How Right to Repair influences automotive OEMs

The regulatory environment for the Right to Repair in the U.S. is becoming increasingly complex, with state-level laws already in effect and a potential nationwide federal law still pending. This evolving framework presents both immediate and long-term challenges for automotive OEMs, requiring them to navigate overlapping requirements and conflicting standards.

State-level laws: A growing patchwork

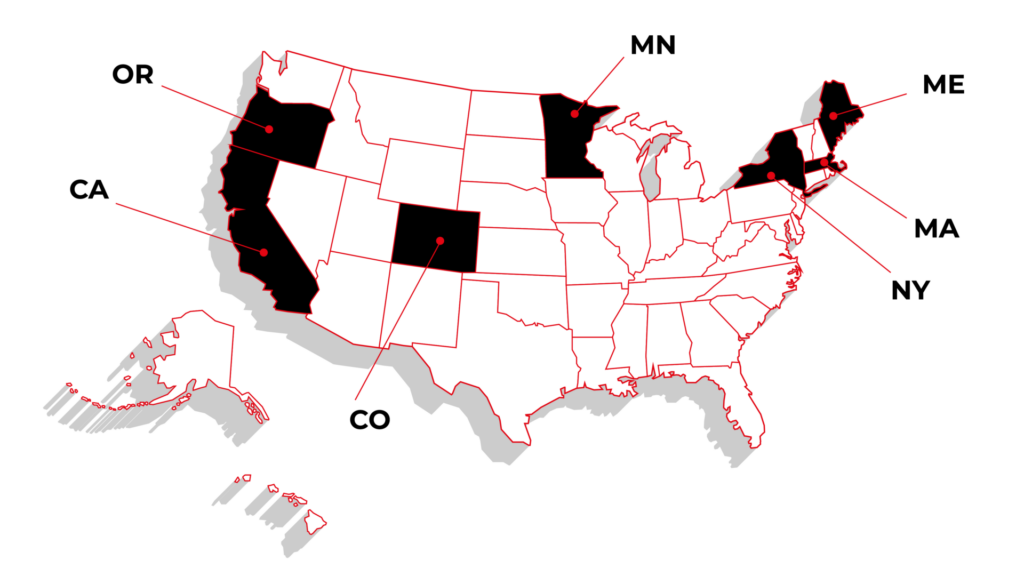

As of February 2025, several states have enacted comprehensive Right to Repair laws.

Massachusetts and Maine have laws explicitly targeting automotive manufacturers. (Automakers have sued to block the law’s implementation in Maine.)

These regulations require manufacturers to provide vehicle owners and independent repairers with access to diagnostic and repair information, as well as a standardized telematics platform.

Other states like California, Minnesota, New York, Colorado, and Oregon have focused on consumer electronics or agricultural equipment without directly impacting automotive OEMs.

However, the broader push for repair rights means automotive manufacturers cannot ignore the implications of this trend.

Additionally, as of early 2025, 20 states had active Right to Repair legislation, reflecting the momentum behind this movement. While most of these bills remain under consideration, they highlight the growing pressure for more open access to repair information and vehicle data.

Federal vs. state regulations: Compliance challenges

The pending federal REPAIR Act (H.R. 906) aims to create a unified national framework for the Right to Repair, focusing on vehicle-generated data and repair tools. However, until it becomes law, OEMs must comply with varying state laws that could contradict or go beyond future federal requirements.

Key scenarios:

- If the REPAIR Act includes a preemption clause, federal law will override conflicting state laws, providing a single set of rules for OEMs.

- If preemption is not included, OEMs will face a dual compliance burden, adhering to both federal and state-specific requirements.

This uncertainty complicates planning and increases the risk of non-compliance, making it essential for OEMs to prepare now.

Global pressures: The EU's Right to Repair mandates

The U.S. isn’t the only region focusing on the Right to Repair. European Union regulations are setting global standards for OEMs selling internationally.

- European Court of Justice Ruling (October 2023): Automotive manufacturers cannot limit repair data access under cybersecurity claims, expanding rights for independent repairers.

- EU Data Act (applicable from September 12, 2025): Requires OEMs to provide third-party access to vehicle-generated data, making open data compliance mandatory for the EU market.

For OEMs operating internationally, aligning early with these standards is a smart move. While the 2024 Right to Repair Directive doesn’t directly target vehicles, it reflects the broader trend toward increased data access and repairability.

How automotive OEMs should prepare for the Right to Repair (Even without a federal law)

Waiting is risky. Whether or not the REPAIR Act becomes law, holding out for final outcomes could lead to costly adjustments and missed opportunities. Here’s where to start:

1. Develop a standardized vehicle data access platform

Why: Regulations require open and transparent data-sharing for diagnostics and updates. Without a standardized platform, compliance becomes difficult.

How: Focus on building a secure platform that gives vehicle owners and independent repair shops transparent access to the necessary data.

2. Provide open access to repair information and tools

Why: Some states already require OEMs to provide critical repair information and tools at fair prices. This trend is likely to expand.

How: Start creating a centralized repository for repair manuals, diagnostic tools, and other key resources.

3. Strengthen cybersecurity without restricting repair access

Why: Protecting data is critical, but legitimate repairers need safe entry points for service.

How: Develop security protocols that protect key vehicle functions without blocking legitimate access. This means securing software updates and repair-related data while allowing repairers safe entry points for diagnostics and service.

4. Improve OTA software update capabilities

Why: Having strong OTA capabilities helps comply with future regulations requiring real-time access and updates.

How: Upgrade your current OTA systems to allow secure updates and diagnostics. Include tools authorized third parties can use for updates and software repairs.

5. Transition to modular and repairable product design

Why: Designing products for easier repair reduces costs and improves compliance.

How: Shift toward using modular components that can be replaced individually. Avoid locking parts to specific manufacturers, as some states have banned this practice. Modular designs also support longer spare part availability, which many laws will require.

6. Align supply chain and warranty systems with Right-to-Repair laws

Why: Warranty terms and parts availability are common regulatory targets.

How: Make spare parts available for several years after the sale of a vehicle. Update warranty policies to allow third-party repairs and non-OEM parts without penalty.

7. Monitor regulations and adapt quickly

Why: The regulatory landscape is evolving rapidly. Staying informed about new laws and adjusting plans early will help avoid costly last-minute changes.

How: Track new laws and build flexible systems that can easily adjust as regulations change.

How an IT enabler helps OEMs prepare for Right to Repair

Managing compliance can feel overwhelming, but it doesn’t have to disrupt operations. An IT enabler helps manufacturers build systems and processes that meet regulatory demands without adding unnecessary complexity.

Here’s how:

Turning regulations into practical solutions

Right to Repair regulations vary across states and countries. An IT enabler translates these requirements into practical tools, such as systems for managing access to repair data, diagnostics, and tools, to make compliance more manageable.

Building the right technology

OEMs need reliable platforms that allow repairers to access diagnostic data and tools while keeping vehicle systems secure. IT experts develop scalable solutions that work across different models and markets without compromising safety.

Balancing security and access

Access to repair data must be balanced with strong security. IT solutions help protect sensitive vehicle functions while providing authorized repairers with the necessary information.

Keeping operations simple

Compliance shouldn’t add complexity. Automating key processes and streamlining workflows lets internal teams focus on core operations rather than administrative tasks.

Long-term support

Laws and standards evolve. IT partners provide continuous updates and maintenance to keep systems aligned with the latest regulations, reducing the risk of falling behind.

Delivering custom solutions

Every manufacturer has unique needs. Whether it’s updating your warranty system for third-party repairs, improving OTA update capabilities, or adapting your supply chain for spare part availability, custom solutions help you stay compliant and competitive.

At Grape Up, we help OEMs adapt to Right to Repair regulations with practical solutions and long-term support.

We have experience working with automotive, insurance, and financial enterprises, building systems that account for differences in regulations across various states.

Preparing for changes? Contact us today.

From secure diagnostics to repair information management, we provide the expertise and tools to help you stay compliant and ready for what’s next.

The key to ROAI: Why high-quality data is the real engine of AI success

Data might not literally be “the new oil,” but it’s hard to ignore its growing impact on companies' operations. By some estimates, the world will generate over 180 zettabytes of data by the end of 2025. Yet, many organizations still struggle to turn that massive volume into meaningful insights for their AI projects.

According to IBM, poor data quality already costs the US economy alone $3.1 trillion per year - a staggering figure that underscores just how critical proper governance is for any initiative, AI included.

On the flip side, well-prepared data can dramatically boost the accuracy of AI models, shorten the time it takes to get results, and reduce compliance risks. That’s why data quality is increasingly recognized as the biggest factor in an AI project’s success or failure and a key to ROAI.

In this article, we’ll explore why good data practices are so vital for AI performance, what common pitfalls often derail organizations, and how usage transparency can earn customer trust while delivering a real return on AI investment.

Why data quality dictates AI outcomes

An AI model’s accuracy and reliability depend on the breadth, depth, and cleanliness of the data it’s trained on. If critical information is missing, duplicated, or riddled with errors, the model won’t deliver meaningful results, no matter how advanced it is. Poor data quality leads to inaccurate predictions, inefficiencies, and lost opportunities.

For example, when records contain missing values or inconsistencies, AI models generate results that don’t reflect reality. This affects everything from customer recommendations to fraud detection, making AI unreliable in real-world applications. Additionally, poor documentation makes it harder to trace data sources, increasing compliance risks and reducing trust in AI-driven decisions.

This growing awareness has made data governance a top priority across industries as businesses recognize its direct impact on AI performance and long-term value.

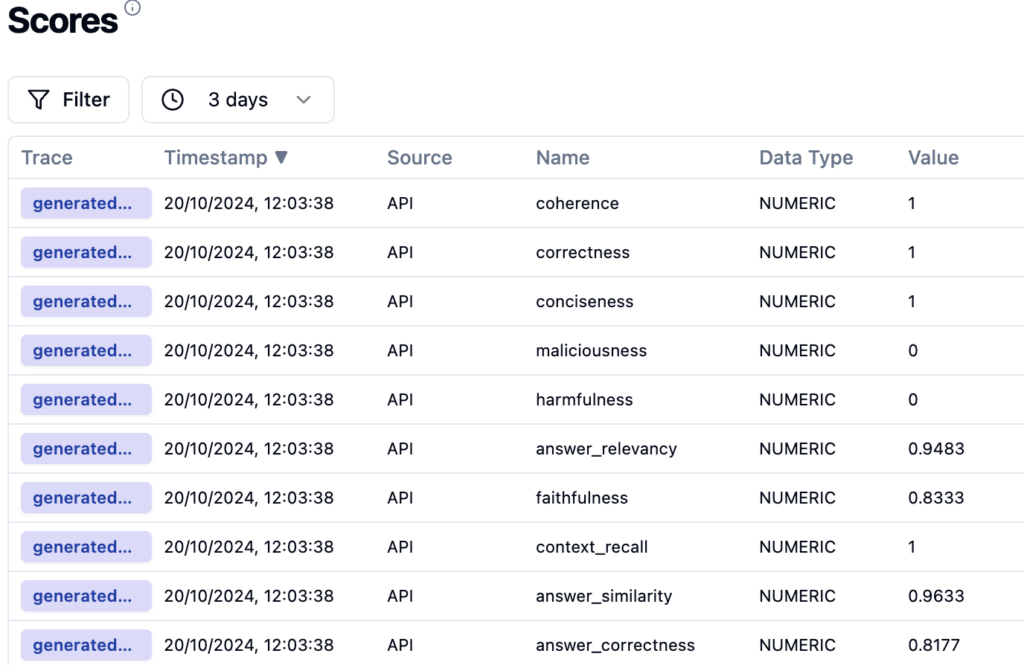

Metrics for success: Tracking the impact of quality data on AI

Even with the right data preparation processes in place, organizations benefit most when they track clear metrics that tie data quality to AI performance. Here are key indicators to consider:

Monitoring these metrics lets organizations gain visibility into how effectively their information supports AI outcomes. The bottom line is that quality data should lead to measurable gains in operational efficiency, predictive accuracy, and overall business value. In other words - it's the key to ROAI.
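As a rough illustration of what tracking such indicators could look like, the sketch below computes two common data quality measures: completeness (the share of required values that are present) and duplicate rate. The function names, field names, and sample records are assumptions made for this example, not metrics prescribed by the article.

```python
from typing import Any


def completeness(records: list[dict[str, Any]], fields: list[str]) -> float:
    """Share of required field values that are present (not None or empty string)."""
    total = len(records) * len(fields)
    if total == 0:
        return 1.0
    filled = sum(
        1 for r in records for f in fields if r.get(f) not in (None, "")
    )
    return filled / total


def duplicate_rate(records: list[dict[str, Any]], key_fields: list[str]) -> float:
    """Share of records that repeat an already-seen key."""
    if not records:
        return 0.0
    keys = [tuple(r.get(f) for f in key_fields) for r in records]
    return 1 - len(set(keys)) / len(keys)


# Hypothetical sample data for illustration only.
records = [
    {"id": 1, "email": "a@example.com", "country": "PL"},
    {"id": 2, "email": "", "country": "US"},
    {"id": 2, "email": "", "country": "US"},
    {"id": 3, "email": "c@example.com", "country": None},
]

print(completeness(records, ["email", "country"]))  # 0.625
print(duplicate_rate(records, ["id"]))              # 0.25
```

Tracked over time and set against model accuracy, numbers like these make it easier to see whether data preparation work is actually paying off.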

However, even with strong data quality controls, many companies struggle with deeper structural issues that impact AI effectiveness.

AI works best with well-prepared data infrastructures

Even the cleanest datasets won’t produce value if data infrastructure issues slow down AI workflows. Without a strong data foundation, teams spend more time fixing errors than training AI models.

Let's first talk about the people - they too are, after all, key to ROAI.

The right talent makes all the difference

Fixing data challenges is as much about people as it is about tools.

- Data engineers make sure AI models work with structured, reliable datasets.

- Data scientists refine data quality, improve model accuracy, and reduce bias.

- AI ethicists help organizations build responsible, fair AI systems.

Companies that invest in data expertise can prevent costly mistakes and instead focus on increasing ROAI.

However, even with the right people, AI development still faces a major roadblock: disorganized, unstructured data.

Disorganized data slows AI development

Businesses generate massive amounts of data from IoT devices, customer interactions, and internal systems. Without proper classification and structure, valuable information gets buried in raw, unprocessed formats. This forces data teams to spend more time cleaning and organizing instead of implementing AI in their operations.

- How to improve it: Standardized pipelines automatically format, sort, and clean data before it reaches AI systems. A well-maintained data catalog makes information easier to locate and use, speeding up development.
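A standardized pipeline of this kind can be sketched in a few lines. The example below is a minimal illustration, assuming hypothetical `email` and `ts` fields and a day/month/year input date format; a production pipeline would typically run on a dedicated data processing framework.

```python
from datetime import datetime


def normalize(record: dict) -> dict:
    """Trim strings, lowercase emails, and convert dates to ISO 8601."""
    out = {k: v.strip() if isinstance(v, str) else v for k, v in record.items()}
    if out.get("email"):
        out["email"] = out["email"].lower()
    if out.get("ts"):
        # Assumed input format for this sketch: DD/MM/YYYY.
        out["ts"] = datetime.strptime(out["ts"], "%d/%m/%Y").date().isoformat()
    return out


def is_valid(record: dict, required: list[str]) -> bool:
    """A record is valid when every required field has a non-empty value."""
    return all(record.get(f) not in (None, "") for f in required)


def deduplicate(records: list[dict], key: str) -> list[dict]:
    """Keep the first record seen for each key value."""
    seen = set()
    unique = []
    for r in records:
        if r.get(key) not in seen:
            seen.add(r.get(key))
            unique.append(r)
    return unique


def run_pipeline(raw: list[dict], required: list[str], key: str) -> list[dict]:
    """Format, filter, and deduplicate records before they reach AI systems."""
    cleaned = [normalize(r) for r in raw]
    valid = [r for r in cleaned if is_valid(r, required)]
    return deduplicate(valid, key)


# Hypothetical raw input for illustration only.
raw = [
    {"email": " A@Example.com ", "ts": "03/02/2025"},
    {"email": "a@example.com", "ts": "03/02/2025"},
    {"email": "", "ts": "04/02/2025"},
]
print(run_pipeline(raw, required=["email", "ts"], key="email"))
```

Running the same steps in the same order for every source is what makes the output predictable for downstream models.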

Older systems struggle with AI workloads

Many legacy systems were not built to process the volume and complexity of modern AI workloads. Slow query speeds, storage limitations, and a lack of integration with AI tools create bottlenecks. These issues make it harder to scale AI projects and get insights when they are needed.

- How to improve it: Upgrading to scalable cloud storage and high-performance computing helps AI process data faster. Moreover, integrating AI-friendly databases improves retrieval speeds and ensures models have access to structured, high-quality inputs.

Beyond upgrading to cloud solutions, businesses are exploring new ways to process and use information.

- Edge computing moves data processing closer to where it’s generated to reduce the need to send large volumes of information to centralized systems. This is critical in IoT applications, real-time analytics, and AI models that require fast decision-making.

- Federated learning allows AI models to train across decentralized datasets without sharing raw data between locations. This improves security and is particularly valuable in regulated industries like healthcare and finance, where data privacy is a priority.
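To make the federated learning idea concrete, here is a deliberately small sketch of federated averaging: each client fits a one-parameter linear model (`y = w * x`) on its own data, and only the resulting weight, never the raw data, is sent back and averaged. The client datasets, learning rate, and round counts are assumptions chosen for illustration; real deployments use dedicated frameworks and add protections such as secure aggregation.

```python
def local_update(w: float, data: list[tuple[float, float]],
                 lr: float = 0.05, epochs: int = 5) -> float:
    """Run a few gradient descent steps on one client's private data."""
    for _ in range(epochs):
        # Gradient of the mean squared error for the model y = w * x.
        grad = sum(2 * (w * x - y) * x for x, y in data) / len(data)
        w -= lr * grad
    return w


def federated_average(local_weights: list[float], sizes: list[int]) -> float:
    """Combine client weights, weighted by how much data each client holds."""
    total = sum(sizes)
    return sum(w * n for w, n in zip(local_weights, sizes)) / total


def train(clients: list[list[tuple[float, float]]], rounds: int = 20) -> float:
    """Each round: clients train locally, the server averages the weights."""
    w = 0.0
    for _ in range(rounds):
        local_weights = [local_update(w, data) for data in clients]
        w = federated_average(local_weights, [len(d) for d in clients])
    return w


# Hypothetical client datasets, both drawn from y = 3 * x.
client_a = [(1.0, 3.0), (2.0, 6.0)]
client_b = [(3.0, 9.0)]

w = train([client_a, client_b])
print(round(w, 4))  # 3.0
```

The server only ever sees weights, which is why the approach suits settings where raw records cannot leave their original location.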

Siloed data limits AI accuracy

Even when companies maintain high-quality data, access restrictions and fragmented storage can prevent teams from using it effectively. AI models trained on incomplete datasets miss essential context, which in turn leads to biased or inaccurate predictions. When different departments store data in separate formats or systems, AI cannot generate a full picture of the business.

- How to improve it: Breaking down data silos allows AI to learn from complete datasets. Role-based access controls provide teams with the right level of data availability without compromising security or compliance.
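Role-based access control can be as simple as a mapping from roles to permitted actions and visible fields. The sketch below assumes hypothetical role names, resources, and record fields; in practice these rules usually live in an identity provider or a data platform's policy layer rather than in application code.

```python
# Hypothetical roles and permissions for illustration only.
ROLE_PERMISSIONS = {
    "data_engineer": {"raw_events:read", "raw_events:write", "features:read"},
    "data_scientist": {"features:read", "models:write"},
    "analyst": {"reports:read"},
}

# Fields each role is allowed to see in a customer record.
VISIBLE_FIELDS = {
    "data_engineer": {"customer_id", "email", "purchase_total"},
    "data_scientist": {"customer_id", "purchase_total"},
}


def can_access(role: str, resource: str, action: str) -> bool:
    """Check whether a role may perform an action on a resource."""
    return f"{resource}:{action}" in ROLE_PERMISSIONS.get(role, set())


def redact(role: str, record: dict) -> dict:
    """Return only the fields the role is allowed to see."""
    allowed = VISIBLE_FIELDS.get(role, set())
    return {k: v for k, v in record.items() if k in allowed}


record = {"customer_id": 42, "email": "a@example.com", "purchase_total": 99.5}

print(can_access("data_scientist", "features", "read"))  # True
print(can_access("analyst", "raw_events", "read"))       # False
print(redact("data_scientist", record))  # {'customer_id': 42, 'purchase_total': 99.5}
```

Unknown roles fall back to an empty permission set, so access is denied by default rather than granted by accident.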

Fixing fragmented data systems and modernizing infrastructure is key to ROAI, but technical improvements alone aren’t enough. Trust, compliance, and transparency play just as critical a role in making AI both effective and sustainable.

Transparency, privacy, and security: The trust trifecta

AI relies on responsible data handling. Transparency builds trust and improves outcomes, while privacy and security keep organizations compliant and protect both customers and businesses from unnecessary risks. When these three elements align, people are more willing to share data, AI models become more effective, and companies gain an edge.

Why transparency matters

82% of consumers report being "highly concerned" about how companies collect and use their data, with 57% worrying about data being used beyond its intended purpose. When customers understand what information is collected and why, they’re more comfortable sharing it. This leads to richer datasets, more accurate AI models, and smarter decisions. Internally, transparency helps teams collaborate more effectively by clarifying data sources and reducing duplication.

Privacy and security from the start - a key to ROAI

While transparency is about openness, privacy and security focus on protecting data. Main practices include:

Compliance as a competitive advantage

Clear records and responsible data practices reduce legal risks and allow teams to focus on innovation instead of compliance issues. Customers who feel their privacy is respected are more willing to engage, while strong data practices can also attract partners, investors, and new business opportunities.

Use data as the strategic foundation for AI

The real value of AI comes from turning data into real insights and innovation - but none of that happens without a solid data foundation.

Outdated systems, fragmented records, and governance gaps hold back AI performance. Fixing these issues ensures AI models are faster, smarter, and more reliable.

Are your AI models struggling with data bottlenecks?

Do you need to modernize your data infrastructure to support AI at scale?

We specialize in building, integrating, and optimizing data architectures for AI-driven businesses.

Let’s discuss what’s holding your AI back and how to fix it.

Contact us to explore solutions tailored to your needs.

The foundation for AI success: How to build a strategy to increase ROAI

AI adoption is on the rise, but turning it into real business value is another story. 74% of companies struggle to scale AI initiatives, and only 26% develop the capabilities needed to move beyond proofs of concept. The real question on everyone's mind is: how do you increase ROAI?

One of the biggest hurdles is proving the impact. In 2023, the biggest challenge for businesses was demonstrating AI’s usefulness in real operations. Many companies invest in this technology without a clear plan for how it will drive measurable results.

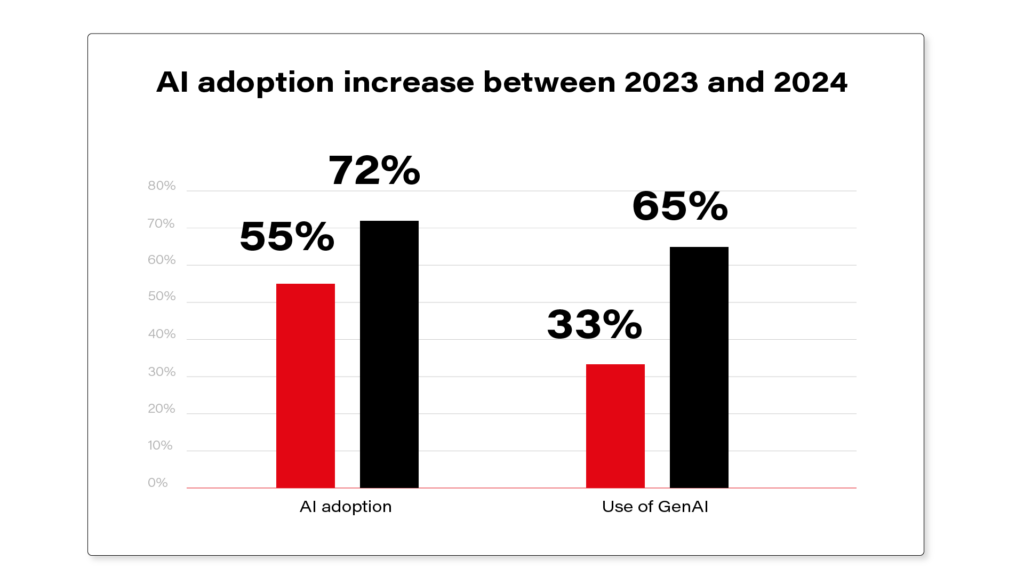

Even with these challenges, the adoption keeps growing. McKinsey's 2024 Global Survey on AI reported that 65% of respondents' organizations are regularly using Generative AI in at least one business function, nearly doubling from 33% in 2023. Businesses know its value, but making artificial intelligence work at scale takes more than just enthusiasm.

Source: https://www.mckinsey.com/capabilities/quantumblack/our-insights/the-state-of-ai

That’s where the right approach makes all the difference. A holistic strategy, strong data infrastructure, and efficient use of talent can help you increase ROAI and turn technology into a competitive advantage. But you need to start with building a foundation for AI investments and implementation first.

Why AI must be aligned with business goals

Too many AI projects fail when companies focus on the technology first instead of the problem it’s meant to solve. Investing in artificial intelligence just because it’s popular leads to expensive pilots that never scale, systems that complicate workflows instead of improving them, and wasted budgets with nothing to show for it.

Start with the problem, not the technology

Before committing resources, leadership needs to ask:

- What’s the goal? Is the priority cutting maintenance costs, making faster decisions, or detecting fraud more accurately? If the objective isn’t clear, neither will the results.

- Is AI even the right solution? Some problems don’t need machine learning. Sometimes, better data management or process improvements do the job just as well, without the complexity or cost of AI.

Choosing AI use cases that deliver real value

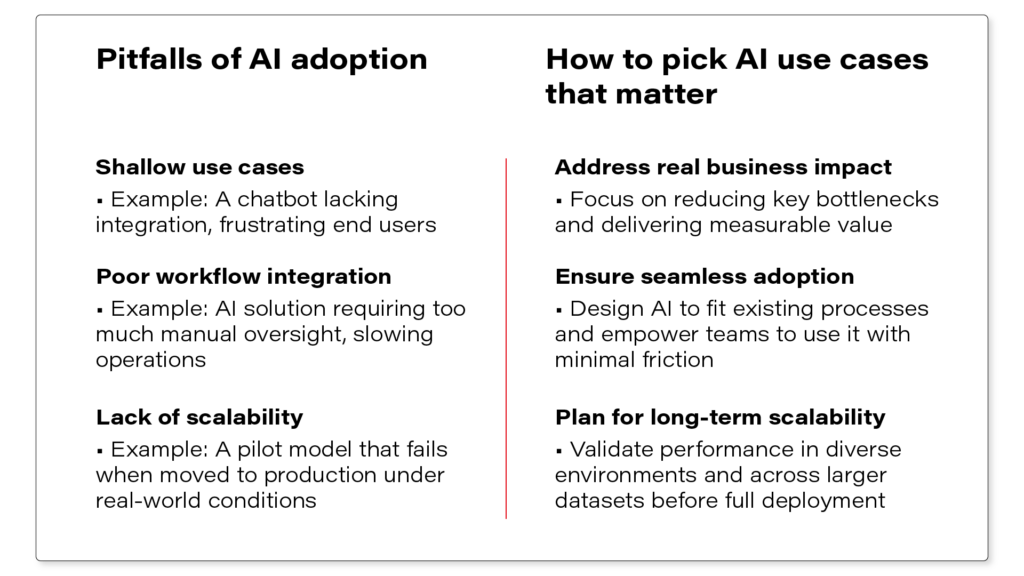

Once AI aligns with business goals, the next challenge is selecting initiatives that generate measurable impact. Companies often waste millions on projects that fail to solve real business problems, can’t scale, or disrupt workflows instead of improving them.

See which factors must align for AI to create tangible business value:

How responsible AI ties back to business results

Responsible AI protects long-term business value by creating systems that are transparent, fair, and aligned with user expectations and regulatory requirements. Organizations that take a proactive approach to AI governance minimize risks while building solutions that are both effective and trusted.

One of the biggest gaps in AI adoption is the lack of consistent oversight. Without regular audits and monitoring, models can drift, introduce bias, or generate unreliable results. Businesses need structured frameworks to keep AI reliable, adaptable, and aligned with real-world conditions. This also means actively managing ethical issues, explainability, and data security to maintain performance and trust.

As regulations evolve, compliance is no longer an afterthought. AI used in critical areas like fraud detection, risk assessment, and automated decision-making requires continuous monitoring to meet regulatory expectations. Companies that embed AI governance from the start avoid operational risks.

Another key challenge is trust. When AI-driven decisions lack transparency, scepticism grows. Users and stakeholders need clear visibility into how AI operates to build confidence. Companies that make decisions transparent and easy to understand improve adoption across their organization, and ultimately increase ROAI.

Measuring AI success and proving ROAI

The real test of AI’s success is whether it improves daily operations and delivers measurable business value. When teams work more efficiently, revenue grows, and risks become easier to manage, the investment is clearly paying off.

Key indicators of AI success

Is AI reducing manual effort? Automating repetitive tasks helps employees focus on more strategic work. If delays still slow operations or fraud detection overwhelms teams with false positives, AI may not be delivering real efficiency. Faster approvals and quicker customer issue resolution indicate AI is making a difference.

Is AI improving financial outcomes? Accurate forecasting cuts waste, and AI-driven pricing boosts profit margins. If automation isn’t lowering operational costs or streamlining workflows, it may not be adding real value.

Is AI strengthening security and compliance? Fraud detection prevents financial losses when it catches real threats without unnecessary disruptions. Compliance automation eases the burden of manual oversight, while AI-driven security reduces the risk of data breaches. If risks remain high, AI may need adjustments.

To prove AI’s return on investment, companies need to establish success criteria upfront, track AI performance over time, and compare different configurations (e.g., Generative AI use cases, LLMs) to confirm the technology delivers cost savings and tangible benefits.

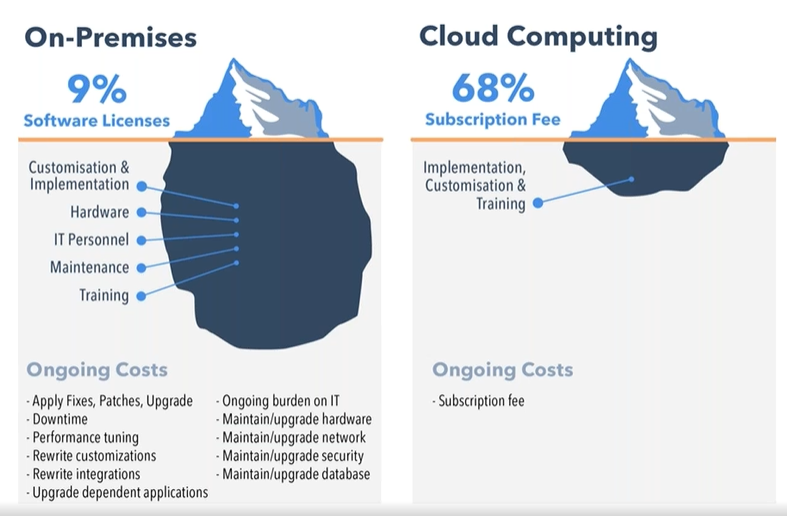

The hidden costs of AI initiatives and the challenge of scaling

Investing in artificial intelligence goes beyond development. Many companies focus on building and implementing models but underestimate the effort required to scale, maintain, and integrate them into existing systems. Costs accumulate over time, and without proper planning, AI projects can stall and budgets can stretch.

One of the highest ongoing costs is data. AI relies on clean, structured information, but collecting, storing, and maintaining it requires continuous effort. Models also need regular updates to remain accurate over time. Fraud tactics change, regulations evolve, and without adjustments systems produce unreliable results, leading to costly mistakes.

This becomes even more challenging when AI moves from a controlled pilot to full-scale implementation. A model that performs well in one department may not integrate easily across an entire organization. Expanding its use often exposes hidden costs, workflow disruptions, and technical limitations that weren’t an issue on a smaller scale.

Scaling AI successfully also requires coordination across different teams. ML engineers refine models, business teams track measurable outcomes, and compliance teams manage regulatory requirements. You need these groups to align early.

AI must also integrate with existing enterprise systems without disrupting workflows, which requires dedicated infrastructure investments. Many legacy IT environments weren’t designed for AI-driven automation, which leads to increased costs for adaptation, cloud migration, and security improvements.

Companies that navigate these challenges effectively see real gains from AI. However, aligning strategy, execution, and scaling AI efficiently isn’t always straightforward. That’s where expert guidance makes a difference.

See how Grape Up helps businesses increase ROAI

Grape Up helps business leaders turn AI from a concept into a practical tool that delivers measurable ROAI by aligning technology with real business needs.

We work with companies to define AI roadmaps, making sure every initiative has a clear purpose and contributes to strategic goals. Our team supports data infrastructure and AI integration, so new solutions fit smoothly into existing systems without adding complexity.

From strategy to execution, Grape Up helps you increase ROAI. Make technology a real business asset adapted for long-term success.

Top 10 AI integration companies to consider in 2025

Artificial Intelligence has evolved from a specialized technology into a fundamental business imperative. However, the initial excitement around GenAI tools has given way to a more nuanced understanding - successful AI adoption requires a comprehensive organizational transformation, not just technological implementation.

This reality has highlighted a critical challenge: finding experienced AI integration partners who can "translate" AI software into genuine business value.

Recent industry analysis reveals a dramatic acceleration in AI adoption. According to McKinsey's latest survey, 72% of organizations now utilize AI solutions, marking a significant increase from 50% in previous years.

Generative AI has emerged as a particular success story, with 65% of organizations reporting regular usage - nearly double the previous year's figures. Organizations are deploying AI across diverse functions, from advanced data analysis and process automation to personalized customer experiences and strategic forecasting.

Investment trends reflect this growing confidence in AI's potential. Most organizations now allocate over 20% of their digital budgets to AI technologies, with 67% of executives planning to increase these investments over the next three years.

Quite often, they rely on AI integration companies to help them maximize the benefits of their investment in artificial intelligence.

Strategic goals: From implementation to innovation

Organizations approaching AI adoption typically balance immediate operational improvements with long-term strategic transformation:

Immediate priorities:

- Enhancing operational efficiency and productivity

- Reducing operational costs through automation

- Improving employee experience and workflow optimization

- Accelerating decision-making processes through data-driven insights

- Streamlining customer service operations

Strategic objectives:

- Business model innovation and market differentiation

- Sustainable revenue growth through AI-enabled capabilities

- Enhanced market positioning and competitive advantage

- Integration of sustainable practices and responsible AI usage

- Comprehensive data intelligence and operational effectiveness

Success stories: AI in action

The transformative potential of AI is already evident across multiple sectors:

Financial services

American Express has revolutionized customer engagement through AI-powered predictive analytics, achieving a 20% increase in customer engagement and more effective retention strategies. Similarly, Klarna demonstrated remarkable efficiency gains, with their AI assistant effectively replacing 700 human customer service agents while improving service quality.

Manufacturing

Siemens has implemented AI-driven monitoring systems across their manufacturing facilities, significantly reducing maintenance costs and minimizing production downtime. GE's application of AI in supply chain management has resulted in 10-15% inventory cost reduction and dramatically improved delivery efficiency.

Retail

Walmart's AI-powered inventory strategies have transformed retail operations, improving inventory turnover and reducing holding costs. Target has leveraged AI for personalized marketing, achieving significant improvements in conversion rates and customer engagement.

AI implementation challenges

Despite these successes, AI implementation often faces significant obstacles:

Infrastructure barriers

Many organizations struggle with legacy systems that aren't equipped for AI workloads. Complete system overhauls are often impractical due to cost and risk considerations, limiting AI integration to specific processes rather than enabling comprehensive transformation.

Data management complexities

Smaller organizations frequently lack robust data management policies, resulting in inefficient data handling and integration challenges. Data engineers often spend disproportionate time resolving basic data source connections rather than focusing on AI implementation.

Security and governance

Organizations must navigate complex security considerations, particularly when handling sensitive data. Only 29% of practitioners express confidence in their generative AI applications' production readiness, highlighting significant governance challenges.

Implementation challenges

The proliferation of open-source AI models presents its own challenges. These generic solutions often fail to address specific business needs and provide inadequate control over proprietary data, potentially compromising organizational AI strategies.

The path forward: AI strategic partnership

These challenges emphasize that successful AI adoption requires more than technical expertise. Organizations need strategic partners who can:

- Navigate complex technical infrastructure challenges

- Implement robust data management strategies

- Address security concerns effectively

- Bridge organizational skill gaps

- Develop customized solutions aligned with business objectives

- Establish meaningful performance metrics

- Balance technological capabilities with strategic goals

This comprehensive understanding of both technical and strategic considerations is crucial for identifying the right AI consulting partner - one who can guide organizations through their unique AI transformation journey.

10 leading AI integration companies: Detailed profiles

1. Binariks

Binariks specializes in custom AI and machine learning solutions, focusing on healthcare, fintech, and insurance sectors. Their approach emphasizes tailored development and operational efficiency.

Service offerings

- Custom AI Model Development

- Predictive Analytics Solutions

- NLP Applications

- Computer Vision Systems

- Generative AI Implementation

Notable achievements

- Fleet tracking system with FHIR integration

- Medicare analytics platform optimization (20x cost reduction)

- Gamified meditation application development

- B2B health coaching platform transformation

- Medical appointment scheduling system

2. Grape Up

Grape Up supports global enterprises in building and maintaining mission-critical systems through the strategic use of AI, cloud technologies, and modern delivery practices. Working with major players in automotive, manufacturing, finance, and insurance, Grape Up drives digital transformation and delivers tangible business outcomes.

Service portfolio

Data & AI Services

- Data and AI Infrastructure: Establishing the technical foundations for large-scale AI initiatives, from data pipelines to the deployment of machine learning solutions.

- Machine Learning Operations: Deploying and maintaining ML models in production to ensure consistent performance, reliability, and easy scalability.

- Generative AI Applications: Using generative models to boost automation efforts, enhance customer experiences, and power new digital services.

- Tailored AI Consulting and Solutions: Advising organizations on how to integrate AI into existing processes and developing solutions aligned with specific objectives.

Software Design & Engineering Services

- Application Modernization with Generative AI: Modernizing legacy software by incorporating generative AI, reducing development time and improving overall performance.

- End-to-End Digital Product Development: Designing, building, and launching digital products that tackle practical challenges and meet user needs.

- Cloud-First Infrastructure: Establishing and optimizing cloud environments to ensure security, scalability, and cost-effectiveness.

Success stories

- AI-Powered Customer Support for a Leading Manufacturer: Implemented an intelligent support solution to deliver quick, accurate responses and lower operational costs.

- LLM Hub for a Major Insurance Provider: Built a centralized platform that connects multiple AI chatbots for better customer engagement and streamlined operations.

- Accelerated AI/ML Deployment for a Sports Car Brand: Designed a rapid deployment system to speed up AI application development and production.

- Voice-Driven Car Manual: Enabled real-time, personalized guidance via generative AI in mobile apps and infotainment systems.

- Generative AI Chatbot for Enhanced Operations: Created a context-aware chatbot tapping into multiple data sources for secure, on-demand insights.

Check out case studies by Grape Up - https://grapeup.com/case-studies/

3. BotsCrew

Founded in 2016, BotsCrew has emerged as a specialist in generative AI agents and voice assistants. The company has developed over 200 AI solutions, serving global brands including Adidas, FIBA, Red Cross, and Honda.

Core competencies

- Generative AI Development

- Conversational AI Systems

- Custom Chatbot Solutions

- AI Strategy Consulting

Key implementations

- Honda: AI voice agent deployment with 15,000+ interactions

- Red Cross: Internal AI assistant covering 65% of queries

- Choose Chicago: Website AI agent engaging 500k+ visitors

4. Addepto

Addepto has established itself as a leading AI consulting firm, earning recognition from Forbes, Deloitte, and the Financial Times. The company combines strategic advisory services with hands-on implementation expertise, specializing in process automation and optimization for global enterprises.

Service portfolio

- AI Strategy & Consulting: Strategic guidance and transformation roadmap development

- Generative AI Development: Text, image, code, and multi-modal solutions

- Agentic AI: Autonomous systems for decision-making

- Custom Chatbot Solutions: Advanced NLU-powered conversational systems

- Machine Learning & Predictive Analytics

- Computer Vision Applications

- Natural Language Processing Solutions

Proprietary products

- ContextClue: Knowledge base assistant for document research

- ContextCheck: Open-source RAG evaluation tool

Success stories

Addepto's portfolio spans multiple industries with notable implementations:

- Aviation sector optimization through intelligent documentation systems

- AI-powered recycling process enhancement

- Real estate transaction automation

- Manufacturing predictive analytics

- Supply chain optimization for parcel delivery

- Advanced luggage tracking systems

- Retail compliance automation

- Energy sector ETL optimization

5. Miquido

With 12 years of experience and 250+ successful digital products, Miquido offers comprehensive AI services integrated with broader digital transformation capabilities. Their client portfolio includes Warner, Dolby, Abbey Road Studios, and Skyscanner.

Technical expertise

- Generative AI Solutions

- Machine Learning Systems

- Data Science Services

- Computer Vision Applications

- Python Development

- RAG Implementation

- Strategic AI Consulting

Notable implementations

- Nextbank: Credit scoring system (97% accuracy, 500M+ applications)

- PZU: Pioneer Google Assistant deployment

- Pangea: Rapid deployment platform (90%+ efficiency improvement)

Each of these companies brings unique strengths and specialized expertise to the AI consulting landscape. Their success stories and diverse project portfolios demonstrate the practical impact of well-implemented AI solutions across various industries.

6. Cognizant

Cognizant focuses on digital transformation and AI integration across various industries. The company has garnered numerous awards for its excellence in AI technologies, including the AI Breakthrough Award for Best Natural Language Generation Platform.

Service portfolio

- AI and Machine Learning Solutions: Implementing advanced AI technologies to enhance decision-making processes and operational efficiency.

- Cloud Services: Facilitating seamless migration to cloud-based architectures to improve scalability and agility.

- Data Management and Analytics: Providing tools for effective data aggregation, analysis, and visualization to drive informed business decisions.

- Digital Transformation Consulting: Assisting organizations in adopting innovative technologies to modernize their operations.

- Generative AI Services: Developing solutions that leverage generative AI for various applications, including healthcare administration.

Success stories

- Generative AI: Increased coding productivity by 100% and reduced rework by 50%.

- Intelligent Underwriting Tool: Streamlined underwriting processes for a global reinsurance company.

- AI for Biometric Data Protection: Automated real-time masking of Aadhaar numbers for compliance.

- Campaign Conversion Improvement: Enhanced ad performance, increasing click-through and conversion rates.

- Cloud-Based AI Analytics for Mining: Improved real-time monitoring and efficiency in ore transportation.

- Fraud Loss Reduction: Saved a global bank $20M through expedited check verification.

- Preventive Care AI Solution: Identified at-risk patients for drug addiction, lowering healthcare costs.

7. SoluLab

SoluLab specializes in next-generation digital solutions, combining domain expertise with technical excellence to address complex business challenges through AI, blockchain, and web development.

Service portfolio

- AI Consulting: Provides end-to-end guidance for AI adoption, from feasibility analysis and use case identification to ROI-focused implementation strategies. Their team assesses existing infrastructure and creates tailored roadmaps that prioritize scalability and measurable outcomes.

- AI Application Development: Delivers custom AI-powered applications focusing on intelligent automation, real-time analytics, and predictive modeling. They follow agile methodologies to ensure solutions align with evolving business needs.

- Large Language Model Fine-Tuning: Specializes in optimizing pre-trained models like GPT and BERT for specific business domains, ensuring efficient deployment with minimal latency and continuous performance monitoring.

- Generative AI Development: Creates innovative applications for content generation and creative workflow automation, with robust monitoring systems to optimize performance and maintain ethical AI practices.

- AI Chatbot Development: Designs conversational AI solutions that enhance customer engagement and streamline communication, with seamless integration across platforms like WhatsApp and Slack.

- AI Agent Development: Builds autonomous decision-making systems for tasks ranging from customer service to supply chain optimization, featuring real-time learning capabilities for dynamic process improvement.

Success stories

- Gradient: Developed an advanced AI platform that combines stable diffusion and GPT-3 integration for seamless image and text generation.

- InfuseNet: Created a comprehensive AI platform that enables businesses to import and process data from various sources using advanced models like GPT-4, FLAN, and GPT-NeoX, focusing on data security and business growth.

- Digital Quest: Implemented an AI-powered ChatGPT solution for a travel business, enhancing customer engagement and travel recommendations through seamless communication.

8. LeewayHertz

LeewayHertz is a specialized AI services company with deep expertise in machine learning, natural language processing, and computer vision. They focus on helping businesses adopt AI technologies through strategic consulting and implementation services, with a strong emphasis on delivering measurable outcomes and maximum value for their clients.

Service portfolio

- AI/ML Strategy Consulting: Provides strategic guidance to help businesses align their AI initiatives with organizational goals, ensuring maximum value from AI investments.

- Custom AI Development: Creates tailored solutions including specialized machine learning models and NLP applications to address specific business challenges.

- Generative AI: Develops advanced tools for content creation and virtual assistants, designed to enhance engagement and operational efficiency.

- Computer Vision: Builds sophisticated applications for image and video analysis, enabling process automation and enhanced security measures.

- Data Analytics: Delivers insights-driven solutions that optimize decision-making processes and operational efficiency.

- AI Integration: Ensures seamless deployment and ongoing support for integrating AI solutions into existing systems and workflows.

Success stories

- Wine Recommendation LLM App: Developed a sophisticated large language model application for a Swiss wine e-commerce company, featuring personalized recommendations, multilingual capabilities, and real-time inventory management.

- Compliance and Security Access Platform: Created an LLM-powered application that streamlines access to compliance benchmarks and audit data, enhancing user experience and providing valuable industry insights.

- Medical Assistant AI: Implemented an advanced healthcare solution utilizing algorithms and Natural Language Processing to improve data gathering, analysis, and diagnostic workflows for enhanced patient care.

- Machinery Troubleshooting Application: Developed an LLM-powered solution for a Fortune 500 manufacturing company that integrates machinery data with safety policies to provide rapid troubleshooting and enhanced safety protocol management.

- WineWizzard Recommendation Engine: Built an AI-powered engine delivering personalized wine suggestions and detailed product information to boost customer engagement and satisfaction.

9. Ekimetrics

Ekimetrics is a specialized data science and analytics consulting firm focused on helping businesses leverage data for strategic decision-making and performance improvement. The company combines expertise in statistical modeling, machine learning, and artificial intelligence to deliver actionable insights tailored to client needs across industries. Their approach integrates advanced analytics with practical business applications to drive measurable results.

Service portfolio

- AI-Powered Marketing Solutions: Optimizes marketing strategies and budget allocations through advanced mix models and attribution systems, ensuring maximum ROI for marketing investments.

- Customer Analytics: Provides AI-driven analysis of customer data to uncover behavioral patterns, preferences, and segmentation opportunities, enabling improved marketing personalization and engagement.

- Predictive Modeling: Implements machine learning algorithms for forecasting trends and consumer actions, helping businesses anticipate demand and make informed strategic decisions.

- Operational Excellence: Streamlines processes and optimizes supply chain management through AI-powered automation and workflow optimization.

- Sustainability Solutions: Offers AI tools for environmental impact assessment, including carbon footprint analysis and strategies for achieving net-zero goals.

- Custom AI Solutions: Develops tailored AI applications in partnership with clients to address specific business challenges while ensuring scalability and long-term value.

Success stories

- Nestlé Customer Insights: Delivered advanced analytics and customer insights enabling Nestlé to refine marketing strategies and enhance consumer engagement across their product portfolio.

- Ralph Lauren Predictive Analytics: Implemented predictive modeling solutions that improved customer behavior understanding and inventory management, leading to more accurate sales forecasting.

- McDonald's Data Strategy: Partnered with McDonald's to analyze customer data and optimize menu offerings, resulting in improved customer satisfaction and sales performance.

10. BCG X

BCG X is a division of Boston Consulting Group that pioneers transformative business solutions through advanced technology and AI integration. With a powerhouse team of nearly 3,000 experts spanning technologists, scientists, and designers, BCG X builds innovative products, services, and business models that address critical global challenges. Their distinctive approach combines predictive and generative AI capabilities to deliver scalable solutions that help organizations revolutionize their operations and customer experiences.

Service portfolio

- Advanced AI Integration: Developing comprehensive predictive AI solutions that transform data into strategic insights, enabling clients to make informed decisions and anticipate market trends.

- Digital Product Innovation: Creating cutting-edge digital platforms and products that leverage AI capabilities to deliver exceptional user experiences and drive business value.

- Enterprise Transformation: Orchestrating end-to-end digital transformations that combine AI technology, process optimization, and organizational change to achieve sustainable results.

- Customer Experience Design: Crafting AI-powered customer journeys that deliver personalized experiences, enhance engagement, and maximize lifetime value through data-driven insights.

- Technology Architecture: Building robust, scalable technology foundations that enable rapid innovation and seamless integration of advanced AI capabilities across the enterprise.

Success stories

- Global Financial Services Transformation: Partnered with a leading bank to implement AI-driven risk assessment and customer service solutions, resulting in 40% faster processing times and improved customer satisfaction scores.

- Retail Innovation Initiative: Developed and deployed an AI-powered inventory management system for a major retailer, reducing stockouts by 30% and increasing supply chain efficiency.

- Healthcare Analytics Platform: Created a comprehensive data analytics platform for a healthcare provider network, enabling predictive patient care modeling and improved resource allocation.

- Manufacturing Optimization: Implemented advanced AI solutions in production processes for a global manufacturer, leading to 25% reduction in operational costs and improved quality control.

- Digital Product Launch: Collaborated with a consumer goods company to develop and launch an AI-enabled digital product suite, resulting in new revenue streams and enhanced market position.

Ready to accelerate your AI journey?

Are you looking for a strategic partner with deep expertise in cloud-native solutions, real-time data streaming, and user-centred AI product development? Grape Up is here to help. Our team of experts specializes in tailoring AI solutions that align with your organization’s unique goals. We help you deliver measurable, sustainable outcomes.

Apache Kafka fundamentals

Nowadays, we have plenty of unique architectural solutions. But all of them have one thing in common: every decision should be made only after gaining a solid understanding of the business case and of the communication structure within the company. This is closely tied to the famous Conway’s Law:

“Any organization that designs a system (defined broadly) will produce a design whose structure is a copy of the organization's communication structure.”

In this article, we take a closer look at the event-driven style and discuss when such solutions should be implemented. This is where Kafka comes into play.

The basic definition taken from the Apache Kafka site states that it is an open-source distributed event streaming platform. But what exactly does that mean? We explain the basic concepts of Apache Kafka, how to use the platform, and when we may need it.

Apache Kafka is all about events

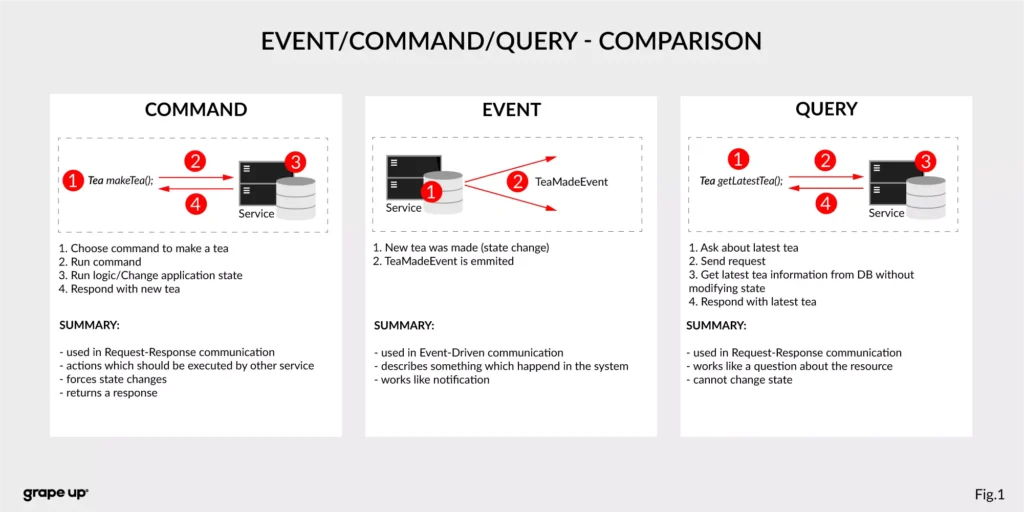

To understand what an event streaming platform is, we first need to understand the event itself. Services can interact with each other in different ways: they can use Commands, Events, or Queries. So, what is the difference between them?

- Command – a message with which we expect something to be done, like in the army when a commander gives an order to soldiers. In computer science, a command is a request to another service to perform some action that changes the system state. The crucial part is that commands are synchronous, and we expect that something will happen as a result. It is the most common and natural way for services to communicate. On the other hand, the caller does not really know whether the service will fulfill the expectation. Sometimes we issue commands without expecting any response because the caller does not need one.

- Event – the best definition of an event is a fact. It represents a change that has happened in the service (domain). It is essential that there is no expectation of any future action. We can treat an event as a notification of a state change. Events are immutable. In other words, an event captures everything that is relevant to the business. Events are also a single source of truth, so they need to describe precisely what happened in the system.

- Query – unlike the others, a query only returns a response and does not modify the system state. An SQL query is a good example of how it works.
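The three interaction styles can be illustrated with a minimal Python sketch. The `OrderService` class, the `OrderPlaced` event, and their fields are hypothetical names chosen for illustration only; they do not come from Kafka or any other library.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class OrderPlaced:
    """Event: an immutable fact about something that already happened."""
    order_id: str
    amount: float


class OrderService:
    """Hypothetical service exposing a command, a query, and emitted events."""

    def __init__(self):
        self._orders = {}
        self.events = []

    def place_order(self, order_id: str, amount: float) -> None:
        """Command: asks the service to change its state."""
        self._orders[order_id] = amount
        # The state change is recorded as an event (a fact).
        self.events.append(OrderPlaced(order_id, amount))

    def get_order_amount(self, order_id: str) -> Optional[float]:
        """Query: returns data and leaves the state untouched."""
        return self._orders.get(order_id)


service = OrderService()
service.place_order("o-1", 25.0)          # command: changes state
amount = service.get_order_amount("o-1")  # query: reads state only
fact = service.events[0]                  # event: describes what happened
```

The event is declared as a frozen dataclass so that it cannot be modified after creation, which mirrors the immutability described above.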

Below there is a small summary which compares all the above-mentioned ways of interaction:

Now we know what an event is in comparison to the other interaction styles. But what is the advantage of using events? To understand why event-driven solutions can be better than synchronous request-response calls, we have to learn a bit about the history of software architecture.

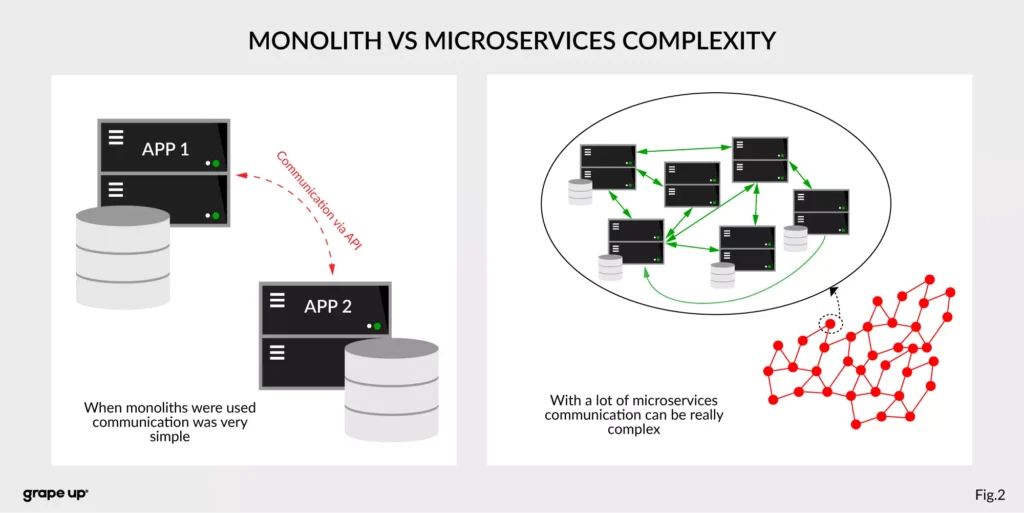

The figure shows the difference between a system with an older monolithic architecture and a system with a modern microservice architecture.

The left side of the figure presents API communication between two monoliths. In this case, communication is straightforward and easy. There is a different problem, though: such monolithic solutions are very complex and hard to maintain.

The question is, what happens if, instead of two big services, we want to use a few thousand small microservices? How complex will it be? The directed graph on the right side shows how quickly the number of calls in the system can grow, and with it, the number of shared resources. We may need to use data from one microservice in many places. That produces new communication challenges.

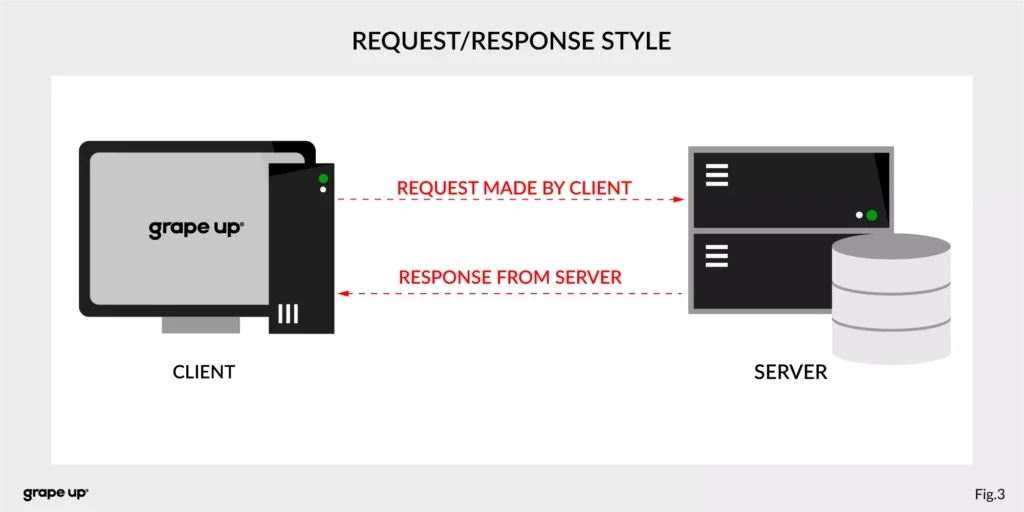

What about communication style?

In both cases, we are using a request-response style of communication (figure below), and the caller needs to know how to use the API provided by the server. There must be some kind of protocol for exchanging messages between services.

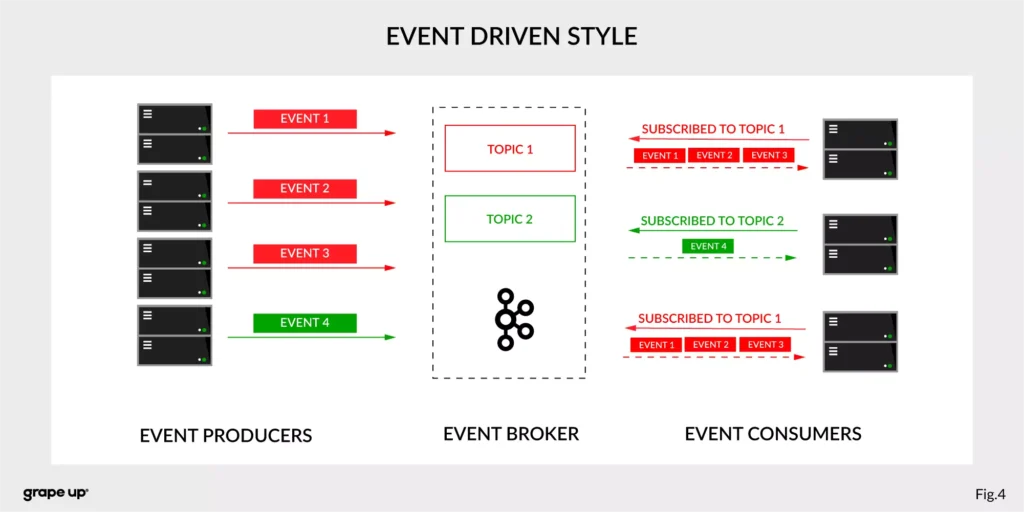

So how can we reduce the complexity and make integration between services easier? To answer this, look at the figure below.

In this case, interactions between event producers and consumers are driven by events only. This pattern supports loose coupling between services and, more importantly for us, the event producer does not need to be aware of the event consumer's state. This is the essence of the pattern. From the producer's perspective, we do not need to know who uses the data from the topic or how.

Of course, as usual, everything is relative. The event-driven style is not always the best choice; it depends on the use case. For instance, when operations have to be done synchronously, it is natural to use the request-response style. In situations like user authentication, reporting A/B tests, or integration with third-party services, a synchronous style is the better fit. When loose coupling is needed, it is better to go with an event-driven approach. In larger systems, we mix styles to achieve a business goal.
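The loose coupling described here can be sketched with a tiny in-memory event bus. This is only an illustration of the pattern under simplifying assumptions: `EventBus`, the topic name, and the handlers are hypothetical, delivery happens synchronously inside one process, and nothing is persisted, all of which differs from a real event streaming platform.

```python
from collections import defaultdict


class EventBus:
    """Hypothetical in-memory bus: producers and consumers only share a topic name."""

    def __init__(self):
        self._subscribers = defaultdict(list)

    def subscribe(self, topic, handler):
        # A consumer registers interest in a topic.
        self._subscribers[topic].append(handler)

    def publish(self, topic, event):
        # The producer does not know who receives the event, or how many receivers exist.
        for handler in self._subscribers[topic]:
            handler(event)


bus = EventBus()
billing_inbox = []
shipping_inbox = []

# Two independent consumers of the same topic.
bus.subscribe("orders", billing_inbox.append)
bus.subscribe("orders", shipping_inbox.append)

# The producer publishes once; both consumers receive the event.
bus.publish("orders", {"order_id": "o-1"})
```

Adding a third consumer would require no change on the producer side, which is the point of the pattern.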

The name Kafka brings to mind the word Kafkaesque, which, according to the Cambridge Dictionary, means something extremely unpleasant, frightening, and confusing, similar to situations described in the novels of Franz Kafka.

The communication mess in the modern enterprise was one of the reasons such a tool was invented. To understand why, we need to take a closer look at modern enterprise systems.

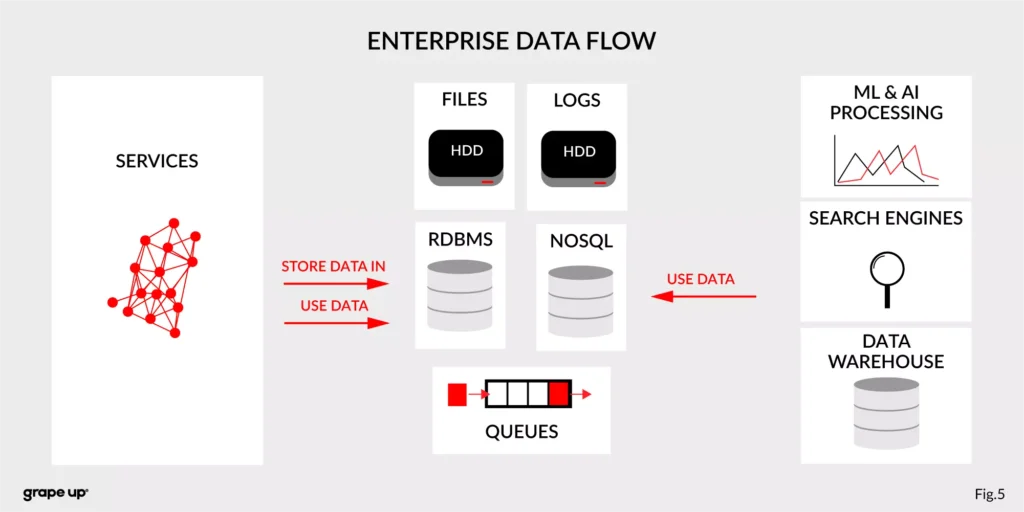

Modern enterprise systems contain more than just services. They usually include a data warehouse, AI and ML analytics, search engines, and much more. Data formats and storage locations vary: part of the data may be stored in an RDBMS, part in a NoSQL database, and the rest in a file bucket or transferred via a queue. The data can come in different formats and extensions, such as XML or JSON. Data management is the key to every successful enterprise. That is why we should care about it. Tim O’Reilly once said:

“We are entering a new world in which data may be more important than software.”

In this case, having a good solution for processing crucial data streams across an enterprise is a must to be successful in business. But as we all know, it is not always so easy.

How to tame the beast?

For this complex enterprise data flow scenario, people have invented many tools and methods, all to make enterprise data distribution possible. Unfortunately, as usual, using them involves some tradeoffs. Here is a list of them:

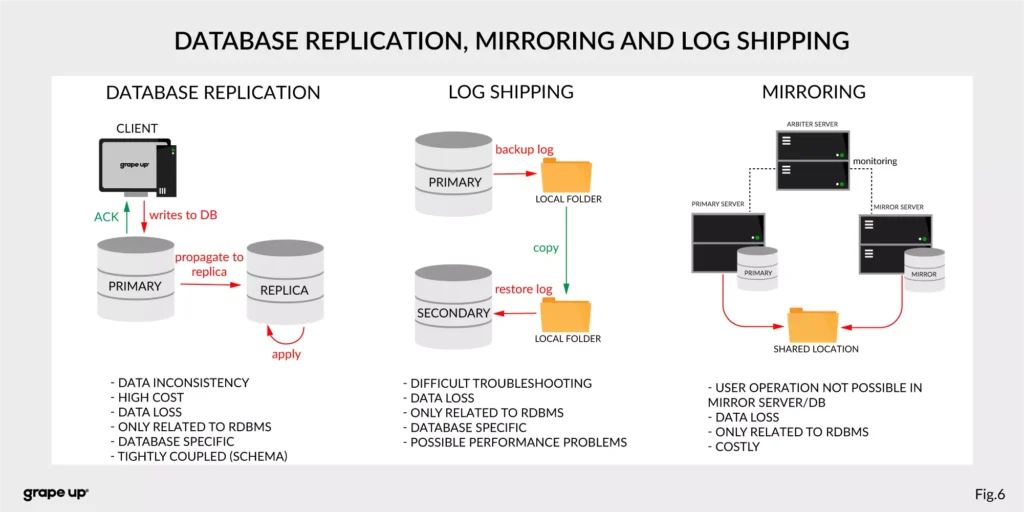

- Database replication, Mirroring, and Log Shipping - used to increase the performance of an application (scaling) and backup/recovery.

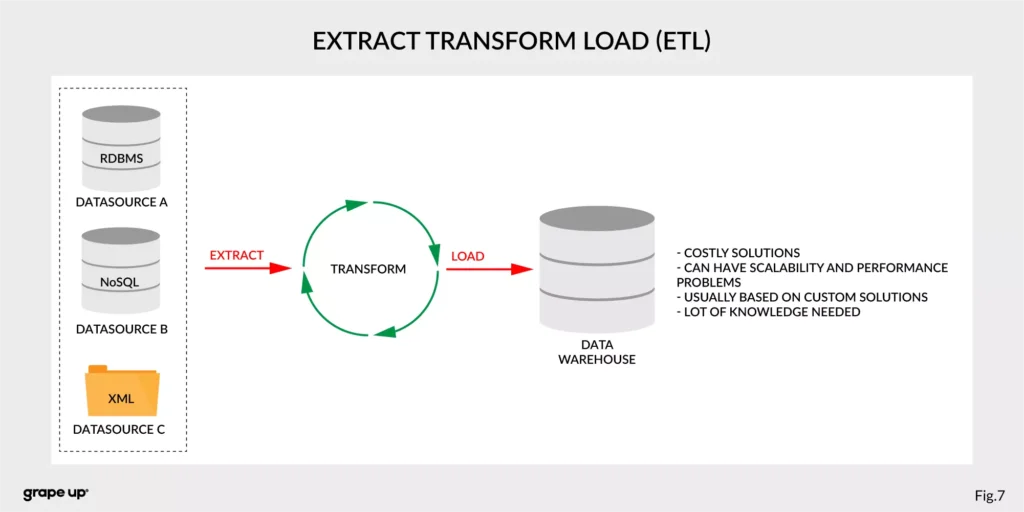

- ETL – Extract, Transform, Load - used to copy data from different sources for analytics/reports.

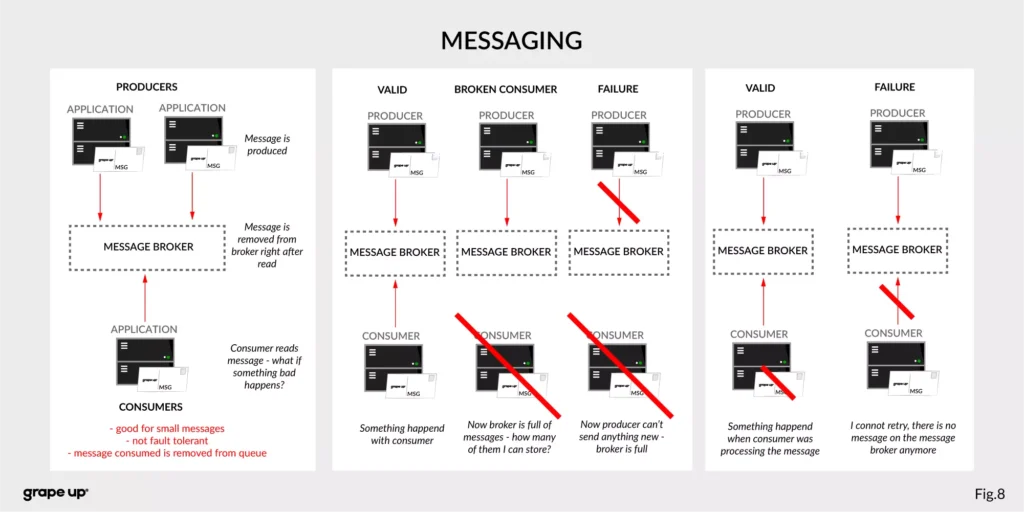

- Messaging systems - provide asynchronous communication between systems.

As you can see, there are many problems to take care of in order to provide a correct data flow across an enterprise organization. That is why Apache Kafka was invented. Let us return to the definition of Apache Kafka: it is a distributed event streaming platform. We now know what an event is and what the event-driven style looks like. So, as you can probably guess, event streaming in our case means capturing, storing, manipulating, processing, reacting to, and routing event streams in real time. It is based on three main capabilities: publishing/subscribing, storing, and processing. These three capabilities make this tool very successful.

- Publishing/Subscribing provides the ability to read from and write to streams of events and, even more, to continuously import and export data from different sources and systems.

- Storing is also very important here. It solves the above-mentioned problems in messaging. You can store streams of events for as long as you want without being afraid that something will be lost.

- Processing allows us to process streams in real time or to process historical data.

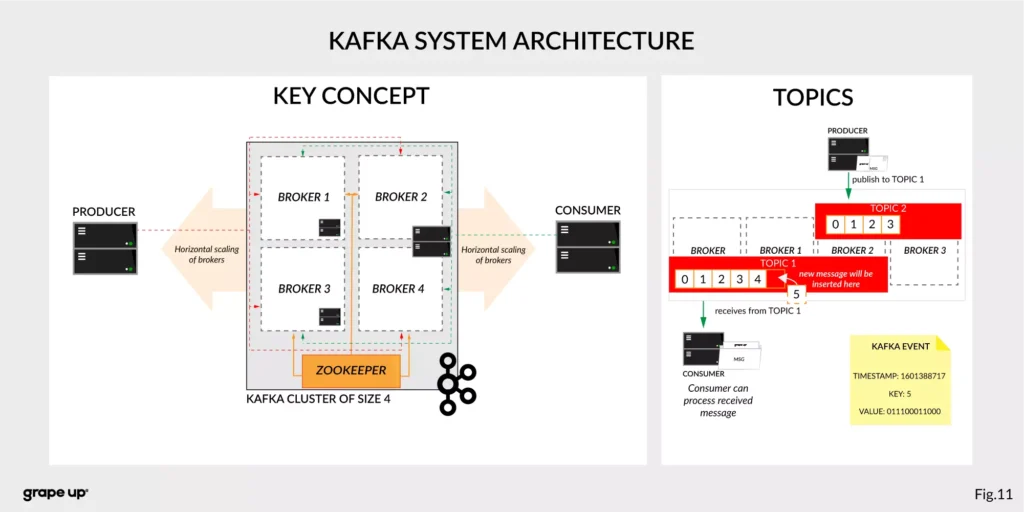

But wait! There is one more word to explain: distributed. Internally, a Kafka system consists of servers and clients, which communicate reliably over a high-performance TCP protocol. Kafka runs as a cluster of one or more servers, which can easily be deployed in the cloud or on-premises, in a single region or across multiple regions. There are also Kafka Connect servers, used for integration with other data sources and other Kafka clusters. Clients, which can be implemented in many programming languages, are responsible for reading, writing, and processing event streams. The whole Kafka ecosystem is distributed and, like every distributed system, faces many challenges regarding node failures, data loss, and coordination.

What are the basic elements of Apache Kafka?

To understand how Apache Kafka works, let us first explain the basic elements of the Kafka ecosystem.

Firstly, we should take a look at the event. It has a key, a value, a timestamp, and optional metadata headers. The key is used not only for identification but also for routing and for aggregation operations on events with the same key.

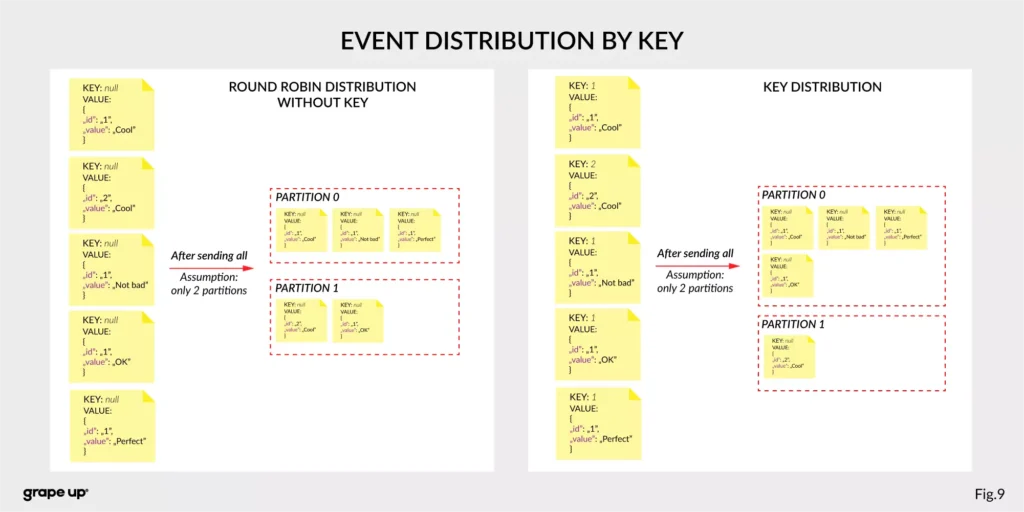

As the figure shows, if a message has no key attached, the data is distributed using a round-robin algorithm. The situation is different when the event has a key attached: events with the same key always go to the same partition. This makes sense from a performance perspective. We usually use IDs to get information about objects, and it is faster to get it from a single broker than to look for it on many brokers.
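The routing rule described above can be sketched in a few lines of Python. `SimplePartitioner` is a hypothetical class, and `zlib.crc32` is used here only as a stand-in for a deterministic hash; real Kafka clients use their own hash function, and the exact handling of keyless records may differ between client versions.

```python
import itertools
import zlib


class SimplePartitioner:
    """Hypothetical partitioner: round-robin without a key, hash-based with a key."""

    def __init__(self, num_partitions: int):
        self.num_partitions = num_partitions
        self._round_robin = itertools.cycle(range(num_partitions))

    def partition_for(self, key):
        if key is None:
            # No key: spread records evenly across partitions.
            return next(self._round_robin)
        # Same key -> same hash -> same partition (crc32 is an assumption, see above).
        return zlib.crc32(key.encode("utf-8")) % self.num_partitions


partitioner = SimplePartitioner(num_partitions=3)
keyless = [partitioner.partition_for(None) for _ in range(4)]
first = partitioner.partition_for("user-42")
second = partitioner.partition_for("user-42")
```

Here `keyless` cycles through the partitions in order, while `first` and `second` are always equal because they are derived from the same key.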

The value, as you can guess, stores the essence of the event. It contains information about the business change that happened in the system.

There are different types of events:

- Unkeyed Event – event in which there is no need to use a key. It describes a single fact of what happened in the system. It could be used for metric purposes.

- Entity Event – the most important one. It describes the state of a business object at a given point in time. It must have a unique key, which is usually related to the id of the business object. Entity events play the main role in event-driven architectures.

- Keyed Event – an event with a key but not related to any business entity. The key is used for aggregation and partitioning.

Topics – storage for events. A topic is analogous to a folder in a filesystem: it organizes what is inside. For example, a topic that keeps all order events in an e-commerce system could be named “orders”. Unlike in other messaging systems, events stay on the topic after they are read. This makes Kafka very powerful and fault-tolerant. It also solves the problem of a consumer that processes something with an error and would like to process it again. A topic can have zero, one, or many producers and subscribers.

Topics are divided into smaller parts called partitions. A partition can be described as a “commit log”: messages can only be appended to the log and can only be read in order, from the beginning to the end. Partitions are designed to provide redundancy and scalability. Most importantly, partitions can be hosted on different servers (brokers), which gives a very powerful way to scale topics horizontally.

Producer – a client application responsible for creating new events on a Kafka topic. The producer is responsible for choosing the topic partition. By default, as mentioned earlier, round-robin is used when we do not provide any key. It is also possible to create custom business mapping rules that assign a partition to a message.

Consumer – a client application responsible for reading and processing events from Kafka. A consumer reads the events of a partition in the order in which they were produced. Each consumer can also subscribe to more than one topic. Each message in a partition has a unique integer identifier (offset), generated by Apache Kafka, which increases as new messages arrive. The consumer uses it to know where to start reading new messages. To sum up, the topic, partition, and offset together pinpoint a message in the Apache Kafka system. Managing the offset is the main responsibility of each consumer.
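The relationship between a partition, its offsets, and a consumer can be sketched as an append-only list. `PartitionLog` and `SimpleConsumer` are hypothetical, in-memory stand-ins written for illustration; they are not part of any Kafka client API.

```python
class PartitionLog:
    """Hypothetical append-only log: the list index plays the role of the offset."""

    def __init__(self):
        self._records = []

    def append(self, value):
        offset = len(self._records)  # offsets grow by one with each new record
        self._records.append(value)
        return offset

    def read_from(self, offset):
        # Reading never removes records from the log.
        return self._records[offset:]


class SimpleConsumer:
    """Hypothetical consumer that tracks the next offset it should read."""

    def __init__(self, log):
        self._log = log
        self.offset = 0

    def poll(self):
        records = self._log.read_from(self.offset)
        self.offset += len(records)
        return records

    def seek(self, offset):
        # Move back (or forward) to re-read from a chosen position.
        self.offset = offset


log = PartitionLog()
log.append("order-created")
log.append("order-paid")

consumer = SimpleConsumer(log)
first_batch = consumer.poll()   # both records, in the order they were appended
second_batch = consumer.poll()  # nothing new yet
consumer.seek(0)
replayed = consumer.poll()      # records are still there and can be read again
```

Because the records stay in the log after being read, a consumer that failed while processing can simply seek back to an earlier offset and try again.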

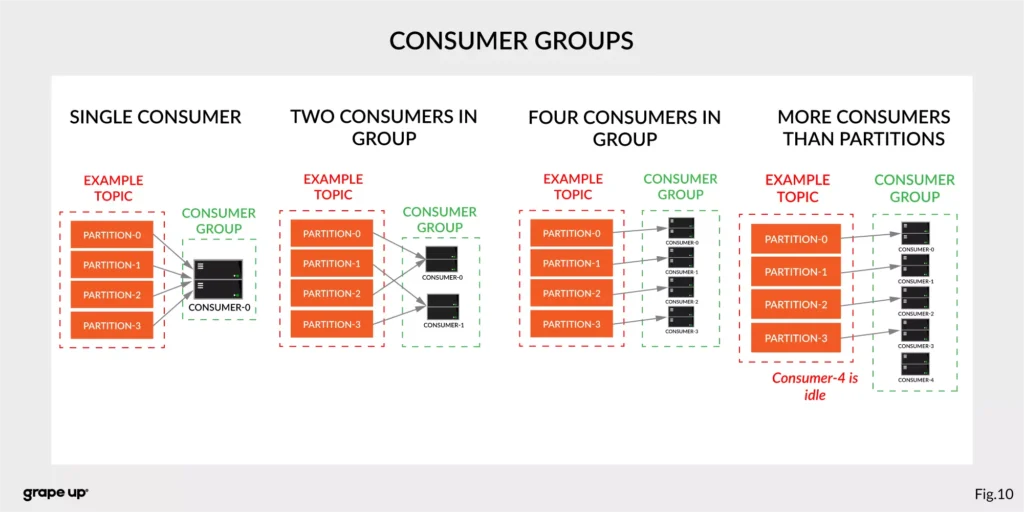

The concept of consumers is easy. But what about the scaling? What if we have many consumers, but we would like to read the message only once? That is why the concept of consumer group was designed. The idea here is when consumer belongs to the same group, it will have some subset of partitions assigned to read a message. That helps to avoid the situation of duplicated reads. In the figure below, there is an example of how we can scale data consumption from the topic. When a consumer is making time-consuming operations, we can connect other consumers to the group, which helps to process faster all new events on the consumer level. We have to be careful though when we have a too-small number of partitions, we would not be able to scale it up. It means if we have more consumers than partitions, they are idle.

But you can ask – what will happen when we add a new consumer to the existing and running group? The process of switching ownership from one consumer to another is called “rebalance.” It is a small break from receiving messages for the whole group. The idea of choosing which partition goes to which consumer is based on the coordinator election problem.
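The consumer group and rebalance ideas can be illustrated with a small assignment function. This is a simplified round-robin style sketch; `assign_partitions` and the consumer names are hypothetical, and the assignment strategies used by real Kafka clients are configurable and more involved.

```python
def assign_partitions(partitions, consumers):
    """Spread partitions over the consumers of one group, one owner per partition."""
    assignment = {consumer: [] for consumer in consumers}
    if not consumers:
        return assignment
    for index, partition in enumerate(partitions):
        owner = consumers[index % len(consumers)]
        assignment[owner].append(partition)
    return assignment


partitions = [0, 1, 2]

# Two consumers share three partitions.
before = assign_partitions(partitions, ["c1", "c2"])

# New consumers join the group: ownership is recomputed (a "rebalance").
after = assign_partitions(partitions, ["c1", "c2", "c3", "c4"])
```

With two consumers, `before` gives partitions 0 and 2 to `c1` and partition 1 to `c2`. After the rebalance, each of the first three consumers owns one partition and `c4` owns none, which shows why adding more consumers than partitions does not help.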

Broker – responsible for receiving produced events and storing them on disk; it allows consumers to fetch messages by topic, partition, and offset. Brokers are usually located in many places and joined in a cluster. See the figure below.

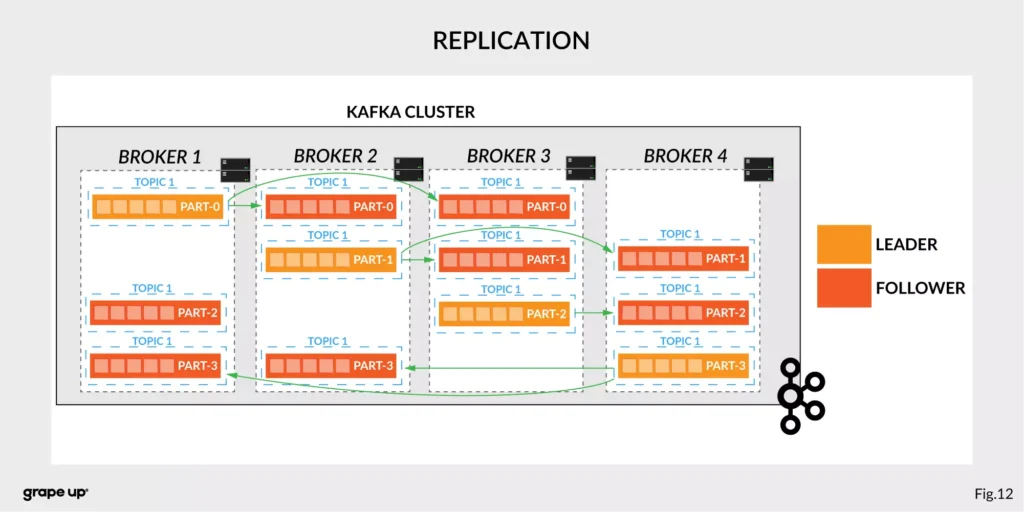

As in every distributed system, brokers need some coordination. As you can see, brokers can run on different servers (it is also possible to run several on a single server), which adds complexity. Each broker holds information about the partitions it owns. To stay safe in case of failures or maintenance, Apache Kafka replicates partitions. The number of replicas can be set separately for every topic, which gives a lot of flexibility. The figure below shows a basic replication configuration. Replication is based on the leader-follower approach.

Everything is great! We have seen the advantages of Kafka over more traditional approaches. Now it is time to say when to use it.
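The leader-follower idea can also be expressed in code. The sketch below is a deliberately simplified model with a hypothetical `ReplicatedPartition` class: it promotes the first surviving replica and ignores details such as tracking which followers are fully caught up, which a real cluster has to handle.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.HashSet;
import java.util.List;
import java.util.Set;

/** Simplified view of one replicated partition: one leader, the other replicas follow. */
class ReplicatedPartition {
    private final List<Integer> replicas; // broker ids holding a copy, in preference order
    private Integer leader;               // broker currently serving the partition, or null

    ReplicatedPartition(List<Integer> replicas) {
        if (replicas.isEmpty()) {
            throw new IllegalArgumentException("at least one replica is required");
        }
        this.replicas = new ArrayList<>(replicas);
        this.leader = replicas.get(0);
    }

    Integer leader() {
        return leader;
    }

    /** Re-elects a leader given the set of brokers that are still alive. */
    void onBrokersChanged(Set<Integer> aliveBrokers) {
        if (leader != null && aliveBrokers.contains(leader)) {
            return; // the current leader is healthy, nothing to do
        }
        leader = null;
        for (Integer replica : replicas) {
            if (aliveBrokers.contains(replica)) {
                leader = replica; // the first surviving replica takes over
                break;
            }
        }
    }

    public static void main(String[] args) {
        ReplicatedPartition partition = new ReplicatedPartition(Arrays.asList(1, 2, 3));
        System.out.println("leader: " + partition.leader());
        partition.onBrokersChanged(new HashSet<>(Arrays.asList(2, 3))); // broker 1 fails
        System.out.println("leader after failure: " + partition.leader());
    }
}
```

With a replication factor of three, as in this example, the partition stays available as long as at least one of the three brokers is alive.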

When to use Apache Kafka?

Apache Kafka supports many use cases. It is widely used by companies such as Uber, Netflix, Activision, Spotify, Slack, Pinterest, Coursera, and LinkedIn. We can use it as a:

- Messaging system – it can be a good alternative to the existing messaging systems. It has a lot of flexibility in configuration, better throughput, and low end-to-end latency.

- Website activity tracking – this was the original use case for Kafka. Activity tracking on a website generates a high volume of data that we have to process. Kafka provides real-time processing of event streams, which can sometimes be crucial for the business.

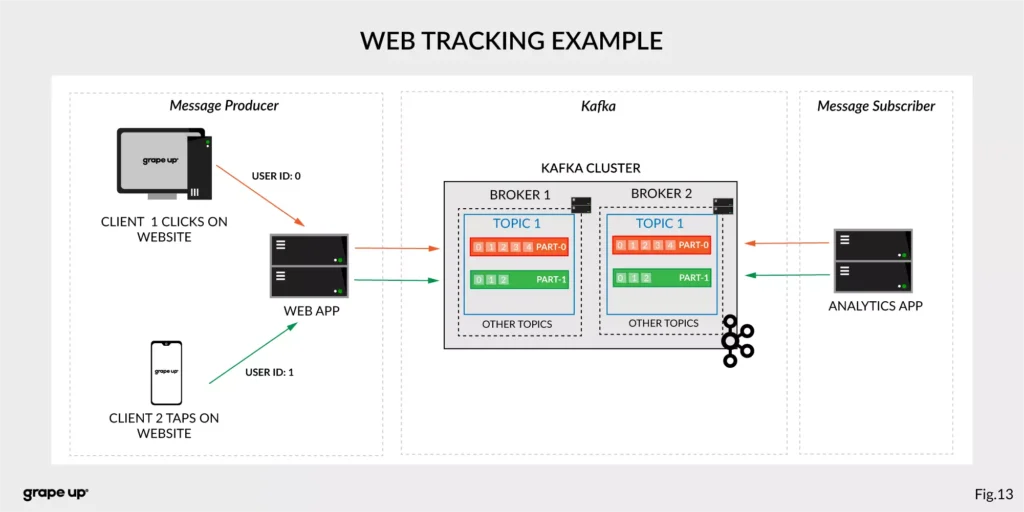

Figure 13 presents a simple use case for web tracking. The web application has a button that generates an event after each click, which is used for real-time analytics. Clients' events are gathered on TOPIC 1. Partitioning uses the user-id, so client 1 events (user-id = 0) are stored in partition 0 and client 2 events (user-id = 1) are stored in partition 1. Each record is appended to the partition and the offset is incremented. A subscriber can then read a message and present new data on a dashboard, or even use an older offset to show some statistics.

- Log aggregation – it can be used as an alternative to existing log aggregation solutions. It gives a cleaner way of organizing logs in the form of event streams and, what is more, a very easy and flexible way to gather logs from many different sources. Compared to other tools, it is very fast, durable, and has low end-to-end latency.

- Stream processing – a very flexible way of processing data using data pipelines. Many users aggregate, enrich, and transform data into new topics. It is a very quick and convenient way to process all data in real time.

- Event sourcing – a system design in which immutable events are stored as the single source of truth about the system. A typical use case can be found in banking systems, when we load the history of transactions. Each transaction is represented by an immutable event that contains all the data describing exactly what happened on our account.

- Commit log – it can be used as an external commit log for distributed systems. It has many mechanisms that are useful in this use case (such as log compaction and replication).
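The event sourcing item from the list above can be illustrated with the bank account example. In this minimal sketch the names `AccountEventsSketch`, `TransactionEvent`, and `replay` are hypothetical, and an in-memory list stands in for the topic that would hold the events. The balance is never stored; it is derived by replaying the immutable history.

```java
import java.util.Arrays;
import java.util.List;

/** Event-sourcing sketch: state is derived by replaying immutable events. */
class AccountEventsSketch {
    enum Type { DEPOSIT, WITHDRAWAL }

    /** Immutable event describing one change on the account; the amount is in cents. */
    static final class TransactionEvent {
        final Type type;
        final long amountCents;

        TransactionEvent(Type type, long amountCents) {
            if (amountCents < 0) {
                throw new IllegalArgumentException("amount must not be negative");
            }
            this.type = type;
            this.amountCents = amountCents;
        }
    }

    /** Replays the full history, oldest event first, and returns the resulting balance. */
    static long replay(List<TransactionEvent> history) {
        long balance = 0;
        for (TransactionEvent event : history) {
            if (event.type == Type.DEPOSIT) {
                balance += event.amountCents;
            } else {
                balance -= event.amountCents;
            }
        }
        return balance;
    }

    public static void main(String[] args) {
        List<TransactionEvent> history = Arrays.asList(
                new TransactionEvent(Type.DEPOSIT, 10_000),
                new TransactionEvent(Type.WITHDRAWAL, 2_500),
                new TransactionEvent(Type.DEPOSIT, 500));
        System.out.println("balance in cents: " + replay(history)); // 8000
    }
}
```

Because events are only appended and never changed, replaying a prefix of the history gives the balance at any earlier point in time.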

Summary

Apache Kafka is a powerful tool used by leading tech enterprises. It covers many use cases, so if we need a reliable and durable tool for our data, we should consider Kafka. It provides loose coupling between producers and subscribers, which keeps our enterprise architecture clean and open to change. We hope you enjoyed this basic introduction to Apache Kafka and that you will dig deeper into how it works after this article.



How to build an Android companion app to control a car with AAOS via Wi-Fi

In this article, we will explore how to create an application that controls HVAC functions and retrieves images from cameras in a vehicle equipped with Android Automotive OS (AAOS) 14.

The phone must be connected to the car's Wi-Fi, and communication between the Head Unit and the phone is required. The Android companion app will utilize the HTTP protocol for this purpose.

In AAOS 14, the Vehicle Hardware Abstraction Layer (VHAL) will create an HTTP server to handle our commands. This functionality is discussed in detail in the article "Exploring the Architecture of Automotive Electronics: Domain vs. Zone".

Creating the mobile application

To develop the mobile application, we'll use Android Studio. Start by selecting File -> New Project -> Phone and Tablet -> Empty Activity from the menu. This will create a basic Android project structure.

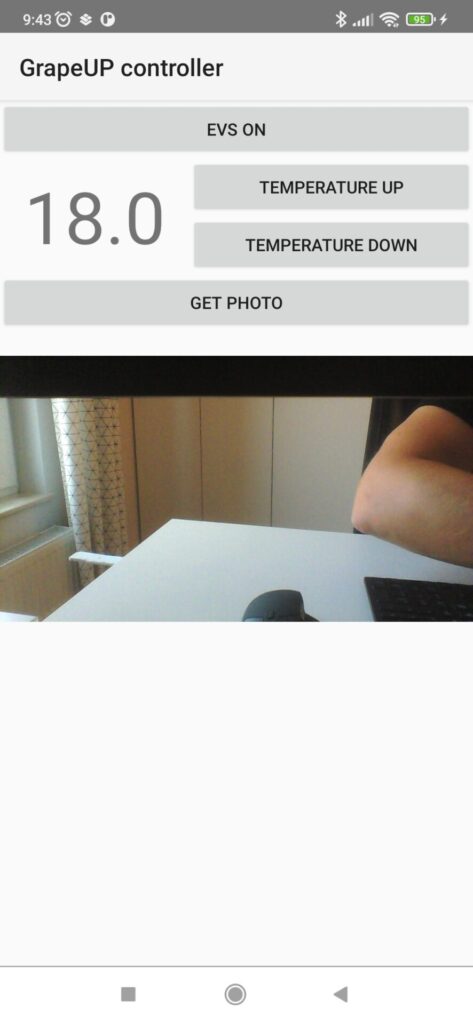

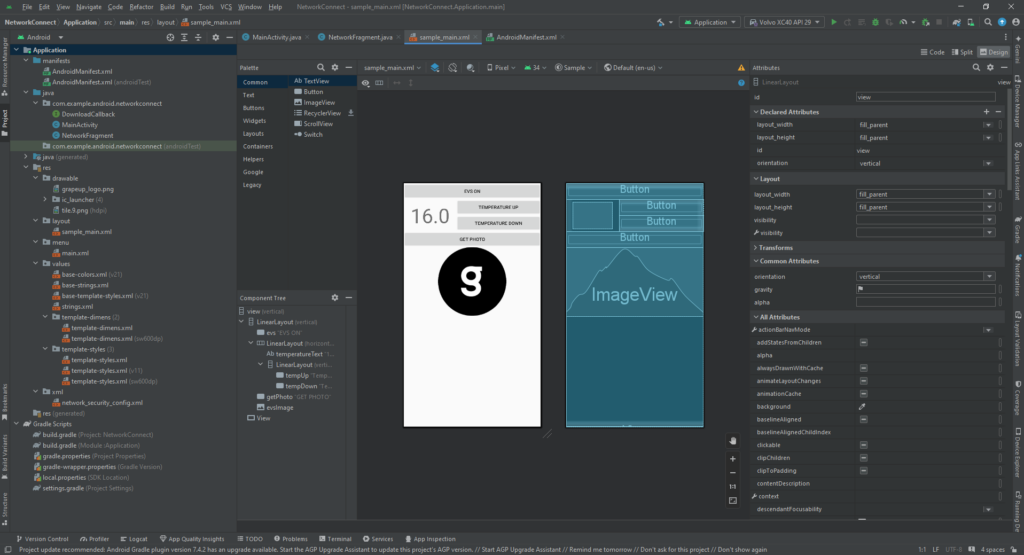

Next, you need to create the Android companion app layout, as shown in the provided screenshot.

Below is the XML code for the example layout:

    <?xml version="1.0" encoding="utf-8"?>
    <!-- Copyright 2013 The Android Open Source Project -->
    <LinearLayout xmlns:android="http://schemas.android.com/apk/res/android"
        xmlns:app="http://schemas.android.com/apk/res-auto"
        android:id="@+id/view"
        android:layout_width="fill_parent"
        android:layout_height="fill_parent"
        android:orientation="vertical">

        <LinearLayout
            android:layout_width="match_parent"
            android:layout_height="match_parent"
            android:layout_weight="1"
            android:orientation="vertical">

            <Button
                android:id="@+id/evs"
                android:layout_width="match_parent"
                android:layout_height="wrap_content"
                android:text="EVS ON" />

            <LinearLayout
                android:layout_width="match_parent"
                android:layout_height="wrap_content"
                android:orientation="horizontal">

                <TextView
                    android:id="@+id/temperatureText"
                    android:layout_width="wrap_content"
                    android:layout_height="wrap_content"
                    android:layout_marginStart="20dp"
                    android:layout_marginTop="8dp"
                    android:layout_marginEnd="20dp"
                    android:text="16.0"
                    android:textSize="60sp" />

                <LinearLayout
                    android:layout_width="match_parent"
                    android:layout_height="wrap_content"
                    android:orientation="vertical">

                    <Button
                        android:id="@+id/tempUp"
                        android:layout_width="match_parent"
                        android:layout_height="wrap_content"
                        android:text="Temperature UP" />

                    <Button
                        android:id="@+id/tempDown"
                        android:layout_width="match_parent"
                        android:layout_height="wrap_content"
                        android:text="Temperature Down" />

                </LinearLayout>

            </LinearLayout>

            <Button
                android:id="@+id/getPhoto"
                android:layout_width="match_parent"
                android:layout_height="wrap_content"
                android:text="GET PHOTO" />

            <ImageView
                android:id="@+id/evsImage"
                android:layout_width="match_parent"
                android:layout_height="wrap_content"
                app:srcCompat="@drawable/grapeup_logo" />

        </LinearLayout>

        <View
            android:layout_width="fill_parent"
            android:layout_height="1dp"
            android:background="@android:color/darker_gray" />

    </LinearLayout>

Adding functionality to the buttons

After setting up the layout, the next step is to connect actions to the buttons. Here's how you can do it in your MainActivity:

    Button tempUpButton = findViewById(R.id.tempUp);
    tempUpButton.setOnClickListener(new View.OnClickListener() {
        @Override
        public void onClick(View v) {
            tempUpClicked();
        }
    });

    Button tempDownButton = findViewById(R.id.tempDown);
    tempDownButton.setOnClickListener(new View.OnClickListener() {
        @Override
        public void onClick(View v) {
            tempDownClicked();
        }
    });

    Button evsButton = findViewById(R.id.evs);
    evsButton.setOnClickListener(new View.OnClickListener() {
        @Override
        public void onClick(View v) {
            evsClicked();
        }
    });

    Button getPhotoButton = findViewById(R.id.getPhoto);
    getPhotoButton.setOnClickListener(new View.OnClickListener() {
        @Override
        public void onClick(View v) {
            Log.w("GrapeUpController", "getPhotoButton clicked");
            new DownloadImageTask((ImageView) findViewById(R.id.evsImage))
                    .execute("http://192.168.1.53:8081/");
        }
    });

Downloading and displaying an image

To retrieve an image from the car’s camera, we use the DownloadImageTask class, which downloads a JPEG image in the background and displays it:

    // Downloads an image in the background and shows it in the given ImageView.
    private class DownloadImageTask extends AsyncTask<String, Void, Bitmap> {
        private final ImageView bmImage;

        public DownloadImageTask(ImageView bmImage) {
            this.bmImage = bmImage;
        }

        @Override
        protected Bitmap doInBackground(String... urls) {
            String urlDisplay = urls[0];
            Bitmap bitmap = null;
            Log.w("GrapeUpController", "doInBackground: " + urlDisplay);
            // try-with-resources closes the stream even if decoding fails
            try (InputStream in = new java.net.URL(urlDisplay).openStream()) {
                bitmap = BitmapFactory.decodeStream(in);
            } catch (Exception e) {
                // Pass the exception itself: e.getMessage() may be null
                Log.e("GrapeUpController", "Image download failed", e);
            }
            return bitmap;
        }

        @Override
        protected void onPostExecute(Bitmap result) {
            // result is null when the download or the decoding failed
            if (result != null) {
                bmImage.setImageBitmap(result);
            }
        }
    }
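AsyncTask has been deprecated since Android 11 (API level 30), so newer code often moves the download to an executor instead. The sketch below shows only the download step in plain Java; the class `ImageFetcher` and its methods are hypothetical names, not part of the original application. On Android, the returned bytes could then be decoded with `BitmapFactory.decodeByteArray` and passed to the `ImageView` on the main thread.

```java
import java.io.ByteArrayOutputStream;
import java.io.IOException;
import java.io.InputStream;
import java.net.URI;
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Executors;
import java.util.concurrent.Future;

/** Downloads a resource on a background thread and returns its raw bytes. */
class ImageFetcher {
    /** Reads the whole stream into memory; a single camera frame is assumed to fit. */
    static byte[] readAll(InputStream in) throws IOException {
        ByteArrayOutputStream out = new ByteArrayOutputStream();
        byte[] chunk = new byte[8192];
        int read;
        while ((read = in.read(chunk)) != -1) {
            out.write(chunk, 0, read);
        }
        return out.toByteArray();
    }

    /** Submits the download to the executor; Future.get() rethrows any failure. */
    static Future<byte[]> fetchAsync(ExecutorService executor, String url) {
        return executor.submit(() -> {
            try (InputStream in = URI.create(url).toURL().openStream()) {
                return readAll(in);
            }
        });
    }

    public static void main(String[] args) throws Exception {
        ExecutorService executor = Executors.newSingleThreadExecutor();
        try {
            // Address taken from the article; adjust it to your own setup.
            byte[] jpeg = fetchAsync(executor, "http://192.168.1.53:8081/").get();
            System.out.println("Downloaded " + jpeg.length + " bytes");
        } finally {
            executor.shutdown();
        }
    }
}
```

Returning a `Future` keeps the sketch independent of Android classes; in an activity you would more likely post the result back to the UI thread than block on `get()`.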

Adjusting the temperature

To change the car’s temperature, you can implement a function like this:

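    private void tempUpClicked() {
        mTemperature += 0.5f;
        // Build the URL now, so the background thread sends the value set by this click
        final String url = "http://192.168.1.53:8080/set_temp/"
                + String.format(Locale.US, "%.1f", mTemperature);
        // Network calls must not run on the main thread
        new Thread(new Runnable() {
            @Override
            public void run() {
                // doInBackground(String) is the activity's request helper (not shown here)
                doInBackground(url);
            }
        }).start();
        updateTemperature();
    }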
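The request helper called from the background thread is not shown in the article. A minimal sketch of what such a helper could look like, assuming the VHAL server accepts a plain HTTP GET and that only the status code matters, is given below. `VehicleHttpClient`, its method names, and the timeout values are assumptions made for illustration.

```java
import java.io.IOException;
import java.net.HttpURLConnection;
import java.net.URI;
import java.util.Locale;

/** Hypothetical helper for sending simple GET commands to the car's HTTP server. */
class VehicleHttpClient {
    private final String baseUrl;

    VehicleHttpClient(String baseUrl) {
        // Drop a trailing slash so that paths can be appended uniformly.
        this.baseUrl = baseUrl.endsWith("/")
                ? baseUrl.substring(0, baseUrl.length() - 1)
                : baseUrl;
    }

    /** Builds the set_temp URL with one decimal place, independent of the device locale. */
    String setTemperatureUrl(float temperature) {
        return baseUrl + "/set_temp/" + String.format(Locale.US, "%.1f", temperature);
    }

    /** Sends a GET request and returns the HTTP status code; call it off the main thread. */
    int get(String url) throws IOException {
        HttpURLConnection connection =
                (HttpURLConnection) URI.create(url).toURL().openConnection();
        try {
            connection.setRequestMethod("GET");
            connection.setConnectTimeout(3000); // assumed values, tune for the car network
            connection.setReadTimeout(3000);
            return connection.getResponseCode();
        } finally {
            connection.disconnect();
        }
    }

    public static void main(String[] args) throws IOException {
        VehicleHttpClient client = new VehicleHttpClient("http://192.168.1.53:8080/");
        String url = client.setTemperatureUrl(16.5f);
        System.out.println(url);
        System.out.println("status: " + client.get(url));
    }
}
```

Keep in mind that an Android app needs the `android.permission.INTERNET` permission for this, and since Android 9 plain `http://` traffic is blocked by default unless cleartext traffic is explicitly allowed (for example with `android:usesCleartextTraffic="true"` in the manifest).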

Endpoint overview

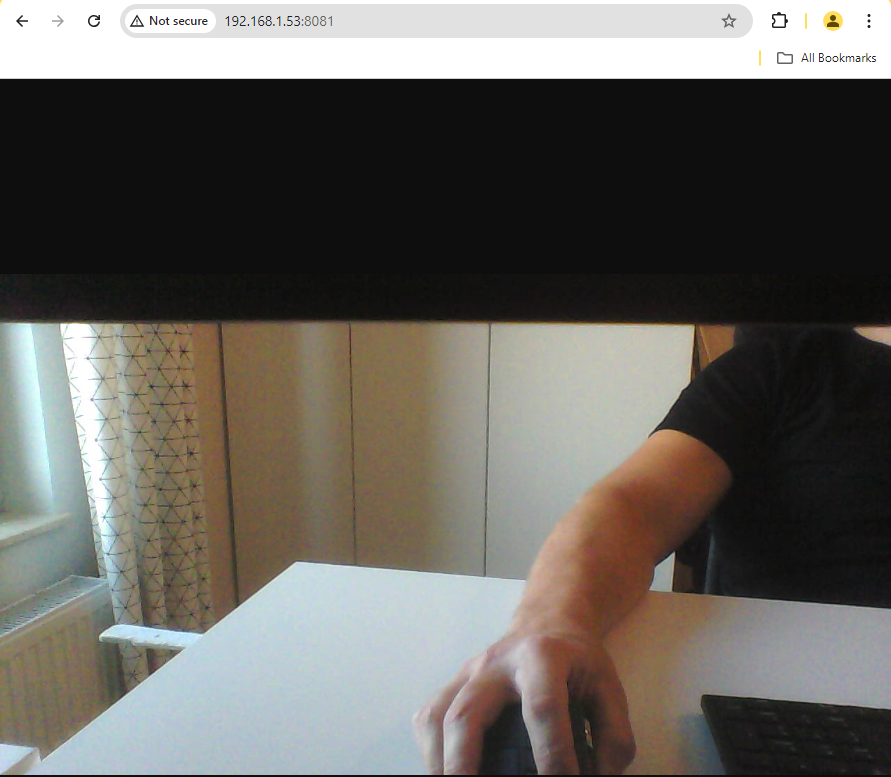

In the above examples, we used two endpoints: http://192.168.1.53:8080/ and http://192.168.1.53:8081/.

- The first endpoint is the server implemented in the VHAL of AAOS 14, which handles commands for controlling car functions.

- The second endpoint is the server implemented in the EVS Driver application. It retrieves images from the car’s camera and sends them as an HTTP response.

For more information on EVS setup in AAOS, you can refer to the articles "Android AAOS 14 - Surround View Parking Camera: How to Configure and Launch EVS (Exterior View System)" and "Android AAOS 14 - EVS network camera."

EVS driver photo provider

In our example, the EVS Driver application is responsible for providing the photo from the car's camera. This application is located in the packages/services/Car/cpp/evs/sampleDriver/aidl/src directory. We will create a new thread within this application that runs an HTTP server. The server will handle requests for images using the v4l2 (Video4Linux2) interface.

Each HTTP request will initialize v4l2, set the image format to JPEG, and specify the resolution. After capturing the image, the data will be sent as a response, and the v4l2 stream will be stopped. Below is an example code snippet that demonstrates this process:

    #include <errno.h>
    #include <fcntl.h>
    #include <linux/videodev2.h>
    #include <stdint.h>
    #include <stdio.h>
    #include <string.h>
    #include <sys/ioctl.h>
    #include <sys/mman.h>
    #include <unistd.h>

    #include "cpp-httplib/httplib.h"

    #include <utils/Log.h>
    #include <android-base/logging.h>

    uint8_t *buffer;
    size_t bufferLength;
    int fd;

    // Retries ioctl when it is interrupted by a signal and logs any other failure.
    static int xioctl(int fd, int request, void *arg)
    {
        int r;

        do r = ioctl(fd, request, arg);
        while (-1 == r && EINTR == errno);

        if (r == -1) {
            ALOGE("xioctl error: %d, %s", errno, strerror(errno));
        }

        return r;
    }

    // Logs the driver capabilities, then fills in the capture format (MJPEG, 1280x720).
    int print_caps(int fd)
    {
        struct v4l2_capability caps = {};
        if (-1 == xioctl(fd, VIDIOC_QUERYCAP, &caps))
        {
            ALOGE("Querying Capabilities");
            return 1;
        }

        // caps.version uses the KERNEL_VERSION layout: major << 16 | minor << 8 | patch
        ALOGI("Driver Caps:\n"
              " Driver: \"%s\"\n"
              " Card: \"%s\"\n"
              " Bus: \"%s\"\n"
              " Version: %d.%d\n"
              " Capabilities: %08x\n",
              caps.driver,
              caps.card,
              caps.bus_info,
              (caps.version >> 16) & 0xff,
              (caps.version >> 8) & 0xff,
              caps.capabilities);

        // Zero-initialize so that fields we do not set are not left undefined
        v4l2_format format = {};
        format.type = V4L2_BUF_TYPE_VIDEO_CAPTURE;
        format.fmt.pix.pixelformat = V4L2_PIX_FMT_MJPEG;
        format.fmt.pix.width = 1280;
        format.fmt.pix.height = 720;

        LOG(INFO) << __FILE__ << ":" << __LINE__ << " Requesting format: "
                  << ((char*)&format.fmt.pix.pixelformat)[0]