A path to a successful AI adoption

Artificial Intelligence has become a rather overused term in recent years, yet it is hard to deny that it is the greatest technological promise of our times. Every industry strongly believes that AI will empower it to introduce innovations and improvements that drive business, increase sales, and reduce costs.

But even though AI is no longer new, companies still struggle to adopt and implement AI-driven applications and systems. That applies not only to large-scale implementations (which are still very rare) but often to the very first projects and initiatives within an organization. In this article, we will shed some light on how to successfully adopt AI and benefit from it.

AI adoption - how to start?

How to start then? The answer might sound trivial, but it goes like this: start small and grow incrementally. Just like any other innovation, AI cannot be rolled out throughout the organization at once and then harvested across various business units and departments. The very first step is to start with a pilot AI adoption project in one area, prove its value and then incrementally scale up AI applications to other areas of the organization.

But how to pick the right starting point? A good AI pilot project candidate should have certain characteristics:

- It should create value in one of 3 ways:

  - By reducing costs

  - By increasing revenue

  - By enabling new business opportunities

- It should give a quick win (6-12 months)

- It should be meaningful enough to convince others to follow

- It should be specific to your industry and core business

At Grape Up, we help our customers choose the initial AI project candidate by following a proven process. The process consists of several steps and eventually leads to implementing a single pilot AI project in production.

Step 1: Ideation

We start by identifying possible areas in the organization that might be enhanced with AI, e.g., parts of processes to improve, problems to solve, or tasks to automate. This part of the process is the most essential, as it becomes the baseline for all subsequent phases. Therefore, it is crucial to execute it together with the customer and to ensure the customer understands what AI can do for their organization. To enable that, we explain the AI landscape, including basic technology, data, and what AI can and cannot do. We also show example AI applications from the customer's industry or similar industries.

Having that as a baseline, we move on to the more interactive part of that phase. Together with customer executives and business leaders, we identify major business value drivers as well as current pain points & bottlenecks through collaborative discussion and brainstorming. We try to answer questions such as:

- What in current processes impedes your business development?

- What tasks in current processes are repeatable, manual, and time-consuming?

- What are your pain points, bottlenecks, and inefficiencies in your current processes?

This step results in a list of several (usually 5 to 10) ideas ready for further investigation on where to potentially start applying AI in the organization.

Step 2: Business value evaluation

The next step aims at detailing the previously selected ideas. Again, together with the customer, we define detailed business cases describing how problems identified in step 1 could be solved and how these solutions can create business value.

Every idea is broken down into a more detailed description using the Opportunity Canvas approach - a simple model that helps define the idea better and consider its business value. Using the completed canvas as the baseline, we analyze each concept and evaluate it against the business impact it might deliver, focusing on business benefits and user value but also on expected effort and cost.

Eventually, we choose 4-8 ideas with the highest impact and the lowest effort and describe detailed use cases (from a business and a high-level functional perspective).

Step 3: Technical evaluation

In this phase, we evaluate the technical feasibility of the previously identified business cases, in particular whether AI can address the problem, what data is needed, whether the data is available, what the expected cost and timeframe are, etc.

This step usually requires technical research to identify the AI tools, methods, and algorithms that could best address the given computational problem; data analysis to verify what data is needed vs. what data is available; and often small-scale experiments to better validate the feasibility of the concepts.

We finalize this phase with a list of 1-3 PoC candidates that are technically feasible to implement and, more importantly, are verified to have a business impact and to create business value.

Step 4: Proof of Concept

Implementation of the PoC project is the goal of this phase and involves data preparation (to create data sets whose relationship to the model targets is understood), modeling (to design, train, and evaluate machine learning models), and eventual deployment of the PoC model that best addresses the defined problem.

It results in a working PoC that creates business value and is the foundation for the production-ready implementation.

How to move AI adoption forward?

Once the customer is satisfied with the PoC results, they want to productionize the solution to fully benefit from the AI-driven tool. Moving pilots to production is also a crucial part of scaling up AI adoption. If successful projects remain just experiments and PoCs, it is difficult for a company to move forward and apply AI to other processes within the organization.

To summarize the most important aspects of a successful AI adoption:

- Start small – do not try to roll out innovation globally at once.

- Begin with a pilot project – pick the meaningful starting point that provides business value but also is feasible.

- Set realistic expectations – do not perceive AI as the ultimate solution for all your problems.

- Focus on quick wins – choose a solution that can be built within 6-12 months to quickly see the results and benefits.

- Productionize – move out from the PoC phase to production to increase visibility and business impact.

Automotive Tech Week Megatrends: Key takeaways

Over the last couple of months, as we have all had to cope with COVID-19, people all over the world have been saying that virtual events are pointless and not the same as they used to be. Presentations are pre-recorded, and speakers read the text instead of presenting. If you ask the speaker a question, you may not get an answer because they are not there, and the audience is very limited.

Luckily, not all events go like this. A perfect example of a great virtual event is Automotive Tech Week Megatrends, which took place 2 weeks ago. It offered great speakers and topics insightful even for the most demanding automotive industry enthusiasts, it drew a lot of attendees, and, most importantly, everything happened live!

If you are a member of the automotive community, or at least follow the industry trends, you have probably noticed how much the industry has changed during the last few years. Once very conservative, it has moved to the forefront of digital disruption, adopting the newest technologies and trends faster than ever. How did that happen? High-speed internet availability and LTE and 5G network coverage have increased considerably. Extreme miniaturization of fast, low-energy silicon chips has made modern technologies like augmented reality and autonomous driving available to users, and it has also completely changed the way OEMs build cars. We can also observe new channels of monetization in the industry.

The results are spectacular. Not so long ago, introducing a new model or solution used to take a few years; now automotive enterprises work at a rapid pace, facing new competitors on the market like Tesla or Rimac. Subscription-based monetization models are here to stay, as well as huge infotainment screens and very sophisticated, intelligent driver assistants.

But technology is not the only aspect of the fast-changing world. The other, maybe even more important, trend is ecology in mobility, with the main focus on electric and hybrid drivetrains. Reducing the CO2 footprint of the automotive industry by decreasing the share of fossil fuels in favor of electric or even fuel cell motors has become important; it is limited only by charging infrastructure and by battery capacity, which is still awaiting a game-changing invention.

So what are the next fundamental trends for the industry? Which of the trends we can notice now are here to stay, and which are just fads? Speakers of Automotive Tech Week Megatrends tried to answer that specific question in the last week of January 2021.

Presentations covered a wide spectrum of topics: electric cars and charging infrastructures, urban mobility, and mobility as a service, user experience based vehicle and infotainment designs, V2X, and modern vehicle system infrastructure. Let’s quickly walk through the most interesting ones.

Evolving Mobility in an everchanging digital world

In the opening presentation, Stephen Zeh from Silvercar, Audi, explained the challenges of shared mobility, the evolution of their rental services, and the value of digital offerings, especially subscription-based ones. Shared mobility is experiencing dramatic growth caused by a shift in customer behavior and approach: owning a car is no longer a goal, but people still want to travel by car. Different kinds of travel require different vehicles: small cars for short trips, bigger ones for family tours, micromobility like electric bikes or scooters for urban travel, or shared mobility like Uber replacing taxis.

The automotive industry had to quickly adapt to the pandemic situation, especially when, in April 2020, the ride-hailing customer count plummeted as the fear of infection emerged in society and demand for contactless, safe car rental surged.

All of that caused major changes in the offering of Audi dealerships, which is no longer limited to vehicle sales but covers a much wider set of services for their clients.

How user experience drove design for the all-new F-150

The next day, Ehab Kaod, Chief Designer for F-150 at Ford, explained the challenges of designing a modern car by starting from user experience, affecting interior, exterior, and infotainment design and architecture.

The process started with on-site and remote customer research to find the features customers consider important, their pain points in previous truck models, and how the new F-150 could solve their everyday issues. All of that resulted in new UX inventions like a stow-away, foldable shifter, a bigger work surface inside, a tailgate workbench, or an improved front seat design that allows a customer to take a nap in the vehicle.

5G & V2X – shaping the future of automotive

Another important presentation, delivered by Claes Herlitz, VP and Head of Global Automotive Services at Ericsson, covered a relevant topic: always-connected vehicle infrastructure.

The challenges of a connected vehicle are network bandwidth and connection stability, but also prioritization of services and the cost factor for the customer.

Once these challenges are overcome, organic growth can follow from the new services that become available: fast over-the-air updates that improve the car and add new features, and data used for analytics to find ways to improve cost efficiency and user experience.

Finding a balance between customer consent, data & new products

Next, Magnus Gunnarsson from Ericsson, Sebastian Lasek from Skoda Auto, Manfred Wiedemann from Porsche AG, and Diego Villuendas Pellicero from Seat took part in a panel on customer data handling from the perspective of different regions (Europe, the USA, and China), on using the data to design and evaluate new products, and on strategies for increasing customer acceptance of secure data handling.

With cars becoming always-connected IoT devices, the amount of data about customer behavior and the vehicles themselves grows exponentially. This may raise questions about data security and about user consent for data storage and its use for e-commerce, marketing, and improving user experience.

In Europe, the common framework for handling this situation is GDPR (General Data Protection Regulation), which helps to establish a common baseline for all manufacturers regarding data security and customer consent. It also helps OEMs feel safe when operating under this kind of strict regulation.

On the other hand, the big question arises if other regions, like America or China, should use the same processes, as both legal regulations and cultural differences may be an important aspect to consider when, for example, the vehicle infotainment asks customers for license acceptance or data usage consent when this is not really necessary.

All things considered, the big challenge for data monetization in Europe now is getting higher user acceptance.

The future of in-car payments – converting the car to a marketplace

Will Judge, Vice-President for Mobility Payments at Mastercard, explained in his presentation how car infotainment can be converted into a digital marketplace. For most customers, the convenience of digital commerce is not as important as its security. They want to feel they control the payment process with full knowledge about all parties that can access their sensitive data. Modern credit card providers enable digital transactions using secure tokens of the payment card that can be transferred through the internet, as well as heuristics and AI for fraud detection, making the whole process safer.

With this level of security, digital purchases can be safely offered in the car, directly from the infotainment system, in a rather easy-to-use manner for the user.

Automotive Tech Week Megatrends covered most of the hot topics that the industry is currently talking about. Great speakers representing world-recognized brands like Skoda and Audi showed some great results of their approach to innovation but most importantly how they are changing the way their organizations think of monetizing cars. Having advertisements appearing on your infotainment and a marketplace in Skoda cars seems to be the way that others will follow in the coming months/years. It is clearly visible how the Internet and Internet of Things, ecology, and data-driven approaches are guiding the industry to expand the market presence through sharing economy and subscription-based mobility. It seems that C.A.S.E strategy (Connected-Autonomous-Shared-Electric) is still the way to go!

Automation testing: Making tests independent from existing data

Each test automation project is different. The apps are different, the approach is different, even though the tools and frameworks used might seem to be the same. Each project brings different challenges and requirements, resulting in a need to adapt to solutions being delivered - although all of it is covered by the term "software testing". This time we want to tackle the issue of test data being used in automation testing.

Setting up the automation testing project

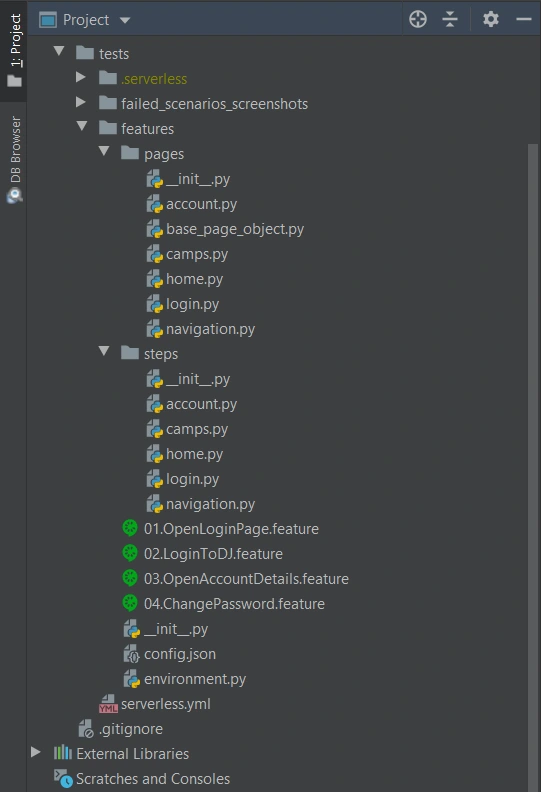

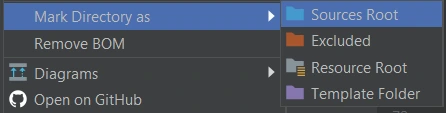

Let's consider the following scenario: as usual, our project implements the Page Object Pattern approach with the use of Cucumber. This part is no novelty: a tidy project structure and test scenarios written in Gherkin, which is easily understandable by non-technical team members. However, the application being tested required total independence from data existing in the database, even in Development and QA environments.

The solution implemented by our team had to ensure that every Test Scenario (laid out in a Feature File, which contains the steps for testing particular functionalities) was completely independent from data existing in the environment and did not interfere with other Test Cases. Just as importantly, the tests were meant to run simultaneously on Selenium Grid. In a nutshell, Feature Files couldn't rely on any data (apart from login credentials) and had to create all of the test data each time they were run.

To simplify the example we are going to discuss, we will describe an approach where only one user will be used to log in to the app. Its credentials remain unchanged so there are two things to do here to meet the project criteria: the login credentials have to be passed to the login scenario and said scenario has to be triggered before each Feature File since they are run simultaneously by a runner.

Independent logging in

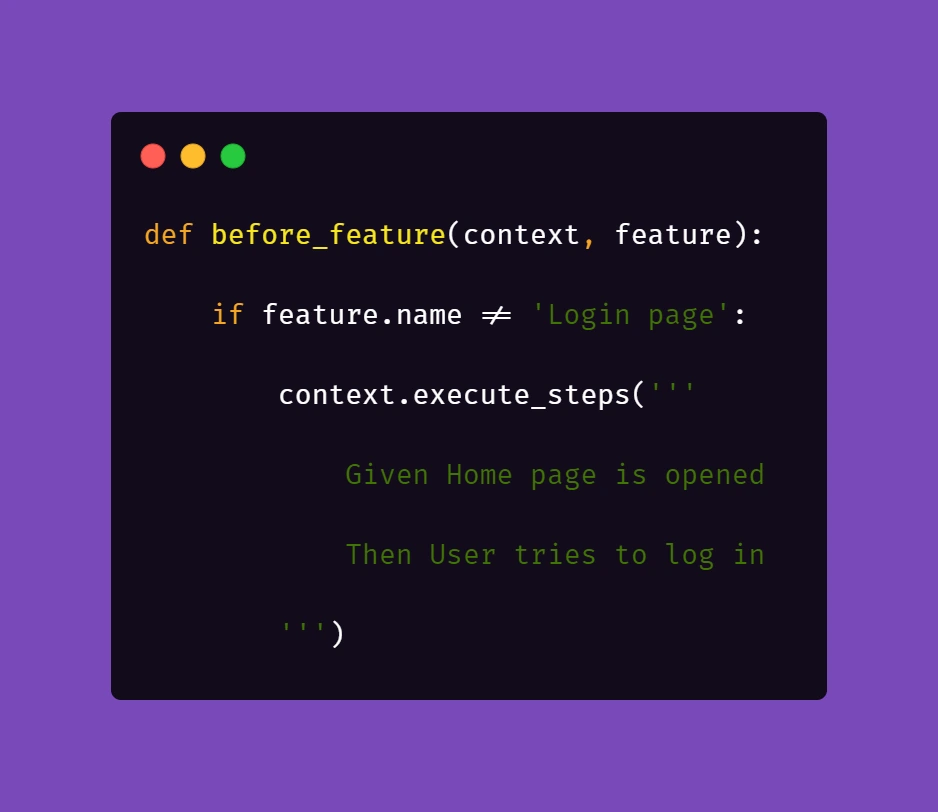

The first part is really straightforward; in your environment file you need to include a similar block of code:

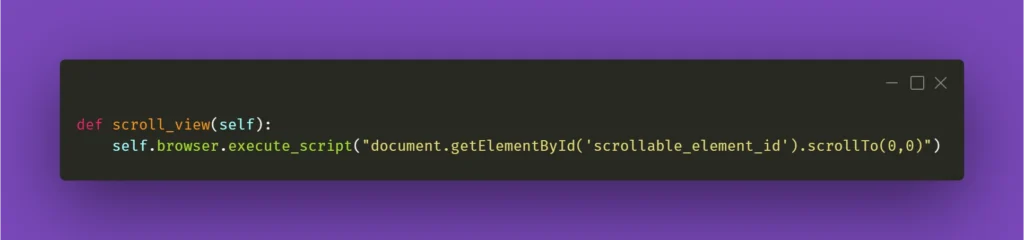

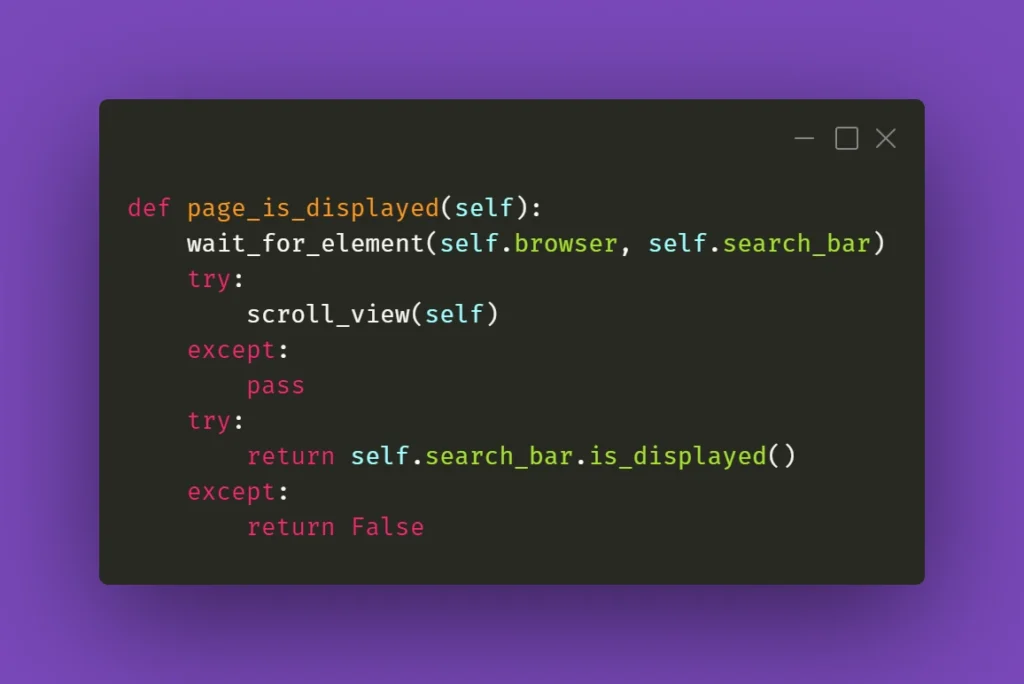

What this does is the following: before each feature file that is not the Login scenario, Selenium will open the homepage of the project and attempt to log in.

Then, we need to ensure that if a session is active, logging in is skipped.

Therefore, in the second step, 'User tries to log in', we verify whether the user's session is still active within the run of a particular feature file. In our case, when the homepage is opened and a logged-in user's session is active, the app's landing page is opened. Otherwise, the user is redirected to the login page. So in the above block of code we simply verify whether the login page is opened, and if the login_prompt_is_displayed method returns True, the login steps are executed.
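As a rough illustration of the logic described above (not the project's actual environment file), the hook below assumes a behave-style `before_feature(context, feature)` function. The `login` tag, `context.page`, `context.credentials`, and the page methods `open_homepage` and `log_in` are hypothetical names introduced for this sketch; only `login_prompt_is_displayed` comes from the text.

```python
def before_feature(context, feature):
    """Open the homepage before each feature and log in only when needed."""
    # Assumption: the login feature itself carries a hypothetical "login" tag.
    if "login" in feature.tags:
        return

    page = context.page  # hypothetical page object wrapping the Selenium driver
    page.open_homepage()

    # An active session lands on the app's landing page; otherwise the login
    # prompt is shown and the login steps have to be executed.
    if page.login_prompt_is_displayed():
        page.log_in(context.credentials["user"], context.credentials["password"])
```

Because the check runs per feature file, each parallel runner decides on its own whether it still has a session, which is what keeps simultaneous runs independent of each other.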

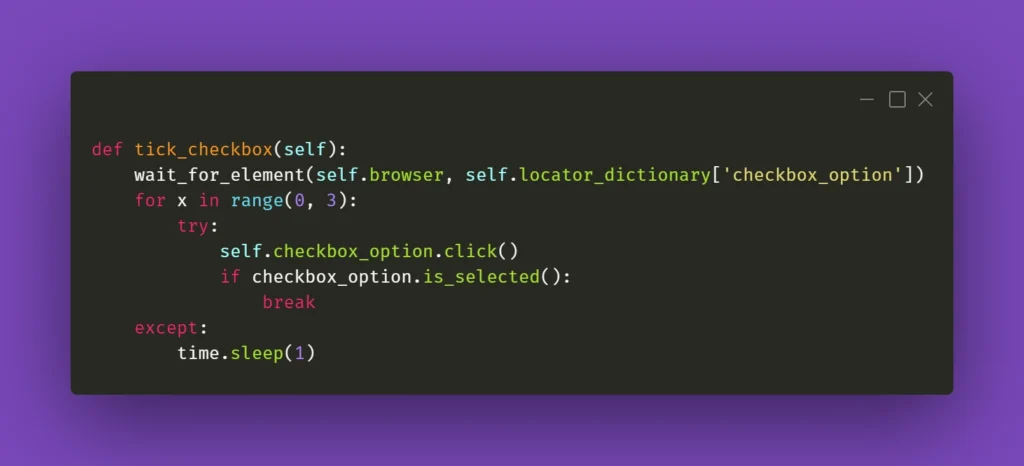

Once we have dealt with logging in during simultaneous test runs, we need to handle the data being used during the tests. Again, let us simplify the example: let's assume that our hypothetical application allows its users - store staff - to add and review products the company has to offer. The system allows manipulating many data fields that affect other factors in workflows, e.g. product bundles, discounts, and suppliers. On top of that, the stock constantly grows and changes, so even in test environments we shouldn't simply run tests against migrated data if we want consistent test results.

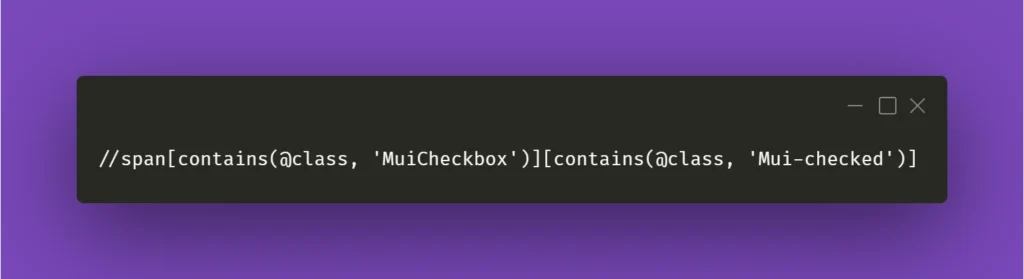

As a result of that, our automation tests will have to cover the whole flow, adding all the necessary elements to the system to test against later on. In short: if we want to cover a scenario for editing certain data in a product, the tests will need to create that specific product, save it, search for it, manipulate the data, save changes and verify the results.

Create and manipulate

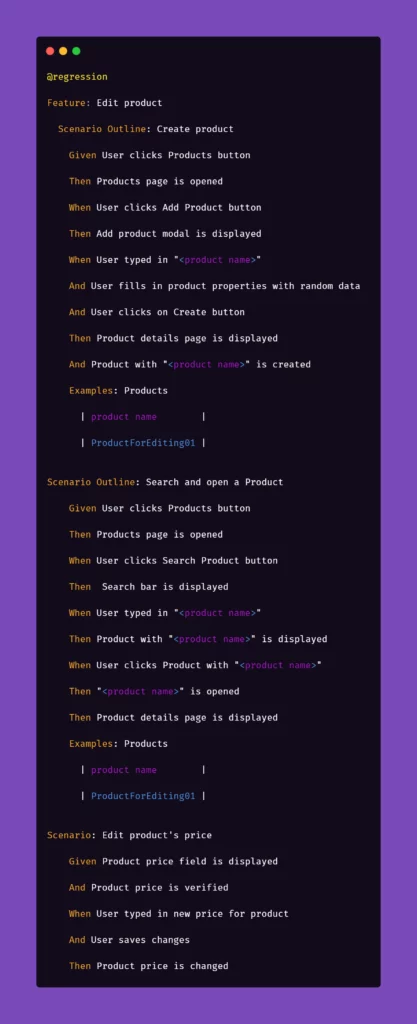

Below are the test steps for the above scenario laid out in Gherkin to illustrate what it will look like:

While the basic premise of the above scenarios may seem straightforward, the tricky part may be ensuring consistency during test runs. Of course, scripting a single scenario of adding an item in the app sounds simple, but what if we had to do that a couple dozen times during a regression suite run?

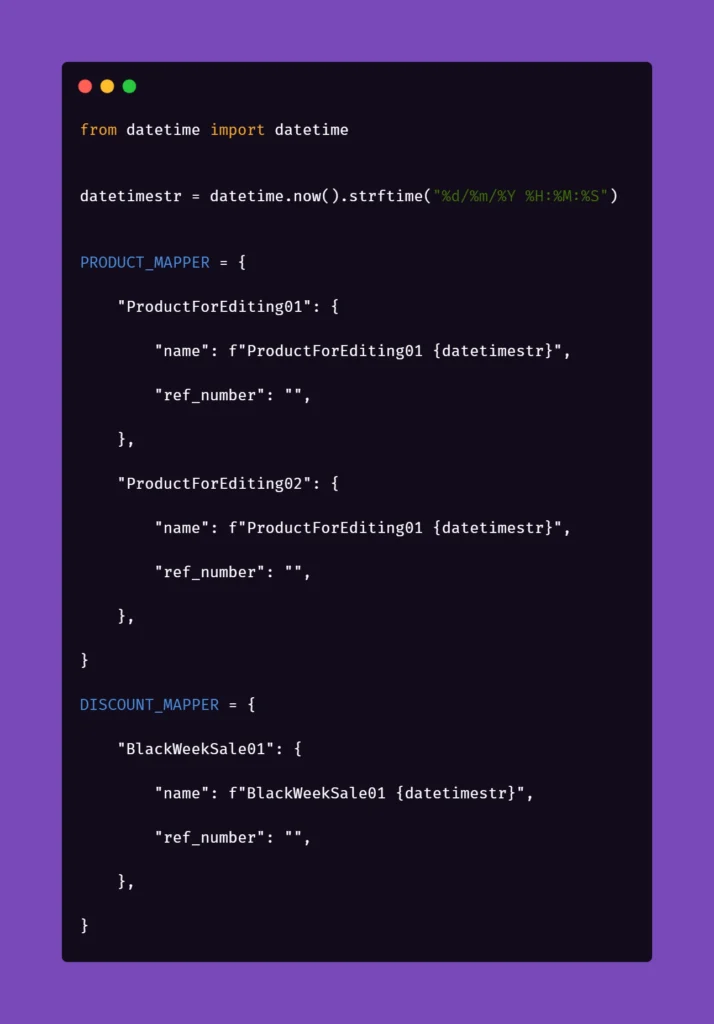

We want to have consistent, trackable test data while avoiding multiplying lines of code. To achieve that, we introduced another file to the project structure called 'globals' and placed it in the directory of feature files. Please note that in the above snippet, we extensively use "Examples" sections along with the "Scenario Outline" approach in Gherkin. We do that to pass parameters into test step definitions and methods that create and manipulate the actions we want to test in the application. That first stage of parametrization of a test scenario works in conjunction with the aforementioned 'globals' file. Let's consider the following contents of such file:
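The original listing is not reproduced here, so the following is only a minimal sketch of what such a file might contain. It assumes the stamp is computed once per test run, so that later steps can find the object an earlier step created; `RUN_STAMP`, `stamped`, `SUPPLIER_MAPPER`, and the example keys are hypothetical, and only `PRODUCT_MAPPER` is named in the article.

```python
from datetime import datetime

# One stamp per test run: unique across runs, stable within a run so that
# later steps can search for the object that an earlier step created.
RUN_STAMP = datetime.now().strftime("%Y%m%d-%H%M%S-%f")


def stamped(core_name):
    """Return the core name followed by the run's datetime stamp."""
    return f"{core_name} {RUN_STAMP}"


# Keys are the names used in the Gherkin "Examples" tables (hypothetical values).
PRODUCT_MAPPER = {
    "Test product": {"name": stamped("Test product"), "reference_number": ""},
}

SUPPLIER_MAPPER = {
    "Test supplier": {"name": stamped("Test supplier"), "reference_number": ""},
}
```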

Inside the ‘globals’ file, you can find mappers for each type of object that the application can create and manipulate, for now including only a name and a reference number as an empty string. As you can see, each element will receive a datetime stamp right after its core name, each time the object in the mapper is called for. That will ensure the data created will always be unique. But what is the empty string for, you may ask?

The answer is as simple as its usage: we can store different parameters of objects inside the app that we test. For example, if a certain object can be found only by its reference number, which is unique and assigned by the system after creating, e.g., a product, we might want to store that in the mapper to use it later. But why stop there? The possibilities go pretty much as far as your imagination and patience go. You can use mappers to pass on various parameters to test steps if you need:

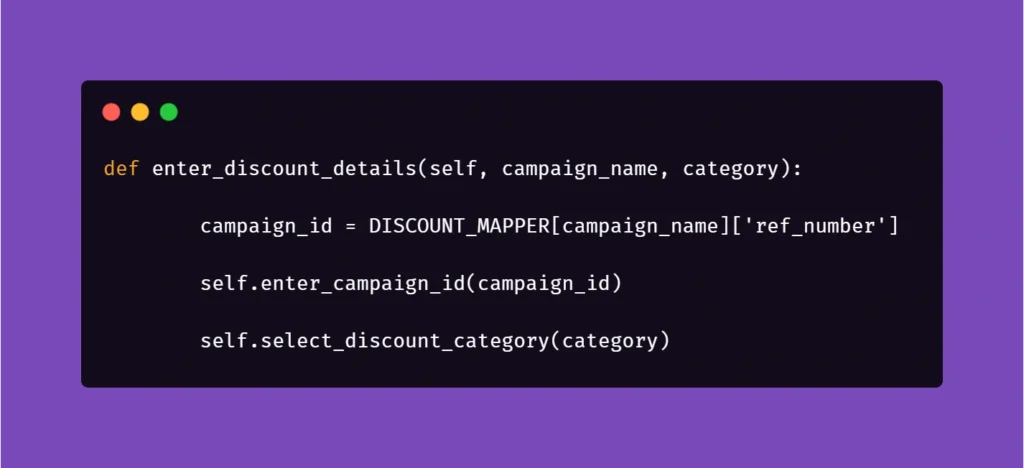

As you can see, the formula of mappers can really come in handy when your test suite needs to create somewhat repeatable, custom data for tests. The above snippet includes parameters for the creation of an item in the app which is a promotional campaign including certain types of products. Above that, you can see a mapping for a product that falls into one of the categories qualifying it for the promotional campaign. So hypothetically, if you want to test a scenario where enabling a promotional campaign will automatically discount certain products in the app, the mapping could help with that. But let's stick to basic examples to illustrate how to pass these parameters into the methods behind test steps.

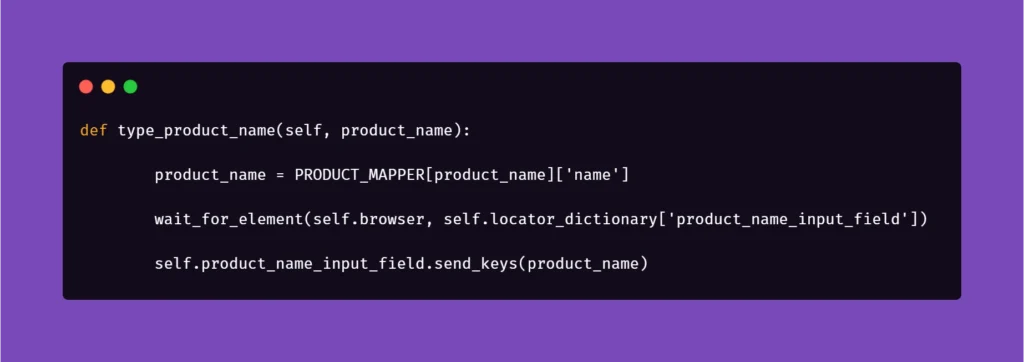

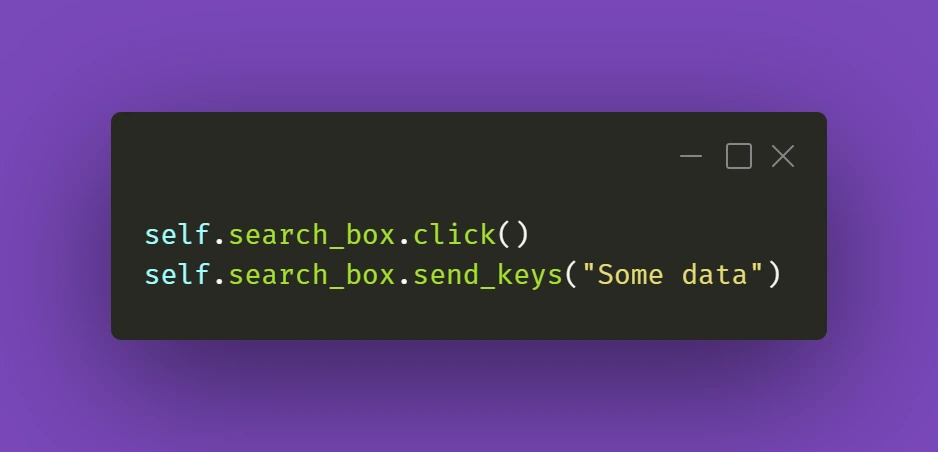

Let us begin with the concept of creating products mentioned in the Gherkin snippet. Below is the excerpt from /steps file for step "User typed in "<product name>"":

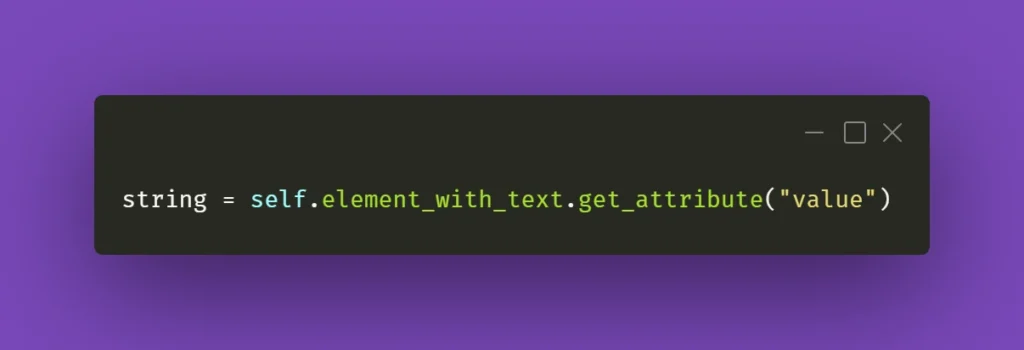

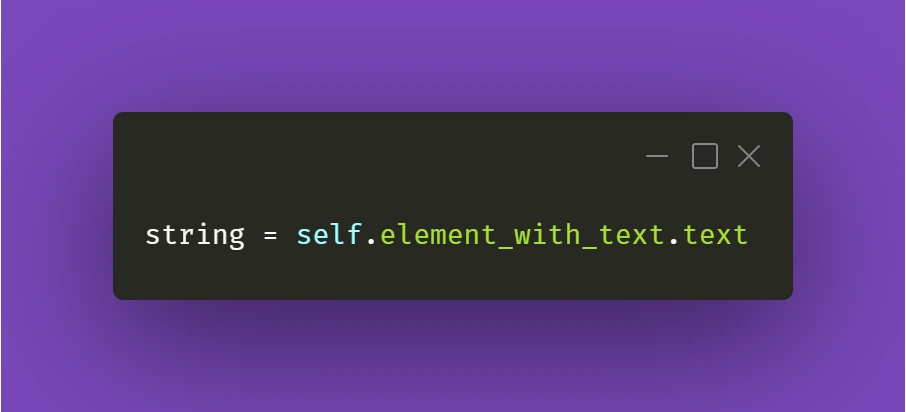

Above, we just simply pass the parameter from Gherkin to the method. Nothing fancy here. But it gets more interesting in /pages file:

First, you'll need to import a globals file to get to the data mapped out there:

Next, we want to extract the data from mapper:

Basically, the name for the product entered in the Examples section in Scenario Outline matches the name in PRODUCT_MAPPER. Used as a variable, it allows Selenium to input the same name with a timestamp each time the scenario asks for the creation of a certain object. This concept can be used quite extensively in the test code, parameterizing anything you need.
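A sketch of how a page-object method might perform that lookup is shown below. It is an illustration under assumptions rather than the project's code: `ProductPage`, `type_product_name`, and the stamped value are hypothetical, and the mapper is defined inline as a stand-in for the import from the globals file. The `name_input` argument is any element exposing `send_keys()`, as Selenium elements do.

```python
# Stand-in for the mapper that would normally be imported from the globals file.
PRODUCT_MAPPER = {
    "Test product": {"name": "Test product 20210120-194429", "reference_number": ""},
}


class ProductPage:
    """Hypothetical page object; `name_input` is any element with send_keys()."""

    def __init__(self, name_input):
        self.name_input = name_input

    def type_product_name(self, product_name):
        # The Gherkin parameter is only a key; the stamped value is what gets typed.
        stamped_name = PRODUCT_MAPPER[product_name]["name"]
        self.name_input.send_keys(stamped_name)
        return stamped_name
```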

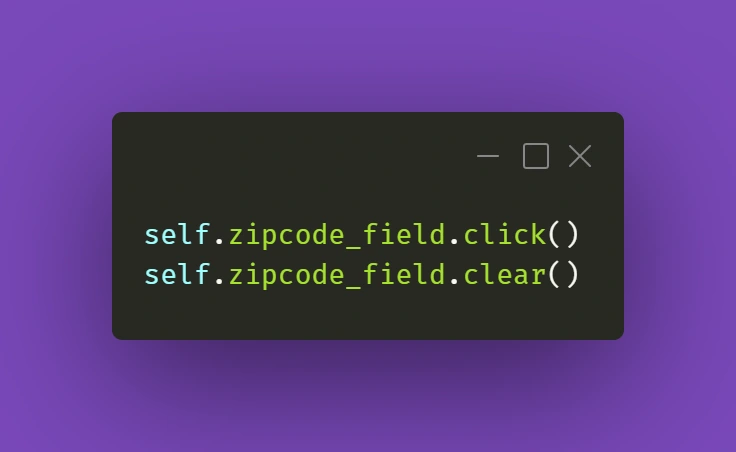

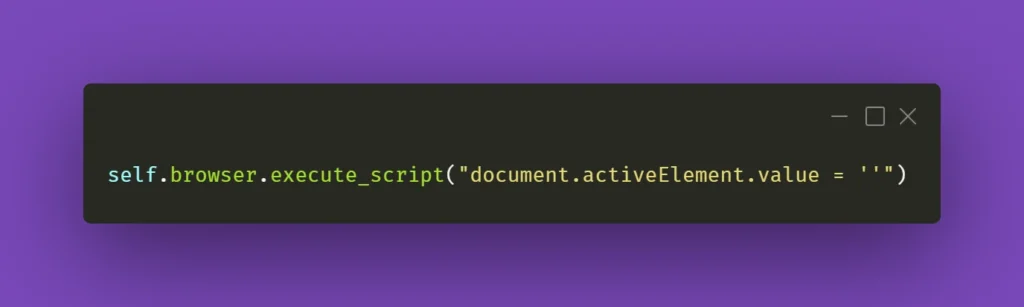

And another example:

Here, we get the data from mapper to create a specific locator to use in a specific context. This way, if the app supports it, test code can be reduced due to parametrization.
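One possible shape of such a parametrized locator is sketched here, using the reference number stored in the mapper after the product has been created. The helper names, the XPath, and the table layout it implies are all assumptions made for illustration; the tuple mirrors the `(By.XPATH, value)` form Selenium accepts, written with the plain string `"xpath"` so the sketch needs no imports.

```python
PRODUCT_MAPPER = {"Test product": {"name": "Test product", "reference_number": ""}}


def remember_reference(product_name, reference_number):
    """Store the system-assigned reference number once the product exists."""
    PRODUCT_MAPPER[product_name]["reference_number"] = reference_number


def product_row_locator(product_name):
    """Build an XPath locator for the table row showing the product's reference."""
    reference = PRODUCT_MAPPER[product_name]["reference_number"]
    if not reference:
        raise ValueError(f"No reference number stored yet for {product_name!r}")
    # ("xpath", ...) has the same shape as a Selenium (By.XPATH, value) tuple.
    return ("xpath", f"//tr[td[normalize-space()='{reference}']]")
```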

We hope that the concepts presented in this article will help you get on with your work on test automation suites. These ideas should help you automate tests faster, more cleverly, and much more efficiently, resulting in maximum consistency and stable results.

Kafka transactions - integrating with legacy systems

The article covers setting up and using Kafka transactions, specifically in the context of legacy systems that run on JPA/JMS frameworks. We look at various issues that may occur from using different TransactionManagers and how to properly use these different transactions to achieve desired results. Finally, we analyze how Kafka transactions can be integrated with JTA.

Many legacy applications were built on JMS consumers with the JPA database, relying on transactions to ensure exactly-once delivery. These systems rely on the stability and surety of transactional protocols so that errors are avoided. The problem comes when we try to integrate such systems with newer systems built upon non-JMS/JPA solutions – things like Kafka, MongoDB, etc.

Some of these systems, like MongoDB, actively work to make the integration with legacy JMS/JPA easier. Others, like Kafka, introduce their own solutions to such problems. We will look more deeply into Kafka and the ways we can integrate it with our legacy system.

If you want some introduction to Kafka fundamentals, start with this article covering the basics.

Classic JMS/JPA setup

First, let us do a quick review of the most common setups for legacy systems. They often use JMS to exchange messages between different applications, be it IBM MQ, RabbitMQ, ActiveMQ, Artemis, or other JMS providers – these are used with transactions to ensure exactly-once delivery. Messages are then processed in the application, oftentimes saving states in a database via JPA API using Hibernate/Spring Data to do so. Sometimes additional frameworks are used to make the processing easier to write and manage, but in general, the processing may look similar to this example:

    @JmsListener(destination = "message.queue")
    @Transactional(propagation = Propagation.REQUIRED)
    public void processMessage(String message) {
        exampleService.processMessage(message);
        MessageEntity entity = MessageEntity.builder().content(message).build();
        messageDao.save(entity);
        exampleService.postProcessMessage(entity);
        messageDao.save(entity);
        jmsProducer.sendMessage(exampleService.createResponse(entity));
    }

Messages are read, processed, saved to the database, processed further, updated in the database, and the response is sent to a further JMS queue. It is all done in a transactional context in one of two possible ways:

1) Using a separate JMS and JPA transaction during processing, committing a JPA transaction right before committing JMS.

2) Using JTA to merge JMS and JPA transactions so that both are committed or aborted at the same time.

Both solutions have their upsides and pitfalls; neither of them fully guarantees a lack of duplicates, though JTA definitely gives better guarantees than separate transactions. JTA also does not run into the problem of idempotent consumers; it does, however, come with an overhead. In either case, we may run into problems if we try to integrate this with Kafka.

What are Kafka transactions?

The Kafka broker is fast and scalable, but in its default mode it does not provide an exactly-once message delivery guarantee. We may see duplicates, or we may see some messages lost, depending on circumstances, which is something that legacy systems based on transactions cannot accept. As such, we need to switch Kafka to transactional mode, enabling the exactly-once guarantee.

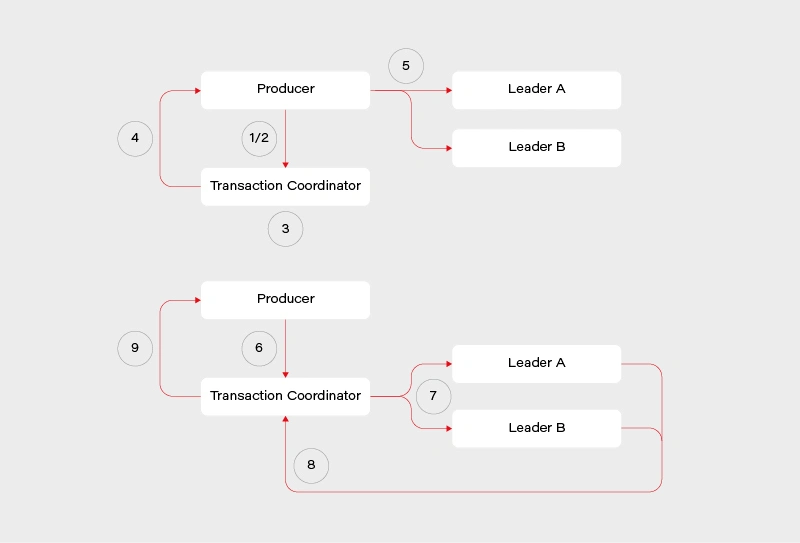

Transactions in Kafka are designed so that they are mainly handled on the producer/message broker side, rather than the consumer side. The consumer is effectively an idempotent reader, while the producer and the coordinator handle the transaction.

This reduces the performance overhead on the consumer side, though at the cost of the broker side. The flow looks roughly like this:

1) Determine which broker is the coordinator in the group

2) Producer registers its transaction-id with the coordinator (initTransactions())

3) The coordinator assigns a producer id and an epoch to that transaction-id

4) Producer receives a response from the coordinator with the producer id and epoch, and starts a transaction (beginTransaction())

5) Producer sends its messages to the leading brokers of data partitions together with the producer id and epoch

6) Producer sends commitTransaction() request to the coordinator and awaits the response

7) Coordinator sends commitTransaction() request to every leader broker and awaits their responses

8) Leader brokers set the transaction status to committed for the written records and send the response to the coordinator

9) Coordinator sends transaction result to the producer

This does not contain all the details; explaining everything is beyond the scope of this article, and many sources can be found on the subject. It does, however, give us a clear view of the transaction process: the main player responsible is the transaction coordinator. It notifies leaders about the state of the transaction and is responsible for propagating the commit. There is some locking involved on the producer/coordinator side that may affect performance negatively depending on the length of our transactions.

Readers, meanwhile, simply operate in read-committed mode, so they will be unable to read messages from transactions that have not been committed.
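To make the read-committed behaviour concrete, here is a toy in-memory model, written in Python purely for illustration. It is not Kafka client code: `ToyPartition` and its methods are hypothetical names, and the model ignores brokers, coordinators, and offsets. It only shows that records of an open transaction stay invisible, records of an aborted one are skipped, and a reader stops at the first record whose transaction is still open.

```python
class ToyPartition:
    """Toy model of one partition as seen by a read-committed reader."""

    def __init__(self):
        self._records = []  # (transaction id, payload) in write order
        self._state = {}    # transaction id -> "open" | "committed" | "aborted"

    def append(self, tx_id, payload):
        # Records are written immediately, before the transaction outcome is known.
        self._state.setdefault(tx_id, "open")
        self._records.append((tx_id, payload))

    def commit(self, tx_id):
        self._state[tx_id] = "committed"

    def abort(self, tx_id):
        self._state[tx_id] = "aborted"

    def read_committed(self):
        visible = []
        for tx_id, payload in self._records:
            state = self._state[tx_id]
            if state == "open":
                break  # nothing past the first open transaction is readable yet
            if state == "committed":
                visible.append(payload)
            # records of aborted transactions are skipped
        return visible
```

With two records appended under one transaction, `read_committed()` returns an empty list until `commit` is called for that transaction.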

Kafka transactions - setup and pitfalls

We will look at a practical example of setting up and using Kafka transactions, together with potential pitfalls on the consumer and producer side, also looking at specific ways Kafka transactions work as we go through examples. We will use Spring to set up our Kafka consumer/producer. To do this, we first have to import Kafka into our pom.xml:

    <!-- Kafka -->
    <dependency>
        <groupId>org.springframework.kafka</groupId>
        <artifactId>spring-kafka</artifactId>
    </dependency>

To enable transactional processing for the producer, we need to tell Kafka to explicitly enable idempotence, as well as give it a transaction-id:

    producer:
      bootstrap-servers: localhost:9092
      transaction-id-prefix: tx-
      properties:
        enable.idempotence: true
        transactional.id: tran-id-1

Each producer needs its own unique transaction-id; otherwise, we will encounter errors if more than one producer attempts to perform a transaction at the same time. It is crucial to make sure that each instance of an application in a cloud environment has its own unique prefix/transaction-id. Additional setup must also be done for the consumer:
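One way to obtain such a unique value is to derive the prefix from a stable identifier of the running instance. The sketch below is in Python for brevity and rests on a loud assumption: that the platform exposes a unique, stable instance name in the `HOSTNAME` environment variable. The function name and the `tx-` default are illustrative only; the result would be supplied as the transaction-id-prefix value.

```python
import os


def transaction_id_prefix(base="tx-", env=None):
    """Build a per-instance prefix such as "tx-orders-0-"."""
    env = os.environ if env is None else env
    # Assumption: the platform exposes a stable, unique instance name in HOSTNAME.
    instance = env.get("HOSTNAME")
    if not instance:
        raise RuntimeError("HOSTNAME is not set; cannot build a unique prefix")
    return f"{base}{instance}-"
```

Two instances named `orders-0` and `orders-1` would get the prefixes `tx-orders-0-` and `tx-orders-1-`, so their producers never share a transaction-id.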

    consumer:
      bootstrap-servers: localhost:9092
      group-id: group_id
      auto-offset-reset: earliest
      enable-auto-commit: false
      isolation-level: read_committed

The properties that interest us are enable-auto-commit, which we set to false so that Kafka does not periodically commit offsets on its own, and isolation-level, which we set to read_committed so that we only consume messages once the producer has fully committed them. Now both the consumer and the producer are set up for exactly-once delivery with transactions.

We can run our application and see what happens if an exception is thrown after writing to the topic but before the transaction is fully committed. For this purpose, we will create a very simple REST mapping so that we write several messages to the Kafka topic before throwing an exception:

    @PostMapping(value = "/required")
    @Transactional(propagation = Propagation.REQUIRED)
    public void sendMessageRequired() {
        producer.sendMessageRequired("Test 1");
        producer.sendMessageRequired("Test 2");
        throw new RuntimeException("This is a test exception");
    }

The result is exactly as expected – the messages are written to the queue but not committed when an exception is thrown. As such the entire transaction is aborted and each batch is aborted as well. This can be seen in the logs:

    2021-01-20 19:44:29.776 INFO 11032 --- [io-9001-exec-10] c.g.k.kafka.KafkaProducer : Producing message "Test 1"
    2021-01-20 19:44:29.793 INFO 11032 --- [io-9001-exec-10] c.g.k.kafka.KafkaProducer : Producing message "Test 2"
    2021-01-20 19:44:29.808 ERROR 11032 --- [producer-tx-1-0] o.s.k.support.LoggingProducerListener : Exception thrown when sending a message with key='key-1-Test 1' and payload='1) Test 1' to topic messages_2:
    org.apache.kafka.common.KafkaException: Failing batch since transaction was aborted
        at org.apache.kafka.clients.producer.internals.Sender.maybeSendAndPollTransactionalRequest(Sender.java:422) ~[kafka-clients-2.5.1.jar:na]
        at org.apache.kafka.clients.producer.internals.Sender.runOnce(Sender.java:312) ~[kafka-clients-2.5.1.jar:na]
        at org.apache.kafka.clients.producer.internals.Sender.run(Sender.java:239) ~[kafka-clients-2.5.1.jar:na]
        at java.base/java.lang.Thread.run(Thread.java:834) ~[na:na]
    2021-01-20 19:44:29.808 ERROR 11032 --- [producer-tx-1-0] o.s.k.support.LoggingProducerListener : Exception thrown when sending a message with key='key-1-Test 2' and payload='1) Test 2' to topic messages_2:
    org.apache.kafka.common.KafkaException: Failing batch since transaction was aborted
        at org.apache.kafka.clients.producer.internals.Sender.maybeSendAndPollTransactionalRequest(Sender.java:422) ~[kafka-clients-2.5.1.jar:na]
        at org.apache.kafka.clients.producer.internals.Sender.runOnce(Sender.java:312) ~[kafka-clients-2.5.1.jar:na]
        at org.apache.kafka.clients.producer.internals.Sender.run(Sender.java:239) ~[kafka-clients-2.5.1.jar:na]
        at java.base/java.lang.Thread.run(Thread.java:834) ~[na:na]

The LoggingProducerListener exception contains the key and contents of the message that failed to be sent. The exception tells us that the batch failed because the transaction was aborted. Exactly as expected, the entire transaction is atomic, so failing it at the end means that messages written successfully beforehand will not be processed either.

We can run the same test for the consumer. The expectation is that the transaction will be rolled back if a message processing error occurs. For that, we will create a simple consumer that logs the message and then throws an exception.

@KafkaListener(topics = "messages_2", groupId = "group_id")

public void consumePartitioned(String message) {

log.info(String.format("Consumed partitioned message \"%s\"", message));

throw new RuntimeException("This is a test exception");

}

We can now use our REST endpoints to send some messages to the consumer. Sure enough, we see the exact behavior we expect – the message is read, the log happens, and then rollback occurs.

2021-01-20 19:48:33.420 INFO 14840 --- [ntainer#0-0-C-1] c.g.k.kafka.KafkaConsumer : Consumed partitioned message "1) Test 1"

2021-01-20 19:48:33.425 ERROR 14840 --- [ntainer#0-0-C-1] essageListenerContainer$ListenerConsumer : Transaction rolled back

org.springframework.kafka.listener.ListenerExecutionFailedException: Listener method 'public void com.grapeup.kafkatransactions.kafka.KafkaConsumer.consumePartitioned(java.lang.String)' threw exception; nested exception is java.lang.RuntimeException: This is a test exception

at org.springframework.kafka.listener.adapter.MessagingMessageListenerAdapter.invokeHandler(MessagingMessageListenerAdapter.java:350) ~[spring-kafka-2.5.7.RELEASE.jar:2.5.7.RELEASE]

Of course, because of the rollback, the message goes back on the topic. This results in the consumer reading it again, throwing and rolling back, creating an infinite loop that will lock other messages out for this partition. This is a potential issue that we must keep in mind when using Kafka transactional messaging, the same way as we would with JMS. The message will persist if we restart the application or the broker, so mindful handling of the exception is required – we need to identify exceptions that require a rollback and those that do not. This is a very application-specific problem, so this article cannot offer a clear-cut solution; one that fits every application simply does not exist.
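One way to picture the distinction between exceptions that deserve a rollback and those that do not is the framework-free sketch below. It is only an illustration under our own assumptions: the TransientFailure type, the retry budget of 3, and the deadLetters list are hypothetical names and are not part of Spring Kafka. Transient failures are retried (rolled back and redelivered) a limited number of times, while any other exception parks the message immediately so that it stops blocking the partition.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.function.Consumer;

// Hypothetical, framework-free model of a listener loop; this is not Spring Kafka API.
class RollbackPolicyDemo {

    /** Thrown by a handler for problems that may disappear on retry (e.g. a database outage). */
    static class TransientFailure extends RuntimeException {
        TransientFailure(String message) { super(message); }
    }

    static final int MAX_ATTEMPTS = 3;                      // assumed retry budget
    static final List<String> deadLetters = new ArrayList<>();

    /** Returns true if the message was processed, false if it was parked as a dead letter. */
    static boolean deliver(String message, Consumer<String> handler) {
        for (int attempt = 1; attempt <= MAX_ATTEMPTS; attempt++) {
            try {
                handler.accept(message);
                return true;                                // success: the transaction would commit
            } catch (TransientFailure e) {
                // Roll back and redeliver, but only while the retry budget lasts.
            } catch (RuntimeException e) {
                break;                                      // retrying cannot help: park it right away
            }
        }
        deadLetters.add(message);                           // parked, so the partition is not blocked
        return false;
    }

    public static void main(String[] args) {
        int[] calls = {0};
        boolean flaky = deliver("flaky", m -> {
            if (++calls[0] < 3) throw new TransientFailure("db down");
        });
        boolean poison = deliver("poison", m -> {
            throw new IllegalArgumentException("bad payload");
        });
        System.out.println(flaky + " " + poison + " " + deadLetters); // true false [poison]
    }
}
```

In a real application the parked message would typically be written to a separate topic or table for later inspection; which exceptions count as transient remains a per-application decision.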

Last but not least, it is worth noting that propagation works as expected with Spring and Kafka transactions. If we start a new transaction via @Transactional annotation with REQUIRES_NEW propagation, then Kafka will start a new transaction that commits separately from the original one and whose commit/abort result has no effect on the parent one.

There are a few more things we have to keep in mind when working with Kafka transactions, some of them to be expected, others not as much. The first thing is the fact that a producer transaction locks down the topic partition that it writes to. This can be seen if we run 2 servers and delay one transaction. In our case, we started a transaction on Server 1 that wrote messages to a topic and then waited 10 seconds to commit the transaction. Server 2 in the meantime wrote its own messages and committed immediately while Server 1 was waiting. The result can be seen in the logs:

Server 1:

2021-01-20 21:38:27.560 INFO 15812 --- [nio-9001-exec-1] c.g.k.kafka.KafkaProducer : Producing message "Test 1"

2021-01-20 21:38:27.578 INFO 15812 --- [nio-9001-exec-1] c.g.k.kafka.KafkaProducer : Producing message "Test 2"

Server 2:

2021-01-20 21:38:35.296 INFO 14864 --- [ntainer#0-0-C-1] c.g.k.kafka.KafkaConsumer : Consumed message "1) Test 1 Sleep"

2021-01-20 21:38:35.308 INFO 14864 --- [p_id.messages.0] o.a.k.c.p.internals.TransactionManager : [Producer clientId=producer-tx-2-group_id.messages.0, transactionalId=tx-2-group_id.messages.0] Discovered group coordinator gu17.ad.grapeup.com:9092 (id: 0 rack: null)

2021-01-20 21:38:35.428 INFO 14864 --- [ntainer#0-0-C-1] c.g.k.kafka.KafkaConsumer : Consumed message "1) Test 2 Sleep"

2021-01-20 21:38:35.549 INFO 14864 --- [ntainer#0-0-C-1] c.g.k.kafka.KafkaConsumer : Consumed message "1) Test 1"

2021-01-20 21:38:35.676 INFO 14864 --- [ntainer#0-0-C-1] c.g.k.kafka.KafkaConsumer : Consumed message "1) Test 2"

Messages were consumed by Server 2 only after Server 1 had committed its long-running transaction. Only a partition is locked, not the entire topic – as such, depending on the partitions that producers send messages to, we may encounter full, partial, or no locking at all. The lock is held until the end of the transaction, be it via commit or abort.

Another interesting thing is the order of messages – messages from Server 1 appear before messages from Server 2, even though Server 2 committed its transaction first. This is in contrast to what we would expect from JMS – the messages committed to JMS first would appear first, unlike our example. It should not be a major problem but it is something we must, once again, keep in mind while designing our applications.
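Both observations (the partition lock and the message order) follow from the same mechanics: a message gets its position in the partition when it is written, and a consumer that reads only committed data cannot read past the first transaction that is still open. The sketch below is a deliberately simplified model of a single partition, written under our own assumptions; the class and method names are hypothetical, each transaction id is used once, and real brokers track far more state.

```java
import java.util.ArrayList;
import java.util.HashSet;
import java.util.List;
import java.util.Set;

// Simplified, hypothetical model of one partition; not Kafka client or broker code.
class PartitionModel {
    private final List<String[]> log = new ArrayList<>();   // each entry: {transactionId, payload}
    private final Set<String> open = new HashSet<>();       // transactions that have not finished
    private final Set<String> aborted = new HashSet<>();

    void write(String transactionId, String payload) {
        open.add(transactionId);
        log.add(new String[] {transactionId, payload});     // position is fixed at write time
    }

    void commit(String transactionId) { open.remove(transactionId); }

    void abort(String transactionId) {
        open.remove(transactionId);
        aborted.add(transactionId);
    }

    /** What a consumer that only reads committed data can see right now. */
    List<String> readCommitted() {
        List<String> visible = new ArrayList<>();
        for (String[] entry : log) {
            if (open.contains(entry[0])) break;             // cannot read past an open transaction
            if (!aborted.contains(entry[0])) visible.add(entry[1]);
        }
        return visible;
    }

    public static void main(String[] args) {
        PartitionModel partition = new PartitionModel();
        partition.write("server1", "Test 1 Sleep");          // Server 1 writes, then waits
        partition.write("server2", "Test 1");
        partition.commit("server2");                         // Server 2 commits first
        System.out.println(partition.readCommitted());       // []
        partition.commit("server1");
        System.out.println(partition.readCommitted());       // [Test 1 Sleep, Test 1]
    }
}
```

The model shows why Server 2's committed message stays invisible until Server 1 finishes, and why Server 1's message is still delivered first afterwards.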

Putting it all together

Now that we have Kafka transactions running, we can try and add JMS/JPA configuration to it. We can once again utilize the Spring setup to quickly integrate these. For the sake of the demo, we use an in-memory H2 database and ActiveMQ:

<!-- JPA setup -->

<dependency>

<groupId>org.springframework.boot</groupId>

<artifactId>spring-boot-starter-data-jpa</artifactId>

</dependency>

<dependency>

<groupId>com.h2database</groupId>

<artifactId>h2</artifactId>

<scope>runtime</scope>

</dependency>

<!-- Active MQ -->

<dependency>

<groupId>org.springframework.boot</groupId>

<artifactId>spring-boot-starter-activemq</artifactId>

</dependency>

<dependency>

<groupId>org.apache.activemq</groupId>

<artifactId>activemq-broker</artifactId>

</dependency>

<dependency>

<groupId>com.google.code.gson</groupId>

<artifactId>gson</artifactId>

</dependency>

We can set up a simple JMS listener, which reads a message in a transaction, saves something to the database via JPA, and then publishes a further Kafka message. This reflects a common way to try and integrate JMS/JPA with Kafka:

@JmsListener(destination = "message.queue")

@Transactional(propagation = Propagation.REQUIRED)

public void processMessage(String message) {

log.info("Received JMS message: {}", message);

messageDao.save(MessageEntity.builder().content(message).build());

kafkaProducer.sendMessageRequired(message);

}

Now if we try running this code, we will run into issues – Spring will protest that it found 2 beans of the TransactionManager class. This is because JPA/JMS uses the base TransactionManager and Kafka uses its own KafkaTransactionManager. To run this code properly, we have to specify which transaction manager is to be used in each @Transactional annotation. These transaction managers are completely separate, and the transactions they start or commit do not affect each other. As such, one can be committed and the other aborted if an exception is thrown at the right moment. Let’s amend our listener for further analysis:

@JmsListener(destination = "message.queue")

@Transactional(transactionManager = "transactionManager", propagation = Propagation.REQUIRED)

public void processMessage(String message) {

log.info("Received JMS message: {}", message);

messageDao.save(MessageEntity.builder().content(message).build());

kafkaProducer.sendMessageRequired(message);

exampleService.processMessage(message);

}

In this example, we correctly mark the @Transactional annotation to use a bean named transactionManager, which is the JMS/JPA bean. In a similar way, the @Transactional annotation in KafkaProducer is marked to use kafkaTransactionManager, so that the Kafka transaction is started and committed within that function. The issue with this code example arises when ExampleService throws in its processMessage function, the last call in the listener.

If such a thing occurs, then the JMS transaction is committed and the message is permanently removed from the queue. The JPA transaction is rolled back, and nothing is actually written to the database despite the messageDao.save call. The Kafka transaction is committed because no exception was thrown in its scope. We are left with a very peculiar state that would probably need manual fixing.
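The mismatch between the database and Kafka can be reproduced without any framework. The sketch below uses a hypothetical Resource class as a stand-in for a resource with its own, unrelated transaction manager; it is not a Spring class, and it models only the database and Kafka parts of the scenario. The inner scope commits as soon as it ends, so an exception thrown afterwards rolls back only the outer one.

```java
import java.util.ArrayList;
import java.util.List;

// Hypothetical stand-ins for two unrelated transaction managers; these are not Spring classes.
class IndependentTransactionsDemo {

    /** A resource that buffers writes until its own transaction commits. */
    static class Resource {
        final List<String> committed = new ArrayList<>();
        private final List<String> pending = new ArrayList<>();

        void write(String value) { pending.add(value); }

        /** Runs the work in this resource's transaction: commit on success, roll back on exception. */
        void inTransaction(Runnable work) {
            try {
                work.run();
                committed.addAll(pending);
                pending.clear();
            } catch (RuntimeException e) {
                pending.clear();
                throw e;
            }
        }
    }

    public static void main(String[] args) {
        Resource database = new Resource();
        Resource kafka = new Resource();
        try {
            database.inTransaction(() -> {
                database.write("entity");
                kafka.inTransaction(() -> kafka.write("message")); // inner scope commits here
                throw new RuntimeException("late failure");        // only the outer scope sees this
            });
        } catch (RuntimeException expected) {
            // swallowed for the demo
        }
        // prints: database=[] kafka=[message]
        System.out.println("database=" + database.committed + " kafka=" + kafka.committed);
    }
}
```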

To minimize such situations, we should be very careful about when to start which transaction. Optimally, we would start the Kafka transaction right after starting the JMS and JPA transactions and commit it right before we commit JPA and JMS. This way we minimize the chance of such a situation occurring (though we still cannot fully get rid of it) – the only thing that could cause one transaction to break and not the other is a connection failure between commits.

Similar care should be taken on the consumer side. Suppose we start a Kafka transaction, do some processing, save to the database, send a JMS message, and send a Kafka response in a naive way:

@KafkaListener(topics = "messages_2", groupId = "group_id")

@Transactional(transactionManager = "kafkaTransactionManager", propagation = Propagation.REQUIRED)

public void processMessage(String message) {

exampleService.processMessage(message);

MessageEntity entity = MessageEntity.builder().content(message).build();

messageDao.save(entity);

exampleService.postProcessMessage(entity);

messageDao.save(entity);

jmsProducer.sendMessage(message);

kafkaProducer.sendMessageRequired(exampleService.createResponse(entity));

}

Assuming MessageDAO/JmsProducer start their own transactions in their functions, what we will end up with if the final call (sending the Kafka response) throws is a duplicate entry in the database and a duplicate JMS message. The Kafka transaction will be properly rolled back and the message redelivered, but the JMS and JPA transactions were already committed, so the second attempt repeats them and we now have to handle the duplicate. What we should do in our case is start all transactions immediately and do all of our logic within their scope. One way to do so is to create a helper bean that accepts a function to perform within a @Transactional call:

@Service

public class TransactionalHelper {

@Transactional(transactionManager = "transactionManager",

propagation = Propagation.REQUIRED)

public void executeInTransaction(Function f) {

f.perform();

}

@Transactional(transactionManager = "kafkaTransactionManager",

propagation = Propagation.REQUIRED)

public void executeInKafkaTransaction(Function f) {

f.perform();

}

public interface Function {

void perform();

}

}

This way, our call looks like this:

@KafkaListener(topics = "messages_2", groupId = "group_id")

@Transactional(transactionManager = "kafkaTransactionManager", propagation = Propagation.REQUIRED)

public void processMessage(String message) {

transactionalHelper.executeInTransaction(() -> {

exampleService.processMessage(message);

MessageEntity entity = MessageEntity.builder().content(message).build();

messageDao.save(entity);

exampleService.postProcessMessage(entity);

messageDao.save(entity);

jmsProducer.sendMessage(message);

kafkaProducer.sendMessageRequired(exampleService.createResponse(entity));

});

}

Now we start the processing within the Kafka transaction and end it right before the Kafka transaction is committed. This is of course assuming no REQUIRES_NEW propagation is used throughout the inner functions. Once again, in an actual application, we would need to carefully consider transactions in each subsequent function call to make sure that no separate transactions are running without our explicit knowledge and consent.

We will run into a problem, however. The way Spring works, JPA transactions will behave exactly as expected, but the JMS transaction will be started in JmsProducer anyway and committed on its own. The impact of this could be minimized by moving the ExampleService call (createResponse) out of the Kafka send and placing it before the JMS send, but it’s still an issue we need to keep an eye on. It becomes especially important if we have to write to several different JMS queues as we process our message.

There is no easy way to force Spring to merge JPA/JMS transactions; we would need to use JTA for that.

What can and cannot be done with JTA

JTA has been designed to merge several different transactions, effectively treating them as one. When the JTA transaction ends, each participant votes whether to commit or abort it, and the result of the vote is broadcast so that all participants commit or abort together. It is not 100% foolproof: a connection may die during the voting process, which may cause one or more of the participants to perform a different action. The risk, however, is minimal due to the way transactions are handled.
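The voting round described above can be sketched in a few lines of plain Java. This is a minimal illustration under our own assumptions: the Participant interface and the complete method are hypothetical names rather than the JTA API, and a real provider also persists its decision so that it can recover from the connection failures mentioned above.

```java
import java.util.Arrays;
import java.util.List;

// Hypothetical sketch of the voting round; real JTA providers also log decisions for recovery.
class TwoPhaseCommitDemo {

    interface Participant {
        boolean prepare();   // the vote: true means "ready to commit"
        void commit();
        void rollback();
    }

    /** Records what happened to it so the outcome can be inspected. */
    static class RecordingParticipant implements Participant {
        final boolean vote;
        String outcome = "pending";

        RecordingParticipant(boolean vote) { this.vote = vote; }

        public boolean prepare() { return vote; }
        public void commit() { outcome = "committed"; }
        public void rollback() { outcome = "rolled back"; }
    }

    /** Phase 1 collects the votes; phase 2 broadcasts the single decision to everyone. */
    static boolean complete(List<? extends Participant> participants) {
        boolean allReady = true;
        for (Participant participant : participants) {
            if (!participant.prepare()) {
                allReady = false;                 // one "no" vote is enough to abort
                break;
            }
        }
        for (Participant participant : participants) {
            if (allReady) participant.commit(); else participant.rollback();
        }
        return allReady;
    }

    public static void main(String[] args) {
        RecordingParticipant jpa = new RecordingParticipant(true);
        RecordingParticipant jms = new RecordingParticipant(false);
        boolean committed = complete(Arrays.asList(jpa, jms));
        // prints: false rolled back rolled back
        System.out.println(committed + " " + jpa.outcome + " " + jms.outcome);
    }
}
```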

The main benefit of JTA is that we can effectively treat several different transactions as one – this is most often used with JMS and JPA transactions. So the question arises, can we merge Kafka transactions into JTA and treat them all as one? Well, the answer to that is sadly no – the Kafka transactions do not follow JTA API and do not define XA connection factories. We can, however, use JTA to fix the issue we encountered previously between JMS and JPA transactions.

To set up JTA in our application, we need a provider, because base Java does not ship an implementation of JTA, only the API itself. There are various providers for this. Sometimes one comes with the server, WebSphere and its UOW Transaction Manager being a good example. Other times, like with Tomcat, nothing is provided out of the box and we have to bring our own. An example of a library that does this is Atomikos – it does have a paid version, but for simple JTA use, the free one is good enough.

Spring made importing Atomikos easy with a starter dependency:

<!-- JTA setup -->

<dependency>

<groupId>org.springframework.boot</groupId>

<artifactId>spring-boot-starter-jta-atomikos</artifactId>

</dependency>

Spring configures our JPA connection to use JTA on its own; to add JMS to it, however, we have to do some configuration. In one of our @Configuration classes, we should add the following beans:

@Configuration

public class JmsConfig {

@Bean

public ActiveMQXAConnectionFactory connectionFactory() {

ActiveMQXAConnectionFactory connectionFactory = new ActiveMQXAConnectionFactory();

connectionFactory.setBrokerURL("tcp://localhost:61616");

connectionFactory.setPassword("admin");

connectionFactory.setUserName("admin");

connectionFactory.setMaxThreadPoolSize(10);

return connectionFactory;

}

@Bean(initMethod = "init", destroyMethod = "close")

public AtomikosConnectionFactoryBean atomikosConnectionFactory() {

AtomikosConnectionFactoryBean atomikosConnectionFactory = new AtomikosConnectionFactoryBean();

atomikosConnectionFactory.setUniqueResourceName("XA_JMS_ConnectionFactory");

atomikosConnectionFactory.setXaConnectionFactory(connectionFactory());

atomikosConnectionFactory.setMaxPoolSize(10);

return atomikosConnectionFactory;

}

@Bean

public JmsTemplate jmsTemplate() {

JmsTemplate template = new JmsTemplate();

template.setConnectionFactory(atomikosConnectionFactory());

return template;

}

@Bean

public DefaultJmsListenerContainerFactory jmsListenerContainerFactory(PlatformTransactionManager transactionManager) {

DefaultJmsListenerContainerFactory factory = new DefaultJmsListenerContainerFactory();

factory.setConnectionFactory(atomikosConnectionFactory());

factory.setConcurrency("1-1");

factory.setTransactionManager(transactionManager);

return factory;

}

}

We define an ActiveMQXAConnectionFactory, which implements the XAConnectionFactory interface required for JTA integration. We then define a separate AtomikosConnectionFactoryBean, which wraps the ActiveMQ one. For all intents and purposes, everything else uses the Atomikos connection factory – we set it for JmsTemplate and DefaultJmsListenerContainerFactory. We also set the transaction manager, which will now become the JTA transaction manager.

Having all of that set, we can run our application again and see if we still encounter issues with transactions not behaving as we want them to. Let’s set up a JMS listener with additional logs for clarity:

@JmsListener(destination = "message.queue")

@Transactional(transactionManager = "transactionManager", propagation = Propagation.REQUIRED)

public void processMessage(final String message) {

transactionalHelper.executeInKafkaTransaction(() -> {

MessageEntity entity = MessageEntity.builder().content(message).build();

messageDao.save(entity);

log.info("Saved database entity");

kafkaProducer.sendMessageRequired(message);

log.info("Sent kafka message");

jmsProducer.sendMessage("response.queue", "Response: " + message);

log.info("Sent JMS response");

throw new RuntimeException("This is a test exception");

});

}

We expect that JTA and Kafka transactions will both roll back, nothing will be written to the database, nothing will be written to response.queue, nothing will be written to the Kafka topic, and that the message will not be consumed. When we run this, we get the following logs:

2021-01-20 21:56:00.904 INFO 9780 --- [enerContainer-1] c.g.kafkatransactions.jms.JmsConsumer : Saved database entity

2021-01-20 21:56:00.906 INFO 9780 --- [enerContainer-1] c.g.k.kafka.KafkaProducer : Producing message "This is a test message"

2021-01-20 21:56:00.917 INFO 9780 --- [enerContainer-1] c.g.kafkatransactions.jms.JmsConsumer : Sent kafka message

2021-01-20 21:56:00.918 INFO 9780 --- [enerContainer-1] c.g.kafkatransactions.jms.JmsProducer : Sending JMS message: Response: This is a test message

2021-01-20 21:56:00.922 INFO 9780 --- [enerContainer-1] c.g.kafkatransactions.jms.JmsConsumer : Sent JMS response

2021-01-20 21:56:00.935 WARN 9780 --- [enerContainer-1] o.s.j.l.DefaultMessageListenerContainer : Execution of JMS message listener failed, and no ErrorHandler has been set.

org.springframework.jms.listener.adapter.ListenerExecutionFailedException: Listener method 'public void com.grapeup.kafkatransactions.jms.JmsConsumer.processMessage(java.lang.String)' threw exception; nested exception is java.lang.RuntimeException: This is a test exception

at org.springframework.jms.listener.adapter.MessagingMessageListenerAdapter.invokeHandler(MessagingMessageListenerAdapter.java:122) ~[spring-jms-5.2.10.RELEASE.jar:5.2.10.RELEASE]

The exception thrown is followed by several errors about rolled back transactions. After checking our H2 database and looking at Kafka/JMS queues, we can indeed see that everything we expected has been fulfilled. The original JMS message was not consumed either, starting an endless loop which, once again, we would have to take care of in a running application. The key part though is that transactions behaved exactly as we intended them to.

Is JTA worth it for that little bit of surety? Depends on the requirements – do we have to write to several JMS queues simultaneously while writing to the database and Kafka? We will have to use JTA. Can we get away with a single write at the end of the transaction? We might not need to. There is sadly no clear-cut answer, we must use the right tools for the right job.

Summary

We managed to successfully launch Kafka in transactional mode, enabling exactly-once delivery mechanics. This can be integrated with JMS/JPA transactions, although we may encounter problems in our listeners/consumers depending on circumstances. If needed, we may introduce JTA to give us easier control over the different transactions and whether they are committed or aborted. We used ActiveMQ/H2/Atomikos for this purpose, but this works with any JMS/JPA/JTA providers.


Using Azure DevOps Platform for configurable builds of a multicomponent iOS application

In this article, we share our experience with building CI/CD for a multicomponent multi-language project. The article describes the structure of the pipeline set up and focuses on two important features needed in our project’s automation workflow: pipeline chaining and build variants.

Using CI/CD is standard in any application development process. Mobile apps are no exception here.

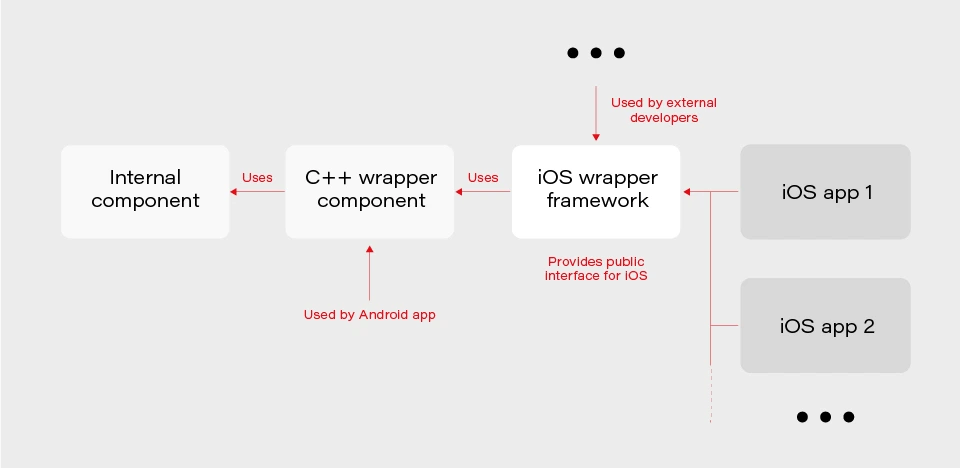

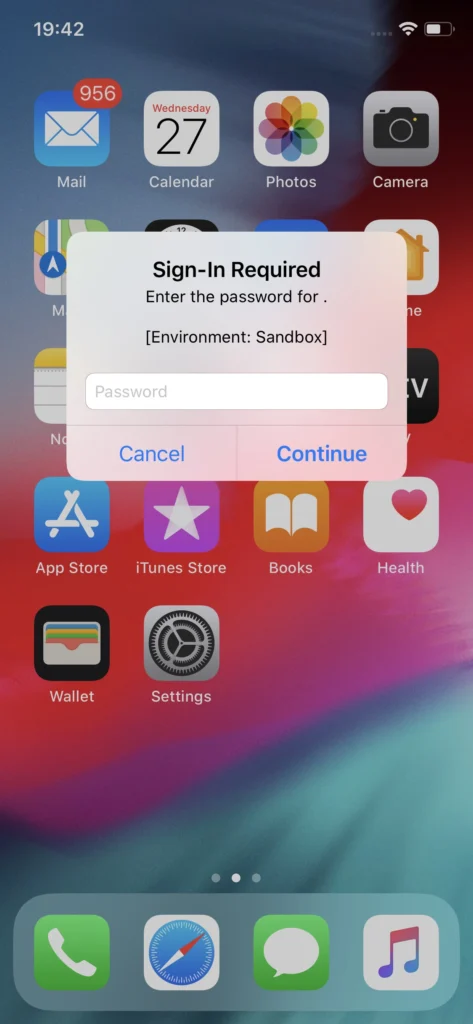

In our project, we have several iOS applications and libraries. Each application uses several components (frameworks) written in different languages. The components structure is as in the picture below:

The internal component contains all the core (domain) logic that apps use. The first two components are C/C++ based and are compiled as frameworks. The wrapper framework provides an Objective-C/Swift layer that is necessary for using it in an iOS application. There are several iOS applications that are using the wrapper framework. Additionally, this framework is also used by external developers in their own applications.

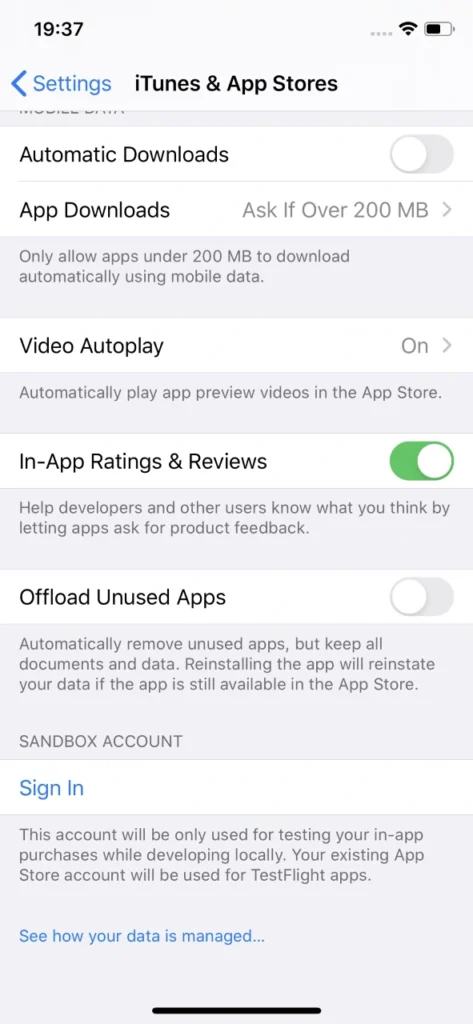

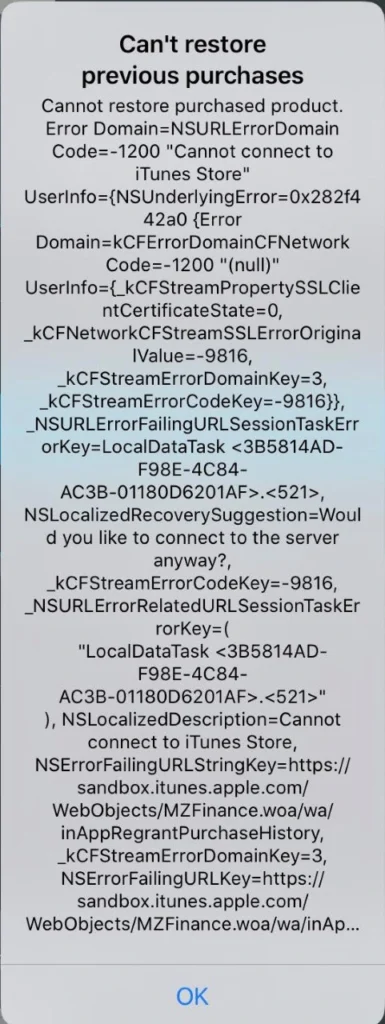

The wrapper framework should be built for both x86_64 and arm64 architecture to be used on both a simulator and a real iOS device. Also, we need a debug and release version for each architecture. When it comes to applications each of them may be built for AppStore, internal testing (Ad-Hoc) or TestFlight beta testing.

Without an automated CI/CD system, it would be extremely inefficient to build the whole chain of components manually, and just as hard to track the status of merges/pull requests for each component, that is, to check whether the component still builds after a merge. Let’s see how our pipelines are organized.

Using Azure DevOps pipelines

For building CI/CD, we’ve chosen Azure DevOps. We use Azure Pipelines for building our components and Azure Artifacts to host the built components, as well as several external 3rd party libraries.

To check the integrity and track the build status of each component, we have special integration pipelines that are integrated with GitHub. That is, each pull request that is going to be merged to the development branch of a particular component triggers this special integration pipeline.

For regular builds, we have pipelines based on the purpose of each branch type: experimental, feature, bugfix, development, and master.

Since each component depends on another component built on Azure, we have to organize dependency management somehow, that is, the versioning of the dependent components and their download. Let’s take a look at our approach to dependency management.

Dependency management

Azure provides basic CLI tools to manipulate pipelines. We may use them to download dependencies (in the form of Azure artifacts) required to build a particular component. At a minimum, we need to know the version, configuration (debug or release) and architecture (x86_64 or arm64) of a particular dependency. Let’s take a look at the options that Azure CLI gives us:

az artifacts universal download \

--organization "${Organization}" \

--feed "${Feed}" \

--name "${Name}" \

--version "${Version}" \

--path "${DownloadPath}"

The --name and --version parameters are the most important for us. The CLI does not provide explicit support for build configuration or architecture. For this purpose, we simply use the name (specified as the --name parameter), which has a predefined format:

<component name>-<configuration>-<architecture>

This makes it possible to have components of the same version with different architecture and build configurations.

The other aspect is how to store info about version, configuration, etc., for each dependency. We’ve decided to use the git config format to store this info. It’s pretty easy to parse using git config and does not require any additional parsing tool. So, each component has its own dependencies.config file. Below is the example file for component dependent on two frameworks:

[framework1]

architecture = "arm64"

configuration = "release"

version = "1.2.3.123"

[framework2]

architecture = "arm64"

configuration = "release"

version = "3.2.1.654"

To make it possible to download dependencies as part of the build process, we have a special script that manages dependencies. The script is run as a build phase of the Xcode project of each component. Below are the basic steps the script does.

1. Parse the dependencies.config file to get the version, architecture, and configuration. The important thing here is that if some info is omitted (e.g., the build configuration is not specified in dependencies.config), the script uses the value that the current component is being built with. That is, when we build the current component for the simulator, the script downloads dependencies for the simulator architecture.

2. Form the artifact’s name and version and forward them to the az artifacts universal download command.
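The fallback rule and the name format from the two steps above can be summarized in a small sketch. It is written in Java purely for illustration; the actual script is not shown in this article, and the artifactName helper and its parameters are hypothetical.

```java
import java.util.HashMap;
import java.util.Map;

// Hypothetical helper mirroring the two steps above; the real build script is not shown here.
class ArtifactNameDemo {

    /**
     * Builds "<component name>-<configuration>-<architecture>", falling back to the
     * settings of the build in progress for any value missing from the dependency entry.
     */
    static String artifactName(String component, Map<String, String> entry,
                               String currentConfiguration, String currentArchitecture) {
        String configuration = entry.getOrDefault("configuration", currentConfiguration);
        String architecture = entry.getOrDefault("architecture", currentArchitecture);
        return component + "-" + configuration + "-" + architecture;
    }

    public static void main(String[] args) {
        // Entry as it might be read from dependencies.config, with the architecture omitted.
        Map<String, String> framework1 = new HashMap<>();
        framework1.put("version", "1.2.3.123");
        framework1.put("configuration", "release");

        // The current component is being built as debug for the simulator (x86_64).
        String name = artifactName("framework1", framework1, "debug", "x86_64");
        System.out.println(name); // framework1-release-x86_64
    }
}
```

The resulting name, together with the version from the entry, is what would be passed to the --name and --version parameters of the download command.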

There are two key features of our build infrastructure: pipeline chaining and build variants support. They cover two important cases in our project. Let’s describe how we implemented them.

Chaining pipelines

When a low-level core component is updated, we want to test these changes in the application. For this purpose, we should build the framework dependent on the core component and build the app using this framework. Automation here is extremely useful. Here’s what it looks like with our pipelines.

1. When a low-level component (let’s call it component1) is changed on a specific branch (e.g., integration), a special integration pipeline is triggered. When the component is built and an artifact is published, the pipeline starts another pipeline that will build the next dependent component. For this purpose, the az pipelines build queue command is used as follows:

az pipelines build queue \

--project "component2" \

--branch "integration" \

--organization "${Organization}" \

--definition-name "${BuildDefinition}" \

--variables \

"config.pipeline.component1Version=${BUILD_BUILDNUMBER}" \

"config.pipeline.component1Architecture=${CurrentArchitecture}" \

"config.pipeline.component1Configuration=${CurrentConfiguration}"

This command starts the pipeline for building component2 (the one dependent on component1).

The key part is passing the variables config.pipeline.component1Version, config.pipeline.component1Architecture and config.pipeline.component1Configuration to the pipeline. These variables define the version, build configuration, and architecture of component1 (the one being built by the current pipeline) that should be used to build component2. The command overrides the corresponding values from the dependencies.config file of component2. This means that the resulting component2 will use the newly built component1 dependency instead of the one defined by the dependencies.config file.

2. When component2 is built, it uses the same approach to launch the next pipeline for building a subsequent component.

3. When all the components in the chain required by the app are ready, the integration pipeline building the app is launched. As a part of its build process, the app is sent to TestFlight.

So, simply pushing changes of the lowest level component to the integration branch gives you a ready-to-test app on TestFlight.

Build variants

Some external developers that use the wrapper iOS framework may need additional features that should not be available in regular public API intended for other developers. This brings us to the need of having different variants of the same component. Such variants may be distinct in different features, or in behavior of the same features.

Additional methods or classes may be provided as a specific or experimental API in a wrapper framework for iOS. The other use case is to have behavior different than the default one for regular (official) public API in the wrapper framework. For instance, a method that writes an image file to a specified directory in some cases may be required to also write additional files along with the image (e.g., file with image processing settings or metadata).

Going further, an implementation may be changed not only in the iOS framework itself but also in its dependencies. As described previously, the core logic is implemented in a separate component that the iOS framework depends on. So, when a particular build variant requires a change in code behavior, the change will most likely also be made in the internal component.

Let’s see how to better implement build variants. The proper understanding of use cases and potential extension capabilities are crucial for choosing the correct solution.

The first important thing is that in our project different build variants have few changes in API compared to each other. Usually, a build variant contains a couple of additional methods or classes. Most of the code is the same for all variants. Inside the implementation, there may also be some distinctions based on the concrete variant we’re building. So, it would be enough to have some preprocessor definition (active compilation conditions for Swift) indicating which build variant is being built.

The second thing is that the number of build variants changes often. Some may be removed (e.g., when an experimental API becomes generally accessible). On the other hand, when an external developer requests another specific functionality, we need to create a new variant by slightly modifying the standard implementation or exposing some experimental/internal API. This means that we should be able to add or remove build variants fast.

Let’s now describe our implementation based on the specifics given above. There are two parts of the implementation. The first one is at the pipeline level.

Since we may often add/remove our build variants, creating a pipeline for each build variant is obviously not a good idea. Instead, we add a special variable config.pipeline.buildVariant in the pipeline’s Variables to each pipeline that is supposed to be used for building different variants. The variable should be added to pipelines of all the components the resulting iOS framework depends on because a specific feature often requires code changes, not only in the iOS framework itself but also in its dependencies. Pipeline implementation then will use this variable e.g., for downloading specific dependencies required by a particular variant, tagging build to indicate the variant, and, of course, providing the corresponding build setting to Xcode build command.

The second part is the use of the build variant setting provided by the pipeline inside the Xcode project. Using Xcode build settings, we add a compile-time constant (a preprocessor definition for Objective-C/C++ code and a compilation condition for Swift) that reflects the selected build variant. It is used to control which functionality to compile. This build setting may also be used to choose variant-specific resources to be embedded into the framework.

When chaining pipelines, we just pass the variable to the next pipeline:

az pipelines build queue \

--project "component2" \

--branch "integration" \

--organization "${Organization}" \

--definition-name "${BuildDefinition}" \

--variables \

"config.pipeline.component1Version=${BUILD_BUILDNUMBER}" \

"config.pipeline.component1Architecture=${CurrentArchitecture}" \

"config.pipeline.component1Configuration=${CurrentConfiguration}" \

"config.pipeline.buildVariant=${CONFIG_PIPELINE_BUILDVARIANT}"

Summary

In this article, we’ve described our approach to multi-component app CI/CD infrastructure based on Azure. We’ve focused on two important features of our build infrastructure: chaining component builds and building different variants of the same component. It’s worth mentioning that the described solution is not the only correct one. Rather, it is the one that best fits our needs. You may experiment and try different approaches utilizing the flexible, well-developed pipeline system that Azure provides.

8 tips for an agile debugging of a web application

While building a complex web application, you have probably run into something that didn’t work as planned. You’ve spent hours and hours looking for a bug in your code and then searching the internet for help with fixing the problem. To make it easier for you, in this article we explain some effective techniques for debugging a web application that significantly reduce the pain of debugging and shorten the time needed to detect issues.

Console.log

First, the commonly used JavaScript method console.log. You can insert a call to this method in your code and pass it a variable. During code execution, the application will print the value of the variables passed to the method in the console. This is the easiest way to check if the program returns the expected value.

Unfortunately, this is not a very effective method of debugging. Such an approach does not allow us to see the progress of code execution (unless we insert console.log every few lines, but then the amount of data thrown into the console will be unreadable and we will only make a mess in the code). Furthermore, it prints only the passed variable, and only if the application does not throw an error before reaching that line.

Tip no. 1

If you have many console.log calls, pass the variable name as a string followed by the variable itself, e.g., console.log('variable', variable).
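As a minimal sketch of this tip (the variable names and values below are hypothetical and serve only as an illustration), labelled output could look like this:

```javascript
// Hypothetical values used only for illustration.
const userId = 42;
const cart = { items: 3, total: 59.9 };

// Label each value so the output is easy to find in a busy console.
console.log('userId', userId);
console.log('cart', cart);

// Shorthand object properties give the same labels with less typing.
console.log({ userId, cart });
```

The object shorthand in the last line is a matter of taste; it prints the names and values together as a single object.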

Chrome DevTools (Sources tab)

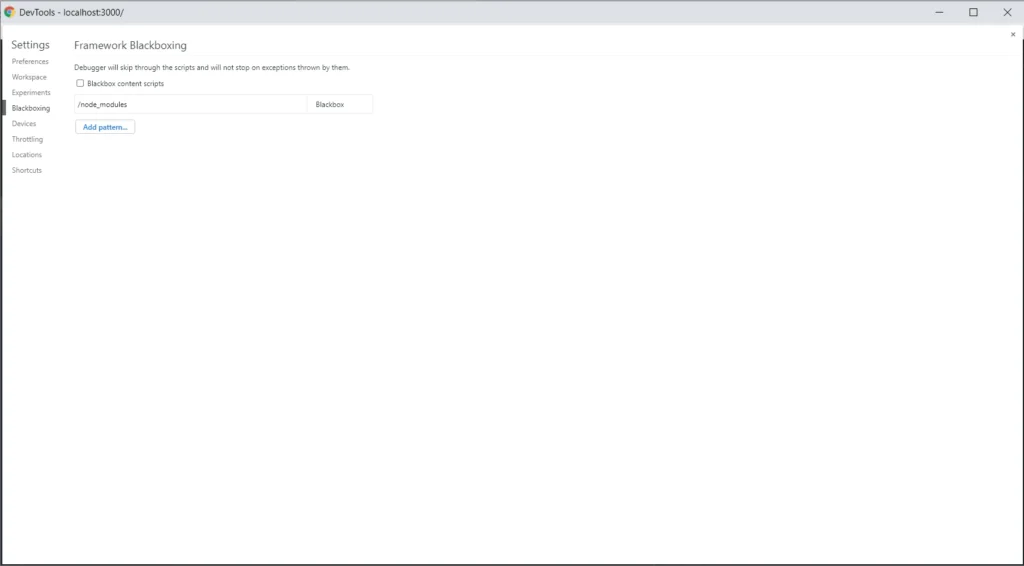

A more efficient method for debugging a web application is to use Chrome DevTools and its Sources tab. Before we start debugging in the Sources tab, we need to add node_modules to blackboxing. We add this rule so that, when stepping through breakpoints, the debugger does not show us files from external packages, which makes debugging difficult. We need to open the DevTools settings → Blackboxing → Add patterns and then enter /node_modules there.

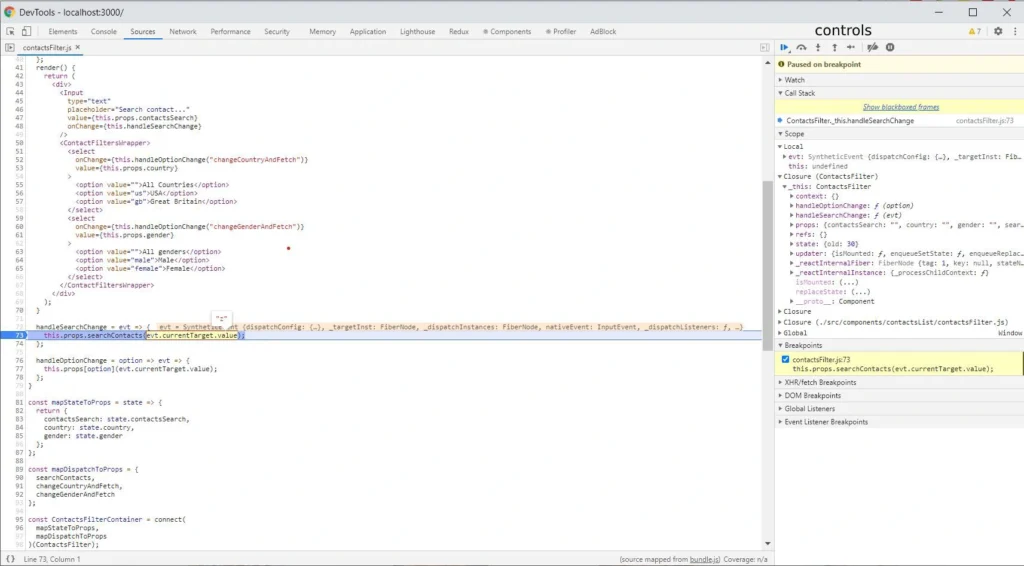

Once node_modules is added to blackboxing, we can go to the Sources tab. Let’s assume you want to follow the execution of your function in real time and check its outputs. Press Ctrl + O in the Sources tab and enter a source file name. Then put breakpoints on the lines of code that interest you and start the process in your browser. When execution reaches one of the selected lines, the browser will pause the code. See the screenshot below.

As you can see, the line of code where the browser has stopped has a blue background. On the right side, there is a bar where our command center is located. Here is a brief introduction to it.

Controls

At the top of the bar, you have the controls section. Let's focus on the crucial elements. The first control, Resume, takes us to the next marked breakpoint within the scope of the code being executed. The second control, Step over next function call, takes us to the next line of the code being executed. The last control, Deactivate breakpoints, deactivates the selected breakpoints. It’s a useful control when we have many breakpoints selected and we want to go back to clicking through the application for a while without pausing at every breakpoint.

Scopes

Below the controls is the scopes section. There are several types of scopes: local (the function currently being executed) and closures, which depend on where we are in the code (for example, the parent of the function currently being executed, or a component). For each of these scopes, the browser shows us all the variables that exist in it.

Breakpoints

The last section discussed is breakpoints. It shows which breakpoints are set and in which files. Using checkboxes, we can easily deactivate and reactivate them.
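A breakpoint can also be requested from the code itself with the standard JavaScript debugger statement. The sketch below assumes a hypothetical calculateTotal function; with DevTools open, the browser pauses on the marked line just as it would on a breakpoint set by hand, and the scopes section then shows prices, taxRate, and subtotal.

```javascript
// Hypothetical function used only to illustrate the technique.
function calculateTotal(prices, taxRate) {
  const subtotal = prices.reduce((sum, price) => sum + price, 0);

  // With DevTools open, execution pauses here like on a regular breakpoint.
  // Without a debugger attached, the statement has no effect.
  debugger;

  return subtotal * (1 + taxRate);
}

const total = calculateTotal([10, 20, 30], 0.5);
console.log('total', total);
```

Remember to remove debugger statements before committing, since they pause execution for anyone who has DevTools open.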

Tips no. 2-5

- If you use immutable.js in your project, install the Immutable.js Object Formatter plugin and activate it in browser settings. This will significantly simplify the debugging of immutable objects.

- If you do not use immutable.js in your project and you use Visual Studio Code as your IDE, we strongly recommend installing and configuring Debugger for Chrome (VSC does not have an immutable.js formatting plugin). It simplifies debugging even further and allows for faster code changes.

- If the Sources tab doesn’t show your local files, check the source map configuration in your project.

- When the browser stops on a breakpoint, you also have access to the variables in the console.

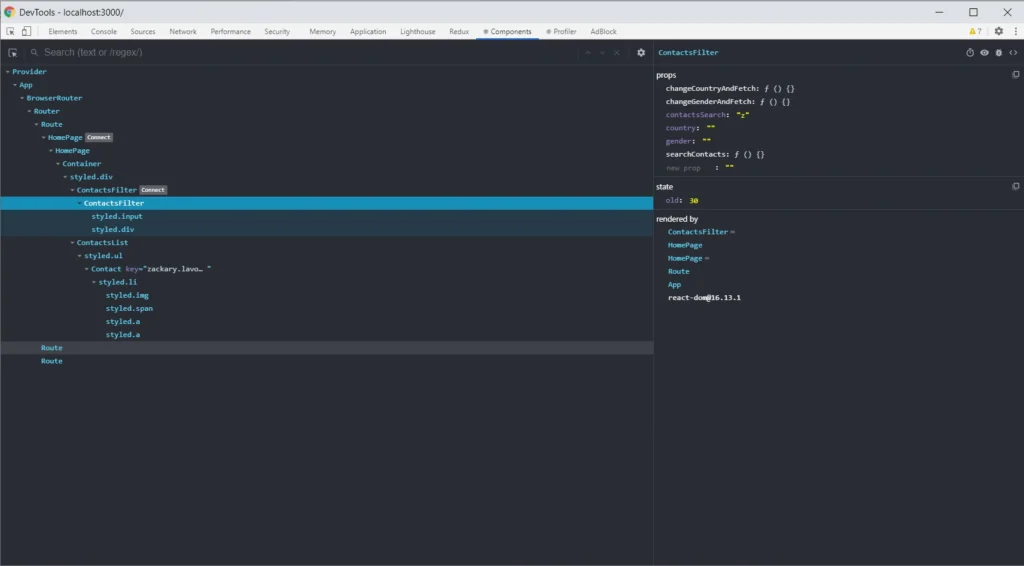

React Developer Tools

React Developer Tools is also a helpful solution. It allows you to easily view the React tree structure of your project, as well as the state and props of each component. The Select an element in the page to inspect it function is powerful, especially when you don't know the whole project. It helps you find the component you need to update.

Tip no. 6

If you use Vue.js, you can use Vue.js devtools. The extension has similar functions and gives access to Vuex (the Vue equivalent of Redux).

Redux DevTools

If you use Redux in your project, Redux DevTools is a must-have. It allows you to track the full flow of actions, state changes, and payloads, and to view the current store after each action performed. If something does not work as we assume, and everything seems fine in the code, it is worth checking which actions are being dispatched and with what payload. Sometimes there are simple mistakes, like copying a constant from the action above, renaming it without changing its value, and then triggering something completely different. Below is a gif showing the most important Redux DevTools functions.
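The copied-constant mistake described above can also be caught with a small check. The following is only a sketch under assumed names: the actionTypes object and the findDuplicateActionTypes helper are hypothetical and not part of Redux or Redux DevTools.

```javascript
// Hypothetical action type constants. The second one was copied from the
// first and renamed, but its value was never updated.
const actionTypes = {
  FETCH_USER: 'user/fetch',
  FETCH_ORDERS: 'user/fetch', // bug: should be 'orders/fetch'
};

// Returns [value, names] pairs for every value shared by several constants.
function findDuplicateActionTypes(types) {
  const namesByValue = new Map();
  for (const [name, value] of Object.entries(types)) {
    namesByValue.set(value, [...(namesByValue.get(value) ?? []), name]);
  }
  return [...namesByValue.entries()].filter(([, names]) => names.length > 1);
}

const duplicates = findDuplicateActionTypes(actionTypes);
console.log('duplicates', duplicates);
```

Such a check could run in a unit test, so the duplicate is reported before anyone has to trace it through the dispatched actions.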

Tip no. 7

If your application dispatches a large number of actions, the extension may not function properly due to insufficient memory to process that many actions. You can try to configure it in the extension options (in this case, the maxAge option).
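The maxAge option can also be passed from the application code when the store is connected to the extension. The sketch below is an assumption about how a project might do this: the value 20 is arbitrary, and the snippet deliberately does not import Redux, so it only prepares the enhancer.

```javascript
// Keep fewer actions in the DevTools history to reduce memory use.
// The value 20 is an arbitrary example, not a recommendation.
const devToolsOptions = { maxAge: 20 };

// The extension injects this function into the page when it is installed.
// Outside the browser, or without the extension, we fall back to undefined.
const devToolsEnhancer =
  typeof window !== 'undefined' && window.__REDUX_DEVTOOLS_EXTENSION__
    ? window.__REDUX_DEVTOOLS_EXTENSION__(devToolsOptions)
    : undefined;

// In a Redux project, pass devToolsEnhancer as the enhancer argument
// when creating the store.
console.log('devToolsEnhancer', devToolsEnhancer);
```

A lower maxAge means older actions disappear from the history sooner, so pick a value that still covers the flow you are debugging.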

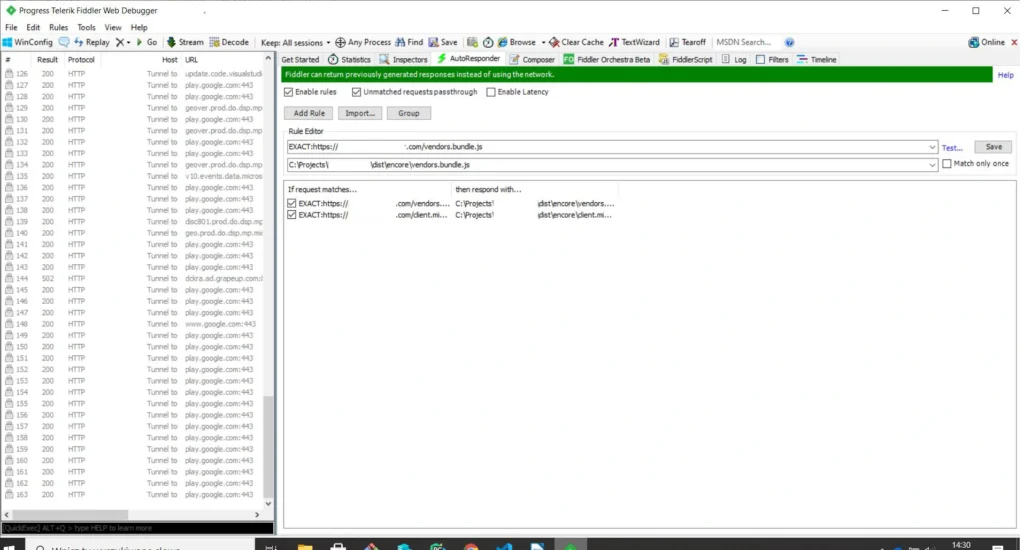

Fiddler

The last tool we would like to introduce is Fiddler. The tool was created to manage network traffic. It is extremely useful when we have production bugs and cannot copy the production data to a lower environment to debug locally. In such a situation, to have access to production data in the local environment, we redirect the traffic in the AutoResponder tab. When you open the page, instead of downloading the JS file from the server, Fiddler serves our locally built production file. Further debugging is done in the Chrome DevTools Sources tab. Below is a screenshot with the setting that redirects traffic to the local file. The program also allows you to mock endpoints.

Tip no. 8

If you want to create mocks of endpoints in an easy and fast way, you can use Mockoon.

Summary of an agile debugging of a web application

For many of us, the process of debugging a web application is associated with a headache and long hours spent in front of the computer. However, this process can be shortened and made more pleasant if you have the right tools and know how to use them. Often they are at your fingertips and free of charge. We have shared with you the most important and useful tools that we use daily.

Connected car: Challenges and opportunities for the automotive industry

The development of connected car technology accelerated digital disruption in the automotive industry. Verified Market Research valued the connected car market at USD 72.68 billion in 2019 and projected its value to reach USD 215.23 billion by 2027. Along with the rapid growth of this market’s worth, we observe the constant development of new customer-centric services that go far beyond the driving experience.

While the development of connected car technology created a demand for connectivity solutions and driver-assistance systems, companies willing to build their position in this market have to face some significant challenges. This article is the first in a mini-series that guides you through the main obstacles in building software for connected cars. We start with the basics of a connected vehicle, then dive into the details of prototyping and providing production-ready solutions. Finally, we analyze and predict the future of verticals associated with automotive: car rental enterprises, insurers, and mobility providers.

This series provides you with hands-on knowledge based on our experience in developing production-grade and cutting-edge software for the leading automotive and car rental enterprises. We share our insights and pointers to overcome recurring issues that happen to every software development team working with these technologies.

What is a Connected Car?

A Connected Car is a vehicle that can communicate bidirectionally with other systems outside the car, such as infrastructure, other vehicles, or home/office. Connected cars belong to the expanding environment of devices that comprise the Internet of Things landscape. As with all devices connected to the internet, some functions of a vehicle can be managed remotely.

Along with that, IoT devices are valuable sources of data and information that enable further development of associated services. And while most car owners would describe a connected car as a mobile application paired with a car that allows users to check the fuel level, open/close doors, control air conditioning, and, in some cases, start the ignition, this technology goes much further.

V2C - Vehicle to Cloud

Let’s focus on some real-case scenarios to showcase the capabilities of connected car technology. If a car is connected, it may also have a sat-nav system with a traffic monitoring feature that can alert the driver to a traffic jam ahead and suggest an alternative route. Or maybe there is a storm on the upcoming route, and the navigation can warn the driver. How does it work?

That is mostly possible thanks to what we call V2C - Vehicle to Cloud communication. Because a connected car is constantly sending and gathering data, the owner may also use it to locate the car if it is stolen. Telematics data is also helpful for understanding the reasons behind an accident on the road: we can analyze what happened before the accident and what may have led to the event. The data can also be used for predictive maintenance, even if the rules managing the dates are changing dynamically.

While this seems like just a nice-to-have feature for drivers, it allows car manufacturers to provide an extensive set of subscription-based features and functionalities for end-users. The availability of services may depend on the current car state: location, temperature, and technical availability. As an example: during the winter, if the car is equipped with heated seats, the temperature drops below 0°C, and the subscription for this feature has expired, the infotainment system can offer to renew it, which is more tempting when the user is cold at that moment.

V2I - Vehicle to Infrastructure

A vehicle equipped with connected car technology is not limited to communicating only with the cloud. Such a car is capable of exchanging data and information with road infrastructure, and this functionality is called V2I - Vehicle to Infrastructure communication. A car processes information from infrastructure components (road signs, lane markings, and traffic lights) to support the driver by suggesting decisions. In the next steps, V2I can provide drivers with information about traffic jams and free parking spots.

Currently, in Stuttgart, Germany, the city’s infrastructure provides live traffic light data to vehicle manufacturers, so drivers can see not just which light is on, but also how long they have to wait for the red light to switch to green again. This part of connected car technology can develop rapidly with the use of wireless communication and the digitalization of road infrastructure.

V2V - Vehicle to Vehicle

Another highly valuable type of communication provided by connected car technology is V2V - Vehicle to Vehicle. By developing an environment in which numerous cars are able to wirelessly exchange data, the automotive industry offers a new experience: every vehicle can use the information provided by any car belonging to the network, which leads to more effective communication covering traffic, car parking, alternative routes, issues on the road, or even some spots worth seeing.

It may also significantly increase safety on the road when one car notifies another, driving a few hundred meters behind it, that it has just braked hard or that the road surface is slippery, using the information from ABS, ESP, or TC systems. This has not just informational value; it is also used by Adaptive Cruise Control or Travel Assist systems to reduce the speed of vehicles automatically, increasing the safety of the travelers. V2V communication makes use of network and scale effects: the more users are connected to the network, the more helpful and complete information the network provides.

The list of use cases for connected car technology is limited only by our imagination and is growing rapidly as many teams join the movement aiming to transform the way we travel and communicate. The Connected Car revolution leads to many changes and impacts both the user experience and the business models of the associated industries.

How connected car technology impacts business models of the automotive industry

Connected cars bring innovative solutions to the whole environment comprising the automotive landscape. Original Equipment Manufacturers (OEMs) have gained new revenue streams. Vehicles now allow their users to access stores and purchase numerous features and associated services that enhance customer experience, such as infotainment systems. By delivering aftermarket services directly to a car, the automotive industry monetizes new channels. Furthermore, these systems enable automakers to deliver advertisements, which are becoming a growing source of revenue.

The development of new technology in automotive creates a change similar to the one we observed in the mobile phone market. When smartphones equipped with operating systems became the new normal, the number of new apps that allow users to manage numerous services and tasks from the device increased significantly.