Thinking out loud

Where we share the insights, questions, and observations that shape our approach.

How to expedite claims adjustment by using AI to improve virtual inspection

Seen from a high level, the claims adjustment domain is a complex set of interdependent elements: processes, data, activities, documents, systems, and many more. Many people are involved in the process, and they often struggle with inefficiencies in their daily work. This is exactly where AI can help. AI-based solutions can automate, simplify, and speed up many parts of the claims adjustment process and, eventually, reduce overall adjustment costs.

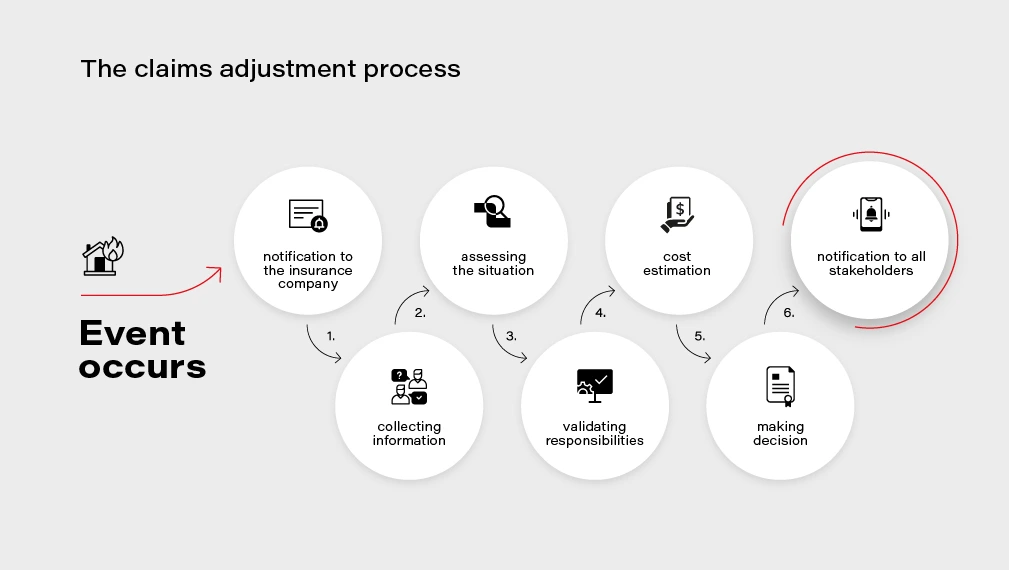

The claims adjustment process

Let's look at the claims adjustment process in more detail. It consists of multiple steps. When an event that causes a loss for the customer occurs, the customer notifies the insurance company about the loss and files a claim. The company then needs to gather all the information and documentation to understand the circumstances, assess the situation, and eventually determine its liability and estimate the value of the loss. Finally, a decision is made, and the appropriate parties, including the customer, are notified of the outcome.

At each step of this process, AI can not only introduce improvements and optimizations but also enable new possibilities and create additional value for the customer.

Let’s dive into a few examples of how AI can be applied to the claims adjustment process.

Automated input management

Incoming correspondence related to claims is very often wrongly addressed. Statistics show that, on average, 35% of messages are incorrectly addressed. Some of them are sent to a generic corporate inbox, others to the wrong people, and some even to entirely different departments. This causes a lot of confusion, and rerouting each message to the correct place takes time.

AI can be very helpful in this scenario: an algorithm can analyze the subject and content of a message, look for keywords such as the claim ID, the customer's name, or the policy number, and automatically reroute the message to the correct recipient. Furthermore, the algorithm can analyze the context and detect whether the message is a new claim report or a missing attachment that should be added to an already-filed claim. Such a solution can significantly improve efficiency and speed up the process.
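A deliberately simple, rule-based Swift sketch of the routing idea: look for a claim ID or a policy number in the subject and body, then decide where the message goes. The CLM-/POL- identifier formats and the Route cases are hypothetical; a production system would more likely combine such rules with a trained text classifier.

```swift
import Foundation

// Hypothetical routing outcomes.
enum Route: Equatable {
    case existingClaim(claimID: String)      // attach to an already-filed claim
    case policyHolder(policyNumber: String)  // probably a new claim report for this policy
    case manualTriage                        // nothing recognizable: a human decides
}

/// Returns the first substring of `text` matching the regular expression `pattern`, if any.
func firstMatch(_ pattern: String, in text: String) -> String? {
    guard let regex = try? NSRegularExpression(pattern: pattern) else { return nil }
    let range = NSRange(text.startIndex..., in: text)
    guard let match = regex.firstMatch(in: text, options: [], range: range),
          let swiftRange = Range(match.range, in: text) else { return nil }
    return String(text[swiftRange])
}

/// Hypothetical ID formats: claim IDs like "CLM-123456", policy numbers like "POL-12345678".
func route(subject: String, body: String) -> Route {
    let text = subject + "\n" + body
    if let claimID = firstMatch(#"CLM-\d{6}"#, in: text) {
        return .existingClaim(claimID: claimID)
    }
    if let policy = firstMatch(#"POL-\d{8}"#, in: text) {
        return .policyHolder(policyNumber: policy)
    }
    return .manualTriage
}
```

A claim ID wins over a policy number because it identifies the target more precisely; messages with neither fall back to a person.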

Automated processing of incoming claims

The automated processing of incoming documents and messages could be taken one step further. What if we used an AI algorithm to analyze the content of the message? A claim report can be sent using an official form, but also as a plain email message or even as a scanned paper document. The solution could analyze the document and extract the key information about the claim so that it can be automatically added to the claim registry system. At the same time, the algorithm could check whether all the required data, documents, and attachments have been provided and, if not, notify the reporter accordingly. In a "traditional" approach, this part is often manual and thus takes a lot of time. Introducing an AI-based mechanism here would drastically reduce the amount of manual work, especially for well-defined and repeatable cases, e.g., car insurance claims.

Verification of reported damage

Appraisal of the filed claim and verification of the reported damage is another lengthy step in the claims adjustment process. The adjuster needs to verify whether the reported damage is genuine and whether it includes damage that occurred previously. Computer vision techniques can automate and speed up this step, e.g., by analyzing pictures of the car taken by the customer after the accident or, for property insurance, satellite or aerial photos of a house.

Verification of incurred costs

AI-driven verification can also help identify fraudulent operations and recognize costs that are not related to the filed claim. In some cases, invoices presented for reimbursement include items or services that should not be there, or whose cost is calculated at inflated rates. AI can help compare the presented invoices with estimated costs and point out inflated rates or excess costs, for example, for medical treatment or a hospital stay. Similarly, the algorithm can verify whether car repair costs are calculated correctly by analyzing the reported damage and comparing the presented rate with the average rate for the corresponding repair services.
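The comparison step can be sketched in Swift as follows. The LineItem type, the reference-rate table, and the 20% tolerance are assumptions for illustration; in practice, the reference rates would come from historical claims data or an estimation model.

```swift
// Hypothetical invoice line item.
struct LineItem {
    let service: String
    let billedRate: Double
}

enum Finding: Equatable {
    case unrelatedToClaim(String)  // no reference rate: item not expected for this claim
    case inflatedRate(String)      // billed rate exceeds the reference by more than the tolerance
}

/// Flags items that are not expected for the claim, or whose rate exceeds
/// the reference rate by more than `tolerance` (0.2 means 20% above).
func review(items: [LineItem], referenceRates: [String: Double], tolerance: Double = 0.2) -> [Finding] {
    var findings: [Finding] = []
    for item in items {
        guard let reference = referenceRates[item.service] else {
            findings.append(.unrelatedToClaim(item.service))
            continue
        }
        if item.billedRate > reference * (1 + tolerance) {
            findings.append(.inflatedRate(item.service))
        }
    }
    return findings
}
```

Only flagged items need an adjuster's attention; everything else passes through automatically.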

Such automated verification helps flag potentially fraudulent situations and saves adjusters' time, letting them focus on the unclear cases instead of analyzing each one manually.

Accelerate online claims reporting with automated VIN recognition

In the current COVID-19 situation, digital services and products are becoming critical for all industries. Providing policyholders with the ability to use online channels and virtual services effectively is essential for the insurance industry as well.

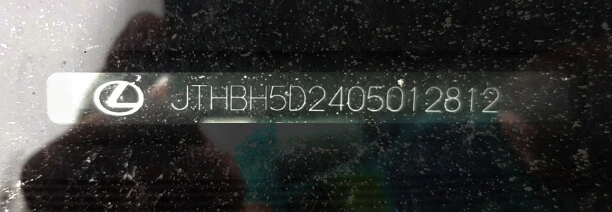

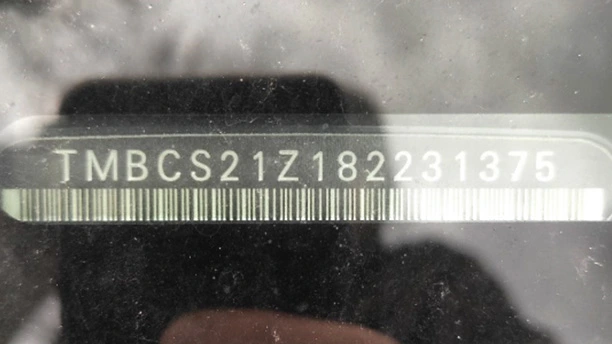

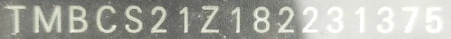

One of our customers wanted to speed up the processing of claims reported through their mobile application. The insurer faced a challenging issue: 8% of claims reported through the mobile application were rejected due to the poor quality of VIN images. Adjusters had trouble deciphering the Vehicle Identification Number and had to request the same information from the customer again. The whole process was unnecessarily prolonged and frustrating for the policyholder.

By introducing a custom machine learning model trained specifically for VIN recognition, instead of a generic cloud service, our customer increased VIN extraction accuracy from 60% to 90%, saving each adjuster 1.5 hours per day on average. Previously rejected claims can now be processed faster and without asking policyholders for information they have already provided, resulting in a higher NPS and better overall customer satisfaction.

https://www.youtube.com/watch?v=oACNXmlUgtY

Those are just a few examples of how AI can improve claims adjustment. If you would like to know more about leveraging AI technologies to improve your business, tell us about your challenges, and we will work on tackling them together.

Kubernetes cluster management: Size and resources

While managing Kubernetes clusters, we can face some demanding challenges. This article helps you manage your cluster resources properly, especially in an autoscaling environment.

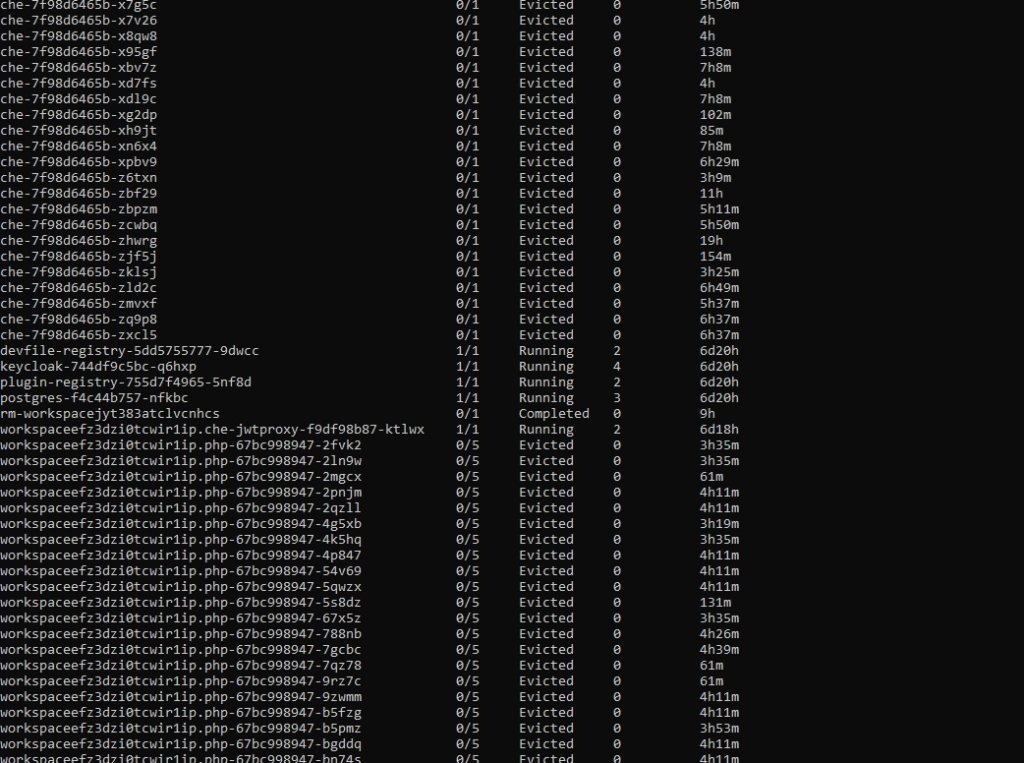

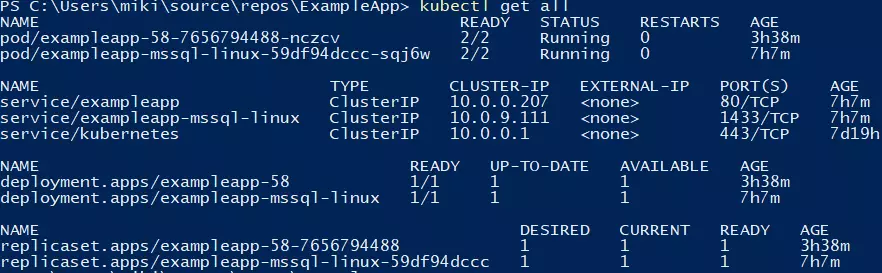

If you try to run a resource-hungry application, especially on a cluster which has autoscaling enabled, at some point this happens:

The first time you see it, it may look alarming, especially if the kubectl get pods output lists dozens of evicted pods and you only wanted to run 5. Given all the claims that you can run containers without worrying about orchestration because Kubernetes does all of that for you, this may feel overwhelming.

Well, that is true to some extent, but the real answer is: it depends. It all boils down to a crucial aspect of Kubernetes cluster management. Let's dive into the problem.

Learn more about services provided by Grape Up

You are on the Grape Up blog, where our experts share the expertise gathered in projects delivered for top enterprises. See how we work.

Enabling the automotive industry to build software-defined vehicles

Empowering insurers to create insurance telematics platforms

Providing AI & advanced analytics consulting

Kubernetes cluster resource management

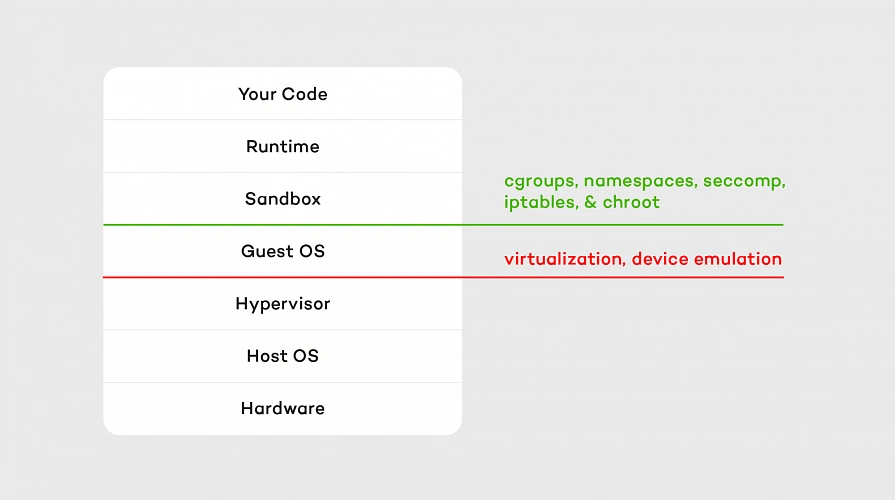

While there is a general awareness that resources are never limitless, even in a huge cluster-as-a-service solution, we rarely consider the exact layout of the cluster resources. Virtualization and containerization make it seem as if resources form a single, huge pool, which is not always true. Let’s see what it looks like.

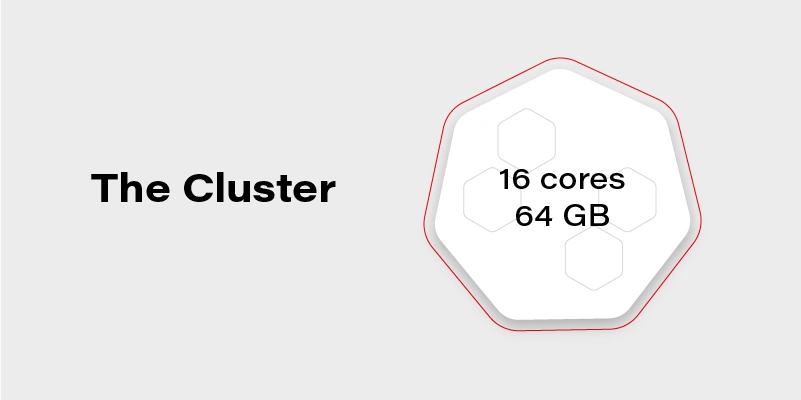

Let’s assume we have a Kubernetes cluster with 16 vCPU and 64GB of RAM.

Can we run our beautiful AI container, which requires 20GB of memory, on it? Obviously not. Why not? We have 64GB of memory available in the cluster!

Well, not really. Let’s see how our cluster looks inside:

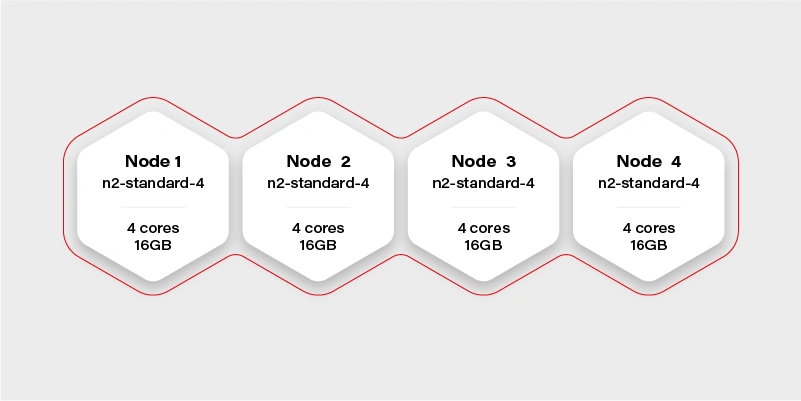

The Cluster again

There are 4 workers in the cluster, and each has 16GB of memory (in practice, a little less is available, because DaemonSets and system services running on the node take their small share). A single container can therefore use at most 16GB here, so our 20GB container can't run.
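The key point is that the scheduler places each pod on exactly one node, comparing the pod's requests with that node's free allocatable resources. A minimal Swift sketch of that check, assuming a hypothetical WorkerNode type, memory in MB, and system overhead ignored:

```swift
// Hypothetical node model. `allocatableMB` is what the scheduler can hand out;
// `requestedMB` is the sum of requests of pods already placed on the node.
struct WorkerNode {
    let name: String
    let allocatableMB: Int
    let requestedMB: Int
}

/// A pod lands on a single node, so its request must fit into the free
/// memory of one node, not into the cluster-wide total.
func canSchedule(memoryRequestMB: Int, on nodes: [WorkerNode]) -> Bool {
    nodes.contains { $0.allocatableMB - $0.requestedMB >= memoryRequestMB }
}

/// The cluster-wide total, which is what misleads us.
func clusterTotalMB(_ nodes: [WorkerNode]) -> Int {
    nodes.reduce(0) { $0 + $1.allocatableMB }
}

// Four 16GB workers, as in the example.
let workers = (1...4).map { WorkerNode(name: "worker-\($0)", allocatableMB: 16_000, requestedMB: 0) }
```

With these numbers, the total is 64,000MB, yet a 20,000MB request fits on none of the workers.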

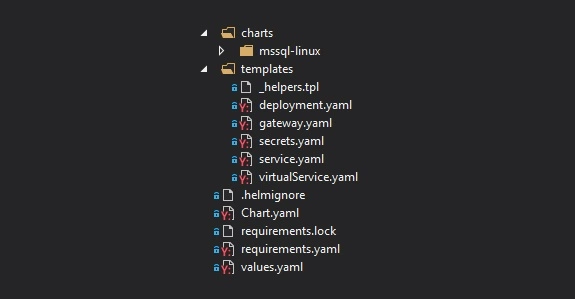

Moreover, we always have to take this limitation into account: not only when we deploy one big container, but also in complex deployments, or even with things that generally run out of the box, like Helm charts.

Let’s try another example.

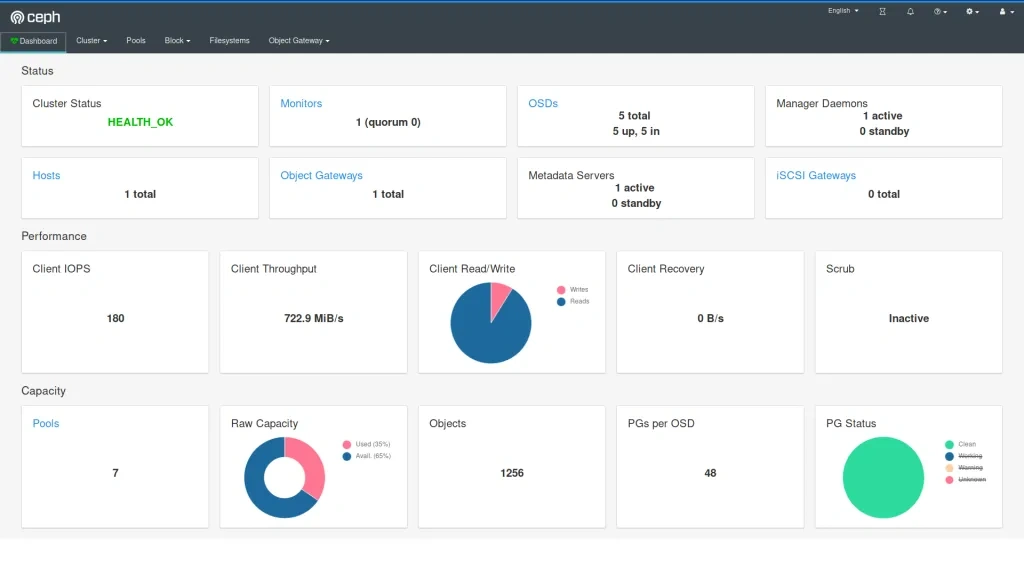

Our next task is a Ceph deployment to the same cluster. The goal is 1TB of storage split across 10 OSDs (object storage daemons) and 3 Ceph MONs (monitors). We want to put it on 2 of the nodes and leave the other 2 for the deployments that are going to use the storage. A basic but highly extensible architecture.

The first, naive approach is to just set the OSD count to 10 and the MON count to 3, add tolerations to the Ceph pods and, of course, a matching taint to Node 1 and Node 2. All Ceph deployments and pods get a nodeSelector that targets only nodes 1 and 2.

Kubernetes does its thing and runs mon-1 and mon-2 on the first worker along with 5 OSDs, and mon-3 along with the other 5 OSDs on the second worker.

Node 1: mon-1, mon-2, osd-1, osd-2, osd-3, osd-4, osd-5

Node 2: mon-3, osd-6, osd-7, osd-8, osd-9, osd-10

Nodes 3 and 4: stateless app

It worked out! And our application can now save quite a lot of large files to Ceph very quickly, so our job becomes easier. If we also deploy the dashboard and create a replicated pool, we can even see 1TB of storage available and 10 OSDs up, that's a huge achievement!

The very next morning, we check the status again and see that the available storage is around 400GB, with only 4 OSDs up. What is going on? Is this a crash? Ceph is supposed to be resilient and recover quickly from crashes, yet it does not seem to have worked very well here.

If we now check the cluster, we can see a lot of evicted OSD pods, even more than the number of OSDs we deployed, because replacement pods get evicted too. So what really happened? To figure this out, we need to go back to our initial deployment configuration and think it through.

Limits and ranges

We ran 13 pods. Three of them (the monitors) don’t need many resources, but the OSDs do. The more we use Ceph, the more memory the OSDs need, because Ceph caches a lot of data in memory. Replication and rebalancing data across the OSDs do not come for free either.

So initially after the deployment, the memory situation looks more or less like this:

Node 1: mon-1 (50MB), mon-2 (50MB), osd-1 (200MB), osd-2 (200MB), osd-3 (200MB), osd-4 (200MB), osd-5 (200MB). Total: 1100MB memory used.

Node 2: mon-3 (50MB), osd-6 (200MB), osd-7 (200MB), osd-8 (200MB), osd-9 (200MB), osd-10 (200MB). Total: 1050MB memory used.

After a few hours of extensive usage, something goes wrong.

Node 1: mon-1 (250MB), mon-2 (250MB), osd-1 (6500MB), osd-2 (5300MB), osd-3 (evicted), osd-4 (evicted), osd-5 (evicted). Total: 12300MB memory used.

Node 2: mon-3 (300MB), osd-6 (9100MB), osd-7 (5700MB), osd-8 (evicted), osd-9 (evicted), osd-10 (evicted). Total: 15100MB memory used.

We have lost almost 50% of our pods. Does that mean it’s over? No, we can quickly lose more of them, especially as the heavy traffic now targets the remaining pods. Does it mean we need more than 32GB of memory to run this Ceph cluster? No, we just need to set limits correctly, so that a single OSD can’t use all the available memory and starve the other pods.

In this case, the easiest way is to take 30GB of the 32GB available on the two storage nodes (leaving 2GB for the mons, about 650MB each, and setting proper limits for them too!) and divide it among the 10 OSDs. That gives us:

```yaml
resources:
  limits:
    memory: "3000Mi"
    cpu: "600m"
```

Is it going to work? It depends, but probably not. We have configured 15GB of memory for the five OSDs on each node and 650MB for each mon. This means the first node, which also runs two mons, requires 15 + 2 × 0.65 = 16.3GB. That is a little too much, and it does not even account for things like logging DaemonSets running on the same node. This version should do the trick:

```yaml
resources:
  limits:
    memory: "2900Mi"
    cpu: "600m"
```
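The per-node arithmetic behind both attempts, as a Swift sketch. Like the text, it treats Mi and MB as roughly equal. It also assumes 16,000MB usable per node and five OSDs plus two 650MB mons on the first node; these numbers are taken from the example, not measured.

```swift
// Assumed usable memory per storage node, in MB (the example's 16GB).
let assumedNodeMemoryMB = 16_000

/// Memory reserved by limits on the busiest node: its OSDs plus its mons.
func nodeMemoryDemandMB(osdLimitMB: Int, osdsPerNode: Int = 5,
                        monLimitMB: Int = 650, monsOnNode: Int = 2) -> Int {
    osdLimitMB * osdsPerNode + monLimitMB * monsOnNode
}

/// True if the limits on the node add up to no more than its assumed memory.
func fitsOnNode(osdLimitMB: Int) -> Bool {
    nodeMemoryDemandMB(osdLimitMB: osdLimitMB) <= assumedNodeMemoryMB
}
```

With a 3000MB OSD limit, the node needs 16,300MB, which does not fit. With 2900MB, it needs 15,800MB, leaving about 200MB of headroom for DaemonSets and system services.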

Quality of Service

One more caveat: if we also set a request for each resource that exactly matches the limit, Kubernetes treats the pod differently:

```yaml
resources:
  requests:
    memory: "2900Mi"
    cpu: "600m"
  limits:
    memory: "2900Mi"
    cpu: "600m"
```

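This pod configuration gets the Guaranteed QoS class in Kubernetes. If requests are lower than limits, or set for only some resources, the pod is Burstable; if no requests or limits are set at all, it is BestEffort. (If only limits are set, as in the previous snippets, Kubernetes by default copies them into the requests, so those pods would already be Guaranteed; setting the requests explicitly makes the intent visible.) Guaranteed pods are the last candidates for eviction when a node runs short of resources: by setting requests equal to limits, we state exactly how many resources the pod uses, and the scheduler reserves all of them up front. This reduces the scheduler's flexibility but makes the whole deployment much more resilient.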

Obviously, for mission-critical systems, “best effort” is never enough.
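The QoS rules can be summarized in a short Swift sketch based on the rules in the Kubernetes documentation. A pod is BestEffort when no container sets any CPU or memory request or limit. It is Guaranteed when every container has CPU and memory limits with requests equal to them (a missing request defaults to the limit). Otherwise, it is Burstable. The ContainerResources type is hypothetical, and the sketch only looks at the containers passed in.

```swift
/// Per-container CPU (millicores) and memory (MiB) settings; nil means "not set".
struct ContainerResources {
    var cpuRequest: Int? = nil
    var cpuLimit: Int? = nil
    var memoryRequest: Int? = nil
    var memoryLimit: Int? = nil
}

enum QoSClass { case guaranteed, burstable, bestEffort }

func qosClass(of containers: [ContainerResources]) -> QoSClass {
    // BestEffort: no container sets any request or limit.
    let nothingSet = containers.allSatisfy {
        $0.cpuRequest == nil && $0.cpuLimit == nil && $0.memoryRequest == nil && $0.memoryLimit == nil
    }
    if nothingSet { return .bestEffort }

    // Guaranteed: every container has both limits, and requests equal them.
    let guaranteed = containers.allSatisfy { c in
        guard let cpuLimit = c.cpuLimit, let memoryLimit = c.memoryLimit else { return false }
        // A missing request defaults to the limit.
        return (c.cpuRequest ?? cpuLimit) == cpuLimit && (c.memoryRequest ?? memoryLimit) == memoryLimit
    }
    return guaranteed ? .guaranteed : .burstable
}
```

For example, the OSD container from the last snippet (600m/2900Mi for both requests and limits) classifies as Guaranteed.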

Resources in an autoscaling environment

If we can calculate or estimate the required resources correctly to match the cluster size, limits and quality of service may be enough. Sometimes, though, the configuration is more sophisticated and the cluster size is fluid: it can scale horizontally up and down, changing the number of available workers.

In this case, the planning goes in two parallel paths - you need to plan for the minimal cluster size and the maximum cluster size - assuming linear scaling of resources.

We cannot assume that applications will behave and leave room for the other cluster cohabitants. If pods are allowed to scale horizontally or vertically while the cluster is expanding, other pods may be evicted when the cluster scales back down. To mitigate this issue, Kubernetes offers two main concepts: Pod Priority and Pod Disruption Budget.

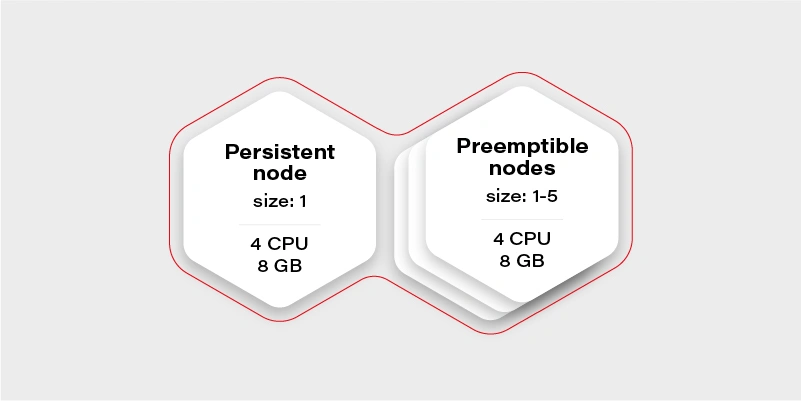

Let’s start again by creating our test scenario. This time we don’t need tons of nodes, so let’s just create a cluster with two node groups: one consisting of regular instances (let’s call it persistent) and one consisting of preemptible/spot instances (let’s call it preemptible for the sake of the experiment).

The preemptible node group scales up when the CPU usage of its existing VMs exceeds 70%.

The advantage of preemptible/spot instances is their price: they are much cheaper than regular VMs with the same performance. The only drawback is that their lifetime is not guaranteed: the instance can be terminated whenever the cloud provider needs the capacity elsewhere, for maintenance purposes, or simply after 24 hours. This means we can only run fault-tolerant, stateless workloads there.

Which should be most of what runs in your cluster if you follow the twelve-factor methodology, right?

Why is there one persistent node in our cluster, then? To prepare for the rare case when none of the preemptible nodes are running: it keeps the minimal set of containers needed to keep the application operable.

Our application will consist of:

| Application | Replicas | CPUs per replica | Memory per replica |
|---|---|---|---|
| Redis cluster with one redis master (has to run on a persistent node) | 1 | 0.5 | 300MB |
| Frontend application (immutable) | 2 | 0.5 | 500MB |
| Backend application (immutable) | 2 | 0.7 | 500MB |
| Video converter application (immutable) | 1 | 1 | 2GB |
| Sum (all replicas) | | 3.9 | 4.3GB |
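Planning for both the minimal and the maximum cluster size starts with totaling such a table. Below is a minimal Swift sketch that multiplies per-replica requests by replica counts. The Workload type and its field names are hypothetical. CPU is kept in millicores and memory in MB to avoid floating-point rounding.

```swift
/// One row of the table: per-replica requests and a replica count.
struct Workload {
    let name: String
    let replicas: Int
    let milliCPU: Int   // per replica
    let memoryMB: Int   // per replica
}

let minimalSetup = [
    Workload(name: "redis", replicas: 1, milliCPU: 500, memoryMB: 300),
    Workload(name: "frontend", replicas: 2, milliCPU: 500, memoryMB: 500),
    Workload(name: "backend", replicas: 2, milliCPU: 700, memoryMB: 500),
    Workload(name: "video-converter", replicas: 1, milliCPU: 1000, memoryMB: 2000),
]

/// Total CPU and memory across all replicas of all workloads.
func totals(_ workloads: [Workload]) -> (milliCPU: Int, memoryMB: Int) {
    var cpu = 0
    var memory = 0
    for w in workloads {
        cpu += w.replicas * w.milliCPU
        memory += w.replicas * w.memoryMB
    }
    return (cpu, memory)
}
```

For the setup above, this yields 3900 millicores and 4300MB, matching the table's sum. Feeding the same function the maximum replica counts gives the other bound of the plan.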

We can configure the redis master to run on the persistent node using a node selector. Then we just deploy everything else, and Bob is your uncle.

Horizontal Pod Autoscaler

Well, we have an autoscaling node group, but no pod autoscaling configured in the cluster. This means we never actually trigger cluster autoscaling, and the cluster stays at two workers all the time, because the application itself never increases its replica count. Let’s start with the Horizontal Pod Autoscaler:

Frontend:

```yaml
apiVersion: autoscaling/v2
kind: HorizontalPodAutoscaler
metadata:
  name: frontend-hpa
spec:
  scaleTargetRef:
    apiVersion: apps/v1
    kind: Deployment
    name: frontend
  minReplicas: 2
  maxReplicas: 10
  metrics:
    - type: Resource
      resource:
        name: cpu
        target:
          type: Utilization
          averageUtilization: 75
```

Backend:

```yaml
apiVersion: autoscaling/v2
kind: HorizontalPodAutoscaler
metadata:
  name: backend-hpa
spec:
  scaleTargetRef:
    apiVersion: apps/v1
    kind: Deployment
    name: backend
  minReplicas: 2
  maxReplicas: 10
  metrics:
    - type: Resource
      resource:
        name: cpu
        target:
          type: Utilization
          averageUtilization: 75
```

Video converter:

```yaml
apiVersion: autoscaling/v2
kind: HorizontalPodAutoscaler
metadata:
  name: video-converter-hpa
spec:
  scaleTargetRef:
    apiVersion: apps/v1
    kind: Deployment
    name: video-converter
  minReplicas: 1
  maxReplicas: 25
  metrics:
    - type: Resource
      resource:
        name: cpu
        target:
          type: Utilization
          averageUtilization: 25
```

Now the minimum configuration matches the one we described for the deployment: each minReplicas equals the replica count from the table above. Why does the video converter have such a low target average utilization? When multiple conversions are enqueued, it makes autoscaling react faster: as soon as the average CPU usage reaches 25% of the requested CPU, a new replica is spawned. This is a very simple configuration; if you need something more sophisticated, check the HPA scaling policies.
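The scaling decision itself follows a simple ratio. Below is a simplified Swift sketch of the rule the Kubernetes HPA documentation describes for a utilization target: desired = ceil(current × currentUtilization / targetUtilization). Scaling is skipped when the ratio is within a tolerance of 0.1, and the result is clamped to minReplicas and maxReplicas. Utilization is measured relative to the pods' CPU requests. The real controller also handles missing metrics, not-ready pods, and a scale-down stabilization window, which this sketch omits.

```swift
/// Simplified HPA replica calculation for a resource-utilization target.
func desiredReplicas(current: Int, currentUtilization: Double, targetUtilization: Double,
                     minReplicas: Int, maxReplicas: Int, tolerance: Double = 0.1) -> Int {
    let ratio = currentUtilization / targetUtilization
    // Close enough to the target: keep the current replica count.
    let proposed = abs(ratio - 1.0) <= tolerance
        ? current
        : Int((Double(current) * ratio).rounded(.up))
    return min(max(proposed, minReplicas), maxReplicas)
}
```

For the video converter, one replica at 100% CPU with a 25% target yields 4 replicas in a single step. With a 75% target, it would only grow to 2, which is why the low target speeds up scaling.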

What might happen if we now test our environment and enqueue 50 video conversions each taking around 10 minutes?

It depends, but the likely scenario is that the video converter scales up to 25 instances. What happens to the other containers in the cluster? Some of them may be evicted: maybe backend pods, maybe frontend pods, or maybe even redis. There is a high risk that the setup breaks down and becomes inaccessible to end users.

Can we mitigate the issue? Yes. For example, we can create priority classes and assign a lower one to the video converter. The higher a pod's priority, the more important it is to the scheduler. If one of two pods has to be evicted, the one with the lower priority goes first. If two pods with different priorities are waiting to be scheduled, the higher-priority one takes precedence.

```yaml
apiVersion: scheduling.k8s.io/v1
kind: PriorityClass
metadata:
  name: high-priority
value: 100000
globalDefault: false
description: "This is high priority class for important workloads"
```

So if we give the converter a lower priority, we declare that the frontend and backend pods are more important, and in the worst case, the video converter pods are the ones evicted from the cluster.

However, this does not guarantee that the backend can’t evict the frontend.
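The scheduler's preemption logic is far more involved, but its core idea can be sketched in Swift. To make room for a pending pod, only pods with strictly lower priority are candidates, and the lowest-priority ones go first. The RunningPod type and the memory-only accounting are simplifications for illustration. The real scheduler also chooses a node, honors graceful termination, and tries to respect PodDisruptionBudgets.

```swift
/// Hypothetical view of a pod running on a node.
struct RunningPod {
    let name: String
    let priority: Int
    let memoryMB: Int
}

/// Picks pods with strictly lower priority, lowest first, until `needMB` is freed.
/// Returns nil if even evicting all of them would not free enough memory.
func preemptionVictims(for incomingPriority: Int, needMB: Int, on pods: [RunningPod]) -> [String]? {
    var freed = 0
    var victims: [String] = []
    let candidates = pods
        .filter { $0.priority < incomingPriority }
        .sorted { $0.priority < $1.priority }
    for pod in candidates {
        if freed >= needMB { break }
        victims.append(pod.name)
        freed += pod.memoryMB
    }
    return freed >= needMB ? victims : nil
}

// Converters use a lower priority class than the frontend and backend (values are examples).
let nodePods = [
    RunningPod(name: "converter-1", priority: 1_000, memoryMB: 2_000),
    RunningPod(name: "converter-2", priority: 1_000, memoryMB: 2_000),
    RunningPod(name: "frontend-1", priority: 100_000, memoryMB: 500),
    RunningPod(name: "backend-1", priority: 100_000, memoryMB: 500),
]
```

A pending frontend pod can displace a converter, but a pending converter cannot displace anything. Pods of equal priority never preempt each other, so the frontend and backend are only protected from each other if their priorities differ.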

There is also an alternative that allows us to have better control over the scheduling of the pods. It is called…

Pod Disruption Budget

This resource lets us configure the minimum number of a deployment's pods that must keep running during voluntary disruptions, such as node drains or cluster scale-down. It is stricter than priority alone: it can even block a node drain if evicting a pod would drop the number of available replicas below the budget, for example, because there is not enough space on other workers to reschedule it.

The configuration is straightforward:

```yaml
apiVersion: policy/v1
kind: PodDisruptionBudget
metadata:
  name: frontend-pdb
spec:
  minAvailable: 2
  selector:
    matchLabels:
      app: frontend
```

From now on, voluntary disruptions, such as node drains or the cluster autoscaler removing a node, cannot reduce the number of available frontend pods below 2. This way, we can assign minimums to all the deployments and make sure there are always at least 1 or 2 pods that can handle requests. Note that a PDB cannot prevent involuntary disruptions, such as a preemptible VM being reclaimed by the cloud provider.

This is the easiest and safest way to make sure that pod autoscaling and cluster scale-down do not affect the overall stability of the solution, as long as the minimal set of containers covered by disruption budgets fits into the minimal cluster size and is enough to handle the bare minimum of requests.
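For a budget with an absolute minAvailable, the arithmetic is simple. In the Swift sketch below (function names are hypothetical), the eviction API used by drains allows as many voluntary disruptions as there are healthy pods above the minimum.

```swift
/// Voluntary disruptions currently allowed by a PDB with an absolute `minAvailable`.
func disruptionsAllowed(currentHealthy: Int, minAvailable: Int) -> Int {
    max(0, currentHealthy - minAvailable)
}

/// An eviction request (e.g. from a node drain) succeeds only while the budget allows it.
func canEvict(currentHealthy: Int, minAvailable: Int) -> Bool {
    disruptionsAllowed(currentHealthy: currentHealthy, minAvailable: minAvailable) > 0
}
```

With the frontend at its minimum of 2 healthy pods, a drain has to wait until a replacement pod is running elsewhere. With 5 healthy pods, up to 3 can be evicted.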

Connecting the dots

Now we have all the pieces required to create a stable solution. We can configure the HPAs to have the same minimum number of replicas as the PDBs to make the scheduler's life easier. We know our maximum cluster size and have made sure limits equal requests, so the pods get the Guaranteed QoS class and are the last to be evicted. Let’s see what we get with the current configuration:

| Application | Min. replicas | Max. replicas | PDB (minAvailable) | CPUs per replica | Memory per replica |
|---|---|---|---|---|---|
| Redis cluster with one redis master (has to run on a persistent node) | 1 | 1 | 1 | 0.5 | 300MB |
| Frontend application (immutable) | 2 | 10 | 2 | 0.5 | 500MB |
| Backend application (immutable) | 2 | 10 | 2 | 0.7 | 500MB |
| Video converter application (immutable) | 1 | 25 | 1 | 1 | 2GB |
| Sum (min) | | | | 3.9 | 4.3GB |
| Sum (max) | | | | 37.5 | ~60.3GB |

Not bad. It could even stay as it is: although the sum of the maximums exceeds the current maximum cluster size of 24 cores with 48GB of memory, the configuration we went through should handle that, and it gives the scheduler a little flexibility. For example, if there is very little load on the frontend and backend but a huge pile of data to convert, the converter can scale up to approximately 19-21 instances, which is nice to have.
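Here is a quick Swift check of where the 19-21 estimate comes from. With redis, the frontend, and the backend at their minimum replica counts from the table above, how many 1-CPU/2GB converters fit into 24 cores and 48GB? The sketch works in millicores and MB and ignores system overhead and DaemonSets (an assumption), so it yields the upper end of the range.

```swift
// Cluster maximum from the text: 24 cores, 48GB.
let clusterMilliCPU = 24_000
let clusterMemoryMB = 48_000

// Everything except the converter at minimum replicas:
// redis 1 x (500m, 300MB), frontend 2 x (500m, 500MB), backend 2 x (700m, 500MB).
let baselineMilliCPU = 1 * 500 + 2 * 500 + 2 * 700
let baselineMemoryMB = 1 * 300 + 2 * 500 + 2 * 500

/// Converter replicas that fit into what is left; the scarcer resource decides.
func maxConverters(converterMilliCPU: Int = 1_000, converterMemoryMB: Int = 2_000) -> Int {
    let byCPU = (clusterMilliCPU - baselineMilliCPU) / converterMilliCPU
    let byMemory = (clusterMemoryMB - baselineMemoryMB) / converterMemoryMB
    return min(byCPU, byMemory)
}
```

CPU is the binding constraint here: 21 converters by CPU versus 22 by memory.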

There is no one design that fits all

Is there anything wrong with the current configuration? Well, there can be, but we are going into unknown depths of “it depends.”

It all starts with a simple question: what is the purpose of my solution/architecture, and what are the KPIs? Let’s look at the example again: it is a video converter with a web application. A pretty basic solution that scales up when required to accommodate a higher load. But what is more important: faster conversion or a more responsive UI?

It all boils down to the product requirements, and in general, it is easy to solve. There are three paths we can follow from now on:

The I don’t care path

If it does not matter from the user and product perspective, just leave it and see how it performs. Maybe even two frontend pods can handle a lot of load? Or maybe nobody cares about latency as long as nothing crashes unexpectedly? Don’t overengineer, and avoid premature optimization: let it be and see if it’s fine. If it’s not, the other two paths are still available.

The I know what matters most path

This path requires some knowledge about priorities. If the priority is a smooth and scalable UI and it’s fine to have quite a few conversions waiting, put the higher priority on the frontend and backend deployments, as described in the previous paragraphs. If video conversion is the key, put the higher priority on it. Whichever you choose, that deployment will be able to scale up at the expense of the other one. This path works especially well when the loads rarely peak at the same time and can scale up and down independently; the next path does not fit that scenario.

The I want to be safe path

The last path is straightforward: set the maximums so that their sum is close to the cluster limits, but not above them:

| Application | Min. replicas | Max. replicas | PDB (minAvailable) | CPUs per replica | Memory per replica |
|---|---|---|---|---|---|
| Redis cluster with one redis master (has to run on a persistent node) | 1 | 1 | 1 | 0.5 | 300MB |
| Frontend application (immutable) | 2 | 8 | 2 | 0.5 | 500MB |
| Backend application (immutable) | 2 | 8 | 2 | 0.7 | 500MB |
| Video converter application (immutable) | 1 | 13 | 1 | 1 | 2GB |
| Sum (min) | | | | 3.9 | 4.3GB |
| Sum (max) | | | | 23.1 | 34.3GB |

Now there is some room in the memory department, so we can, for example, give the pods more memory. We are also always safe, because most of the time there will be no fighting over resources. It can happen only while the cluster is scaling up.

Is this a perfect solution? Not really: when there is no traffic on the UI (frontend and backend), the cluster could fit 20 video converters at once, and we artificially limit the deployment's ability to scale.

Autoscaling considerations

When it comes to autoscaling, there are a few things to keep in mind. First, its timing is unpredictable: it’s impossible to say how long it will take the cloud provider to spin up a VM. It may take seconds, or it may take minutes (in general, it rarely takes less than a minute), so starting very small in the hope that autoscaling will absorb peak loads may not be the greatest idea.

The other often-forgotten thing is that whatever scales up eventually scales down. If a deployment scales down and its pods are truly stateless and can handle it gracefully, it is not a big deal. When the cluster scales down, however, we need to remember that it effectively shuts down VMs. Something may still be running on them, and the scheduler has to quickly move that workload to other workers. This has to be thoroughly tested to make sure it does not break the application's operation.

Kubernetes cluster management - summary

This is the end of our rather long journey through Kubernetes cluster size and resource management. There is much more to it, especially for bigger clusters or complex problems, which may come in handy later on, like configuring eviction policies, namespace requests and limits, or topology management, which is useful when specific nodes serve specific purposes. That said, what we have gone through in this article should be perfectly fine and serve even quite complex solutions well. Good luck, and we wish you no evicted pods in the future!

Variable key names for Codable objects: How to make Swift Codable protocol even more useful?

It’s hard to imagine a modern Swift iOS application that doesn’t work with multiple data sources, like servers, a local cache DB, etc., or doesn’t parse/convert data between different formats. While the Swift Codable protocol is a great solution for this purpose, it also has some important drawbacks when developing a complex app that deals with multiple data formats. In this article, you will learn how to improve the Swift Codable mechanism and why it’s important.

Swift has a great feature for encoding/decoding data in key-value formats: the Codable protocol. It lets you store data in, e.g., JSON or plist format by, at a minimum, just defining the names of the keys under which the corresponding values should be stored.

Advantages and disadvantages of Swift Codable protocol

Here are the advantages of Codable protocol:

1) Type safety. You don't need typecasting or parsing of the strings read from the file. Swift does all the low-level reading and parsing for you and returns a ready-to-use object of a concrete type.

2) Simplicity of usage. At a minimum, you may just declare that your type conforms to the corresponding protocol (either Codable or one of its parts, Decodable or Encodable). The compiler will then match the keys from your data (e.g., JSON) automatically, based on the names of your type's properties. If you need more advanced matching of key names to your type's properties (and in most real-life cases you do), you may define a CodingKeys enum that does the mapping.

3) Extensibility. When you need more advanced parsing, you may implement the initializer and encoding method (init(from:) and encode(to:)) yourself. This allows you, for example, to decode several JSON fields and combine them into a single value, or to apply an advanced transformation before assigning a value to your Codable object's property.
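As a minimal illustration of point 2, here is a hypothetical Account type whose property names differ from the JSON field names, with CodingKeys doing the mapping:

```swift
import Foundation

// Hypothetical model: Swift-style property names, snake_case JSON keys.
struct Account: Codable {
    let userName: String
    let age: Int

    // Maps each property to the key used in the data.
    enum CodingKeys: String, CodingKey {
        case userName = "user_name"
        case age = "user_age"
    }
}

let json = Data(#"{"user_name": "Anna", "user_age": 31}"#.utf8)
let account = try! JSONDecoder().decode(Account.self, from: json)
```

Encoding an Account with JSONEncoder uses the same keys, so a round trip through the same format is symmetric.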

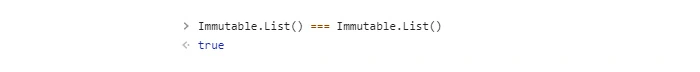

Despite its flexibility, the Codable approach has a serious limitation. In real-life tasks, the same data often needs to be stored in several data formats at the same time. For example, data coming from a server may be stored locally as a cache. Info about the user account coming from the server is often stored locally to keep the user signed in. At first glance, the Swift Codable protocol fits this case perfectly. However, as soon as one data source changes the names of the keys for the stored values, the data is no longer readable by the Codable type.

As an example, let's imagine an application that gets user info for a user account from the server and stores it locally to be used when the app is relaunched. In this case, the proper way to parse the JSON data from the server into a model object is the Codable protocol. The simplest way to store the object locally is to use Codable to encode it (e.g., in plist format) and save the result. But the Codable object uses a certain set of keys, defined in our example by the server's JSON field names. So if the server changes the names of the JSON fields it returns, we have to change the Codable implementation to match the new field names, and it will then use the new keys to encode/decode data. Since the same implementation is also used for local data, the user info that was previously saved locally becomes unreadable.

To generalize, if we have multiple data sources for the same keyed data, the Codable implementation will stop working as soon as one of the data sources changes the names of the keys.
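The failure can be reproduced in a few lines. In this sketch, the type names and the renamed login field are hypothetical. Data cached by the old model cannot be decoded once CodingKeys follow the server's new field name.

```swift
import Foundation

// Model as shipped in the previous app version: keys match the old server JSON.
struct UserV1: Codable {
    let userName: String
    enum CodingKeys: String, CodingKey { case userName = "user_name" }
}

// Updated model after a hypothetical server rename from "user_name" to "login".
// The same CodingKeys are used for the local cache, too.
struct UserV2: Codable {
    let userName: String
    enum CodingKeys: String, CodingKey { case userName = "login" }
}

// Cache written by the previous version (JSON for brevity; a plist cache behaves the same).
let cached = try! JSONEncoder().encode(UserV1(userName: "Anna"))

/// Tries to read the old cache with the updated model.
func readCache(_ data: Data) -> UserV2? {
    do {
        return try JSONDecoder().decode(UserV2.self, from: data)
    } catch DecodingError.keyNotFound(let key, _) {
        // The old cache has no "login" key, so decoding fails here.
        print("Missing key: \(key.stringValue)")
        return nil
    } catch {
        return nil
    }
}
```

The data itself is intact; only the key names no longer line up, which is exactly the coupling the following approaches try to remove.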

Approach with multiple entities

Let's see how to improve the Swift Codable approach to handle such a situation properly. We need a way to encode/decode data from each data source without requiring the key names to be the same. To do that, we may write a separate model type for each data source.

Back to our example with server and local data, we’ll have the following code:

```swift
// Server user info
struct ServerUserInfo: Codable {
    let user_name: String
    let email_address: String
    let user_age: Int
}

// Local user info to store in User Defaults
struct LocalUserInfo: Codable {
    let USER_NAME: String
    let EMAIL: String
    let AGE: Int
}
```

So we have two different structures: one to encode/decode user info from the server and the other to encode/decode data for local usage in User Defaults. Semantically, though, this is the same entity, so code that works with such an object should be able to use either structure interchangeably. For this purpose, we may declare the following protocol:

```swift
protocol UserInfo {
    var userName: String { get }
    var email: String { get }
    var age: Int { get }
}
```

Each user info structure will then conform to the protocol:

```swift
extension LocalUserInfo: UserInfo {
    var userName: String {
        return USER_NAME
    }

    var email: String {
        return EMAIL
    }

    var age: Int {
        return AGE
    }
}

extension ServerUserInfo: UserInfo {
    var userName: String {
        return user_name
    }

    var email: String {
        return email_address
    }

    var age: Int {
        return user_age
    }
}
```

So, code that requires user info will use it via UserInfo protocol.

Such a solution is very straightforward and easy to read. However, it requires a lot of code: we have to define a separate structure for each format a particular entity can be encoded to or decoded from. Additionally, we need to define a protocol describing the entity and make all the structures conform to it.
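To see the approach end to end, here is a self-contained Swift sketch. It repeats the structures above, decodes server JSON, and re-encodes it in the local format through the UserInfo protocol. The init(_:) converter, the greeting(for:) helper, and the sample values are additions for illustration, not part of the original design.

```swift
import Foundation

// Structures from the article, repeated so the sketch compiles on its own.
struct ServerUserInfo: Codable { let user_name: String; let email_address: String; let user_age: Int }
struct LocalUserInfo: Codable { let USER_NAME: String; let EMAIL: String; let AGE: Int }

protocol UserInfo {
    var userName: String { get }
    var email: String { get }
    var age: Int { get }
}

extension ServerUserInfo: UserInfo {
    var userName: String { user_name }
    var email: String { email_address }
    var age: Int { user_age }
}

extension LocalUserInfo: UserInfo {
    var userName: String { USER_NAME }
    var email: String { EMAIL }
    var age: Int { AGE }

    // Hypothetical converter: builds the local storage format from any UserInfo.
    init(_ info: any UserInfo) {
        self.init(USER_NAME: info.userName, EMAIL: info.email, AGE: info.age)
    }
}

// Code that only cares about the entity works with either structure.
func greeting(for user: any UserInfo) -> String { "Hello, \(user.userName)" }

let serverJSON = Data(#"{"user_name": "anna", "email_address": "anna@example.com", "user_age": 31}"#.utf8)
let fromServer = try! JSONDecoder().decode(ServerUserInfo.self, from: serverJSON)
let cacheData = try! JSONEncoder().encode(LocalUserInfo(fromServer))
let fromCache = try! JSONDecoder().decode(LocalUserInfo.self, from: cacheData)
```

The conversion initializer is where this approach's extra code accumulates: every new format needs its own structure, its own protocol conformance, and usually such a converter.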

Approach with variational keys

Let’s find another approach that makes it possible to use a single structure to encode/decode data using different key sets for different formats, while keeping it simple to use. Obviously, we cannot have the coding keys bound to the property names as in the previous approach. This means we’ll need to implement the init(from:) and encode(to:) methods of the Codable protocol ourselves. Below is a UserInfo structure, this time a single struct rather than a protocol, set up for coding in the JSON format from our example.

```swift
// In this approach, UserInfo is a single struct.
struct UserInfo {
    let userName: String
    let email: String
    let age: Int
}

extension UserInfo: Codable {
    // Keys used by the server JSON.
    private enum Keys: String, CodingKey {
        case userName = "user_name"
        case email = "email_address"
        case age = "user_age"
    }

    init(from decoder: Decoder) throws {
        let container = try decoder.container(keyedBy: Keys.self)
        self.userName = try container.decode(String.self, forKey: .userName)
        self.email = try container.decode(String.self, forKey: .email)
        self.age = try container.decode(Int.self, forKey: .age)
    }

    func encode(to encoder: Encoder) throws {
        var container = encoder.container(keyedBy: Keys.self)
        try container.encode(userName, forKey: .userName)
        try container.encode(email, forKey: .email)
        try container.encode(age, forKey: .age)
    }
}
```

In fact, to make the code above decode and encode another data format, we only need to change the keys themselves. So far, we’ve used a simple enum conforming to the CodingKey protocol to define the keys. However, any type conforming to CodingKey will do, for example a structure. A particular instance of such a structure then represents the coding key passed to container.decode() or container.encode(), while the string it wraps is the key name used by a particular data format. The code of such a structure is provided below:

```swift
struct StringKey: CodingKey {
    let stringValue: String
    let intValue: Int?

    init?(stringValue: String) {
        self.intValue = nil
        self.stringValue = stringValue
    }

    init?(intValue: Int) {
        self.intValue = intValue
        self.stringValue = "\(intValue)"
    }
}
```

So, StringKey just wraps a concrete key of a particular data format. For example, to decode userName from JSON, we create a StringKey instance by passing the JSON field name user_name to its init?(stringValue:) initializer.
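As a quick illustration of StringKey in isolation, the sketch below decodes a user name whose JSON key is only known at runtime. The UserName type, the keyName userInfo key, and the decodeUserName(from:key:) helper are hypothetical and exist only for this example:

```swift
import Foundation

// StringKey as defined above: wraps an arbitrary key string.
struct StringKey: CodingKey {
    let stringValue: String
    let intValue: Int?
    init?(stringValue: String) { self.stringValue = stringValue; self.intValue = nil }
    init?(intValue: Int) { self.stringValue = "\(intValue)"; self.intValue = intValue }
}

// Hypothetical type whose key name is supplied at runtime through userInfo.
struct UserName: Decodable {
    static let keyNameKey = CodingUserInfoKey(rawValue: "keyName")!
    let value: String

    init(from decoder: Decoder) throws {
        // Fall back to the server key if no key name was provided.
        let keyName = (decoder.userInfo[Self.keyNameKey] as? String) ?? "user_name"
        let container = try decoder.container(keyedBy: StringKey.self)
        // StringKey(stringValue:) never returns nil, so the force unwrap is safe.
        value = try container.decode(String.self, forKey: StringKey(stringValue: keyName)!)
    }
}

// Hypothetical helper: decode the user name stored under `key`.
func decodeUserName(from json: String, key: String) throws -> String {
    let decoder = JSONDecoder()
    decoder.userInfo[UserName.keyNameKey] = key
    return try decoder.decode(UserName.self, from: Data(json.utf8)).value
}

// One structure, two formats: only the wrapped key string differs.
print(try! decodeUserName(from: #"{"user_name": "ann"}"#, key: "user_name"))  // prints ann
print(try! decodeUserName(from: #"{"USER_NAME": "ann"}"#, key: "USER_NAME"))  // prints ann
```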

Now we need a way to define key sets for each data format. To each property of UserInfo, we need to assign the keys used to encode/decode the property’s value, e.g. the userName property corresponds to the user_name key in JSON and to the USER_NAME key in the plist format. To represent each property, we may use Swift’s KeyPath type. We would also like to store information about which data format each key is used for. Translating the above into code, we get the following:

```swift
enum CodingType {
    case local
    case remote
}

extension UserInfo {
    // Named keySets (plural) to match the Self.keySets lookup used later on.
    static let keySets: [CodingType: [PartialKeyPath<UserInfo>: String]] = [
        // for .plist stored locally
        .local: [
            \Self.userName: "USER_NAME",
            \Self.email: "EMAIL",
            \Self.age: "AGE"
        ],
        // for JSON received from server
        .remote: [
            \Self.userName: "user_name",
            \Self.email: "email_address",
            \Self.age: "user_age"
        ]
    ]
}
```

To make the code inside the init(from:) and encode(to:) methods aware of the data format being decoded/encoded, we may use the userInfo dictionary of the Decoder/Encoder objects:

```swift
extension CodingUserInfoKey {
    // CodingUserInfoKey(rawValue:) is failable, so this key is an Optional.
    static let codingTypeKey = CodingUserInfoKey(rawValue: "CodingType")
}

// ...

let providedType: CodingType = .remote // or .local

let decoder = JSONDecoder()
if let typeKey = CodingUserInfoKey.codingTypeKey {
    decoder.userInfo[typeKey] = providedType
}
```

When decoding/encoding, we just read the value stored under the CodingUserInfoKey.codingTypeKey key and pick the corresponding set of coding keys.

Let’s bring all of the above together and see what our code looks like:

```swift
enum CodingError: Error {
    case keyNotFound
    case keySetNotFound
}

extension UserInfo: Codable {
    static func codingKey(for keyPath: PartialKeyPath<Self>,
                          in keySet: [PartialKeyPath<Self>: String]) throws -> StringKey {
        guard let value = keySet[keyPath],
              let codingKey = StringKey(stringValue: value) else {
            throw CodingError.keyNotFound
        }
        return codingKey
    }

    static func keySet(from userInfo: [CodingUserInfoKey: Any]) throws -> [PartialKeyPath<Self>: String] {
        guard let typeKey = CodingUserInfoKey.codingTypeKey,
              let type = userInfo[typeKey] as? CodingType,
              let keySet = Self.keySets[type] else {
            throw CodingError.keySetNotFound
        }
        return keySet
    }

    init(from decoder: Decoder) throws {
        let keySet = try Self.keySet(from: decoder.userInfo)
        let container = try decoder.container(keyedBy: StringKey.self)
        self.userName = try container.decode(String.self, forKey: try Self.codingKey(for: \Self.userName, in: keySet))
        self.email = try container.decode(String.self, forKey: try Self.codingKey(for: \Self.email, in: keySet))
        self.age = try container.decode(Int.self, forKey: try Self.codingKey(for: \Self.age, in: keySet))
    }

    func encode(to encoder: Encoder) throws {
        let keySet = try Self.keySet(from: encoder.userInfo)
        var container = encoder.container(keyedBy: StringKey.self)
        try container.encode(userName, forKey: try Self.codingKey(for: \Self.userName, in: keySet))
        try container.encode(email, forKey: try Self.codingKey(for: \Self.email, in: keySet))
        try container.encode(age, forKey: try Self.codingKey(for: \Self.age, in: keySet))
    }
}
```

Note that we’ve added two static helper methods: codingKey(for:in:) and keySet(from:). They make the code of init(from:) and encode(to:) clearer and more straightforward.
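A minimal end-to-end sketch of this version: decode the server JSON with CodingType.remote, then re-encode the same value with CodingType.local. It repeats the declarations above in condensed form so that it compiles on its own. The stored properties of the UserInfo struct are assumed (the article does not show its definition), and JSON stands in for the plist format to keep the example short:

```swift
import Foundation

enum CodingType { case local, remote }
enum CodingError: Error { case keyNotFound, keySetNotFound }

struct StringKey: CodingKey {
    let stringValue: String
    let intValue: Int?
    init?(stringValue: String) { self.stringValue = stringValue; self.intValue = nil }
    init?(intValue: Int) { self.stringValue = "\(intValue)"; self.intValue = intValue }
}

extension CodingUserInfoKey {
    static let codingTypeKey = CodingUserInfoKey(rawValue: "CodingType")
}

// Assumed stored properties; the article does not show the struct itself.
struct UserInfo: Equatable {
    let userName: String
    let email: String
    let age: Int
}

extension UserInfo: Codable {
    static let keySets: [CodingType: [PartialKeyPath<UserInfo>: String]] = [
        .local: [\UserInfo.userName: "USER_NAME", \UserInfo.email: "EMAIL", \UserInfo.age: "AGE"],
        .remote: [\UserInfo.userName: "user_name", \UserInfo.email: "email_address", \UserInfo.age: "user_age"],
    ]

    static func codingKey(for keyPath: PartialKeyPath<UserInfo>,
                          in keySet: [PartialKeyPath<UserInfo>: String]) throws -> StringKey {
        guard let value = keySet[keyPath], let key = StringKey(stringValue: value) else {
            throw CodingError.keyNotFound
        }
        return key
    }

    static func keySet(from userInfo: [CodingUserInfoKey: Any]) throws -> [PartialKeyPath<UserInfo>: String] {
        guard let typeKey = CodingUserInfoKey.codingTypeKey,
              let type = userInfo[typeKey] as? CodingType,
              let keySet = keySets[type] else { throw CodingError.keySetNotFound }
        return keySet
    }

    init(from decoder: Decoder) throws {
        let keySet = try Self.keySet(from: decoder.userInfo)
        let container = try decoder.container(keyedBy: StringKey.self)
        userName = try container.decode(String.self, forKey: Self.codingKey(for: \UserInfo.userName, in: keySet))
        email = try container.decode(String.self, forKey: Self.codingKey(for: \UserInfo.email, in: keySet))
        age = try container.decode(Int.self, forKey: Self.codingKey(for: \UserInfo.age, in: keySet))
    }

    func encode(to encoder: Encoder) throws {
        let keySet = try Self.keySet(from: encoder.userInfo)
        var container = encoder.container(keyedBy: StringKey.self)
        try container.encode(userName, forKey: Self.codingKey(for: \UserInfo.userName, in: keySet))
        try container.encode(email, forKey: Self.codingKey(for: \UserInfo.email, in: keySet))
        try container.encode(age, forKey: Self.codingKey(for: \UserInfo.age, in: keySet))
    }
}

// Usage: decode with the remote key set, re-encode with the local one.
let serverJSON = #"{"user_name":"ann","email_address":"ann@example.com","user_age":30}"#
let decoder = JSONDecoder()
decoder.userInfo[CodingUserInfoKey.codingTypeKey!] = CodingType.remote
let user = try! decoder.decode(UserInfo.self, from: Data(serverJSON.utf8))

let encoder = JSONEncoder()
encoder.userInfo[CodingUserInfoKey.codingTypeKey!] = CodingType.local
let localJSON = String(decoding: try! encoder.encode(user), as: UTF8.self)
print(localJSON) // keys: USER_NAME, EMAIL, AGE
```

If the coder's userInfo carries no CodingType, keySet(from:) throws CodingError.keySetNotFound, so a forgotten configuration fails loudly instead of silently using the wrong keys.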

Generalizing the solution

Let’s improve the key-set solution we’ve developed to make it easier and faster to apply. The solution has some boilerplate code for transforming a KeyPath of the type into a coding key and for choosing the particular key set. Also, the encoding/decoding code repeats the call to codingKey(for:in:), which complicates the init(from:) and encode(to:) implementations and can be reduced.

First, we’ll extract the helper code into helper objects. Structures are enough for this purpose:

```swift
private protocol CodingKeyContainable {
    associatedtype Coding
    var keySet: [PartialKeyPath<Coding>: String] { get }
}

private extension CodingKeyContainable {
    func codingKey(for keyPath: PartialKeyPath<Coding>) throws -> StringKey {
        guard let value = keySet[keyPath], let codingKey = StringKey(stringValue: value) else {
            throw CodingError.keyNotFound
        }
        return codingKey
    }
}

// `Root` is the type being coded; it is not named `CodingType` to avoid shadowing the CodingType enum.
struct DecodingContainer<Root>: CodingKeyContainable {
    fileprivate let keySet: [PartialKeyPath<Root>: String]
    fileprivate let container: KeyedDecodingContainer<StringKey>

    func decodeValue<PropertyType: Decodable>(for keyPath: KeyPath<Root, PropertyType>) throws -> PropertyType {
        try container.decode(PropertyType.self, forKey: try codingKey(for: keyPath as PartialKeyPath<Root>))
    }
}

struct EncodingContainer<Root>: CodingKeyContainable {
    fileprivate let keySet: [PartialKeyPath<Root>: String]
    fileprivate var container: KeyedEncodingContainer<StringKey>

    mutating func encodeValue<PropertyType: Encodable>(_ value: PropertyType, for keyPath: KeyPath<Root, PropertyType>) throws {
        try container.encode(value, forKey: try codingKey(for: keyPath as PartialKeyPath<Root>))
    }
}
```

The CodingKeyContainable protocol just lets us reuse the coding-key lookup code in both structures.

Now let’s define our own Decodable/Encodable-like protocols. This will allow us to hide all the boilerplate code for getting the proper key set and creating the keyed container inside the default implementations of init(from:) and encode(to:). At the same time, it will let us simplify decoding/encoding concrete values by using the DecodingContainer and EncodingContainer structures defined above. Another important point is that, through these protocols, every codable type for which we want to use the approach with variational keys will be required to implement:

static var keySets: [CodingType: [PartialKeyPath<Self>: String]] { get }

Here are our protocols:

```swift
// MARK: - Key Sets

protocol VariableCodingKeys {
    static var keySets: [CodingType: [PartialKeyPath<Self>: String]] { get }
}

private extension VariableCodingKeys {
    static func keySet(from userInfo: [CodingUserInfoKey: Any]) throws -> [PartialKeyPath<Self>: String] {
        guard let typeKey = CodingUserInfoKey.codingTypeKey,
              let type = userInfo[typeKey] as? CodingType,
              let keySet = Self.keySets[type] else {
            throw CodingError.keySetNotFound
        }
        return keySet
    }
}

// MARK: - VariablyDecodable

protocol VariablyDecodable: VariableCodingKeys, Decodable {
    init(from decodingContainer: DecodingContainer<Self>) throws
}

extension VariablyDecodable {
    init(from decoder: Decoder) throws {
        let keySet = try Self.keySet(from: decoder.userInfo)
        let container = try decoder.container(keyedBy: StringKey.self)
        let decodingContainer = DecodingContainer<Self>(keySet: keySet, container: container)
        try self.init(from: decodingContainer)
    }
}

// MARK: - VariablyEncodable

protocol VariablyEncodable: VariableCodingKeys, Encodable {
    func encode(to encodingContainer: inout EncodingContainer<Self>) throws
}

extension VariablyEncodable {
    func encode(to encoder: Encoder) throws {
        let keySet = try Self.keySet(from: encoder.userInfo)
        let container = encoder.container(keyedBy: StringKey.self)
        var encodingContainer = EncodingContainer<Self>(keySet: keySet, container: container)
        try self.encode(to: &encodingContainer)
    }
}

typealias VariablyCodable = VariablyDecodable & VariablyEncodable
```

Let’s now rewrite our UserInfo structure to make it conform to the newly defined VariablyCodable protocol:

```swift
extension UserInfo: VariablyCodable {
    static let keySets: [CodingType: [PartialKeyPath<UserInfo>: String]] = [
        // for .plist stored locally
        .local: [
            \Self.userName: "USER_NAME",
            \Self.email: "EMAIL",
            \Self.age: "AGE"
        ],
        // for JSON received from server
        .remote: [
            \Self.userName: "user_name",
            \Self.email: "email_address",
            \Self.age: "user_age"
        ]
    ]

    init(from decodingContainer: DecodingContainer<UserInfo>) throws {
        self.userName = try decodingContainer.decodeValue(for: \.userName)
        self.email = try decodingContainer.decodeValue(for: \.email)
        self.age = try decodingContainer.decodeValue(for: \.age)
    }

    func encode(to encodingContainer: inout EncodingContainer<UserInfo>) throws {
        try encodingContainer.encodeValue(userName, for: \.userName)
        try encodingContainer.encodeValue(email, for: \.email)
        try encodingContainer.encodeValue(age, for: \.age)
    }
}
```

This is where the true power of protocols shows. By conforming to VariablyCodable, our type automatically becomes Codable. Moreover, without any boilerplate code, we can now use different sets of coding keys.
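For reference, here is a condensed, single-file sketch of the whole VariablyCodable setup that compiles on its own. It simplifies the structure above in one assumed way: the CodingKeyContainable helper is folded into a static codingKey(for:in:) method on VariableCodingKeys, and access modifiers are dropped. The UserInfo stored properties are again assumed:

```swift
import Foundation

enum CodingType { case local, remote }
enum CodingError: Error { case keyNotFound, keySetNotFound }

struct StringKey: CodingKey {
    let stringValue: String
    let intValue: Int?
    init?(stringValue: String) { self.stringValue = stringValue; self.intValue = nil }
    init?(intValue: Int) { self.stringValue = "\(intValue)"; self.intValue = intValue }
}

extension CodingUserInfoKey { static let codingTypeKey = CodingUserInfoKey(rawValue: "CodingType") }

protocol VariableCodingKeys { static var keySets: [CodingType: [PartialKeyPath<Self>: String]] { get } }

extension VariableCodingKeys {
    // Picks the key set for the CodingType stored in the coder's userInfo.
    static func keySet(from userInfo: [CodingUserInfoKey: Any]) throws -> [PartialKeyPath<Self>: String] {
        guard let typeKey = CodingUserInfoKey.codingTypeKey,
              let type = userInfo[typeKey] as? CodingType,
              let keySet = keySets[type] else { throw CodingError.keySetNotFound }
        return keySet
    }

    // Stands in for the CodingKeyContainable helper in this condensed version.
    static func codingKey(for keyPath: PartialKeyPath<Self>,
                          in keySet: [PartialKeyPath<Self>: String]) throws -> StringKey {
        guard let name = keySet[keyPath], let key = StringKey(stringValue: name) else { throw CodingError.keyNotFound }
        return key
    }
}

struct DecodingContainer<Root: VariableCodingKeys> {
    let keySet: [PartialKeyPath<Root>: String]
    let container: KeyedDecodingContainer<StringKey>
    func decodeValue<Value: Decodable>(for keyPath: KeyPath<Root, Value>) throws -> Value {
        try container.decode(Value.self, forKey: Root.codingKey(for: keyPath, in: keySet))
    }
}

struct EncodingContainer<Root: VariableCodingKeys> {
    let keySet: [PartialKeyPath<Root>: String]
    var container: KeyedEncodingContainer<StringKey>
    mutating func encodeValue<Value: Encodable>(_ value: Value, for keyPath: KeyPath<Root, Value>) throws {
        try container.encode(value, forKey: Root.codingKey(for: keyPath, in: keySet))
    }
}

protocol VariablyDecodable: VariableCodingKeys, Decodable {
    init(from decodingContainer: DecodingContainer<Self>) throws
}

extension VariablyDecodable {
    // Default Decodable witness: builds the container, then defers to the type.
    init(from decoder: Decoder) throws {
        let keySet = try Self.keySet(from: decoder.userInfo)
        let container = try decoder.container(keyedBy: StringKey.self)
        try self.init(from: DecodingContainer<Self>(keySet: keySet, container: container))
    }
}

protocol VariablyEncodable: VariableCodingKeys, Encodable {
    func encode(to encodingContainer: inout EncodingContainer<Self>) throws
}

extension VariablyEncodable {
    // Default Encodable witness: builds the container, then defers to the type.
    func encode(to encoder: Encoder) throws {
        let keySet = try Self.keySet(from: encoder.userInfo)
        var container = EncodingContainer<Self>(keySet: keySet, container: encoder.container(keyedBy: StringKey.self))
        try encode(to: &container)
    }
}

typealias VariablyCodable = VariablyDecodable & VariablyEncodable

// Assumed stored properties of UserInfo.
struct UserInfo: Equatable { let userName: String; let email: String; let age: Int }

extension UserInfo: VariablyCodable {
    static let keySets: [CodingType: [PartialKeyPath<UserInfo>: String]] = [
        .local: [\UserInfo.userName: "USER_NAME", \UserInfo.email: "EMAIL", \UserInfo.age: "AGE"],
        .remote: [\UserInfo.userName: "user_name", \UserInfo.email: "email_address", \UserInfo.age: "user_age"],
    ]
    init(from decodingContainer: DecodingContainer<UserInfo>) throws {
        userName = try decodingContainer.decodeValue(for: \.userName)
        email = try decodingContainer.decodeValue(for: \.email)
        age = try decodingContainer.decodeValue(for: \.age)
    }
    func encode(to encodingContainer: inout EncodingContainer<UserInfo>) throws {
        try encodingContainer.encodeValue(userName, for: \.userName)
        try encodingContainer.encodeValue(email, for: \.email)
        try encodingContainer.encodeValue(age, for: \.age)
    }
}
```

Decoding the server JSON with a JSONDecoder whose userInfo holds CodingType.remote, and re-encoding the result with CodingType.local, produces a payload keyed by USER_NAME, EMAIL, and AGE; without a CodingType in userInfo, decoding throws CodingError.keySetNotFound.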

Going back to the advantages of the Codable protocol we outlined at the beginning of the article, let’s check which ones VariablyCodable has.

1) Type safety. Nothing changes here compared to the Codable protocol. VariablyCodable still decodes into concrete property types; the only dynamic cast is reading the CodingType value from userInfo.

2) Simplicity of usage. Here we don’t have the declarative option of an enum describing keys and values; we always have to implement init(from:) and encode(to:). However, the minimal implementation of these methods is so simple and straightforward (each line just decodes/encodes a single property) that it is comparable to defining a CodingKeys enum for the Codable protocol.

3) Extensibility. Here we have more options than with the Codable protocol. In addition to the flexibility achieved by implementing init(from:) and encode(to:), the keySets map provides an additional layer of abstraction over coding keys.

Summary

We defined two approaches to extending the behavior of the Codable protocol in Swift so that different sets of keys can be used for different data formats. The first approach, with separate types for each data format, works well for simple cases with two data formats and a single data flow direction (e.g. decoding only). However, if your app has multiple data sources and encodes/decodes arbitrarily between those formats, consider the approach with the VariablyCodable protocol. While it needs more code up front, once implemented it gives you great flexibility and extensibility in encoding/decoding data for any type you need.

Leveraging AI to improve VIN recognition - how to accelerate and automate operations in the insurance industry

Here we share our approach to automatic Vehicle Identification Number (VIN) detection and recognition using Deep Neural Networks. Our solution is robust in many aspects, such as accuracy, generalization, and speed, and can be integrated into many areas of the insurance and automotive sectors.

Our goal is to provide a solution that lets the user take a picture with a mobile app and then reads the VIN present in the image. Despite all the similarities to other OCR applications and the features they share, the differences are colossal.

Our objective is to create a reliable solution, and to do so, we jumped directly into the analysis of real domain images.

VINs are located in many places on a car and its parts. The most readable are those printed on side doors and windshields. Here we focus on VINs from windshields.

OCR doesn’t seem to be rocket science now, does it? Well, after some initial attempts, we realized we’re not able to use any available commercial tools with success, and the problem was much harder than we had thought.

How do you like this example from KerasOCR?

Apart from details such as the fact that VINs never contain the letters ‘I’, ‘O’, and ‘Q’, we have to deal with very specific distortions, proportions, and fonts.
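Because the VIN alphabet is restricted, recognizer output can be sanity-checked cheaply. The sketch below is one possible post-processing step, assumed rather than taken from our pipeline: it uppercases the text, maps the never-valid letters I, O, and Q to the digits they are most likely confused with, and rejects anything that is not exactly 17 allowed characters. The forbiddenLetterFix table and normalizeVIN(_:) are hypothetical names:

```swift
// Characters allowed in a VIN: digits and capital letters except I, O and Q.
let vinAlphabet: Set<Character> = Set("0123456789ABCDEFGHJKLMNPRSTUVWXYZ")

// Hypothetical correction table: letters that never occur in a VIN are
// mapped to the digits they are most often confused with.
let forbiddenLetterFix: [Character: Character] = ["I": "1", "O": "0", "Q": "0"]

/// Returns a cleaned 17-character VIN, or nil if the text cannot be one.
func normalizeVIN(_ raw: String) -> String? {
    let candidate = raw.uppercased()
        .filter { !$0.isWhitespace }
        .map { forbiddenLetterFix[$0] ?? $0 }
    guard candidate.count == 17, candidate.allSatisfy({ vinAlphabet.contains($0) }) else {
        return nil
    }
    return String(candidate)
}
```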

Initial approach

How can we approach the problem? The most straightforward answer is to divide the system into two components:

- VIN detection: cropping the characters from the big image,

- VIN recognition: recognizing the cropped characters.

In an ideal world, images like this one:

would be processed this way:

Once we have an intuition of what the problem looks like, we can start solving it. Needless to say, there is no ready-made “VIN reading” solution available on the internet, so we need to design every component of our solution from scratch. Let’s introduce the most important stages we’ve created, namely:

- VIN detection

- VIN recognition

- Training data generation

- Pipeline

VIN detection

Our VIN detection solution is based on two ideas:

- Encouraging users to take a photo with the VIN in the center of the picture; we make that easier by showing a bounding box.

- Using Character Region Awareness for Text Detection (CRAFT), a neural network, to mark the VIN precisely and make detection less error-prone.

CRAFT

The CRAFT architecture predicts text areas in the image by simultaneously estimating, for each pixel, the probability that it is the center of a character (the region score) and the probability that it is the center of the space between adjacent characters (the affinity score). For the details, we refer to the original paper.
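To make the two score maps more tangible, here is a simplified sketch of the post-processing described in the CRAFT paper: pixels whose region or affinity score exceeds a threshold are marked as text, and connected groups of such pixels become word boxes. The threshold defaults are assumed values, and the sketch returns axis-aligned boxes rather than the rotated rectangles of the original post-processing:

```swift
/// Axis-aligned pixel bounds of one detected text component.
struct Box: Equatable {
    var minX: Int, minY: Int, maxX: Int, maxY: Int
}

/// A pixel counts as text when its region score or its affinity score exceeds
/// a threshold; 4-connected text pixels are grouped into one bounding box each.
func textBoxes(region: [[Double]], affinity: [[Double]],
               regionThreshold: Double = 0.7, affinityThreshold: Double = 0.4) -> [Box] {
    let height = region.count, width = region.first?.count ?? 0
    var isText = (0..<height).map { y in
        (0..<width).map { x in region[y][x] > regionThreshold || affinity[y][x] > affinityThreshold }
    }
    var boxes: [Box] = []
    for y in 0..<height {
        for x in 0..<width where isText[y][x] {
            // Depth-first flood fill; visited pixels are cleared so each is used once.
            var box = Box(minX: x, minY: y, maxX: x, maxY: y)
            var stack = [(x, y)]
            isText[y][x] = false
            while let point = stack.popLast() {
                let (px, py) = point
                box.minX = min(box.minX, px); box.maxX = max(box.maxX, px)
                box.minY = min(box.minY, py); box.maxY = max(box.maxY, py)
                for (nx, ny) in [(px + 1, py), (px - 1, py), (px, py + 1), (px, py - 1)]
                where nx >= 0 && ny >= 0 && nx < width && ny < height && isText[ny][nx] {
                    isText[ny][nx] = false
                    stack.append((nx, ny))
                }
            }
            boxes.append(box)
        }
    }
    return boxes
}
```

The affinity map is what glues neighbouring characters into one word: two strong region peaks separated by a pixel with a high affinity score end up in the same box.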

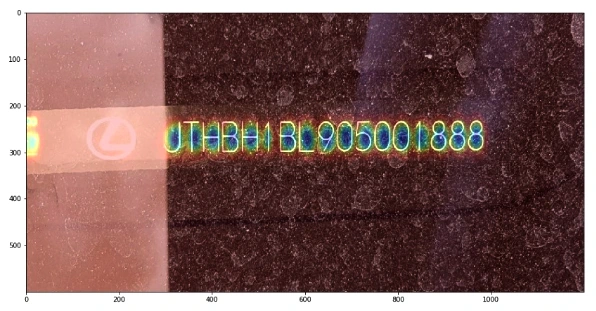

The image below illustrates the operation of the network:

Before the actual recognition, it sounded like a good idea to simplify the input image so that it contains all the needed information and no redundant pixels. Therefore, we wanted to crop the characters’ area from the rest of the background.

We intended to encourage a user to take a photo with a good VIN size, angle, and perspective.

Our goal was to be prepared to read VINs from any source, e.g. side doors. After many tests, we think the best idea is to send the area inside the bounding box seen by the user and then try to cut it out more precisely using VIN detection. Therefore, our VIN detector can be interpreted more as a VIN refiner.

It is worth noting that CRAFT performed exceptionally well in this role.

Once the text is cropped, we need to map it onto an axis-aligned rectangle. There are dozens of design decisions to make here, such as the choice of transform (e.g. affine), the target rectangle, and the resampling method used before text recognition.
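The simplest of these mappings fits in a few lines. Assuming the detector returns three corners of the text region (top-left, top-right, bottom-left), the affine map below sends that parallelogram onto a width x height rectangle and samples the source image with nearest-neighbour lookup. A general quadrilateral would need a perspective (homography) transform instead, and bilinear resampling usually looks smoother; rectify(_:topLeft:topRight:bottomLeft:width:height:) and Point are hypothetical names:

```swift
struct Point { var x: Double; var y: Double }

/// Resamples a grayscale image so that the parallelogram spanned by topLeft,
/// topRight and bottomLeft becomes a width x height rectangle. Each output pixel
/// centre (u, v in [0, 1]) is mapped back with the affine formula
/// src = topLeft + u * (topRight - topLeft) + v * (bottomLeft - topLeft).
func rectify(_ image: [[UInt8]], topLeft: Point, topRight: Point, bottomLeft: Point,
             width: Int, height: Int) -> [[UInt8]] {
    let srcH = image.count, srcW = image.first?.count ?? 0
    guard srcH > 0, srcW > 0 else { return [] }
    return (0..<height).map { y in
        (0..<width).map { x -> UInt8 in
            let u = (Double(x) + 0.5) / Double(width)
            let v = (Double(y) + 0.5) / Double(height)
            let sx = topLeft.x + u * (topRight.x - topLeft.x) + v * (bottomLeft.x - topLeft.x)
            let sy = topLeft.y + u * (topRight.y - topLeft.y) + v * (bottomLeft.y - topLeft.y)
            // Nearest-neighbour lookup, clamped to the source image.
            let ix = min(max(Int(sx.rounded(.down)), 0), srcW - 1)
            let iy = min(max(Int(sy.rounded(.down)), 0), srcH - 1)
            return image[iy][ix]
        }
    }
}
```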

Having ideally cropped characters makes recognition easier. But it doesn’t mean that our task is completed.

VIN recognition

Accurate recognition is the winning condition for this project. First, we want to focus on images that are easy to recognize, without too much noise, blur, or distortion.

Sequential models

SOTA models tend to be sequential models that recognize entire sequences of characters (words, in popular benchmarks) without individual character annotations. It is indeed a very efficient approach, but it ignores the fact that collecting character bounding boxes for synthetic images isn’t that expensive.

As a result, the supposedly most important advantage of sequential models lost its value for us. They have other advantages, but are those worth watching out for all the traps that come with them?

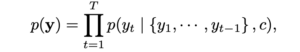

First of all, training an attention-based model is very hard in this case.

As you can see, the target characters we want to recognize depend on the history of previous predictions. Handling this would require a massive training dataset or careful tuning, so we skipped this option.

As an alternative, we can use Connectionist Temporal Classification (CTC) models, which, by contrast, predict labels independently of each other.
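For illustration, here is greedy (best-path) CTC decoding, the simplest way to turn per-time-step label probabilities into a string: take the most likely label at each step, merge consecutive repeats, and drop the blank label. It is a generic sketch, not necessarily the decoder we used (beam search is a common alternative); the blank index and the alphabet layout are assumptions:

```swift
/// Greedy (best-path) CTC decoding.
/// - probabilities: one row per time step, one column per label.
/// - alphabet: characters indexed like the columns; alphabet[blank] is never emitted.
/// - blank: index of the CTC blank label (assumed to be 0 here).
func ctcGreedyDecode(_ probabilities: [[Double]], alphabet: [Character], blank: Int = 0) -> String {
    var result = ""
    var previous: Int? = nil
    for step in probabilities {
        // Most probable label at this time step.
        guard let best = step.indices.max(by: { step[$0] < step[$1] }) else { continue }
        // Emit only non-blank labels that differ from the previous step.
        if best != blank && best != previous {
            result.append(alphabet[best])
        }
        previous = best
    }
    return result
}
```

The blank is what allows genuine double characters: the path A, A, blank, A decodes to "AA", while A, A, A decodes to a single "A".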

More importantly, we didn’t stop at this approach. We utilized one more algorithm with different characteristics and behavior.

YOLO

You Only Look Once (YOLO) is a very efficient architecture commonly used for fast and accurate object detection and recognition. Treating a character as an object and recognizing it after detection seemed like an approach definitely worth trying in this project. It does not suffer from the history dependence described above, and there are some interesting tweaks that allow even more precise recognition in our case. Last but not least, it gives us more control over the system, as much of the responsibility is shifted away from the neural network.

However, VIN recognition requires a specific YOLO design. We used YOLO v2 because later architectures add complexity in areas that do not address our problem.

- We use a 960 x 32 px input (so images cropped by CRAFT are usually resized to meet this condition). Then we divide the input into 30 grid cells (each of size 32 x 32 px),

- For each grid cell, we run predictions in predefined anchor boxes,

- We use anchor boxes of 8 different widths, but the height always remains the same and equals 100% of the image height.

The results showed that our approach is effective in recognizing individual VIN characters.
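Decoding one prediction under this layout can be sketched as follows. It applies the YOLO v2 parameterization (the centre offset passes through a sigmoid so it stays inside its grid cell, and the width scales an anchor by exp(tw)) along the x axis only, since every anchor already spans the full image height. The eight anchor widths are hypothetical placeholders; the article does not list them:

```swift
import Foundation

let cellSize = 32.0      // 960 px input width / 30 grid cells
let inputHeight = 32.0   // every anchor spans the full image height

// Hypothetical anchor widths in pixels; only their count (8) comes from the text.
let anchorWidths: [Double] = [8, 12, 16, 20, 24, 28, 32, 40]

struct CharBox: Equatable {
    let centerX: Double
    let width: Double
    let height: Double
}

func sigmoid(_ t: Double) -> Double { 1 / (1 + exp(-t)) }

/// YOLO v2-style decoding along x: sigmoid(tx) keeps the centre inside cell `cell`,
/// exp(tw) rescales the chosen anchor width; the height is fixed.
func decodeBox(cell: Int, anchor: Int, tx: Double, tw: Double) -> CharBox {
    CharBox(centerX: (Double(cell) + sigmoid(tx)) * cellSize,
            width: anchorWidths[anchor] * exp(tw),
            height: inputHeight)
}
```

For example, decodeBox(cell: 0, anchor: 2, tx: 0, tw: 0) describes a 16 px wide, 32 px tall box centred at x = 16, the middle of the first cell.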

Metrics

Appropriate metrics are crucial in machine learning-based solutions, as they drive your decisions and the dynamics of the project. Fortunately, we think simple accuracy fulfills the demands of a precise system, so we can skip research in this area.

We just need to remember one fact: a typical VIN contains 17 characters, and missing just one of them is enough to classify the prediction as wrong. Throughout the work, we measure the Character Error Rate (CER) to better understand progress. A CER of 5% (5% of characters wrong) may result in accuracy lower than 75%.
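The gap between CER and full-VIN accuracy is easy to quantify. The sketch below computes CER as the total Levenshtein distance divided by the total number of reference characters and, under the simplifying assumption that character errors are independent, the expected share of fully correct 17-character VINs, (1 - CER)^17. The function names are illustrative:

```swift
import Foundation

/// Levenshtein distance: minimum number of insertions, deletions and substitutions.
func editDistance(_ s: String, _ t: String) -> Int {
    let a = Array(s), b = Array(t)
    var previous = Array(0...b.count)  // distances from "" to each prefix of b
    for i in stride(from: 1, through: a.count, by: 1) {
        var current = [i] + Array(repeating: 0, count: b.count)
        for j in stride(from: 1, through: b.count, by: 1) {
            let cost = a[i - 1] == b[j - 1] ? 0 : 1
            current[j] = min(previous[j] + 1,          // deletion
                             current[j - 1] + 1,       // insertion
                             previous[j - 1] + cost)   // substitution or match
        }
        previous = current
    }
    return previous[b.count]
}

/// CER over a test set: total edits divided by total reference characters.
func characterErrorRate(predictions: [String], references: [String]) -> Double {
    let edits = zip(predictions, references).reduce(0) { $0 + editDistance($1.0, $1.1) }
    let characters = references.reduce(0) { $0 + $1.count }
    return characters == 0 ? 0 : Double(edits) / Double(characters)
}

/// Expected full-sequence accuracy if each character is wrong independently with probability `cer`.
func expectedSequenceAccuracy(cer: Double, length: Int = 17) -> Double {
    pow(1 - cer, Double(length))
}
```

With a CER of 5%, the independence model gives 0.95^17 ≈ 0.42, far below 75%. If errors cluster in a few hard images, accuracy at the same CER can be higher, so treat this as a rough reference point.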

About the models tuning

It's easy to notice that OCR benchmark solutions have an effective capacity far exceeding the complexity of our task, while at the same time being too general. That alone emphasizes the danger of overfitting and directs our focus to generalization ability.

It is important to distinguish hyperparameter tuning from architectural design. Apart from ensuring that the information flow through the network extracts the correct features, we do not dive into extensive hyperparameter tuning.

Training data generation

We skipped one important topic: the training data.

We often support our models with artificial data, with reasonable success, but this time the benefit is huge. Cropped synthesized texts are so similar to real images that we believe we can base our models on them and only fine-tune them carefully with real data.

Data generation is a laborious, tricky job. Some say your model is only as good as your data. It feels like carving: any mistake can ruin your material. Worse, you may spot it only after training.

We have some pretty handy tools in our arsenal, but they are, again, too general. Therefore, we had to introduce some modifications.

In fact, we had to generate more than 2M images. Obviously, there is neither a point nor a possibility in using all of them. Training datasets are often crafted to resemble real VINs in a very iterative process, day after day, font after font. Modeling a single General Motors font took us at least a few attempts.

But finally, we got there. No more T’s as 1’s, V’s as U’s, and Z’s as 2’s!

We used many tools. All have advantages and weaknesses, and we are very demanding. We need to satisfy a few conditions:

- We need good variance in backgrounds. It’s rather hard to collect a satisfying number of windshield backgrounds, so we’d like to reuse the ones we have; at the same time, we don’t want to overfit to them, so we need other sources too. Artificial backgrounds may not be realistic enough, so we also use real images from outside our domain,

- Fonts, perhaps the most important ingredient of the mix, have to resemble the creative VIN fonts (who designed them!?) and must not interfere with each other. At the same time, the number of car manufacturers far exceeds what we can collect, so we have to be open to unknown shapes.
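The label side of such a generator is simple to sketch. The example below draws random 17-character labels from the VIN alphabet and pairs each with a font and a background, using a seeded generator (SplitMix64) so that a dataset can be regenerated exactly. Rendering the text onto the background and applying distortions are outside the sketch, and the font and background identifiers are purely hypothetical:

```swift
/// SplitMix64: a tiny seeded generator so synthetic datasets are reproducible.
struct SplitMix64: RandomNumberGenerator {
    var state: UInt64
    mutating func next() -> UInt64 {
        state &+= 0x9E3779B97F4A7C15
        var z = state
        z = (z ^ (z >> 30)) &* 0xBF58476D1CE4E5B9
        z = (z ^ (z >> 27)) &* 0x94D049BB133111EB
        return z ^ (z >> 31)
    }
}

// VIN alphabet: digits and capital letters except I, O and Q.
let vinAlphabet = Array("0123456789ABCDEFGHJKLMNPRSTUVWXYZ")

/// One sample to be rendered later by a (not shown) text renderer.
struct SyntheticSample {
    let text: String        // ground-truth label
    let font: String        // hypothetical font identifier
    let background: String  // hypothetical background image identifier
}

func makeSamples(count: Int, fonts: [String], backgrounds: [String], seed: UInt64) -> [SyntheticSample] {
    precondition(!fonts.isEmpty && !backgrounds.isEmpty, "need at least one font and one background")
    var rng = SplitMix64(state: seed)
    return (0..<count).map { _ -> SyntheticSample in
        let text = String((0..<17).map { _ in vinAlphabet.randomElement(using: &rng)! })
        return SyntheticSample(text: text,
                               font: fonts.randomElement(using: &rng)!,
                               background: backgrounds.randomElement(using: &rng)!)
    }
}
```

Fixing the seed per dataset version makes it possible to rebuild exactly the images a model was trained on when a generation bug is discovered after training.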

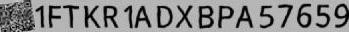

The images below are examples of VIN data generated for the recognizers:

Putting everything together

Connecting so many components into a working pipeline without messing it up is an art in itself.

Moreover, there are many traps here. Mind these images:

VIN labels often consist of separate strings or two rows, with logos and bar codes present near the caption.

Over 90% end-to-end accuracy with our VIN reader

Running in under one second on a mid-range CPU alone, our solution achieves over 90% end-to-end accuracy.

This result depends on the problem definition and the test dataset. For example, we have to decide what to do with images that are impossible for a human to read. Nevertheless, regardless of the dataset, we approached human-level performance, which is a typical reference level in Deep Learning projects.

We also managed to develop a mobile offline version of our system with similar inference accuracy but a bit slower processing time.

App intelligence

While working on tools designed for business, we can’t forget about the real use-case flow. With the pipeline above, we have no protection against photos that are impossible to read, even though we want it. Such situations often happen due to:

- incorrect camera focus,

- light flashes,

- dirty surfaces,

- damaged VIN plate.

Usually, we can prevent these situations by asking users to change the angle or retake a photo, before we send it to the further processing engines.
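One common, cheap check for the first item on the list, incorrect focus, is the variance of the Laplacian: sharp images have strong second-derivative responses at edges, blurry ones do not. The sketch below is offered as an example of such a gate, not as the heuristic we actually use; the threshold of 100 is an assumption that would need calibrating for the camera resolution and the grayscale range:

```swift
/// Variance of the 4-neighbour Laplacian response over the image interior.
/// Low values suggest a blurry (out-of-focus) photo.
func laplacianVariance(_ gray: [[Double]]) -> Double {
    let h = gray.count, w = gray.first?.count ?? 0
    guard h >= 3, w >= 3 else { return 0 }
    var responses: [Double] = []
    for y in 1..<(h - 1) {
        for x in 1..<(w - 1) {
            // Kernel [[0, 1, 0], [1, -4, 1], [0, 1, 0]]
            let up = gray[y - 1][x], down = gray[y + 1][x]
            let left = gray[y][x - 1], right = gray[y][x + 1]
            responses.append(up + down + left + right - 4 * gray[y][x])
        }
    }
    let mean = responses.reduce(0, +) / Double(responses.count)
    return responses.reduce(0) { $0 + ($1 - mean) * ($1 - mean) } / Double(responses.count)
}

/// Hypothetical gate: ask the user to retake the photo below an assumed threshold.
func shouldAskForRetake(_ gray: [[Double]], threshold: Double = 100) -> Bool {
    laplacianVariance(gray) < threshold
}
```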

However, classifying these distortions is a pretty complex task! Nevertheless, we implemented a bunch of heuristics and classifiers that allow us to ensure that the VIN, if recognized, is correct. For the details, you will have to wait for the next post.

Last but not least, we’d like to mention that, as usual, there are many additional components built around our VIN Reader. Apart from the mobile application with offline on-device recognition, we’ve implemented a remote backend, pipelines, tools for tagging, semi-supervised labeling, synthesizers, and more.
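As an example of a cheap consistency heuristic (not necessarily one of ours), VINs issued for the North American market carry a check digit in position 9: letters are transliterated to numbers, each position is multiplied by a fixed weight, and the sum modulo 11 must equal the check digit, with 10 written as X. VINs from other markets are not required to follow this rule, so a failed check can only lower confidence rather than prove an error:

```swift
/// Transliteration values for VIN letters (I, O and Q never occur).
let letterValues: [Character: Int] = [
    "A": 1, "B": 2, "C": 3, "D": 4, "E": 5, "F": 6, "G": 7, "H": 8,
    "J": 1, "K": 2, "L": 3, "M": 4, "N": 5, "P": 7, "R": 9,
    "S": 2, "T": 3, "U": 4, "V": 5, "W": 6, "X": 7, "Y": 8, "Z": 9,
]

/// Position weights; position 9 holds the check digit itself and has weight 0.
let weights = [8, 7, 6, 5, 4, 3, 2, 10, 0, 9, 8, 7, 6, 5, 4, 3, 2]

/// True if the 17-character VIN carries a consistent North American check digit.
func hasValidCheckDigit(_ vin: String) -> Bool {
    let chars = Array(vin)
    guard chars.count == 17 else { return false }
    var sum = 0
    for (char, weight) in zip(chars, weights) {
        // Digits count as themselves, letters via the transliteration table.
        guard let value = char.wholeNumberValue ?? letterValues[char] else { return false }
        sum += value * weight
    }
    let remainder = sum % 11
    let expected: Character = remainder == 10 ? "X" : Character(String(remainder))
    return chars[8] == expected
}
```

For example, 1M8GDM9AXKP042788 sums to 351, and 351 mod 11 = 10, so its ninth character must be X, which it is.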

https://youtu.be/oACNXmlUgtY

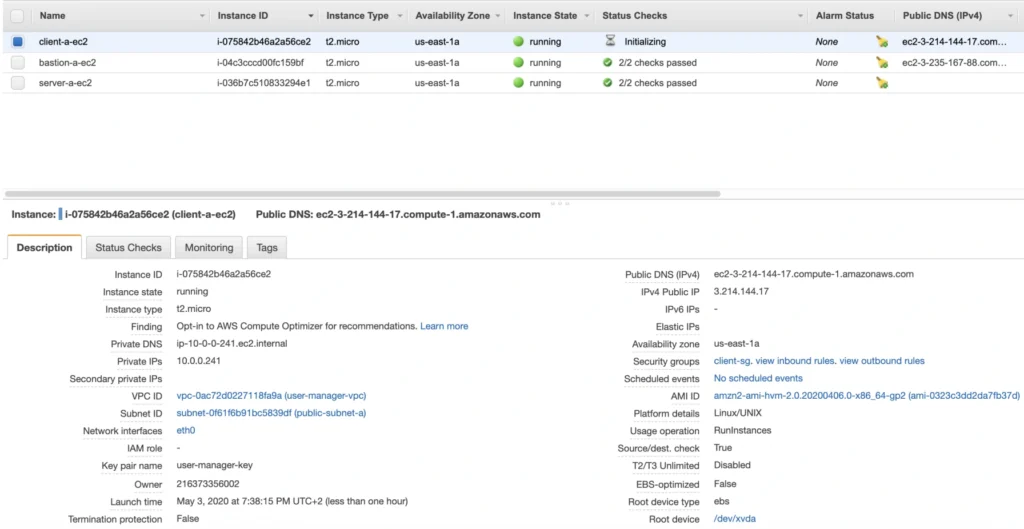

The path towards enterprise level AWS infrastructure – EC2, AMI, Bastion Host, RDS

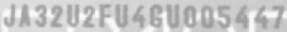

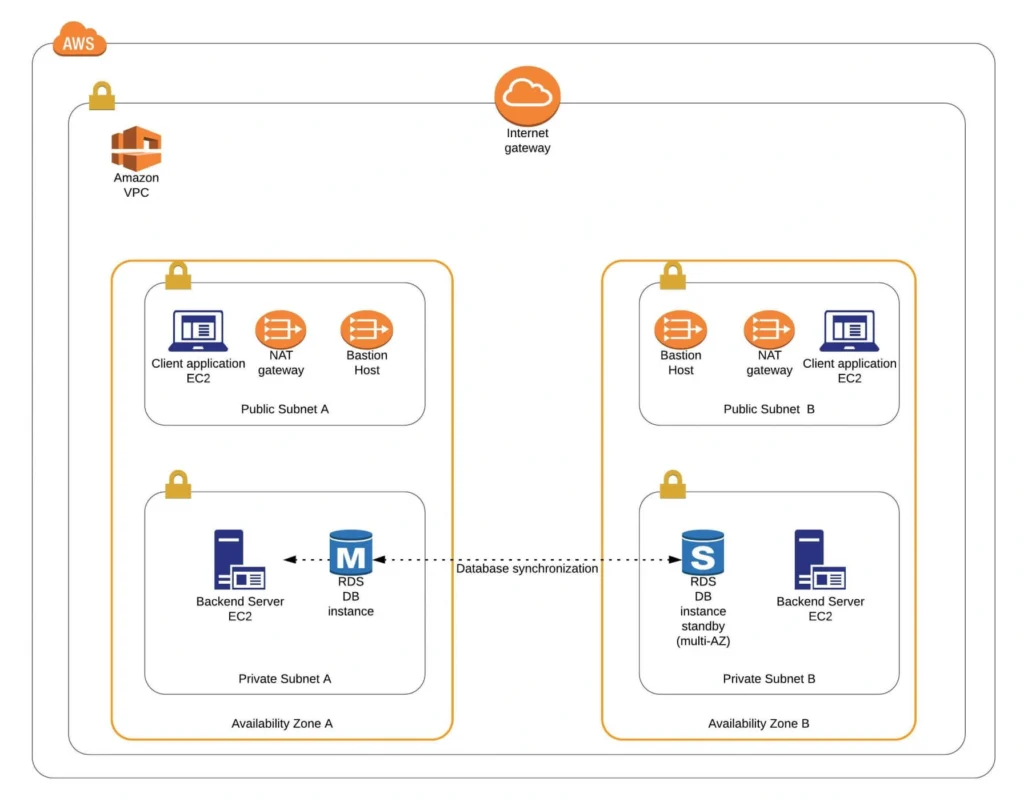

Let’s pick up the thread of our journey into the AWS Cloud and keep discovering the intricacies of the cloud computing universe while building a highly available, secure, and fault-tolerant cloud system on the AWS platform. This article is the second in a mini-series that walks you through the process of creating an enterprise-level AWS infrastructure and explains the concepts and components of the Amazon Web Services platform. In the previous part, we scaffolded our infrastructure; specifically, we created the VPC, subnets, and NAT gateways, and configured network routing. If you have missed it, we strongly encourage you to read it first. In this article, we build on top of the work done in the previous part, and this time we focus on the configuration of EC2 instances, the creation of AMI images, setting up Bastion Hosts, and the RDS database.

The whole series comprises:

- Part 1 - Architecture Scaffolding (VPC, Subnets, Elastic IP, NAT).

- Part 2 - The Path Towards Enterprise Level AWS Infrastructure – EC2, AMI, Bastion Host, RDS.

- Part 3 - Load Balancing and Application Deployment (Elastic Load Balancer).

Infrastructure overview

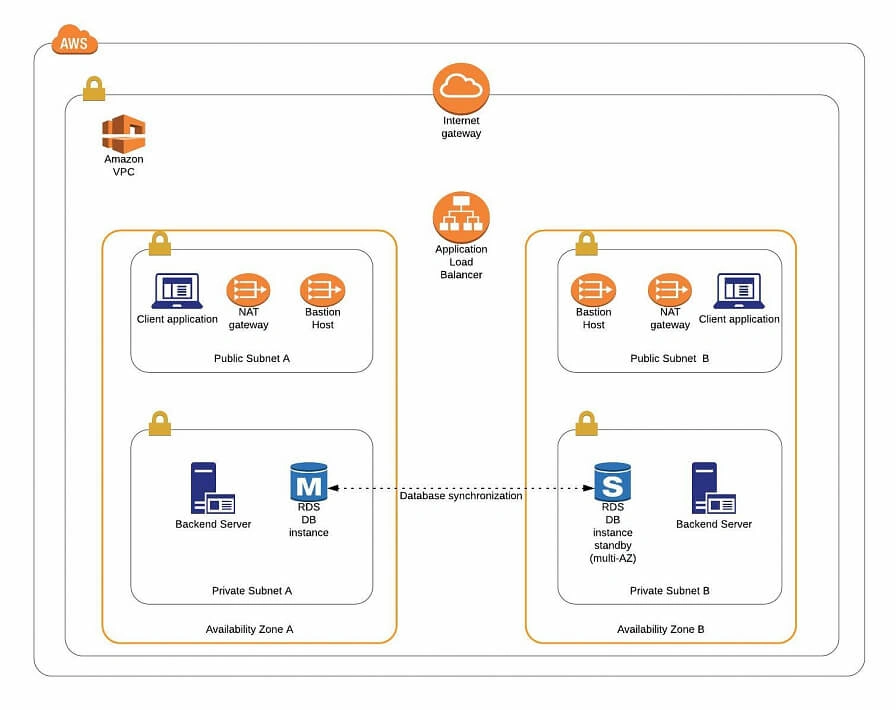

The diagram below presents our designed infrastructure. If you would like to learn more about the design choices behind it, please read Part 1 - Architecture Scaffolding (VPC, Subnets, Elastic IP, NAT). We have already created a VPC, subnets, and NAT Gateways, and configured network routing. In this part of the series, we focus on the configuration of the required EC2 instances, the creation of AMI images, setting up Bastion Hosts, and the RDS database.

AWS theory

1. Elastic Compute Cloud (EC2)

Amazon Elastic Compute Cloud (EC2) is an Amazon service that allows you to manage your virtual computing environments, known as EC2 instances, on AWS. An EC2 instance is simply a virtual machine provisioned with a certain amount of resources, such as CPU, memory, storage, and network capacity, launched in a selected AWS region and availability zone. The elasticity of EC2 means that you can easily scale resources up or down, depending on your needs and requirements. The network security of your instances can be managed with security groups, by configuring the protocols, ports, and IP addresses your instances can communicate with.

There are five basic types of EC2 instances to choose from, depending on your system requirements:

- General Purpose,

- Compute Optimized,

- Memory Optimized,

- Accelerated Computing,

- Storage Optimized.

In our infrastructure, we will use only general-purpose instances, but if you would like to learn more about different features of instance types, see the AWS documentation.

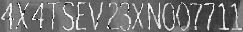

Some EC2 instance types come with instance store volumes for temporary data, which is lost whenever the instance is stopped or terminated. Instances can also use Elastic Block Store (EBS), which provides persistent storage volumes working independently of the EC2 instance itself.

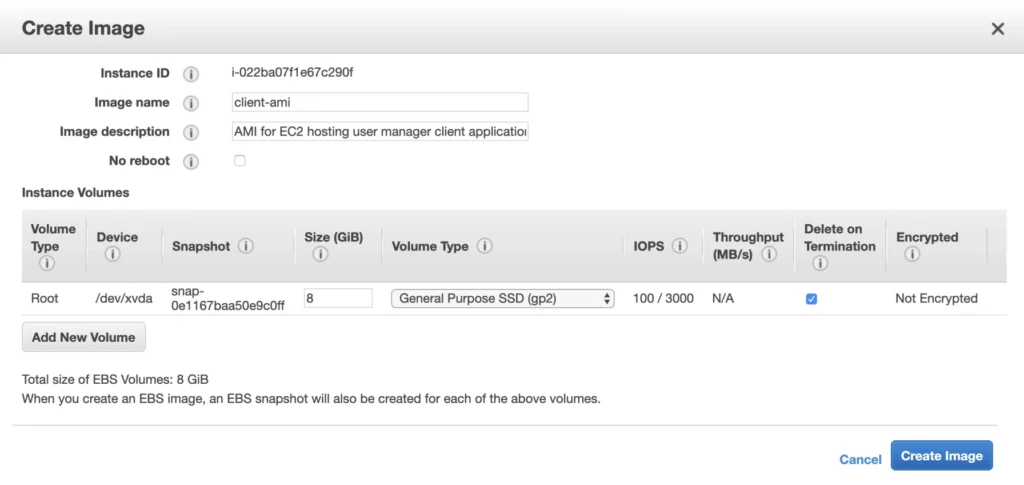

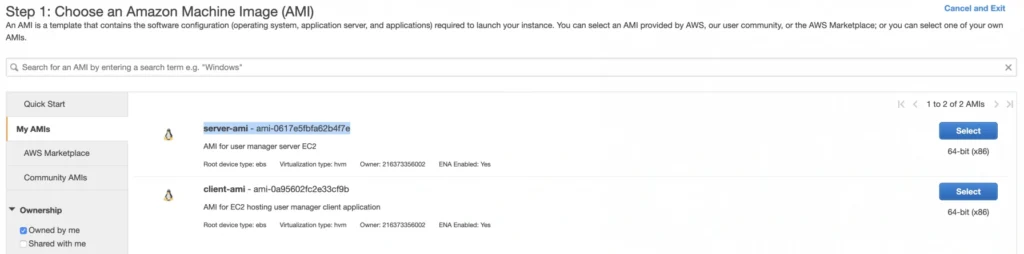

2. Amazon Machine Images (AMI)

Amazon uses templates of software configurations, known as Amazon Machine Images (AMIs), to facilitate the creation of custom EC2 instances. AMIs are image templates containing software such as operating systems, runtime environments, and actual applications, which are used to launch EC2 instances. This allows us to preconfigure our AMIs and dynamically launch new instances from them instead of always setting up VM environments from scratch. Amazon provides some ready-to-use AMIs on the AWS Marketplace, which you can extend, customize, and save as your own (which we will do soon).

3. Key pair

Amazon provides a secure EC2 login mechanism based on public-key cryptography. At instance boot time, the public key is added as an entry in ~/.ssh/authorized_keys, and you can then securely access your instance over SSH using the private key instead of a password. Together, the public and private keys are known as a key pair.

4. IAM role

IAM stands for Identity and Access Management, and it defines authentication and authorization rules for your system. IAM roles are IAM identities with a set of permissions that control access to AWS services; they can be assumed by users, applications, or AWS services. For example, if your application needs access to a specific AWS service such as an S3 bucket, its EC2 instance needs a role with the appropriate permissions assigned.

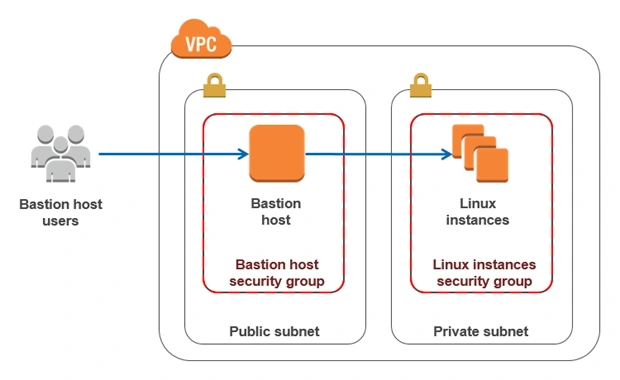

5. Bastion Host

A Bastion Host is a special-purpose instance placed in a public subnet and used to allow access to instances located in private subnets while providing an increased level of security. It acts as a bridge between users and private instances, and because it is exposed to potential attacks, it is hardened to withstand penetration attempts. The private instances expose their SSH ports only to the bastion host and allow no direct connections. What is more, bastion hosts may be configured to log all activity, providing additional security auditing.

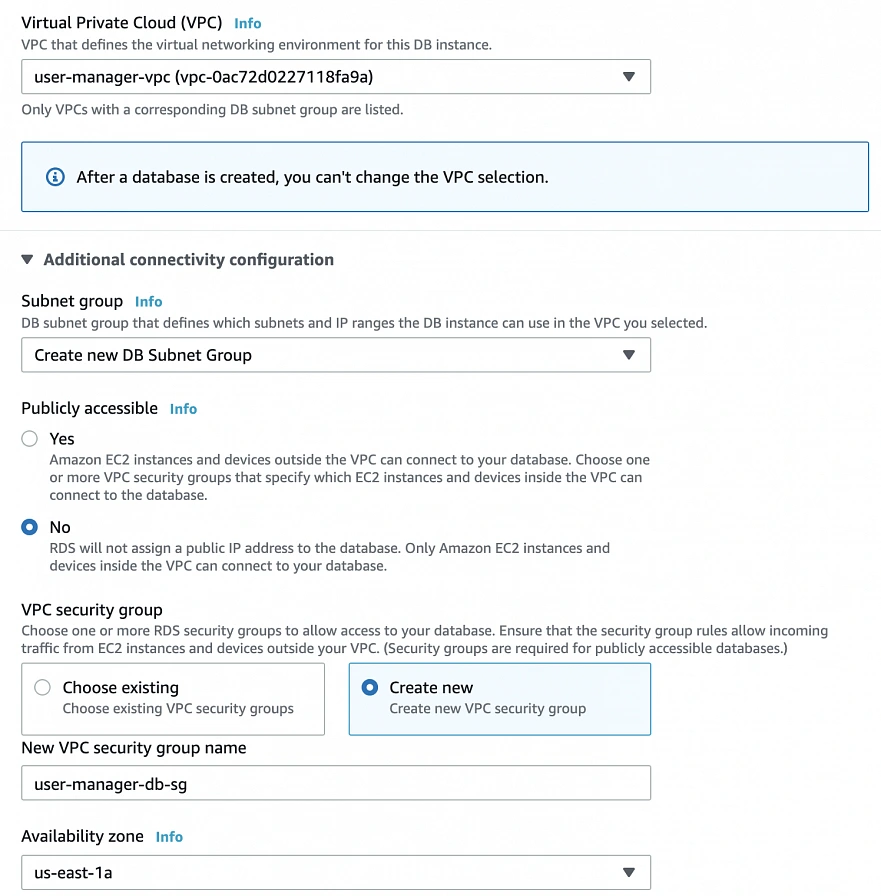

6. Amazon Relational Database Service (RDS)

6.1. RDS

RDS is an Amazon service for managing relational databases in the cloud. As of now (23.04.2020), it supports six database engines, namely Amazon Aurora, PostgreSQL, MySQL, MariaDB, Oracle Database, and SQL Server. It is easy to configure and scale, and it provides high availability and reliability through the Read Replicas and Multi-AZ Deployment features.

6.2. Read replicas

RDS Read Replicas are asynchronously updated, read-only copies of a primary (“master”) DB instance. They can handle queries that do not change any data, thus relieving the master node of that workload.
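From the application's point of view, using read replicas usually means routing statements: writes go to the primary endpoint, read-only queries to a replica endpoint. The sketch below shows a deliberately naive router (it treats any statement starting with SELECT as a read and rotates over replicas round-robin); the endpoint names are hypothetical. Because replication is asynchronous, a read sent to a replica may not yet see a write that was just committed on the primary:

```swift
import Foundation

/// Naive read/write splitter: statements starting with SELECT go to a read
/// replica (round-robin); everything else goes to the primary instance.
struct DatabaseRouter {
    let primary: String
    let replicas: [String]
    private var nextReplica = 0

    init(primary: String, replicas: [String]) {
        self.primary = primary
        self.replicas = replicas
    }

    mutating func endpoint(forSQL sql: String) -> String {
        let isRead = sql.trimmingCharacters(in: .whitespacesAndNewlines).uppercased().hasPrefix("SELECT")
        guard isRead, !replicas.isEmpty else { return primary }
        // Return the current replica, then advance the round-robin index.
        defer { nextReplica = (nextReplica + 1) % replicas.count }
        return replicas[nextReplica]
    }
}
```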

6.3. Multi-AZ deployment

AWS Multi-AZ Deployment is an option that makes RDS create a secondary, standby instance in a different AZ and synchronously replicate the data from the master node to it. Both master and standby instances run on their own physically independent infrastructure, and only the primary instance can be accessed directly. The standby replica serves as a failover if the master fails, without changing the endpoint of your DB.

This reduces downtime of your system and makes it easier to perform version upgrades or create backup snapshots, as they can be done on the spare instance. Multi-AZ is usually used only on the master instance. However, it is also possible to create read replicas with Multi-AZ deployment, which results in a resilient disaster recovery infrastructure.

Practice

We have two applications that we would like to run on our AWS infrastructure. One is a Java 11 Spring Boot application, so the EC2 instance hosting it needs Java 11 installed. The second one is a React.js frontend application, which requires a virtual machine with a Node.js environment. As the first step, we are going to set up a Bastion Host, which will allow us to SSH into our instances. Then, we will launch and configure those two EC2 instances manually in the first availability zone. Later on, we will create AMIs based on those instances and use them to create EC2 instances in the second availability zone.

1. Availability Zone A

1.1. Bastion Host

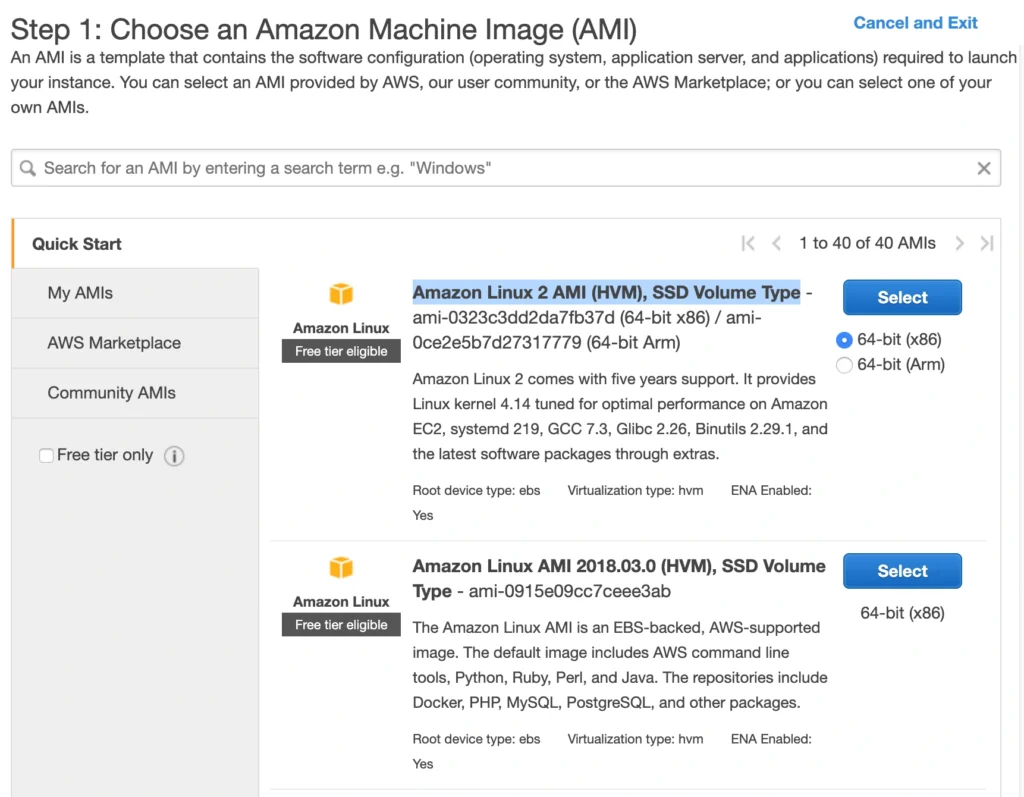

A Bastion Host is nothing more than a special-purpose EC2 instance. Hence, to create a Bastion Host, go to the AWS Management Console and search for the EC2 service. Then click the Launch Instance button, and you will be taken to the EC2 launch wizard. The first step is selecting an AMI for your instance. You can filter AMIs and select one based on your preferences. In this article, we will use the Amazon Linux 2 AMI (HVM), SSD Volume Type image.

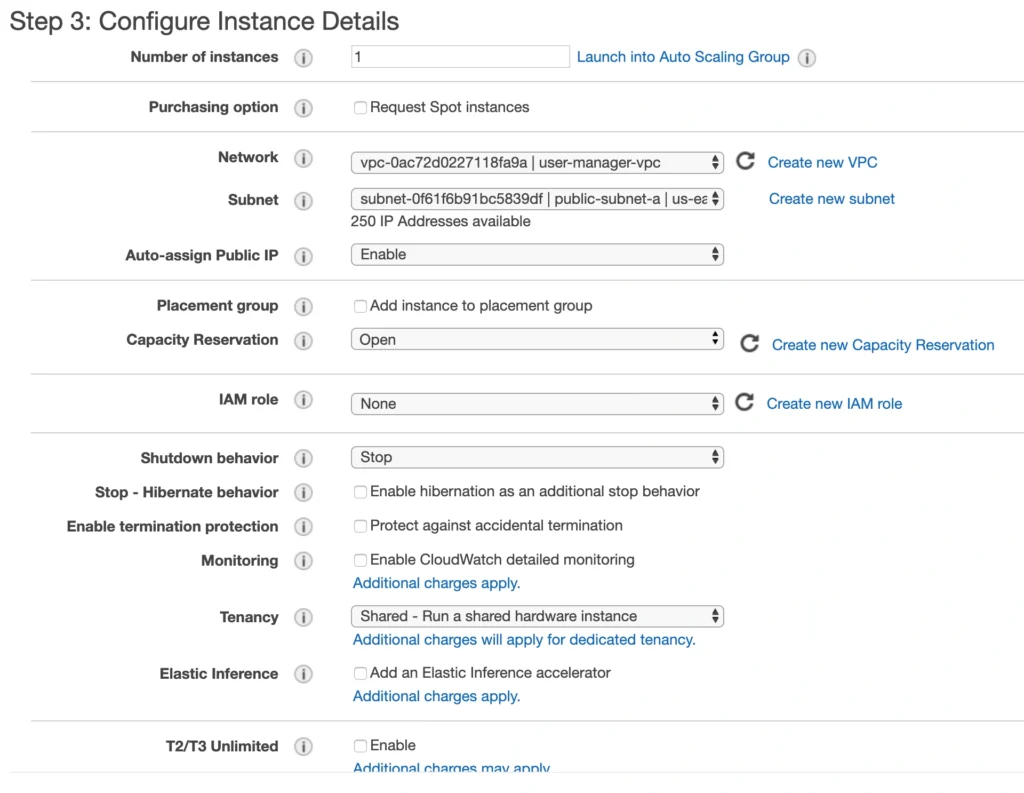

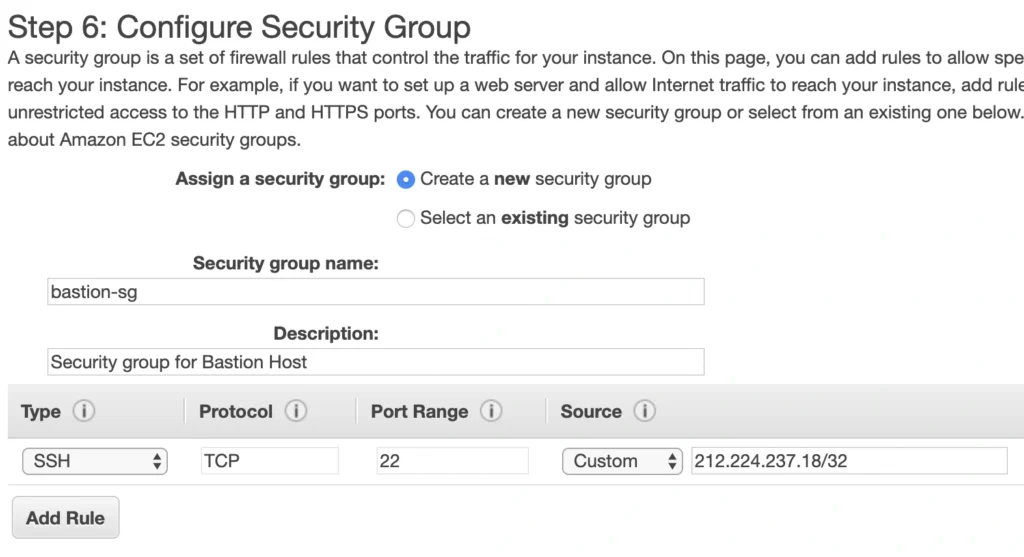

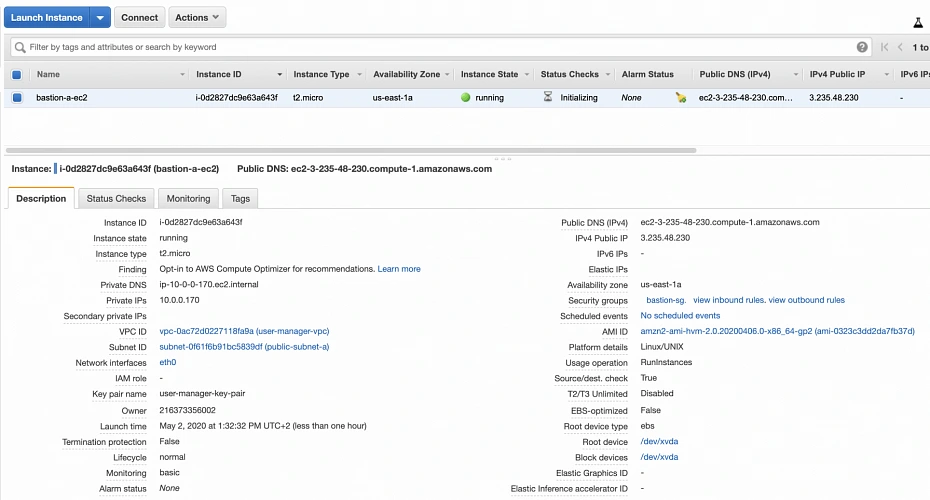

On the next screen, we need to choose an instance type. Here, I am sticking with the AWS Free Tier, so I will go with the general-purpose t2.micro type. Click Next: Configure Instance Details. Here, we can define the number of instances, network settings, IAM configuration, etc. For now, let’s start with 1 instance; we will work on the scalability of our infrastructure later. In the Network section, choose your previously created VPC and public-subnet-a, and enable Public IP auto-assignment. We do not need to specify any IAM role, as we are not going to use any other AWS services.

Click Next. Here you can see that the wizard automatically configures your instance with an 8 GB EBS volume, which is enough for us. Click Next again. Now, we can add tags to make our instance easier to recognize. Let’s add a Name tag bastion-a-ec2. On the next screen, we can configure a security group for our instance. Create a new security group and name it bastion-sg.

You can see that there is already one predefined rule exposing our instance to SSH sessions from 0.0.0.0/0 (anywhere). Change it here to allow connections only from your IP address. The important thing to note is that in a production environment you would never expose your instances to the whole world; instead, you would whitelist the IP addresses of the employees allowed to connect to your instance.
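If you prefer scripting to the console wizard, the same restriction can be expressed with the AWS CLI. This is a minimal sketch, assuming AWS CLI v2 is installed and configured for your account; the VPC ID, security group ID and IP address are placeholders, and `run` is a hypothetical helper that only prints each command when `DRY_RUN` is set. Unlike the wizard, a security group created from the CLI starts with no inbound rules, so the only SSH rule is the one you add.

```bash
#!/usr/bin/env bash
# Hypothetical helper: print the command when DRY_RUN is set, otherwise execute it.
run() {
  if [ -n "${DRY_RUN:-}" ]; then
    printf '%s\n' "$*"
  else
    "$@"
  fi
}

# Create the bastion security group inside the given VPC (placeholder ID).
create_bastion_sg() {
  local vpc_id="$1"
  run aws ec2 create-security-group \
    --group-name bastion-sg \
    --description "SSH access to the bastion host" \
    --vpc-id "$vpc_id"
}

# Allow inbound SSH (TCP 22) from a single IPv4 address, written as a /32 CIDR.
allow_ssh_from_ip() {
  local sg_id="$1" my_ip="$2"
  run aws ec2 authorize-security-group-ingress \
    --group-id "$sg_id" \
    --protocol tcp \
    --port 22 \
    --cidr "${my_ip}/32"
}
```

For example, `allow_ssh_from_ip sg-0123456789abcdef0 "$(curl -s https://checkip.amazonaws.com)"` opens port 22 only to the public address your machine currently uses; prefixing the call with `DRY_RUN=1` previews the command without touching your account.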

In the next step, you can review your EC2 configuration and launch it. The last action is creating a key pair. This is important because we need this key pair to SSH to our instance. Name the key pair, e.g., user-manager-key-pair, download the private key, and store it locally on your machine. That's it: AWS will take some time, but in the end, your EC2 instance will be launched.
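The key pair and the launch itself can also be scripted. This is a minimal sketch, assuming a configured AWS CLI v2; the AMI, subnet and security group IDs are placeholders, and `run` is a hypothetical helper that only prints each command when `DRY_RUN` is set. The `chmod 400` step matters because ssh refuses to use a private key file that other users can read.

```bash
#!/usr/bin/env bash
# Hypothetical helper: print the command when DRY_RUN is set, otherwise execute it.
run() {
  if [ -n "${DRY_RUN:-}" ]; then
    printf '%s\n' "$*"
  else
    "$@"
  fi
}

KEY_NAME="user-manager-key-pair"

# Create the key pair and save the private key locally. Not dry-run aware:
# this writes the real key material to ./user-manager-key-pair.pem.
create_key_pair() {
  aws ec2 create-key-pair --key-name "$KEY_NAME" \
    --query 'KeyMaterial' --output text > "${KEY_NAME}.pem"
  secure_key_file "${KEY_NAME}.pem"
}

# Make the private key readable by its owner only.
secure_key_file() {
  chmod 400 "$1"
}

# Launch a single t2.micro bastion in the given public subnet with a public IPv4 address.
launch_bastion() {
  local ami_id="$1" subnet_id="$2" sg_id="$3"
  run aws ec2 run-instances \
    --image-id "$ami_id" \
    --instance-type t2.micro \
    --count 1 \
    --key-name "$KEY_NAME" \
    --subnet-id "$subnet_id" \
    --security-group-ids "$sg_id" \
    --associate-public-ip-address \
    --tag-specifications 'ResourceType=instance,Tags=[{Key=Name,Value=bastion-a-ec2}]'
}
```

The Amazon Linux 2 AMI ID differs per region; one way to look it up is the public SSM parameter with `aws ssm get-parameters --names /aws/service/ami-amazon-linux-latest/amzn2-ami-hvm-x86_64-gp2 --query 'Parameters[0].Value' --output text`.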

In the instance description section, you can find the public IP address of your instance. We can use it to SSH to the EC2 instance. That is where we will need the previously generated (and hopefully locally saved) private key (*.pem file). That's it, our instance is ready for now. However, in production, it would be a good idea to harden the security of the Bastion Host even more. If you would like to learn more about that, we recommend this article.

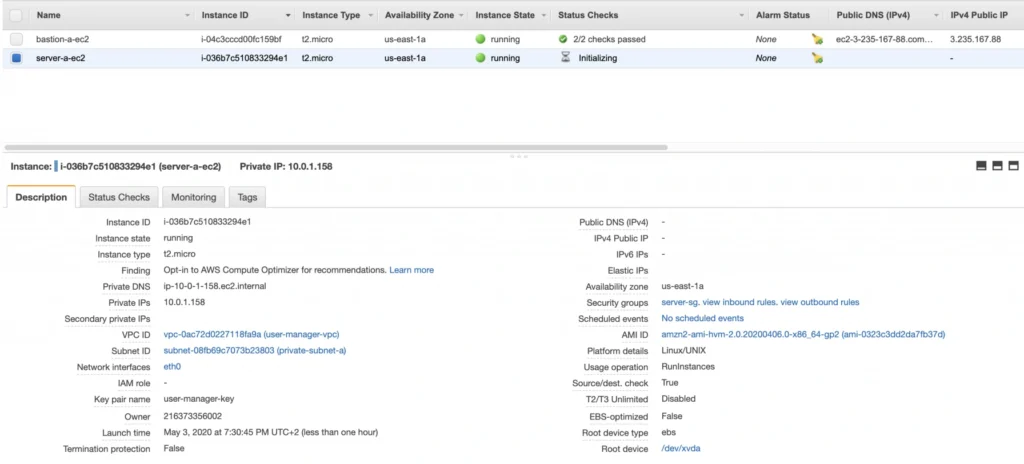

1.2. Backend server EC2

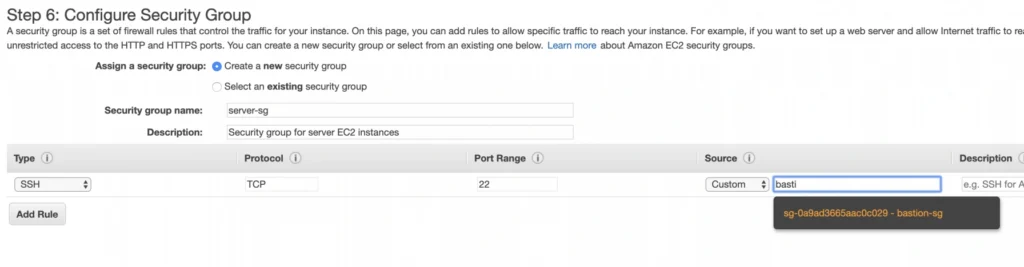

Now, let’s create an instance for the backend server. Click Launch Instance again, choose the same AMI as before, place the instance in your user-manager-vpc and private-subnet-a, and do not enable public IP auto-assignment this time. Move through the next steps as before and add a server-a-ec2 Name tag. In the security group configuration, create a new security group named server-sg and modify its settings to allow incoming SSH traffic only from the bastion-sg security group.
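In CLI terms, the difference from the bastion is that the SSH rule references another security group instead of a CIDR, and no public IP is requested. A minimal sketch, assuming a configured AWS CLI v2, placeholder IDs, and that private-subnet-a keeps the default setting of not auto-assigning public IPv4 addresses; `run` is a hypothetical helper that only prints each command when `DRY_RUN` is set.

```bash
#!/usr/bin/env bash
# Hypothetical helper: print the command when DRY_RUN is set, otherwise execute it.
run() {
  if [ -n "${DRY_RUN:-}" ]; then
    printf '%s\n' "$*"
  else
    "$@"
  fi
}

# Allow inbound SSH only from instances that belong to the source security group.
allow_ssh_from_group() {
  local target_sg_id="$1" source_sg_id="$2"
  run aws ec2 authorize-security-group-ingress \
    --group-id "$target_sg_id" \
    --protocol tcp \
    --port 22 \
    --source-group "$source_sg_id"
}

# Launch server-a-ec2 in the given private subnet; no public IP is requested.
launch_server_a() {
  local ami_id="$1" subnet_id="$2" sg_id="$3"
  run aws ec2 run-instances \
    --image-id "$ami_id" \
    --instance-type t2.micro \
    --count 1 \
    --key-name user-manager-key-pair \
    --subnet-id "$subnet_id" \
    --security-group-ids "$sg_id" \
    --tag-specifications 'ResourceType=instance,Tags=[{Key=Name,Value=server-a-ec2}]'
}
```

Here `target_sg_id` would be the ID of server-sg and `source_sg_id` the ID of bastion-sg.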

Launch the instance. You can create a new key pair or use the previously created one (for simplicity I recommend using the same key pair for all instances). In the end, you should have your second instance up and running.

You can see that server-a-ec2 does not have a public IP address. However, we can access it through the bastion host. First, we need to add our private key to the SSH agent, and then we can SSH to our bastion host instance, adding the -A flag to the ssh command. This flag enables agent forwarding, which lets you SSH into your private instance without specifying the private key again. This is the recommended approach, as it avoids storing the private key on the bastion host instance, which could lead to a security breach.

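```bash
# Load the downloaded private key into the local SSH agent
ssh-add path-to-your-pem-file
# Connect to the bastion host with agent forwarding enabled (-A)
ssh -A -i path-to-your-pem-file ec2-user@bastion-a-ec2-instance-public-ip
```

Then, inside your bastion host, execute the command:

```bash
ssh ec2-user@server-a-ec2-instance-private-ip
```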

Now, you should be inside your server-a-ec2 private instance. Let’s install the required software on the machine by executing these commands:

```bash
# Update installed packages, then install Amazon Corretto 11 and check the version
sudo yum update -y &&
sudo yum install -y java-11-amazon-corretto &&
java --version
```

As a result, you should have Java 11 installed on your server-a-ec2 instance. You can go back to your local command prompt by executing the exit command twice.
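If you will be hopping through the bastion often, the connection details can be kept in an SSH client config instead of being retyped. The sketch below writes such a file; the host aliases, IP addresses and key path are placeholders. The `bastion-a` entry reproduces the agent-forwarding session used above, while the `server-a` entry uses OpenSSH's `ProxyJump` option (OpenSSH 7.3 and later), a different technique that tunnels through the bastion in a single command so that authentication to the private instance happens from your own machine.

```bash
#!/usr/bin/env bash
# Write an SSH client config with a bastion entry and a private-instance entry.
# Arguments: output file, bastion public IP, server private IP, private key path.
# Note: the output file is overwritten.
write_ssh_config() {
  local out="$1" bastion_ip="$2" server_ip="$3" key_file="$4"
  cat > "$out" <<EOF
Host bastion-a
    HostName ${bastion_ip}
    User ec2-user
    IdentityFile ${key_file}
    ForwardAgent yes

Host server-a
    HostName ${server_ip}
    User ec2-user
    IdentityFile ${key_file}
    ProxyJump bastion-a
EOF
  # ssh may reject config files that other users can write to.
  chmod 600 "$out"
}
```

Since the function overwrites its target, one option is to write to a separate file such as `~/.ssh/user-manager.conf` and reference it with an `Include ~/.ssh/user-manager.conf` line at the top of `~/.ssh/config`; after that, `ssh server-a` reaches the backend instance in one step.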

AMI

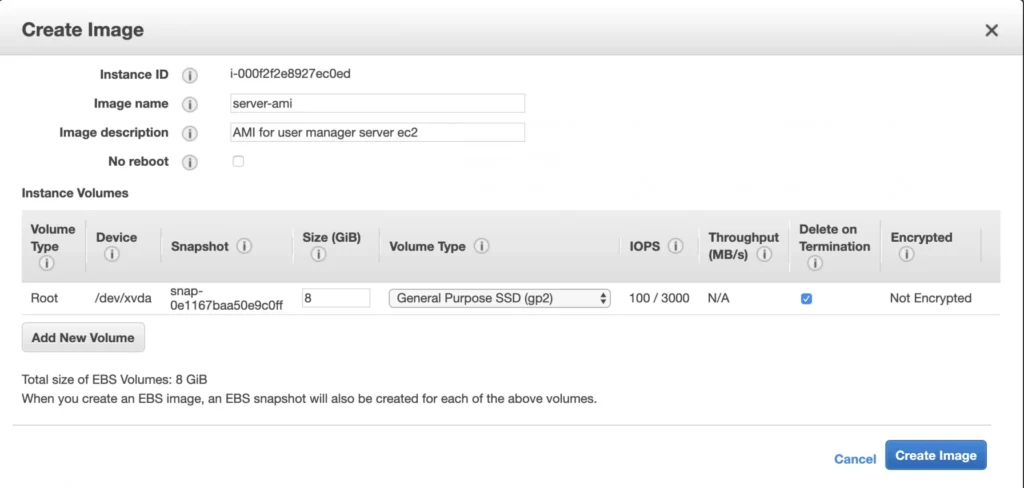

The EC2 instance for the backend server is ready for deployment. In the second availability zone, we could follow exactly the same steps. However, there is an easier way: we can create an AMI based on our pre-configured instance and use it later to create the corresponding instance in Availability Zone B. To do that, go back to the Instances menu, select your instance, and click Actions -> Image -> Create image. Name the image server-ami. Your AMI will be created, and you will be able to find it in the Images/AMIs section.
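The same image can be created from the CLI. A minimal sketch, assuming a configured AWS CLI v2 and a placeholder instance ID; `run` is a hypothetical helper that only prints each command when `DRY_RUN` is set. Note that `create-image` reboots the instance by default to capture a consistent file system; `--no-reboot` skips the reboot at the cost of that guarantee.

```bash
#!/usr/bin/env bash
# Hypothetical helper: print the command when DRY_RUN is set, otherwise execute it.
run() {
  if [ -n "${DRY_RUN:-}" ]; then
    printf '%s\n' "$*"
  else
    "$@"
  fi
}

# Create an AMI from a configured instance and print the new image ID.
create_ami() {
  local instance_id="$1" ami_name="$2"
  run aws ec2 create-image \
    --instance-id "$instance_id" \
    --name "$ami_name" \
    --query 'ImageId' \
    --output text
}

# Block until the image is available for launching instances.
wait_for_ami() {
  run aws ec2 wait image-available --image-ids "$1"
}
```

Usage: `ami_id="$(create_ami i-0123456789abcdef0 server-ami)" && wait_for_ami "$ami_id"`.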

1.3. Client application EC2

The last EC2 instance we need in Availability Zone A will host the client application. So, let’s go through the EC2 creation process once again. Launch an instance, select the same base AMI as before, select your VPC, place the instance in public-subnet-a, and enable public IP assignment. Then, add a client-a-ec2 Name tag and create a new security group, client-sg, allowing incoming SSH connections from the bastion-sg security group. That’s it, launch it.

Now, SSH to the instance through the bastion host and install the required software. First, connect to the bastion host:

```bash
ssh -A -i path-to-your-pem-file ec2-user@bastion-a-ec2-instance-public-ip
```

Then, inside your bastion host, execute the command:

```bash
ssh ec2-user@client-a-ec2-instance-private-ip
```

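Inside the client-a-ec2 command prompt, execute:

```bash
# Update packages, add the NodeSource repository for Node.js 12, install Node.js (with npm)
sudo yum update -y &&
curl -sL https://rpm.nodesource.com/setup_12.x | sudo bash - &&
sudo yum install -y nodejs &&
node -v &&
npm -v
```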

Exit the EC2 command prompt and create a new AMI based on this instance, named client-ami.

2. Availability Zone B

2.1. Bastion Host

Create the second bastion host instance following the same steps as for Availability Zone A, but this time place it in public-subnet-b, add the Name tag bastion-b-ec2, and assign it the previously created bastion-sg security group.

2.2. Backend server EC2

For the backend server EC2 instance, go again to the Launch Instance menu, and this time, instead of using Amazon’s AMI, switch to the My AMIs tab and select the previously created server-ami image. Place the instance in private-subnet-b, add a server-b-ec2 Name tag, and assign it the server-sg security group.

2.3. Client application EC2

Just as for the backend server instance, launch client-b-ec2 using your custom AMI. This time, select the client-ami image, place the instance in public-subnet-b, enable automatic public IP assignment, and choose the client-sg security group.
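Both launches from custom AMIs follow the same pattern, so they can share one function. This is a minimal sketch, assuming a configured AWS CLI v2: the image is resolved by name among AMIs owned by your account, all IDs are placeholders, and `run` is a hypothetical helper that only prints each command when `DRY_RUN` is set.

```bash
#!/usr/bin/env bash
# Hypothetical helper: print the command when DRY_RUN is set, otherwise execute it.
run() {
  if [ -n "${DRY_RUN:-}" ]; then
    printf '%s\n' "$*"
  else
    "$@"
  fi
}

# Look up one of your own AMIs (e.g. server-ami or client-ami) by its name.
ami_id_by_name() {
  run aws ec2 describe-images \
    --owners self \
    --filters "Name=name,Values=$1" \
    --query 'Images[0].ImageId' \
    --output text
}

# Launch an instance from a custom AMI.
# Arguments: AMI ID, subnet ID, security group ID, Name tag, and optionally
# "public" to request a public IPv4 address (client) instead of none (server).
launch_from_ami() {
  local ami_id="$1" subnet_id="$2" sg_id="$3" name="$4" visibility="${5:-private}"
  local -a args=(
    --image-id "$ami_id"
    --instance-type t2.micro
    --count 1
    --key-name user-manager-key-pair
    --subnet-id "$subnet_id"
    --security-group-ids "$sg_id"
    --tag-specifications "ResourceType=instance,Tags=[{Key=Name,Value=${name}}]"
  )
  if [ "$visibility" = "public" ]; then
    args+=(--associate-public-ip-address)
  fi
  run aws ec2 run-instances "${args[@]}"
}
```

For the backend, something like `launch_from_ami "$(ami_id_by_name server-ami)" <private-subnet-b-id> <server-sg-id> server-b-ec2`; for the client, the same call with client-ami, public-subnet-b, client-sg, client-b-ec2 and a trailing `public`.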

3. RDS

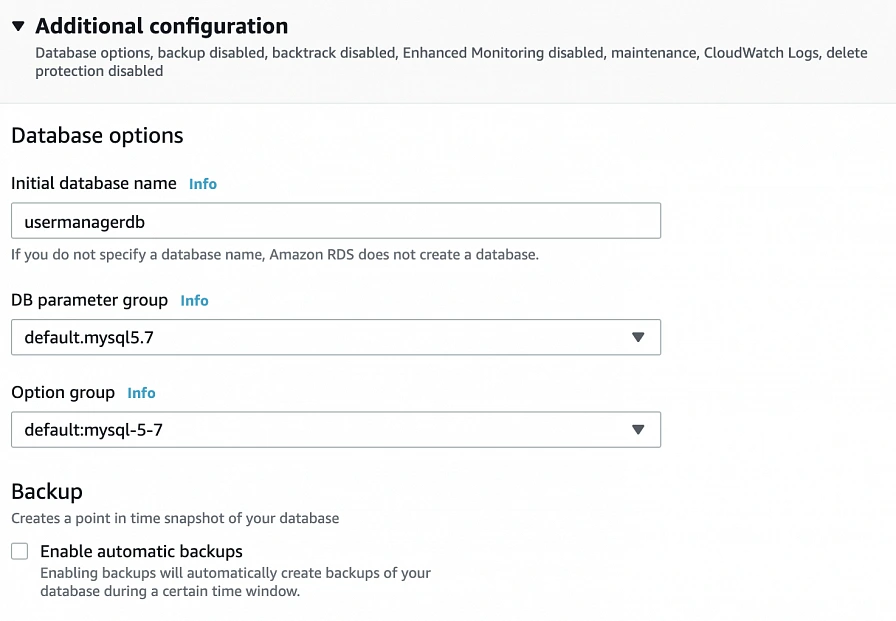

We have all our EC2 instances ready. The last part we will cover in this article is the configuration of RDS. For that, go to the RDS service in the AWS Management Console and click Create database. In the database configuration window, follow the standard configuration path. Select the MySQL engine and the Free tier template. Set the DB instance identifier to user-manager-db, specify the master username and password, select your user-manager-vpc and Availability Zone A, and make the database not publicly accessible. Also, create a new security group, user-manager-db-sg.

In the Additional configuration section, specify the initial database name, and finally, create the database.
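For reference, a roughly equivalent CLI sequence is sketched below, assuming a configured AWS CLI v2. Several values are assumptions rather than part of the walkthrough: the hypothetical DB subnet group name, the db.t3.micro class (pick whatever the Free tier template offers in your region), 20 GB of storage, the admin username and the usermanager database name (RDS limits the initial MySQL database name to letters, digits and underscores). Unlike the console, which can create a DB subnet group for you, the CLI expects one to exist, and it must span at least two availability zones. `run` is a hypothetical helper that only prints each command when `DRY_RUN` is set.

```bash
#!/usr/bin/env bash
# Hypothetical helper: print the command when DRY_RUN is set, otherwise execute it.
run() {
  if [ -n "${DRY_RUN:-}" ]; then
    printf '%s\n' "$*"
  else
    "$@"
  fi
}

DB_ID="user-manager-db"
DB_SUBNET_GROUP="user-manager-db-subnet-group"   # hypothetical name

# Group the two private subnets (placeholder IDs) so RDS can place the database in them.
create_db_subnet_group() {
  local subnet_a="$1" subnet_b="$2"
  run aws rds create-db-subnet-group \
    --db-subnet-group-name "$DB_SUBNET_GROUP" \
    --db-subnet-group-description "Private subnets for user-manager-db" \
    --subnet-ids "$subnet_a" "$subnet_b"
}

# Create a single-AZ MySQL instance in the given AZ that is not publicly accessible.
create_database() {
  local db_sg_id="$1" az="$2" master_password="$3"
  run aws rds create-db-instance \
    --db-instance-identifier "$DB_ID" \
    --engine mysql \
    --db-instance-class db.t3.micro \
    --allocated-storage 20 \
    --master-username admin \
    --master-user-password "$master_password" \
    --db-name usermanager \
    --db-subnet-group-name "$DB_SUBNET_GROUP" \
    --vpc-security-group-ids "$db_sg_id" \
    --availability-zone "$az" \
    --no-publicly-accessible
}
```

Reading the password into a variable first (for example with `read -rs DB_PASSWORD`) keeps it out of your shell history.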

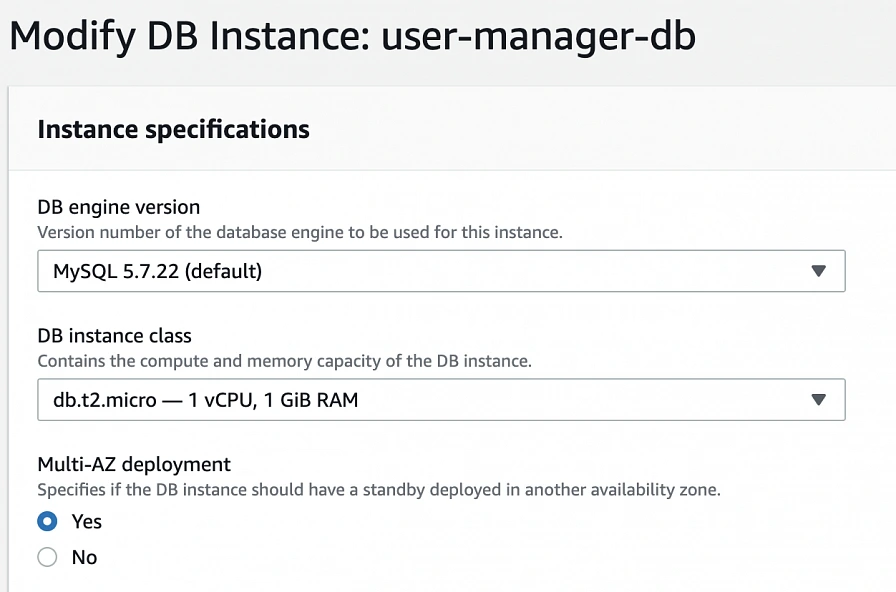

After AWS finishes the creation process, you will be able to get the database endpoint, which we will use later to connect to the database from our application. Now, to provide high availability for the database, click the Modify button on the database screen and enable Multi-AZ deployment. Bear in mind that Multi-AZ deployment is not included in the Free Tier, so if you would like to avoid any charges, skip this step.
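Retrieving the endpoint and switching on Multi-AZ can be scripted as well. A minimal sketch, assuming a configured AWS CLI v2 and the user-manager-db identifier; `run` is a hypothetical helper that only prints each command when `DRY_RUN` is set. `--apply-immediately` applies the change now rather than in the next maintenance window, so drop it if you prefer to wait.

```bash
#!/usr/bin/env bash
# Hypothetical helper: print the command when DRY_RUN is set, otherwise execute it.
run() {
  if [ -n "${DRY_RUN:-}" ]; then
    printf '%s\n' "$*"
  else
    "$@"
  fi
}

DB_ID="user-manager-db"

# Wait until the instance is available, then print its endpoint host name.
db_endpoint() {
  run aws rds wait db-instance-available --db-instance-identifier "$DB_ID"
  run aws rds describe-db-instances \
    --db-instance-identifier "$DB_ID" \
    --query 'DBInstances[0].Endpoint.Address' \
    --output text
}

# Convert the instance to a Multi-AZ deployment (not covered by the Free Tier).
enable_multi_az() {
  run aws rds modify-db-instance \
    --db-instance-identifier "$DB_ID" \
    --multi-az \
    --apply-immediately
}
```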

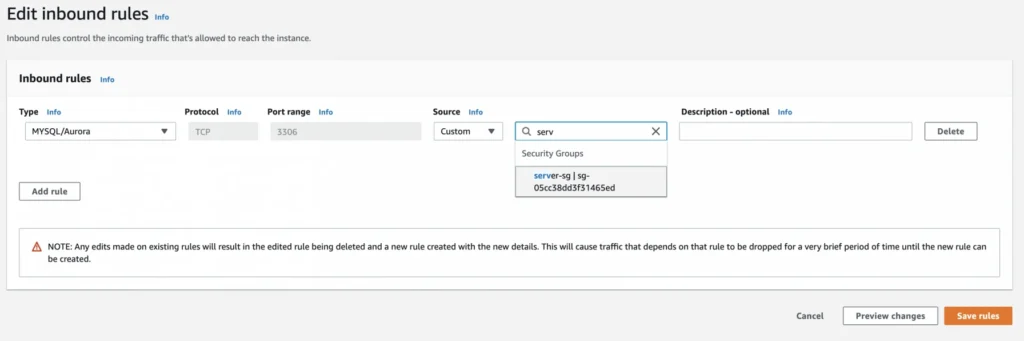

As the last step, we need to add a rule to user-manager-db-sg that allows incoming connections from server-sg on port 3306 (the MySQL port), so that our server can communicate with the database.
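The CLI form of this rule mirrors the SSH rule between server-sg and bastion-sg, only with the MySQL port. A minimal sketch, assuming a configured AWS CLI v2 and placeholder security group IDs; `run` is a hypothetical helper that only prints the command when `DRY_RUN` is set.

```bash
#!/usr/bin/env bash
# Hypothetical helper: print the command when DRY_RUN is set, otherwise execute it.
run() {
  if [ -n "${DRY_RUN:-}" ]; then
    printf '%s\n' "$*"
  else
    "$@"
  fi
}

MYSQL_PORT=3306

# Allow MySQL traffic to the database only from instances in server-sg.
allow_mysql_from_server() {
  local db_sg_id="$1" server_sg_id="$2"
  run aws ec2 authorize-security-group-ingress \
    --group-id "$db_sg_id" \
    --protocol tcp \
    --port "$MYSQL_PORT" \
    --source-group "$server_sg_id"
}
```

Here `db_sg_id` is the ID of user-manager-db-sg and `server_sg_id` the ID of server-sg.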

EC2, AMI, Bastion Host, RDS - Summary

Congratulations, our infrastructure is almost ready for deployment. As you can see in our final diagram, the only thing missing is the load balancer. In the next part of the series, we will take care of that and deploy our applications to have a fully functioning system running on AWS infrastructure!

Sources:

- https://cloudacademy.com/blog/aws-bastion-host-nat-instances-vpc-peering-security/

- https://aws.amazon.com/quickstart/architecture/linux-bastion/

- https://aws.amazon.com/blogs/security/securely-connect-to-linux-instances-running-in-a-private-amazon-vpc/

- https://app.pluralsight.com/library/courses/aws-developer-getting-started/table-of-contents

- https://app.pluralsight.com/library/courses/aws-developer-designing-developing/table-of-contents

- https://app.pluralsight.com/library/courses/aws-networking-deep-dive-vpc/table-of-contents

- https://www.techradar.com/news/what-is-amazon-rds

- https://medium.com/kaodim-engineering/hardening-ssh-using-aws-bastion-and-mfa-45d491288872

- https://docs.aws.amazon.com/IAM/latest/UserGuide/id_roles.html

- https://docs.aws.amazon.com/AWSEC2/latest/UserGuide/ec2-key-pairs.html

- https://aws.amazon.com/ec2/instance-types/

- https://docs.aws.amazon.com/AWSEC2/latest/UserGuide/concepts.html

The path towards enterprise level AWS infrastructure – architecture scaffolding

This article is the first in a mini-series that will walk you through the process of creating an enterprise-level AWS infrastructure. By the end of this series, we will have created an infrastructure comprising a VPC with four subnets in two different availability zones, with a client application, a backend server, and a database deployed inside. Our architecture will provide the scalability and availability required by modern cloud systems. Along the way, we will explain the basic concepts and components of the Amazon Web Services platform. In this article, we will cover the scaffolding of our architecture, specifically a Virtual Private Cloud (VPC), subnets, Elastic IP addresses, NAT gateways, and route tables. The whole series comprises:

- Part 1 - Architecture Scaffolding (VPC, Subnets, Elastic IP, NAT)

- Part 2 - The Path Towards Enterprise Level AWS Infrastructure – EC2, AMI, Bastion Host, RDS

- Part 3 - Load Balancing and Application Deployment (Elastic Load Balancer)

The cloud, as once explained in the Silicon Valley TV series, is “this tiny little area which is becoming super important and in many ways is the future of computing.” This would be accurate, except that it is not so tiny, and the future is now. So let’s delve into the universe of cloud computing and learn how to build highly available, secure, and fault-tolerant cloud systems, how to use the AWS platform for that, what its key components are, and how to deploy your applications on AWS.

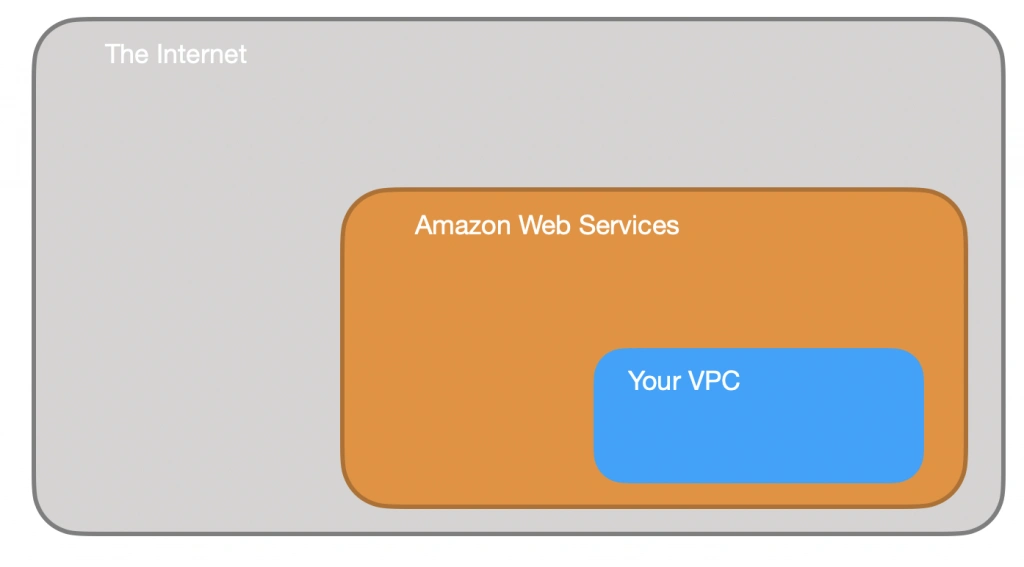

Cloud computing

Over recent years, the IT industry has undergone a major transformation in which most global enterprises moved away from their traditional IT infrastructures towards the cloud. The main reason behind this is the flexibility and scalability that come with cloud computing, understood as the provisioning of computing services such as servers, storage, databases, networking, and analytics over the Internet (the cloud). In this model, organizations only pay for the cloud resources they actually use and do not need to manage the physical infrastructure behind them. There are many cloud platform providers on the market, the major players being Amazon Web Services (AWS), Microsoft Azure, and Google Cloud. This article focuses on services available on AWS, but bear in mind that most of the concepts explained here have equivalents on the other platforms.

Infrastructure overview

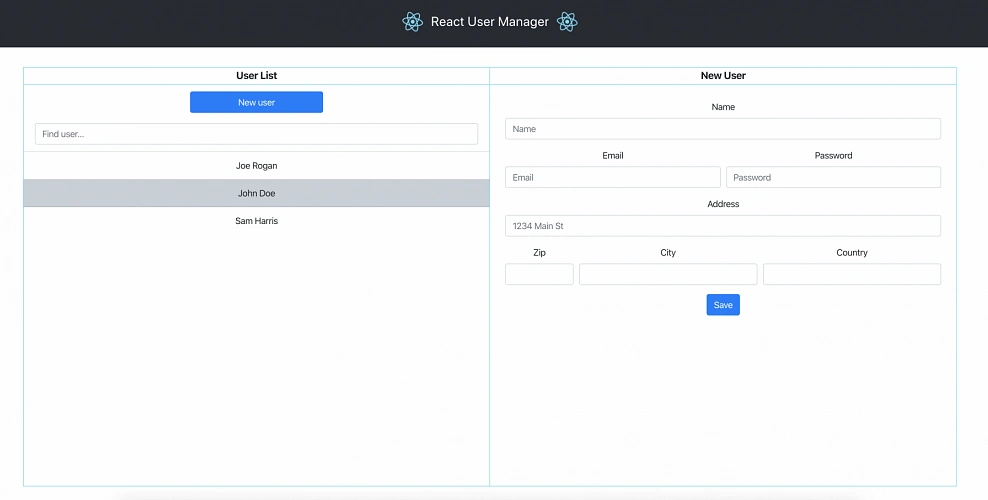

Let’s start with what we will build throughout this series. The goal is to create a real-life, enterprise-level AWS infrastructure able to host a user management system consisting of a React.js web application, a Java Spring Boot server, and a relational database.

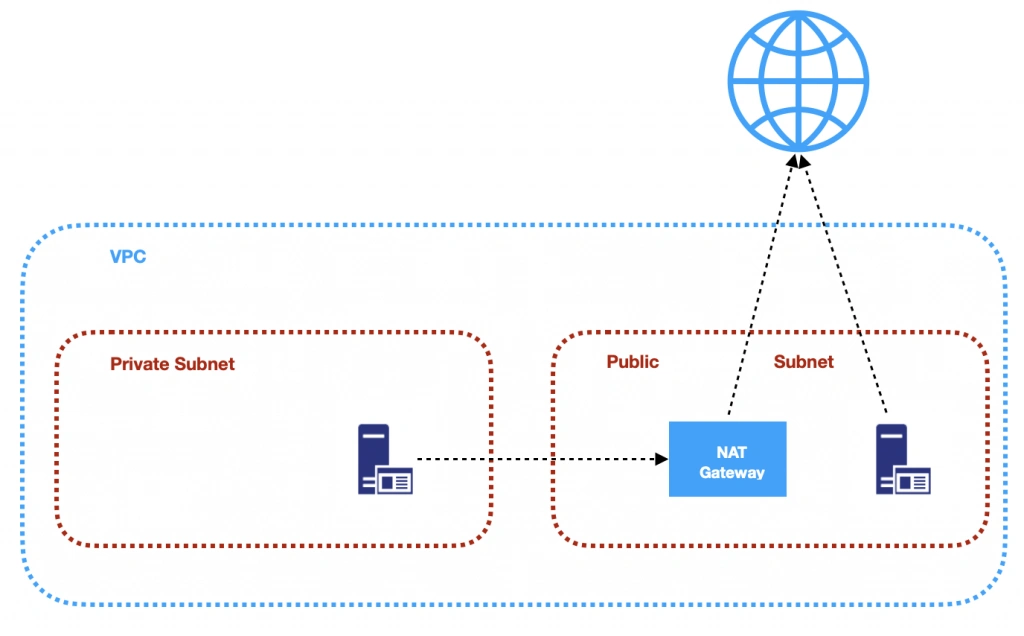

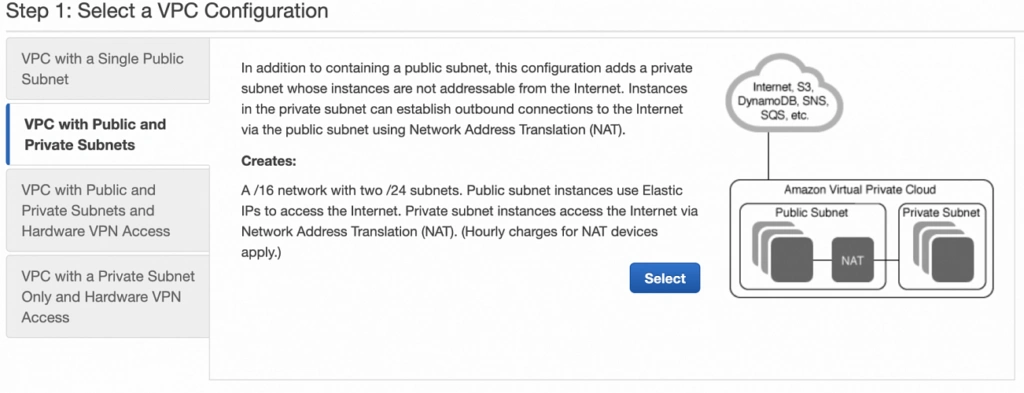

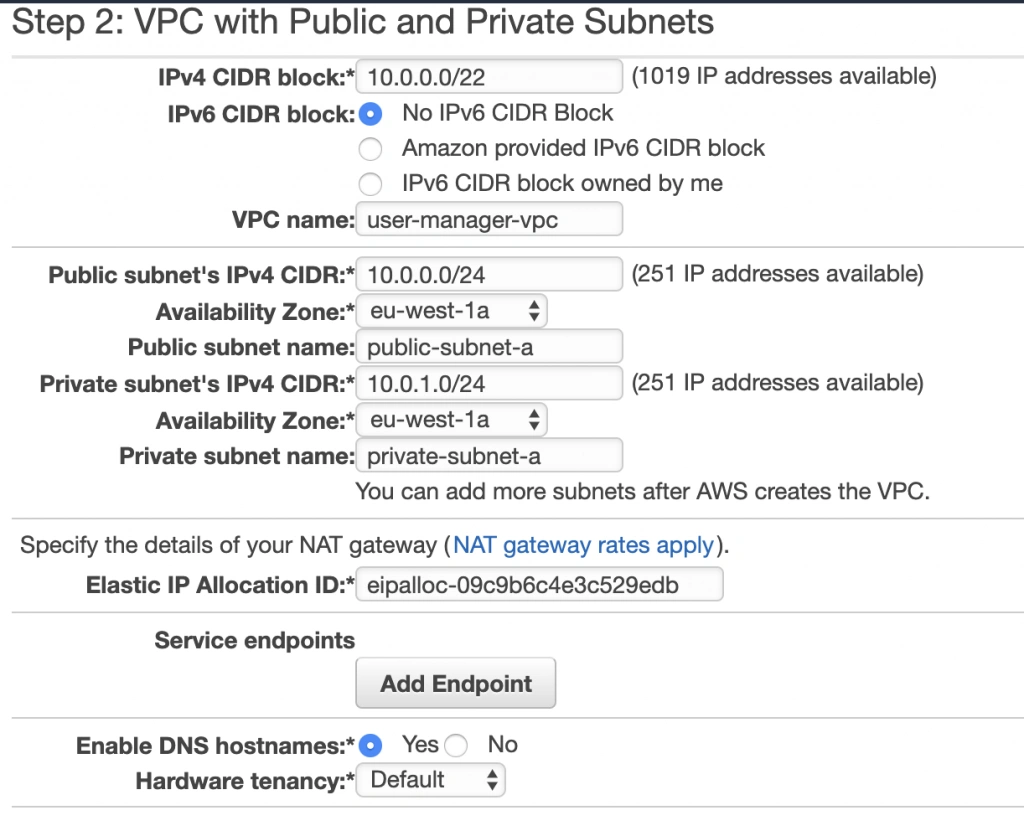

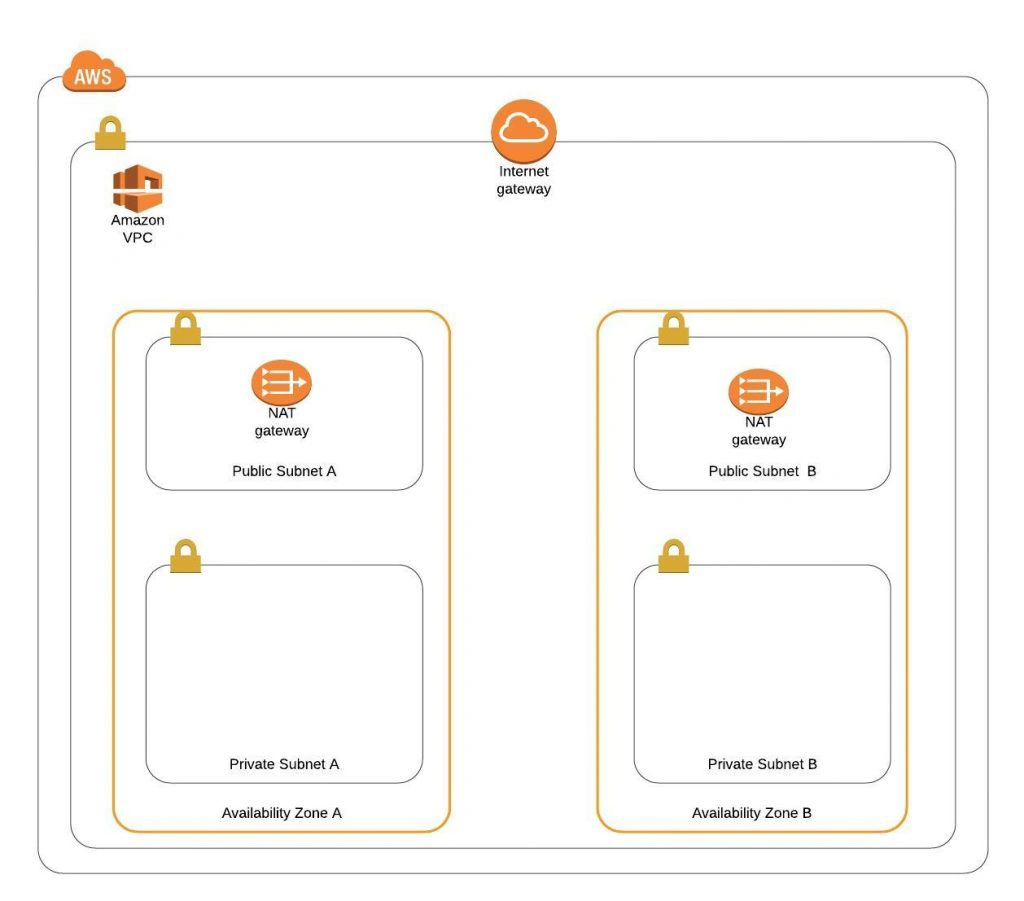

The architecture diagram is shown in Figure 1. It comprises a VPC with four subnets (two public and two private) distributed across two different availability zones. The public subnets host a client application, a NAT gateway, and a Bastion Host (more on that later), while the private subnets contain the backend server and database instances. The infrastructure also includes an Internet Gateway, to enable access to the Internet from our VPC, and a Load Balancer. The reasoning behind placing the backend server and the database in private subnets is to protect those instances from being directly exposed to the Internet, as they may contain sensitive data. Instead, they will only have private IP addresses and sit behind a NAT gateway and a public-facing Elastic Load Balancer. The presented infrastructure provides a high level of scalability and availability by introducing redundancy, with instances deployed in two different availability zones, and by using auto-scaling groups, which provide automatic scaling and health management of the system.
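To make the subnet layout concrete, the four subnets could be created along these lines. This is a minimal sketch, assuming a configured AWS CLI v2 and an existing VPC; the CIDR ranges (a 10.0.0.0/16 VPC split into /24 blocks) and the availability zone names are purely illustrative assumptions, and `run` is a hypothetical helper that only prints each command when `DRY_RUN` is set. Creating a subnet does not by itself make it public: that depends on its route table pointing to the Internet Gateway, which is configured separately.

```bash
#!/usr/bin/env bash
# Hypothetical helper: print the command when DRY_RUN is set, otherwise execute it.
run() {
  if [ -n "${DRY_RUN:-}" ]; then
    printf '%s\n' "$*"
  else
    "$@"
  fi
}

# Create two public and two private subnets, one of each per availability zone.
# Arguments: VPC ID, first AZ name, second AZ name (e.g. eu-central-1a, eu-central-1b).
create_subnets() {
  local vpc_id="$1" az_a="$2" az_b="$3"
  local -a names=(public-subnet-a private-subnet-a public-subnet-b private-subnet-b)
  local -a cidrs=(10.0.1.0/24 10.0.2.0/24 10.0.3.0/24 10.0.4.0/24)  # assumed plan
  local -a zones=("$az_a" "$az_a" "$az_b" "$az_b")
  local i
  for i in "${!names[@]}"; do
    run aws ec2 create-subnet \
      --vpc-id "$vpc_id" \
      --cidr-block "${cidrs[$i]}" \
      --availability-zone "${zones[$i]}" \
      --tag-specifications "ResourceType=subnet,Tags=[{Key=Name,Value=${names[$i]}}]"
  done
}
```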

Figure 2 presents the view of the user management web application system we will host on AWS:

The applications can be found on GitHub.

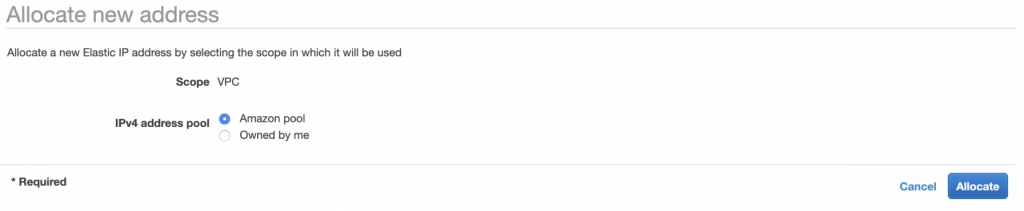

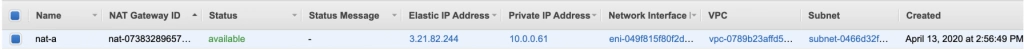

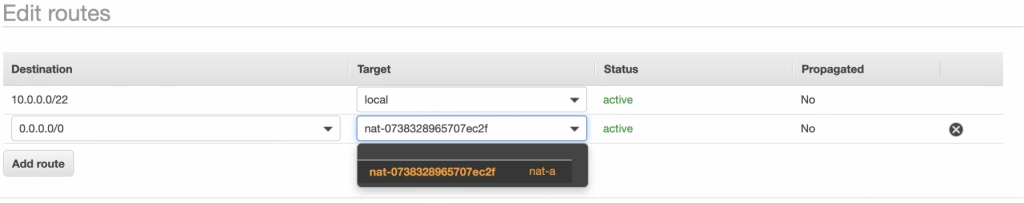

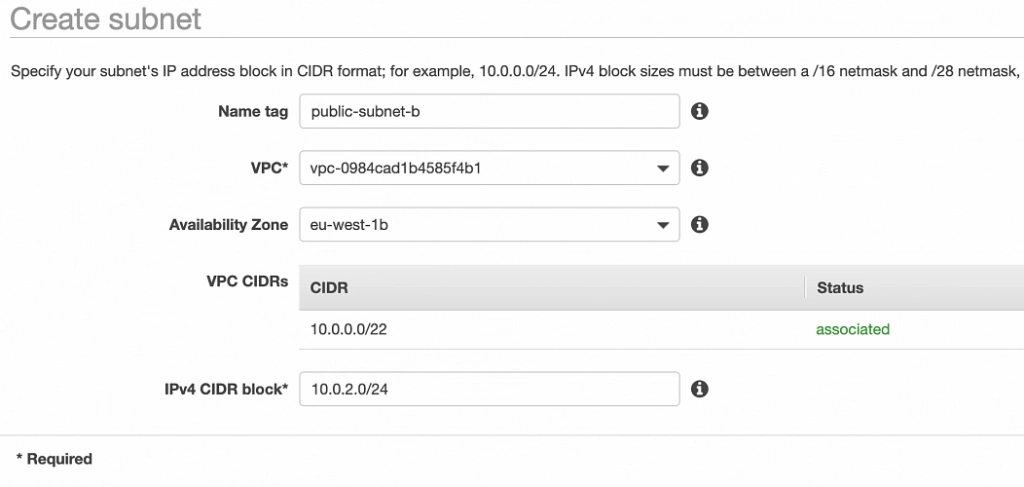

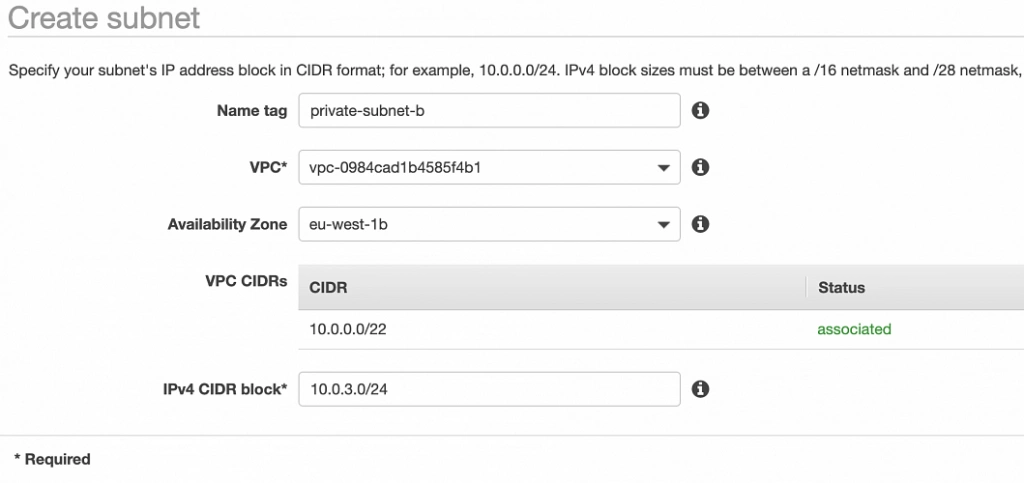

In this part of the article series, we will focus on the scaffolding of the infrastructure, namely allocating Elastic IP addresses, setting up the VPC, creating the subnets, and configuring NAT gateways and route tables.

AWS Free Tier Note