ASP.NET Core CI/CD on Azure Pipelines with Kubernetes and Helm

Getting started with Cloud Native is not easy, because the entry threshold is high. Developing apps that are reliable and performant while meeting high SLAs can be challenging. Fortunately, tools like Istio simplify our lives. In this article, we walk through the steps needed to set up CI/CD with Azure Pipelines that deploys microservices to Kubernetes using Helm charts. The example is a good starting point for setting up your own development process. After this tutorial, you should have a basic idea of how Cloud Native apps are developed and deployed.

Technology stack

- .NET Core 3.0 (preview)

- Kubernetes

- Helm

- Istio

- Docker

- Azure DevOps

Prerequisites

You need a Kubernetes cluster, a free Azure DevOps account, and a Docker registry. It is also useful to have the kubectl and gcloud CLIs installed on your machine. For the Kubernetes cluster, we will use Google Kubernetes Engine from Google Cloud Platform, but you can use a different cloud provider if you prefer. On GCP you can create a free account and then create a Kubernetes cluster with Istio enabled (the Enable Istio checkbox). We suggest a cluster of 3 nodes with a standard machine type.

Connecting the cluster with Azure Pipelines

Once the cluster is ready, we use kubectl to prepare the service account that Azure Pipelines needs to authenticate. First, authenticate yourself by adding the necessary settings to your kubeconfig; every cloud provider will guide you through this step. Then run the following commands:

kubectl create serviceaccount azure-pipelines-deploy

kubectl create clusterrolebinding azure-pipelines-deploy --clusterrole=cluster-admin --serviceaccount=default:azure-pipelines-deploy

kubectl get secret $(kubectl get secrets -o custom-columns=":metadata.name" | grep azure-pipelines-deploy-token) -o yaml

We are creating a service account and binding a cluster role to it. The cluster-admin role allows us to use Helm without restrictions. If you are interested, you can read more about RBAC on the Kubernetes website. The last command retrieves the secret as YAML, which is needed to define the connection, so save that output somewhere.
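On newer clusters the last command above may return nothing: since Kubernetes 1.24, a long-lived token secret is no longer generated automatically for each service account. A minimal sketch of creating one by hand follows, assuming the service account lives in the default namespace. The helper name and the `<service-account>-token` secret name are our own choices, not something the cluster requires.

```shell
# Hypothetical helper: prints a Secret manifest that asks Kubernetes to
# generate a long-lived token for the given service account.
sa_token_secret_manifest() {
  local sa_name="$1"
  cat <<EOF
apiVersion: v1
kind: Secret
metadata:
  name: ${sa_name}-token
  annotations:
    kubernetes.io/service-account.name: ${sa_name}
type: kubernetes.io/service-account-token
EOF
}

# Usage (needs kubectl pointed at your cluster):
#   sa_token_secret_manifest azure-pipelines-deploy | kubectl apply -f -
#   kubectl get secret azure-pipelines-deploy-token -o yaml
```

The output of the second usage command is then the YAML to paste into the service connection form.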

Now, in Azure DevOps, go to Project Settings -> Service Connections and add a new Kubernetes service connection. Choose service account as the authentication method and paste the YAML copied from the command executed in the previous step.

One more thing we need here is the cluster IP. It should be available on the cluster settings page, or it can be retrieved from the command line. For GCP, the command should look similar to this, where <cluster-name> and <zone> are placeholders for your own values:

gcloud container clusters describe <cluster-name> --format="value(endpoint)" --zone <zone>

The other service connection we have to define is for the Docker registry. For the sake of simplicity, we will use Docker Hub, where all you need is an account (create one if you don’t have it). Fill in the form with the required details, and we can carry on with the application part.

Preparing an application

One of the things to take into account when implementing apps for the cloud is the Twelve-Factor methodology. We are not going to describe the factors one by one, since they are explained well enough here, but a few of them will be mentioned throughout the article.

For tutorial purposes, we’ve prepared a sample ASP.NET Core web application containing a single controller and a database context. It also contains a simple Dockerfile and Helm charts. You can clone or fork the sample project from here. First, push it to a git repository (we will use Azure DevOps), because we will need it for CI. You can then add a new pipeline, choosing any of the available YAML definitions. Here we define our build pipeline (CI), which looks like this:

    trigger:
    - master

    pool:
      vmImage: 'ubuntu-latest'

    variables:
      buildConfiguration: 'Release'

    steps:
    - task: Docker@2
      inputs:
        containerRegistry: 'dockerRegistry'
        repository: '$(dockerRegistry)/$(name)'
        command: 'buildAndPush'
        Dockerfile: '**/Dockerfile'
    - task: PublishBuildArtifacts@1
      inputs:
        PathtoPublish: '$(Build.SourcesDirectory)/charts'
        ArtifactName: 'charts'
        publishLocation: 'Container'

This definition builds a Docker image and publishes it to the predefined Docker registry. Two custom variables are used: dockerRegistry (for Docker Hub, replace it with your username) and name, which is just the image name (exampleApp in our case). The second task publishes an artifact containing the Helm chart. These two (the Docker image and the Helm chart) will be used by the deployment pipeline.
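To try the image build locally before wiring it into the pipeline, roughly the same steps can be run with the Docker CLI. This is only a sketch, assuming the Dockerfile sits in the current directory and you are already logged in to the registry. The function name, registry user, image name and tag are placeholders of ours.

```shell
# Hypothetical local equivalent of the pipeline's build-and-push step.
build_and_push() {
  local registry_user="$1" image_name="$2" tag="$3"
  local image="$registry_user/$image_name:$tag"
  # Build from the Dockerfile in the current directory, then push the tagged image.
  docker build -f Dockerfile -t "$image" . && docker push "$image"
}

# Usage (after `docker login`):
#   build_and_push myuser exampleapp 1
```

Whatever tag ends up in the registry has to match the image.tag value passed to Helm during deployment, so it is worth checking which tag the pipeline actually pushes.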

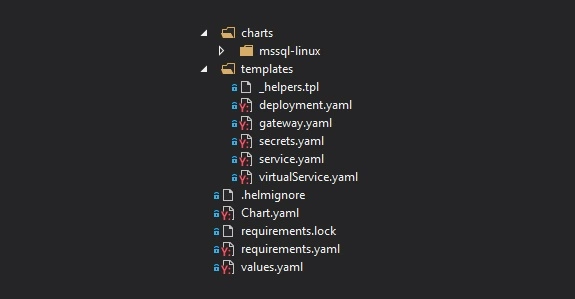

Helm charts

First, take a look at the file structure of our chart. In the main folder, we have Chart.yaml, which keeps the chart metadata, requirements.yaml, in which we specify dependencies, and values.yaml, which provides default configuration values. In the templates folder, we can find all the Kubernetes objects that will be created when the chart is deployed. Then we have the nested charts folder, which is a collection of the charts added as dependencies in requirements.yaml. All of them have the same file structure.
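The layout described above can be pictured as a directory tree. The snippet below only creates empty placeholder files so that the structure is visible. The chart root name passed in is up to you, and the exact set of files in the sample project may differ.

```shell
# Hypothetical helper: creates an empty skeleton of the chart layout.
scaffold_chart_layout() {
  local root="$1"
  # Main chart: metadata, dependencies, default values, templates.
  mkdir -p "$root/templates" "$root/charts/mssql-linux/templates"
  touch "$root/Chart.yaml" "$root/requirements.yaml" "$root/values.yaml"
  touch "$root/templates/deployment.yaml"
  # Dependency chart nested under charts/, with the same structure.
  touch "$root/charts/mssql-linux/Chart.yaml" "$root/charts/mssql-linux/values.yaml"
  touch "$root/charts/mssql-linux/templates/secret.yaml"
}

# Usage:
#   scaffold_chart_layout ./exampleapp && find ./exampleapp -type f | sort
```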

Let’s start with deployment.yaml, the definition of the Deployment controller, which provides declarative updates for Pods and ReplicaSets. It is parameterized with Helm templates, so you will see a lot of {{ template […] }} in there. The Deployment definition itself is fairly standard, but we add a reference to the secret holding the SQL Server database password. We hardcode the ‘-mssql-linux-secret’ part because, at the time of writing this article, Helm doesn’t provide a straightforward way to access sub-chart properties.

    env:
      - name: sa_password
        valueFrom:
          secretKeyRef:
            name: {{ template "exampleapp.name" $root }}-mssql-linux-secret
            key: sapassword

As mentioned previously, we have the SQL Server chart added as a dependency. Its definition is pretty simple: we define the name of the dependency, which matches the folder name in the charts subfolder, and the version we want to use.

    dependencies:
      - name: mssql-linux
        repository: https://kubernetes-charts.storage.googleapis.com
        version: 0.8.0
    [...]

For the mssql chart, one change has to be applied in secret.yaml. Normally, this secret is recreated on each deployment (helm upgrade) and a new sapassword is generated, which is not what we want. The simplest way to adjust that is to modify the metadata and add a pre-install hook. This guarantees that the secret is created just once, when the release is installed.

    metadata:
      annotations:
        "helm.sh/hook": "pre-install"

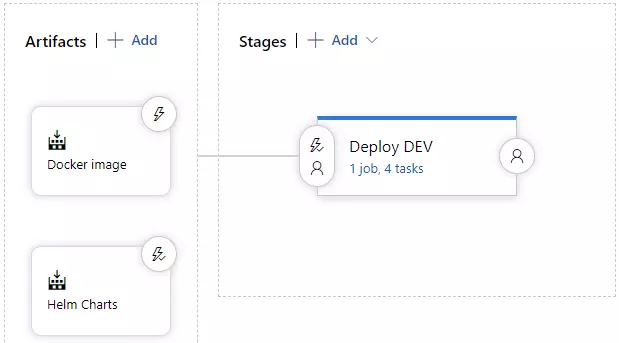

A deployment pipeline

Let’s focus on deployment now. We will use Helm to install and upgrade everything that is needed in Kubernetes. Go to the Releases pipelines in Azure DevOps, where we will configure continuous delivery. You have to add two artifacts, one for the Docker image and a second for the charts artifact. It should look like the image below.

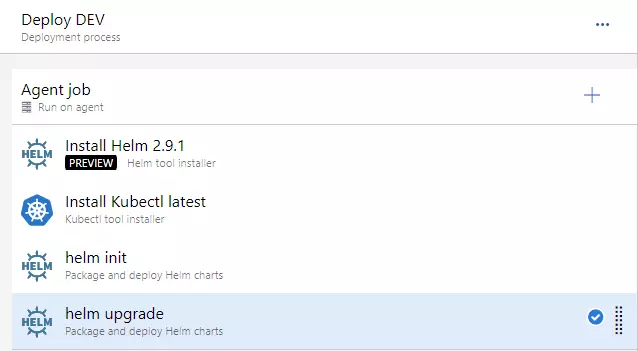

In the stages part, we could add a few more environments, which would be deployed in a similar manner but to different clusters. As you can see, the Deploy DEV stage is simply responsible for running a helm upgrade command. Before that, we need to install helm and kubectl and run the helm init command (helm init applies to Helm 2, which this setup uses; Helm 3 removed it).

For the helm upgrade task, we need to adjust a few things:

- set Chart Path, where you can browse into the Helm charts artifact (it should look like “$(System.DefaultWorkingDirectory)/Helm charts/charts”)

- paste “image.tag=$(Build.BuildNumber)” into Set Values

- check Install if release not present, or add --install as an argument. With this, the task behaves like helm install if the release doesn’t exist yet (e.g. on a clean cluster)
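For reference, the settings above correspond roughly to the following Helm command line. This is a sketch of the equivalent call, not what the Azure DevOps task runs verbatim. The wrapper function, the release name and the chart path are placeholders of ours.

```shell
# Hypothetical wrapper around `helm upgrade`.
deploy_release() {
  local release="$1" chart_path="$2" image_tag="$3"
  # --install makes this behave like `helm install` when the release does not exist yet.
  helm upgrade "$release" "$chart_path" \
    --install \
    --set "image.tag=$image_tag"
}

# Usage (needs helm configured against your cluster):
#   deploy_release exampleapp ./charts 20190912.1
```

Running the same command by hand is a quick way to debug a failing release stage outside the pipeline.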

At this point, we should be able to deploy the application: create a release and run the deployment. You should see green output at this point :).

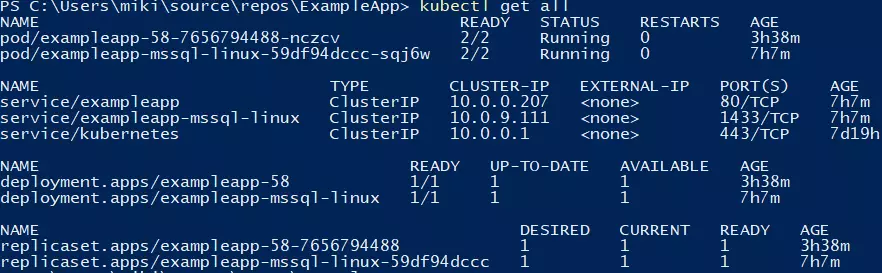

You can verify if the deployment went fine by running a kubectl get all command.

Making use of basic Istio components

Istio is a great tool that simplifies service management. It is responsible for handling things like load balancing, traffic behavior, metrics and logs, and security. Istio leverages Kubernetes sidecar containers, which are added to the pods of our applications. You have to enable this feature by applying the appropriate label to the namespace.

kubectl label namespace default istio-injection=enabled

All pods created from now on will have an additional container, which is called a sidecar container in Kubernetes terms. That’s a useful feature, because we don’t have to modify our application.
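To check that injection actually happened, it can help to list the containers of each pod. This is a small sketch, assuming the application runs in the default namespace; the function name is ours. With injection enabled, each newly created pod should show an extra istio-proxy container next to the application container.

```shell
# Lists every pod in a namespace together with its container names.
list_pod_containers() {
  local namespace="${1:-default}"
  kubectl get pods -n "$namespace" \
    -o jsonpath='{range .items[*]}{.metadata.name}{": "}{.spec.containers[*].name}{"\n"}{end}'
}

# Usage (needs kubectl pointed at your cluster):
#   list_pod_containers default
```

Pods that were already running before the namespace was labeled are not changed; they pick up the sidecar only after they are recreated.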

The two Istio objects we use, both part of the Helm chart, are Gateway and VirtualService. For the first one, we will quote the Istio definition, because it’s simple and accurate: “Gateway describes a load balancer operating at the edge of the mesh receiving incoming or outgoing HTTP/TCP connections”. That object is attached to a LoadBalancer object; we will use the one created by Istio by default. After the application is deployed, you will be able to access it using the LoadBalancer external IP, which you can retrieve with this command:

kubectl get service/istio-ingressgateway -n istio-system

You can read the external IP from the EXTERNAL-IP column of the output and verify that the http://<external-ip>/api/examples URL works fine.
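The lookup and the check can also be scripted. The sketch below assumes the ingress gateway has been given an IP address (some providers expose a hostname instead) and that curl is installed; both function names are ours.

```shell
# Prints the external IP of the default Istio ingress gateway, if assigned.
ingress_ip() {
  kubectl get service istio-ingressgateway -n istio-system \
    -o jsonpath='{.status.loadBalancer.ingress[0].ip}'
}

# Calls the sample endpoint through the gateway.
check_examples_endpoint() {
  local ip
  ip="$(ingress_ip)"
  if [ -z "$ip" ]; then
    echo "external IP not assigned yet" >&2
    return 1
  fi
  # -f: fail on HTTP errors, -sS: silent but still print errors
  curl -fsS "http://$ip/api/examples"
}

# Usage:
#   check_examples_endpoint
```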

Summary

In this article, we have created a basic CI/CD setup which deploys a single service into a Kubernetes cluster with the help of Helm. Further adjustments can include different deployment types, publishing test coverage from CI, or adding more services to the mesh and leveraging additional Istio features. We hope you were able to complete the tutorial without any issues. Follow our blog for more in-depth articles on these topics.
