Thinking out loud

Where we share the insights, questions, and observations that shape our approach.

What is automotive software and why does it matter?

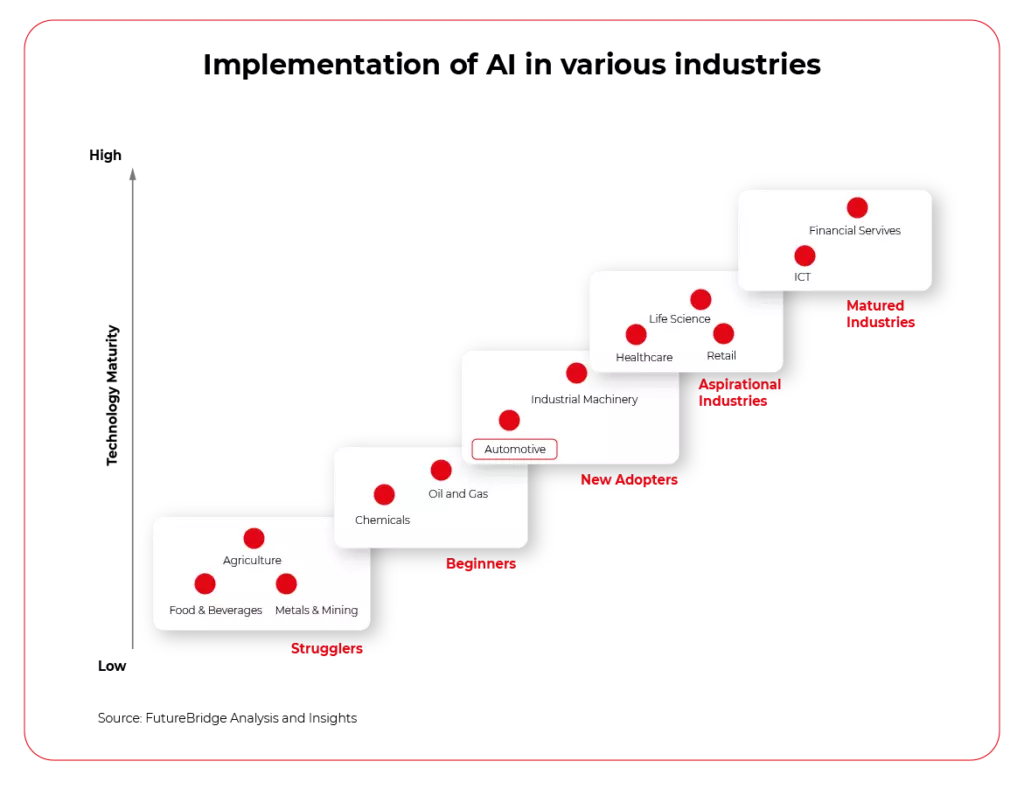

Connected Car , Software Development and Autonomous Driving are the three most repeated words in the automotive industry. It’s hard not to notice that all three are basically different use cases heavily dependent on different kinds of software: cloud, AI, edge computing, or internal applications. Analysts, investors, management, and even regular employees of OEMs seem to believe and agree that software is the future of the automotive industry. But why?

Automotive software - how did we get there?

To understand the origins of this trend, let’s briefly look at the last 20 years of automotive history. On the market, where 99% of vehicles were based on combustion engines, a new entrant appeared. Tesla Motors Inc. A company with no background in building cars, named to pay tribute to the well-known electrical engineer, Nikola Tesla. A year later, famous entrepreneur, Elon Musk, decided to invest in this dream of building electric vehicles for the masses.

Fast forward to 2012 and we have the world premiere of the Tesla Model S. The Electric Vehicle, being the biggest disruption in the automotive industry in years, immediately receiving several automotive awards, including Car of The Year. Designed and developed by a company with 10 years of experience on the market and literally, a single vehicle developed earlier (the original Tesla Roadster). This showed that there is a big, unoccupied market for electric vehicles.

Just a year later, the Tesla Autopilot was introduced, and the whole world joined the hype for autonomous driving.

Why did Tesla get so popular?

It was not just because the market desperately needed an electric vehicle. Since the beginning, Tesla has been designing its cars to be software-centric. Big on-board CPUs from Nvidia support, not just Autopilot but also a multitude of applications and services available in the largest (at the time at least) central screen of a road car.

And the software has been updated very often using Over-The-Air upgrades, giving the customers the feeling that the software was always fresh and the producer quickly reacted to feedback with new changes. Effectively, making the software a major selling point.

Electrification

Apart from the software-defined vehicle focus, electrification started as a solution to reduce the CO2 footprint of the industry. Both BEV and PHEV vehicles development was caused partially by new legislation and sustainability requirements, and partially of course by the success of Tesla. The EVs offering is increasing year by year, and most of the brands announced the potential timeline of reducing the combustion engines offering to 0 models.

The industry today

It seems like all of the large OEMs treated Tesla as their very own R&D department and allowed the company to conduct the world’s biggest ever market study. Tesla was able to prove that people actually care for the CO2 emission and want to drive electric cars and also showed that software in a vehicle may be more appealing to end-users than the sound of V8.

On the other hand, we compared Tesla to an R&D department because their cars are not always built with top quality and software sometimes have glitches – all in all, it’s a tremendous idea, but not an ideal car. VW Group, Toyota, or Stellantis could never afford to make such mistakes.

Software defined-vehicles became a real future trend, not when Tesla S was first shown to the world. That happened when all of the world’s top OEMs decided that enough is enough, the experiment was over and the time to “productionize” Tesla’s “concept” had come.

And here we are today, a few days after Stellantis Software Day, an investor meeting purely focused on the Software-Defined Vehicles and their new platform, STLA (pronounced `Stella`). A few months after Mercedes-Benz announced that they are hiring developers to work on their own Operating System, MB.OS, as part of a greater “Digital First” brand strategy. A year after the CARIAD by Volkswagen Group was fully defined to provide unified software platforms for all vehicles in the group, called ODP (One Digital Platform) or VW.OS and VW.AC (VW Automotive Cloud).

Everyone is fully committed. But what exactly is the automotive industry committed to? Let’s dissect the latest event, Stellantis Software Day, to see the core topics they want to focus on in the next few years.

- Disconnecting hardware and software lifecycle.

- Broadening the scope of software in the vehicle.

- OTA software updates for adding new features.

- Using software to create a unique offering for all brands in the group.

- Connected Car data monetization .

- Software to support EV and sustainability.

#SWDAY21Stellantis | Carlos Tavares, CEO: "We are transforming #Stellantis into a #tech #mobility company. We owe it to our customers. We owe it to our Brands. We owe it to the principle on which #Stellantis was founded". pic.twitter.com/iMYHSLpMwL — Stellantis (@Stellantis) December 7, 2021

Those are predicted to generate ~€20B in incremental annual revenues by 2030. That, of course, partially answers the “why?” question, but is there more to it?

Coming back to why

If we summarize the situation, we see that electrification and disruption forced the industry to change. The side effect of electrification is making the previous key differentiator - powertrain - much less important. With electric vehicles, the engines are not the key. Most of them are very similar and technology focuses more on batteries. This makes the different models similar, especially in terms of acceleration and horsepower.

So, where is the differentiator? Where do companies look for unique selling points for their brands, and how do they separate the offering of different models when the platform is almost exactly the same?

https://twitter.com/Herbert_Diess/status/1469218343068614657

As you might have already guessed - that is the software. Of course, it’s not just electrification, the other key aspect is also digitalization of our lives, but the disruption already happened and the industry tries to follow.

The people fueling the future of automotive software

Certainly, when everyone decides at the same time to do a similar shift, it can get complicated rapidly. From the resourcing perspective, in the market with such a shortage of skilled software engineers, when everyone tries to quickly build their software competencies, it cannot come without problems. Hiring an experienced software developer is hard, and it gets harder if a company is fully focused on vehicle manufacturing, with a limited budget for IT and IT recruitment departments. The problems with building teams can result in delays in project start or extending their timeline.

This is where partnerships with companies like Grape Up come into play. Partnering with a software development company with strong experience in the automotive industry can help mitigate those issues - having skilled engineers available to help frame the project, architect, develop, and productionize significantly reduces the risk of shifting towards software development, and in the meantime also allows to train internal staff by working together, hands-on, on the actual projects.

The end

We are an endangered species, you and me. We fans of speed, we devotees of power, we lovers of performance and beauty, and mechanical soul. We dare not speak of cams or cranks or double wishbones. We fear for our love of roaring V8s and the smell of burnt rubber. We're told to think of the economy, the environment, and not excitement and enjoyment. In an age of hybrid-this and automatic-that, we are the odd ones out. Yet there is hope. There is a haven. A place that celebrates speed, grip, gears, and fun. And it's all here for you to explore.

Jeremy Clarkson

Beyond Spotify and Netflix- the future of in-vehicle infotainment systems in connected cars

It cost a staggering $200 for that time. The antenna took up almost the entire roof of the car, the batteries barely fit under the front seat, and the huge speakers had to be fixed to the back of the seat backrest. The year was 1922, just over 20 years after the launch of the first mass-produced Oldsmobile Curved Dash car. Entertainment had just made its entrance into the car industry - Chevrolet introduced the first car radio. From then on it only got more exciting.

Nowadays, 100 years on from that event, we can no longer envisage a car without radio, music, or news. In fact, we can no longer imagine a car without entertainment in the broadest sense of the word. Because the radio - at least in its traditional form - is slowly becoming obsolete. It's being replaced by a "personal radio station" created by the driver - streaming music, favorite podcasts, audiobooks, and even video content.

Although we are still a far cry from the catchy phrase "a smartphone on wheels" , first uttered in 2011 by Akio Toyoda, the automotive industry is indeed heading in this direction. Cars are ceasing to be vehicles designed to take us from A to B. Like any other device connected to the Internet, they are becoming a gate to new worlds of entertainment, shopping, learning, or gaming.

When finishing shopping or listening to an audiobook on one device, we want to seamlessly continue the activity on a laptop or desktop computer. Whether we like it or not, the car is becoming another medium that will allow us to stay virtually connected all the time.

Akio Toyoda was wrong. A car is much more than a "smartphone" on wheels!

A potentially larger screen than a smartphone (not only the touchscreen in-vehicle infotainment system panel, but the windscreen too, which can also be used to display content), at least 4 seats that can be independently paired with the in-car entertainment system, and, ironically, much more mobility than mobile devices.

As we look at the development of V2X (vehicle-to-everything) technology, which will turn vehicles into the Internet of Things devices, the opportunities that lie ahead for the automotive industry in the entertainment field are hard to estimate.

One thing is certain. This process cannot be stopped. Every company in the automotive industry must be aware of the upcoming changes.

According to IHS Markit data, in 2014 only 53% of cars in the USA had a dashboard touch screen, while today this percentage has already reached 82%. These types of solutions can bring automotive companies entirely new revenue streams, and most importantly they will be less dependent on vehicle production cycles and with much higher margins.

The in-vehicle infotainment system market is estimated to be worth $78.9 billion by 2025. [Allied Market Research].

Quo Vadis in-vehicle infotainment systems?

In-vehicle voice assistants for infotainment control

Siri, Alexa, or Google Now are names that have become part of the consumer market and make life easier for most of us, allowing us to make phone calls, send messages or manage our own calendars. While sending voice commands to our phone or the speaker in our home or office is nothing new, communicating with our own car is still some kind of novelty.

And it is here while driving when we need to focus on the road and have our hands free, that voice technology can be of the most benefit and make driving more efficient and smooth. And of course, more fun.

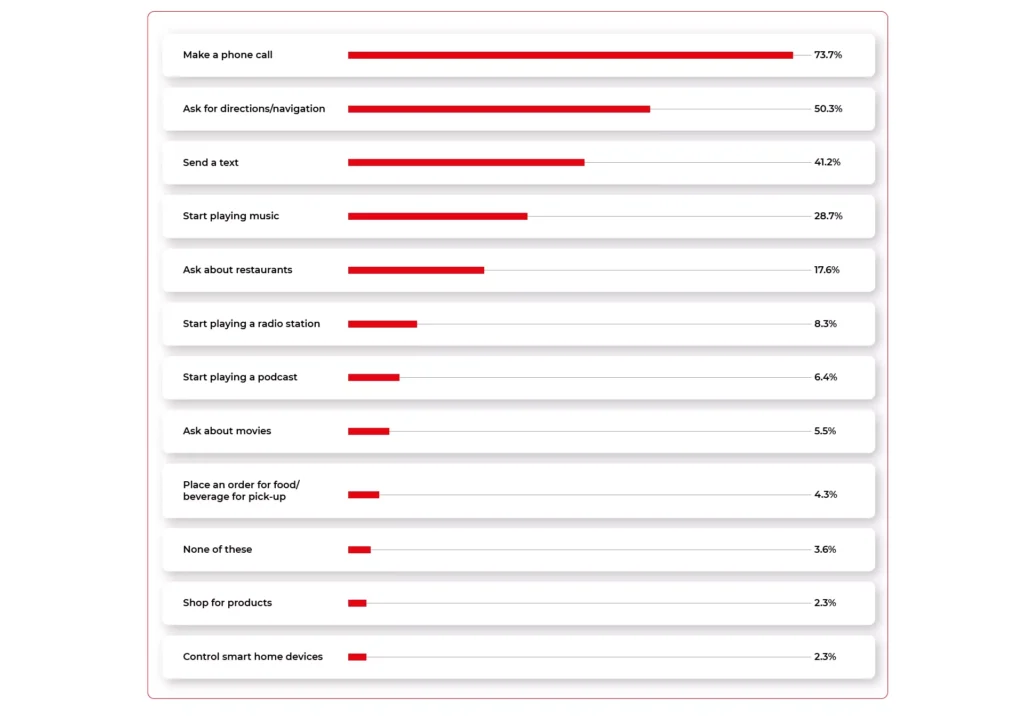

Navigant Research (Guidehouse) predicts that by 2028, 90% of vehicles will be equipped with a voice assistant. Already today - looking at Voicebot.ai data - a large proportion of commands given by drivers are entertainment-related. Playing music, listening to podcasts, finding out about movies, ordering food, or making purchases directly from behind the wheel is becoming increasingly popular among drivers with enhanced IVI systems.

The main players in this section are certainly the manufacturers already known for their other platforms, namely Google and Apple, which are integrating their Android Auto and Carplay technologies in partnership with major OEMs. Hot on its heels is Amazon, which has not only begun collaborating to bring Alexa into Toyota, Ford, and BMW vehicles but also released an Amazon Echo device that any driver can install in their car themselves (as long as it meets the manufacturer's technical requirements).

Vehicle manufacturers, however, are no longer just waiting for the offers of the largest players in this market, but are developing their systems or working with smaller business partners to help them develop such solutions.

Korea's Hyundai has entered into an operation with Saltlux, a company specializing in semantic networks. Honda, Kia Motors, and Daimler are working with the SoundHound start-up. And Volkswagen has invested $180 million in the Chinese start-up Mobvoi.

Gesture-recognition

Voice command in the car is a trend that will continue to grow every year. Yet, there are situations in which gestures are much better than voice commands - for example when you are on a call or have a cold and don't want to strain your throat. Gestures are universal for every driver, while voice assistant applications are often still hampered by technological limitations, for example, due to the variety of accents or the system's adaptation to the driver's language.

As the system recognizes a gesture made with the palm of your hand, fingers, or even your head, you can stay focused on your driving and at the same time activate a specific function when you cannot use your voice command. Scrolling through songs on the radio, raising or lowering the temperature in the car, launching a text message application - all these actions can be configured using gestures. Instead of clicking and scrolling through a touchpad, which always entails taking your eyes off the road, gestures will allow you to boost safety and easily manage the entire system.

Virtual reality & Augmented reality

While currently the introduction of virtual reality in vehicles only makes sense for passengers who do not need to focus on driving, augmented reality technologies are already being successfully implemented in vehicles. Unlike VR, augmented reality does not distract drivers from reality and allows them to concentrate on driving. And they can even increase safety.

Although today this type of technology can only be found in the most innovative and prestigious IVI systems (one of the first cars in which this technology was used was Mercedes-Benz GLE 2020), we should expect this type of solution to develop in the near future, as it brings a whole new quality to in-car entertainment.

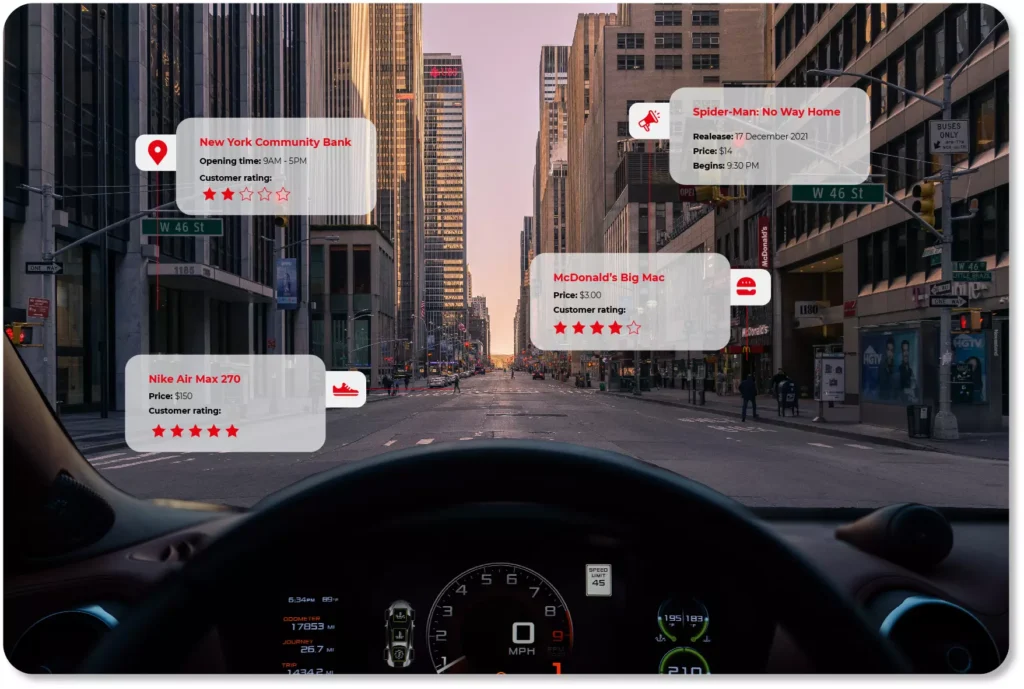

Their direct equivalent to the automotive field is the heads-up display system, which is an additional head-up display integrated into the vehicle's windscreen in addition to the IVI control panel. This screen can be used to display destination-related information, traffic warnings, or information about other vehicles on the road (so-called intelligent terrain mapping).

In the near future, these technologies may also be applied in entertainment itself - for instance in the form of augmented marketing. The windscreen will then display interesting offers and discounts from the restaurants, shops or shopping malls we have just passed. The displayed images will of course adapt to our driving speed, and we can decide for ourselves what kind of messages we wish to see.

On-demand in-car services

In-vehicle infotainment systems are the point of contact between different parties: customers, internet providers, companies producing vehicles, making entertainment, or electronic equipment (e.g. smartphones).

In most cases, drivers already have their favorite apps (Google and Apple being in the lead, of course) and use their favorite streaming services. Competing with platforms like Spotify, Netflix, Pandora or Slacker may not necessarily be the best strategy for automotive companies. It is much better to make use of the recognisability of brands that provide entertainment content and, based on this, extend it with a unique offer for their own clients. Opening up to partnerships with third-party platforms is the best way to address customer needs and create a stream of data that can be monetized .

One of the interesting market examples of this type are the efforts of the GM concern, which has created its own car application in the form of a marketplace, from which the driver can make purchases at Starbucks or Dunkin' Donuts, pay for the fuel at selected petrol stations, and book a hotel or a table at a restaurant.

We should expect that the trend of shopping straight from the car and making the most of the time we have on our commute to/from work while being stuck in traffic jams will not be limited to listening to music and podcasts only. With the development of the Internet of Things, drivers will also be able to control other devices within their "smart" network from their vehicles.

Samsung is already creating solutions that allow the driver to look into their own fridge and decide whether they need to go shopping, turn up the thermostat to prepare the perfect temperature for the return home, activate the alarm when going on holiday, or open the gate automatically.

Rear seat entertainment

Most modern IVI systems are not just an integrated head-unit, i.e. a touch panel on the vehicle dashboard for the driver, but more and more often, interactive panels dedicated to the passengers. These offer practically endless opportunities for entertainment. And we don't just mean the extensive range of streaming video services that can be subscribed to in the vehicle.

After all, the interactivity of the screens makes it possible to implement various applications and gamification elements in the car. These can take the form of quizzes, common picture drawing, shopping via third-party applications, or even karaoke singing, which can also engage the driver.

But what if the sound or type of music doesn't suit the driver, who wants to concentrate on driving? There are already solutions that direct the sound from different areas of the vehicle so that each passenger can listen to different music without wearing headphones.

This is how, for example, the Separated Sound Zone (SSZ) works in KIA cars. Based on multiple loudspeakers and the physical wave acoustics principles, the sounds do not overlap but instead reach their intended audience. Even if powerful beats dominate in the back seat, you can still relax while listening to calmer music in the driver's seat.

In-vehicle infotainment enters a new era

In-car entertainment has a long history. Ever since mobile devices became part of our lives, it is nothing new to connect a smartphone to a Bluetooth radio or for passengers to watch videos on their own smartphones/tablets. The only difference was that, until recently, in-vehicle infotainment was just an accessory, an element that makes a difference and highlights a brand. Today it is a factor on which customers often rely when buying a new vehicle.

In-vehicle infotainment is increasingly rarely limited to a touch screen panel on the dashboard. Right before our eyes, it is growing to be omnipresent and taking precedence over other vehicle functions. Brands that miss this moment and, like Blockbuster in the video content market or Nokia in the mobile market, may find themselves in a completely new reality. A reality in which totally different companies will be on top of the bunch.

Focus on the driver - data monetization at software-defined vehicle cannot exist without understanding customer needs

When talking about data monetization in the automotive industry, we tend to focus on technology, safety, sensors, or cloud solutions. However, all these elements fade when confronted with the ultimate element - the driver of the vehicle. Without taking into account their needs and expectations, there can be no question of generating revenue. Any vehicle data monetization strategy must be mindful of this.

We can fine-tune the system, we can find exceptional partners to implement the software in the vehicle, but without a deep understanding of the vehicle user, no one will benefit from the solutions developed. Our organization will put a considerable amount of effort into building the team and implementing the technology, but the new vehicle features will not be used by the driver.

For this to happen, we need two factors: a value proposition of the brand- which explains clearly and transparently what the user will get out of it, and a coherent action strategy based on a market-back methodology that stems from specific market needs and allow us to develop services that are desired by the customer.

What benefits do customers most often look for in a software-defined vehicle?

Remember that just because people want to use a service, it doesn't mean that they will pay for it. What matters here is not just the benefit, but also the way it is presented, the user experience, and the pricing model. Only the combination of all these elements determines the success of the service. First of all, it is worth focusing on the benefits themselves and only then selecting the right technology to match them.

What are users willing to actually pay for and what are they willing to share only? Many studies indicate that the main factor motivating consumers to share data is gamification and rivalry - this aspect has not changed for years, as we can see for example in social media or e.g. "free" applications, which from time to time appear on the market, gather millions of interested users and vanish in no time. However, when it comes to paying for such "services", users are not so willing to use them.

In vehicles, it looks slightly different. Capgemini's research shows that the connected car services that are most popular with consumers are those related to the "core" functionality of vehicles, such as:

- safety,

- driving comfort,

- time saving

- reduction of vehicle operating costs.

Among them, however, the services that are most willingly paid for are:

- hazard warning,

- collision warning,

- theft detection systems / vehicle finder.

Of course, just because entertainment or gamification isn't on the list doesn't mean that automotive companies should avoid them. It's also a way to distinguish and find their own individual voice that corresponds to the broad brand strategy and allows them to stand out in the market. It's about the way they are served, presented to the consumer, and showing that they can actually derive real benefit from them.

It also works the opposite way. Simply creating a "hazard warning" service in a connected car does not immediately guarantee success. It still needs to be packaged properly, run smoothly, and be provided with a payment model that suits the consumer.

Examples of customized connected car services

In-vehicle ads based on navigation and user experience

Is it possible that a driver will like the ads that will be displayed in the car? If we adopt the message to their needs and preferences, in all likelihood, it is. For example, if we often go to McDonald’s, the navigation system can mark such places on our route. We have our favorite clothing brand, right? We will certainly react differently to a sale offer in a shopping mall we just happen to be driving past. The context of shopping and the consumer’s needs are decisive, and the software-defined vehicle is perfectly suited to ensuring that the advertising message is 100% tailored to the driver.

Contextual payments

Removing barriers to shopping and being able to buy everything everywhere is a popular trend in modern commerce. In a vehicle where the driver is focused on the road and has their hands full, such a service makes even more sense. With the development of voice assistants, drivers will be able to pay this way not only for fuel or tolls but also for purchases beyond typical vehicle-related payments. Voice shopping on the way back home from work, instead of looking for a parking space in front of the mall and returning in traffic jams in the evening? Why not?

Sharing information about driver behaviour

Sharing data about the way we drive may not appeal to everyone. But if in return for sharing this information, a company gives us a huge discount on our car insurance or a super attractive leasing offer, then things may take a totally different turn. In cooperation with an insurance company or a bank, such services become a specific bargaining chip the OEM can play with when dealing with the driver.

Manufacturer's connected car applications

Saving money on car maintenance and taking care of the overall condition of the car is a benefit that most drivers will appreciate. A practical and thoughtful manufacturer app that warns of potential breakdowns, component replacements, or servicing will allow the user to enjoy a well-functioning vehicle for longer and sell it at a higher profit. In this way, the OEM gets the driver used to have the vehicle repaired at an authorized service center, and the user, due to the loyalty shown to the brand, can expect future discounts and lucrative offers.

Practical use of telemetry

Sharing telemetry data may seem profitable only to OEMs - after all, as they draw better conclusions based on the collected information and save on R&D processes. However, it is important for companies to make vehicle users aware of the benefits of such services, as well. After all, driving style data can be used to suggest solutions that improve road safety, work on fuel efficiency or reduce overall vehicle operating costs. In each of these cases, the winner is the driver. Example? When a vehicle frequently skids and triggers the ESP/TC system, the system can suggest that the driver should get better tyres (by a specific brand, of course).

Unlocking extra features on the subscription model

Paying for heated seats, just to use them for three months a year, may not be worthwhile for everyone. Well-known to us from streaming portals, the subscription model definitely meets the users’ needs. The customers themselves choose which functionalities they want to pay for and over what period of time. The OEM only has to take care of the right vehicle software that will enable that. And, of course, be careful not to alienate those customers who see this as "yet another" way to squeeze additional payments out of them. That’s how manufacturers can provide both functionalities directly related to the vehicle itself - e.g. better lights or engine boost - as well as those associated with in-car entertainment providers such as Spotify or Apple CarPlay.

What can be done to make the user more eager to pay for data monetization services?

A well-thought-out user experience is essential

In today's digital world, UX and mobile-friendly approaches decide whether a service is viable. If the product is presented in an unclear and incomprehensible way, and it is difficult for the user to find the desired options - they will not use it. The size and color of buttons, the messages displayed, the stability of the application - all of the above is of paramount importance and determine the popularity of the product. Keeping in mind the latest trends, mapping the market, and adapting to consumer trends is necessary to offer the vehicle user service of the quality known to them from e-commerce or their own AppStore.

UX itself is not only a practical tool that helps better track consumer behavior and how they use the service, but also a constant theme to promote and boost brand interest. Does Apple really need to upgrade iOS every year and does Instagram have to offer users a new feed layout every quarter? The answer is obvious. It's simply profitable for the brand.

Start with anonymized data

When creating a strategy for in-vehicle data monetization efforts, it's a good idea to start by developing services that don't require the sharing of personal data. A lower "pain threshold" will make it quicker for the user to learn the benefits of the system and how convenient or useful the service can be. Thus, it will be easier to convince people to use products that require more openness to data sharing. And this may be the next step in the implementation of technological solutions.

Focus on heavy-vehicle users

People who spend most of their day in the car or drive long and demanding routes happily embrace any technical innovations designed to make driving easier and safer for them. It is this group that should be targeted at the beginning of developing your own data monetization model.

Minimizing risks and accurately selecting the group will not solve all challenges, but it will increase the chance of success and help gain a new, loyal group of consumers who will help transfer the technology to other users.

Last, but not least: a flexible payment model

Convenience should accompany the user at every stage of the use of a new service. Not only when it is most beneficial to the user, but also when it is easiest for the user to give it up: whilst paying for the next billing period.

It is worth taking care of the flexibility of the payment model (e.g. one-off payment, freemium model, annual or monthly settlement), adjusting it to the user's needs and not hindering payments.

The smoother and more tailored to the user's needs the whole process of interacting with the service is - from understanding the need to using it to making payments - the greater the chance that the stream of data flowing from a given vehicle will not dry up after a short period of use (read: being frustrated using an underdeveloped product for the first time).

Let's remember that data monetization can succeed provided that it really understands the user, is fair and transparent to them and focuses on user experience. If we didn't have time to get to know the customer's needs, why should they waste their time on services they don't understand and don't need?

How to monetize vehicle data thanks to in-car technologies - what’s inside a Software-Defined Vehicle - Part 2

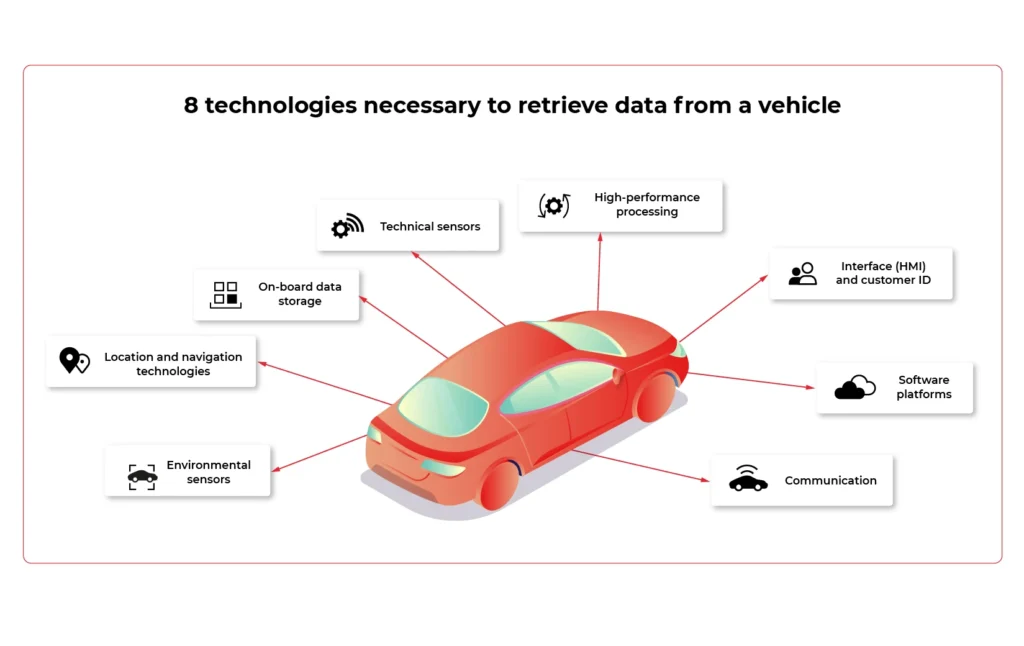

The collection of data and its subsequent monetization wouldn’t be possible without the ‘’attachment points" in the form of technologies already used in vehicles and controlled parts and systems. It's also common knowledge that car data monetization is based on three main sets of factors, covering quite different areas. These are automotive technologies, infrastructure technologies, and back-end processes. In this article, we are going to reverse-engineer in-car technologies.

There is no harvest without seeds. In relation to vehicles, these "seeds" are all the elements and systems that make data collection possible at all.

The proper design is the key when we talk about the effective use of information from the vehicle and from the users directly. Let's have a closer look at these crucial technologies.

8 technologies necessary to retrieve data from a vehicle

1. Technical sensors

For OEMs and suppliers , sensors are the foundation on which they can build knowledge about the vehicle's performance and possible breakdowns. Due to that, they are able to see how their products endure the operation.

With these resources, it is much easier to determine the cause of a particular fault. The biggest challenge? The type of setting and frequency of data collection and integration of results into R&D processes. These issues are yet to be discussed.

2. High-performance processing

Real-time processing and communication are pivotal in unlocking the data potential in the vehicle.

However, it is necessary to define, from the very outset, which specific processing elements are to take place in the vehicle and which in the cloud. Whether the hardware is upgradeable is also an important variable.

3. Interface (HMI) and customer ID

HMI is a bridge between a human and a machine. Any technology, tools, and devices allowing human beings to "communicate" with vehicles - request the operation, change the setting or read for example the current status of the engine.

User experience is the key. Making sure the vehicle operations are as intuitive as possible is the end goal of every interior and UI designer. Adding augmented reality, advanced HUD, gesture operations, or fancy ambient lights makes the driver feel at home, capable of quickly changing the vehicle settings, and always aware of the current situation and hazards.

4. Software platforms

They support various vehicle applications and high-speed data transmission protocols. In the context of monetization, two aspects are of paramount importance: the reliability of the over-the-air software updates and for which of what consumers will be able to pay.

5. Communication

The connection between the vehicle, sensors, internet, and onboard devices is essential. Network gateways include Wi-Fi, Bluetooth, RFID, as well as a high-speed 4G / 5G modem gateway. The latter is the greatest challenge.

The problem that needs to be dealt with is mainly the stability and cost of the aforementioned connection. As the vehicle moves, it can reach locations with low- or even no- mobile internet coverage. This results in interrupted connections, operation retries, or unavailability of services.

6. On-board data storage

It is a local hardware repository for data generated by the vehicle. It must be clear what data is stored on the cloud and who has access to it (e.g. insurers). It is equally important to reassure customers that their information is protected from unauthorized access from outside.

7. Location and navigation technologies

Monetization also depends on location data. The biggest players of software-defined vehicles must decide how to locate a vehicle (GPS) and decide which specific navigation information should be collected and which map's "technical archetype" to adopt.

8. Environmental sensors

It is not only what happens under the vehicle bonnet that matters, but also what influences it. Therefore, environmental factors provide valuable data. They detect parameters related to e.g. road conditions, weather, etc. They also focus on nearby vehicles and people as well as on the cockpit interior: passengers, transported goods, and the driver.

As for the latter, environmental sensors monitor its physiological condition. Based on fingerprint readers, cameras, and microphones, the technology determines, for example, the driver’s sobriety or the degree of fatigue. It is also possible to control vital signs such as heart rate and blood pressure.

To what extent is such data monetized? It all depends on how willing the customer is to share bio information about themselves and their passengers.

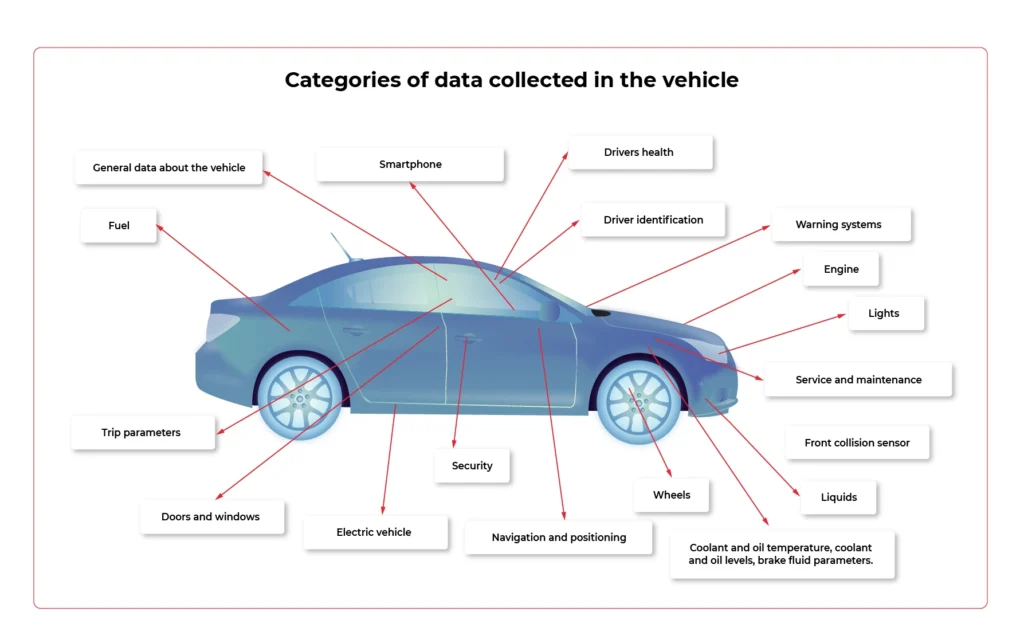

Categories of data collected in the vehicle

Which elements, systems, and subsystems are responsible for collecting valuable data that can be monetized in the automotive industry ? It's time to look at the specific spots in the vehicle that show the greatest potential for data aggregation.

- Front collision sensor

Information about the seriousness of the accident / collision and where it occurred.

- Doors and windows

The condition of the convertible roof, sunroof, doors, windows, bonnet and boot, spoilers and service lap.

- Driver identification

Identifying the person in charge and setting preferential settings for them.

- Drivers health

Pulse, data for diabetics, measuring stress levels.

- Trip parameters

Parameters such as mileage, acceleration / deceleration, remaining range, ECO or SPORT mode activation time, average distance, driving style rating, average fuel consumption, braking intensity and gear behaviour are taken into account.

- Electric vehicle

Battery status and voltage, charging profile and status, power consumption, recovered energy measurement.

- Engine

Ignition status, oil and engine temperature data when we are talking about gasoline/ diesel engine.

- Fuel

Tank capacity and remaining range.

- General data about the vehicle

Information from the display, outside temperature value, VIN number, environment temperature, air conditioning temperature, network connectivity, teleservices availability, vehicle orientation and position.

- Lights

The condition of the headlights and indicators.

- Liquids

Coolant and oil temperature, coolant and oil levels, brake fluid parameters.

- Navigation and positioning

GPS speed, navigation destination, vehicle location (latitude and longitude), time and distance remaining to reach the destination, vehicle alignment, vehicle movement status, most visited places to suggest destinations of travel.

- Security

Technical condition of the seat belts and their fastening, information about airbags.

- Service and maintenance

Date of the next brake fluid inspection and change, time threshold for the main test and exhaust fumes test, ‘check engine’ information.

- Smartphone

Pairing with smartphones, driver behavioural patterns.

- Warning systems

ESP (Electronic Stability Program), ADAS (Advanced Driver Assistance Systems). Data on automatic eCall, battery protection. Messages from sensors (parking, distance, speed).

- Wheels

Tire pressure status, brake pads.

Challenges related to technical possibilities

People responsible for the development and implementation of modern solutions face various challenges. How well they handle them determines the success of monetization.

When analyzing individual systems, you need to take into account such aspects as:

- the frequency of data collection,

- the possibility of updating,

- the improvement of sensors that allow collecting personal data

- maintaining the stability of connections,

- identifying entities that have access to collected data.

How to monetize vehicle data thanks to in-car technologies - the biggest challenges and control points of the process - Part 1

Brook. Not a stream yet, though. But in the foreseeable future, it is going to be a proper river. What are we talking about? Data obtained from vehicles. Experts estimate that data inflow is likely to rise from approximately 33 zettabytes (this is how much we obtained in 2018) to 175 zettabytes in 2052. For OEMs and companies from the broadly-defined automotive industry, this means one thing. Endless monetization possibilities. Providing that they face the challenges connected with data capture, filtering and storage, and become familiar with the in-vehicle technologies enabling that.

The potential is enormous. However, the Capgemini report shows that there is still a long way ahead before reaching its full potential. Today, as many as 44% of OEM customers do not yet avail of any online service in their cars, and still, connecting to the network is just the starting point because without the Internet there is no option of monetizing data. And even if the vehicle is already connected to the network, only every second driver declares frequent use of this type of service.

Anyway, the condition of the Internet is a challenge in itself. Today, in modern vehicles, there are around 100 points from which information can be downloaded (in the future it is estimated that there will be up to 10,000 of them!)

Before we get to know the technologies that enable it (about which we will write in the second part of the article), let's have a look at the challenges and checkpoints that must be considered when creating a data monetization strategy for a software-defined vehicle.

5 things to bear in mind if you want to monetize vehicle data

1. Developing the customer value proposition

This is where it all begins- from creating a sales offer and an environment in which drivers will believe you have something unique and valuable for them. Without trade, no technology will guarantee your success. Customers will simply not want to share data.

Think about the unique offer you want to present to them and develop a clear data management policy. As a result, it should be followed by the selection of appropriate technologies, and then their implementation in vehicles.

Obtaining data to offer the driver safety or a good sense of direction differs from getting information related to entertainment or directing the customer to a sale in a nearby shopping mall.

It would be perfect if the developed customer value proposition was consistent with your brand's DNA and features that have always been associated with it. This would make it easier to convince users, remain in line with your business assumptions, and stand out from the competition. Focus on technology application, not on technology just to be used.

2. Consider matching technology with the data for which users are most likely to "pay"

Speaking of users’ preferences, even today, at the stage when the technologies of obtaining data from vehicles are not fully-fledged yet, it can be seen that for some services customers are willing to give up some of their privacy, while they are largely opposed or reluctant towards others.

Capgemini's research shows that the group with the greatest potential includes services related to safety and facilitating driving:

- hazard warning;

- collision warning;

- theft detection system;

- e-call;

- interactive language assistance.

On the other hand, the greatest objection among users is aroused by services related to broadly -defined shopping:

- In-car delivery;

- in car e-commerce.

Keep this in mind when choosing technology to help you monetize your data.

3. Data collector strategy

The data in the vehicle is acquired by means of special sensors and then sent to collectors, which are supposed to gather this data and enable it to be transferred to the cloud. To effectively filter this data and derive maximum benefit from it, you need reliable technology to facilitate it. Due to the huge amount of data and the interaction between various sensors, the universal data collector is the best solution, as it collects all information obtained from sensors in the car.

In order to fully use its potential, during the implementation phase of this technology, it is crucial to ensure close work of the engineering team with people responsible for digital data management (see the next section). Close cooperation of both teams will help to obtain more interesting data and implement new services more efficiently.

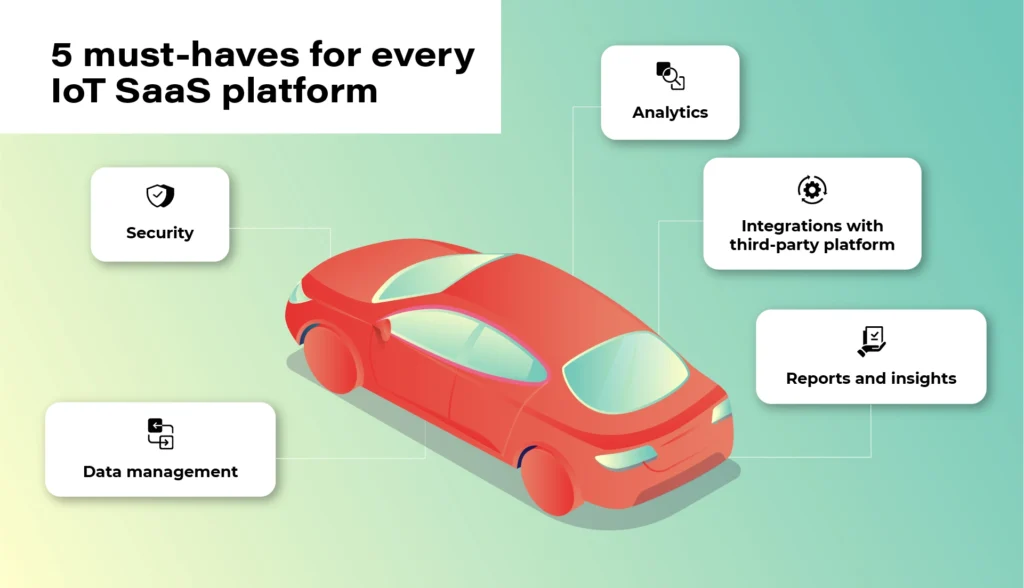

4. Provider of IoT data platform

Collecting data from vehicles is impossible without an IoT platform connected to cloud solutions dedicated to the automotive industry - this is where data is sent and analyzed to be later collected by the vehicle sensors.

Regardless of which platform you choose (the most popular solutions on the market today are: Microsoft Azure, Amazon AWS, and Otonomo, operating in the SaaS system), 5 features that such a platform should have are of paramount importance to enable the efficient flow of information.

You can read more about it in our article on this issue .

5. Data enrichment

While this article focuses on technologies directly related to obtaining data from the vehicle, it should not be overlooked that the software-defined vehicle operates in a wider ecosystem. Monetization of data from vehicles will not be possible without technologies related to infrastructure (e.g. smart-road infrastructure, V2X communication , or high-speed data towers), as well as coordination of back-end processes for which entities such as policymaker, cybersecurity specialist, technical regulator, road infrastructure operator or billing/tolling player are accountable.

To create more valuable and attractive services, a coherent policy is necessary, as it will enrich the data stream from third parties and the user themselves, and will improve cooperation between elements of the ecosystem.

Checkpoints inside the car

In-car technologies are not the only gateway for data that companies can obtain from drivers (another entry point may be, for instance, the driver's smartphone or road infrastructure). However, they are the ones over which OEMs and manufacturers have the greatest control, technically at least.

Before we directly describe the technologies in the vehicle allowing that data to be obtained, let's focus on the checkpoints that are crucial for the capture of information, its quality, and value for building services.

In the software-defined vehicle ecosystem, we can identify three such areas, a kind of bottleneck on which the flow of data depends. These are:

- Vehicle interior and infrastructure.

- Connection to cloud.

- Data cloud.

Let's have a look at the first area, which is practically entirely the responsibility of the automotive company and is directly related to the equipment in the vehicle.

We can list the following groups of such checkpoints which require closer attention when building a data monetization strategy.

1. Gateway to the customer

Key points due to the start of data gathering and the user's experience - their willingness to share data, and thus increasing the value of the gathered data for the manufacturer.

- HMI (i.e. a set of technologies enabling the driver to activate the vehicle and begin collecting data, e.g. touch screens, visual sensors, voice commands, etc. - certainly a topic for a separate article)

- Data gateway (port, mobile data connection, USB port, radio connection)

- Customer ID

2. Points that build loyalty and the need to buy

That is, the contact points with the offer that allow you to easily download new applications, pay bills and influence the user's willingness to renew the service. The more transparent, engaging, and easy-to-use, the more likely the user is to continue their subscription.

- App store / ecosystem

- Billing platform

- In-vehicle infotainment (IVI)

- Apps/ content

3. Key points for data security, data analysis and usability

- CPU/ control unit

- Car sensors / actuators

Software-defined vehicles do not run in a vacuum

When creating a data monetization strategy for a software-defined vehicle, one should always bear in mind the wide ecosystem in which such a vehicle operates. It is not enough to equip it with the technology itself and wait for the flow of data that will turn into specific value for the enterprise . In such a complex and extensive ecosystem, nothing happens by itself. There is no room for improvisation, omitting checkpoints, and presenting half-baked offers. Yes, the technology that downloads data from the vehicle is crucial, but it won't work unless we bear in mind the broader data management context that reaches beyond collecting and analyzing it.

Monitoring your microservices on AWS with Terraform and Grafana - monitoring

Welcome back to the series. We hope you’ve enjoyed the previous part and you’re back to learn the key points. Today we’re going to show you how to monitor the application.

Monitoring

We would like to have logs and metrics in a single place. Let’s imagine you see something strange on your diagrams, mark it with your mouse, and immediately have proper log entries from this particular timeframe and this particular machine displayed below. Now, let’s make it real.

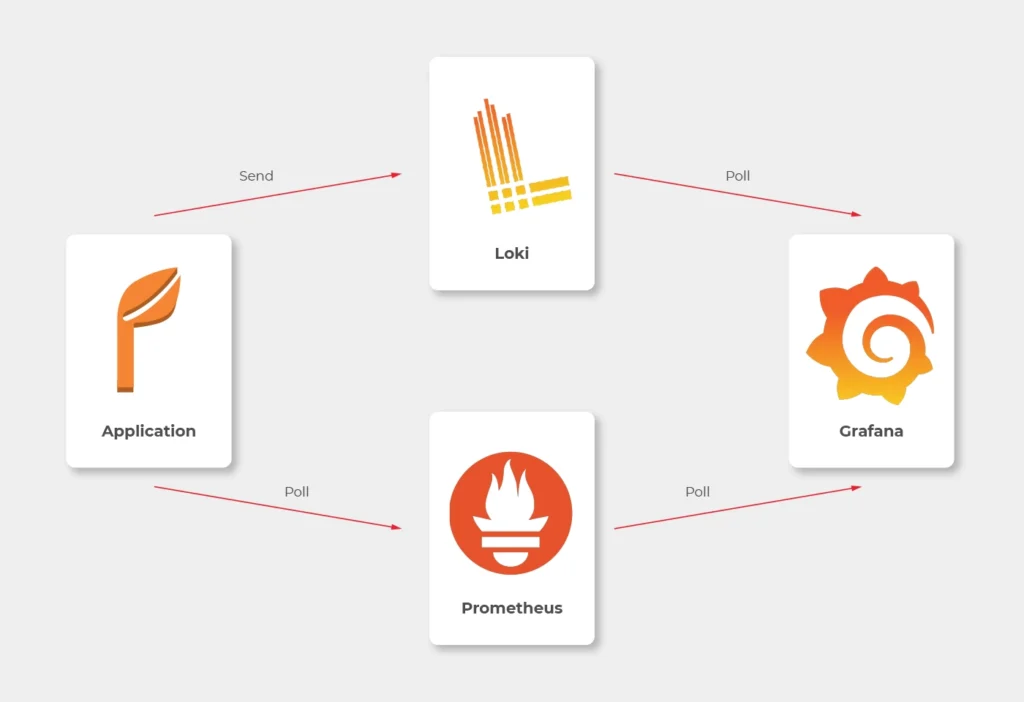

Some basics first. There is a huge difference between the way Prometheus and Loki get the data. Both of them are being called by Grafana to poll data, but Prometheus also actively calls the application to poll metrics. Loki, instead, just listens, so it needs some extra mechanism to receive logs from applications.

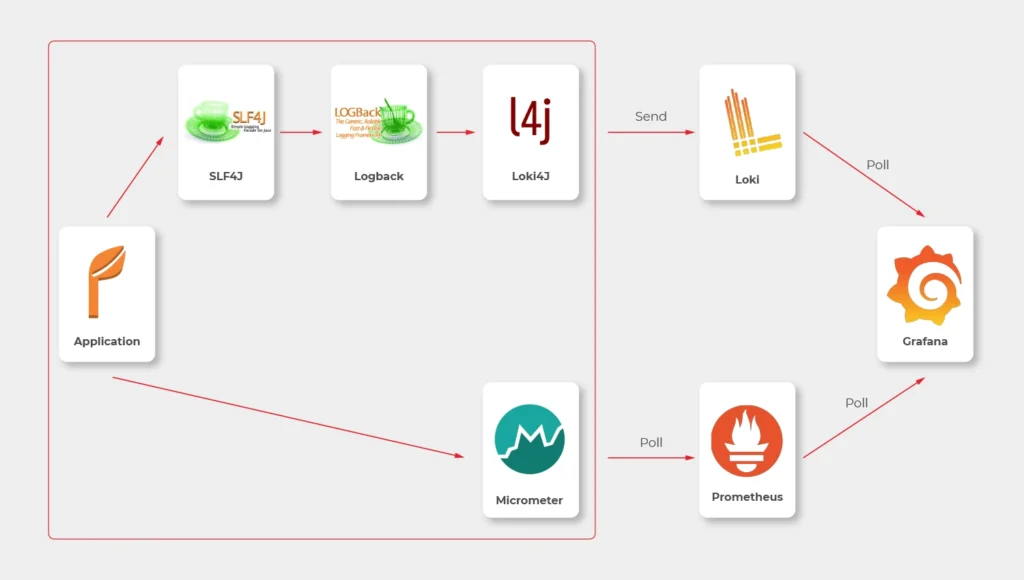

In most sources over the Internet, you’ll find that the best way to send logs to Loki is to use Promtail. This is a small tool, developed by Loki’s authors, which reads log files and sends them entry by entry to remote Loki’s endpoint. But it’s not perfect. Sending multiline logs is still in a bad shape (state for February 2021), some config is really designed to work with Kubernetes only and at the end of the day, this is one more additional application you would need to run inside your Docker image, which can get a little bit dirty. Instead, we propose to use a loki4j logback appender (https://github.com/loki4j). This is a zero-dependency Java library designed to send logs directly from your application.

There is one more Java library needed - Micrometer . We’re going to use it to collect metrics of the application.

So, the proper diagram should look like this.

Which means, we need to build or configure the following pieces:

- slf4j (default configuration is enough)

- Logback

- Loki4j

- Loki

- Micrometer

- Prometheus

- Grafana

Micrometer

Let’s start with metrics first.

There are just three things to do on the application side.

The first one is to add a dependency to the Micrometer with Prometheus integration (registry).

<dependency>

<groupId>io.micrometer</groupId>

<artifactId>micrometer-registry-prometheus</artifactId>

</dependency>

Now, we have a new endpoint exposable from Spring Boot Actuator, so we need to enable it.

management:

endpoints:

web:

exposure:

include: prometheus,health

This is a piece of configuration to add. Make sure you include prometheus in both config server and config clients’ configuration. If you have some Web Security configured, make sure to enable full access to /actuator/health and /actuator/prometheus endpoint.

Now we would like to distinguish applications in our metrics, so we have to add a custom tag in all applications. We propose to add this piece of code as a Java library and import it with Maven.

@Configuration

public class MetricsConfig {

@Bean

MeterRegistryCustomizer<MeterRegistry> configurer(@Value("${spring.application.name}") String applicationName) {

return (registry) -> registry.config().commonTags("application", applicationName);

}

}

Make sure you have spring.application.name configured in all bootstrap.yml files in config clients and application.yml in the config server.

Prometheus

The next step is to use a brand new /actuator/prometheus endpoint to read metrics in Prometheus.

The ECS configuration is similar to backend services. The image you need to push to your ECR should look like that.

FROM prom/prometheus

COPY prometheus.yml .

ENTRYPOINT prometheus --config.file=prometheus.yml

EXPOSE 9090

As Prometheus doesn’t support HTTPS endpoints, it’s just a temporary solution, and we’ll change it later.

The prometheus.yml file contains such a configuration.

scrape_configs:

- job_name: 'cloud-config-server'

metrics_path: '/actuator/prometheus'

scrape_interval: 5s

dns_sd_configs:

- names:

- '$cloud_config_server_url'

type: 'A'

port: 8888

- job_name: 'foo'

metrics_path: '/actuator/prometheus'

scrape_interval: 5s

dns_sd_configs:

- names:

- '$foo_url

type: 'A'

port: 8080

- job_name: bar

metrics_path: '/actuator/prometheus'

scrape_interval: 5s

dns_sd_configs:

- names:

- '$bar_url

type: 'A'

port: 8080

- job_name: 'backend_1'

metrics_path: '/actuator/prometheus'

scrape_interval: 5s

dns_sd_configs:

- names:

- '$backend_1_url

type: 'A'

port: 8080

- job_name: 'backend_2'

metrics_path: '/actuator/prometheus'

scrape_interval: 5s

dns_sd_configs:

- names:

- '$backend_2_url

type: 'A'

port: 8080

Let’s analyse the first job as an example.

We would like to call '$cloud_config_server_url' url with '/actuator/prometheus' relative path on a port 8080 . As we’ve used dns_sd_configs and type: 'A', the Prometheus can handle multivalue DNS answers from the Service Discovery, to analyze all tasks in each service. Please make sure you replace all ' $x' variables in the file with proper URLs from the Service Discovery.

The Prometheus isn’t exposed to the public load balancer, so you cannot verify your success so far. You can expose it temporarily or wait for Grafana.

Logback and Loki4j

If you use the Spring Boot, you probably already have spring-boot-starter-logging

library included. Therefore, you use logback as the default slf4j integration. Our job now is to configure it to send logs to Loki. Let’s start with the dependency:

<dependency>

<groupId>com.github.loki4j</groupId>

<artifactId>loki-logback-appender</artifactId>

<version>1.1.0</version>

</dependency>

Now let’s configure it. The first file is called logback-spring.xml and located in the config server next to the application.yml (1) file.

<?xml version="1.0" encoding="UTF-8"?>

<configuration>

<property name="LOG_PATTERN" value="%d{yyyy-MM-dd HH:mm:ss.SSS} %-5level [%thread] %logger - %msg%n"/>

<appender name="Console" class="ch.qos.logback.core.ConsoleAppender">

<encoder>

<pattern>${LOG_PATTERN}</pattern>

</encoder>

</appender>

<springProfile name="aws">

<appender name="Loki" class="com.github.loki4j.logback.Loki4jAppender">

<http>

<url>${LOKI_URL}/loki/api/v1/push</url>

</http>

<format class="com.github.loki4j.logback.ProtobufEncoder">

<label>

<pattern>application=spring-cloud-config-server,instance=${INSTANCE},level=%level</pattern>

</label>

<message>

<pattern>${LOG_PATTERN}</pattern>

</message>

<sortByTime>true</sortByTime>

</format>

</appender>

</springProfile>

<root level="INFO">

<appender-ref ref="Console"/>

<springProfile name="aws">

<appender-ref ref="Loki"/>

</springProfile>

</root>

</configuration>

What do we have here? There are two appenders with the common pattern, and one root logger. So we start with pattern configuration <property name="LOG_PATTERN" value="%d{yyyy-MM-dd HH:mm:ss.SSS} %-5level [%thread] %logger - %msg%n"/> . Of course you can configure it, as you want.

Then, the standard console appender. As you can see, it uses the LOG_PATTERN .

Then you can see the com.github.loki4j.logback.Loki4jAppender appender. This way the library is being used. We’ve used < springProfile name="aws" > profile filter to enable it only in the AWS infrastructure and disable locally. We use the same when using the appender with appender-ref ref="Loki" . Please note the label pattern, used here to label each log with custom tags (application, instance, level). Another important part here is Loki’s URL. We need to provide it as an environment variable for the ECS task. To do that, you need to add one more line to your aws_ecs_task_definition configuration in terraform.

"environment" : [

...

{ "name" : "LOKI_URL", "value" : "loki.internal" }

],

As you can see, we defined “loki.internal” URL and we’re going to create it in a minute.

There are few issues with logback configuration for the config clients.

First of all, you need to provide the same LOKI_URL environment variable to each client, because you need Loki before reading config from the config server.

Now, let’s put another logback-spring.xml file in the config server next to the applic ation.yml (2) file.

<?xml version="1.0" encoding="UTF-8"?>

<configuration>

<property name="LOG_PATTERN" value="%d{yyyy-MM-dd HH:mm:ss.SSS} %-5level [%thread] %logger - %msg%n"/>

<springProperty scope="context" name="APPLICATION_NAME" source="spring.application.name"/>

<appender name="Console" class="ch.qos.logback.core.ConsoleAppender">

<encoder>

<pattern>\${LOG_PATTERN}</pattern>

</encoder>

</appender>

<springProfile name="aws">

<appender name="Loki" class="com.github.loki4j.logback.Loki4jAppender">

<http>

<requestTimeoutMs>15000</requestTimeoutMs>

<url>\${LOKI_URL}/loki/api/v1/push</url>

</http>

<format class="com.github.loki4j.logback.ProtobufEncoder">

<label>

<pattern>application=\${APPLICATION_NAME},instance=\${INSTANCE},level=%level</pattern>

</label>

<message>

<pattern>\${LOG_PATTERN}</pattern>

</message>

<sortByTime>true</sortByTime>

</format>

</appender>

</springProfile>

<root level="INFO">

<appender-ref ref="Console"/>

<springProfile name="aws"><appender-ref ref="Loki"/></springProfile>

</root>

</configuration>

The first change to notice are slashes before environment variables (eg. \${LOG_PATTERN } ). We need it to tell the config server not to resolve variables on it’s side (because it’s impossible). The next difference is a new variable <springProperty scope="context" name="APPLICATION_NAME" source="spring.application.name"/> . with this line and spring.application.name in all your applications each log will be tagged with a different name. There is also a trick with the ${INSTANCE} variable. As Prometheus uses IP address + port as an instance identifier and we want to use the same here, we need to provide this data to each instance separately.

So your Dockerfile files for your applications should have something like that.

FROM openjdk:15.0.1-slim

COPY /target/foo-0.0.1-SNAPSHOT.jar .

ENTRYPOINT INSTANCE=$(hostname -i):8080 java -jar foo-0.0.1-SNAPSHOT.jar

EXPOSE 8080

Also, to make it working, you are supposed to tell your clients to use this configuration. Just add this to bootstrap.yml files in all you config clients.

logging:

config: ${SPRING_CLOUD_CONFIG_SERVER:http://localhost:8888}/application/default/main/logback-spring.xml

spring:

application:

name: foo

That’s it, let’s move to the next part.

Loki

Creating Loki is very similar to Prometheus. Your dockerfile is as follows.

FROM grafana/loki

COPY loki.yml .

ENTRYPOINT loki --config.file=loki.yml

EXPOSE 3100

The good news is, you don’t need to set any URLs here - Loki doesn’t send any data. It just listens.

As a configuration, you can use a file from https://grafana.com/docs/loki/latest/configuration/examples/ . We’re going to adjust it later, but it’s enough for now.

Grafana

Now, we’re ready to put things together.

In the ECS configuration, you can remove service discovery stuff and add a load balancer, because Grafana will be visible over the internet. Please remember, it’s exposed at port 3000 by default.

Your Grafana Dockerfile should be like that.

FROM grafana/grafana

COPY loki_datasource.yml /etc/grafana/provisioning/datasources/

COPY prometheus_datasource.yml /etc/grafana/provisioning/datasources/

COPY dashboad.yml /etc/grafana/provisioning/dashboards/

COPY *.json /etc/grafana/provisioning/dashboards/

ENTRYPOINT [ "/run.sh" ]

EXPOSE 3000

Let’s check configuration files now.

loki_datasource.yml:

apiVersion: 1

datasources:

- name: Loki

type: loki

access: proxy

url: http://$loki_url:3100

jsonData:

maxLines: 1000

I believe the file content is quite obvious (we'll return here later).

prometheus_datasource.yml:

apiVersion: 1

datasources:

- name: prometheus

type: prometheus

access: proxy

orgId: 1

url: https://$prometheus_url:9090

isDefault: true

version: 1

editable: false

dashboard.yml:

apiVersion: 1

providers:

- name: 'Default'

folder: 'Services'

options:

path: /etc/grafana/provisioning/dashboards

With this file, you tell Grafana to install all json files from /etc/grafana/provisioning/dashboards directory as dashboards.

The last leg is to create some dashboards. You can, for example, download a dashboard from https://grafana.com/grafana/dashboards/10280 and replace ${DS_PROMETHEUS} datasource with your name “prometheus”.

Our aim was to create a dashboard with metrics and logs at the same screen. You can play with dashboards as you want, but take this as an example.

{

"annotations": {

"list": [

{

"builtIn": 1,

"datasource": "-- Grafana --",

"enable": true,

"hide": true,

"iconColor": "rgba(0, 211, 255, 1)",

"name": "Annotations & Alerts",

"type": "dashboard"

}

]

},

"editable": true,

"gnetId": null,

"graphTooltip": 0,

"id": 2,

"iteration": 1613558886505,

"links": [],

"panels": [

{

"aliasColors": {},

"bars": false,

"dashLength": 10,

"dashes": false,

"datasource": null,

"fieldConfig": {

"defaults": {

"custom": {}

},

"overrides": []

},

"fill": 1,

"fillGradient": 0,

"gridPos": {

"h": 8,

"w": 24,

"x": 0,

"y": 0

},

"hiddenSeries": false,

"id": 4,

"legend": {

"avg": false,

"current": false,

"max": false,

"min": false,

"show": true,

"total": false,

"values": false

},

"lines": true,

"linewidth": 1,

"nullPointMode": "null",

"options": {

"alertThreshold": true

},

"percentage": false,

"pluginVersion": "7.4.1",

"pointradius": 2,

"points": false,

"renderer": "flot",

"seriesOverrides": [],

"spaceLength": 10,

"stack": false,

"steppedLine": false,

"targets": [

{

"expr": "system_load_average_1m{instance=~\"$instance\", application=\"$application\"}",

"interval": "",

"legendFormat": "",

"refId": "A"

}

],

"thresholds": [],

"timeRegions": [],

"title": "Panel Title",

"tooltip": {

"shared": true,

"sort": 0,

"value_type": "individual"

},

"type": "graph",

"xaxis": {

"buckets": null,

"mode": "time",

"name": null,

"show": true,

"values": []

},

"yaxes": [

{

"format": "short",

"label": null,

"logBase": 1,

"max": null,

"min": null,

"show": true

},

{

"format": "short",

"label": null,

"logBase": 1,

"max": null,

"min": null,

"show": true

}

],

"yaxis": {

"align": false,

"alignLevel": null

}

},

{

"datasource": "Loki",

"fieldConfig": {

"defaults": {

"custom": {}

},

"overrides": []

},

"gridPos": {

"h": 33,

"w": 24,

"x": 0,

"y": 8

},

"id": 2,

"options": {

"showLabels": false,

"showTime": false,

"sortOrder": "Ascending",

"wrapLogMessage": true

},

"pluginVersion": "7.3.7",

"targets": [

{

"expr": "{application=\"$application\", instance=~\"$instance\", level=~\"$level\"}",

"hide": false,

"legendFormat": "",

"refId": "A"

}

],

"timeFrom": null,

"timeShift": null,

"title": "Logs",

"type": "logs"

}

],

"schemaVersion": 27,

"style": "dark",

"tags": [],

"templating": {

"list": [

{

"allValue": null,

"current": {

"selected": false,

"text": "foo",

"value": "foo"

},

"datasource": "prometheus",

"definition": "label_values(application)",

"description": null,

"error": null,

"hide": 0,

"includeAll": false,

"label": "Application",

"multi": false,

"name": "application",

"options": [],

"query": {

"query": "label_values(application)",

"refId": "prometheus-application-Variable-Query"

},

"refresh": 2,

"regex": "",

"skipUrlSync": false,

"sort": 0,

"tagValuesQuery": "",

"tags": [],

"tagsQuery": "",

"type": "query",

"useTags": false

},

{

"allValue": null,

"current": {

"selected": false,

"text": "All",

"value": "$__all"

},

"datasource": "prometheus",

"definition": "label_values(jvm_classes_loaded_classes{application=\"$application\"}, instance)",

"description": null,

"error": null,

"hide": 0,

"includeAll": true,

"label": "Instance",

"multi": false,

"name": "instance",

"options": [],

"query": {

"query": "label_values(jvm_classes_loaded_classes{application=\"$application\"}, instance)",

"refId": "prometheus-instance-Variable-Query"

},

"refresh": 2,

"regex": "",

"skipUrlSync": false,

"sort": 0,

"tagValuesQuery": "",

"tags": [],

"tagsQuery": "",

"type": "query",

"useTags": false

},

{

"allValue": null,

"current": {

"selected": false,

"text": [

"All"

],

"value": [

"$__all"

]

},

"datasource": "Loki",

"definition": "label_values(level)",

"description": null,

"error": null,

"hide": 0,

"includeAll": true,

"label": "Level",

"multi": true,

"name": "level",

"options": [

{

"selected": true,

"text": "All",

"value": "$__all"

},

{

"selected": false,

"text": "ERROR",

"value": "ERROR"

},

{

"selected": false,

"text": "INFO",

"value": "INFO"

},

{

"selected": false,

"text": "WARN",

"value": "WARN"

}

],

"query": "label_values(level)",

"refresh": 0,

"regex": "",

"skipUrlSync": false,

"sort": 0,

"tagValuesQuery": "",

"tags": [],

"tagsQuery": "",

"type": "query",

"useTags": false

}

]

},

"time": {

"from": "now-24h",

"to": "now"

},

"timepicker": {},

"timezone": "",

"title": "Logs",

"uid": "66Yn-8YMz",

"version": 1

}

We don’t recommend playing with such files manually when you can use a very convenient UI and export a json file later on. Anyway, the listing above is a good place to start. Please note the following elements:

In variable’s definitions, we use Prometheus only, because Loki doesn’t expose any metric so you cannot filter one variable (instance) when another one (application) is selected.

Because we would like to sometimes see all instances or log levels together, we need to query data like here: {application=\"$application\", instance=~\"$instance\", level=~\"$level \"}" . The important element is a tilde in instance=~\"$instance\" and level=~\"$level\" , which allows us to use multiple values.

Conclusion

Congratulation! You have your application monitored. We hope you like it! But please remember - it’s not production-ready yet! In the last part, we’re going to cover a security issue - add encryption at transit to all components.

Kubernetes supports Windows workloads - the time to get rid of skeletons in your closet has come

Enterprises know that the future of their software is in the cloud. Despite keeping that in mind, many tech leaders delay the process of transforming their core legacy systems. How will the situation change with Kubernetes supporting Windows workloads? Can we assume that companies will leverage the Kubernetes upgrade to accelerate their journey towards the cloud?

How can this article help you?

- You can see what Kubernetes supporting Windows workloads provides for enterprises.

- We remind you why going to the cloud is crucial for your business excellence.

- You can get to know the main reason stopping enterprises from transforming their legacy systems.

- We describe the main risks that come with delaying the transition towards the cloud.

- You learn how to leverage Kubernetes supporting Windows workloads.

Technical debt is an unpleasant legacy you often come into money while taking charges of critical systems or enterprise software older than you. Laying under the cache layer and various interfaces, legacy systems encourage you to forget them. And you are good with it - you have enough tasks to perform and things to manage on a daily basis. Sprint after sprint, your team deals with developing applications and particular features to meet increasing customer demand and sophisticated needs. Initiating a tremendous venture, which may transform into opening Pandora's box, it's not exactly what you want to add to your checklist.

The bad news is that if you're willing to be successful at your job, the clock is ticking. The problem with legacy systems is that you don't know when they break down, causing disaster. You will justify yourself, but the impact on your work will be nightmarish. What you know for sure, legacy systems under applications built by your talented teams hinder further development and make your job harder than it already is.

Whatever you are going to go for it all or don't want to throw yourself in at the deep end - Kubernetes supporting Windows workloads is the news you needed. See how it can accelerate your transition towards the cloud.

What's the deal with Kubernetes supporting Windows workloads

Kubernetes was designed to run Linux containers. Such an approach complicated the transition towards the cloud for enterprises with Windows Server legacy systems. And while over 70% of the global server market is Windows-based (according to Statista), we can see why so many legacy apps are in the closets. If you work at a large enterprise, the chances that you have a few of them hidden carefully are very high.

How supporting Windows workloads by Kubernetes is changing the game? In the - not so much - olden days, Windows-based applications were immovable - they needed to be run on Windows, required Windows server, and access to numerous related databases and libraries. Such a demanding environment encouraged enterprises to wait for better days. And now they have come. Kubernetes, with production support for scheduling Windows containers on Windows nodes in the platform cluster, allows for running these Windows applications, enabling enterprises to modernize and move their apps to the cloud.

It’s believed that with this release, Kubernetes provides enterprises with the opportunity to accelerate their DevOps and cloud transformation . In case you missed 1 mln publications about cloud advantages, we will write up the main points.

Why do enterprises move their legacy applications to the cloud

As promised above, let’s keep it short:

- Scalability - the cloud allows you to easily manage your IT resources, data storage capacity, computing power, and networking (in both ways) without downtimes or other disruptions. Such flexibility supports business growth, product/service development, and better cost management.

- Security - the right set of strategies and policies allow enterprises to build and manage secure cloud environments. Decentralization and support for your cloud stack provide solutions to common challenges in maintaining on-premise infrastructure.

- Maintenance - using cloud services delivered by trusted providers, you don't have to maintain many things on your own, just leveraging available services.

- Accessibility - the pandemic showed us how crucial is remote access to our IT resources, and the cloud provides your remote or distributed teams with easy access regardless of your team members' localization - that is priceless.

- Reliability - cloud providers ensure easier and cheaper data backups, disaster recovery, and business continuity as they use the economy at scale.

- Performance - as the cloud service market is blooming and service providers are competing about increasing revenues, the quality and performance of cloud infrastructure are top-notch.

- Cost-effectiveness - with cloud computing, your enterprise can cut off numerous spendings from your books - including infrastructure, electricity, and IT experts responsible for managing resources.

- Agility - forget about capacity planning while your computing provisioning can be done within a few clicks leveraging self-service.

Sounds convincing? If everything is obvious, why are there still so many legacy apps?

Why do enterprises delay with moving apps to the cloud

Legacy systems are long-time friends with procrastination. If you are long enough in this business, you have definitely heard a few of these excuses:

- We cannot do it now. We have too many things on the list. A better day will come.

- It’s risky. It’s critically risky. Why do you even ask? Do you want to see the world burning?

- Ok, let’s do it! But wait….who knows how to do it?

- We can cover it with our UI or cache layer, and nobody will ever notice.

- It’s our core system. You touch it, everything will go bad.

- Why change it if it works well?

- It’s a too huge project for me to decide and take responsibility for the never-ending process.

These are some examples from the top of the iceberg. Diving into the process of moving legacy apps to the cloud , you can stumble upon numerous points convincing you to stay out of them. But can it last forever? What if the “zero hour” strikes?

Playing a risky game: what can happen if you don’t migrate to the cloud

Many of our business challenges wouldn’t have existed if we, at some point, tackled the underestimated issues. The excuses highlighted above can convince you to leave things as they are. But what if your real problems are just ahead of you? Let’s name some threats that may occur at enterprises that delay transition towards the cloud.

- Maintaining legacy systems becomes more expensive with time as your company has to pay for computing power supporting these solutions.

- Your enterprise may face a huge challenge to find experts understanding your legacy systems. The longer you postpone the process, the harder it will be to look for people working with frameworks and tools that are outdated.

- By allowing for increasing your technical debt, your enterprise acts against your willingness for innovation. Your legacy systems suppress the development of new products and services, undermining your competitive advantage.

- You can face a challenge to provide services to your customers because of downtimes and distractions caused by inefficient systems.

- Technology develops fast. Legacy systems stop you from participating in the movement and may generate new issues in the future, especially in the time you will need to be flexible.

- Most established enterprises work on highly regulated markets and have to meet challenging conditions. One of our business partners had to rebuild one of its core systems because of new regulations regarding data management. Such a situation can lead to enormous costs.

- There appears a serious security threat as legacy systems are prone to attacks, and without upgrades, your system may become insecure.

The list above can be expanded to many additional issues. But instead of describing challenges, let’s discuss how they can be addressed using Kubernetes .

How to leverage Kubernetes supporting Windows workloads

There is a ton of code written on Windows. With the Kubernetes update, you don’t have to think about rebuilding your applications from scratch, so myriads of working hours spent by your team are secured. Most of the code can be moved to the Kubernetes container and there developed. It’s safer and cheaper.

Kubernetes supporting Windows workloads gives you time to navigate your journey to the cloud properly. First of all, it ends the discussion for all those excuses mentioned above. The moment is now. Secondly, you can now utilize an evolutionary approach by developing and upgrading your systems instead of building them from ground zero. Furthermore, with your key legacy systems moved to the cloud, you can accelerate the overall transformation at your enterprise towards an agile, DevOps-oriented organization open to innovation and developing highly competitive software.

What should be your next move?

By supporting Windows workloads, Kubernetes makes the life of many tech teams easier. But it would be too easy if everything worked by itself. Configuration of the Kubernetes cluster to utilize Windows workloads is demanding and time-consuming. Instead of doing it on your own, you can leverage the ready-to-use solution provided by Grape Up. Cloudboostr , our Kubernetes stack, enables you to move your Windows-based apps to the cloud. Consult our expert on how to do it properly!

The next step for digital twin – virtual world

Digital Twin is a widely spread concept of creating a virtual representation of object state. The object may be small, like a raindrop, or huge as a factory. The goal is to simplify the operations on the object by creating a set of plain interfaces and limiting the amount of stored information. With a simple interface, the object can be easily manipulated and observed, while the state of its physical reflection is adjusted accordingly.

In the automotive and aerospace industries , this is a common approach to use virtual objects representation to design, develop, test, manufacture, and operate both parts of a vehicle, like an engine, drivetrain, chassis/fuselage, or a full vehicle – a whole car, motorcycle, truck or aircraft. Virtual representations are easier to experiment with, especially on a bigger scale, and to operate - especially in situations when connectivity between a vehicle and the cloud is not stable ability to query the state anyway is vital to provide a smooth user experience.

It’s not always critical to replicate the object with all details. For some use cases, like airflow modeling for calculating drag force, mainly exterior parts are important. For computer vision AI simulation, on the other hand, user checking if the doors and windows are locked only requires a boolean true/false state. And to simulate the combustion process in the engine, even the vehicle type is not important.