Thinking out loud

Where we share the insights, questions, and observations that shape our approach.

Exploring the architecture of automotive electronics: Domain vs. zone

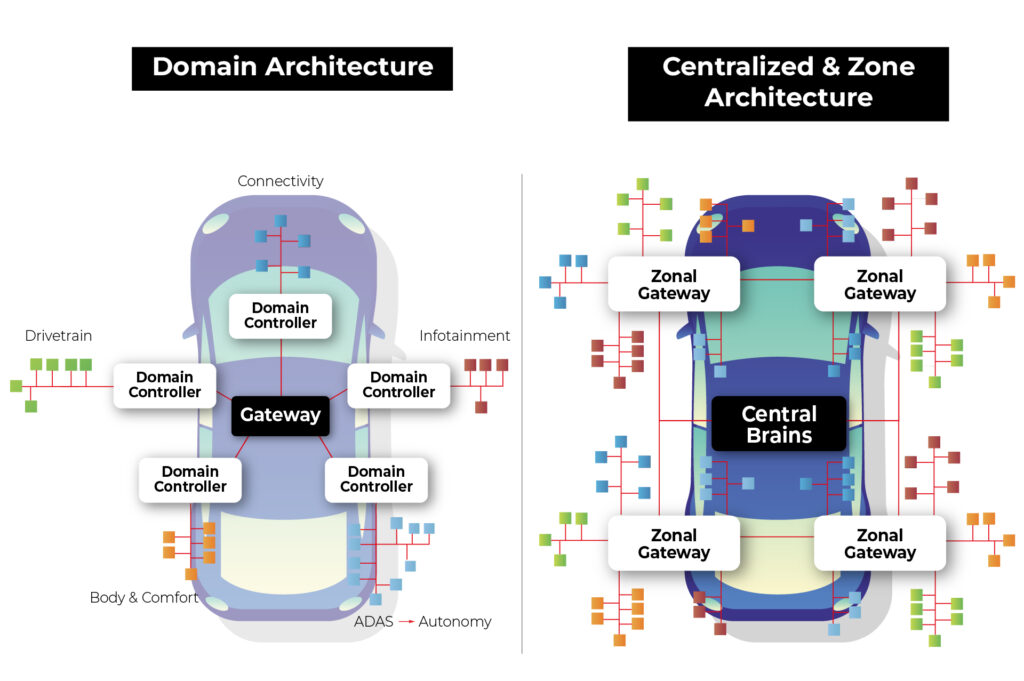

The automotive industry is undergoing a transformative shift towards electrification and automation, with vehicles becoming increasingly reliant on sophisticated electrical and electronic systems. At the heart of this evolution lies the architecture that governs how these systems are organized and integrated within the vehicle. Two prominent paradigms have emerged in this domain: domain architecture and zone architecture.

Source: https://www.eetasia.com/the-role-of-centralized-storage-in-the-emerging-zonal-automotive-architecture/

Domain Architecture: In this approach, various electrical and electronic functions are organized around "domains" or functional modules. Each domain is responsible for a specific functional area of the vehicle, such as the engine, braking system, steering system, etc. Each domain may have its own controllers and communication networks.

Example: In a domain architecture setup, the engine domain would handle all functions related to the vehicle's engine, including ignition, fuel injection, and emissions control. This domain would have its dedicated controller managing these operations.

Zone Architecture: In this approach, the electrical and electronic systems are organized around different "zones" within the vehicle. Zones typically correspond to specific physical areas of the vehicle, such as the front dashboard, passenger cabin, front-end, rear-end, etc. Each zone may have independent electrical and electronic systems tailored to specific needs and functions.

Example: In a zone architecture setup, the front-end zone might encompass functions like lighting, HVAC (Heating, Ventilation, and Air Conditioning), and front-facing sensors for driver assistance systems. These functions would be integrated into a system optimized for the front-end zone's requirements.

Advantages of zonal architecture over domain architecture

In the realm of automotive electronics, zone architecture offers several advantages over domain architecture, revolutionizing the way vehicles are designed, built, and operated. Let's explore these advantages in detail:

1. Software defined vehicle (SDV)

The concept of a Software Defined Vehicle (SDV) represents a paradigm shift in automotive engineering, transforming vehicles into dynamic platforms driven by software innovation. SDV involves decoupling the application layer from the hardware layer, creating a modular and flexible system that offers several significant advantages:

Abstraction of Application from Hardware: In an SDV architecture, applications are abstracted from the underlying hardware, creating a layer of abstraction that simplifies development and testing processes. This separation allows developers to focus on building software functionalities without being constrained by hardware dependencies.

Sensor Agnosticism: One of the key benefits of SDV is the ability to utilize sensors across multiple applications without being tied to specific domains. In traditional domain architectures, sensors are often dedicated to specific functions, limiting their flexibility and efficiency. In an SDV setup, sensors are treated as shared resources that can be accessed and utilized by various applications independently. This sensor agnosticism enhances resource utilization and reduces redundancy, leading to optimized system performance and cost-effectiveness.

Independent Software Updates: SDV enables independent software updates for different vehicle functions and applications. Instead of relying on centralized control units or domain-specific controllers, software functionalities can be updated and upgraded autonomously, enhancing the agility and adaptability of the vehicle.

Over-the-air (OTA) updates are also generally simpler in a zonal architecture: the whole idea is based on abstracting software from hardware, and loosely coupled software is much easier to update remotely.

With independent software updates, manufacturers can address software bugs, introduce new features and deploy security patches more efficiently. This capability ensures that vehicles remain up-to-date with the latest advancements and safety standards, enhancing user satisfaction and brand reputation.

2. Security

Zone architecture in automotive electronics offers concrete advantages over domain architecture. Let's examine how zone architecture addresses security concerns more effectively compared to domain architecture:

Network Access Vulnerabilities in Domain Architecture:

In domain architecture, connecting to the vehicle network grants access to the entire communication ecosystem, including sensors, actuators, and the central computer.

Particularly concerning is the Controller Area Network (CAN), a widely used protocol lacking built-in authentication and authorization mechanisms. Once connected to a CAN network, an attacker can send arbitrary messages as if originating from legitimate devices.

Granular Access Control in Zone Architecture:

Zone architecture introduces granular access control mechanisms, starting at the nearest gateway to the zone. Each message passing through the gateway is scrutinized, allowing only authorized communications to proceed while rejecting unauthorized ones.

With granular access control in place, an attacker who gets into the network gains access only to the communication between the sensors and the gateway of that zone. Moreover, the architecture enables the segregation of end networks based on threat levels.
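To make the gateway filtering idea more concrete, here is a minimal sketch in Python. The frame layout, the CAN IDs, and the `ZoneGateway` class are hypothetical and chosen only for illustration; a production gateway would likely also authenticate senders and rate-limit traffic.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CanFrame:
    """Simplified CAN frame: arbitration ID plus up to 8 payload bytes."""
    can_id: int
    data: bytes


class ZoneGateway:
    """Forwards only frames whose ID is on the zone's allow-list."""

    def __init__(self, allowed_ids):
        self.allowed_ids = frozenset(allowed_ids)
        self.rejected = 0  # number of dropped frames, useful for monitoring

    def forward(self, frame):
        """Return the frame if it may pass to the backbone, else None."""
        if frame.can_id in self.allowed_ids and len(frame.data) <= 8:
            return frame
        self.rejected += 1
        return None


# Hypothetical IDs: 0x120 = cabin temperature, 0x121 = fan speed
gateway = ZoneGateway(allowed_ids={0x120, 0x121})
ok = gateway.forward(CanFrame(0x120, b"\x16"))
spoofed = gateway.forward(CanFrame(0x7DF, b"\x02\x01\x00"))
print(ok is not None, spoofed is None, gateway.rejected)
```

A frame injected on the zone's CAN bus with an ID outside the allow-list never reaches the rest of the vehicle network, which is the property the gateway is meant to provide.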

Network Segmentation for Enhanced Security:

In a zone architecture setup, it's feasible to segment networks based on the criticality of components and potential exposure to threats.

Less critical sensors and actuators can be grouped together on a single CAN network. Conversely, critical sensors vulnerable to external access can be connected via encrypted Ethernet connections, offering an additional layer of security.

In summary, zone architecture provides a reliable solution to security vulnerabilities inherent in domain architecture. By implementing granular access control and network segmentation, zone architecture significantly reduces the attack surface and enhances the overall security posture of automotive systems. This approach ensures that critical vehicle functions remain protected against unauthorized access and manipulation, safeguarding both the vehicle and its occupants from potential cyber threats.

3. Simplified and lightweight wiring

Wiring in automotive electronics plays a critical role in connecting various components and systems within the vehicle. However, it also poses challenges, particularly in terms of weight and complexity. This section explores how zone architecture addresses these challenges, leading to simplified and lightweight wiring solutions.

The Weight of Wiring: It's important to recognize that wiring is one of the heaviest components in a vehicle, trailing only behind the chassis and engine. In fact, the total weight of wiring harnesses in a vehicle can reach up to 70 kilograms. This significant weight contributes to the overall mass of the vehicle, affecting fuel efficiency, handling, and performance.

Challenges with Traditional Wiring: Traditional wiring systems, especially in domain architecture, often involve long and complex wiring harnesses that span the entire vehicle. This extensive wiring adds to the overall weight and complexity of the vehicle, making assembly and maintenance more challenging.

The Promise of Zone Architecture: Zonal architecture offers a promising alternative by organizing vehicle components into physical zones. This approach allows for more localized placement of sensors, actuators, and control units within each zone, minimizing the distance between components and reducing the need for lengthy wiring harnesses.

Reduced Cable Length: By grouping components together within each zone, zone architecture significantly reduces the overall cable length required to connect these components. Shorter cable runs translate to lower electrical resistance, reduced signal attenuation, and improved signal integrity, resulting in more reliable and responsive vehicle systems.

Optimized Routing and Routing Flexibility: Zone architecture allows for optimized routing of wiring harnesses, minimizing interference and congestion between different systems and components. Moreover, the flexibility inherent in zone architecture enables easier adaptation to different vehicle configurations and customer preferences without the constraints imposed by rigid wiring layouts.

4. Easier and cheaper production

Zonal architecture not only enhances the functionality and efficiency of automotive electronics but also streamlines the production process, making it easier and more cost-effective. Let's explore how zone architecture achieves this:

Modular Assembly: One of the key advantages of zone architecture is its modular nature, which allows for the assembly of individual zones separately before integrating them into the complete vehicle. This modular approach simplifies the assembly process, as each zone can be constructed and tested independently, reducing the complexity of assembly lines and minimizing the risk of errors during assembly.

Reduced Wiring Complexity: The reduction in wiring complexity achieved through zone architecture has a significant impact on production costs. Wiring harnesses are one of the most expensive components in a vehicle, primarily due to the labor-intensive nature of their installation. Each wire must be routed and connected individually, and since each domain typically has its own wiring harness, the process becomes even more laborious.

Automation Challenges with Wiring: Furthermore, automating the wiring process is inherently challenging due to the intricate nature of routing wires and connecting them to various components. While automation has been successfully implemented in many aspects of automotive production, wiring assembly remains largely manual, requiring a significant workforce to complete the task efficiently.

Batch Production of Zones: With zone architecture, the assembly of individual zones can be batch-produced, allowing for standardized processes and economies of scale. This approach enables manufacturers to optimize production lines for specific tasks, reduce setup times between production runs, and achieve greater consistency and quality control.

Integration of Wiring Harnesses: Another advantage of zone architecture is the integration of wiring harnesses into larger assemblies, such as the entire zone. By combining wiring harnesses and assembly for an entire zone into a single process, manufacturers can significantly accelerate production and reduce costs associated with wiring installation and integration.

In summary, zone architecture simplifies and streamlines the production process of vehicles by allowing for modular assembly, reducing wiring complexity, addressing automation challenges, and facilitating batch production of zones. By integrating wiring harnesses into larger assemblies and optimizing production lines, manufacturers can achieve cost savings, improve efficiency, and enhance overall quality in automotive production.

Introduction to zone architecture demonstration

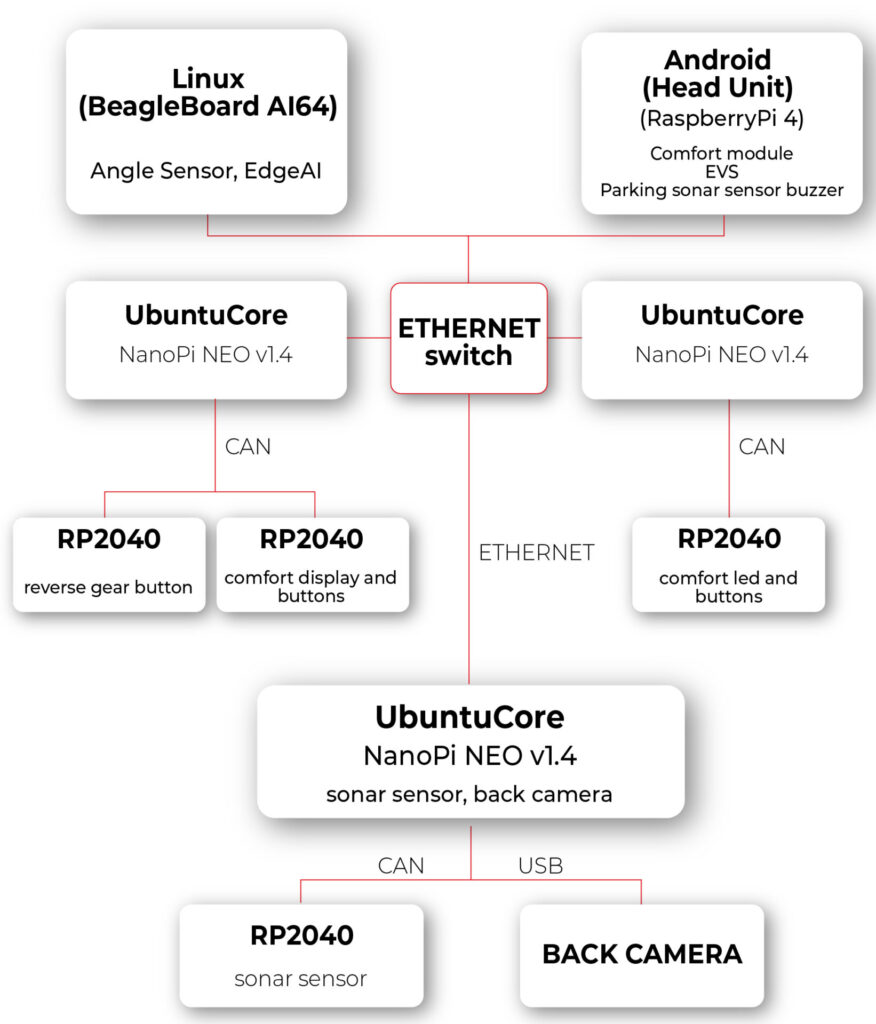

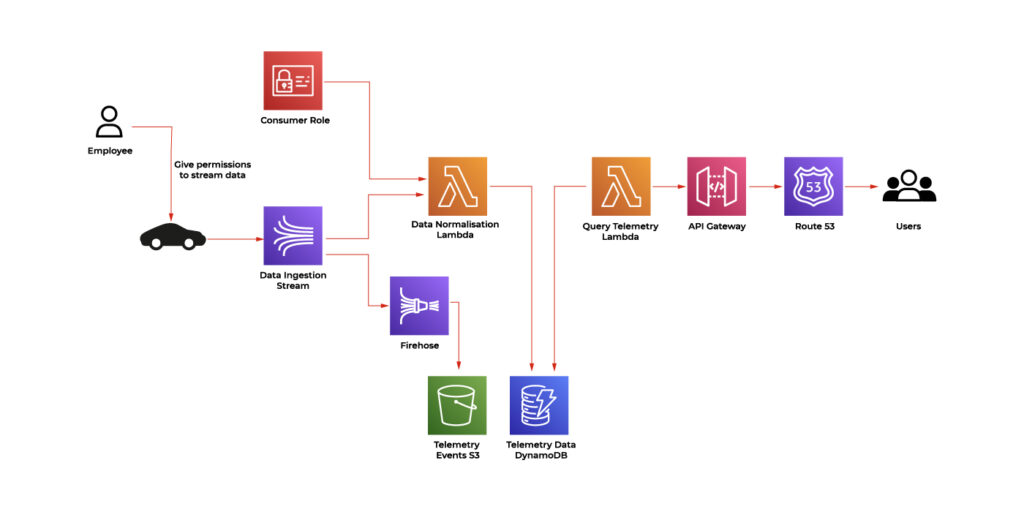

In our Research and Development (R&D) department, we're thrilled to present a demonstration showcasing the power and versatility of zone architecture in automotive electronics. Let's take a closer look at the key components of our setup:

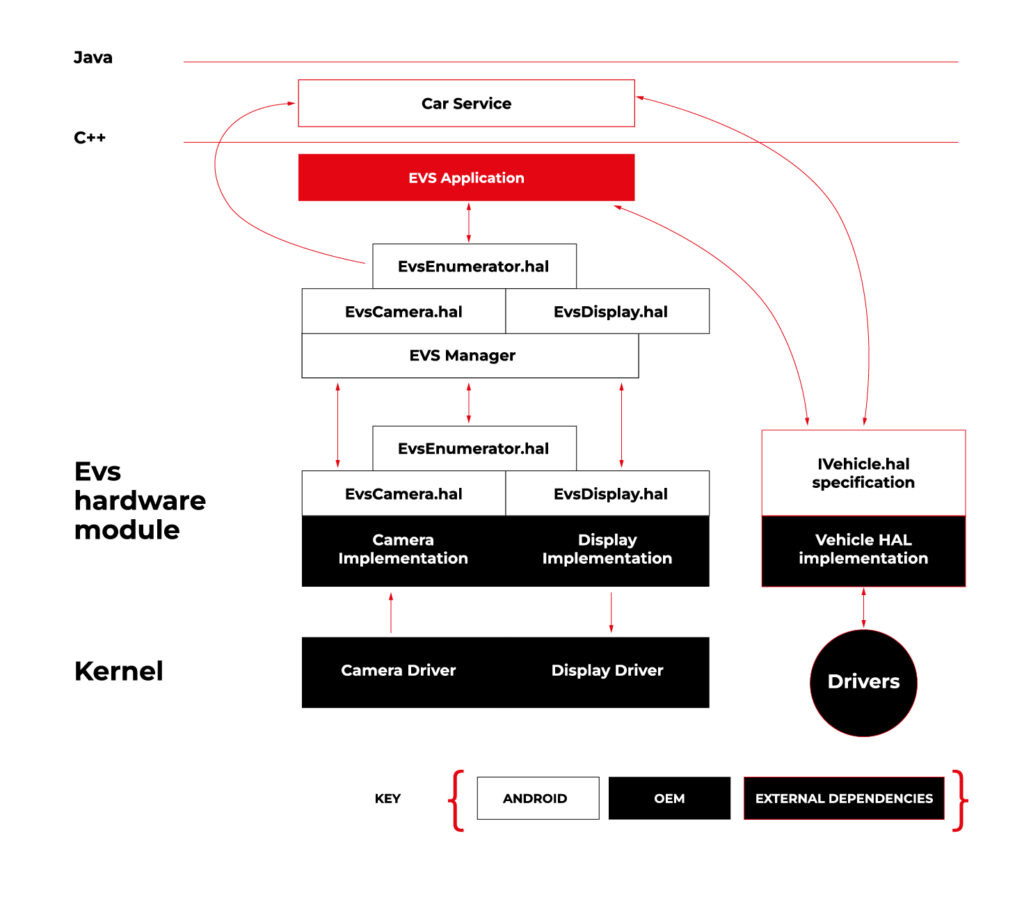

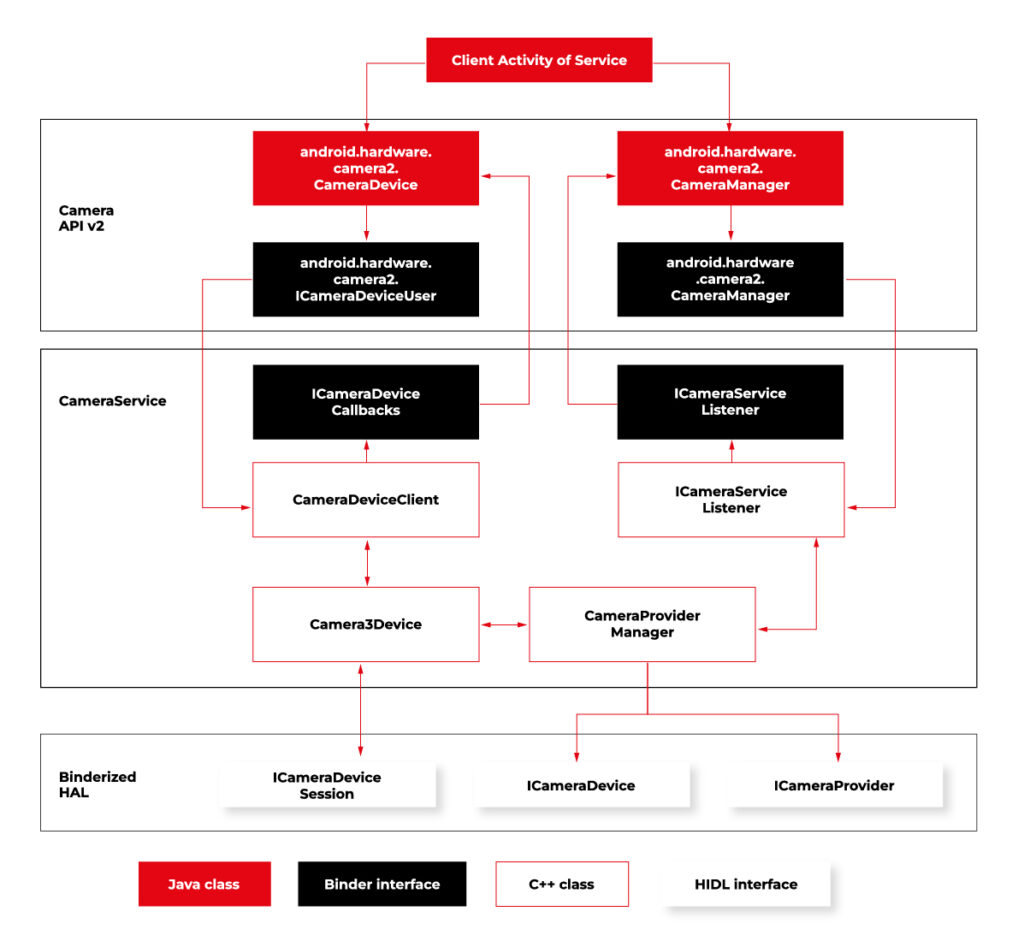

1. Android Computer with Modified VHAL for HVAC:

- Our central computing platform is an Android 14 device running Android Automotive OS (AAOS). This device serves as the hub for system control and communication.

- The modified Vehicle Hardware Abstraction Layer (VHAL) is a pivotal component of our setup. This software layer enables seamless interaction with the vehicle's HVAC module.

- The modified VHAL connects to the HVAC module over Ethernet using a REST API, facilitating real-time control and monitoring of HVAC functions.

2. Zone Computer with Ubuntu Core and HVAC Controller Application:

- Each zone in our architecture is equipped with a dedicated computer running Ubuntu Core.

- These zone computers act as the brains of their respective zones, hosting our custom HVAC controller application.

- The HVAC controller application, developed in-house, is responsible for interpreting commands received from the central Android computer and orchestrating HVAC operations within the zone.

- By combining REST API communication over Ethernet with the Controller Area Network (CAN) bus, the HVAC controller application ensures seamless integration and coordination of HVAC functions.

3. Microcontroller for Physical Interface:

- To provide users with intuitive control over HVAC settings, we've integrated a microcontroller into our setup.

- The microcontroller serves as an interface between the physical controls (buttons and display) and the zone computer.

- Using the CAN interface, the microcontroller relays user inputs to the zone computer, enabling responsive and interactive control of HVAC parameters.

- By bridging the gap between the digital and physical domains, the microcontroller enhances user experience and usability, making HVAC control intuitive and accessible.

The experiment with zone architecture in automotive electronics confirmed that our setup works as intended. In our solution, pressing a button triggers the transmission of information to the zone computer, where the temperature is adjusted and broadcast to the respective temperature displays in the zone and to the main Android Automotive OS head unit (IVI). Additionally, changing the temperature via the interface on Android sends the new value to the appropriate zone, adjusting the temperature there.

During hardware-layer testing, we used a REST API to speed up implementation. We anticipated from the outset that REST would not suffice for our needs, and testing confirmed its limitations. The VHAL in the Android system needs to know the HTTP address of each zone and must specify which zone a temperature change should be sent to. This approach is not very flexible and may add latency with each connection to the HTTP server.
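The coupling between the head unit and the zones can be illustrated with a short Python sketch. The zone names, IP addresses, port, endpoint path, and JSON payload below are all assumptions made for the example; they are not taken from our VHAL implementation. The request is only built here, not sent.

```python
import json
from urllib.request import Request

# Hypothetical static map the head unit has to maintain: zone name -> base URL
ZONE_ENDPOINTS = {
    "front-left": "http://10.0.1.10:8080",
    "front-right": "http://10.0.1.11:8080",
}


def build_temperature_request(zone, celsius):
    """Build (but do not send) the HTTP request for one zone."""
    base_url = ZONE_ENDPOINTS[zone]  # raises KeyError for an unknown zone
    body = json.dumps({"temperature": celsius}).encode("utf-8")
    return Request(
        url=f"{base_url}/hvac/temperature",  # hypothetical endpoint path
        data=body,
        headers={"Content-Type": "application/json"},
        method="PUT",
    )


request = build_temperature_request("front-left", 21.5)
print(request.get_method(), request.full_url)
```

Every new zone requires a change to the map on the head unit, and every temperature change opens its own HTTP exchange, which is where the inflexibility and the added latency come from.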

In the next article, we plan to review available communication protocols and methods of message description in such a network. Our goal will be to find protocols that excel in terms of speed, flexibility of application, and security. By doing so, we aim to further refine our solution and maximize its effectiveness in the context of zone architecture in automotive electronics.

Addressing data governance challenges in enterprises through the use of LLM Hubs

In an era where more than 80% of enterprises are expected to use Generative AI by 2026, up from less than 5% in 2023, the integration of AI chatbots is becoming increasingly common. This adoption is driven by the significant efficiency boosts these technologies offer, with over half of businesses now deploying conversational AI for customer interactions.

In fact, 92% of Fortune 500 companies are using OpenAI’s technology, with 94% of business executives believing that AI is a key to success in the future.

Challenges to GenAI implementation

The implementation of large language models (LLMs) and AI-driven chatbots is a challenging task in the current enterprise technology scene. Apart from the complexity of integrating these technologies, there is a crucial need to manage the vast amount of data they process securely and ethically. This emphasizes the importance of having robust data governance practices in place.

Organizations deploying generative AI chatbots may face security risks associated with both external breaches and internal data access. Since these chatbots are designed to streamline operations, they require access to sensitive information. Without proper control measures in place, there is a high possibility that confidential information may be inadvertently accessed by unauthorized personnel.

For example, when chatbots or AI tools are used to automate financial processes or provide financial insights, failures in secure data management may lead to malicious breaches.

Similarly, a customer service bot may expose confidential customer data to departments that do not have a legitimate need for it. This highlights the need for strict access controls and proper data handling protocols to ensure the security of sensitive information.

Dealing with complexities of data governance and LLMs

To integrate LLMs into current data governance frameworks, organizations need to adjust their strategy. This lets them use LLMs effectively while still following important standards like data quality, security, and compliance.

- It is crucial to adhere to ethical and regulatory standards when using data within LLMs. Establish clear guidelines for data handling and privacy.

- Devise strategies for the effective management and anonymization of the vast data volumes required by LLMs.

- Regular updates to governance policies are necessary to keep pace with technological advancements, ensuring ongoing relevance and effectiveness.

- Implement strict oversight and access controls to prevent unauthorized exposure of sensitive information through, for example, chatbots.

Introducing the LLM hub: centralizing data governance

An LLM hub empowers companies to manage data governance effectively by centralizing control over how data is accessed, processed, and used by LLMs within the enterprise. Instead of implementing fragmented solutions, this hub serves as a unified platform for overseeing and integrating AI processes.

By directing all LLM interactions through this centralized platform, businesses can monitor how sensitive data is being handled. This helps ensure that confidential information is processed only when required and in compliance with privacy regulations.

Role-Based Access Control in the LLM hub

A key feature of the LLM Hub is its implementation of Role-Based Access Control (RBAC). This system enables precise delineation of access rights, ensuring that only authorized personnel can interact with specific data or AI functionalities. RBAC limits access to authorized users based on their roles in their organization. This method is commonly used in various IT systems and services, including those that provide access to LLMs through platforms or hubs designed for managing these models and their usage.

In a typical RBAC system for an LLM Hub, roles are defined based on the job functions within the organization and the access to resources that those roles require. Each role is assigned specific permissions to perform certain tasks, such as generating text, accessing billing information, managing API keys, or configuring model parameters. Users are then assigned roles that match their responsibilities and needs.

Here are some of the key features and benefits of implementing RBAC in an LLM Hub:

- By limiting access to resources based on roles, RBAC helps to minimize potential security risks. Users have access only to the information and functionality necessary for their roles, reducing the chance of accidental or malicious breaches.

- RBAC allows for easier management of user permissions. Instead of assigning permissions to each user individually, administrators can assign roles to users, streamlining the process and reducing administrative overhead.

- For organizations that are subject to regulations regarding data access and privacy, RBAC can help ensure compliance by strictly controlling who has access to sensitive information.

- Roles can be customized and adjusted as organizational needs change. New roles can be created, and permissions can be updated as necessary, allowing the access control system to evolve with the organization.

- RBAC systems often include auditing capabilities, making it easier to track who accessed what resources and when. This is crucial for investigating security incidents and for compliance purposes.

- RBAC can enforce the principle of separation of duties, which is a key security practice. This means that no single user should have enough permissions to perform a series of actions that could lead to a security breach. By dividing responsibilities among different roles, RBAC helps prevent conflicts of interest and reduces the risk of fraud or error.
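The role-to-permission mapping described above can be sketched in a few lines of Python. The role names and the `is_allowed` helper are hypothetical; the permissions mirror the example tasks mentioned earlier (generating text, accessing billing information, managing API keys, configuring model parameters).

```python
from enum import Enum


class Permission(Enum):
    GENERATE_TEXT = "generate_text"
    VIEW_BILLING = "view_billing"
    MANAGE_API_KEYS = "manage_api_keys"
    CONFIGURE_MODEL = "configure_model"


# Hypothetical role definitions; a real hub would load these from configuration
ROLE_PERMISSIONS = {
    "analyst": {Permission.GENERATE_TEXT},
    "finance": {Permission.GENERATE_TEXT, Permission.VIEW_BILLING},
    "admin": set(Permission),
}


def is_allowed(user_roles, permission):
    """A user may act if any of their roles grants the permission."""
    return any(
        permission in ROLE_PERMISSIONS.get(role, set()) for role in user_roles
    )


print(is_allowed(["analyst"], Permission.GENERATE_TEXT))
print(is_allowed(["analyst"], Permission.MANAGE_API_KEYS))
print(is_allowed(["finance", "analyst"], Permission.VIEW_BILLING))
```

Permissions are attached to roles rather than to individual users, so changing what a job function may do is a single edit to the role definition.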

Practical application: safeguarding HR Data

Let's break down a practical scenario where an LLM Hub can make a significant difference - managing HR inquiries:

- Scenario: An organization employed chatbots to handle HR-related questions from employees. These bots needed access to personal employee data but had to use it in a way that prevented misuse or unauthorized exposure.

- Challenge: The main concern was the risk of sensitive HR data (such as personal employee details, salaries, and performance reviews) being accessed by unauthorized personnel through the AI chatbots. This posed a significant risk to privacy and compliance with data protection regulations.

- Solution with the LLM hub:

  - Controlled access: Through RBAC, only HR personnel could query the chatbot for sensitive information, significantly reducing the risk of data exposure to unauthorized staff.

  - Audit trails: The system maintained detailed audit trails of all data access and user interactions with the HR chatbots, facilitating real-time monitoring and swift action on any irregularities.

  - Compliance with data privacy laws: To ensure compliance with data protection regulations, the LLM Hub included automated compliance checks, which helped adjust protocols as needed to meet legal standards.

- Outcome: The integration of the LLM Hub at the company led to a significant improvement in the security and privacy of HR records. By strictly controlling access and ensuring compliance, the company not only safeguarded employee information but also strengthened its stance on data ethics and regulatory adherence.
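As an illustration of how controlled access and audit trails can work together, here is a minimal Python sketch. The topic names, roles, and the `handle_query` function are assumptions made for the example and do not describe a specific product; the point is that every decision is recorded, whether the query is allowed or denied.

```python
from datetime import datetime, timezone

# Hypothetical data classification: which roles may query which topic
TOPIC_ROLES = {
    "salary": {"hr"},
    "performance_review": {"hr"},
    "vacation_policy": {"hr", "employee"},
}

audit_log = []  # in a real system this would be append-only storage


def handle_query(user, role, topic):
    """Decide whether the query may reach the LLM and record the decision."""
    allowed = role in TOPIC_ROLES.get(topic, set())
    audit_log.append({
        "time": datetime.now(timezone.utc).isoformat(),
        "user": user,
        "role": role,
        "topic": topic,
        "allowed": allowed,
    })
    return allowed


print(handle_query("alice", "hr", "salary"))
print(handle_query("bob", "employee", "salary"))
print(handle_query("bob", "employee", "vacation_policy"))
print(len(audit_log))
```

Denied requests are logged as well, which is what makes irregularities visible to whoever monitors the trail.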

Conclusion

Robust data governance is crucial as businesses embrace LLMs and AI. The LLM Hub provides a forward-thinking solution for managing the complexities of these technologies. Centralizing data governance is key to ensuring that organizations can leverage AI to improve their operational efficiency without compromising on security, privacy, or ethical standards. This approach not only helps organizations avoid potential pitfalls but also enables sustainable innovation in the AI-driven enterprise landscape.

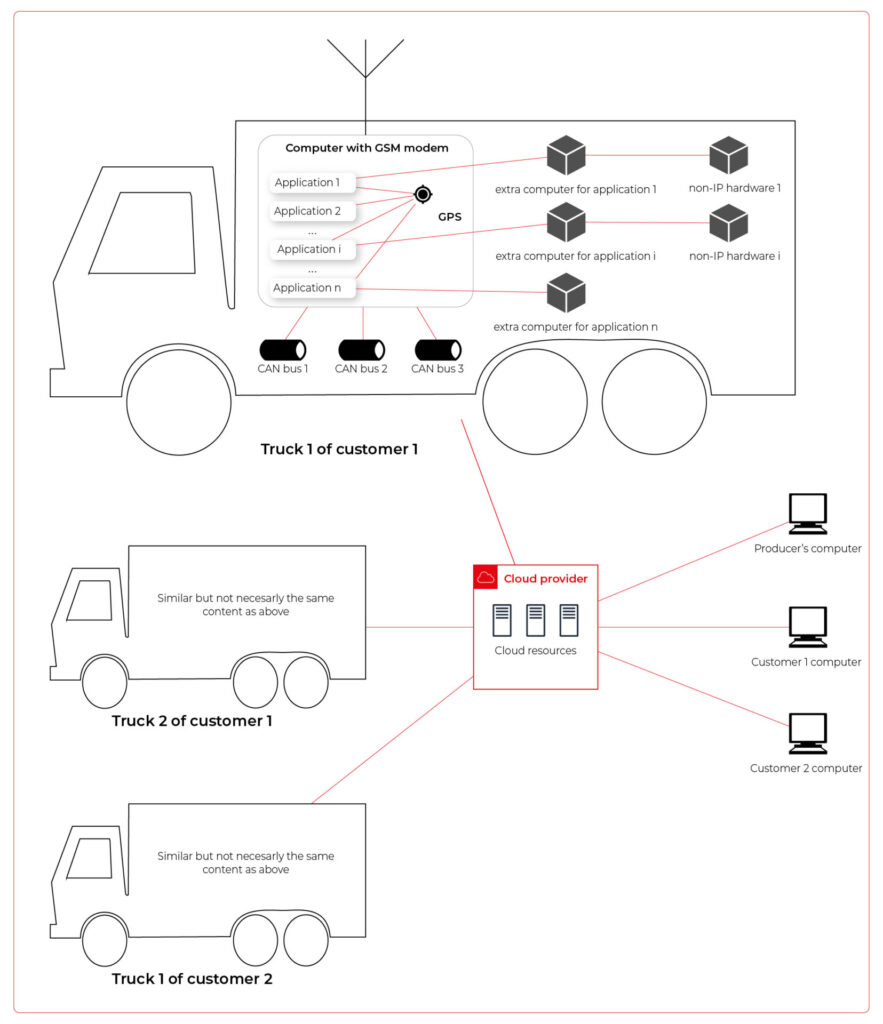

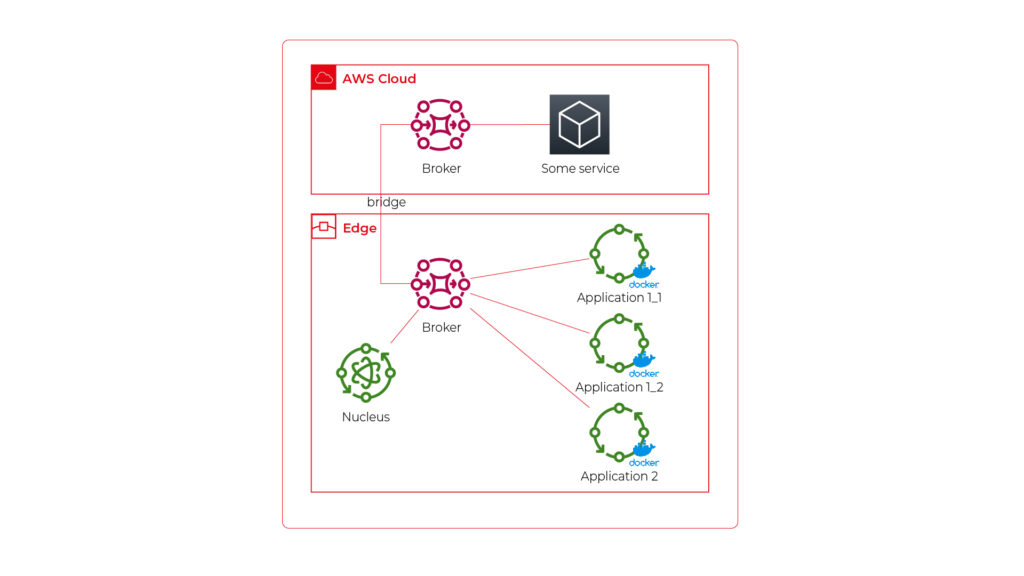

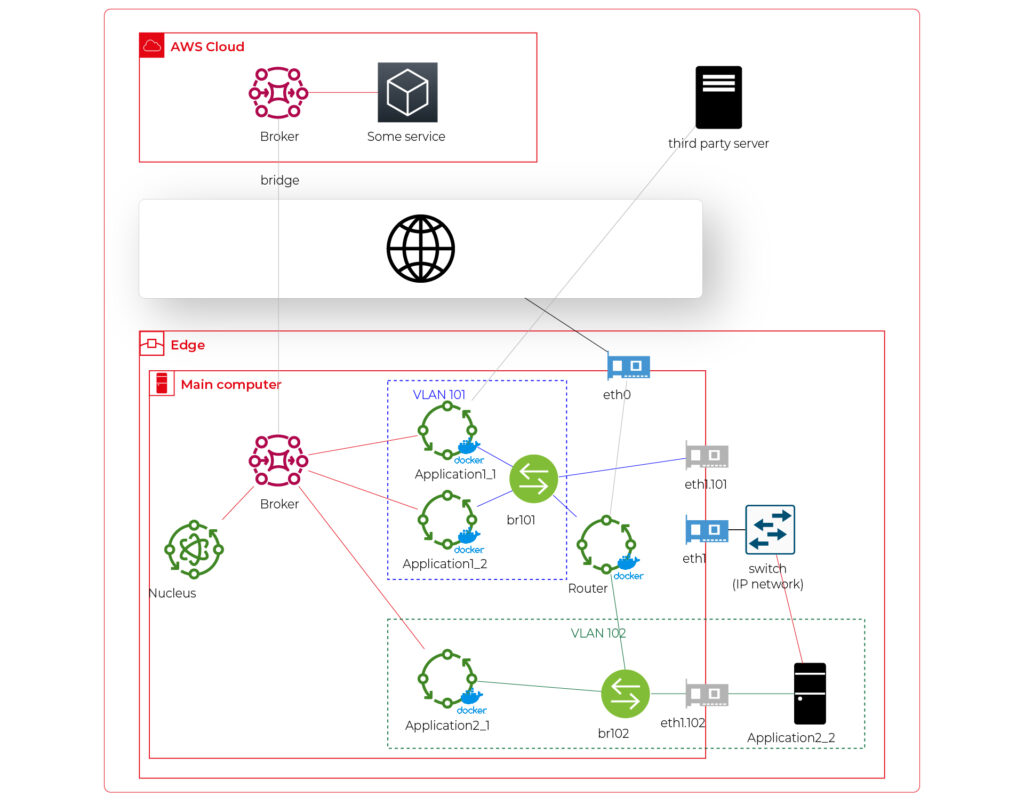

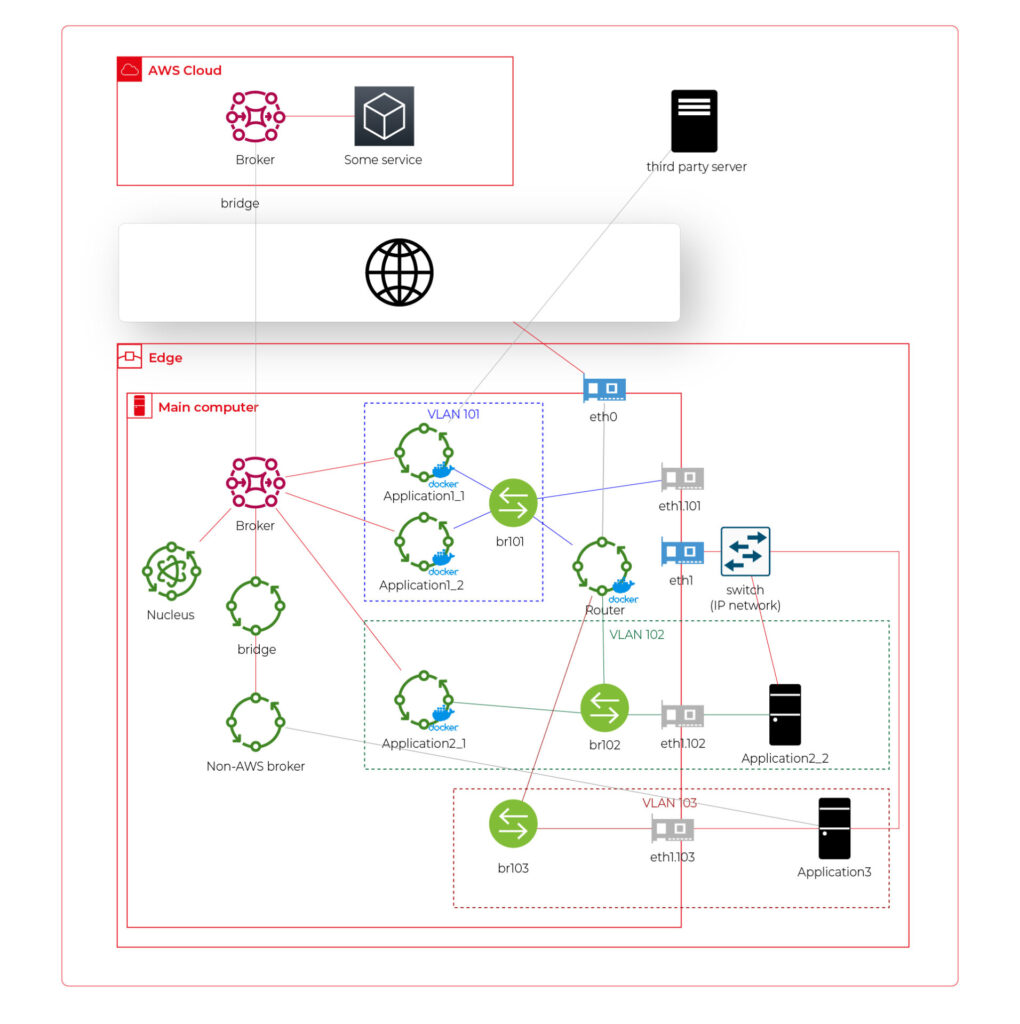

Fleet management task with AWS IoT – overcoming limitations of Docker Virtual Networks

We’re working for a client that produces fire trucks. The first article lists the requirements and proposes the architecture, and the second one walks through a step-by-step implementation of the prototype. This time, we’re going to close the topic with DHCP implementation and UDP tests.

DHCP server and client

A major issue with Docker is the need to assign IP addresses for containers. It is impractical to rely on automatic address assignments managed by Docker or to manually set addresses when containers are started. The architecture intended for IoT edge should ensure that the state of the device can be easily reproduced even after a power failure or reboot.

It may also be necessary to set fixed addresses for containers that will be the reference point for the entire architecture - see the Router container in our previous text. It is also worth considering the scenario where an external provider wants to connect to the edge device with extra devices. As part of the collaboration, it may be necessary to provide immutable IP addresses, e.g., for IP discovery service.

Our job is to provide a service to assign IP addresses from configurable pools for both physical and virtual devices in VLANs. It sounds like DHCP and indeed, it is DHCP, but it’s not so simple with Docker. Unfortunately, Docker uses its own addressing mechanism that cannot be linked to the network DHCP server.

The proposed solution will rely on a DHCP server and a DHCP client. At startup, the script responsible for running the Docker image will call the DHCP client and receive information about the MAC address and IP address the container will have.

Ultimately, we want a permanent configuration for the above, stored as a file or in a simple database. This will give us an immutable configuration for the basic parameters of the Docker container. To connect the MAC address, IP address, and Docker container, we propose adding the name of the potential Docker container to the record. This will create a link between the three elements that uniquely identifies the Docker container.

When the script starts, it first checks whether a lease already exists for the IP/MAC pair determined from the Docker container name. Only if there is none does it query the DHCP server for an available IP address.

This achieves a configuration that is resistant to IP conflicts and guarantees the reusability of previously assigned IP addresses.
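A minimal sketch of such a record store is shown below, assuming a JSON file as the "simple database". Deriving the MAC address from a hash of the container name is our assumption for this example (one way to make the mapping reproducible), and the `LeaseRegistry` class and file name are hypothetical.

```python
import hashlib
import json
import tempfile
from pathlib import Path


def mac_for_container(name):
    """Derive a stable, locally administered unicast MAC from the name."""
    digest = hashlib.sha256(name.encode("utf-8")).digest()
    first = (digest[0] | 0x02) & 0xFE  # set the local bit, clear the multicast bit
    return ":".join(f"{b:02x}" for b in (first, *digest[1:6]))


class LeaseRegistry:
    """Persists container name -> (MAC, IP) records in a JSON file."""

    def __init__(self, path):
        self.path = Path(path)
        self.records = (
            json.loads(self.path.read_text()) if self.path.exists() else {}
        )

    def lookup(self, name):
        return self.records.get(name)

    def store(self, name, mac, ip):
        self.records[name] = {"mac": mac, "ip": ip}
        self.path.write_text(json.dumps(self.records, indent=2))


with tempfile.TemporaryDirectory() as tmp:
    lease_file = Path(tmp) / "leases.json"
    registry = LeaseRegistry(lease_file)
    if registry.lookup("thermal-camera") is None:
        # The IP below stands in for the address returned by the DHCP server
        registry.store(
            "thermal-camera", mac_for_container("thermal-camera"), "10.0.1.42"
        )
    # A fresh instance sees the same record, as it would after a reboot
    reloaded = LeaseRegistry(lease_file).lookup("thermal-camera")
    print(reloaded)
```

Because the lookup happens before any DHCP traffic, a container that already has a record comes back with the same MAC and IP address after a power failure.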

DHCP server

For our use case, we’ve decided to rely on the isc-dhcp-server package. Below is a sample configuration you can adjust to your needs.

dhcpd.conf

authoritative;
one-lease-per-client true;

subnet 10.0.1.0 netmask 255.255.255.0 {
  range 10.0.1.2 10.0.1.200;
  option domain-name-servers 8.8.8.8, 8.8.4.4;
  option routers 10.0.1.3;
  option subnet-mask 255.255.255.0;
  default-lease-time 3600;
  max-lease-time 7200;
}

subnet 10.0.2.0 netmask 255.255.255.0 {
  range 10.0.2.2 10.0.2.200;
  option domain-name-servers 8.8.8.8, 8.8.4.4;
  option routers 10.0.2.3;
  option subnet-mask 255.255.255.0;
  default-lease-time 3600;
  max-lease-time 7200;
}

Here is a breakdown of each directive in the configuration above. Two subnets are configured, one per VLAN in our network, each with its own address pool.

authoritative - this directive means that the DHCP server is the authoritative source for the network. If a client queries with an IP address that it was given by another DHCP server, this server will tell the client that the IP address is invalid, effectively forcing the client to ask for a new IP address.

one-lease-per-client - this ensures that each client gets only one lease at a time. This helps avoid scenarios where a single client might end up consuming multiple IP addresses, leading to a reduced available IP pool.

option domain-name-servers – this assigns DNS servers to the DHCP clients. In this case, it's using Google's public DNS servers (8.8.8.8 and 8.8.4.4).

option routers – this assigns a default gateway for the DHCP clients. Devices in the first subnet will use 10.0.1.3 (and devices in the second one 10.0.2.3) as their way out of the local network, likely to reach the internet or other networks.

option subnet-mask – this specifies the subnet mask to be assigned to DHCP clients, which in this case is 255.255.255.0. It determines the network portion of an IP address.

default-lease-time – specifies how long, in seconds, a DHCP lease will be valid if the client doesn't ask for a specific lease time. Here, it's set to 3600 seconds, which is equivalent to 1 hour.

max-lease-time - this sets the maximum amount of time, in seconds, a client can lease an IP address. Here, it's 7200 seconds or 2 hours.
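Address pools are easy to mistype, so a quick sanity check before deploying a configuration can be useful. The sketch below uses Python's standard `ipaddress` module; the `pool_problems` helper and the set of checks are our own illustration, not part of isc-dhcp-server.

```python
import ipaddress


def pool_problems(subnet, first, last, router):
    """Return a list of human-readable problems for one DHCP pool."""
    network = ipaddress.ip_network(subnet)
    start = ipaddress.ip_address(first)
    end = ipaddress.ip_address(last)
    gateway = ipaddress.ip_address(router)
    problems = []
    if start not in network or end not in network:
        problems.append("range is not fully inside the subnet")
    if start > end:
        problems.append("range start is above range end")
    if gateway not in network:
        problems.append("router is outside the subnet")
    elif start <= gateway <= end:
        problems.append("router address lies inside the dynamic range")
    return problems


# A consistent pool, followed by a range that ends in the wrong subnet
print(pool_problems("10.0.2.0/24", "10.0.2.10", "10.0.2.200", "10.0.2.3"))
print(pool_problems("10.0.2.0/24", "10.0.2.2", "10.0.1.200", "10.0.2.3"))
```

The last check also flags a router address that falls inside the dynamic range, as 10.0.1.3 does in a pool spanning 10.0.1.2 to 10.0.1.200. Whether that matters depends on the setup, but it may be worth excluding fixed addresses from the pool.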

DHCP Client

In our scenario, all new application containers are added to the system via bash commands executed on the Host – the firetruck’s main computer, or a Raspberry Pi in our prototype. See the previous chapter for a reference of the commands used to add containers. The command requires an IP address and a gateway for each container.

Our approach is to obtain an address from the DHCP server (as dynamic IP) and set up a container with the address configured as static IP. To achieve this, we need a shell-friendly DHCP client. We’ve decided to go with a Python script that can be called when creating new containers.
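To show how the obtained address could be handed over to the container start-up, here is a small Python sketch that builds a `docker run` command line. It uses the standard `--name`, `--network`, `--ip`, and `--mac-address` flags. The container name, image, and network name are placeholders, and the exact commands used in our prototype (described in the previous chapter) may differ.

```python
import shlex


def docker_run_command(name, image, network, ip_addr, mac_addr):
    """Build the argument list for starting a container with a fixed address."""
    return [
        "docker", "run", "--detach",
        "--name", name,
        "--network", network,   # must be a user-defined network with a subnet
        "--ip", ip_addr,        # address obtained from the DHCP server
        "--mac-address", mac_addr,
        image,
    ]


command = docker_run_command(
    name="thermal-camera",                   # placeholder container name
    image="customer/thermal-camera:latest",  # placeholder image
    network="vlan1",                         # placeholder network name
    ip_addr="10.0.1.42",
    mac_addr="aa:bb:cc:11:22:33",
)
print(shlex.join(command))
```

Building the command as a list keeps it safe to pass to `subprocess.run` without shell quoting issues, while `shlex.join` gives a copy-pasteable form for a bash script.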

DHCP Client Example (Python)

See the comments in the script below for an explanation of each block.

from scapy.layers.dhcp import BOOTP, DHCP
from scapy.layers.inet import UDP, IP, ICMP
from scapy.layers.l2 import Ether
from scapy.sendrecv import sendp, sniff
from scapy.utils import mac2str


# Sending discovery packet for DHCP
def locate_dhcp(src_mac_addr):
    # BOOTP expects the hardware address as raw bytes, hence mac2str()
    packet = Ether(dst='ff:ff:ff:ff:ff:ff', src=src_mac_addr, type=0x0800) / \
        IP(src='0.0.0.0', dst='255.255.255.255') / \
        UDP(dport=67, sport=68) / \
        BOOTP(op=1, chaddr=mac2str(src_mac_addr)) / \
        DHCP(options=[('message-type', 'discover'), 'end'])
    sendp(packet, iface="enp2s0")


# Receiving offer: stop at the first BOOTP reply (op == 2) whose DHCP message type is 2 (offer)
def capture_offer():
    return sniff(iface="enp2s0", filter="port 68 and port 67",
                 stop_filter=lambda packet: BOOTP in packet and DHCP in packet
                 and packet[BOOTP].op == 2 and packet[DHCP].options[0][1] == 2,
                 timeout=5)


# Transmitting packets with accepted offer (IP) from DHCP
def transmit_request(src_mac_addr, req_ip, srv_ip):
    packet = Ether(dst='ff:ff:ff:ff:ff:ff', src=src_mac_addr, type=0x0800) / \
        IP(src='0.0.0.0', dst='255.255.255.255') / \
        UDP(dport=67, sport=68) / \
        BOOTP(op=1, chaddr=mac2str(src_mac_addr)) / \
        DHCP(options=[('message-type', 'request'), ("client_id", src_mac_addr),
                      ("requested_addr", req_ip), ("server_id", srv_ip), 'end'])
    sendp(packet, iface="enp2s0")


# Reading acknowledgement from DHCP: stop at the first BOOTP reply (op == 2)
# whose DHCP message type is 5 (ack), on ports 68/67
def capture_acknowledgement():
    return sniff(iface="enp2s0", filter="port 68 and port 67",
                 stop_filter=lambda packet: BOOTP in packet and DHCP in packet
                 and packet[BOOTP].op == 2 and packet[DHCP].options[0][1] == 5,
                 timeout=5)


# Ping offered IP address
def transmit_test_packet(src_mac_addr, src_ip_addr, dst_mac_addr, dst_ip_addr):
    packet = Ether(src=src_mac_addr, dst=dst_mac_addr) / IP(src=src_ip_addr, dst=dst_ip_addr) / ICMP()
    sendp(packet, iface="enp2s0")


if __name__ == "__main__":
    # dummy mac address
    mac_addr = "aa:bb:cc:11:22:33"
    print("START")
    print("SEND: Discover")
    locate_dhcp(mac_addr)
    print("RECEIVE: Offer")
    received_packets = capture_offer()
    if not received_packets:
        raise SystemExit("No DHCP offer received within the timeout")
    # The capture stops on the matching packet, so the offer is the last one captured
    offer = received_packets[-1]
    server_mac_addr = offer["Ether"].src
    bootp_response = offer["BOOTP"]
    server_ip_addr = bootp_response.siaddr
    offered_ip_addr = bootp_response.yiaddr
    print("OFFER:", offered_ip_addr)
    print("SEND: Request for", offered_ip_addr)
    transmit_request(mac_addr, offered_ip_addr, server_ip_addr)
    print("RECEIVE: Acknowledge")
    received_packets2 = capture_acknowledgement()
    print("ACKNOWLEDGE:", offered_ip_addr)
    print("SEND: Test IP Packet")
    transmit_test_packet(mac_addr, offered_ip_addr, server_mac_addr, server_ip_addr)
    print("END")

Let’s talk about our use case.

The business requirement is to add another device to the edge - perhaps a thermal imaging camera. Our goal is to make the onboarding of the device in our system as automatic as possible. Adding a new device will also mean, in our case, connecting it to the customer-provided Docker container.

Our expected result is to get a process that registers the new Docker container with the assigned IP address from the DHCP server. The IP address is, of course, dependent on the VLAN in which the new device will be located.

In summary, it is easy to see that plugging in a new device at this point just means that the IP address is automatically assigned and bound. The new device is aware of where the Router container is located - so communication is guaranteed from the very beginning.

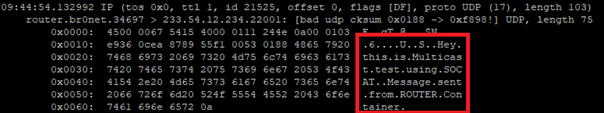

UDP broadcast and multicast setup

Broadcast UDP is a method for sending a message to all devices on a network segment, which allows for efficient communication and discovery of other devices on the same network. In an IoT context, this can be used for the discovery of devices and services, such as finding nearby devices for data exchange or sending a command to all devices in a network.

Multicast, on the other hand, allows for the efficient distribution of data to a group of devices on a network. This can be useful in scenarios where the same data needs to be sent to multiple devices at the same time, such as a live video stream or a software update.

One purpose of the architecture was to provide a seamless, isolated, LAN-like environment for each application. Therefore, it was critical to enable applications to use not only direct, IP, or DNS-based communication but also to allow multicasting and broadcasting messages. These protocols enable devices to communicate with each other in a way that is scalable and bandwidth-efficient, which is crucial for IoT systems where there may be limited network resources available.

The presented architecture provides a solution for dockerized applications that use UDP broadcast/multicast. The Router Docker container environment is intended to host applications that distribute data to other containers in this manner.

Let’s check whether those techniques are available to our edge networks.

Broadcast

The test phase should start on the Container1 container with an enabled UDP listener. For that, run the command.

nc -ulp 5000

The command uses the netcat (nc) utility to listen (-l) for incoming UDP (-u) datagrams on port 5000 (-p 5000).

Then, let’s produce a message on the Router container.

echo -n "foo" | nc -uv -b -s 10.0.1.3 -w1 10.0.1.255 5000

The command above is an instruction that uses the echo and netcat to send a UDP datagram containing the string "foo" to all devices on the local network segment.

Breaking down the command:

echo -n "foo" - This command prints the string "foo" to standard output without a trailing newline character.

nc - The nc command is used to create network connections and can be used for many purposes, including sending and receiving data over a network.

-uv - These options specify that nc should use UDP as the transport protocol and that it should be run in verbose mode.

-b - This option sets the SO_BROADCAST socket option, allowing the UDP packet to be sent to all devices on the local network segment.

-s 10.0.1.3 - This option sets the source IP address of the UDP packet to 10.0.1.3.

-w1 - This option sets the timeout for the nc command to 1 second.

10.0.1.255 - This is the destination IP address of the UDP packet, which is the broadcast address for the local network segment.

5000 - This is the destination port number for the UDP packet.

Please note that both source and destination addresses belong to VLAN 1. Therefore, the datagram is sent via the eth0 interface to this VLAN only.

The expected result is the docker container Container1 receiving the message from the Router container via UDP broadcast.
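For applications that send or receive the broadcast themselves instead of relying on netcat, the same exchange can be sketched in Python with the standard socket module. This is a minimal sketch, not part of the original setup: the helper names open_udp_listener and send_udp are hypothetical, and the addresses in the usage comment are the ones from the netcat example above.

```python
import socket


def open_udp_listener(port: int, host: str = "0.0.0.0") -> socket.socket:
    """Open a UDP socket bound to the given port (rough equivalent of `nc -ulp`)."""
    sock = socket.socket(socket.AF_INET, socket.SOCK_DGRAM)
    sock.setsockopt(socket.SOL_SOCKET, socket.SO_REUSEADDR, 1)
    sock.bind((host, port))
    return sock


def send_udp(payload: bytes, dest_ip: str, dest_port: int, source_ip: str = "") -> None:
    """Send one datagram with SO_BROADCAST enabled (rough equivalent of `nc -u -b -s`)."""
    with socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as sock:
        # Without SO_BROADCAST the kernel refuses to send to a broadcast address.
        sock.setsockopt(socket.SOL_SOCKET, socket.SO_BROADCAST, 1)
        if source_ip:
            # Binding to the source address selects the outgoing interface/VLAN.
            sock.bind((source_ip, 0))
        sock.sendto(payload, (dest_ip, dest_port))


# Usage on the Router container (addresses taken from the netcat example):
#   send_udp(b"foo", "10.0.1.255", 5000, source_ip="10.0.1.3")
# Usage on Container1:
#   listener = open_udp_listener(5000)
#   data, sender = listener.recvfrom(1024)
```

Binding the sender to 10.0.1.3 plays the same role as the -s option: it keeps the datagram inside VLAN 1.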

Multicast

Let's focus on the Docker container parameters specified when creating containers (see the Docker containers [execute on host] sub-chapter in the previous article). The --sysctl net.ipv4.icmp_echo_ignore_broadcasts=0 option makes the kernel inside the container answer ICMP echo requests sent to broadcast and multicast addresses. It does not change how UDP datagrams are delivered, but it is required if you want to verify a broadcast address or a multicast group with ping, as we do in the usage example.

Without setting this parameter to 0, such ICMP echo requests are ignored, so a ping to the multicast group gets no reply even though the group exists, which can look like a communication problem. Therefore, the --sysctl net.ipv4.icmp_echo_ignore_broadcasts=0 option can be crucial in Docker use cases where ICMP echo requests to broadcast or multicast addresses are needed.

Usage example

Run the command below in the container Container1 (see the previous chapter for naming references). We use socat, a command-line utility that establishes two bidirectional byte streams and transfers data between them. Please note that the IP address of the multicast group does not belong to the VLAN 1 address space.

socat -u UDP4-RECV:22001,ip-add-membership=233.54.12.234:eth0 /dev/null &

Then, add the route to the multicast group.

ip route add 233.54.12.234/32 dev eth0

You can ping the address from Device1 to verify the group has been created.

ping -I eth0 -t 2 233.54.12.234

As you can see, an interface parameter is required with the ping command to enforce using the correct outgoing interface. You can also limit the TTL parameter (-t 2) to verify the route length to the multicast group.

Now, use socat on Device1 to open the connection inside the group.

ip route add 233.54.12.234/32 dev eth0

socat STDIO UDP-DATAGRAM:233.54.12.234:22001

Please note that you have to set up the route first. Otherwise, packets addressed to an “unknown network” are sent directly to the router.

Now, you can type the message on Device1 and use tcpdump on Container1 to see the incoming message.

tcpdump -i eth0 -Xavvv
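If the application joins the multicast group itself rather than through socat, the membership can be requested with the standard socket module. The sketch below is an illustration under assumptions: the helper names are hypothetical, the group address and port are the ones used in the socat commands, and the route to the group still has to be added with ip route as shown above.

```python
import socket
import struct


def membership_request(group_ip: str, interface_ip: str = "0.0.0.0") -> bytes:
    """Build the ip_mreq structure: group address followed by the local interface address."""
    return struct.pack("4s4s", socket.inet_aton(group_ip), socket.inet_aton(interface_ip))


def open_multicast_receiver(group_ip: str, port: int, interface_ip: str = "0.0.0.0") -> socket.socket:
    """Bind a UDP socket and join the group (what ip-add-membership does in socat)."""
    sock = socket.socket(socket.AF_INET, socket.SOCK_DGRAM, socket.IPPROTO_UDP)
    sock.setsockopt(socket.SOL_SOCKET, socket.SO_REUSEADDR, 1)
    sock.bind(("", port))
    sock.setsockopt(socket.IPPROTO_IP, socket.IP_ADD_MEMBERSHIP,
                    membership_request(group_ip, interface_ip))
    return sock


def send_to_group(payload: bytes, group_ip: str, port: int, ttl: int = 2) -> None:
    """Send one datagram to the group with a limited TTL."""
    with socket.socket(socket.AF_INET, socket.SOCK_DGRAM, socket.IPPROTO_UDP) as sock:
        sock.setsockopt(socket.IPPROTO_IP, socket.IP_MULTICAST_TTL, ttl)
        sock.sendto(payload, (group_ip, port))


# Usage on Container1:
#   receiver = open_multicast_receiver("233.54.12.234", 22001)
#   data, sender = receiver.recvfrom(1024)
# Usage on Device1:
#   send_to_group(b"hello", "233.54.12.234", 22001)
```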

Summary

Nowadays, a major challenge faced by developers and customers is to guarantee maximum security while ensuring compatibility and openness to change for edge devices. As part of IoT, it is imperative to keep in mind that the delivered solution may be extended in the future with additional hardware modules, and thus, the environment into which this module will be deployed must be ready for changes.

This raises the non-trivial question of how to meet business requirements while following the guidelines set by hardware vendors' standards and the applicable legal regulations.

Translating the presented architecture into a fire trucks context, all the requirements from the introduction regarding isolation and modularity of the environment have been met. Each truck has the ability to expand the connected hardware while maintaining security protocols. In addition, the Docker images that work with the hardware know only their private scope and the router's scope.

The proposed solution provides a ready answer on how to obtain a change-ready environment that meets security requirements. A key element of the architecture is to guarantee communication for applications only in the VLAN space in which they are located.

This way, any modification should not affect already existing processes on the edge side. It is also worth detailing the role played by the Router component. With it, we guarantee a way to communicate between Docker containers while maintaining a configuration that allows you to control network traffic.

We have also included a solution for UDP Broadcast / Multicast communication. Many hardware devices currently on the market transmit data this way. This means that if, for example, we are waiting for emergency data on a device, we must also be ready to handle Broadcasts and ensure that packets are consumed only by those components that are designed for this purpose.

When summarizing the presented solution, one should not forget about applications in other industries. The idea of independent Docker images and hardware modularity makes it applicable even in automotive and high-reliability areas, where the use of multiple devices, not necessarily from the same supplier, is required.

We encourage you to think about further potential applications and thank you for taking the time to read.

Exploring Texas Instruments Edge AI: Hardware acceleration for efficient computation

In recent years, the field of artificial intelligence (AI) has witnessed a transformative shift towards edge computing, enabling intelligent decision-making to occur directly on devices rather than relying solely on cloud-based solutions. Texas Instruments, a key player in the semiconductor industry, has been at the forefront of developing cutting-edge solutions for Edge AI. One of the standout features of their offerings is the incorporation of hardware acceleration for efficient computation, which significantly improves the performance of AI models on resource-constrained devices.

Pros and cons of running AI models on embedded devices vs. cloud

In the evolving landscape of artificial intelligence, the decision to deploy models on embedded devices or rely on cloud-based solutions is a critical consideration. This chapter explores the advantages and disadvantages of running AI models on embedded devices, emphasizing the implications for efficiency, privacy, latency, and overall system performance.

Advantages of embedded AI

- Low Latency

One of the primary advantages of embedded AI is low latency. Models run directly on the device, eliminating the need for data transfer to and from the cloud. This results in faster response times, making embedded AI ideal for applications where real-time decision-making is crucial.

- Privacy and Security

Embedded AI enhances privacy by processing data locally on the device. This mitigates concerns related to transmitting sensitive information to external servers. Security risks associated with data in transit are significantly reduced, contributing to a more secure AI deployment.

- Edge Computing Efficiency

Utilizing embedded AI aligns with the principles of edge computing. By processing data at the edge of the network, unnecessary bandwidth usage is minimized, and only relevant information is transmitted to the cloud. This efficiency is especially beneficial in scenarios with limited network connectivity. What’s more, some problems are very inefficient to solve with cloud-based AI models, for example, video processing with real-time output.

- Offline Functionality

Embedded AI allows for offline functionality, enabling devices to operate independently of internet connectivity. This feature is advantageous in remote locations or environments with intermittent network access, as it expands the range of applications for embedded AI.

- Reduced Dependence on Network Infrastructure

Deploying AI models on embedded devices reduces dependence on robust network infrastructure. This is particularly valuable in scenarios where maintaining a stable and high-bandwidth connection is challenging or cost-ineffective. For example, an AI feature implemented on a cloud platform becomes unavailable in the car as soon as the connection is lost.

Disadvantages of embedded AI

- Lack of Scalability

Scaling embedded AI solutions across a large number of devices can be challenging. Managing updates, maintaining consistency, and ensuring uniform performance becomes more complex as the number of embedded devices increases.

- Maintenance Challenges

Updating and maintaining AI models on embedded devices can be more cumbersome compared to cloud-based solutions. Remote updates may be limited, requiring physical intervention for maintenance, which can be impractical in certain scenarios.

- Initial Deployment Cost

The initial cost of deploying embedded AI solutions, including hardware and development, can be higher compared to cloud-based alternatives. However, this cost may be offset by long-term benefits, depending on the specific use case and scale.

- Limited Computational Power

Embedded devices often have limited computational power compared to cloud servers. This constraint may restrict the complexity and size of AI models that can be deployed on these devices, impacting the range of applications they can support.

- Resource Constraints

Embedded devices typically have limited memory and storage capacities. Large AI models may struggle to fit within these constraints, requiring optimization or compromising model size for efficient deployment.

The decision to deploy AI models on embedded devices or in the cloud involves careful consideration of trade-offs. While embedded AI offers advantages in terms of low latency, privacy, and edge computing efficiency, it comes with challenges related to scalability, maintenance, and limited resources.

However, chipset manufacturers are constantly engaged in refining and enhancing their products by incorporating specialized modules dedicated to hardware-accelerated model execution. This ongoing commitment to innovation aims to significantly improve the overall performance of devices, ensuring that they can efficiently run AI models. The integration of these hardware-specific modules not only promises comparable performance but, in certain applications, even superior efficiency.

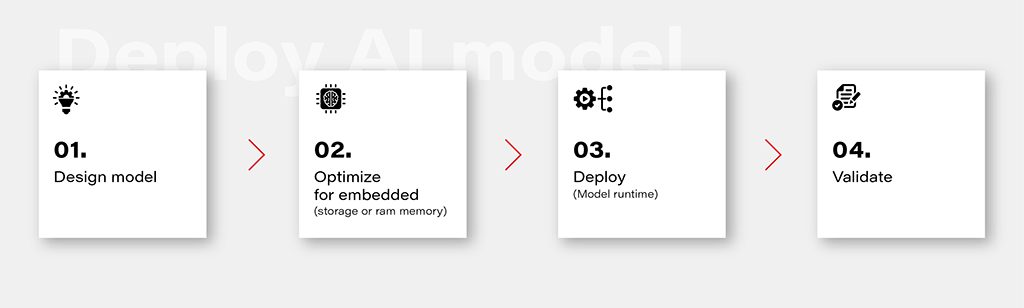

Deploy AI model on embedded device workflow

1. Design Model

Designing an AI model is the foundational step in the workflow. This involves choosing the appropriate model architecture based on the task at hand, whether it's classification, regression, or other specific objectives. This step is outside the scope of this article.

2. Optimize for Embedded (Storage or RAM Memory)

Once the model is designed, the next step is to optimize it for deployment on embedded devices with limited resources. This optimization may involve reducing the model size, minimizing the number of parameters, or employing quantization techniques to decrease the precision of weights. The goal is to strike a balance between model size and performance to ensure efficient operation within the constraints of embedded storage and RAM.

3. Deploy (Model Runtime)

Deploying the optimized model involves integrating it into the embedded system's runtime environment. While there are general-purpose runtime frameworks like TensorFlow Lite and ONNX Runtime, achieving the best performance often requires leveraging dedicated frameworks that utilize hardware modules for accelerated computations. These specialized frameworks harness hardware accelerators to enhance the speed and efficiency of the model on embedded devices.

4. Validate

Validation is a critical stage in the workflow to ensure that the deployed model performs effectively on the embedded device. This involves rigorous testing using representative datasets and scenarios. Metrics such as accuracy, latency, and resource usage should be thoroughly evaluated to verify that the model meets the performance requirements. Validation helps identify any potential issues or discrepancies between the model's behavior in the development environment and its real-world performance on the embedded device.
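To make the quantization mentioned in step 2 more tangible, here is a minimal sketch of symmetric int8 quantization in plain Python. It is an illustration only: real toolchains quantize per layer or per channel and calibrate on sample data, and the function names and example weights are made up for this sketch.

```python
def quantize_int8(weights):
    """Symmetric per-tensor quantization: map floats to integers in [-127, 127]."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate float values from the int8 representation."""
    return [q * scale for q in quantized]


weights = [0.5, -1.27, 0.031, 1.0]          # example float32 weights (made up)
quantized, scale = quantize_int8(weights)   # each weight now fits in one byte
restored = dequantize(quantized, scale)

# The rounding error per weight is at most half a quantization step.
max_error = max(abs(a - b) for a, b in zip(weights, restored))
print(quantized, scale, max_error)
```

Each weight shrinks from four bytes to one, at the price of a bounded rounding error. Whether that error is acceptable is exactly what the validation step has to confirm.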

Deploy model on TI Edge AI and Jacinto 7

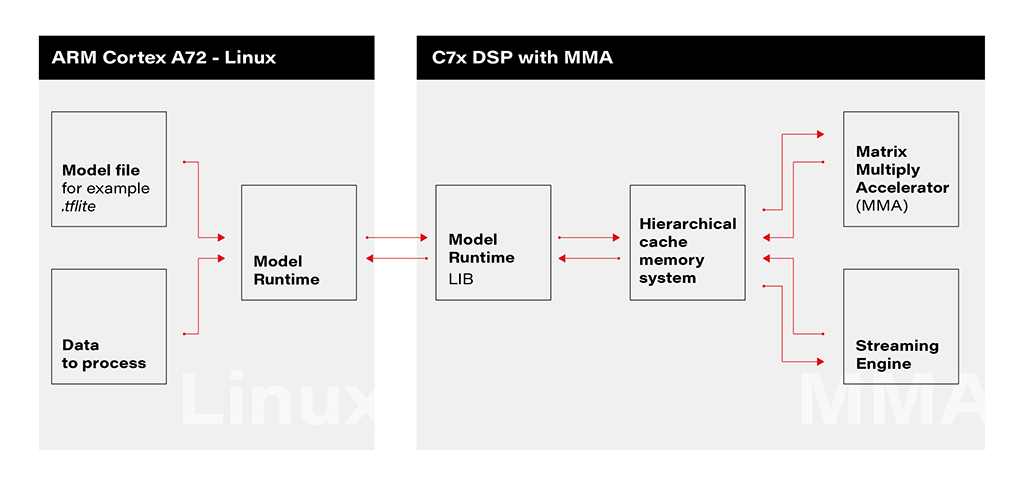

Deploying an AI model on TI Edge AI and Jacinto 7 involves a series of steps to make the model work efficiently with both regular and specialized hardware. In simpler terms, we'll walk through how the model file travels from a general Linux environment to a dedicated DSP core, making use of special hardware features along the way.

1. Linux Environment on A72 Core: The deployment process initiates within the Linux environment running on the A72 core. Here, a model file resides, ready to be utilized by the application's runtime. The model file, often in a standardized format like .tflite, serves as the blueprint for the AI model's architecture and parameters.

2. Runtime Application on A72 Core: The runtime application, responsible for orchestrating the deployment, receives the model file from the Linux environment. This runtime acts as a proxy between the user, the model, and the specialized hardware accelerator. It interfaces with the Linux environment, handling the transfer of input data to be processed by the model.

3. Connection to C7x DSP Core: The runtime application establishes a connection with its library executing on the C7x DSP core. This library, finely tuned for hardware acceleration, is designed to efficiently process AI models using specialized modules such as the Matrix Multiply Accelerator.

4. Loading Model and Data into Memory: The library on the C7x DSP core receives the model description and input data, loading them into memory for rapid access. This optimized memory utilization is crucial for achieving efficient inference on the dedicated hardware.

5. Computation with Matrix Multiply Accelerator: Leveraging the power of the Matrix Multiply Accelerator, the library performs the computations necessary for model inference. The accelerator efficiently handles matrix multiplications, a fundamental operation in many neural network models.

The matrix multiply accelerator (MMA) provides the following key features:

- Support for a fully connected layer using matrix multiply with arbitrary dimension

- Support for convolution layer using 2D convolution with matrix multiply with read panel

- Support for ReLU non-linearity layer OTF

- Support for high utilization (>85%) for a typical convolutional neural network (CNN), such as AlexNet, ResNet, and others

- Ability to support any CNN network topologies limited only by memory size and bandwidth

6. Result Return to User via Runtime on Linux: Upon completion of computations, the results are returned to the user through the runtime application on the Linux environment. The inference output, processed with hardware acceleration, provides high-speed, low-latency responses for real-time applications.
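To show what kind of work is offloaded in step 5, the sketch below computes a fully connected layer followed by ReLU in plain Python. It is not TI's API and says nothing about how the MMA is programmed; it only illustrates the arithmetic (a matrix-vector multiply plus a non-linearity) that the accelerator performs in hardware. The function name and the numbers are made up.

```python
def dense_relu(inputs, weights, bias):
    """Compute y = ReLU(W * x + b) for a single input vector."""
    outputs = []
    for row, b in zip(weights, bias):
        # One multiply-accumulate chain per output neuron.
        acc = sum(w * x for w, x in zip(row, inputs)) + b
        # ReLU applied right after the accumulation.
        outputs.append(max(0.0, acc))
    return outputs


x = [1.0, 2.0, 3.0]
W = [[0.5, -1.0, 0.25],
     [1.0, 1.0, 1.0]]
b = [0.0, -1.0]

y = dense_relu(x, W, b)
print(y)
```

On a general-purpose core, every multiply-accumulate in the inner loop is a separate instruction, which is why moving this pattern to a dedicated matrix unit pays off for CNNs.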

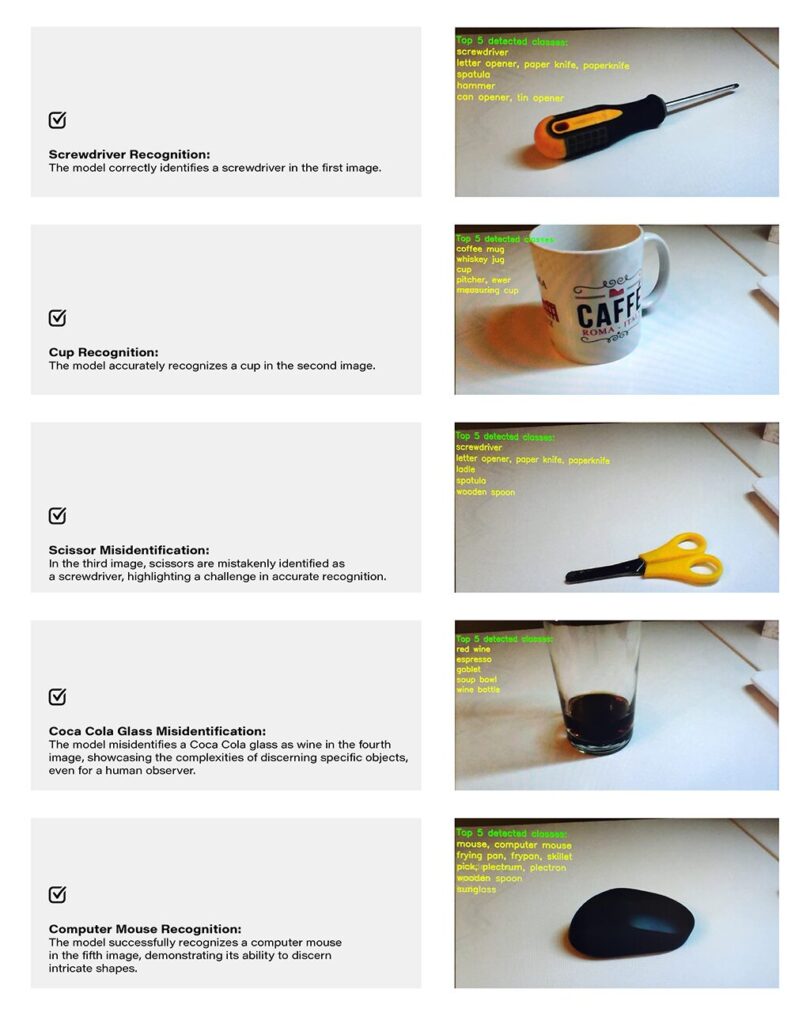

Object recognition with AI model on Jacinto 7: Real-world challenges

In this chapter, we explore a practical example of deploying an AI model on Jacinto 7 for object recognition. The model is executed according to the provided architecture, utilizing the TVM-CL-3410-gluoncv-mxnet-mobv2 model from the Texas Instruments Edge AI Model Zoo. The test images capture various scenarios, showcasing both successful and challenging object recognition outcomes.

The deployment architecture aligns with the schematic provided, incorporating Jacinto 7's capabilities to efficiently execute the AI model. The TVM-CL-3410-gluoncv-mxnet-mobv2 model is utilized, emphasizing its pre-trained nature for object recognition tasks.

Test Scenarios: A series of test images were captured to evaluate the model's performance in real-world conditions. Notably:

Challenges and Real-world Nuances: The test results underscore the challenges of accurate object recognition in less-than-ideal conditions. Factors such as image quality, lighting, and ambiguous object appearances contribute to the intricacy of the task. The third and fourth images, where scissors are misidentified as a screwdriver, and a Coca-Cola glass is misrecognized as wine, exemplify situations where even a human might face difficulty due to limited visual information.

Quality Considerations: The achieved results are noteworthy, considering the less-than-optimal quality of the test images. The chosen camera quality and lighting conditions intentionally mimic challenging real-world scenarios, making the model's performance commendable.

Conclusion: The real-world example of object recognition on Jacinto 7 highlights the capabilities and challenges associated with deploying AI models in practical scenarios. The successful identification of objects like a screwdriver, cup, and computer mouse demonstrates the model's efficacy. However, misidentifications in challenging scenarios emphasize the need for continuous refinement and adaptation, acknowledging the intricacies inherent in object recognition tasks, especially in dynamic and less-controlled environments.

How to develop AI-driven personal assistants tailored to automotive needs. Part 3

Blend AI assistant concepts together

This series of articles starts with a general chatbot description – what it is, how to deploy the model, and how to call it. The second part is about tailoring – how to teach the bot domain knowledge and how to enable it to execute actions. Today, we’ll dive into the architecture of the application, to avoid starting with something we would regret later on.

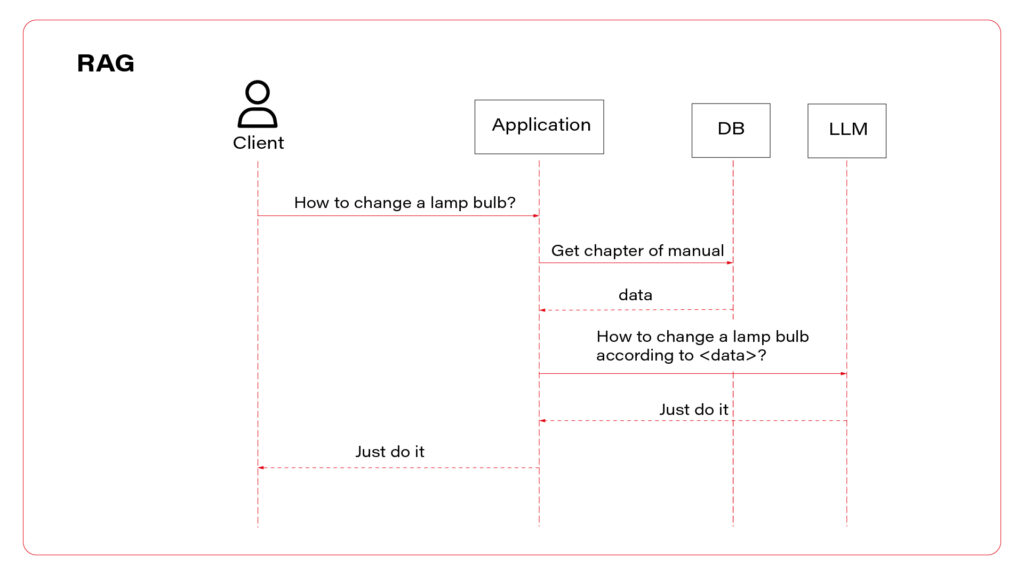

To sum up, there are three AI assistant concepts to consider: simple chatbot, RAG, and function calling.

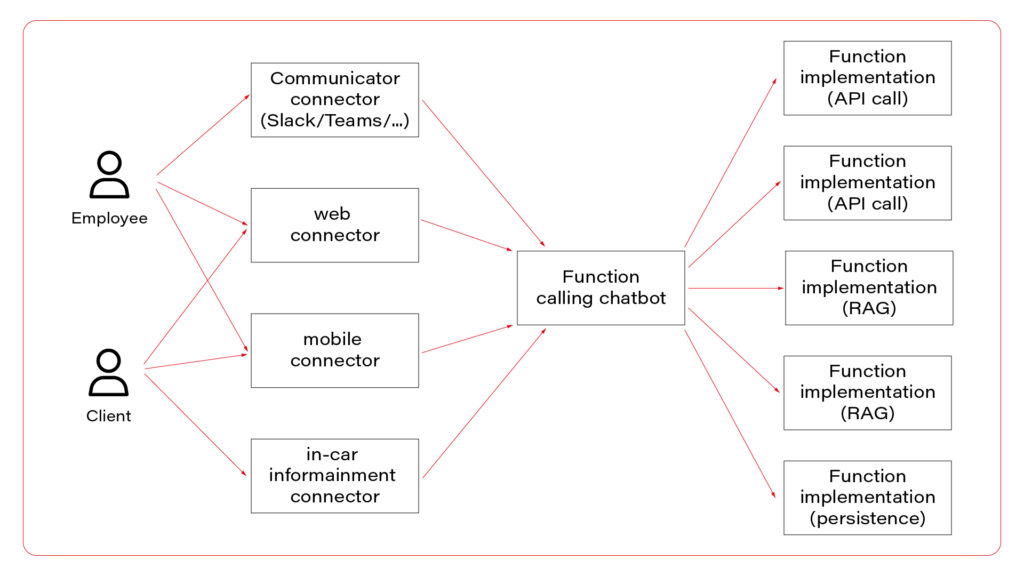

I propose to use them all at once. Let’s talk about the architecture. The perfect one may look as follows.

In the picture, you can see three layers. The first one, connectors, is responsible for session handling. There are some differences between various UI layers, so it’s wise to keep them small, simple, and separated. Members of this layer may be connected to a fast database, like Redis, to allow session sharing between nodes, or you can use a server-side or both-side communication channel to keep sessions alive. For simple applications, this layer is optional.

The next layer is the chatbot – the “main” application in the system. This is the application connected to the LLM, implementing the function calling feature. If you use middleware between users and the “main” application, this one may be stateless and receive the entire conversation from the middleware with each call. As you can see, the same application serves its capabilities both to employees and clients.

Let’s imagine a chatbot dedicated to recommending a car. Both a client and a dealer may use a very similar application, but the dealer has more capabilities – to order a car, to see stock positions, etc. You don’t need to create two different applications for that. The concept is the same, the architecture is the same, and the LLM client is the same. There are only two elements that differ: system prompts and the set of available functions. You can implement it with a simple abstract factory pattern that provides different prompts and function definitions for different users.
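A minimal sketch of such a factory might look as follows. All class names, prompts, and function names here (recommend_car, check_stock) are hypothetical and serve only as an illustration; store_order is borrowed from the example discussed next.

```python
from abc import ABC, abstractmethod


class AssistantProfile(ABC):
    """Abstract factory: one concrete profile per kind of user."""

    @abstractmethod
    def system_prompts(self):
        """Return the list of system prompts for this user type."""

    @abstractmethod
    def functions(self):
        """Return the function definitions exposed to the LLM."""


class ClientProfile(AssistantProfile):
    def system_prompts(self):
        return ["You are a car seller, be nice to your customers"]

    def functions(self):
        return [{"name": "recommend_car"}]


class DealerProfile(AssistantProfile):
    def system_prompts(self):
        return ["You assist a dealer, be precise and concise"]

    def functions(self):
        # The dealer gets everything the client has, plus ordering and stock.
        return [{"name": "recommend_car"}, {"name": "store_order"}, {"name": "check_stock"}]


def profile_for(role):
    """Pick the profile based on the authenticated user's role."""
    return {"client": ClientProfile, "dealer": DealerProfile}[role]()


def build_request(role, conversation):
    """Assemble the payload for the LLM; the LLM client itself stays the same."""
    profile = profile_for(role)
    messages = [{"role": "system", "content": p} for p in profile.system_prompts()]
    return {"messages": messages + conversation, "functions": profile.functions()}


request = build_request("dealer", [{"role": "user", "content": "Order model A"}])
print([f["name"] for f in request["functions"]])
```

The rest of the application only calls build_request, so adding a third user type means adding one more profile class.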

In a perfect world, the last layer is a set of microservices to handle different functions. If the LLM decides to use the function “store_order”, the “main” application calls the “store_order” function microservice that inserts data into an order database. Suppose the LLM decides to use the function “honk_and_flash” to locate a car in a crowded parking lot. In that case, the “main” application calls the “honk_and_flash” function microservice that handles authorization and calls a Digital Twin API to execute the operation in the car. If the LLM decides to use a function “check_in_user_manual”, the “main” application calls the “check_in_user_manual” function microservice, which is… another LLM-based application!

And that’s the point!

A side note before we move on – the world is never perfect, so it’s understandable if you don’t implement each function as a separate microservice and, for example, keep everything in the same application.
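Whether the functions live in separate microservices or in the same application, the “main” application needs a routing step between the LLM's decision and the code that executes it. The sketch below shows that step as a dispatch table. It assumes the function call arrives as a name plus a JSON string of arguments; the handlers are local stubs standing in for the microservice calls, and their return values are made up.

```python
import json


def store_order(arguments):
    # Stub: a real implementation would call the order microservice.
    return {"status": "stored", "model": arguments["model"]}


def honk_and_flash(arguments):
    # Stub: a real implementation would authorize and call the Digital Twin API.
    return {"status": "executed", "vin": arguments["vin"]}


def check_in_user_manual(arguments):
    # Stub: a real implementation would call another LLM-based application.
    return {"answer": "See the user manual for: " + arguments["question"]}


HANDLERS = {
    "store_order": store_order,
    "honk_and_flash": honk_and_flash,
    "check_in_user_manual": check_in_user_manual,
}


def dispatch(function_call):
    """Route the function chosen by the LLM to its handler."""
    name = function_call["name"]
    handler = HANDLERS.get(name)
    if handler is None:
        # Never execute anything the application does not know about.
        return {"error": "unknown function: " + name}
    return handler(json.loads(function_call["arguments"]))


result = dispatch({"name": "store_order", "arguments": '{"model": "A"}'})
print(result)
```

Moving a function out to its own microservice later only changes the body of one handler; the dispatch table and the “main” application stay as they are.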

The architecture proposed can combine all three AI assistant concepts. The “main” application may answer questions based on general knowledge and system prompts (“simple chatbot” concept) or call a function (“function calling” concept). The function may collect data based on the prompt (“RAG” concept) and do one of the following: call LLM to answer a question or return the data to add it to the context to let the “main” LLM answer the question. Usually, it’s better to follow the former way – to answer the question and not to add huge documents to the context. But for special use cases, like a long conversation about collected data, you may want to keep the document in the context of the conversation.

Which brings us to the last idea – mutable context. In general, each call contains the conversation history, including all data collected during the conversation, together with all available functions’ definitions.

First prompt:

System: You are a car seller, be nice to your customers

User: I’d like to buy a car

Functions: function1, function2, function3

Second prompt:

System: You are a car seller, be nice to your customers

User: I’d like to buy a car

Assistant: call function1

Function: function1 returned data

Assistant: Sure, what do you need?

User: I’m looking for a sports car.

Functions: function1, function2, function3

Third prompt:

System: You are a car seller, be nice to your customers

User: I’d like to buy a car

Assistant: call function1

Function: function1 returned data

Assistant: Sure, what do you need?

User: I’m looking for a sports car.

Assistant: I propose model A, it’s fast and furious

User: I like it!

Functions: function1, function2, function3

You can consider a mutation of the conversation context at this point.

Fourth prompt:

System: You are a sports car seller, be nice to your customers

System: User is looking for a sports car and he likes model A

Assistant: Do you want to order model A?

Functions: function1, function2, function3, function4

You can implement a summarization function in your code to shorten the conversation, or you can select different subsets of all functions available, depending on the conversation context. You can perform both those tasks with the same LLM instance you use to make the conversation but with totally different prompts, e.g. “Summarize the conversation” instead of “You are a car seller”. Of course, the user won’t even see that your application calls the LLM more often than on user prompts only.
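The mutation from the third to the fourth prompt can be sketched as two small steps: compact the history into a summary, then pick the functions that fit the new context. This is a minimal sketch under assumptions: summarize stands for a call to the same LLM with a “Summarize the conversation” prompt and is replaced here by a stub, and the keyword-based function selection is just one hypothetical strategy.

```python
def compact_context(system_prompt, messages, summarize):
    """Replace the whole conversation history with a short summary."""
    summary = summarize(messages)
    return [
        {"role": "system", "content": system_prompt},
        {"role": "system", "content": summary},
    ]


def select_functions(summary, catalog):
    """Keep functions with no keywords (always on) or with a keyword found in the summary."""
    text = summary.lower()
    return [name for name, keywords in catalog.items()
            if not keywords or any(keyword in text for keyword in keywords)]


def fake_summarize(messages):
    # Stub for an LLM call with the prompt "Summarize the conversation".
    return "User is looking for a sports car and he likes model A"


history = [
    {"role": "user", "content": "I’d like to buy a car"},
    {"role": "assistant", "content": "Sure, what do you need?"},
    {"role": "user", "content": "I’m looking for a sports car."},
    {"role": "assistant", "content": "I propose model A, it’s fast and furious"},
    {"role": "user", "content": "I like it!"},
]
catalog = {
    "function1": [],
    "function2": [],
    "function3": [],
    "function4": ["likes model"],  # e.g. an ordering function, enabled once a model is chosen
}

context = compact_context("You are a sports car seller, be nice to your customers",
                          history, fake_summarize)
functions = select_functions(context[1]["content"], catalog)
print(context, functions)
```

The compacted context has two messages instead of the full history, which shortens every subsequent call.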

Pitfalls

All techniques mentioned in the series of articles may be affected by some drawbacks.

The first one is the response time. When you put more data into the context, the user waits longer for the responses. It’s especially visible for voice-driven chatbots and may influence the user experience. This makes it more important for customer-facing chatbots than for those meant for internal usage only.

The second drawback is cost. Today, 1000 prompt tokens processed by GPT-4-Turbo cost €0,01, which is not a lot. However, a complex system prompt together with some user data may, let’s say, occupy 20000 tokens. Let’s assume that the first question takes 100 tokens, the first answer takes 150 tokens, and the second question takes 200 tokens. The cost of the conversation is calculated as follows.

First prompt: common data + first question = 20000 [tokens] + 100 [tokens] = 20100 [tokens]

Second prompt: common data + first question + first answer + second question = 20000 [tokens] + 100 [tokens] + 150 [tokens] + 200 [tokens] = 20450 [tokens]

This two-prompt conversation takes 40550 tokens in total so far, which costs €0,41, excluding completions. Be aware that users may play with your chatbot, running up the bill.
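The same calculation can be scripted to estimate longer conversations. The sketch below uses the figures from the example; the function name is hypothetical, and it assumes that the full history is resent with every user question.

```python
PRICE_PER_1000_PROMPT_TOKENS_EUR = 0.01  # price quoted in the text


def prompt_sizes(common_tokens, turns):
    """Return the size of each prompt, given token counts of alternating turns.

    `turns` lists question 1, answer 1, question 2, answer 2, ...
    A prompt is sent after every question and carries the whole history.
    """
    sizes = []
    for index in range(0, len(turns), 2):
        sizes.append(common_tokens + sum(turns[:index + 1]))
    return sizes


sizes = prompt_sizes(20000, [100, 150, 200])
total_tokens = sum(sizes)
cost_eur = total_tokens / 1000 * PRICE_PER_1000_PROMPT_TOKENS_EUR
print(sizes, total_tokens, round(cost_eur, 2))
```

Because every prompt repeats the common data, the total grows roughly linearly with the number of questions even when the questions themselves are short.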

The last risk is the security risk. In the examples in the part 2 article, the chatbot uses an “unlock” function that accepts a VIN. You are obliged to assume that the chatbot will try to call a function with the VIN parameter of a car that does not belong to the customer. Of course, you must implement various LLM-hacking prevention mechanisms (prompt engineering, prompt filtering, answer filtering, etc.), but you can never assume that it is sufficient. Therefore, the developer’s role is to secure the functions (function calling) and data sources (RAG). For example, if a user tries to call a vehicle-related function, the user should be identified by the OAuth2 token, and the function microservice should reject a call with a VIN argument that doesn’t match the user profile.
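The check inside the function microservice can be as simple as the sketch below. It is an illustration under assumptions: the user profile is taken to be already resolved from a validated OAuth2 token (token validation is not shown), and the profile structure, exception name, and VIN values are made up.

```python
class Forbidden(Exception):
    """Raised when the caller is not allowed to operate on the given vehicle."""


def unlock(vin, user_profile):
    """Unlock a car only if the VIN belongs to the authenticated user.

    `user_profile` is assumed to come from the OAuth2 token, never from the LLM.
    """
    if vin not in user_profile["vins"]:
        # The LLM (or the user steering it) asked for a car the user doesn't own.
        raise Forbidden("VIN does not match the user profile")
    return {"status": "unlocked", "vin": vin}


profile = {"user_id": "u-1", "vins": {"VIN-OWNED-1"}}  # hypothetical profile

print(unlock("VIN-OWNED-1", profile))
try:
    unlock("VIN-OF-SOMEONE-ELSE", profile)
except Forbidden as error:
    print("rejected:", error)
```

The important design choice is that the VIN proposed by the LLM is treated as untrusted input, while the list of allowed VINs comes from the authenticated session.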

Outline

In this series of articles, I’ve given you a few examples of how to utilize the most significant invention of 2023 for your automotive clients. You can write a chatbot that analyses customers’ requirements, helps mechanics, automates maintenance visit scheduling, seeks answers in user manuals, or writes personalized emails. Large language models are powerful and may be customized with a little effort. On the other hand, you need to keep in mind their limitations and common pitfalls. At the end of the day, even if the engine of the application is a black box, there is still a lot to implement around it to meet the business requirements.

The era of LLM-based applications has started in the automotive industry too.

How to develop AI-driven personal assistants tailored to automotive needs. Part 2

Making the chatbot more suitable

Recently we’ve built a simple chatbot, using an Azure-hosted GPT 4 Turbo instance. It’s a great starting point, but we definitely need more to fulfill any business requirements. Let’s try to talk with our bot.

Prompt:

Hi, I’d like to buy a new car. Can you assist me with a choice? I’m looking for a family car with a hybrid powertrain and all-wheel drive. I’m a fan of modern design and fancy features. My budget is around 70k euro.

Completion:

Absolutely, I’d be happy to help you find a suitable family car that meets your criteria! Given your preferences (…), there are several options across different brands that could be a great fit. (…):

1. Audi Q5 TFSI e

Powertrain: (…)

Features: (…)

Price Range: (…)

2. BMW X5 xDrive45e

Powertrain: (…)

Features: (…)

Price Range: (…)

3. Volvo XC90 Recharge

Powertrain: (…)

Features: (…)

Price Range: (…)

4. Mercedes-Benz GLE 450 4MATIC

Powertrain: (…)

Features: (…)

Price Range: (…)

5. Lexus RX 450h

Powertrain: (…)

Features: (…)

Price Range: (…)

When considering these options, it’s important to test drive the ones you’re most interested in to get a feel for their driving dynamics, comfort, and the user-friendliness of their tech features. Additionally, (…)

As you can see, the response is quite long, versatile, and absolutely useless.

If you’re working for one of those brands, you don’t want the chatbot to recommend your competitors. Also, the knowledge base is a bit outdated, so the answer is unreliable. Besides that, the answer is too long to be shown on a mobile device or to be read. And you need to be aware that the user may misuse the chatbot to generate a master’s thesis, write a fairy tale, or plan a trip to Rome.

Our job is to limit the bot possibilities. The way to achieve it is prompt engineering. Let’s try to add some system messages before the user prompt.

Messages=[

{"role": "system", "content": "You are a car seller working for X"},

{"role": "system", "content": "X offers following vehicles (…)"},

{"role": "system", "content": "Never recommend X competitors"},

{"role": "system", "content": "Avoid topics not related to X. e.g. If the user asks for weather, kindly redirect him to the weather service"},

{"role": "system", "content": "Be strict and accurate, avoid too long messages"},

{"role": "user", "content": "Hi, I’d like to buy a new car. Can you assist me with a choice? I’m looking for a family car with a hybrid powertrain and all-wheel drive. I’m a fan of modern design and fancy features. My budget is around 70k euro."},

]

Now the chatbot should behave much better, but it still can be tricked. Advanced prompt engineering, together with LLM hacking and ways to prevent it, is out of the scope of this article, but I strongly recommend exploring this topic before exposing your chatbot to real customers. For our purposes, you need to be aware that providing an entire offer in a prompt (“X offers following vehicles (…)”) may go way above the LLM context window. Which brings us to the next point.

Retrieval augmented generation

You often want to provide more information to your chatbot than it can handle. It can be an offer of a brand, a user manual, a service manual, or all of that put together, and much more. GPT 4 Turbo can work on up to 128 000 tokens (prompt + completion together), which is, according to the official documentation, around 96 000 English words (roughly 0.75 words per token). However, the accuracy of the model decreases beyond roughly half of that [source], and a longer context takes more time to process and costs more money. Google has just announced a 1M-token model, but generally speaking, putting too much into the context is not recommended so far. All in all, you probably don’t want to put everything you have there.

RAG is a technique of collecting proper input for the LLM that may contain information required to answer the user questions.

Let’s say you have two documents in your company knowledge base. The first one contains the company offer (all vehicles for sale), and the second one contains maintenance manuals. The user approaches your chatbot and asks the question: “Which car should I buy?”. Of course, the bot needs to identify user’s needs, but it also needs some data to work on. The answer is probably included in the first document but how can we know that?

In more detail, RAG is a process of comparing the question with available data sources to find the most relevant one or ones. The most common technique is vector search. You convert your domain knowledge to vectors (this conversion is called embedding) and store them in a database. Each vector represents a piece of a document – one chapter, one page, one paragraph, depending on your implementation. When the user asks a question, it is also converted to a vector representation. Then, you need to find the document represented by the most similar vector – it should contain the answer to the question, so you add it to the context. The last part is the prompt, e.g. “Based on this piece of knowledge, answer the question”.
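The idea can be sketched in a few lines of Python. Note the assumptions: the `embed` function below is a toy bag-of-words stand-in for a real embedding model, the in-memory dictionary replaces a vector database, and the two documents are made up for illustration.

```python
import math
from collections import Counter


def embed(text: str) -> Counter:
    """Toy stand-in for an embedding model: a bag-of-words count vector."""
    return Counter(text.lower().split())


def cosine_similarity(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[token] * b[token] for token in a)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


# Hypothetical knowledge base: one short text per document
documents = {
    "offer": "hybrid family car with all-wheel drive available to buy",
    "manual": "replace the engine oil filter every two years during maintenance",
}

# "Database" of vectors, built once from the documents
index = {name: embed(text) for name, text in documents.items()}


def retrieve(question: str) -> str:
    """Return the name of the document most similar to the question."""
    query_vector = embed(question)
    return max(index, key=lambda name: cosine_similarity(query_vector, index[name]))


question = "which hybrid car should i buy"
best = retrieve(question)
prompt = (
    f"Based on this piece of knowledge:\n{documents[best]}\n"
    f"answer the question: {question}"
)
```

A real embedding model captures meaning rather than shared words, but the retrieval step (embed, compare, pick the closest, add it to the prompt) has the same shape.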

Of course, the matter is much more complicated. You need to consider your embedding model and maybe improve it with fine-tuning. You need to compare search methods (vector, semantic, keywords, hybrid) and adapt them with parameters. You need to select the best-fitting database, polish your prompt, convert complex documents to text (which may be challenging, especially with PDFs), and maybe process the output to link to sources or extract images.

It’s challenging but possible. See the result in one of our case studies: Voice-Driven Car Manual.

The good news is that you’re not the first one working on this issue, and there are some out-of-the-box solutions available.

The no-code one is Azure AI Search, together with Azure Cognitive Services and Azure Bot. The official manual covers all steps – prerequisites, data ingestion, and web application deployment. It works well, including OCR, search parametrization, and exposing links to source documents in chat responses. If you want a more flexible solution, the low-code version is available here.

I understand if you want to keep all the pieces of the application in your hands and prefer to build it from scratch. At this point we need to return to the choice of programming language. The LangChain library, which was originally available for Python only, may be your best friend for this implementation.

See the example below.

from langchain.chains.question_answering import load_qa_chain

from langchain.chains.retrieval_qa.base import RetrievalQA

from langchain.chat_models import AzureChatOpenAI

from langchain.embeddings import HuggingFaceEmbeddings

from langchain.prompts import PromptTemplate

from langchain.vectorstores import Qdrant

from qdrant_client import QdrantClient

# Connect to the vector database and choose the embedding model

client = QdrantClient(url="…", api_key="…")

embeddings = HuggingFaceEmbeddings(model_name="hkunlp/instructor-xl")

db = Qdrant(client=client, collection_name="…", embeddings=embeddings)

# The chain expects a PromptTemplate: {context} receives the retrieved documents, {question} the user query

prompt = PromptTemplate.from_template("Using the context {context} answer the question: {question}")

# Second step: send the retrieved documents as context to GPT

second_step = load_qa_chain(AzureChatOpenAI(

    deployment_name="…",

    openai_api_key="…",

    openai_api_base="…",

    openai_api_version="2023-05-15"

), chain_type="stuff", prompt=prompt)

# First step: find documents similar to the question in Qdrant

first_step = RetrievalQA(

    combine_documents_chain=second_step,

    retriever=db.as_retriever(

        search_type="similarity_score_threshold", search_kwargs={"score_threshold": 0.5}

    ),

)

# Pass the user question here

first_step.run("…")

This is the entire search application. It creates and executes a “chain” of operations – the first step is to look for data in the Qdrant database, using a model called instructor-xl for embedding. The second step is to pass the output of the first step as “context” to the GPT prompt. As you can see, the application is based on the LangChain library. There is a Java port of it, or you can execute each step manually in any language you want. However, using LangChain in Python is the most convenient path, and it is a significant argument for choosing this language in the first place.

With this knowledge you can build a chatbot and feed it with company knowledge. You can aim the application at end users (car owners), internal employees, or potential customers. But an LLM can “do” more.

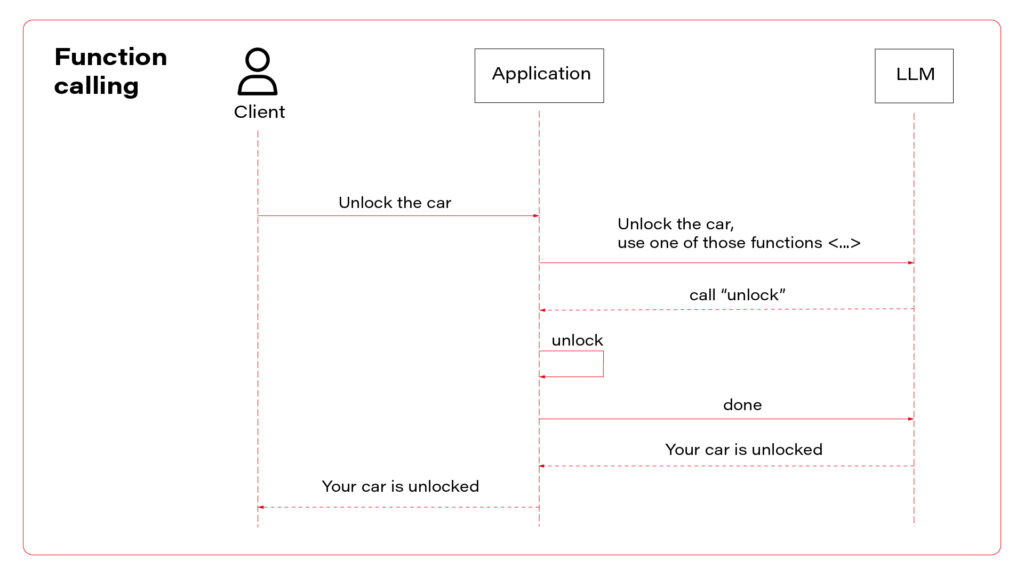

Function calling

To “do” is the keyword. In this section we’ll teach the LLM to do something for us, not only to provide information or tell jokes. An operational chatbot can download more data if needed and decide which data is required for the conversation, but it can also execute real operations. Most modern vehicles come with mobile applications that you can use to read data (locate the car, check the mileage, read warnings) or to execute operations (open the doors, turn on the air conditioning, or start the charging process). Let’s do the same with the chatbot.

Function calling is a built-in functionality of GPT models. The API request has a field for tools (functions), and the model can respond with a function call in JSON format. You can try to achieve the same with any other LLM using a prompt like the one below.

In this environment, you have access to a set of tools you can use to answer the user's question.

You may call them like this:

<function_calls>

<invoke>

<tool_name>$TOOL_NAME</tool_name>

<parameters>

<$PARAMETER_NAME>$PARAMETER_VALUE</$PARAMETER_NAME>

</parameters>

</invoke>

</function_calls>

Here are the tools available:

<tools>

<tool_description>

<tool_name>unlock</tool_name>

<description>

Unlocks the car.

</description>

<parameters>

<parameter>

<name>vin</name>

<type>string</type>

<description>Car identifier</description>

</parameter>

</parameters>

</tool_description>

</tools>

This is a prompt from the user: ….

Unfortunately, LLMs often don’t like to follow a required structure of completions, so you might face some errors when parsing responses.
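A defensive parser helps here. The sketch below assumes the completion follows the XML-like format from the prompt above; the function name `parse_function_calls` is made up for this example, and returning an empty list on malformed output is one possible policy (you might prefer to re-prompt the model instead).

```python
import re
import xml.etree.ElementTree as ET


def parse_function_calls(completion: str) -> list[dict]:
    """Extract tool invocations from a completion; return [] when none is usable."""
    match = re.search(r"<function_calls>.*?</function_calls>", completion, re.DOTALL)
    if match is None:
        return []  # plain-text answer, no tool requested
    try:
        root = ET.fromstring(match.group(0))
    except ET.ParseError:
        return []  # malformed structure; the caller may ask the model again
    calls = []
    for invoke in root.findall("invoke"):
        name = invoke.findtext("tool_name")
        if not name:
            continue  # skip invocations without a tool name
        params = invoke.find("parameters")
        arguments = {}
        if params is not None:
            # Each child tag is a parameter name, its text is the value
            arguments = {p.tag: (p.text or "").strip() for p in params}
        calls.append({"name": name.strip(), "arguments": arguments})
    return calls
```

The parser only extracts the request; checking that the tool exists and that the arguments are valid is still up to your application.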

With GPT, the official documentation recommends verifying the response format, but I’ve never encountered any issue with this functionality.

Let’s see a sample request with functions’ definitions.

{

"model": "gpt-4",

"messages": [

{ "role": "user", "content": "Unlock my car" }

],

"tools": [

{

"type": "function",

"function": {

"name": "unlock",

"description": "Unlocks the car",

"parameters": {

"type": "object",

"properties": {

"vin": {

"type": "string",

"description": "Car identifier"

}

},

"required": ["vin"]

}

}

}

]

}

To avoid making the article even longer, I encourage you to visit the official documentation for reference.

If the LLM decides to call a function instead of answering the user, the response contains the function-calling request.

{

…

"choices": [

{

"index": 0,

"message": {

"role": "assistant",

"content": null,

"tool_calls": [

{

"id": "call_abc123",

"type": "function",

"function": {

"name": "unlock",

"arguments": "{\"vin\": \"ABC123\"}"

}

}

]

},

"logprobs": null,

"finish_reason": "tool_calls"

}

]

}

Based on the finish_reason value, your application decides whether to return the content to the user or to execute the operation. The important fact is that there is no magic that automatically calls some API or executes a function in your code. Your application must find the function based on its name and parse the arguments from the JSON-formatted string. Then the response of the function should be sent to the LLM (not to the user), and the LLM decides on the next step – to call another function (or the same one with different arguments) or to write a response for the user. To send the response to the LLM, just add it to the conversation.

{

"model": "gpt-4",

"messages": [

{ "role": "user", "content": "Unlock my car" },

{"role": "assistant", "content": null, "tool_calls": [{"id": "call_abc123", "type": "function", "function": {"name": "unlock", "arguments": "{\"vin\": \"ABC123\"}"}}]},

{"role": "tool", "tool_call_id": "call_abc123", "content": "{\"success\": true}"}

],

"tools": [

…

]

}

In the example above, the next response is more or less “Sure, I’ve opened your car”.
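The dispatching logic described earlier can be sketched in Python as follows. It assumes the response format with tool_calls shown above; the `unlock` function is a hypothetical stand-in for a call to your vehicle backend, and `AVAILABLE_FUNCTIONS` and `handle_choice` are names invented for this example.

```python
import json


def unlock(vin: str) -> dict:
    """Hypothetical stand-in for a call to the vehicle backend."""
    return {"success": True, "vin": vin}


# Registry of functions the LLM is allowed to request, keyed by tool name
AVAILABLE_FUNCTIONS = {"unlock": unlock}


def handle_choice(choice: dict) -> list[dict]:
    """Return the messages to append to the conversation for one response choice."""
    message = choice["message"]
    if choice["finish_reason"] != "tool_calls":
        return []  # regular answer: show message["content"] to the user
    new_messages = [message]  # the assistant's request stays in the history
    for call in message["tool_calls"]:
        function = AVAILABLE_FUNCTIONS.get(call["function"]["name"])
        if function is None:
            result = {"error": "unknown function"}
        else:
            # Arguments arrive as a JSON-formatted string
            arguments = json.loads(call["function"]["arguments"])
            result = function(**arguments)
        new_messages.append({
            "role": "tool",
            "tool_call_id": call["id"],
            "content": json.dumps(result),
        })
    return new_messages
```

An empty list means the content can go straight to the user; otherwise, append the returned messages to the conversation and send the request again so the LLM can decide what to do next.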

With this approach, you need to send with each request not only the conversation history and system prompts but also a list of all functions available with all parameters. Keep it in mind when counting your tokens.

Follow up

As you can see, we can limit the chatbot’s versatility with prompt engineering and boost its resourcefulness with RAG or external tools. This brings us to another level of LLM usability, but now we need to meld it all together without throwing the baby out with the bathwater. In the last article we’ll consider the application architecture, plug in some optimizations, and avoid common pitfalls. We’ll be right back!

How to develop AI-driven personal assistants tailored to automotive needs. Part 1

Artificial Intelligence is everywhere - my laundry dryer is “powered by AI” (whatever that means), and I suppose there are some fridges on the market that take photos of their contents to send you a shopping list and maybe even propose a recipe for your next dinner based on the food you have. Some people say that generative AI and large language models (LLMs) are the most important inventions since the Internet, and that we are observing the beginning of the next industrial revolution.

However, household appliances and deliberations on recent history are not in our sphere of interest. The article about AI-based tools to support developers is getting old quickly due to the extremely fast development of new tools and their capabilities. But what can we, software makers, propose to our customers to keep up with the changing world?

Let’s talk about chatbots. Today, we’ll break down the topic of AI-driven personal assistants for the automotive industry. First, to create a chatbot, we need a language model.

The best-known LLM is currently OpenAI’s GPT-4, which powers ChatGPT and thousands of different tools and applications, including the very powerful, widely available Microsoft Copilot. Of course, there are more similar models: Anthropic Claude with a huge context window, the recently updated Google Bard, Llama, which is available for self-hosting, the code-completion-tailored Tabnine, etc.

Some of them can give you a human-like conversation experience, especially combined with voice recognition and text-to-speech models – they are smart, advanced, interactive, helpful, and versatile. Is it enough to offer an AI-driven personal assistant for your automotive customers?

Well, as usual, it depends.

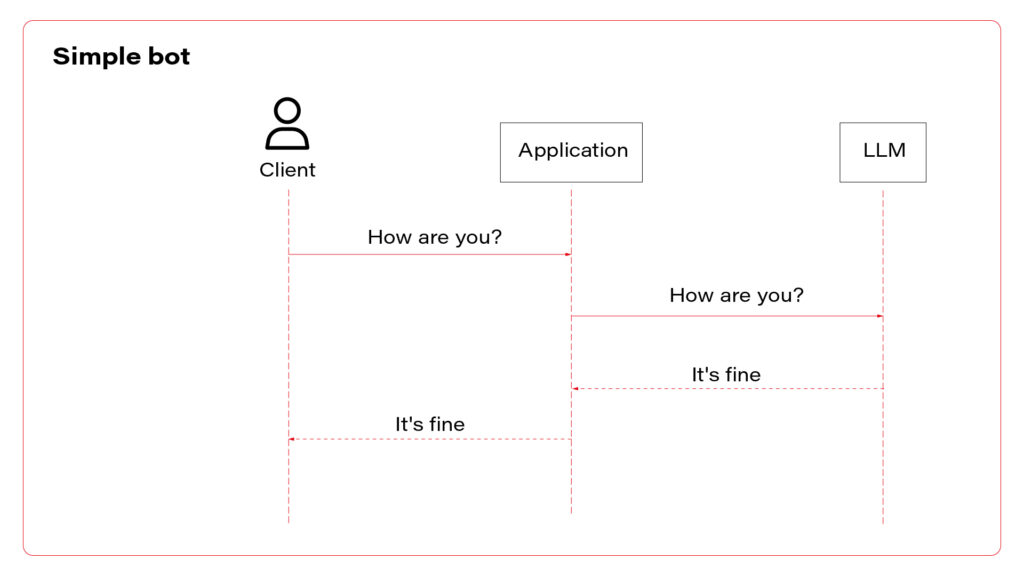

What is a “chatbot”?

The first step is to identify end-users and match their requirements with the toolkit possibilities. Let’s start with the latter point.

We’re going to implement a text-generating tool, so in this article, we don’t consider graphics, music, video, or any other generation models. We need a large language model that “understands” prompts in a natural language (or several languages) and generates natural language answers (so-called “completions”).

Besides that, the model needs to operate on the domain knowledge depending on the use case. Hypothetically, it’s possible to create such a model from scratch, using general resources, like open-licensed books (to teach it the language) and your company resources (to teach it the domain), but the process is complex, very expensive in all dimensions (people, money, hardware, power, time, etc.) and at the end of the day - unpredictable.

Therefore, we’re going to use a general-purpose model. Some models (like gpt-4-0613) are available for fine-tuning – a process of tailoring the model to better understand a domain. It may be required for your use case, but again, the process may be expensive and challenging, so I propose giving a shot at a “standard” model first.

Because of the built-in function calling functionality and low price with a large context window, in this article, we use gpt-4-turbo. Moreover, you can have your own Azure-hosted instance of it, which is almost certainly significant to your customer privacy policy. Of course, you can achieve the same with some extra prompt engineering with other models, too.

OK, what kind of AI-driven personal assistant do you want? We can distinguish three main concepts: general chatbot, knowledge-based one, and one allowed to execute actions for a user.

Your first chatbot

Let’s start with the implementation of a simple bot – one that can talk about everything except recent history.

As I’ve mentioned, it’s often required not to use the OpenAI API directly, but rather your own cloud-hosted model instance. To deploy one, you need an Azure account. Go to https://portal.azure.com/ , create a new resource, and select “Azure OpenAI”. Then go to your new resource, select “Keys and endpoints” from the left menu, and copy the endpoint URL together with one of the API keys. The endpoint should look like this one: https://azure-openai-resource-name.openai.azure.com/.

Now, you create a model. Go to “Model deployments” and click the “Manage deployments” button. A new page appears where you can create a new instance of the gpt-4 model. Please note that if you want to use the gpt-4-turbo model, you need to select the 1106 model version which is not available in all regions yet. Check this page to verify availability across regions.

Now, you have your own GPT model instance. According to Azure's privacy policy, the model is stateless, and all your data is safe, but please read the “Preventing abuse and harmful content generation” and “How can customers get an exemption from abuse monitoring and human review?” sections of the policy document very carefully before continuing with sensitive data.

Let’s call the model!

curl --location 'https://azure-openai-resource-name.openai.azure.com/openai/deployments/name-of-your-deployment/chat/completions?api-version=2023-05-15' \

--header 'api-key: your-api-key' \

--header 'Content-Type: application/json' \

--data '{

"messages": [

{