Thinking out loud

Where we share the insights, questions, and observations that shape our approach.

Quarkus framework overview and comparison with Spring Boot

In this article, we’ll give an overview of the Quarkus framework and compare it with Spring Boot - the most popular Java backend framework.

Quarkus is an open-source Java framework for developing web applications. It is designed for building modern microservice-based and cloud-native applications. Quarkus has built-in support for the two most popular Java build tools: Gradle and Maven. By default, a new starter project created using the official Quarkus CLI uses Maven, although it can easily be switched to generate a Gradle-based project instead.

Features comparison to Spring Boot framework

Let’s review the main features available in Quarkus and compare them to their equivalents in Spring Boot.

Application configuration

Quarkus uses the open-source SmallRye Config library for configuring applications. By default, it reads application.properties files for this purpose, but YAML files are also an option. To enable YAML configuration, add the quarkus-config-yaml dependency. Quarkus can additionally read configuration from environment variables specified in a .env file. This is particularly useful for local development.

You can store local settings in the .env file (e.g., to enable retrieval of credentials from external sources for local builds) and keep the file out of version control, so it is used locally only. Spring does not support reading environment variables from a .env file, so developers have to either inject them through the IDE or set them directly in the application configuration file.
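Entries in a .env file use environment-variable names rather than dotted property names. The sketch below shows the naming convention from the MicroProfile Config specification, which SmallRye Config implements: every character that is not alphanumeric or an underscore becomes an underscore, and the result is upper-cased. The class and method names are hypothetical helpers for illustration, not part of Quarkus.

```java
import java.util.Locale;

// Hypothetical helper: derives the environment-variable name for a property key.
class EnvNames {

    // MicroProfile Config convention: replace every character that is not
    // alphanumeric or '_' with '_', then convert the result to upper case.
    static String toEnvName(String property) {
        return property.replaceAll("[^A-Za-z0-9_]", "_").toUpperCase(Locale.ROOT);
    }

    public static void main(String[] args) {
        // prints QUARKUS_DATASOURCE_PASSWORD
        System.out.println(toEnvName("quarkus.datasource.password"));
    }
}
```

With this convention, a line such as QUARKUS_DATASOURCE_PASSWORD=secret in the .env file sets the quarkus.datasource.password property.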

Similarly to Spring, Quarkus supports different profiles depending on the target environment. This may be achieved by adding a profile name to the application.properties file name, e.g.:

application.properties – base configuration

application-dev.properties – extended configuration for the dev environment. When the dev profile is active, it will add (or override) the configuration to the base profile.

It is also possible to build a configuration hierarchy with an additional layer between the base and the profile-specific configuration. The parent profile is named using quarkus.config.profile.parent in the application-<profile>.properties file. E.g., by specifying quarkus.config.profile.parent=common in the application-dev.properties file, we’ll get a three-level hierarchy:

application.properties – base configuration

application-common.properties – overrides/extends base configuration

application-dev.properties – overrides/extends common profile configuration
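The lookup order of such a hierarchy can be modeled in plain Java with chained java.util.Properties objects, where each layer falls back to the one before it. This is only a sketch of the override order, not how SmallRye Config is implemented, and the property keys (app.name, app.timeout, app.log-level) are made up for the example.

```java
import java.util.Properties;

// Plain-Java model of the three-level lookup order; no Quarkus classes are used.
class ProfileLayers {

    // Builds base -> common -> dev; a key missing in one layer is looked up in its parent.
    static Properties devConfig() {
        Properties base = new Properties();          // stands in for application.properties
        base.setProperty("app.name", "demo");
        base.setProperty("app.timeout", "30");
        base.setProperty("app.log-level", "INFO");

        Properties common = new Properties(base);    // stands in for application-common.properties
        common.setProperty("app.timeout", "60");     // overrides the base value

        Properties dev = new Properties(common);     // stands in for application-dev.properties
        dev.setProperty("app.log-level", "DEBUG");   // overrides the base value
        return dev;
    }

    public static void main(String[] args) {
        Properties config = devConfig();
        System.out.println(config.getProperty("app.name"));      // inherited from base
        System.out.println(config.getProperty("app.timeout"));   // taken from common
        System.out.println(config.getProperty("app.log-level")); // taken from dev
    }
}
```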

Spring has a similar setting called spring.config.import, which imports properties from a specified file rather than creating a hierarchy.

Dependency injection

Quarkus uses the Jakarta EE Contexts and Dependency Injection engine. Similarly to Spring, Quarkus uses the concept of a bean. A bean is an object managed by an application environment (container) whose creation and behavior can be controlled by the developer in a declarative style using annotations. For injecting beans, just as in Spring Boot, we may use field, setter, and constructor injection.

Beans can be created by annotating the bean class with a scoped annotation:

@ApplicationScoped

public class DataService {

public void writeData() {

//...

}

}

Compare with a similar bean in Spring Boot:

@Service

public class DataService {

public void writeData() {

//...

}

}

Another way to define beans is to use producer methods or fields. This is similar to @Configuration classes in Spring Boot.

Quarkus:

// Define beans

@ApplicationScoped

public class SettingsProducer {

@Produces

String appToken = "123abc";

@Produces

Map<String, String> settings() {

return Map.of(

"setting 1", "value1",

"setting 2", "value2"

);

}

@Produces

MyBean myBean() {

return new MyBean(); // custom class

}

}

// Inject beans:

@ApplicationScoped

public class DataService {

@Inject

private String appToken;

@Inject

private Map<String, String> settings;

@Inject

private MyBean myBean;

//…

}

Equivalent Spring Boot code:

// Configuration:

@Configuration

public class SettingsProducer {

@Bean

String appToken() {

return "123abc";

}

@Bean

Map<String, String> settings() {

return Map.of(

"setting 1", "value1",

"setting 2", "value2"

);

}

@Bean

MyBean myBean() {

return new MyBean();

}

}

// Injecting beans (@Bean applies to methods only, so the class is registered with @Service):

@Service

public class DataService {

@Autowired

private String appToken;

@Autowired

@Qualifier("settings") // needed to explicitly tell spring that Map itself is a bean

private Map<String, String> settings;

@Autowired

private MyBean myBean;

//…

}

Quarkus also provides built-in mechanisms for interceptors, decorators, and event handling using Jakarta EE annotations and classes.

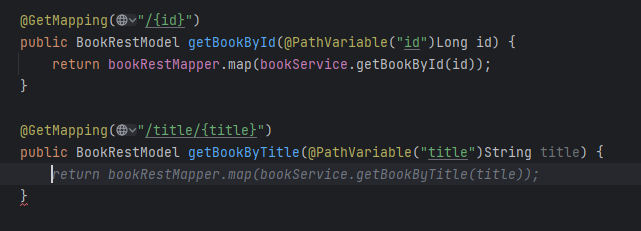

RESTful API

Building RESTful services with Quarkus is enabled by the RESTEasy Reactive framework, which is an implementation of the Jakarta REST specification. An example class that handles endpoint requests:

@Path("/api")

public class DataResource {

@GET

@Path("resource")

public String getData() {

return "My resource";

}

@POST

@Path("add/{data}")

public String addData(String data, @RestQuery String type) {

return "Adding data: " + data + " of type: " + type;

}

}

The class-level @Path annotation defines the root path of the URL. Each method carries an HTTP method annotation (@GET, @POST) and a @Path annotation that becomes a subpath of the root path. That is, in the example above, getData() is called for a GET /api/resource request, and addData() is called for a POST /api/add/some_text?type=text request. The path component some_text is passed as the data parameter of addData(), and the value of the type query parameter, text, is passed as the type parameter.
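To make the mapping concrete, here is a plain-Java sketch that decomposes a request URI the same way: it extracts the {data} path segment and the type query parameter. It only illustrates the binding described above; it is not how RESTEasy Reactive routes requests, and the PathDemo class and its regular expression are hypothetical.

```java
import java.net.URI;
import java.util.regex.Matcher;
import java.util.regex.Pattern;

// Hypothetical illustration of how POST /api/add/{data}?type=... maps to addData(data, type).
class PathDemo {

    // Root path "/api" joined with the subpath "add/{data}"; the group captures {data}.
    private static final Pattern ADD = Pattern.compile("^/api/add/([^/]+)$");

    // Returns what addData() would return for this URI, or null if the path does not match.
    static String route(String requestUri) {
        URI uri = URI.create(requestUri);
        Matcher m = ADD.matcher(uri.getPath());
        if (!m.matches()) {
            return null;
        }
        String data = m.group(1);
        String type = null;
        String query = uri.getQuery();
        if (query != null) {
            for (String pair : query.split("&")) {
                String[] kv = pair.split("=", 2);
                if (kv.length == 2 && kv[0].equals("type")) {
                    type = kv[1];
                }
            }
        }
        return "Adding data: " + data + " of type: " + type;
    }

    public static void main(String[] args) {
        // prints "Adding data: some_text of type: text"
        System.out.println(route("/api/add/some_text?type=text"));
    }
}
```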

In Spring Boot, the above endpoint implementation using Spring Web is very similar:

@RestController

@RequestMapping("/api")

public class TestController {

@GetMapping("resource")

public String hello() {

return "My resource";

}

@PostMapping("add/{data}")

public String addData(@PathVariable String data, @RequestParam String type) {

return "Adding data: " + data + " of type: " + type;

}

}

Security

Quarkus has its own security framework, similarly to Spring Boot. Quarkus Security has built-in authentication mechanisms (such as Basic and form-based authentication) and can use well-known external mechanisms, such as OpenID Connect or WebAuthn. It also contains security annotations for role-based access control. On the other hand, Spring Security offers more capabilities and is more flexible than Quarkus Security. E.g., the @Secured and @PreAuthorize Spring annotations are not provided natively by Quarkus. However, Quarkus can be integrated with Spring Security to use functionality that only Spring Security provides.

Cloud integration

Quarkus supports building container images when creating an application. The following container technologies are supported:

- Docker. To generate a Dockerfile that allows building a Docker image, the quarkus-container-image-docker extension is used.

- Jib. Jib container images can be built by using the quarkus-container-image-jib extension. For Jib containerization, Quarkus caches the app dependencies in a layer separate from the application. This makes rebuilding the application fast and reduces the amount of data pushed with each container update. Moreover, it makes it possible to build apps and containers without any client-side Docker tooling when only a push to the Docker registry is needed.

- OpenShift. To build an OpenShift container, the quarkus-container-image-openshift extension is needed. The container is built by uploading only an artifact and its dependencies to the cluster, where they are merged into the container. OpenShift builds require the creation of a BuildConfig and two ImageStream resources: one for the builder image and one for the output image. The objects are created by the Quarkus Kubernetes extension.

- Buildpack. The quarkus-container-image-buildpack extension uses buildpacks to create container images. Internally, the Docker service is used.

Quarkus is positioned as Kubernetes-native. It provides a Quarkus Kubernetes extension that allows the deployment of apps to Kubernetes. This simplifies the workflow and, for simple applications, allows deploying to Kubernetes in one step without deep knowledge of the Kubernetes API itself. Adding the quarkus-kubernetes dependency produces an auto-generated Kubernetes manifest in the target directory. The manifest is ready to be applied to the cluster using the following example command:

kubectl apply -f target/kubernetes/kubernetes.json

Quarkus also creates a Dockerfile for the Docker image used for deployment. Application properties of the Quarkus app allow the configuration of container images to set their names, groups, etc. Kubernetes-related application properties allow the setup of a generated resource. E.g., quarkus.kubernetes.deployment-kind sets the resource kind to Deployment, StatefulSet, or Job.

Spring Boot also supports containerization and Kubernetes. However, this support is not available out of the box. The setup and deployment require much more configuration and technology-specific knowledge compared to how it is implemented in Quarkus. Quarkus reduces boilerplate code and setup since it has native configuration for Kubernetes and containerization.

Production readiness and technical support

Quarkus is a relatively new framework compared to Spring/Spring Boot. The initial Quarkus release took place on May 20, 2019. The first production Spring Framework release was on March 24, 2004, and Spring Boot was first released on April 1, 2014. Quarkus releases new versions a bit more frequently than Spring Boot, although the frequency is very good for both frameworks. There are 3-4 Quarkus releases per month and 5-6 releases of Spring Boot.

When it comes to bugs, there are 8289 total issues marked as bugs on GitHub for Quarkus, and only 881 of them are open. For Spring Boot, there are 3473 total issues marked as bugs, and only 66 of them are open. Bugs are fixed faster for Spring Boot, possibly because it has a larger supporting community, as Spring is much older.

Spring Boot has long-term support (LTS) versions that are maintained (updates/bug fixes) for a longer period of time without introducing major changes. Such an approach gives more stability and allows the use of the older version of your application that is getting all the critical fixes (e.g., fixes of known vulnerabilities). At the same time, you may work on the newer releases of your product to integrate and test the latest version of the framework. Spring Boot offers one year of regular support for each LTS edition and more than two years of commercial support.

Quarkus recently introduced LTS versions supported for one year. They plan to release a new LTS version every six months. Currently, Quarkus does not have commercial support for LTS versions.

Summary

In this article, we described key features of the Quarkus framework and compared them with the equivalent features in Spring Boot. Let’s summarize the main pros and cons of both frameworks in every area addressed.

- Application Configuration

Both Spring Boot and Quarkus have well-developed systems of configuration management. Even though Quarkus has the ability to build a simple three-level hierarchy of profiles, both frameworks can handle app configuration at a good level to satisfy app development needs.

- Dependency Injection

Both frameworks use a similar approach with beans injected at run time and use annotations to define bean types, assign bean information, and specify injection points.

- RESTful API

Spring Boot and Quarkus have a very similar approach to writing RESTful APIs, with no major differences.

- Security

Quarkus provides the same authorization methods as Spring and has built-in integration with well-known authentication mechanisms. Spring Security offers more security-related annotations, although some of them can be used in Quarkus as it has the ability to integrate with the Spring Security framework.

- Cloud integration

Quarkus has native support for containerization and Kubernetes. It provides more capabilities and is easier to use in cloud-native environments and tools compared to Spring Boot. This may be a key factor in choosing between Spring Boot and Quarkus for the development of a new app designed for a cloud-native environment.

- Production Readiness and Technical Support

Spring Boot is a much older framework. It has a larger support community and more users. It provides more robust LTS versions with the ability to extend tech support even more with LTS commercial support. Quarkus, on the other hand, has just recently introduced LTS versions with a shorter support period and doesn’t have commercial options. However, both frameworks are stable, bugs are continuously fixed, and new versions are released frequently.

For most of its features, Quarkus implements Jakarta EE specifications, while Spring Boot includes its own solutions. Implementing the specification offers the advantage of creating a more consistent and robust API, as opposed to using non-standard methods. On the other hand, Spring Boot is quite an old and well-known framework, and its features have proven to be stable and robust over time.

Android Automotive OS 11 Camera2 and EVS - Two different camera subsystems up and running

Android Automotive OS, AAOS in short, is a vehicle infotainment operating system that has gained a lot of traction recently, with most of the OEMs around the world openly announcing new versions of their infotainment based on Android. AAOS is based on the AOSP (Android Open Source Project) source code, which makes it fully compatible with Android, with additions that make it more useful in cars – different UI, integration with hardware layer, or vehicle-specific apps.

For OEMs and Tier1s, who are deeply accustomed to infotainment based on QNX/Autosar/Docker/Linux, and software developers working on AAOS apps, it’s sometimes difficult to quickly spin up a development board or emulator supporting external hardware that has no out-of-the-box emulation built by Google. One common example is camera access, which is currently missing in the official AAOS emulator, although the hardware itself is quite common in modern vehicles. This makes implementing applications similar to Zoom or MS Teams for AAOS tempting to app developers.

In this article, I will explain how to build a simple test bench based on a cost-effective Raspberry Pi board and AAOS for developers to test their camera application. Examples will be based on AAOS 11 running on Raspberry Pi 4 and our Grape Up repository. Please check our previous article: " Build and Run Android Automotive OS on Raspberry Pi 4B " for a detailed description of how to run AAOS on this board.

Android Automotive OS has two different subsystems for accessing platform cameras: Camera2 and EVS. In this article, I will explain how to use both of them and how to get them running on Android Automotive OS 11.

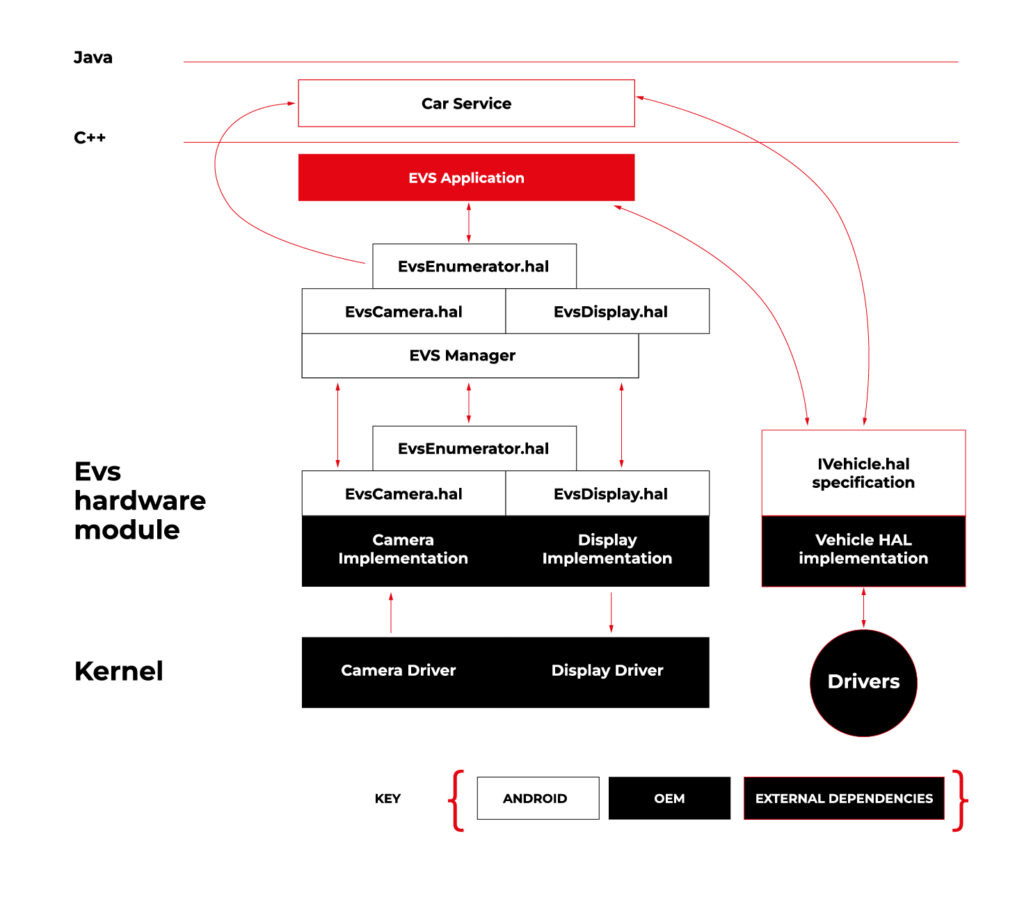

Exterior View System (EVS)

EVS is a subsystem for displaying parking and maneuvering camera images. It supports access to and display of multiple cameras. The main goal and advantage of this subsystem is that it boots quickly and should display a parking view within 2 seconds, which is required by law.

Source https://source.android.com/docs/automotive/camera-hal

As you can see in the attached diagram, the low layers of EVS depend on OEM source code. The OEM needs to deliver the Camera and Display implementation. However, Android delivers a sample application (/hardware/interfaces/automotive/evs/1.0), which uses Linux V4L2 and OpenGL to grab camera frames and display them. You can find more information about EVS at https://source.android.com/docs/automotive/camera-hal

In our example, we will use samples from Android. Additionally, I assume you have built our Raspberry Pi image (see our article), as it has multiple changes that allow AAOS to reliably run on RPi4 and support its hardware.

You should have a camera connected to your board via USB. Please check if your camera is detected by V4L2. There should be a device file:

/dev/video0

Then, type on the console:

su

setprop persist.automotive.evs.mode 1

This will start the EVS system.

To display camera views:

evs_app

Type Ctrl-C to exit the app and go back to the normal Android view.

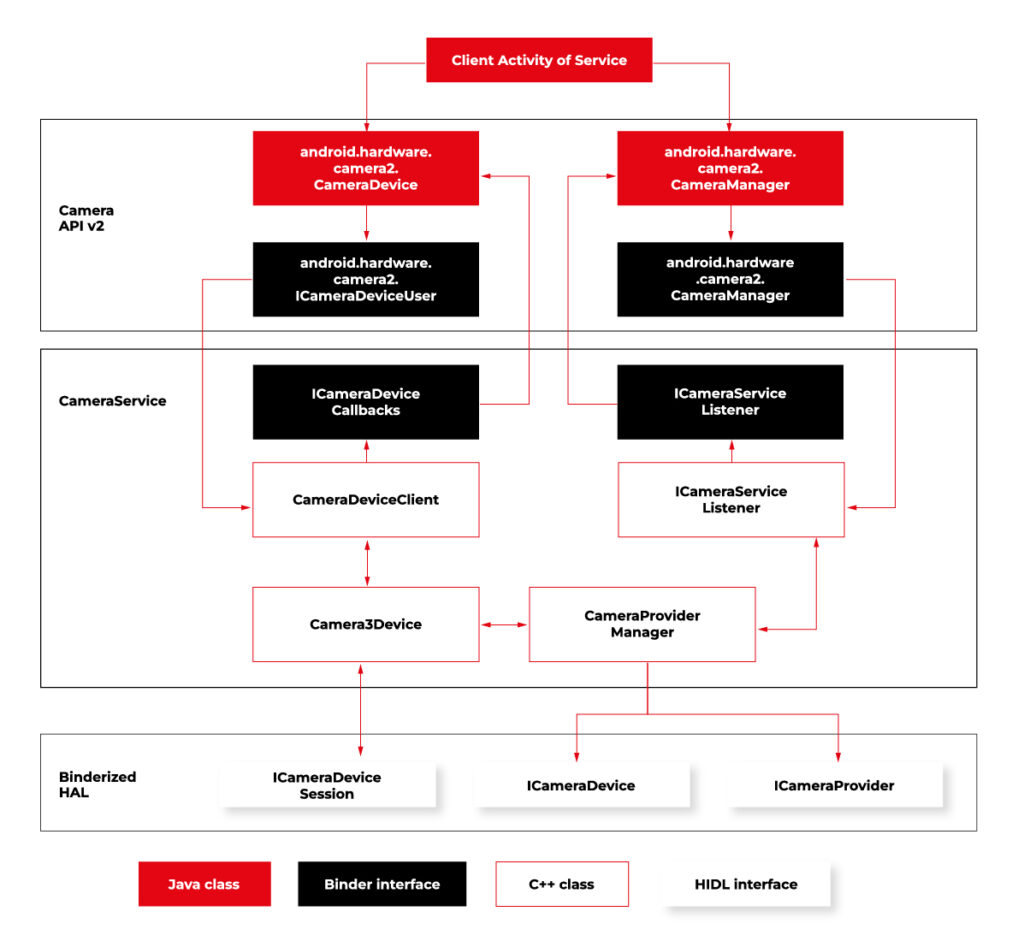

Camera2

Camera2 is a subsystem intended for camera access by “normal” Android applications (smartphones, tablets, etc.). It is a common system for all Android applications, recently slowly being replaced by CameraX. The developer of an Android app uses Java camera API to gain access to the camera.

Camera2 has three main layers, which are shown in the diagram below:

Source https://source.android.com/docs/core/camera

Low-level Camera access is implemented in CameraProvider. OEM can implement their own provider or a V4L2 camera driver can be used.

To get Camera2, you should enable it in the Car product makefile. In packages/services/Car/car_product/build/car_base.mk, change config.disable_cameraservice to false:

PRODUCT_PROPERTY_OVERRIDES += config.disable_cameraservice=false

After that, rebuild Android:

make ramdisk systemimage vendorimage

Put the built image on the SD card and boot the RPi with it. You will be able to run the “Camera” application on the AAOS screen, see camera output from the connected webcam, and run and debug applications using the Camera API.

Summary

Now you know how to run both AAOS camera APIs on the RPi4 board. You can use both APIs to develop automotive applications leveraging cameras and test them using a simple USB webcam, which you may have somewhere on the shelf. If you found this article useful, you can also look at our previous articles about AAOS, both from the application development perspective and the OS perspective. Happy coding!

Unveiling the EU Data Act: Automotive industry implications

Fasten your seatbelts! The EU Data Act aims to drive a paradigm shift in the digital economy, and the automotive industry is about to experience a high-octane transformation. Get ready to explore the user-centric approach, new data-sharing mechanisms, and the roadmap for OEMs to adapt and thrive in the European data market. Are you prepared for this journey?

Key takeaways

- The EU Data Act grants users ownership and control of their data while introducing obligations for automotive OEMs to ensure fair competition.

- The Act facilitates data sharing between users, enterprises, and public sector bodies to promote innovation in the European automotive industry.

- Automotive OEMs must invest in resources and technologies to comply with the EU Data Act regulations for optimal growth opportunities.

The EU Data Act and its impact on the automotive industry

The EU Data Act applies to manufacturers, suppliers, and users of products or services placed on the market in the EU, as well as data holders and recipients based in the EU.

What is the EU Data Act regulation?

The EU Data Act is a proposed regulation that seeks to harmonize rules on fair access to and use of data in the European Union. The regulation sets out clear guidelines on who is obliged to surrender data, who can access it, how it can be used, and for what specific purposes it can be utilized.

In June 2023, the European Union took a significant step towards finalizing the Data Act, marking a pivotal moment in data governance. While the Act awaits formal adoption by the Council and Parliament following a legal-linguistic revision, the recent informal political agreement suggests its inevitability. This groundbreaking regulation will accelerate the monetization of industrial data while ensuring a harmonized playing field across the European Union.

User-centric approach

The European Data Act is revving up the engines of change in the automotive sector, putting users in the driver’s seat of their data and imposing specific obligations on OEMs. This means that connected products and related services must provide users with direct access to data generated in-vehicle, without any additional costs, and in a secure, structured, and machine-readable format.

Data handling by OEMs

A significant change is about to happen in data practices, particularly for OEMs operating in the automotive industry. Manufacturers and designers of smart products, such as smart cars, will be required to share data with users and authorized third parties. This shared data includes a wide range of information:

Included in the Sharing Obligation: The data collected during the user's interaction with the smart car that includes information about the car's operation and environment. This information is gathered from onboard applications such as GPS and sensor images, hardware status indications, as well as data generated during times of inaction by the user, such as when the car is on standby or switched off. Both raw and pre-processed data are collected and analyzed.

Excluded from the Sharing Obligation: Insights derived from raw data, any data produced when the user engages in activities like content recording or transmitting, and any data from products designed to be non-retrievable are not shared.

Sharing mechanisms and interactions

Data holders must make vehicle-generated data available (including associated metadata) promptly, without charge, and in a structured, commonly used, machine-readable format.

The legal basis for sharing personal data with connected vehicle users and legal entities or data recipients other than the user varies depending on the data subject and the sector-specific legislation to be presented.

Data access and third-party services

The Data Act identifies eligible entities for data sharing, encompassing both physical persons, such as individual vehicle owners or lessees, and legal persons, like organizations operating fleets of vehicles.

Requesting data sharing

Data can be accessed by users who are recipients either directly from the device's storage or from a remote server that captures the data. In cases where the data cannot be accessed directly, the manufacturers must promptly provide it.

The data must be free, straightforward, secure, and formatted for machine readability, and its quality should be maintained where necessary. There may be contracts that limit or deny access or further distribution of data if it breaches legal security requirements. This is a critical aspect for smart cars where sharing data might pose a risk to personal safety.

If the recipient of the data is a third party, they cannot use the data to create competing products, only for maintenance. They cannot share the data unless it is for providing a user service, and they cannot prevent users who are consumers from sharing it with other parties.

Fair competition and trade secrets

The Data Act mandates that manufacturers share data, even when it is protected by trade secret laws. However, safeguards exist, allowing OEMs to impose confidentiality obligations and withhold data sharing in specific circumstances. These provisions ensure a balance between data access and trade secret protection. During the final negotiations on the Data Act, safeguarding trade secrets was a primary focus.

The Data Act now has provisions to prevent potential abusive behavior by data holders. It also includes an exception to data-sharing that permits manufacturers to reject certain data access requests if they can prove that such access would result in the disclosure of trade secrets, leading to severe and irreversible economic losses.

Connected vehicle data

Connected vehicle data takes the spotlight under the EU Data Act, empowering users with real-time access to their data and enabling data sharing with repair or service providers.

The implementation of the Data Act heavily involves connected cars. As per the Act, users, including companies, have the right to access the data collected by vehicles. However, manufacturers have the option to limit access under exceptional circumstances. This has a significant impact on data collection practices in the automotive sector.

Preparing for the EU Data Act: A guide for automotive OEMs

To stay ahead of the curve, OEMs must understand the business implications of the Data Act, adapt to new regulations, and invest in the necessary resources and technologies to ensure compliance.

As connected vehicles become the norm, OEMs that embrace the Data Act will be well-positioned to capitalize on new opportunities and drive growth in the European automotive sector.

Business implications

The EU Data Act imposes significant business implications on automotive OEMs, necessitating changes in their data handling practices and adherence to new obligations. As the industry embraces the user-centric approach to data handling, OEMs must design connected products and related services that provide users with access to their in-vehicle data.

To ensure a smooth transition and maintain a competitive edge, automotive OEMs must undertake a tailored and strategic preparation process.

Adapting to new regulations

Failure to comply with the Data Act could result in legal and financial repercussions for automotive OEMs. In order to avoid any possible problems, they should invest in the necessary resources and technologies to ensure compliance with the regulations of the Data Act.

They should also engage proactively with the requirements of the Data Act and implement compliance measures strategically.

By taking the following steps, automotive OEMs can navigate the regulatory landscape effectively and seize growth opportunities in the European automotive sector:

In-Depth Knowledge: Dive deep into the EU Data Act, with a special focus on its impact on the automotive industry. Recognize that the automotive sector is central to this regulation, requiring industry-specific understanding.

Data Segmentation: Perform a comprehensive analysis of your data, categorizing it into distinct groups. Identify which data types fall within the purview of the EU Data Act.

Compliance Framework Development:

- Internal Compliance: Audit and update policies to comply with the EU Data Act. Develop a data governance framework for access, sharing, and privacy.

- Data Access Protocols: Establish unambiguous protocols for data access and sharing, including procedures for obtaining user consent, data retrieval, and sharing modalities.

Data Privacy and Security:

- Data Safeguards: Enhance data privacy and security, including encryption and access controls.

Data Utilization: Develop plans for leveraging this data to generate new revenue streams while adhering to the EU Data Act's mandates.

User Engagement and Consent:

- Transparency: Forge clear and transparent channels of communication with users. Keep users informed about data collection, sharing, and usage practices, and obtain well-informed consent.

- Consent Management: Implement robust consent management systems to efficiently monitor and administer user consent. Ensure that users maintain control over their data.

Legal Advisors: Engage legal experts well-versed in data protection and privacy laws, particularly those relevant to the automotive sector. Seek guidance for interpreting and implementing the EU Data Act within your specific industry context.

Data Access Enhancement: Invest in technology infrastructure to facilitate data access and sharing as per the EU Data Act's stipulations. Ensure that data can be easily and securely provided in the required format.

Employee Education: Educate your workforce on the intricacies of the EU Data Act and its implications for daily operations. Ensure that employees possess a strong understanding of data protection principles.

Ongoing Compliance Oversight: Establish mechanisms for continuous compliance monitoring. Regularly assess data practices, consent management systems, and data security protocols to identify and address compliance gaps.

Collaboration with Peers: Collaborate closely with industry associations, fellow automotive OEMs, and stakeholders to share insights, best practices, and strategies for addressing the specific challenges posed by the EU Data Act in the automotive sector.

Future-Ready Solutions: Develop adaptable and scalable solutions that accommodate potential regulatory landscape shifts. Remain agile and prepared to adjust strategies as needed.

Boosting innovation capabilities

The Data Act may bring some challenges, but it also creates a favorable environment for innovation. By making industrial data more accessible, the Act offers a huge potential for data-driven businesses to explore innovative business models. Adapting to the Act can improve a company's ability to innovate, allowing it to use data as a strategic asset for growth and differentiation.

Summary

The EU Data Act is driving a paradigm shift in the automotive sector, putting users in control of their data and revolutionizing the way OEMs handle, share, and access vehicle-generated data.

By embracing the user-centric approach, ensuring compliance with data sharing and processing provisions, and investing in innovation capabilities, data holders can unlock new opportunities and drive growth in the European automotive market.

It's time for OEMs to take actionable steps to comply with the new regulation. Read this guide on building EU Data Act-compliant connected car software to learn what they are.


Predictive maintenance in automotive manufacturing

Our initial article on predictive maintenance covered the definition of such a system, its construction, and the key implementation challenges. In this part, we'll delve into how PdM technology is transforming different facets of the automotive industry and its advantages for OEMs, insurers, car rental companies, and vehicle owners.

Best predictive maintenance techniques and where you can use them

In the first part of the article, we discussed the importance of sensors in a PdM system. These sensors are responsible for collecting data from machines and vehicles, and they can measure various variables like temperature, vibration, pressure, or noise. Placing these sensors properly on the machines and connecting them to IoT solutions enables the transfer of data to the central repository of the system. After processing the data, we obtain information about specific machines or their parts that are prone to damage or downtime.

The automotive industry can benefit greatly from implementing these top predictive maintenance techniques.

Vibration analysis

How does it work?

Machinery used in the automotive industry and car components have a specific frequency of vibration. Deviations from this standard pattern can indicate "fatigue" of the material or interference from a third-party component that may affect the machine's operation. The PdM system enables you to detect these anomalies and alert the machine user before a failure occurs.
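As a minimal sketch of this kind of anomaly detection, the Java example below compares the RMS amplitude of a new window of vibration samples against a baseline recorded while the machine was healthy. RMS amplitude is a deliberately simpler stand-in for the frequency analysis described above, and the class name, the baseline values, and the threshold factor k are all assumptions made for illustration.

```java
// Hypothetical sketch: flags vibration windows whose RMS amplitude deviates from a healthy baseline.
class VibrationMonitor {

    // Root mean square of one window of vibration samples.
    static double rms(double[] window) {
        double sumSquares = 0;
        for (double v : window) {
            sumSquares += v * v;
        }
        return Math.sqrt(sumSquares / window.length);
    }

    // True when the window's RMS is more than k standard deviations away
    // from the mean of the baseline RMS values.
    static boolean isAnomalous(double[] baselineRms, double[] window, double k) {
        double mean = 0;
        for (double r : baselineRms) {
            mean += r;
        }
        mean /= baselineRms.length;

        double variance = 0;
        for (double r : baselineRms) {
            variance += (r - mean) * (r - mean);
        }
        double std = Math.sqrt(variance / baselineRms.length);

        return Math.abs(rms(window) - mean) > k * std;
    }

    public static void main(String[] args) {
        double[] baseline = {1.0, 1.1, 0.9, 1.0};   // assumed RMS values from healthy operation
        double[] healthy = {1.0, -1.0, 1.0, -1.0};
        double[] worn = {3.0, -3.0, 3.0, -3.0};
        System.out.println(isAnomalous(baseline, healthy, 3.0)); // prints false
        System.out.println(isAnomalous(baseline, worn, 3.0));    // prints true
    }
}
```

A production system would typically work on frequency spectra and learned thresholds rather than a fixed k, but the alerting logic follows the same pattern: establish a baseline, measure the deviation, and raise an alert before a failure occurs.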

What can be detected?

The technique is mainly applied to high-speed rotating equipment. Vibration and oscillation analysis can detect issues such as bent shafts, loose mechanical components, engine problems, misalignment, and worn bearings or shafts.

Infrared thermography analysis

How does it work?

The technique involves using infrared cameras to detect thermal anomalies. This technology can identify malfunctioning electrical circuits, sensors or components that are emitting excessive heat due to overheating or operating at increased speeds. With this advanced technology, it's possible to anticipate and prevent such faults, and even create heat maps that can be used in predictive models and maintenance of heating systems.
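The idea of finding thermal anomalies in a heat map can be sketched in a few lines of Java. The example assumes the infrared camera delivers a frame as a grid of temperatures in degrees Celsius and flags every cell that is hotter than the frame average by more than a chosen margin. The class name, the frame values, and the margin are hypothetical.

```java
import java.util.ArrayList;
import java.util.List;

// Hypothetical sketch: locates hot spots in one infrared frame.
class ThermalScan {

    // Returns the {row, column} of every cell hotter than the frame mean by more than marginCelsius.
    static List<int[]> hotspots(double[][] frame, double marginCelsius) {
        double sum = 0;
        int count = 0;
        for (double[] row : frame) {
            for (double temperature : row) {
                sum += temperature;
                count++;
            }
        }
        double mean = sum / count;

        List<int[]> result = new ArrayList<>();
        for (int r = 0; r < frame.length; r++) {
            for (int c = 0; c < frame[r].length; c++) {
                if (frame[r][c] - mean > marginCelsius) {
                    result.add(new int[] {r, c});
                }
            }
        }
        return result;
    }

    public static void main(String[] args) {
        double[][] frame = {
            {40, 41, 40},
            {40, 95, 41},   // the center cell stands for an overheating component
            {39, 40, 40}
        };
        for (int[] cell : hotspots(frame, 20.0)) {
            System.out.println("Hot spot at row " + cell[0] + ", column " + cell[1]);
        }
    }
}
```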

What can be detected?

Infrared analysis is a versatile and non-invasive method that can be used on a wide scale. It is suitable for individual components, parts, and entire industrial facilities, and can detect rust, delamination, wear, or heat loss on various types of equipment.

Acoustic analysis monitoring

How does it work?

Machines produce sound waves while operating, and these waves can indicate equipment failure or an approaching critical point. The amplitude and character of these waves are specific to each machine. Even if the sound is too quiet for humans to hear in the initial phase of malfunction, sensors can detect abnormalities and predict when a failure is likely to occur.

What can be detected?

This PdM technology is relatively cheap compared to others, but it does have some limitations in terms of usage. It is widely used in the oil and gas industry to detect gas and liquid leaks. In the automotive industry, it is commonly used for detecting vacuum leaks, unwanted friction, and stress on machine parts.

Motor circuit analysis

How does it work?

The technique works through electrical signature analysis (ESA). It involves measuring the supply voltage and operating current of an electric motor, which makes it possible to locate and identify problems in the motor's components.

What can be detected?

Motor circuit analysis is a powerful tool that helps identify issues related to various components, such as bearings, rotor, clutch, stator winding, or system load irregularities. The main advantage of this technique is its short testing time and convenience for the operator, as it can be carried out in just two minutes while the machine is running.

PdM oil analysis

How does it work?

An effective, non-destructive method for predictive maintenance is to analyze oil samples taken from equipment. By analyzing the viscosity of the sample and the size of the particles in it, along with detecting the presence or absence of foreign substances such as water, metals, acids, or bases, we can obtain valuable information about mechanical damage, erosion, or overheating of specific parts.

What can be detected?

Detecting anomalies early is crucial for hydraulic systems that consist of rotating and lubricating parts, such as pistons in a vehicle engine. By identifying issues promptly, effective solutions can be developed and potential damage to the equipment or a failure can be prevented.

Computer vision

How does it work?

Computer vision is revolutionizing the automotive industry by leveraging AI-based technology to enhance predictive maintenance processes. It achieves this by analyzing vast datasets, including real-time sensor data and historical performance records, to rapidly predict equipment wear and tear. By identifying patterns, detecting anomalies, and issuing early warnings for potential equipment issues, computer vision enables proactive maintenance scheduling.

What can be detected?

In the automotive industry, computer vision technology plays a crucial role in detecting equipment wear and tear patterns to predict maintenance requirements. It can also identify manufacturing defects such as scratches or flaws, welding defects in automotive components, part dimensions and volumes to ensure quality control, surface defects related to painting, tire patterns to match with wheels, and objects for robotic guidance and automation.

Who can benefit from predictive maintenance, and how

Smart maintenance systems analyze multiple variables and provide a comprehensive overview, which can benefit several stakeholders in the automotive industry. These stakeholders range from vehicle manufacturing factories and the supply chain to service and dealerships, rental companies, insurance companies, and drivers.

Below, we have outlined the primary benefits that these stakeholders can enjoy. In the OEMs section, we have provided examples of specific implementations and case studies from the market.

Car rentals

Fleet health monitoring and better prediction of the service time

Managing service and repairs for a large number of vehicles can be costly and time-consuming for rental companies. When vehicles break down or are out of service while in the possession of customers, it can negatively impact the company’s revenue. To prevent this, car rental companies need constant insight into the condition of their vehicles and the ability to predict necessary maintenance. This allows them to manage their service plan more efficiently and minimize the risk of vehicle failure while on the road.

Car dealerships

Reducing breakdown scenarios

Car dealerships use predictive maintenance primarily to anticipate mechanical issues before they develop into serious problems. This approach helps ensure that vehicles sold or serviced by them are in optimal condition, which aids in preventing breakdowns or major faults for the customer down the line. By analyzing data from the vehicle's onboard sensors and historical maintenance records, dealerships can identify patterns that signify potential future failures. Predictive maintenance also benefits dealerships by allowing for proactive communication with vehicle owners, reducing breakdown scenarios, and enhancing customer satisfaction.

Vehicle owners

Peace of mind

Periodic maintenance recommendations for vehicles are traditionally based on analyzing historical data from a large population of vehicle owners. However, each vehicle is used differently and could benefit from a tailored maintenance approach. Vehicles with high mileage or heavy usage should undergo more frequent oil changes than those that are used less frequently. By monitoring the actual vehicle condition and wear, owners can ensure that their vehicles are always at 100% and can better manage and plan for maintenance expenses.

Insurance companies

Risk & fraud

By using data from smart maintenance systems, insurance companies can enhance their risk modeling. The analysis of this data allows insurers to identify the assets that are at higher risk of requiring maintenance or replacement and adjust their premiums accordingly. In addition, smart maintenance systems can detect any instances of tampering with the equipment or negligence in maintenance. This can aid insurers in recognizing fraudulent claims.

OEMs' successful development of PdM systems

BMW Group case study

The German brand implements various predictive maintenance tools and technologies, such as sensors, data analytics, and artificial intelligence, to prevent production downtime, promote sustainability, and ensure efficient resource utilization in its global manufacturing network. These innovative, cloud-based solutions are playing a vital role in enhancing their manufacturing processes and improving overall productivity.

The BMW Group's approach involves:

- Forecasting phenomena and anomalies using a cloud-based platform. Individual software modules within the platform can be easily switched on and off if necessary to instantly adapt to changing requirements. The high degree of standardization between individual components allows the system to be globally accessible. Moreover, it is highly scalable and allows new application scenarios to be easily implemented.

- Optimizing component replacements (this uses advanced real-time data analytics).

- Carrying out maintenance and service work in line with the requirements of the actual status of the system.

- Anomaly detection using advanced AI predictive algorithms.

Meanwhile, it should be taken into account that in BMW's body and paint shop alone, welding guns perform some 15,000 spot welds per day. At the BMW Group's plant in Regensburg, the conveyor systems' control units run 24/7. So any downtime is a huge loss.

→ SOURCE case study.

Ford case study

Predictive vehicle maintenance is one of the benefits offered to drivers and automotive service providers as part of Ford's partnerships with CARUSO and HIGH MOBILITY. In late 2020, Ford announced two new connected car agreements to potentially enable vehicle owners to benefit from a personalized third-party offer.

CARUSO and HIGH MOBILITY will function as an online data platform that is completely independent of Ford and allows third-party service providers secure and compliant access to vehicle-generated data. This access will, in turn, enable third-party providers to create personalized services for Ford vehicle owners. This will enable drivers to benefit from smarter insurance, technical maintenance and roadside recovery.

Sharing vehicle data (warning codes, GPS location, etc.) via an open platform is expected to be a way to maintain competitiveness in the connected mobility market.

→ SOURCE case study.

Predictive maintenance is the future of the automotive market

An effective PdM system means less time spent on equipment maintenance, savings on spare parts, the elimination of unplanned downtime, and better management of company resources. With that come more efficient production and greater customer and employee satisfaction.

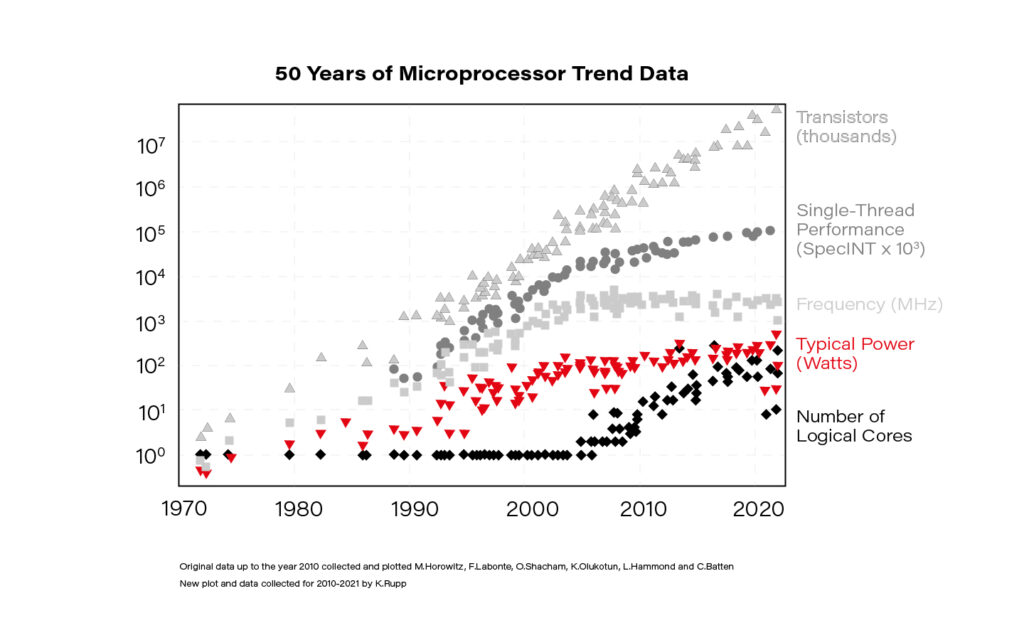

As the data shows, organizations that have implemented a PdM system report an average decrease of 55% in unplanned equipment failures. Another upside is that, compared to other connected car systems (such as infotainment systems), PdM is relatively easy to monetize. Data here can remain anonymous, and all parties involved in the production and operation of the vehicle reap the benefits.

Organizations have come to recognize the hefty returns on investment provided by predictive maintenance solutions and have thus adopted them on a global scale. According to Market Research Future, the global Predictive Maintenance market is projected to grow to 111.30 billion by 2030, suggesting that further growth is possible in the future.

Android Automotive OS 14 is out – build your own emulator from scratch!

Android Automotive OS 14 has arrived, and it marks a significant evolution in the way users interact with their vehicle's system. Like non-automotive Android 14, this version brings an enhanced user experience, an improved Android API, and better OS-level security. In this short article, we'll walk you through a tutorial on creating your own emulator from scratch, but first, here are some of the standout features and improvements introduced in Android Automotive OS 14!

Android Automotive 14 noteworthy new features

- Enhanced UI: Now with an optional, improved home screen adaptation to the portrait mode for better vehicle compatibility.

- Multi-User Upgrades: Supports parallel sessions with custom sound zones and multiple displays.

- Remote Access: Enables the system to wake up, execute a task, and then shut down in response to external requests.

- Extended VHAL: More ADAS and non-ADAS properties included to represent activation status and the system state.

- App Quick Actions: A feature that allows applications to showcase quick actions.

- Infotainment Reference Design: The starting point for developers to create apps for Android Automotive OS.

- New Boot Animation: Well, as usual 😊

To learn about all new features provided in Android Automotive 14, follow this link: https://source.android.com/docs/automotive/start/releases/u_udc_release?hl=en

Steps to building an emulator

The best operating system for building an AAOS emulator is Ubuntu 18.04 or higher. If you use a different operating system, you must follow some extra steps. For instance, you may need to install repo from https://gerrit.googlesource.com/git-repo instead of using a package manager.

1) You need first to install the required dependencies

sudo apt install git-core gnupg flex bison build-essential zip curl zlib1g-dev libc6-dev-i386 libncurses5 x11proto-core-dev libx11-dev lib32z1-dev libgl1-mesa-dev libxml2-utils xsltproc unzip fontconfig repo

2) Then, configure Git, set your name and email address

git config --global user.name "Your name"

git config --global user.email your@email

3) After configuring Git, you can download source code from a Git repository

repo init -u https://android.googlesource.com/platform/manifest -b android-14.0.0_r54 --partial-clone --clone-filter=blob:limit=10M && repo sync

You can skip --partial-clone and --clone-filter. However, this will result in longer download times. It’s recommended to check for the latest android-14.0.0_rXX tag before downloading, which can be found on this page: https://android.googlesource.com/platform/manifest/+refs.

Keep in mind that downloading takes a lot of time because the sources take about 150GB even with partial clone and clone-filter enabled.

4) In the next step, set up environment variables using the script provided

. build/envsetup.sh

This method replaces your JAVA_HOME and modifies PATH, so be aware that your console may act differently now.

5) Select the system to build

lunch sdk_car_portrait_x86_64-eng

You can create a landscape build by removing "portrait". Also, change x86_64 to arm64 if you want to run the system on Mac. For more details on building on Mac, check out this article.

6) Create the system and the emulator image

m && m emu_img_zip

The first command will take hours to complete. Take a break: go running, biking, hiking, or whatever drives you. You can change the number of threads used by the build system with the -j parameter, for example m -j 16. The default is the CPU count of your machine.

7) Copy the emulator image to Android Studio emulator directory

mkdir -p /mnt/c/Users/<user>/AppData/Local/Android/Sdk/system-images/android-34/custom_aaos_14/ && unzip -o out/target/product/emulator_x86_64/sdk-repo-Linux-system-images-eng.dape.zip -d /mnt/c/Users/<user>/AppData/Local/Android/Sdk/system-images/android-34/custom_aaos_14/

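I assume you work on a Windows machine with WSL. Please adapt the above commands to your Android SDK directory if you are working on native Linux. The archive name appears to include the name of the user who ran the build (dape in the command above), so the file name on your machine will likely differ.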

Create a package.xml file in the /mnt/c/Users/<user>/AppData/Local/Android/Sdk/system-images/android-34/custom_aaos_14/x86_64 directory with this content. The provided file is based on existing package.xml files in other emulator images.

Adjust “tag”, “vendor”, and “display name” in the file above if needed. Make sure that <localPackage obsolete="false" path="system-images;android-34;custom_aaos_14;x86_64"> matches the path where you placed the emulator image.

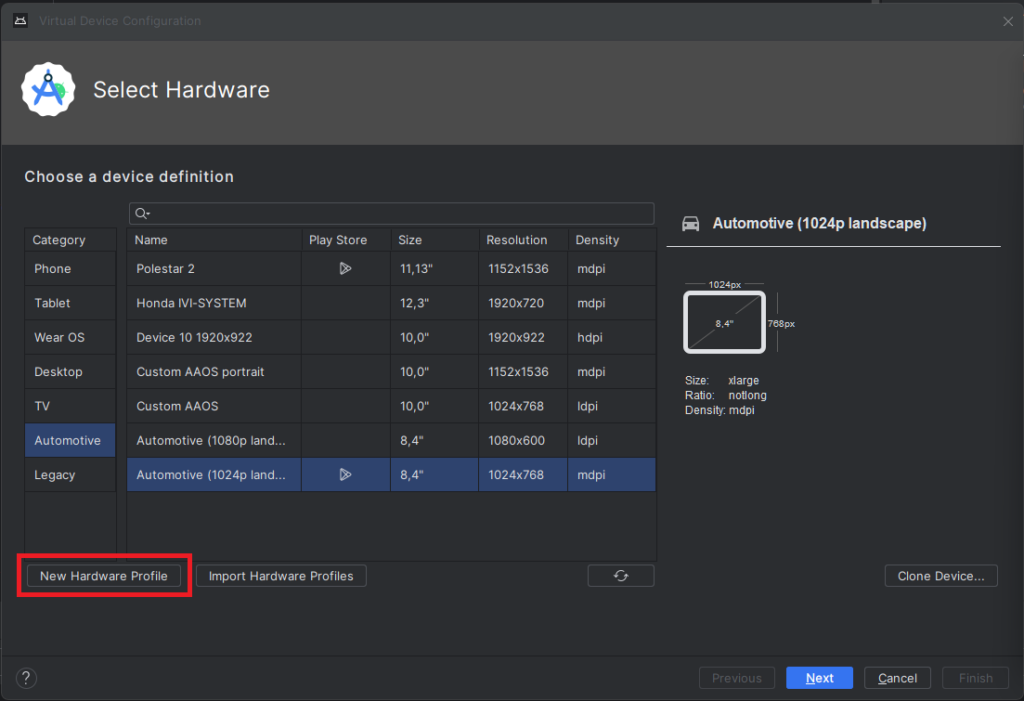

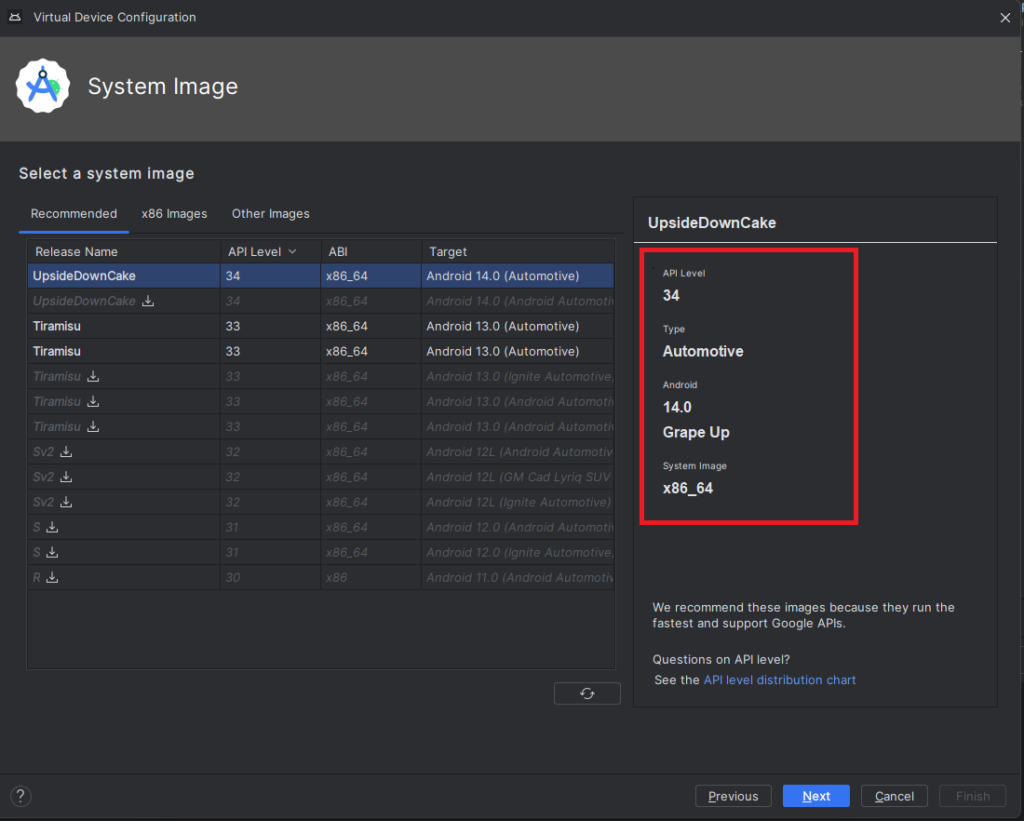

8) Now it’s time to create a new emulator in Android Studio

Open "Device Manager" and select "Create Virtual Device". In the left-hand menu, choose "Automotive" and add a new hardware profile using the button in the lower-left corner of the panel.

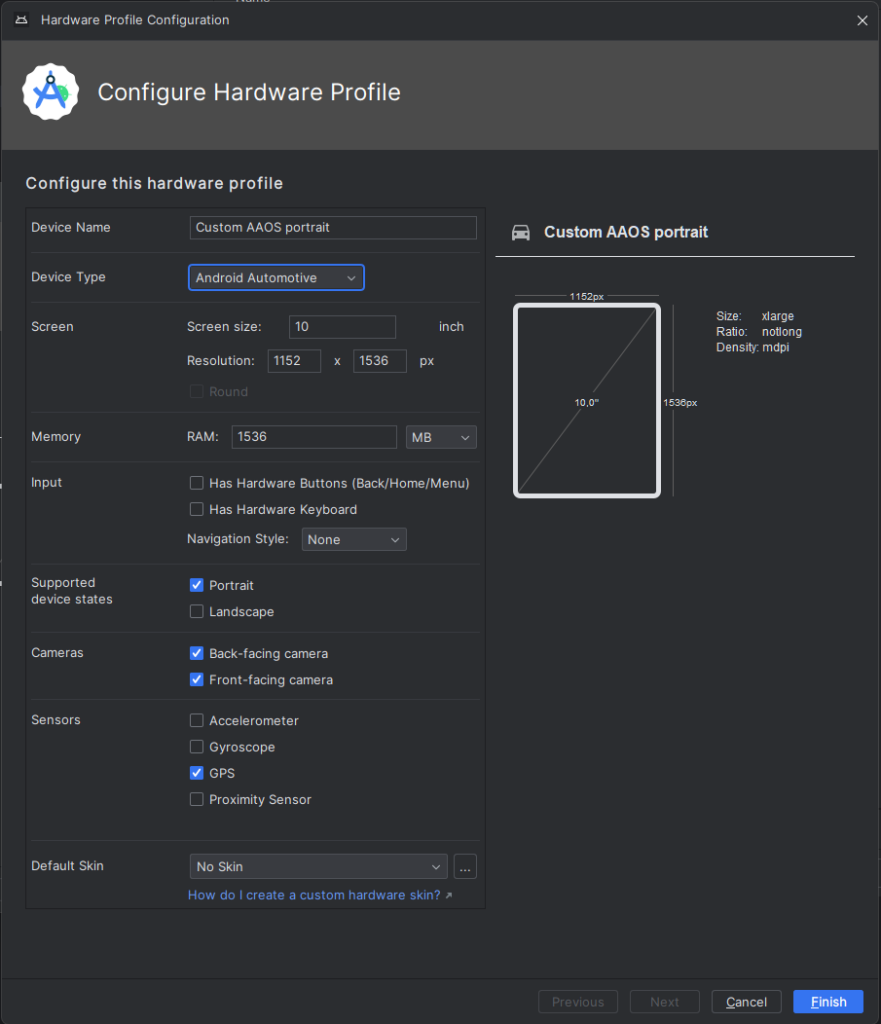

Select “Android Automotive” as a device type. Choose the correct resolution for your build. For example, I selected a resolution of 1152x1536 for a 10-inch device to create a portrait build. Next, allocate at least 1536 MB of RAM to your device. Then, choose only one supported device state - "Portrait" or "Landscape" - according to your build. Finally, disable any unnecessary sensors and skin for AAOS compatibility.

9) Accept and select your new hardware profile. Then, move on to the next step

10) Pick your emulator image (you can find it using the tag and vendor configured in package.xml)

11) On the final screen, enter a name and complete the configuration process

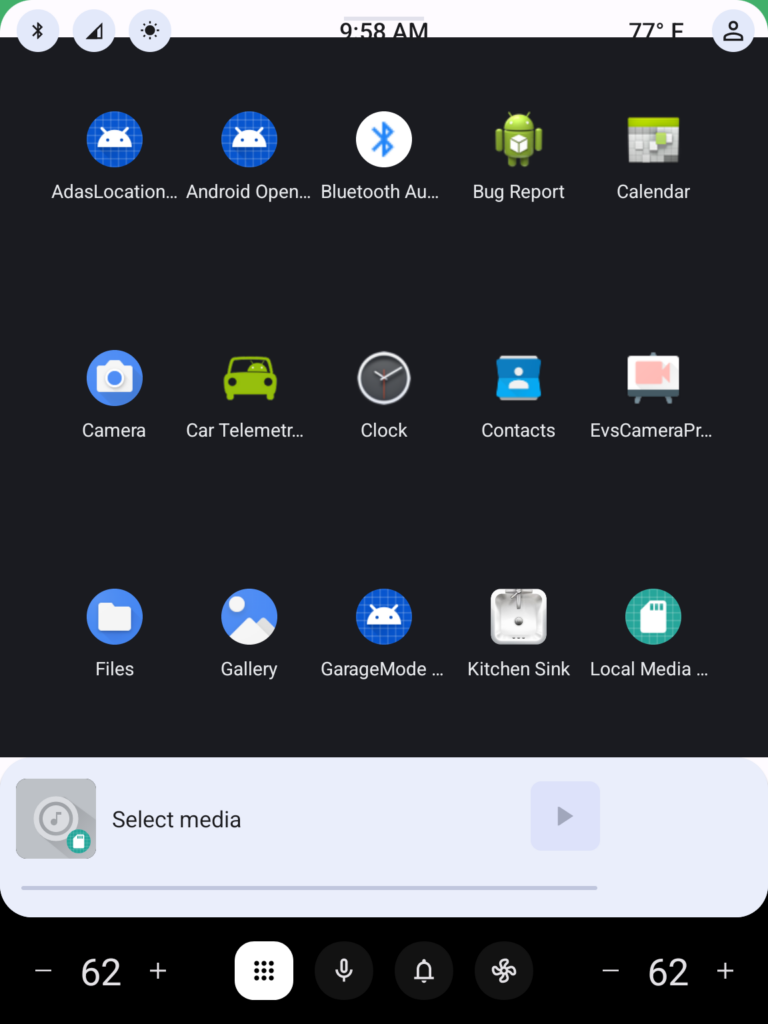

12) To start the emulator, go to the "Device Manager" and launch it from there

13) You’re all set! Enjoy!

Get started on creating your very own Android Automotive OS 14 emulator by following the steps outlined in this article. Explore the possibilities of car technology and discover what the future has in store. You can find an AAOS “Hello World” example in our article How to Build Your First App for Android Automotive OS. Start building, try out the various features, and have fun with your new setup!

Unleashing the full potential of MongoDB in automotive applications

Welcome to the second part of our article series about MongoDB in automotive. In the previous installment, we explored the power of MongoDB as a versatile data management solution for the automotive industry, focusing on its flexible data model, scalability, querying capabilities, and optimization techniques.

In this continuation, we will delve into two advanced features of MongoDB that further enhance its capabilities in automotive applications: time-series data management and change streams. By harnessing the power of time-series collections and change streams, MongoDB opens up new possibilities for managing and analyzing real-time data in the automotive domain. Join us as we uncover the potential of these advanced features and their impact on automotive applications.

Time-series data management in MongoDB for automotive

Managing time-series data effectively is a critical aspect of many automotive applications. From sensor data to vehicle telemetry, capturing and analyzing time-stamped data is essential for monitoring performance, detecting anomalies, and making informed decisions. MongoDB offers robust features and capabilities for managing time-series data efficiently. This section will explore the key considerations and best practices for leveraging MongoDB's time-series collections.

Understanding time-series collections

Introduced in MongoDB version 5.0, time-series collections provide a specialized data organization scheme optimized for storing and retrieving time-series data. Time-series data consists of a sequence of data points collected at specific intervals, typically related to time. This could include information such as temperature readings, speed measurements, or fuel consumption over time.

MongoDB employs various optimizations in a time-series collection to enhance performance and storage efficiency. One of the notable features is the organization of data into buckets. Data points within a specific time range are grouped in these buckets. This time range, often referred to as granularity, determines the level of detail or resolution at which data points are stored within each bucket. This bucketing approach offers several advantages.

Firstly, it improves query performance by enabling efficient data retrieval within a particular time interval. With data organized into buckets, MongoDB can quickly identify and retrieve the relevant data points, reducing the time required for queries.

Secondly, bucketing allows for efficient storage and compression of data within each bucket. By grouping data points, MongoDB can apply compression techniques specific to each bucket, optimizing disk space utilization. This helps to minimize storage requirements, especially when dealing with large volumes of time-series data.
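To make the bucketing idea more concrete, here is a simplified model in plain JavaScript. It is not MongoDB's internal implementation: the one-hour window, the `meta` and `timestamp` field names, and the `bucketMeasurements` helper are assumptions chosen for illustration.

```javascript
// Simplified model of bucketing: groups measurements by source (meta) and by
// a fixed time window. MongoDB derives its real bucket spans from the
// configured granularity; the one-hour window here is only an assumption.
function bucketMeasurements(measurements, windowMs = 60 * 60 * 1000) {
  const bucketsByKey = new Map();
  for (const m of measurements) {
    const windowStart = Math.floor(m.timestamp.getTime() / windowMs) * windowMs;
    const key = `${m.meta}|${windowStart}`;
    if (!bucketsByKey.has(key)) {
      bucketsByKey.set(key, { meta: m.meta, windowStart: new Date(windowStart), points: [] });
    }
    bucketsByKey.get(key).points.push(m);
  }
  return [...bucketsByKey.values()];
}

const speedReadings = [
  { meta: 'car-1', timestamp: new Date('2024-01-01T10:05:00Z'), speed: 50 },
  { meta: 'car-1', timestamp: new Date('2024-01-01T10:45:00Z'), speed: 62 },
  { meta: 'car-1', timestamp: new Date('2024-01-01T11:10:00Z'), speed: 58 },
  { meta: 'car-2', timestamp: new Date('2024-01-01T10:20:00Z'), speed: 40 },
];

const speedBuckets = bucketMeasurements(speedReadings);
console.log(speedBuckets.length); // 3
```

A query for one vehicle and one hour only has to touch a single bucket in this model, which is the intuition behind the performance and compression benefits described above.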

Choosing sharded or non-sharded collections

When working with time-series data, you have the option to choose between sharded and non-sharded collections. Sharded collections distribute data across multiple shards, enabling horizontal scalability and accommodating larger data volumes. However, it's essential to consider the trade-offs associated with sharding. While sharded collections offer increased storage capacity, they may introduce additional complexity and potentially impact performance compared to non-sharded collections.

In most cases, non-sharded collections are sufficient for managing time-series data, especially when proper indexing and data organization strategies are employed. Non-sharded collections provide simplicity and optimal performance for most time-series use cases, eliminating the need for managing a sharded environment.

Effective data compression for time-series collections

Given the potentially large volumes of data generated by time-series measurements, efficient data compression techniques are crucial for optimizing storage and query performance. MongoDB provides built-in compression options that reduce data size, minimizing storage requirements and facilitating faster data transfer. Using compression, MongoDB significantly reduces the disk space consumed by time-series data while maintaining fast query performance.

Compression in MongoDB is handled by the WiredTiger storage engine. WiredTiger offers block compression algorithms (snappy, zlib, and zstd) that efficiently compress and decompress data, reducing disk space utilization. This is particularly beneficial for time-series collections, where data points are stored over specific time intervals.

By leveraging WiredTiger compression, MongoDB achieves an optimal balance between storage efficiency and query performance for time-series collections. The compressed data takes up less space on disk, resulting in reduced storage costs and improved overall system scalability. Additionally, compressed data can be transferred more quickly across networks, improving data transfer speeds and reducing network bandwidth requirements.

Considerations for granularity and data retention

When designing a time-series data model in MongoDB, granularity and data retention policies are important factors to consider. Granularity refers to the level of detail or resolution at which data points are stored, while data retention policies determine how long the data is retained in the collection.

Choosing the appropriate granularity is crucial for striking a balance between data precision and performance. MongoDB provides different granularity options, such as "seconds," "minutes," and "hours," each covering a specific time span. Selecting the granularity depends on the time interval between consecutive data points that have the same unique value for a specific field, known as the meta field. You can optimize storage and query performance by aligning the granularity with the ingestion rate of data from a unique data source.

For example, if you collect temperature readings from weather sensors every five minutes, setting the granularity to "minutes" would be appropriate. This ensures that data points are grouped in buckets based on the specified time span, enabling efficient storage and retrieval of time-series data.
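The sketch below shows one way to derive the collection options from the ingestion interval. The thresholds in `chooseGranularity` are a rule of thumb assumed for this example, and the `timestamp` and `sensorId` field names and the `weatherReadings` collection name are hypothetical.

```javascript
// Rule-of-thumb mapping from the interval between readings of one source
// to a granularity value. The thresholds are assumptions for illustration.
function chooseGranularity(intervalSeconds) {
  if (intervalSeconds < 60) return 'seconds';
  if (intervalSeconds < 3600) return 'minutes';
  return 'hours';
}

// Builds the options object accepted by createCollection for a
// time-series collection.
function timeSeriesOptions(timeField, metaField, intervalSeconds) {
  return {
    timeseries: {
      timeField,
      metaField,
      granularity: chooseGranularity(intervalSeconds),
    },
  };
}

// Weather sensors reporting every five minutes.
const weatherOptions = timeSeriesOptions('timestamp', 'sensorId', 5 * 60);
console.log(weatherOptions.timeseries.granularity); // minutes

// With a connected `db` handle (mongosh or the Node.js driver):
//   db.createCollection('weatherReadings', weatherOptions);
```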

In addition to granularity, defining an effective data retention policy is essential for managing the size and relevance of the time-series collection over time. Consider factors such as the retention period for data points, the frequency of purging outdated data, and the impact on query performance.

MongoDB provides a Time to Live (TTL) mechanism that can automatically remove expired data points from a time-series collection based on a specified time interval. However, it's important to note that there is a known issue related to TTL for very old records in MongoDB at the time of writing this article. The issue is described in detail in the MongoDB Jira ticket SERVER-76560.

The TTL behavior in time series collections differs from regular collections. In a time series collection, TTL expiration occurs at the bucket level rather than on individual documents within the bucket. Once all documents within a bucket have expired, the entire bucket is removed during the next run of the background task that removes expired buckets.

This bucket-level expiration behavior means that TTL may not work in the exact same way as with normal collections, where individual documents are removed as soon as they expire. It's important to be aware of this distinction and consider it when designing your data retention strategy for time series collections.
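The difference can be illustrated with a small model in plain JavaScript. This is a simplified illustration of bucket-level expiry rather than MongoDB code, and the bucket shape and the `expiredBuckets` helper are assumptions made for the example.

```javascript
// Simplified model: a bucket becomes removable only when every document in
// it is older than the TTL cutoff.
function expiredBuckets(bucketList, now, ttlSeconds) {
  const cutoff = now.getTime() - ttlSeconds * 1000;
  return bucketList.filter((bucket) =>
    bucket.timestamps.every((t) => t.getTime() < cutoff)
  );
}

const checkTime = new Date('2024-01-02T00:00:00Z');
const ttlBuckets = [
  { id: 'A', timestamps: [new Date('2024-01-01T10:00:00Z'), new Date('2024-01-01T10:30:00Z')] },
  { id: 'B', timestamps: [new Date('2024-01-01T11:30:00Z'), new Date('2024-01-01T12:30:00Z')] },
];

// TTL of 12 hours, so the cutoff is 2024-01-01T12:00:00Z.
const removableIds = expiredBuckets(ttlBuckets, checkTime, 12 * 3600).map((b) => b.id);
console.log(removableIds); // [ 'A' ]
```

Bucket B stays in place even though its 11:30 document is already past the cutoff, because the 12:30 document in the same bucket has not expired yet.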

When considering granularity and data retention policies, evaluate the specific requirements of your automotive application. Consider the level of precision needed for analysis, the data ingestion rate, and the desired storage and query performance. By carefully evaluating these factors and understanding the behavior of TTL in time series collections, you can design a time-series data model in MongoDB that optimizes both storage efficiency and query performance while meeting your application's needs.

Retrieving latest documents

In automotive applications, retrieving the latest documents for each unique meta key can be a common requirement. MongoDB provides an efficient approach to achieve this: an aggregation pipeline that the query planner can execute with the `DISTINCT_SCAN` stage. Let's explore how you can use this feature, along with an automotive example.

The `DISTINCT_SCAN` stage is designed to perform distinct scans on sorted data in an optimized manner. By leveraging the sorted nature of the data, it efficiently speeds up the process of identifying distinct values.

To illustrate its usage, let's consider a scenario where you have a time series collection of vehicle data that includes meta information and timestamps. You want to retrieve the latest document for each unique vehicle model. Here's an example code snippet demonstrating how to accomplish this:

```javascript
db.vehicleData.aggregate([
  { $sort: { metaField: 1, timestamp: -1 } },
  {
    $group: {
      _id: "$metaField",
      latestDocument: { $first: "$$ROOT" }
    }
  },
  { $replaceRoot: { newRoot: "$latestDocument" } }
])
```

In the above code, we first use the `$sort` stage to sort the documents based on the `metaField` field in ascending order and the `timestamp` field in descending order. This sorting ensures that the latest documents appear first within each group.

Next, we employ the `$group` stage to group the documents by the `metaField` field and select the first document using the `$first` operator. This operator retrieves the first document encountered in each group, corresponding to the latest document for each unique meta key.

Finally, we utilize the `$replaceRoot` stage to promote the `latestDocument` to the root level of the output, effectively removing the grouping and retaining only the latest documents.

By utilizing this approach, you can efficiently retrieve the latest documents per each meta key in an automotive dataset. The `DISTINCT_SCAN` stage optimizes the distinct scan operation, while the `$first` operator ensures accurate retrieval of the latest documents.

It's important to note that the `DISTINCT_SCAN` stage is an internal optimization technique of MongoDB's aggregation framework. It is automatically applied when the conditions are met, so you don't need to specify or enable it in your aggregation pipeline explicitly.
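To see what result the pipeline is expected to produce, the same sort, group, and take-first logic can be reproduced on an in-memory array. This is only a plain JavaScript illustration with made-up sample documents; it says nothing about how MongoDB executes the query.

```javascript
// Mirrors the pipeline: sort by metaField ascending and timestamp descending,
// then keep the first document seen for each metaField.
function latestPerMeta(documents) {
  const sorted = [...documents].sort((a, b) => {
    if (a.metaField !== b.metaField) return a.metaField < b.metaField ? -1 : 1;
    return b.timestamp - a.timestamp; // newest first within a meta key
  });
  const latest = new Map();
  for (const doc of sorted) {
    if (!latest.has(doc.metaField)) latest.set(doc.metaField, doc); // like $first
  }
  return [...latest.values()];
}

const sampleVehicleData = [
  { metaField: 'model-x', timestamp: new Date('2024-01-01T08:00:00Z'), speed: 40 },
  { metaField: 'model-y', timestamp: new Date('2024-01-01T09:00:00Z'), speed: 55 },
  { metaField: 'model-x', timestamp: new Date('2024-01-01T10:00:00Z'), speed: 70 },
];

const latestDocs = latestPerMeta(sampleVehicleData);
console.log(latestDocs.map((d) => `${d.metaField}:${d.speed}`)); // [ 'model-x:70', 'model-y:55' ]
```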

Time series collection limitations

While MongoDB Time Series brings valuable features for managing time-series data, it also has certain limitations to consider. Understanding these limitations can help developers make informed decisions when utilizing MongoDB for time-series data storage:

● Unsupported Features: Time series collections in MongoDB do not support certain features, including transactions and change streams. These features are not available when working specifically with time series data.

● Aggregation $out and $merge: The $out and $merge stages of the aggregation pipeline, commonly used for storing aggregation results in a separate collection or merging results with an existing collection, are not supported in time series collections. This limitation affects the ability to perform certain aggregation operations directly on time series collections.

● Updates and Deletes: Time series collections only support insert operations and read queries. This means that once data is inserted into a time series collection, it cannot be directly modified or deleted on a per-document basis. Any updates or manual delete operations will result in an error.

MongoDB change streams for real-time data monitoring

MongoDB Change Streams provide a powerful feature for real-time data monitoring in MongoDB. Change Streams allow you to capture and react to any changes happening in a MongoDB collection in a real-time manner. This is particularly useful in scenarios where you need to track updates, insertions, or deletions in your data and take immediate actions based on those changes.

Change Streams provide a unified and consistent way to subscribe to the database changes, making it easier to build reactive applications that respond to real-time data modifications.

```javascript
// MongoDB Change Streams for real-time data monitoring
const { MongoClient } = require('mongodb');

// Connection URL
const url = 'mongodb://localhost:27017';

// Database and collection names
const dbName = 'mydatabase';
const collectionName = 'mycollection';

async function main() {
  // Recent Node.js driver versions return a promise instead of taking a callback
  const client = await MongoClient.connect(url);
  const collection = client.db(dbName).collection(collectionName);

  // Create a change stream cursor that only reports delete operations
  const changeStream = collection.watch([{ $match: { operationType: 'delete' } }]);

  // Set up event listeners for change events
  changeStream.on('change', (change) => {
    // Process the delete event
    console.log('Delete Event:', change);
    // Perform further actions based on the delete event
  });

  // Report stream errors instead of letting them go unnoticed
  changeStream.on('error', (err) => {
    console.error('Change stream error:', err);
  });

  // Keep the connection open while listening; call client.close() on shutdown
}

main().catch(console.error);
```

In this example, we use the `$match` stage in the change stream pipeline to filter for delete operations only. The `$match` stage is passed inside the pipeline array given to the `watch()` method. The `{ operationType: 'delete' }` filter ensures that only delete events will be captured by the change stream.

Now, when a delete operation occurs in the specified collection, the `'change'` event listener will be triggered, and the callback function will execute. Inside the callback, you can process the delete event and perform additional actions based on your application's requirements. It's important to note that the change stream will only provide the document ID for delete operations. The actual content of the document is no longer available. If you need the document content, you need to retrieve it before the delete operation or store it separately for reference.

One important aspect to consider is related to the inability to distinguish whether a document was removed manually or due to the Time to Live (TTL) mechanism. Change streams do not provide explicit information about the reason for document removal. This means that when a document is removed, the application cannot determine if it was deleted manually by a user or automatically expired through the TTL mechanism. Depending on the use case, it may be necessary to implement additional logic or mechanisms within the application to handle this distinction if it is critical for the business requirements.

Here are some key aspects and benefits of using MongoDB Change Streams for real-time data monitoring:

● Real-Time Event Capture: Change Streams allow you to capture changes as they occur, providing a real-time view of the database activity. This enables you to monitor data modifications instantly and react to them in real-time.

● Flexibility in Filtering: You can specify filters and criteria to define the changes you want to capture. This gives you the flexibility to focus on specific documents, fields, or operations and filter out irrelevant changes, optimizing your monitoring process.

● Data Integration and Pipelines: Change Streams can be easily integrated into your existing data processing pipelines or applications. You can consume the change events and perform further processing, transformation, or analysis based on your specific use case.

● Scalability and High Availability: Change Streams are designed to work seamlessly in distributed and sharded MongoDB environments. They leverage the underlying replica set architecture to ensure high availability and fault tolerance, making them suitable for demanding and scalable applications.

● Event-Driven Architecture: Using Change Streams, you can adopt an event-driven architecture for your MongoDB applications. Instead of continuously polling the database for changes, you can subscribe to the change events and respond immediately, reducing unnecessary resource consumption.
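As a sketch of the filtering flexibility mentioned in the list above, the helper below builds a `$match` pipeline for `watch()` from a list of operation types and a set of field values. The `buildChangeStreamPipeline` helper, the `vin` field, and the sample value are hypothetical. The example restricts itself to insert and replace events because those carry the full document by default.

```javascript
// Builds a change stream pipeline that filters by operation type and by
// exact values of fields in the changed document.
function buildChangeStreamPipeline({ operationTypes = [], fullDocumentEquals = {} } = {}) {
  const match = {};
  if (operationTypes.length > 0) {
    match.operationType = { $in: operationTypes };
  }
  for (const [field, value] of Object.entries(fullDocumentEquals)) {
    match[`fullDocument.${field}`] = value;
  }
  // An empty pipeline means "capture every change"
  return Object.keys(match).length > 0 ? [{ $match: match }] : [];
}

const vehiclePipeline = buildChangeStreamPipeline({
  operationTypes: ['insert', 'replace'],
  fullDocumentEquals: { vin: 'TESTVIN0000000001' },
});
console.log(JSON.stringify(vehiclePipeline, null, 2));

// With a connected collection handle:
//   const changeStream = collection.watch(vehiclePipeline);
```

Filtering on the server side this way keeps irrelevant events from ever reaching the application.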

MongoDB Change Streams provide a powerful mechanism for real-time data monitoring, enabling you to build reactive, event-driven applications and workflows. By capturing and processing database changes in real-time, you can enhance the responsiveness and agility of your applications, leading to improved user experiences and efficient data processing.

Another consideration is that low-frequency events may lead to an invalid resume token. Change streams rely on a resume token to keep track of the last processed change. After long periods of inactivity or with low-frequency events, the stored token can become invalid, typically because the oplog entry it refers to has already been rolled over. The application must therefore handle an invalid resume token gracefully, for example by reestablishing the change stream and reconciling any changes it may have missed, so that data integrity and consistency are preserved.
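A minimal sketch of such handling is shown below, under several assumptions: `collection` is a PyMongo-style collection accepting `watch(resume_after=...)`, the token is persisted through hypothetical `load_token` and `save_token` callbacks, and a rejected token surfaces as an exception whose `code` attribute is 286 (ChangeStreamHistoryLost) or 260 (InvalidResumeToken). Verify these codes against your server version before relying on them.

```python
from typing import Any, Callable, Dict, Optional

# Assumption: server error codes that mean the token can no longer be used.
UNRESUMABLE_CODES = {260, 286}


def consume_with_resume(
    collection: Any,
    handler: Callable[[Dict[str, Any]], None],
    load_token: Callable[[], Optional[Any]],
    save_token: Callable[[Optional[Any]], None],
    max_restarts: int = 1,
) -> int:
    """Consume a change stream and persist the resume token per event.

    If the stored token is rejected, drop it and reopen the stream from
    the current point in time. Events between the lost token and "now"
    are not replayed, so the caller may need to re-sync state separately.
    """
    token = load_token()
    restarts = 0
    handled = 0
    while True:
        try:
            with collection.watch(resume_after=token) as stream:
                for change in stream:
                    handler(change)
                    token = change["_id"]  # an event's _id is its resume token
                    save_token(token)
                    handled += 1
            return handled
        except Exception as exc:
            # With PyMongo this would typically be pymongo.errors.OperationFailure.
            unresumable = getattr(exc, "code", None) in UNRESUMABLE_CODES
            if not unresumable or restarts >= max_restarts:
                raise
            restarts += 1
            token = None
            save_token(None)
```

For collections with very few events, it can also help to persist the stream's own `resume_token` property periodically, since in PyMongo it can advance even when no matching event arrives.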

It's important to note that while Change Streams offer real-time monitoring capabilities, they should be used judiciously, considering the potential impact on system resources. Monitoring a large number of collections or frequently changing data can introduce additional load on the database, so it's essential to carefully design and optimize your Change Streams implementation to meet your specific requirements.

Conclusion

By harnessing the capabilities of MongoDB, developers can unlock a world of possibilities in modern application development. From its NoSQL model to efficient time-series data management, batch writes, data retrieval, and real-time monitoring with Change Streams, MongoDB provides a powerful toolkit. By following best practices and understanding its limitations, developers can maximize the potential of MongoDB, resulting in scalable, performant, and data-rich applications.

MongoDB can be likened to a Swiss Army Knife in the world of database systems, offering a versatile set of features and capabilities. However, it is important to note that MongoDB is not a one-size-fits-all solution for every use case. This article series aims to showcase the capabilities and potential use cases of MongoDB.

While MongoDB provides powerful features like time-series data management and change streams for real-time data monitoring, it is essential to consider alternative solutions as well. Depending on factors such as team skills, the company-adopted tools, and specific project requirements, exploring other options like leveraging IaaS provider-native solutions such as DynamoDB for specific use cases within the automotive domain may be worthwhile.

Furthermore, it is important to highlight that both articles focus primarily on using MongoDB in automotive for hot storage; cold storage for automotive applications is not covered.

When starting a new project, it is crucial to conduct thorough research, consider the specific requirements, and evaluate the available options in the market. While MongoDB may provide robust features for certain scenarios, dedicated time-series databases like InfluxDB may offer a more tailored and specialized solution for specific time-series data needs. Choosing the right tool for the job requires careful consideration and an understanding of the trade-offs and strengths of each option available.

Driving success in delivering innovation for automotive: Exploring various partnership models

The automotive sector has rapidly evolved, recognizing that cutting-edge software is now the primary factor differentiating car brands and models. Automotive leaders are reshaping the industry by creating innovative software-defined vehicles that seamlessly integrate advanced features, providing drivers with a genuinely captivating experience.

While some automotive manufacturers have begun building in-house expertise, others are turning to third-party software development companies for assistance. However, such partnerships can take on different forms depending on factors like the vendor’s size and specialization, which can sometimes lead to challenges in cooperation.

Still, success is possible if both parties adopt the right approach despite any problems that may arise. To ensure optimal collaboration, we recommend implementing the Value-Aligned Partnership concept.

By adopting this approach, vendors accept full accountability for delivery, match their contributions to the client's business goals, and use their experience to guarantee success. We use this strategy at Grape Up to ensure our clients receive the best possible results.

This article explores the advantages and challenges of working with service vendors of various sizes and specializations. It also highlights the Value-Aligned Partnership model as a way to overcome these challenges and maximize the benefits of client-vendor cooperation.

Large vendors and system integrators

Working with multi-client software vendors can pose unique challenges for automotive companies, as these vendors typically operate on a body leasing model, providing temporary skilled personnel for development services.

Furthermore, these companies may lack specialized expertise, which is particularly true for large organizations with high turnover rates in their development teams. Despite having impressive case studies, the team assigned to a specific project doesn't always possess the necessary experience due to frequent personnel changes within the company.

Moreover, big vendors usually have numerous clients and projects to manage simultaneously. This may lead to limited personalized attention and cause potential delays in delivery. Outsourcing services to countries with different time zones, quality assurance procedures, and cultural norms can further complicate the situation.

However, despite these challenges, such partnerships have significant advantages, which should be considered in the decision-making process.

Advantages of partnering with large vendors and system integrators

- Access to diverse expertise: A wide array of specialists is available for various project aspects.

- Scalability advantage: Project resource requirements are met without bottlenecks.

- Accelerated time-to-market: Development speed is increased with skilled, available teams.

- Cost-efficiency: Vendor teams offer a cost-effective alternative that reduces overhead.

- Risk management: Turnover risks are handled by vendors to ensure continuity.

- Cross-industry insights application: Diverse sector practices are applied to automotive projects.

- Agile adaptation: They adjust efficiently to changing project needs.

- Enhanced global collaboration: Creativity is boosted by leveraging time zones and diverse perspectives from vendors.

Working with smaller vendors

Your other option is to work with smaller software companies, but you should be aware of the potential challenges of such collaboration as well. For example, while they are exceptionally well-versed in a particular field or technology, they might lack the breadth of knowledge necessary to meet the needs of the industry. Additionally, limited resources or difficulties in scaling could hinder their ability to keep pace with the growing demands of the sector.

Furthermore, although these vendors excel in specific technical areas, they may struggle to provide comprehensive end-to-end solutions, leaving gaps in the development process.

Adding to these challenges, smaller companies often have a more restricted perspective due to their limited engagement in partnerships, infrequent conference attendance, and a narrower appeal that doesn't span a global audience. This can result in a lack of exposure to diverse ideas and practices, hindering their ability to innovate and adapt.

Benefits of working with smaller vendors

- Tailored excellence: Small vendors often craft industry-specific, innovative solutions without unnecessary features.

- Personal priority: They prioritize each client, ensuring dedicated attention and satisfaction.

- Flexible negotiation: Smaller companies offer negotiable terms and pricing, unlike larger counterparts.

- Bespoke solutions: They customize offerings based on your unique needs, unlike a generic approach.

- Agile responsiveness: Quick release cycles, technical agility, and transparent interactions are their strengths.

- Meaningful connections: They deeply care about your success, fostering personal relationships.

- Accountable quality: They take responsibility for their products, as development and support are integrated.

Niche specialization companies

Collaborating with a company specializing in the automotive sector offers distinct advantages, addressing challenges some large and small vendors face. Their in-depth knowledge of the automotive industry ensures tailored solutions that meet specific requirements efficiently.

As opposed to vendors who work in several industries, they are quick to adjust to shifts in the market, allowing software development projects to be successful and meet the ever-changing needs of the automobile sector.

It is important, however, to consider the potential drawbacks when entering partnerships that rely heavily on a narrow area of expertise. Niche solutions may not be versatile, and specialization can lead to higher costs and resistance to innovation. Additionally, overreliance on a single source could lead to dependency concerns and a limited perspective on the market. It is important to weigh these risks against the benefits and ensure that partnerships are balanced to avoid stagnation and limited options.

When working with software vendors, no matter their size or specialization level, it is essential to adopt the right approach to cooperation to mitigate risks and challenges.

Value-aligned partnership model explained

This cooperation model prioritizes shared values, expertise, and cultural compatibility. It's important to note, in this context, that a company's size doesn't limit its abilities to become a successful partner. Both large and small vendors can be just as driven and invested in professional development.

What matters most is a company's mindset and commitment to continuous improvement. Small and large businesses can excel by prioritizing a robust organizational culture, regular training sessions, knowledge sharing among employees, and partnerships that leverage the strengths of both parties.

Thus, the Value-Aligned Partnership is a model that brings together the benefits of working with different types of companies. It combines the diverse expertise of large vendors with the agility and tailored solutions of small companies while incorporating vast industry-specific knowledge.

In a value-aligned partnership model, the vendor goes beyond simply providing software development services. They actively engage in the journey towards success by fully immersing themselves in the client's vision. By thoroughly comprehending the customer's values and goals, a partner ensures that every contribution they make aligns seamlessly with the overall direction of the business and can even foster innovation within the client's company.

Building a strong partnership based on shared values takes time and effort, but it's worth it for the exceptional outcomes that result. Open communication, collaboration, and mutual understanding are key factors in creating a foundation for long-term cooperation and shared success between the two parties.

In the fast-paced and ever-changing automotive industry, having expertise specific to the domain is crucial. A value-aligned partner recognizes the importance of retaining in-house knowledge and skills related to the sector. As a company that prioritizes this approach, Grape Up invests in measures to minimize turnover, provide ongoing training and education, and ensure that our team possesses deep domain expertise. This commitment to automotive know-how strengthens the partnership's reliability and establishes us as a trusted, long-term ally for the automotive company.

Conclusion

The automotive industry is transforming remarkably with the rise of software-defined vehicles. OEMs realize they can't tackle this revolution alone and seek the perfect collaborators to join them on this exciting journey. These partners bring a wealth of expertise in software development, cloud technologies, artificial intelligence, and more. With their finger on the pulse of the automotive industry, they understand the ever-changing trends and challenges. They take the time to comprehend the OEM's vision, objectives, and market positioning, enabling them to provide tailored solutions that address specific needs.

How to manage an M5Stack Core2 for AWS. Part 3 – best of Micropython and C meld together

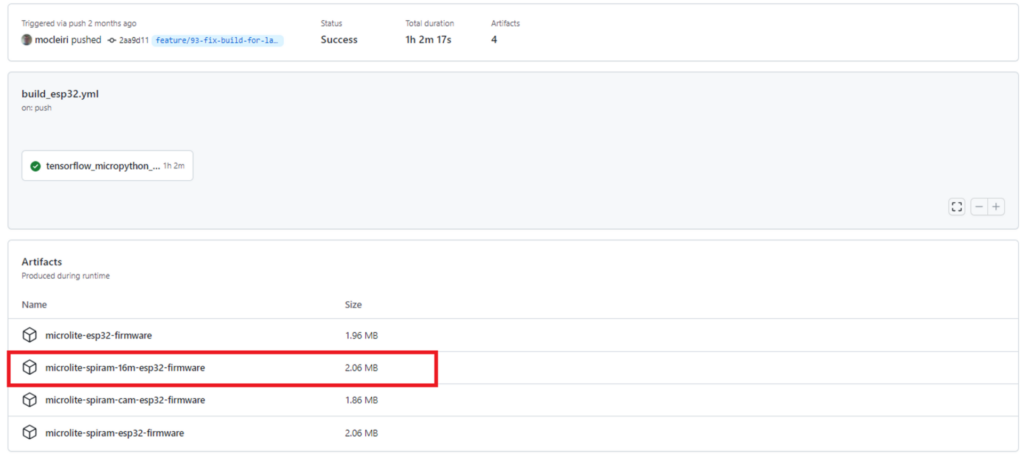

In the first part of the article, we covered Micropython usage with UiFlow and VS Code environments. In the second one, we used C/C++ for more granular device control. This time, we’ll try to run Micropython with an external library added.

Micropython with interactive console