Android AAOS 14 - EVS network camera

The automotive industry has been rapidly evolving with technological advancements that enhance the driving experience and safety. Among these innovations, the Android Automotive Operating System (AAOS) has stood out, offering a versatile and customizable platform for car manufacturers.

The Exterior View System (EVS) is a comprehensive camera-based system designed to provide drivers with real-time visual monitoring of their vehicle's surroundings. It typically includes multiple cameras positioned around the vehicle to eliminate blind spots and enhance situational awareness, significantly aiding in maneuvers like parking and lane changes. By integrating with advanced driver assistance systems, EVS contributes to increased safety and convenience for drivers.

For more detailed information about EVS and its configuration, we highly recommend reading our article "Android AAOS 14 - Surround View Parking Camera: How to Configure and Launch EVS (Exterior View System)." This foundational article provides essential insights and instructions that we will build upon in this guide.

The latest Android Automotive Operating System, AAOS 14, presents new possibilities, but it does not natively support Ethernet cameras. In this article, we describe how we integrated an Ethernet camera with the Exterior View System (EVS) on Android.

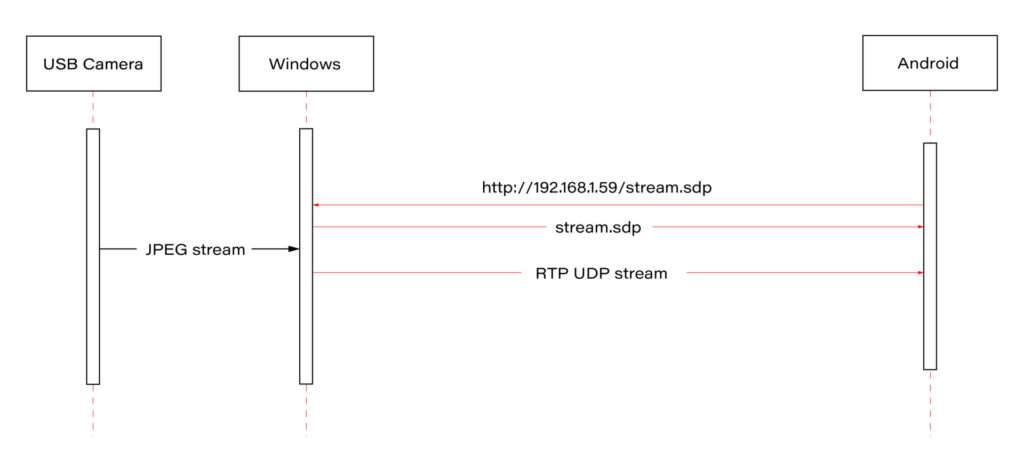

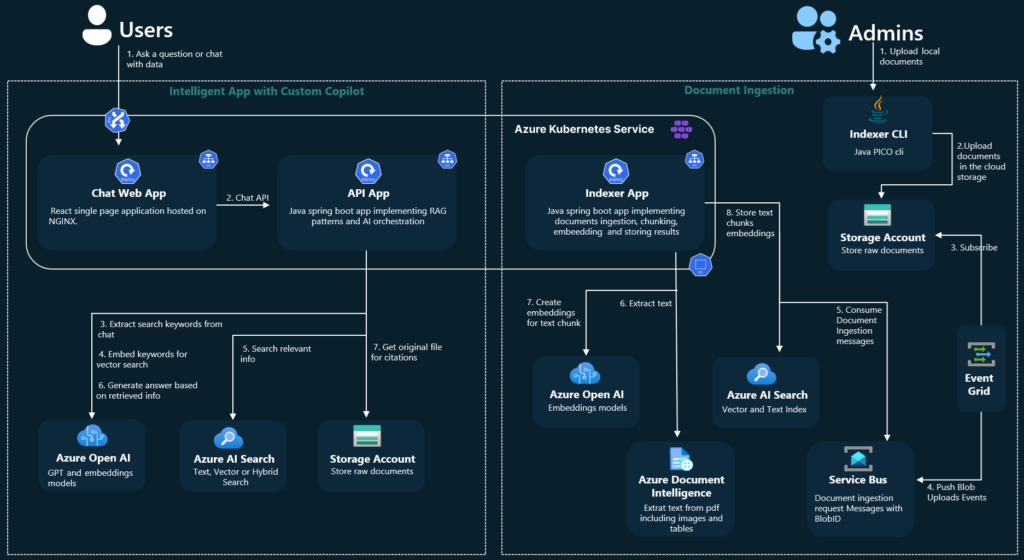

Our approach involves connecting a USB camera to a Windows laptop and streaming the video using the Real-time Transport Protocol (RTP). By employing the powerful FFmpeg software, the video stream will be broadcast and described in an SDP (Session Description Protocol) file, accessible via an HTTP server. On the Android side, we'll utilize the FFmpeg library to receive and decode the video stream, effectively bringing the camera feed into the AAOS 14 environment.

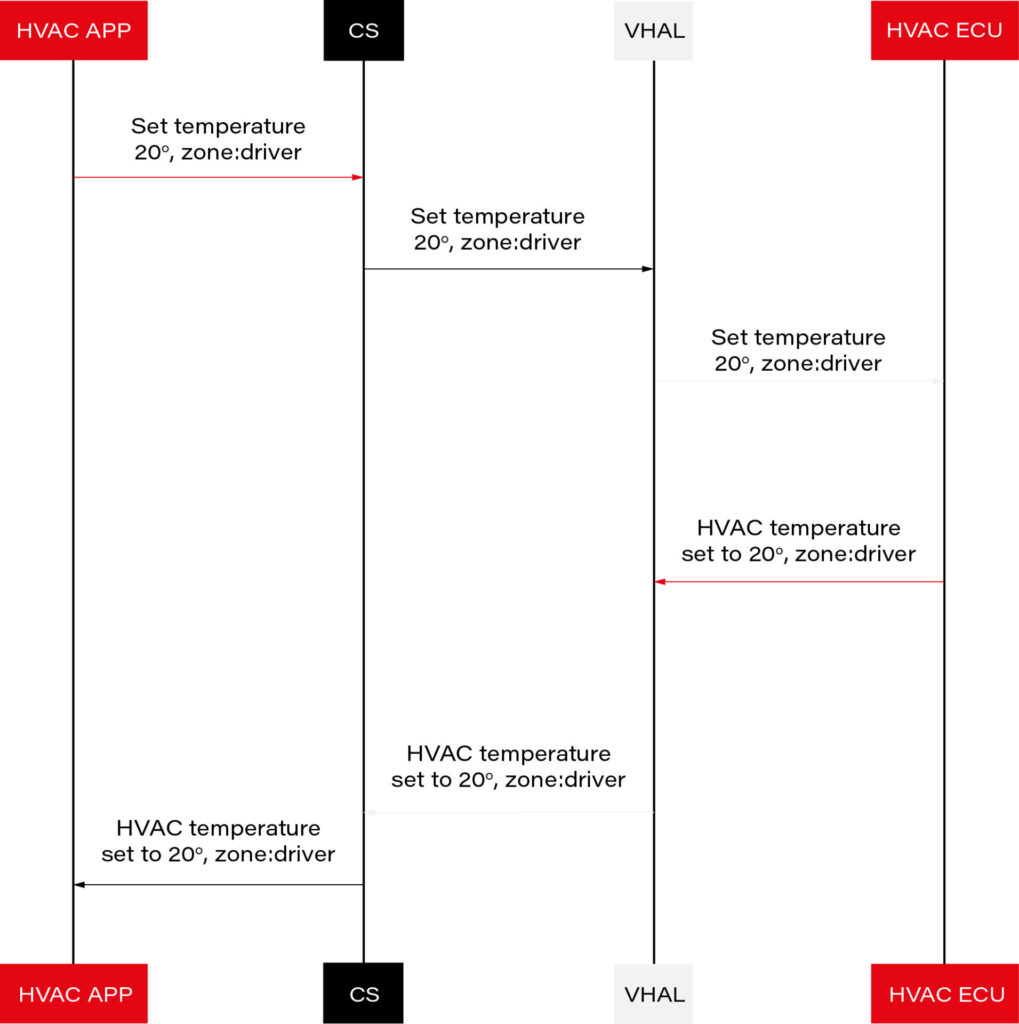

This article provides a step-by-step guide on how we achieved this integration of the EVS network camera, offering insights and practical instructions for those looking to implement a similar solution. The following diagram provides an overview of the entire process:

Building FFmpeg Library for Android

To enable RTP camera streaming on Android, the first step is to build the FFmpeg library for the platform. This section describes the process in detail, using the ffmpeg-android-maker project. Follow these steps to successfully build and integrate the FFmpeg library with the Android EVS (Exterior View System) Driver.

Step 1: Install Android SDK

First, install the Android SDK. On Ubuntu/Debian systems, you can use the following command:

sudo apt update && sudo apt install android-sdk

The SDK should be installed in /usr/lib/android-sdk.

Step 2: Install NDK

Download the Android NDK (Native Development Kit) from the official website:

https://developer.android.com/ndk/downloads

After downloading, extract the NDK to your desired location.

Step 3: Build FFmpeg

Clone the ffmpeg-android-maker repository and navigate to its directory:

git clone https://github.com/Javernaut/ffmpeg-android-maker.git
cd ffmpeg-android-maker

Set the environment variables to point to the SDK and NDK:

export ANDROID_SDK_HOME=/usr/lib/android-sdk
export ANDROID_NDK_HOME=/path/to/ndk/

Run the build script:

./ffmpeg-android-maker.sh

This script will download FFmpeg source code and dependencies, and compile FFmpeg for various Android architectures.

Step 4: Copy Library Files to EVS Driver

After the build process is complete, copy the .so library files from build/ffmpeg/ to the EVS Driver directory in your Android project:

cp build/ffmpeg/*.so /path/to/android/project/packages/services/Car/cpp/evs/sampleDriver/aidl/

Step 5: Add Libraries to EVS Driver Build Files

Edit the Android.bp file in the aidl directory to include the prebuilt FFmpeg libraries:

cc_prebuilt_library_shared {
    name: "rtp-libavcodec",
    vendor: true,
    srcs: ["libavcodec.so"],
    strip: {
        none: true,
    },
    check_elf_files: false,
}

cc_prebuilt_library_shared {
    name: "rtp-libavformat",
    vendor: true,
    srcs: ["libavformat.so"],
    strip: {
        none: true,
    },
    check_elf_files: false,
}

cc_prebuilt_library_shared {
    name: "rtp-libavutil",
    vendor: true,
    srcs: ["libavutil.so"],
    strip: {
        none: true,
    },
    check_elf_files: false,
}

cc_prebuilt_library_shared {
    name: "rtp-libswscale",
    vendor: true,
    srcs: ["libswscale.so"],
    strip: {
        none: true,
    },
    check_elf_files: false,
}

Then add the prebuilt libraries to the shared_libs list of the EVS Driver binary:

cc_binary {
    name: "android.hardware.automotive.evs-default",
    defaults: ["android.hardware.graphics.common-ndk_static"],
    vendor: true,
    relative_install_path: "hw",
    srcs: [
        ":libgui_frame_event_aidl",
        "src/*.cpp",
    ],
    shared_libs: [
        "rtp-libavcodec",
        "rtp-libavformat",
        "rtp-libavutil",
        "rtp-libswscale",
        "android.hardware.graphics.bufferqueue@1.0",
        "android.hardware.graphics.bufferqueue@2.0",
        "android.hidl.token@1.0-utils",
        ....
    ],
}

By following these steps, you will have successfully built the FFmpeg library for Android and integrated it into the EVS Driver.

EVS Driver RTP Camera Implementation

In this chapter, we will demonstrate how to quickly implement RTP support for the EVS (Exterior View System) driver in Android AAOS 14. This implementation is for demonstration purposes only. For production use, the implementation should be optimized, adapted to specific requirements, and all possible configurations and edge cases should be thoroughly tested. Here, we will focus solely on displaying the video stream from RTP.

The logic for capturing and decoding video from USB cameras is implemented in the EvsV4lCamera and VideoCapture classes. To handle RTP, we will copy these classes and rename them to EvsRTPCamera and RTPCapture. RTP handling will be implemented in RTPCapture. We need to implement four main functions:

bool open(const char* deviceName, const int32_t width = 0, const int32_t height = 0);
void close();
bool startStream(std::function<void(RTPCapture*, imageBuffer*, void*)> callback = nullptr);
void stopStream();

We will base the implementation on the official example from the FFmpeg library, https://github.com/FFmpeg/FFmpeg/blob/master/doc/examples/demux_decode.c, and adapt it to decode the specified video stream into RGBA buffers. After adapting the example, the RTPCapture.cpp file will look like this:

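#include "RTPCapture.h"

#include <android-base/logging.h>

#include <errno.h>
#include <error.h>
#include <fcntl.h>
#include <memory.h>
#include <stdio.h>
#include <stdlib.h>
#include <sys/ioctl.h>
#include <sys/mman.h>
#include <unistd.h>

#include <cassert>
#include <fstream>
#include <iomanip>
#include <iostream>
#include <sstream>

// FFmpeg headers used below; harmless if RTPCapture.h already includes them.
extern "C" {
#include <libavcodec/avcodec.h>
#include <libavformat/avformat.h>
#include <libavutil/imgutils.h>
#include <libavutil/opt.h>
#include <libswscale/swscale.h>
}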

static AVFormatContext *fmt_ctx = NULL;
static AVCodecContext *video_dec_ctx = NULL, *audio_dec_ctx;
static int width, height;
static enum AVPixelFormat pix_fmt;
static enum AVPixelFormat out_pix_fmt = AV_PIX_FMT_RGBA;
static AVStream *video_stream = NULL, *audio_stream = NULL;
static struct SwsContext *resize;
static const char *src_filename = NULL;
static uint8_t *video_dst_data[4] = {NULL};
static int video_dst_linesize[4];
static int video_dst_bufsize;
static int video_stream_idx = -1, audio_stream_idx = -1;
static AVFrame *frame = NULL;
static AVFrame *frame2 = NULL;
static AVPacket *pkt = NULL;
static int video_frame_count = 0;

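int RTPCapture::output_video_frame(AVFrame *frame)
{
    // Convert the decoded frame to RGBA. The conversion and the counter
    // update are kept outside the LOG statement so that they still run if
    // INFO logging is filtered out.
    const int scaled_height = sws_scale(resize, frame->data, frame->linesize, 0, height,
                                        video_dst_data, video_dst_linesize);
    const int frame_index = video_frame_count++;
    LOG(INFO) << "Video_frame: " << frame_index
              << " ,scale height: " << scaled_height;

    if (mCallback) {
        imageBuffer buf = {};
        buf.index = video_frame_count;
        buf.length = video_dst_bufsize;
        mCallback(this, &buf, video_dst_data[0]);
    }
    return 0;
}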

int RTPCapture::decode_packet(AVCodecContext *dec, const AVPacket *pkt)
{
    int ret = 0;

    // submit the packet to the decoder
    ret = avcodec_send_packet(dec, pkt);
    if (ret < 0) {
        return ret;
    }

    // get all the available frames from the decoder
    while (ret >= 0) {
        ret = avcodec_receive_frame(dec, frame);
        if (ret < 0) {
            // EOF or EAGAIN only mean that no more frames are available
            // for this packet; they are not errors
            if (ret == AVERROR_EOF || ret == AVERROR(EAGAIN)) {
                return 0;
            }
            return ret;
        }

        // convert the frame and hand it to the registered callback
        if (dec->codec->type == AVMEDIA_TYPE_VIDEO) {
            ret = output_video_frame(frame);
        }

        av_frame_unref(frame);
        if (ret < 0)
            return ret;
    }

    return 0;
}

int RTPCapture::open_codec_context(int *stream_idx,
        AVCodecContext **dec_ctx, AVFormatContext *fmt_ctx, enum AVMediaType type)
{
    int ret, stream_index;
    AVStream *st;
    const AVCodec *dec = NULL;

    ret = av_find_best_stream(fmt_ctx, type, -1, -1, NULL, 0);
    if (ret < 0) {
        fprintf(stderr, "Could not find %s stream in input file '%s'\n",
                av_get_media_type_string(type), src_filename);
        return ret;
    } else {
        stream_index = ret;
        st = fmt_ctx->streams[stream_index];

        /* find decoder for the stream */
        dec = avcodec_find_decoder(st->codecpar->codec_id);
        if (!dec) {
            fprintf(stderr, "Failed to find %s codec\n",
                    av_get_media_type_string(type));
            return AVERROR(EINVAL);
        }

        /* Allocate a codec context for the decoder */
        *dec_ctx = avcodec_alloc_context3(dec);
        if (!*dec_ctx) {
            fprintf(stderr, "Failed to allocate the %s codec context\n",
                    av_get_media_type_string(type));
            return AVERROR(ENOMEM);
        }

        /* Copy codec parameters from input stream to output codec context */
        if ((ret = avcodec_parameters_to_context(*dec_ctx, st->codecpar)) < 0) {
            fprintf(stderr, "Failed to copy %s codec parameters to decoder context\n",
                    av_get_media_type_string(type));
            return ret;
        }

        /* "preset" and "tune" are x264 encoder options; a decoder that does
           not know them ignores these calls, so they are best-effort only */
        av_opt_set((*dec_ctx)->priv_data, "preset", "ultrafast", 0);
        av_opt_set((*dec_ctx)->priv_data, "tune", "zerolatency", 0);

        /* Init the decoders */
        if ((ret = avcodec_open2(*dec_ctx, dec, NULL)) < 0) {
            fprintf(stderr, "Failed to open %s codec\n",
                    av_get_media_type_string(type));
            return ret;
        }
        *stream_idx = stream_index;
    }

    return 0;
}

bool RTPCapture::open(const char* /*deviceName*/, const int32_t /*width*/, const int32_t /*height*/) {
    LOG(INFO) << "RTPCapture::open";

    int ret = 0;
    avformat_network_init();

    mFormat = V4L2_PIX_FMT_YUV420;
    mWidth = 1920;
    mHeight = 1080;
    mStride = 0;

    /* open the SDP description served over HTTP, and allocate format context */
    src_filename = "http://192.168.1.59/stream.sdp";
    if (avformat_open_input(&fmt_ctx, src_filename, NULL, NULL) < 0) {
        LOG(ERROR) << "Could not open network stream";
        return false;
    }
    LOG(INFO) << "Input opened";

    /* retrieve stream information */
    if (avformat_find_stream_info(fmt_ctx, NULL) < 0) {
        LOG(ERROR) << "Could not find stream information";
        return false;
    }
    LOG(INFO) << "Stream info found";

    if (open_codec_context(&video_stream_idx, &video_dec_ctx, fmt_ctx, AVMEDIA_TYPE_VIDEO) >= 0) {
        video_stream = fmt_ctx->streams[video_stream_idx];

        /* allocate image where the decoded image will be put */
        width = video_dec_ctx->width;
        height = video_dec_ctx->height;
        pix_fmt = video_dec_ctx->sw_pix_fmt;

        /* the source pixel format is hard-coded to the yuvj422p output of the
           camera used in this article; adjust it for other streams */
        resize = sws_getContext(width, height, AV_PIX_FMT_YUVJ422P,
                                width, height, out_pix_fmt, SWS_BICUBIC, NULL, NULL, NULL);
        if (!resize) {
            LOG(ERROR) << "Could not create the pixel format conversion context";
            return false;
        }

        LOG(INFO) << "RTPCapture::open pix_fmt: " << video_dec_ctx->pix_fmt
                  << ", sw_pix_fmt: " << video_dec_ctx->sw_pix_fmt
                  << ", my_fmt: " << pix_fmt;

        ret = av_image_alloc(video_dst_data, video_dst_linesize,
                             width, height, out_pix_fmt, 1);
        if (ret < 0) {
            LOG(ERROR) << "Could not allocate raw video buffer";
            return false;
        }
        video_dst_bufsize = ret;
    }

    av_dump_format(fmt_ctx, 0, src_filename, 0);

    if (!audio_stream && !video_stream) {
        LOG(ERROR) << "Could not find audio or video stream in the input, aborting";
        return false;
    }

    frame = av_frame_alloc();
    if (!frame) {
        LOG(ERROR) << "Could not allocate frame";
        return false;
    }
    frame2 = av_frame_alloc();

    pkt = av_packet_alloc();
    if (!pkt) {
        LOG(ERROR) << "Could not allocate packet";
        return false;
    }

    /* report the capture as opened only after every step has succeeded */
    isOpened = true;
    return true;
}

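void RTPCapture::close() {
    LOG(DEBUG) << __FUNCTION__;

    // Release everything allocated in open(). Call stopStream() first so the
    // capture thread no longer uses these objects. The FFmpeg free functions
    // below accept NULL and reset the pointers they are given.
    sws_freeContext(resize);
    resize = NULL;
    av_freep(&video_dst_data[0]);
    av_packet_free(&pkt);
    av_frame_free(&frame);
    av_frame_free(&frame2);
    avcodec_free_context(&video_dec_ctx);
    avformat_close_input(&fmt_ctx);
    video_stream = NULL;
    isOpened = false;
}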

bool RTPCapture::startStream(std::function<void(RTPCapture*, imageBuffer*, void*)> callback) {
    LOG(INFO) << "startStream";
    if (!isOpen()) {
        LOG(ERROR) << "startStream failed. Stream not opened";
        return false;
    }

    stop_thread_1 = false;
    mCallback = callback;
    mCaptureThread = std::thread([this]() { collectFrames(); });

    return true;
}

void RTPCapture::stopStream() {
    LOG(INFO) << "stopStream";
    stop_thread_1 = true;
    // join() on a thread that was never started (or was already joined)
    // would throw, so check first
    if (mCaptureThread.joinable()) {
        mCaptureThread.join();
    }
    mCallback = nullptr;
}

bool RTPCapture::returnFrame(int i) {
    LOG(INFO) << "returnFrame " << i;
    return true;
}

void RTPCapture::collectFrames() {
    int ret = 0;

    LOG(INFO) << "Reading frames";
    /* read packets from the network stream until asked to stop */
    while (av_read_frame(fmt_ctx, pkt) >= 0) {
        if (stop_thread_1) {
            // release the packet that was just read before leaving
            av_packet_unref(pkt);
            return;
        }

        if (pkt->stream_index == video_stream_idx) {
            ret = decode_packet(video_dec_ctx, pkt);
        }
        av_packet_unref(pkt);
        if (ret < 0)
            break;
    }
}

int RTPCapture::setParameter(v4l2_control&) {
    LOG(INFO) << "RTPCapture::setParameter";
    return 0;
}

int RTPCapture::getParameter(v4l2_control&) {
    LOG(INFO) << "RTPCapture::getParameter";
    return 0;
}

std::set<uint32_t> RTPCapture::enumerateCameraControls() {
    LOG(INFO) << "RTPCapture::enumerateCameraControls";
    // the RTP camera exposes no controls, so the set stays empty
    std::set<uint32_t> ctrlIDs;
    return ctrlIDs;
}

void* RTPCapture::getLatestData() {
    LOG(INFO) << "RTPCapture::getLatestData";
    return nullptr;
}

bool RTPCapture::isFrameReady() {
    LOG(INFO) << "RTPCapture::isFrameReady";
    return true;
}

void RTPCapture::markFrameConsumed(int i) {
    LOG(INFO) << "RTPCapture::markFrameConsumed frame: " << i;
}

bool RTPCapture::isOpen() {
    LOG(INFO) << "RTPCapture::isOpen";
    return isOpened;
}
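The listing signals the capture thread through stop_thread_1, whose declaration lives in RTPCapture.h and is not shown here. If that flag is a plain bool, writing it in one thread while another reads it is a data race. The following is a minimal, self-contained sketch of the same start/stop pattern using std::atomic<bool> and a guarded join(). FrameLoop and its members are hypothetical names used only for illustration, and the blocking av_read_frame() call is replaced by a short sleep.

```cpp
#include <atomic>
#include <cassert>
#include <chrono>
#include <functional>
#include <thread>

// Hypothetical stand-in for the capture loop in RTPCapture: a worker thread
// that keeps delivering "frames" until it is asked to stop.
class FrameLoop {
public:
    ~FrameLoop() { stop(); }

    // Starts the worker. Returns false if it is already running.
    bool start(std::function<void(int)> onFrame) {
        if (mThread.joinable()) return false;
        mStop.store(false);
        mThread = std::thread([this, cb = std::move(onFrame)]() {
            int index = 0;
            while (!mStop.load()) {
                if (cb) cb(index);
                ++index;
                mFrames.store(index);
                // Stand-in for the blocking av_read_frame() call.
                std::this_thread::sleep_for(std::chrono::milliseconds(1));
            }
        });
        return true;
    }

    // Safe to call repeatedly, and before start().
    void stop() {
        mStop.store(true);
        if (mThread.joinable()) mThread.join();
    }

    int framesDelivered() const { return mFrames.load(); }

private:
    std::atomic<bool> mStop{false};
    std::atomic<int> mFrames{0};
    std::thread mThread;
};
```

The atomic flag makes the stop request visible to the worker without a lock, and the joinable() check lets stop() be called more than once, or before start(), without throwing.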

Next, we need to modify EvsRTPCamera to use our RTPCapture class instead of VideoCapture. In EvsRTPCamera.h, add:

#include "RTPCapture.h"

And replace:

VideoCapture mVideo = {};

with:

RTPCapture mVideo = {};

In EvsRTPCamera.cpp, we also need to make changes. In the forwardFrame(imageBuffer* pV4lBuff, void* pData) function, replace:

mFillBufferFromVideo(bufferDesc, (uint8_t*)targetPixels, pData, mVideo.getStride());

with:

memcpy(targetPixels, pData, pV4lBuff->length);

This is because the VideoCapture class delivers camera buffers in various YUV pixel formats, and the mFillBufferFromVideo function converts them to RGBA. In our case, RTPCapture already provides an RGBA buffer: the conversion is done in the RTPCapture::output_video_frame(AVFrame *frame) function using sws_scale from the FFmpeg library.
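A single memcpy is only correct when the source and destination rows have the same length. The buffer from av_image_alloc with alignment 1 is tightly packed, but a destination graphics buffer may pad each row. As a hedged sketch of how a stride-aware copy could look if that turns out to be the case on your device (copyRgbaRows and its parameters are hypothetical names, not part of the EVS driver):

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <cstring>

// Copies an RGBA image row by row between buffers whose rows may be padded.
// Strides are given in bytes; each RGBA pixel occupies 4 bytes.
void copyRgbaRows(std::uint8_t* dst, std::size_t dstStrideBytes,
                  const std::uint8_t* src, std::size_t srcStrideBytes,
                  std::size_t widthPx, std::size_t heightPx) {
    const std::size_t rowBytes = widthPx * 4;
    assert(dstStrideBytes >= rowBytes && srcStrideBytes >= rowBytes);

    if (dstStrideBytes == rowBytes && srcStrideBytes == rowBytes) {
        // Both buffers are tightly packed: one memcpy is enough.
        std::memcpy(dst, src, rowBytes * heightPx);
        return;
    }
    for (std::size_t y = 0; y < heightPx; ++y) {
        std::memcpy(dst + y * dstStrideBytes, src + y * srcStrideBytes, rowBytes);
    }
}
```

When both strides equal the row length, the function degenerates to the single memcpy used in the article, so the fast path costs nothing extra.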

Now we need to ensure that our RTP camera is recognized by the system. The EvsEnumerator class and its enumerateCameras function are responsible for detecting cameras. This function registers the video device nodes it finds in the /dev/ directory.

To add our RTP camera, we will append the following code at the end of the enumerateCameras function:

if (addCaptureDevice("rtp1")) {
    ++captureCount;
}

This will add a camera with the ID "rtp1" to the list of detected cameras, making it visible to the system.

The final step is to modify the EvsEnumerator::openCamera function to direct the camera with the ID "rtp1" to the RTP implementation. Normally, when opening a USB camera, an instance of the EvsV4lCamera class is created:

pActiveCamera = EvsV4lCamera::Create(id.data());

In our example, we will hardcode the ID check and create the appropriate object:

if (id == "rtp1") {
    pActiveCamera = EvsRTPCamera::Create(id.data());
} else {
    pActiveCamera = EvsV4lCamera::Create(id.data());
}
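The hard-coded comparison handles exactly one network camera. If more were added, the check could be generalized into a small classification helper. The sketch below assumes a naming convention in which every network camera ID starts with "rtp"; that convention, CameraBackend, and backendForId are hypothetical and not part of the EVS driver.

```cpp
#include <cassert>
#include <string_view>

enum class CameraBackend { V4l, Rtp };

// Assumed convention: IDs of network cameras start with "rtp" (e.g. "rtp1");
// everything else is treated as a V4L2 device such as "/dev/video0".
CameraBackend backendForId(std::string_view id) {
    constexpr std::string_view kRtpPrefix = "rtp";
    return id.substr(0, kRtpPrefix.size()) == kRtpPrefix ? CameraBackend::Rtp
                                                          : CameraBackend::V4l;
}
```

openCamera could then switch on the returned value instead of comparing against a single literal ID.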

With this implementation, our camera should start working. Now we need to build the EVS Driver application and push it to the device along with the FFmpeg libraries:

mmma packages/services/Car/cpp/evs/sampleDriver/

adb push out/target/product/rpi4/vendor/bin/hw/android.hardware.automotive.evs-default /vendor/bin/hw/

Launching the RTP Camera

To stream video from your camera, you need to install FFmpeg (https://www.ffmpeg.org/download.html#build-windows) and an HTTP server on the computer that will be streaming the video.

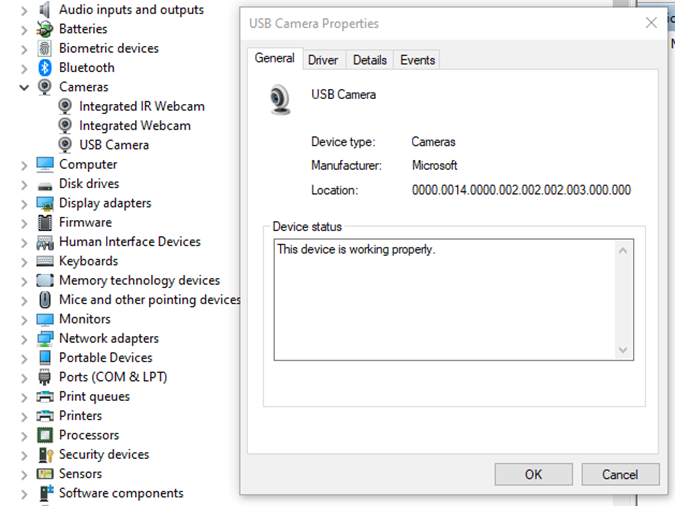

Start FFmpeg (example on Windows):

ffmpeg -f dshow -video_size 1280x720 -i video="USB Camera" -c copy -f rtp rtp://192.168.1.53:8554

where:

- -video_size is the video resolution

- "USB Camera" is the name of the camera as it appears in the Device Manager

- "-c copy" means that individual frames from the camera (in JPEG format) will be copied to the RTP stream without changes. Otherwise, FFmpeg would need to decode and re-encode the image, introducing unnecessary delays.

- "rtp://192.168.1.53:8554": 192.168.1.53 is the IP address of our Android device. You should adjust this accordingly. Port 8554 can be left as the default.

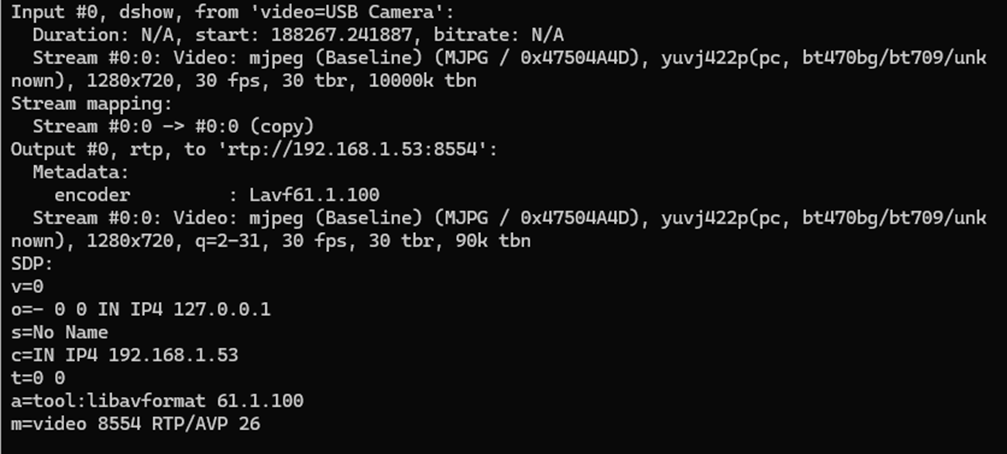

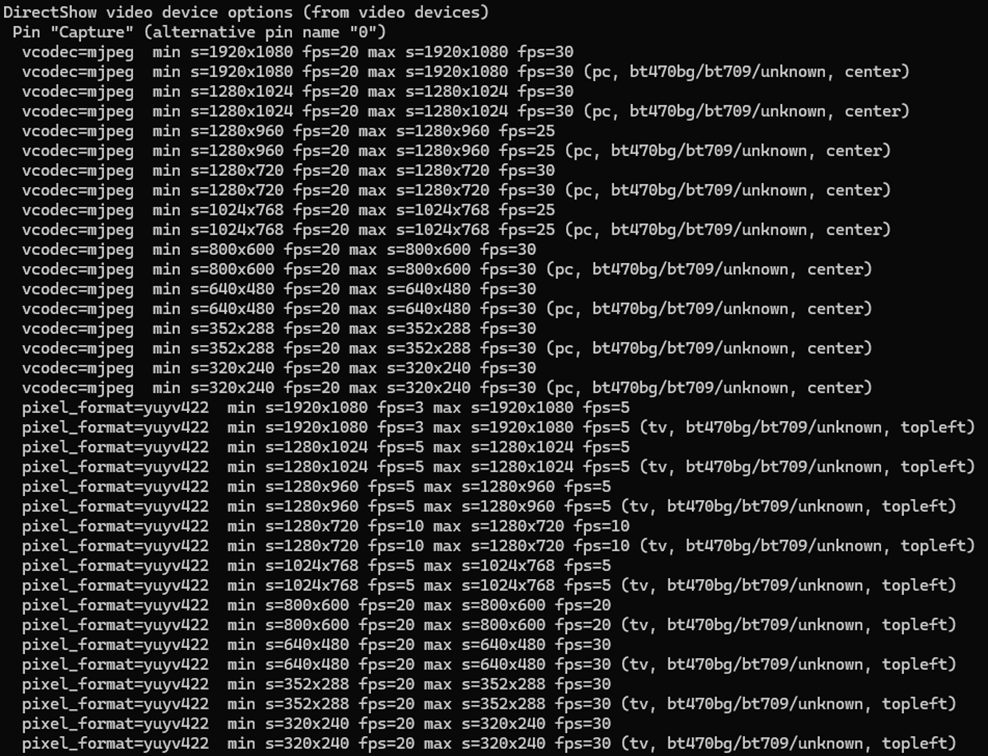

After starting FFmpeg, you should see output similar to this on the console:

Here, we see the input, output, and SDP sections. In the input section, the codec is JPEG, which is what we need. The pixel format is yuvj422p, with a resolution of 1920x1080 at 30 fps. The stream parameters in the output section should match.

Next, save the SDP section to a file named stream.sdp on the HTTP server. Our EVS Driver application needs to fetch this file, which describes the stream.

In our example, the Android device should access this file at: http://192.168.1.59/stream.sdp

The exact content of the file should be:

v=0
o=- 0 0 IN IP4 127.0.0.1
s=No Name
c=IN IP4 192.168.1.53
t=0 0
a=tool:libavformat 61.1.100
m=video 8554 RTP/AVP 26
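The last line of the file carries what the receiver needs most: the media type, the UDP port, the transport profile, and the RTP payload type (26 is the static payload type for JPEG in the RTP/AVP profile). FFmpeg parses this internally; the sketch below only illustrates the structure of the line. MediaLine and parseMediaLine are hypothetical names, and the optional port-count suffix allowed by SDP is not handled.

```cpp
#include <cassert>
#include <optional>
#include <sstream>
#include <string>

struct MediaLine {
    std::string media;     // e.g. "video"
    int port = 0;          // UDP port the receiver listens on
    std::string profile;   // e.g. "RTP/AVP"
    int payloadType = -1;  // first payload type listed
};

// Parses one SDP media description line of the form
// "m=<media> <port> <proto> <fmt>". Returns std::nullopt for other lines.
std::optional<MediaLine> parseMediaLine(const std::string& line) {
    if (line.rfind("m=", 0) != 0) return std::nullopt;
    std::istringstream in(line.substr(2));
    MediaLine m;
    if (!(in >> m.media >> m.port >> m.profile >> m.payloadType)) return std::nullopt;
    if (m.port <= 0 || m.port > 65535) return std::nullopt;
    return m;
}
```

For the line from the file above, the parser yields media "video", port 8554, profile "RTP/AVP", and payload type 26.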

Now, restart the EVS Driver application on the Android device:

killall android.hardware.automotive.evs-default

Then, configure the EVS app to use the camera "rtp1". For detailed instructions on how to configure and launch the EVS (Exterior View System), refer to the article "Android AAOS 14 - Surround View Parking Camera: How to Configure and Launch EVS (Exterior View System)".

Performance Testing

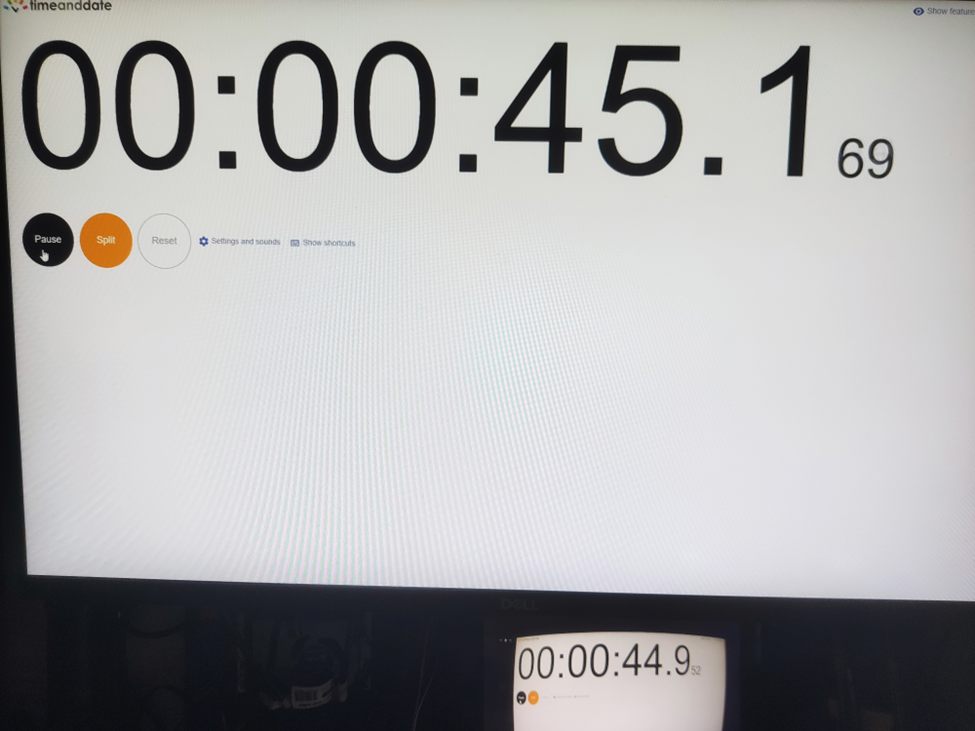

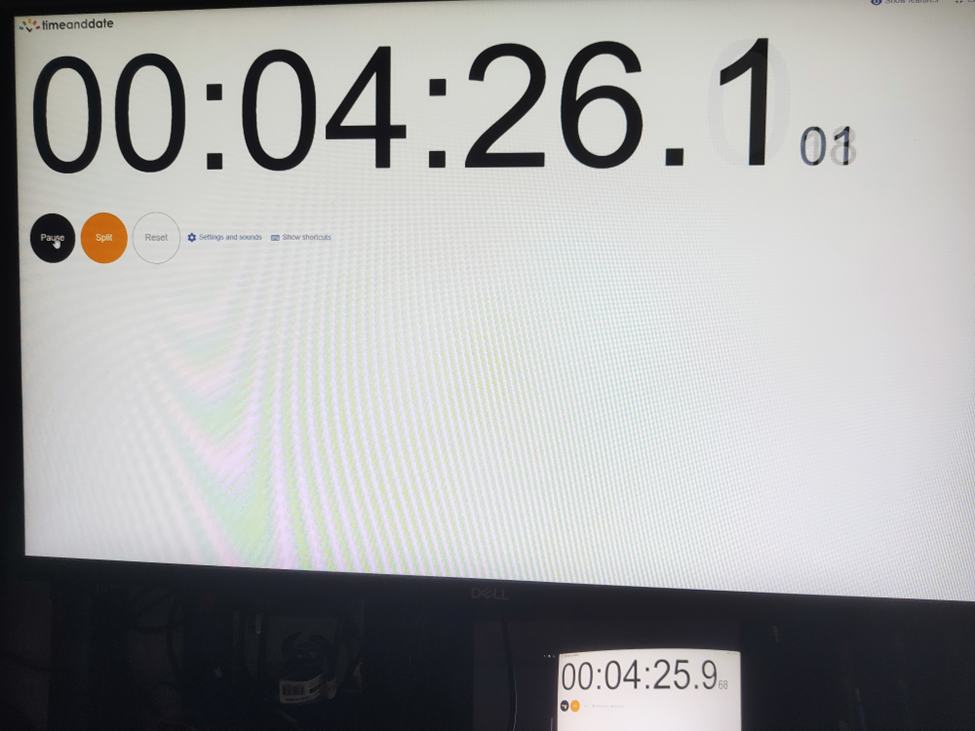

In this chapter, we will measure the latency of the video stream from a camera connected directly via USB and compare it with that of a camera streamed over RTP.

How Did We Measure Latency?

- Setup Timer: Displayed a timer on the computer screen showing time with millisecond precision.

- Camera Capture: Pointed the EVS camera at this screen so that the timer was also visible on the Android device screen.

- Snapshot Comparison: Took photos of both screens simultaneously. The time displayed on the Android device was delayed compared to the computer screen. The difference in time between the computer and the Android device represents the camera's latency.

This latency is composed of several factors:

- Camera Latency: The time the camera takes to capture the image from the sensor and encode it into the appropriate format.

- Transmission Time: The time taken to transmit the data via USB or RTP.

- Decoding and Display: The time to decode the video stream and display the image on the screen.
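The measurement procedure reduces to simple arithmetic: every photo yields two timer readings, their difference is the end-to-end latency for that sample, and several samples are averaged. A minimal sketch follows; TimerPair and averageLatencyMs are hypothetical names, and the readings in the usage note are invented for illustration, not taken from our measurements.

```cpp
#include <cassert>
#include <vector>

// One photo of both screens: the timer value shown on the reference monitor
// and the (older) value visible on the Android display, in milliseconds.
struct TimerPair {
    long referenceMs;
    long displayedMs;
};

// Average end-to-end latency over all photos, in milliseconds.
double averageLatencyMs(const std::vector<TimerPair>& samples) {
    assert(!samples.empty());
    long total = 0;
    for (const TimerPair& s : samples) {
        total += s.referenceMs - s.displayedMs;
    }
    return static_cast<double>(total) / static_cast<double>(samples.size());
}
```

With the made-up readings (12450, 12300), (13010, 12850), and (13800, 13660), the per-photo differences are 150, 160, and 140 ms, so the function returns 150.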

Latency Comparison

Below are the photos showing the latency:

USB Camera

RTP Camera

From these measurements, we found that the average latency for a camera connected via USB to the Android device is 200 ms, while the latency for the camera connected via RTP is 150 ms. This result is quite surprising.

The reasons behind these results are:

- The EVS implementation on Android captures video from the USB camera in YUV and similar formats, whereas FFmpeg streams RTP video in JPEG format.

- The USB camera used has a higher latency in generating YUV images compared to JPEG. Additionally, the frame rate is much lower. For a resolution of 1280x720, the YUV format only supports 10 fps, whereas JPEG supports the full 30 fps.
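The frame-rate difference alone bounds how long a change in the scene can wait before the camera captures it. As a rough estimate, and not a measurement, the interval between two frames at each rate is:

```latex
T_{\text{frame}} = \frac{1000\ \text{ms}}{f_{\text{fps}}}, \qquad
T_{10} = \frac{1000\ \text{ms}}{10} = 100\ \text{ms}, \qquad
T_{30} = \frac{1000\ \text{ms}}{30} \approx 33.3\ \text{ms}
```

In the worst case, a change therefore waits up to about 100 ms for the next frame in the 10 fps YUV mode, compared with about 33 ms in the 30 fps JPEG mode. This covers only the sampling delay; encoding, transmission, and decoding times come on top.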

All camera modes can be checked using the command:

ffmpeg -f dshow -list_options true -i video="USB Camera"

Conclusion

This article has taken you through the comprehensive process of integrating an RTP camera into the Android EVS (Exterior View System) framework, highlighting the detailed steps involved in both the implementation and the performance evaluation.

We began by developing two new classes, EvsRTPCamera and RTPCapture, which handle RTP streams using FFmpeg. To make the system recognize the RTP camera, we adjusted the EvsEnumerator class: the customized enumerateCameras and openCamera functions register the camera and instantiate the correct implementation for it.

Next, we built the EVS Driver application, including the necessary FFmpeg libraries, and deployed it to our target Android device. We then measured and compared the latency of the video feeds from the USB and RTP cameras. We pointed the EVS camera at a timer displayed on a computer screen and compared the time shown on the computer with the time shown on the Android screen. The difference between the two is the latency introduced by each camera setup.

Our performance tests revealed that the RTP camera had an average latency of 150 ms, while the USB camera had a latency of 200 ms. This result was unexpected but informative. The lower latency of the RTP camera was largely due to the pixel format: the RTP stream carries JPEG frames at 30 fps, whereas our particular USB camera delivers YUV frames more slowly and at a lower frame rate. This finding suggests that an RTP camera can be suitable for applications requiring real-time video, such as automotive surround view parking systems, where quick response times are essential for safety and user experience.

Modernizing legacy applications with generative AI: Lessons from R&D Projects

As digital transformation accelerates, modernizing legacy applications has become essential for businesses to stay competitive. The application modernization market size, valued at USD 21.32 billion in 2023, is projected to reach USD 74.63 billion by 2031 (1), reflecting the growing importance of updating outdated systems.

With 94% of business executives viewing AI as key to future success and 76% increasing their investments in Generative AI due to its proven value (2), it's clear that AI is becoming a critical driver of innovation. One key area where AI is making a significant impact is application modernization - an essential step for businesses aiming to improve scalability, performance, and efficiency.

Based on two projects conducted by our R&D team, we've seen firsthand how Generative AI can streamline the process of rewriting legacy systems.

Let’s start by discussing the importance of rewriting legacy systems and how GenAI-driven solutions are transforming this process.

Why re-write applications?

In the rapidly evolving software development landscape, keeping applications up-to-date with the latest programming languages and technologies is crucial. Rewriting applications to new languages and frameworks can significantly enhance performance, security, and maintainability. However, this process is often labor-intensive and prone to human error.

Generative AI offers a transformative approach to code translation by:

- leveraging advanced machine learning models to automate the rewriting process

- ensuring consistency and efficiency

- accelerating modernization of legacy systems

- facilitating cross-platform development and code refactoring

As businesses strive to stay competitive, adopting Generative AI for code translation becomes increasingly important. It enables them to harness the full potential of modern technologies while minimizing risks associated with manual rewrites.

Legacy systems, often built on outdated technologies, pose significant challenges in terms of maintenance and scalability. Modernizing legacy applications with Generative AI provides a viable solution for rewriting these systems into modern programming languages, thereby extending their lifespan and improving their integration with contemporary software ecosystems.

This automated approach not only preserves core functionality but also enhances performance and security, making it easier for organizations to adapt to changing technological landscapes without the need for extensive manual intervention.

Why Generative AI?

Generative AI offers a powerful solution for rewriting applications. It streamlines the modernization process for the following reasons:

- Identifying relationships and business rules: Generative AI can analyze legacy code to uncover complex dependencies and embedded business rules, ensuring critical functionalities are preserved and enhanced in the new system.

- Enhanced accuracy: Automating tasks like code analysis and documentation, Generative AI reduces human errors and ensures precise translation of legacy functionalities, resulting in a more reliable application.

- Reduced development time and cost: Automation significantly cuts down the time and resources needed for rewriting systems. Faster development cycles and fewer human hours required for coding and testing lower the overall project cost.

- Improved security: Generative AI aids in implementing advanced security measures in the new system, reducing the risk of threats and identifying vulnerabilities, which is crucial for modern applications.

- Performance optimization: Generative AI enables the creation of optimized code from the start, integrating advanced algorithms that improve efficiency and adaptability, often missing in older systems.

By leveraging Generative AI, organizations can achieve a smooth transition to modern system architectures, ensuring substantial returns in performance, scalability, and maintenance costs.

In this article, we will explore:

- the use of Generative AI for rewriting a simple CRUD application

- the use of Generative AI for rewriting a microservice-based application

- the challenges associated with using Generative AI

For these case studies, we used OpenAI's ChatGPT-4 with a context of 32k tokens to automate the rewriting process, demonstrating its advanced capabilities in understanding and generating code across different application architectures.

We'll also present the benefits of using a data analytics platform designed by Grape Up's experts. The platform utilizes Generative AI and neural graphs to enhance its data analysis capabilities, particularly in data integration, analytics, visualization, and insights automation.

Project 1: Simple CRUD application

The source project was a simple CRUD application written with .NET Core as the framework, Entity Framework Core as the ORM, and SQL Server as the relational database. The target project contains a backend application created using Java 17 and Spring Boot 3.

Steps taken to conclude the project

Rewriting a simple CRUD application using Generative AI involves a series of methodical steps to ensure a smooth transition from the old codebase to the new one. Below are the key actions undertaken during this process:

- initial architecture and data flow investigation - conducting a thorough analysis of the existing application's architecture and data flow.

- generating target application skeleton - creating the initial skeleton of the new application in the target language and framework.

- converting components - translating individual components from the original codebase to the new environment, ensuring that all CRUD operations were accurately replicated.

- generating tests - creating automated tests for the backend to ensure functionality and reliability.

Throughout each step, some manual intervention by developers was required to address code errors, compilation issues, and other problems encountered after using OpenAI's tools.

Initial architecture and data flow investigation

The first stage in rewriting a simple CRUD application using Generative AI is to conduct a thorough investigation of the existing architecture and data flow. This foundational step is crucial for understanding the current system's structure, dependencies, and business logic.

This involved:

- codebase analysis

- data flow mapping – from user inputs to database operations and back

- dependency identification

- business logic extraction – documenting the core business logic embedded within the application

While OpenAI's ChatGPT-4 is powerful, it has some limitations when dealing with large inputs or generating comprehensive explanations of entire projects. For example:

- OpenAI couldn’t read files directly from the file system

- Inputting several project files at once often resulted in unclear or overly general outputs

However, OpenAI excels at explaining large pieces of code or individual components. This capability aids in understanding the responsibilities of different components and their data flows. Despite this, developers had to conduct detailed investigations and analyses manually to ensure a complete and accurate understanding of the existing system.

This is the point at which we used our data analytics platform. In comparison to OpenAI, it focuses on data analysis. It's especially useful for analyzing data flows and project architecture, particularly thanks to its ability to process and visualize complex datasets. While it does not directly analyze source code, it can provide valuable insights into how data moves through a system and how different components interact.

Moreover, the platform excels at visualizing and analyzing data flows within your application. This can help identify inefficiencies, bottlenecks, and opportunities for optimization in the architecture.

Generating target application skeleton

Just as OpenAI could not analyze the entire project, it was also unable to generate the skeleton of the target application, so the developer had to create it manually. To facilitate this, Spring Initializr was used with the following configuration:

- Java: 17

- Spring Boot: 3.2.2

- Gradle: 8.5

Attempts to query OpenAI for the necessary Spring dependencies faced challenges due to significant differences between dependencies for C# and Java projects. Consequently, all required dependencies were added manually.

Additionally, the project included a database setup. While OpenAI provided a series of steps for adding database configuration to a Spring Boot application, these steps needed to be verified and implemented manually.

Converting components

After setting up the backend, the next step involved converting all project files - Controllers, Services, and Data Access layers - from C# to Java Spring Boot using OpenAI.

The AI proved effective in converting endpoints and data access layers, producing accurate translations with only minor errors, such as misspelled function names or calls to non-existent functions.

In cases where non-existent functions were generated, OpenAI was able to create the function bodies based on prompts describing their intended functionality. Additionally, OpenAI efficiently generated documentation for classes and functions.

However, it faced challenges when converting components with extensive framework-specific code. Due to differences between frameworks in various languages, the AI sometimes lost context and produced unusable code.

Overall, OpenAI excelled at:

- converting data access components

- generating REST APIs

However, it struggled with:

- service-layer components

- framework-specific code where direct mapping between programming languages was not possible

Despite these limitations, OpenAI significantly accelerated the conversion process, although manual intervention was required to address specific issues and ensure high-quality code.

Generating tests

Generating tests for the new code is a crucial step in ensuring the reliability and correctness of the rewritten application. This involves creating both unit tests and integration tests to validate individual components and their interactions within the system.

To create a new test, the entire component code was passed to OpenAI with the query: "Write Spring Boot test class for selected code."

OpenAI performed well at generating both integration tests and unit tests; however, there were some distinctions:

- For unit tests, OpenAI generated a new test for each if-clause in the method under test by default.

- For integration tests, only happy-path scenarios were generated with the given query.

- Error scenarios could also be generated by OpenAI, but these required more manual fixes due to a higher number of code issues.

When the test name was self-descriptive, OpenAI generated unit tests with fewer errors.
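To illustrate the "one test per if-clause" pattern and the value of self-descriptive test names, here is a minimal sketch. It is written in Python with `unittest` for brevity rather than as a Spring Boot test class, and `shipping_fee` is a hypothetical method under test, not code from the project.

```python
import unittest


def shipping_fee(order_total, express):
    """Hypothetical method under test with three if-clauses."""
    if order_total < 0:
        raise ValueError("order total cannot be negative")
    if express:
        return 15.0
    if order_total >= 100:
        return 0.0
    return 5.0


class ShippingFeeTest(unittest.TestCase):
    # One test per if-clause, plus one for the fall-through case.
    # Each name states the scenario and the expected outcome.

    def test_negative_total_raises_value_error(self):
        with self.assertRaises(ValueError):
            shipping_fee(-1, express=False)

    def test_express_order_costs_flat_express_fee(self):
        self.assertEqual(shipping_fee(20, express=True), 15.0)

    def test_order_of_100_or_more_ships_free(self):
        self.assertEqual(shipping_fee(100, express=False), 0.0)

    def test_small_standard_order_costs_base_fee(self):
        self.assertEqual(shipping_fee(20, express=False), 5.0)
```

A name such as `test_order_of_100_or_more_ships_free` already tells the model (and the reviewer) which branch is exercised and what result is expected, which is the kind of hint that reduced errors in our experience.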

Project 2: Microservice-based application

As an example of a microservice-based application, we used the Source microservice project - an application built using .NET Core as the framework, Entity Framework Core as the ORM, and a Command Query Responsibility Segregation (CQRS) approach for managing and querying entities. RabbitMQ was used to implement the CQRS approach, and EventStore was used to store events and entity objects. Each microservice could be built using Docker, with docker-compose managing the dependencies between microservices and running them together.

The target project includes:

- a microservice-based backend application created with Java 17 and Spring Boot 3

- a frontend application using the React framework

- Docker support for each microservice

- docker-compose to run all microservices at once

Project stages

Similarly to the CRUD application rewriting project, converting a microservice-based application using Generative AI requires a series of steps to ensure a seamless transition from the old codebase to the new one. Below are the key steps undertaken during this process:

- initial architecture and data flow investigation - conducting a thorough analysis of the existing application's architecture and data flow.

- rewriting backend microservices - selecting an appropriate framework for implementing CQRS in Java, setting up a microservice skeleton, and translating the core business logic from the original language to Java Spring Boot.

- generating a new frontend application - developing a new frontend application using React to communicate with the backend microservices via REST APIs.

- generating tests for the frontend application - creating unit tests and integration tests to validate its functionality and interactions with the backend.

- containerizing new applications - generating Docker files for each microservice and a docker-compose file to manage the deployment and orchestration of the entire application stack.

Throughout each step, developers were required to intervene manually to address code errors, compilation issues, and other problems encountered after using OpenAI's tools. This approach ensured that the new application retains the functionality and reliability of the original system while leveraging modern technologies and best practices.

Initial architecture and data flow investigation

The first step in converting a microservice-based application using Generative AI is to conduct a thorough investigation of the existing architecture and data flows. This foundational step is crucial for understanding:

- the system’s structure

- its dependencies

- interactions between microservices

Challenges with OpenAI

Similar to the process for a simple CRUD application, at the time, OpenAI struggled with larger inputs and failed to generate a comprehensive explanation of the entire project. Attempts to describe the project or its data flows were unsuccessful because inputting several project files at once often resulted in unclear and overly general outputs.

OpenAI’s strengths

Despite these limitations, OpenAI proved effective in explaining large pieces of code or individual components. This capability helped in understanding:

- the responsibilities of different components

- their respective data flows

Developers can create a comprehensive blueprint for the new application by thoroughly investigating the initial architecture and data flows. This step ensures that all critical aspects of the existing system are understood and accounted for, paving the way for a successful transition to a modern microservice-based architecture using Generative AI.

Again, our data analytics platform was used in project architecture analysis. By identifying integration points between different application components, the platform helps ensure that the new application maintains necessary connections and data exchanges.

It can also provide a comprehensive view of your current architecture, highlighting interactions between different modules and services. This aids in planning the new architecture for efficiency and scalability. Furthermore, the platform's analytics capabilities support identifying potential risks in the rewriting process.

Rewriting backend microservices

Rewriting the backend of a microservice-based application involves several intricate steps, especially when working with specific architectural patterns like CQRS (Command Query Responsibility Segregation) and event sourcing. The source C# project uses the CQRS approach, implemented with frameworks such as NServiceBus and Aggregates, which facilitate message handling and event sourcing in the .NET ecosystem.

Challenges with OpenAI

Unfortunately, OpenAI struggled with converting framework-specific logic from C# to Java. When asked to convert components using NServiceBus, OpenAI responded:

"The provided C# code is using NServiceBus, a service bus for .NET, to handle messages. In Java Spring Boot, we don't have an exact equivalent of NServiceBus, but here's how you might convert the given C# code to Java Spring Boot..."

However, the generated code did not adequately cover the CQRS approach or event-sourcing mechanisms.

Choosing Axon framework

Due to these limitations, developers needed to investigate suitable Java frameworks. After thorough research, the Axon Framework was selected, as it offers comprehensive support for:

- domain-driven design

- CQRS

- event sourcing

Moreover, Axon provides out-of-the-box solutions for message brokering and event handling and has a Spring Boot integration library, making it a popular choice for building Java microservices based on CQRS.

Converting microservices

Each microservice from the source project could be converted to Java Spring Boot using a systematic approach, similar to converting a simple CRUD application. The process included:

- analyzing the data flow within each microservice to understand interactions and dependencies

- using Spring Initializr to create the initial skeleton for each microservice

- translating the core business logic, API endpoints, and data access layers from C# to Java

- creating unit and integration tests to validate each microservice’s functionality

- setting up the event sourcing mechanism and CQRS using the Axon Framework, including configuring Axon components and repositories for event sourcing

Manual Intervention

Due to the lack of direct mapping between the source project's CQRS framework and the Axon Framework, manual intervention was necessary. Developers had to implement framework-specific logic manually to ensure the new system retained the original's functionality and reliability.
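To make the pattern concrete, here is a minimal, framework-free sketch of the command/event split that an event-sourced aggregate relies on. It is written in Python purely for brevity, it does not use the Axon or NServiceBus APIs, and every name in it (`DepositMoney`, `MoneyDeposited`, `Account`, `InMemoryEventStore`) is hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DepositMoney:
    """Command: a request to change state (may be rejected)."""
    account_id: str
    amount: int


@dataclass(frozen=True)
class MoneyDeposited:
    """Event: a fact that has already happened."""
    account_id: str
    amount: int


@dataclass
class Account:
    account_id: str
    balance: int = 0

    def handle(self, command):
        # Command side: validate, then emit events instead of mutating state.
        if command.amount <= 0:
            raise ValueError("deposit must be positive")
        return [MoneyDeposited(command.account_id, command.amount)]

    def apply(self, event):
        # State only changes when an event is applied.
        self.balance += event.amount


class InMemoryEventStore:
    """Stand-in for a real event store; keeps one event list per aggregate."""

    def __init__(self):
        self._streams = {}

    def append(self, aggregate_id, events):
        self._streams.setdefault(aggregate_id, []).extend(events)

    def load(self, aggregate_id):
        return list(self._streams.get(aggregate_id, []))


def load_account(store, account_id):
    # Rebuild current state by replaying every stored event in order.
    account = Account(account_id)
    for event in store.load(account_id):
        account.apply(event)
    return account


def dispatch(store, command):
    # Load the aggregate, let it decide, then persist the resulting events.
    account = load_account(store, command.account_id)
    events = account.handle(command)
    store.append(command.account_id, events)
    return events
```

The framework-specific part that had to be written by hand is exactly this wiring: which handler receives which command, how events are persisted, and how an aggregate is rebuilt from its event stream.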

Generating a new frontend application

The source project included a frontend component written using aspnetcore-https and aspnetcore-react libraries, allowing for the development of frontend components in both C# and React.

However, OpenAI struggled to convert this mixed codebase into a React-only application due to the extensive use of C#.

Consequently, it proved faster and more efficient to generate a new frontend application from scratch, leveraging the existing REST endpoints on the backend.

Similar to the process for a simple CRUD application, when prompted with “Generate React application which is calling a given endpoint”, OpenAI provided a series of steps to create a React application from a template and offered sample code for the frontend.

- OpenAI successfully generated React components for each endpoint.

- The CSS files from the source project were reusable in the new frontend to maintain the same styling of the web application.

- However, the overall structure and architecture of the frontend application remained the developer's responsibility.

Despite its capabilities, OpenAI-generated components often exhibited issues such as:

- mixing up code from different React versions, leading to code failures.

- infinite rendering loops.

Additionally, there were challenges related to CORS policy and web security:

- OpenAI could not resolve CORS issues autonomously but provided explanations and possible steps for configuring CORS policies on both the backend and frontend.

- It was unable to configure web security correctly.

- Moreover, since web security involves configurations on the frontend and multiple backend services, OpenAI could only suggest common patterns and approaches for handling these cases, which ultimately required manual intervention.

Generating tests for the frontend application

Once the frontend components were completed, the next task was to generate tests for these components. OpenAI proved to be quite effective in this area. When provided with the component code, OpenAI could generate simple unit tests using the Jest library.

OpenAI was also capable of generating integration tests for the frontend application, which are crucial for verifying that different components work together as expected and that the application interacts correctly with backend services.

However, some manual intervention was required to fix issues in the generated test code. The common problems encountered included:

- mixing up code from different React versions, leading to code failures.

- dependency management conflicts, such as mixing up code from different test libraries.

Containerizing new application

The source application contained Dockerfiles that built images for C# applications. OpenAI successfully converted these Dockerfiles to a new approach using Java 17, Spring Boot, and Gradle build tools by responding to the query:

"Could you convert selected code to run the same application but written in Java 17 Spring Boot with Gradle and Docker?"

Some manual updates, however, were needed to fix the actual jar name and file paths.

Once the React frontend application was implemented, OpenAI was able to generate a Dockerfile by responding to the query:

"How to dockerize a React application?"

Still, manual fixes were required to:

- replace paths to files and folders

- correct mistakes that emerged when generating multi-stage Dockerfiles, requiring further adjustments

While OpenAI was effective in converting individual Dockerfiles, it struggled with writing docker-compose files due to a lack of context regarding all services and their dependencies.

For instance, some microservices depend on database services, and OpenAI could not fully understand these relationships. As a result, the docker-compose file required significant manual intervention.

Conclusion

Modern tools like OpenAI's ChatGPT can significantly enhance software development productivity by automating various aspects of code writing and problem-solving. Large language models, such as the OpenAI models behind ChatGPT, can help generate large pieces of code, solve problems, and streamline certain tasks.

However, for complex projects based on microservices and specialized frameworks, developers still need to do considerable work manually, particularly in areas related to architecture, framework selection, and framework-specific code writing.

What Generative AI is good at:

- converting pieces of code from one language to another - Generative AI excels at translating individual code snippets between different programming languages, making it easier to migrate specific functionalities.

- generating large pieces of new code from scratch - OpenAI can generate substantial portions of new code, providing a solid foundation for further development.

- generating unit and integration tests - OpenAI is proficient in creating unit tests and integration tests, which are essential for validating the application's functionality and reliability.

- describing what code does - Generative AI can effectively explain the purpose and functionality of given code snippets, aiding in understanding and documentation.

- investigating code issues and proposing possible solutions - Generative AI can quickly analyze code issues and suggest potential fixes, speeding up the debugging process.

- containerizing applications - OpenAI can create Dockerfiles for containerizing applications, facilitating consistent deployment environments.

At the time of project implementation, Generative AI still had several limitations:

- OpenAI struggled to provide comprehensive descriptions of an application's overall architecture and data flow, which are crucial for understanding complex systems.

- It also had difficulty identifying equivalent frameworks when migrating applications, requiring developers to conduct manual research.

- Setting up the foundational structure for microservices and configuring databases were tasks that still required significant developer intervention.

- Additionally, OpenAI struggled with managing dependencies, configuring web security (including CORS policies), and establishing a proper project structure, often needing manual adjustments to ensure functionality.

Benefits of using the data analytics platform:

- data flow visualization: It provides detailed visualizations of data movement within applications, helping to map out critical pathways and dependencies that need attention during rewriting.

- architectural insights: The platform offers a comprehensive analysis of system architecture, identifying interactions between components to aid in designing an efficient new structure.

- integration mapping: It highlights integration points with other systems or components, ensuring that necessary integrations are maintained in the rewritten application.

- risk assessment: The platform's analytics capabilities help identify potential risks in the transition process, allowing for proactive management and mitigation.

By leveraging Generative AI’s strengths and addressing its limitations through manual intervention, developers can achieve a more efficient and accurate transition to modern programming languages and technologies. For now, this hybrid approach to modernizing legacy applications ensures that the new application retains the functionality and reliability of the original system while benefiting from advances in modern software development practices.

It's worth remembering that Generative AI technologies are rapidly advancing, with improvements in processing capabilities. As Generative AI becomes more powerful, it is increasingly able to understand and manage complex project architectures and data flows. This evolution suggests that in the future, it will play a pivotal role in rewriting projects.

Sources:

- https://www.verifiedmarketresearch.com/product/application-modernization-market/

- https://www2.deloitte.com/content/dam/Deloitte/us/Documents/deloitte-analytics/us-ai-institute-state-of-ai-fifth-edition.pdf

Building EU-compliant connected car software under the EU Data Act

The EU Data Act is about to change the rules of the game for many industries, and automotive OEMs are no exception. With new regulations aimed at making data generated by connected vehicles more accessible to consumers and third parties, OEMs are experiencing a major shift. So, what does this mean for the automotive space?

First, it means rethinking how data is managed, shared, and protected. OEMs must now meet new requirements for data portability, security, and privacy, using software compliant with the EU Data Act.

This guide will walk you through how they can prepare to not just survive but thrive under the new regulations.

The EU Data Act deadlines OEMs can’t miss

- Chapter II (B2B and B2C data sharing) has a deadline of September 2025.

- Article 3 (accessibility by design) has a deadline of September 2026.

- Chapter IV (contractual terms between businesses) has a deadline of September 2027.

Compliance requirements for automotive OEMs

The EU Data Act establishes specific obligations for automotive OEMs to ensure secure, transparent, and fair data sharing with both consumers (B2C) and third-party businesses (B2B). The following key provisions outline the requirements that OEMs must fulfill to comply with the Act.

B2C obligations

1. Data accessibility for users:

- Connected products, such as vehicles, must be built in a way that makes data generated by their use accessible in a structured, machine-readable format. This requirement applies from the manufacturing stage, meaning the design process must incorporate data accessibility features.

2. User control over data:

- Users should have the ability to control how their data is used, including the right to share it with third parties of their choice. This requires OEMs to implement systems that allow consumers to grant and revoke access to their data seamlessly.

3. Transparency in data practices:

- OEMs are required to provide clear and transparent information about the nature and volume of collected data and the way to access it.

- When a user requests to make data available to a third party, the OEM must inform them about:

a) The identity of the third party

b) The purpose of data use

c) The type of data that will be shared

d) The right of the user to withdraw consent for the third party to access the data
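The grant-and-revoke requirement and the four disclosure items above can be pictured as a small consent record. The following is a minimal in-memory sketch under our own assumptions: the `Consent` and `ConsentRegistry` names, their fields, and the `may_share` check are hypothetical and are not prescribed by the EU Data Act.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Optional


@dataclass
class Consent:
    third_party: str              # a) identity of the third party
    purpose: str                  # b) purpose of data use
    data_types: frozenset         # c) types of data that will be shared
    granted_at: datetime
    revoked_at: Optional[datetime] = None   # d) set when the user withdraws consent

    @property
    def active(self):
        return self.revoked_at is None


class ConsentRegistry:
    """Hypothetical in-memory registry keyed by (user, third party)."""

    def __init__(self):
        self._consents = {}

    def grant(self, user_id, third_party, purpose, data_types):
        consent = Consent(
            third_party=third_party,
            purpose=purpose,
            data_types=frozenset(data_types),
            granted_at=datetime.now(timezone.utc),
        )
        self._consents[(user_id, third_party)] = consent
        return consent

    def revoke(self, user_id, third_party):
        consent = self._consents.get((user_id, third_party))
        if consent is None or not consent.active:
            return False
        consent.revoked_at = datetime.now(timezone.utc)
        return True

    def may_share(self, user_id, third_party, data_type):
        # Share only data types covered by an active (non-revoked) consent.
        consent = self._consents.get((user_id, third_party))
        return bool(consent and consent.active and data_type in consent.data_types)
```

A production system would also need persistence, an audit trail, and authentication of both the user and the third party; the sketch only shows the shape of the record and the check performed before any data leaves the OEM's systems.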

B2B obligations

1. Fair access to data:

- OEMs must ensure that data generated by connected products is accessible to third parties at the user’s request under fair, reasonable, and non-discriminatory conditions.

- This means that data sharing cannot be restricted to certain partners or proprietary platforms; it must be available to a broad range of businesses, including independent repair shops, insurers, and fleet managers.

2. Compliance with security and privacy regulations:

- While sharing non-personal data, OEMs must still comply with relevant data security and privacy regulations. This means that data must be protected from unauthorized access and that any data-sharing agreements are in line with the EU Data Act and GDPR.

3. Protection of trade secrets:

- OEMs have a right and obligation to protect their trade secrets and should only disclose them when necessary to meet the agreed purpose. This means identifying protected data, agreeing on confidentiality measures with third parties, and suspending data sharing if these measures are not properly followed or if sharing would cause significant economic harm.

Understanding the specific obligations is only the first step for automotive OEMs. Based on this information, they can build software compliant with the EU Data Act. To navigate these new requirements effectively, OEMs need to adopt an approach that not only meets regulatory demands but also strengthens their competitive edge.

Thriving under the EU Data Act: smart investments and privacy-first strategies

Automotive OEMs must take a strategic approach to both their software and operational frameworks, balancing compliance requirements with innovation and customer trust. The key is to prioritize solutions that improve data accessibility and governance while minimizing costs. This starts with redesigning connected products and services to align with the Act’s data-sharing mandates and creating solutions to handle data requests efficiently.

Another critical focus is balancing privacy concerns with data-sharing obligations. OEMs must handle non-personal data responsibly under the EU Data Act while managing personal data in accordance with GDPR. This includes providing transparency about data usage and giving customers control over their data.

To achieve this balance, OEMs should identify which data needs to be shared with third parties and integrate privacy considerations across all stages of product development and data management. Transparent communication about data use, supported by clear policies and customer controls, helps to reinforce this trust.

Opportunities under the EU Data Act

The EU Data Act presents compliance challenges, but it also offers significant opportunities for OEMs that are prepared to adapt. By meeting the Act’s requirements for fair data sharing, OEMs can expand their services and build new partnerships. While direct monetization from data access fees is limited, there are numerous opportunities to leverage shared data to develop new value-added services and improve operational efficiency.

Next steps for automotive OEMs

To move to implementation, OEMs must take targeted actions that address the compliance requirements outlined earlier. These steps lay the groundwork for integrating broader strategies and turning compliance efforts into opportunities for operational improvement and future growth.

Integrate data accessibility into vehicle design

Start integrating data accessibility into vehicle design now to comply by 2026. This involves adapting both front-end and back-end components of products and services to enable secure and seamless data access and transfer according to the EU Data Act.

Provide user and third-party access to generated data

Introduce easy-to-use mechanisms that let users request access to their data or share it with chosen third parties. Access control should be straightforward, involving simple user identification and making data accessible to authorized users upon request. Develop dedicated data-sharing solutions, applications, or portals that enable third parties to request access to data with user consent.

Implement trade secret protection measures

OEMs should protect their trade secrets by identifying which vehicle data is commercially sensitive. Implement measures like data encryption and access controls to safeguard this information when sharing data. Clearly communicate your approach to protecting trade secrets without disclosing the sensitive information itself.

Implement transparent and secure data handling

Provide clear information to users about what data is collected, how it is used, and with whom it is shared. Transparent data practices help build trust and align with users' data rights under the EU Data Act.

Do not overlook the non-personal data that is collected. Apply appropriate measures to preserve data quality and prevent its unauthorized access, transfer, or use.

Enable data interoperability and portability

The Act sets out essential requirements to facilitate the interoperability of data and data-sharing mechanisms, with a strong emphasis on data portability. OEMs need to make their data systems compatible with third-party services, allowing data to be easily transferred between platforms.

For example, if a car owner wants to switch from an OEM-provided app to a third-party app for vehicle diagnostics, OEMs must not create technical, contractual, or organizational barriers that would make this switch difficult.

Prepare the data

Choose a data format that fulfills the EU Data Act’s requirement for data to be shared in a “commonly used and machine-readable format.” This approach supports data accessibility and usability across different platforms and services.
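As one possible illustration, the sketch below serializes a few vehicle readings to JSON with explicit units and timestamps. JSON is used here only as an example of a commonly used, machine-readable format; the `export_vehicle_data` function and all field names are our own assumptions, not a schema defined by the Act.

```python
import json


def export_vehicle_data(vin, readings):
    """Serialize readings into a JSON document with explicit units.

    `readings` is an iterable of (signal, value, unit, timestamp) tuples.
    """
    payload = {
        "schemaVersion": "1.0",   # hypothetical version tag for consumers
        "vehicle": {"vin": vin},
        "readings": [
            {"signal": signal, "value": value, "unit": unit, "timestamp": timestamp}
            for (signal, value, unit, timestamp) in readings
        ],
    }
    # sort_keys gives a stable layout, which makes exports easy to diff.
    return json.dumps(payload, indent=2, sort_keys=True)


document = export_vehicle_data(
    "TESTVIN0000000001",
    [
        ("odometer", 12345.6, "km", "2025-01-01T00:00:00Z"),
        ("battery_soc", 81, "percent", "2025-01-01T00:00:00Z"),
    ],
)
```

Whatever format is chosen, stating units and timestamps explicitly and versioning the schema keeps the export usable by third-party services that have no knowledge of the OEM's internal data model.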

Moving forward with confidence

The EU Data Act is bringing new obligations but also offering valuable opportunities. Navigating these changes may seem challenging, but with the right approach, they can become a catalyst for growth.

AAOS 14 - Surround view parking camera: How to configure and launch exterior view system

EVS - park mode

The Android Automotive Operating System (AAOS) 14 introduces significant advancements, including a Surround View Parking Camera system. This feature, part of the Exterior View System (EVS), provides a comprehensive 360-degree view around the vehicle, enhancing parking safety and ease. This article will guide you through the process of configuring and launching the EVS on AAOS 14.

Structure of the EVS system in Android 14

The Exterior View System (EVS) in Android 14 is a sophisticated integration designed to enhance driver awareness and safety through multiple external camera feeds. This system is composed of three primary components: the EVS Driver application, the Manager application, and the EVS App. Each component plays a crucial role in capturing, managing, and displaying the images necessary for a comprehensive view of the vehicle's surroundings.

EVS driver application

The EVS Driver application serves as the cornerstone of the EVS system, responsible for capturing images from the vehicle's cameras. These images are delivered as RGBA image buffers, which are essential for further processing and display. Typically, the Driver application is provided by the vehicle manufacturer, tailored to ensure compatibility with the specific hardware and camera setup of the vehicle.

To aid developers, Android 14 includes a sample implementation of the Driver application that utilizes the Linux V4L2 (Video for Linux 2) subsystem. This example demonstrates how to capture images from USB-connected cameras, offering a practical reference for creating compatible Driver applications. The sample implementation is located in the Android source code at packages/services/Car/cpp/evs/sampleDriver.

Manager application

The Manager application acts as the intermediary between the Driver application and the EVS App. Its primary responsibilities include managing the connected cameras and displays within the system.

Key Tasks:

- Camera Management: Controls and coordinates the various cameras connected to the vehicle.

- Display Management: Manages the display units, ensuring the correct images are shown based on the input from the Driver application.

- Communication: Facilitates communication between the Driver application and the EVS App, ensuring a smooth data flow and integration.

EVS app

The EVS App is the central component of the EVS system, responsible for assembling the images from the various cameras and displaying them on the vehicle's screen. This application adapts the displayed content based on the vehicle's gear selection, providing relevant visual information to the driver.

For instance, when the vehicle is in reverse gear (VehicleGear::GEAR_REVERSE), the EVS App displays the rear camera feed to assist with reversing maneuvers. When the vehicle is in park gear (VehicleGear::GEAR_PARK), the app showcases a 360-degree view by stitching images from four cameras, offering a comprehensive overview of the vehicle’s surroundings. In other gear positions, the EVS App stops displaying images and remains in the background, ready to activate when the gear changes again.

The EVS App achieves this dynamic functionality by subscribing to signals from the Vehicle Hardware Abstraction Layer (VHAL), specifically the VehicleProperty::GEAR_SELECTION. This allows the app to adjust the displayed content in real-time based on the current gear of the vehicle.
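The gear-driven behavior described above boils down to a small state machine. The sketch below models it in Python for readability; the real EVS App is a native binary, and the `Gear`, `View`, and `EvsViewController` names, as well as the enum values, are hypothetical stand-ins rather than VHAL constants.

```python
from enum import Enum, auto


class Gear(Enum):
    # Placeholder values; these are NOT the numeric VehicleGear constants.
    PARK = auto()
    REVERSE = auto()
    NEUTRAL = auto()
    DRIVE = auto()


class View(Enum):
    OFF = auto()        # app stays in the background
    REAR = auto()       # rear camera feed
    SURROUND = auto()   # stitched 360-degree view


def view_for_gear(gear):
    """Map the selected gear to the view the app should show."""
    if gear is Gear.REVERSE:
        return View.REAR
    if gear is Gear.PARK:
        return View.SURROUND
    return View.OFF


class EvsViewController:
    """Reacts to gear-change notifications and records view transitions."""

    def __init__(self):
        self.current = View.OFF
        self.transitions = []

    def on_gear_changed(self, gear):
        new_view = view_for_gear(gear)
        if new_view is not self.current:
            self.transitions.append((self.current, new_view))
            self.current = new_view
        return self.current
```

In this model, `on_gear_changed` plays the role of the callback invoked when a subscribed gear-selection property changes; switching only when the target view differs avoids restarting the camera streams on repeated notifications for the same gear.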

Communication interface

Communication between the Driver application, Manager application, and EVS App is facilitated through the IEvsEnumerator HAL interface. This interface plays a crucial role in the EVS system, ensuring that image data is captured, managed, and displayed accurately. The IEvsEnumerator interface is defined in the Android source code at hardware/interfaces/automotive/evs/1.0/IEvsEnumerator.hal.

EVS subsystem update

The EVS source code is located in packages/services/Car/cpp/evs. Make sure you use the latest sources, because earlier versions contained bugs that prevented EVS from working.

cd packages/services/Car/cpp/evs

git checkout main

git pull

mm

# return to the top of the source tree so the relative out/ paths below resolve
croot

adb push out/target/product/rpi4/vendor/bin/hw/android.hardware.automotive.evs-default /vendor/bin/hw/

adb push out/target/product/rpi4/system/bin/evs_app /system/bin/

EVS driver configuration

To begin, we need to configure the EVS Driver. The configuration file is located at /vendor/etc/automotive/evs/evs_configuration_override.xml .

Here is an example of its content:

<configuration>

<!-- system configuration -->

<system>

<!-- number of cameras available to EVS -->

<num_cameras value='2'/>

</system>

<!-- camera device information -->

<camera>

<!-- camera device starts -->

<device id='/dev/video0' position='rear'>

<caps>

<!-- list of supported controls -->

<supported_controls>

<control name='BRIGHTNESS' min='0' max='255'/>

<control name='CONTRAST' min='0' max='255'/>

<control name='AUTO_WHITE_BALANCE' min='0' max='1'/>

<control name='WHITE_BALANCE_TEMPERATURE' min='2000' max='7500'/>

<control name='SHARPNESS' min='0' max='255'/>

<control name='AUTO_FOCUS' min='0' max='1'/>

<control name='ABSOLUTE_FOCUS' min='0' max='255' step='5'/>

<control name='ABSOLUTE_ZOOM' min='100' max='400'/>

</supported_controls>

<!-- list of supported stream configurations -->

<!-- below configurations were taken from v4l2-ctrl query on

Logitech Webcam C930e device -->

<stream id='0' width='1280' height='720' format='RGBA_8888' framerate='30'/>

</caps>

<!-- list of parameters -->

<characteristics>

</characteristics>

</device>

<device id='/dev/video2' position='front'>

<caps>

<!-- list of supported controls -->

<supported_controls>

<control name='BRIGHTNESS' min='0' max='255'/>

<control name='CONTRAST' min='0' max='255'/>

<control name='AUTO_WHITE_BALANCE' min='0' max='1'/>

<control name='WHITE_BALANCE_TEMPERATURE' min='2000' max='7500'/>

<control name='SHARPNESS' min='0' max='255'/>

<control name='AUTO_FOCUS' min='0' max='1'/>

<control name='ABSOLUTE_FOCUS' min='0' max='255' step='5'/>

<control name='ABSOLUTE_ZOOM' min='100' max='400'/>

</supported_controls>

<!-- list of supported stream configurations -->

<!-- below configurations were taken from v4l2-ctrl query on

Logitech Webcam C930e device -->

<stream id='0' width='1280' height='720' format='RGBA_8888' framerate='30'/>

</caps>

<!-- list of parameters -->

<characteristics>

</characteristics>

</device>

</camera>

<!-- display device starts -->

<display>

<device id='display0' position='driver'>

<caps>

<!-- list of supported input stream configurations -->

<stream id='0' width='1280' height='800' format='RGBA_8888' framerate='30'/>

</caps>

</device>

</display>

</configuration>

In this configuration, two cameras are defined: /dev/video0 (rear) and /dev/video2 (front). Both cameras have one stream defined with a resolution of 1280 x 720, a frame rate of 30, and an RGBA format.

Additionally, there is one display defined with a resolution of 1280 x 800, a frame rate of 30, and an RGBA format.

Configuration details

The configuration file starts by specifying the number of cameras available to the EVS system. This is done within the <system> tag, where the <num_cameras> tag sets the number of cameras to 2.

Each camera device is defined within the <camera> tag. For example, the rear camera ( /dev/video0 ) is defined with various capabilities such as brightness, contrast, auto white balance, and more. These capabilities are listed under the <supported_controls> tag. Similarly, the front camera ( /dev/video2 ) is defined with the same set of controls.

Both cameras also have their supported stream configurations listed under the <stream> tag. These configurations specify the resolution, format, and frame rate of the video streams.

The display device is defined under the <display> tag. The display configuration includes supported input stream configurations, specifying the resolution, format, and frame rate.

EVS driver operation

When the EVS Driver starts, it reads this configuration file to understand the available cameras and display settings. It then sends this configuration information to the Manager application. The EVS Driver will wait for requests to open and read from the cameras, operating according to the defined configurations.

EVS app configuration

Configuring the EVS App is more complex. We need to determine how the images from individual cameras will be combined to create a 360-degree view. In the repository, the file packages/services/Car/cpp/evs/apps/default/res/config.json.readme contains a description of the configuration sections:

{

"car" : { // This section describes the geometry of the car

"width" : 76.7, // The width of the car body

"wheelBase" : 117.9, // The distance between the front and rear axles

"frontExtent" : 44.7, // The extent of the car body ahead of the front axle

"rearExtent" : 40 // The extent of the car body behind the rear axle

},

"displays" : [ // This configures the dimensions of the surround view display

{ // The first display will be used as the default display

"displayPort" : 1, // Display port number, the target display is connected to

"frontRange" : 100, // How far to render the view in front of the front bumper

"rearRange" : 100 // How far the view extends behind the rear bumper

}

],

"graphic" : { // This maps the car texture into the projected view space

"frontPixel" : 23, // The pixel row in CarFromTop.png at which the front bumper appears

"rearPixel" : 223 // The pixel row in CarFromTop.png at which the back bumper ends

},

"cameras" : [ // This describes the cameras potentially available on the car

{

"cameraId" : "/dev/video32", // Camera ID exposed by EVS HAL

"function" : "reverse,park", // Set of modes to which this camera contributes

"x" : 0.0, // Optical center distance right of vehicle center

"y" : -40.0, // Optical center distance forward of rear axle

"z" : 48, // Optical center distance above ground

"yaw" : 180, // Optical axis degrees to the left of straight ahead

"pitch" : -30, // Optical axis degrees above the horizon

"roll" : 0, // Rotation degrees around the optical axis

"hfov" : 125, // Horizontal field of view in degrees

"vfov" : 103, // Vertical field of view in degrees

"hflip" : true, // Flip the view horizontally

"vflip" : true // Flip the view vertically

}

]

}

The EVS app configuration file is crucial for setting up the system for a specific car. The comments make this example invalid JSON, so it cannot be used as-is, but it illustrates the expected format of the configuration file. Additionally, the system requires an image named CarFromTop.png to represent the car.
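If you want to experiment with the annotated readme format on a development machine, the comments have to be removed before a JSON parser will accept it. The sketch below does that in Python; `strip_line_comments` is a hypothetical helper written for this article, and the sample is a shortened version of the readme content.

```python
import json


def strip_line_comments(text):
    """Remove // comments that appear outside JSON string literals."""
    cleaned = []
    for line in text.splitlines():
        in_string = False
        escaped = False
        cut = len(line)
        for i, ch in enumerate(line):
            if escaped:
                escaped = False            # character after a backslash
            elif ch == "\\" and in_string:
                escaped = True
            elif ch == '"':
                in_string = not in_string
            elif ch == "/" and not in_string and line[i:i + 2] == "//":
                cut = i                    # comment starts here
                break
        cleaned.append(line[:cut])
    return "\n".join(cleaned)


SAMPLE = """
{
  "car" : {                 // geometry of the car
    "width" : 76.7,         // width of the car body
    "wheelBase" : 117.9
  },
  "cameras" : [
    { "cameraId" : "/dev/video32", "yaw" : 180 }   // rear camera
  ]
}
"""

config = json.loads(strip_line_comments(SAMPLE))
print(config["cameras"][0]["cameraId"])
```

The helper tracks whether it is inside a string, so slashes in values such as camera device paths are left untouched.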

In the configuration, units of length are arbitrary but must remain consistent throughout the file. In this example, units of length are in inches.

The coordinate system is right-handed: X points to the right, Y forward, and Z up, with the origin located at the center of the rear axle at ground level. Angles are given in degrees. Yaw is measured from the front of the car, positive to the left (positive Z rotation). Pitch is measured from the horizon, positive upwards (positive X rotation), and roll is always assumed to be zero. Keep in mind that although the file uses degrees, the values are converted to radians when the configuration is read, so any angle you change directly in the EVS App source code must be given in radians.
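To make the angle conventions concrete, the following Python sketch converts a yaw/pitch pair into the direction of the camera's optical axis in car coordinates. The formula is derived from the conventions described above, not taken from the EVS App sources, and `optical_axis` is a hypothetical helper.

```python
import math


def optical_axis(yaw_deg, pitch_deg):
    """Unit vector of the camera's optical axis in car coordinates.

    X is right, Y is forward, Z is up. Yaw is measured from straight
    ahead, positive to the left; pitch from the horizon, positive up.
    """
    yaw = math.radians(yaw_deg)        # degrees in the file, radians in code
    pitch = math.radians(pitch_deg)
    return (
        -math.sin(yaw) * math.cos(pitch),  # turning left moves toward -X
        math.cos(yaw) * math.cos(pitch),
        math.sin(pitch),
    )


# Rear camera from the readme example: yaw 180, pitch -30.
x, y, z = optical_axis(180, -30)
print(f"x={x:.3f} y={y:.3f} z={z:.3f}")
```

For the rear camera the vector points backward (negative Y) and slightly down (negative Z), which is a quick way to confirm that a yaw or pitch value has the intended sign.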

This setup allows the EVS app to accurately interpret and render the camera images for the surround view parking system.

The configuration file for the EVS App is located at /vendor/etc/automotive/evs/config_override.json. Below is an example configuration with two cameras, front and rear, corresponding to our driver setup:

{

"car": {

"width": 76.7,

"wheelBase": 117.9,

"frontExtent": 44.7,

"rearExtent": 40

},

"displays": [

{

"_comment": "Display0",

"displayPort": 0,

"frontRange": 100,

"rearRange": 100

}

],

"graphic": {

"frontPixel": -20,

"rearPixel": 260

},

"cameras": [

{

"cameraId": "/dev/video0",

"function": "reverse,park",

"x": 0.0,

"y": 20.0,

"z": 48,

"yaw": 180,

"pitch": -10,

"roll": 0,

"hfov": 115,

"vfov": 80,

"hflip": false,

"vflip": false

},

{

"cameraId": "/dev/video2",

"function": "front,park",

"x": 0.0,

"y": 100.0,

"z": 48,

"yaw": 0,

"pitch": -10,

"roll": 0,

"hfov": 115,

"vfov": 80,

"hflip": false,

"vflip": false

}

]

}
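Because the camera IDs in this file must match the devices exposed by the EVS Driver, a small consistency check can catch typos early. The sketch below is an illustration only: `EXPECTED_KEYS` simply mirrors the keys used in the example above (the EVS App may accept fewer), and `check_cameras` is a hypothetical helper.

```python
import json

# Keys used by every camera entry in the example above (an assumption,
# not a schema published by the EVS App).
EXPECTED_KEYS = {"cameraId", "function", "x", "y", "z", "yaw", "pitch",
                 "roll", "hfov", "vfov", "hflip", "vflip"}


def check_cameras(config, driver_ids):
    """Return a list of human-readable problems found in the camera list."""
    problems = []
    for index, camera in enumerate(config.get("cameras", [])):
        missing = EXPECTED_KEYS - camera.keys()
        if missing:
            problems.append(f"camera {index}: missing {sorted(missing)}")
        camera_id = camera.get("cameraId")
        if camera_id not in driver_ids:
            problems.append(f"camera {index}: {camera_id} not exposed by the driver")
    return problems


SAMPLE = """
{
  "cameras": [
    {"cameraId": "/dev/video0", "function": "reverse,park",
     "x": 0.0, "y": 20.0, "z": 48, "yaw": 180, "pitch": -10, "roll": 0,
     "hfov": 115, "vfov": 80, "hflip": false, "vflip": false},
    {"cameraId": "/dev/video2", "function": "front,park",
     "x": 0.0, "y": 100.0, "z": 48, "yaw": 0, "pitch": -10, "roll": 0,
     "hfov": 115, "vfov": 80, "hflip": false, "vflip": false}
  ]
}
"""

config = json.loads(SAMPLE)
problems = check_cameras(config, driver_ids={"/dev/video0", "/dev/video2"})
print(problems or "camera list is consistent with the driver")
```

The `driver_ids` set would normally come from the device IDs in the driver's XML configuration.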

Running EVS

Make sure all apps are running:

ps -A | grep evs

automotive_evs 3722 1 11007600 6716 binder_thread_read 0 S evsmanagerd

graphics 3723 1 11362488 30868 binder_thread_read 0 S android.hardware.automotive.evs-default

automotive_evs 3736 1 11068388 9116 futex_wait 0 S evs_app
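When this check is repeated often, for example after every rebuild, it can be scripted. The Python sketch below inspects captured `ps -A` output for the three process names shown above; `missing_processes` is a hypothetical helper, and how the output is captured (for instance through adb) is left to your setup.

```python
EXPECTED = (
    "evsmanagerd",
    "android.hardware.automotive.evs-default",
    "evs_app",
)


def missing_processes(ps_output, expected=EXPECTED):
    """Return the expected process names that do not appear in ps output."""
    # The process name is the last column of each ps line.
    running = {line.split()[-1] for line in ps_output.splitlines() if line.split()}
    return [name for name in expected if name not in running]


SAMPLE_PS = """\
automotive_evs 3722 1 11007600 6716 binder_thread_read 0 S evsmanagerd
graphics 3723 1 11362488 30868 binder_thread_read 0 S android.hardware.automotive.evs-default
automotive_evs 3736 1 11068388 9116 futex_wait 0 S evs_app
"""

missing = missing_processes(SAMPLE_PS)
print("all EVS processes running" if not missing else f"missing: {missing}")
```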

To simulate reverse gear you can call:

evs_app --test --gear reverse

And park:

evs_app --test --gear park

The EVS app should now be displayed on the screen.

Troubleshooting

When configuring and launching the EVS (Exterior View System) for the Surround View Parking Camera in Android AAOS 14, you may encounter several issues.

To debug them, filter the system log for the EVS components:

logcat EvsDriver:D EvsApp:D evsmanagerd:D *:S

Multiple USB cameras - image freeze

During the initialization of the EVS system, we encountered an issue with the image feed from two USB cameras. While the feed from one camera displayed smoothly, the feed from the second camera either did not appear at all or froze after displaying a few frames.

We discovered that the problem lay in the USB communication between the camera and the V4L2 uvcvideo driver. During the connection negotiation, the camera reserved all available USB bandwidth. To prevent this, the uvcvideo driver needs to be configured with the parameter quirks=128. This setting allows the driver to allocate the USB bandwidth based on the actual resolution and frame rate of the camera.

To implement this solution, the parameter should be set in the bootloader, within the kernel command line, for example:

console=ttyS0,115200 no_console_suspend root=/dev/ram0 rootwait androidboot.hardware=rpi4 androidboot.selinux=permissive uvcvideo.quirks=128

After applying this setting, the image feed from both cameras should display smoothly, resolving the freezing issue.
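To confirm that the parameter actually reached the kernel, the command line can be inspected for the quirk. The sketch below parses a command-line string in Python. The value 128 equals 0x80, which corresponds to the flag named UVC_QUIRK_FIX_BANDWIDTH in the Linux uvcvideo driver; `quirks_from_cmdline` is a hypothetical helper.

```python
UVC_QUIRK_FIX_BANDWIDTH = 0x80  # 128 in decimal


def quirks_from_cmdline(cmdline):
    """Return the uvcvideo.quirks value from a kernel command line, or None."""
    for token in cmdline.split():
        key, _, value = token.partition("=")
        if key == "uvcvideo.quirks":
            return int(value, 0)  # base 0 accepts both "128" and "0x80"
    return None


CMDLINE = (
    "console=ttyS0,115200 no_console_suspend root=/dev/ram0 rootwait "
    "androidboot.hardware=rpi4 androidboot.selinux=permissive "
    "uvcvideo.quirks=128"
)

quirks = quirks_from_cmdline(CMDLINE)
fix_bandwidth = quirks is not None and bool(quirks & UVC_QUIRK_FIX_BANDWIDTH)
print(f"quirks={quirks} fix_bandwidth={fix_bandwidth}")
```

On a running device, the same function can be applied to the contents of /proc/cmdline.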

Green frame around camera image

In the current implementation of the EVS system, the camera image is surrounded by a green frame.

To eliminate this green frame, you need to modify the implementation of the EVS Driver. Specifically, you should edit the GlWrapper.cpp file located at cpp/evs/sampleDriver/aidl/src/.

In the void GlWrapper::renderImageToScreen() function, change the following lines:

-0.8, 0.8, 0.0f, // left top in window space

0.8, 0.8, 0.0f, // right top

-0.8, -0.8, 0.0f, // left bottom

0.8, -0.8, 0.0f // right bottom

to

-1.0, 1.0, 0.0f, // left top in window space

1.0, 1.0, 0.0f, // right top

-1.0, -1.0, 0.0f, // left bottom

1.0, -1.0, 0.0f // right bottom

After making this change, rebuild the EVS Driver and deploy it to your device. The camera image should now be displayed full screen without the green frame.
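The reason this works is that the vertex positions are expressed in normalized device coordinates, where -1.0 and 1.0 correspond to the edges of the viewport. The Python sketch below shows how much of the screen a quad with a given extent covers. It assumes the viewport spans the full 1280 x 800 display from our configuration, which the source does not state explicitly, and `ndc_to_pixels` is a hypothetical helper.

```python
def ndc_to_pixels(extent, width, height):
    """Pixel rectangle (left, top, right, bottom) covered by a centered quad.

    `extent` is the absolute vertex coordinate in normalized device
    coordinates, e.g. 0.8 for the original quad and 1.0 for the fixed one.
    """
    left = round((1.0 - extent) / 2.0 * width)
    right = round((1.0 + extent) / 2.0 * width)
    top = round((1.0 - extent) / 2.0 * height)
    bottom = round((1.0 + extent) / 2.0 * height)
    return left, top, right, bottom


for extent in (0.8, 1.0):
    left, top, right, bottom = ndc_to_pixels(extent, 1280, 800)
    print(f"extent {extent}: x {left}..{right}, y {top}..{bottom}")
```

Under that assumption, an extent of 0.8 leaves a border of 128 pixels on the left and right and 80 pixels on the top and bottom, while 1.0 fills the whole screen.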

Conclusion

In this article, we delved into the intricacies of configuring and launching the EVS (Exterior View System) for the Surround View Parking Camera in Android AAOS 14. We explored the critical components that make up the EVS system: the EVS Driver, EVS Manager, and EVS App, detailing their roles and interactions.

The EVS Driver is responsible for providing image buffers from the vehicle's cameras, leveraging a sample implementation using the Linux V4L2 subsystem to handle USB-connected cameras. The EVS Manager acts as an intermediary, managing camera and display resources and facilitating communication between the EVS Driver and the EVS App. Finally, the EVS App compiles the images from various cameras, displaying a cohesive 360-degree view around the vehicle based on the gear selection and other signals from the Vehicle HAL.

Configuring the EVS system involves setting up the EVS Driver through a comprehensive XML configuration file, defining camera and display parameters. Additionally, the EVS App configuration, outlined in a JSON file, ensures the correct mapping and stitching of camera images to provide an accurate surround view.

By understanding and implementing these configurations, developers can harness the full potential of the Android AAOS 14 platform to enhance vehicle safety and driver assistance through an effective Surround View Parking Camera system. This comprehensive setup not only improves the parking experience but also sets a foundation for future advancements in automotive technology.

How to make your enterprise data ready for AI

As AI continues to transform industries, one thing becomes increasingly clear: the success of AI-driven initiatives depends not just on algorithms but on the quality and readiness of the data that fuels them. Without well-prepared data, even the most advanced artificial intelligence endeavors can fall short of their promise. In this guide, we cover the practical steps you need to take to prepare your data for AI.

What's the point of AI-ready data?

The conversation around AI has shifted dramatically in recent years. No longer a distant possibility, AI is now actively changing business landscapes - transforming supply chains through predictive analytics, personalizing customer experiences with advanced recommendation engines, and even assisting in complex fields like financial modeling and healthcare diagnostics.

The focus today is not on whether AI can fulfill its potential but on how organizations can best deploy it to achieve meaningful, scalable business outcomes.

Despite pouring significant resources into AI, businesses are still finding it challenging to fully tap into its economic potential.

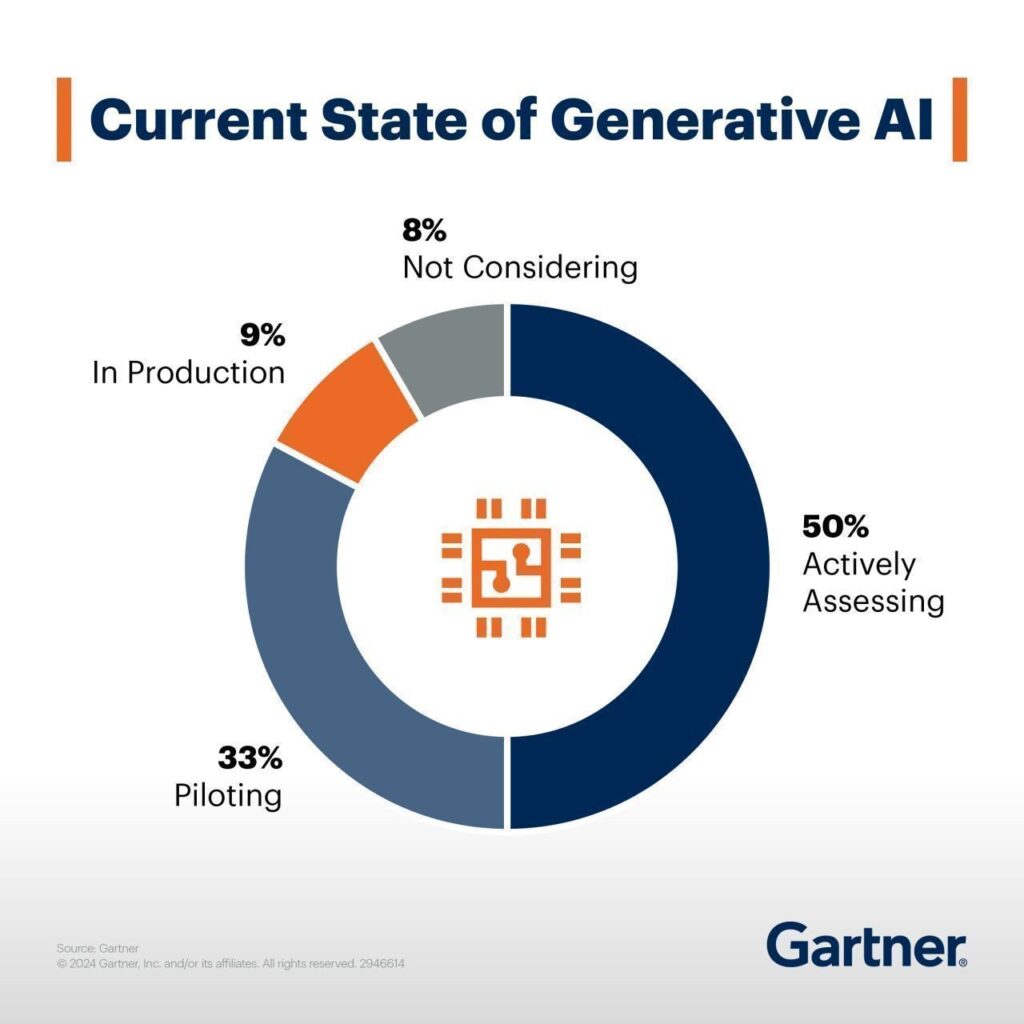

For example, according to Gartner, 50% of organizations are actively assessing GenAI's potential, and 33% are in the piloting stage. Meanwhile, only 9% have fully implemented generative AI applications in production, while 8% do not consider them at all.

Source: www.gartner.com

The problem often comes down to a frequently overlooked factor: the relationship between AI and data. The key issue is the lack of data preparedness. In fact, only 37% of data leaders believe that their organizations have the right data foundation for generative AI, with just 11% agreeing strongly. This means that chief data officers and data leaders need to develop new data strategies and improve data quality to make generative AI work effectively.

What does your business gain by getting your data AI-ready?

When your data is clean, organized, and well-managed, AI can help you make smarter decisions, boost efficiency, and even give you a leg up on the competition.

So, what exactly are the benefits of putting in the effort to prepare your data for AI? Let’s break it down into some real, tangible advantages.

- Clean, organized data allows AI to quickly analyze large amounts of information, helping businesses understand customer preferences, spot market trends, and respond more effectively to changes.

- Getting data AI-ready can save time by automating repetitive tasks and reducing errors.