Expert Software Engineer at Grape Up and Cloud Foundry Certified Developer. He has experience in designing and implementing distributed systems which utilize cloud-native patterns and DevOps approach. In his free time, he loves to sail and travel around the world.


Dead code analysis in legacy modernization: What we found in a 654,273-line codebase

On one of our client engagements, we ran a deep dead code analysis against a Java codebase of 654,273 lines. Roughly 275,000 of those lines sat in the business-logic layer that had been auto-translated from COBOL by a previous modernization vendor. After deep static and semantic analysis, we estimated that between 120,000 and 150,000 of those lines would not exist in a hand-written Java equivalent. Nearly half of that layer carried no semantic weight.

What matters more than the numbers is how we got to them, and why no off-the-shelf static analyzer would have produced the same answer. The ratios here are specific to this particular auto-translated project. Hand-written legacy systems behave very differently. Without structured understanding of the codebase before transformation, none of this would have surfaced, and the modernization plan would have been built around the wrong codebase.

Why understanding comes before transformation

Modernization teams routinely jump from "we have legacy code" to "let's prompt an AI to rewrite it." That approach fails at enterprise scale for a simple reason: the first question is not how do we migrate but what do we actually have.

This is also where the difference between prompt engineering and a modernization workflow becomes concrete. A prompt is a single instruction handed to a model. A workflow is a repeatable, governed sequence of operations with structured inputs, validated outputs, and traceable evidence. Prompts produce snippets. Workflows produce decisions that a CTO can defend in a steering committee.

Before any transformation, you need structured knowledge of the system you're working with: business documentation, dependency maps, architectural reconstruction, static and semantic findings. That knowledge becomes the substrate for every downstream change. Business logic reconstruction and dependency mapping answer what is worth migrating. Dead code analysis answers a related but different question: how much of what you see is actually real?

A transformation pipeline applied to a codebase you don't understand is a parallel waste machine. It will faithfully migrate every dead branch, every ceremonial wrapper, every empty-string initializer into your modern stack. An AI agent asked to migrate tens of thousands of lines of structural boilerplate will produce tens of thousands of lines of structural boilerplate in the target language. The waste survives the transformation. This is also why "can AI agents migrate legacy code reliably?" is the wrong question. Reliability is a property of the workflow surrounding the agent, not of the agent itself.

Two categories of waste

Dead code analysis splits findings into two categories.

Strict dead code is lines whose execution has no observable effect. The IDE will usually flag these.

Translation overhead is lines that are syntactically alive but exist only because a mechanical translator emitted them. The IDE cannot see this because the surface code is well-formed; every statement looks like real work.

Static analysis tools handle the first category. The second is where the volume hides - and where modernization budgets quietly evaporate. Detecting it requires semantic reasoning, codebase-wide context, and pattern recognition that no IDE inspection provides.

The legacy codebase under analysis

The client owned a large back-office system originally written in COBOL. A prior modernization vendor had performed a mechanical COBOL-to-Java translation through a decompilation toolchain. The output Java code compiled and ran in production. There were no automated tests. The only validation performed at the time of translation was manual, and it had happened years before we arrived. By the time the system reached us, nobody on the team could fully describe what the code did - the institutional memory of the translation effort had moved on, and the surface code was opaque enough that no one was confident enough to touch it.

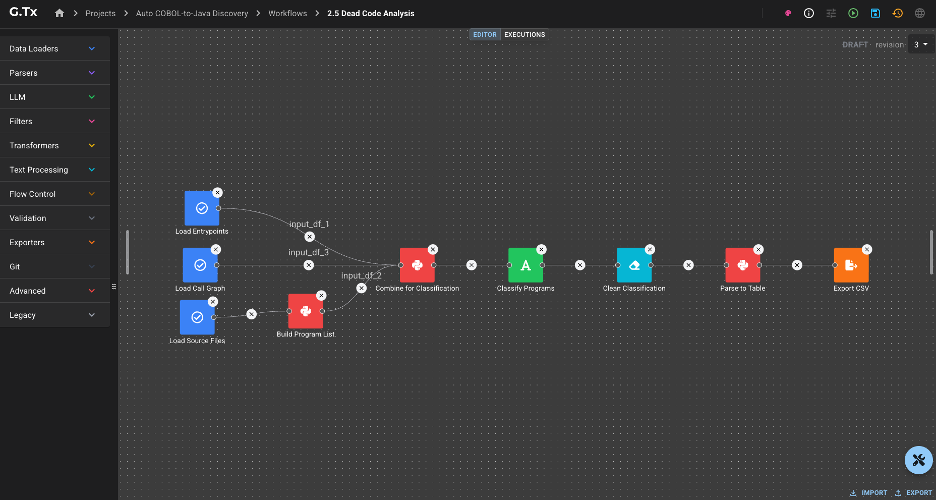

We began with the Understand phase, the first step of our modernization process, focused on reconstructing what the codebase actually does before any migration is scoped. The process runs on G.Tx, Grape Up's agentic platform for enterprise legacy modernization, which models Understand as a set of reusable workflows backed by AI agents, structured context, and engineering governance. The dead code analysis workflow produced the findings the rest of this article is built on.

What static analysis caught

Some of the dead weight was syntactically obvious: indicator-variable boilerplate left over from COBOL host-variable conventions, redundant explicit casts preserved from the bytecode, discarded DAO results, duplicate branches in if-chains, redundant re-initializations of locals. The IDE could see all of it. In this codebase the relevant inspections had been silenced because the warning count was unusable. A finding technically visible to static analysis behaved, in practice, as if it were invisible.

Integer stationOutInd = 0;

// ... no writes anywhere ...

if (stationOutInd != 0) { stationOut = ""; } // always false

Even with the IDE's help, the visible findings explained only a small fraction of the auto-translated layer. The bigger story sat behind what the IDE could not see.

What semantic analysis revealed

The architectural patterns were harder. Each one looked like ordinary Java to an analyzer. Each line allocated, called, or assigned something. The waste was architectural, not syntactic, and only became visible once we looked at the codebase as a whole.

The ValueHolder marshalling dance. Wrapper-class boilerplate emulating COBOL's BY REFERENCE. Every multi-output call became three lines of wrap-call-unwrap, often on the same variable repeatedly:

copyCountHolder = new ValueHolder(Integer.class, (Object) copyCount);

returnCode = printFilter.searchStationCopyCount(

stationPrint, "DOCUMENT_TYPE_A", (ValueHolder<Integer>) copyCountHolder

);

copyCount = (Integer) copyCountHolder.getValue();

In idiomatic Java the same sites collapse to a return value, a record, or a small result class.
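As a minimal sketch of that collapse, the lookup can hand back both outputs in one small record. The names `CopyCountResult` and `PrintFilterSketch`, the return-code convention, and the lookup table are hypothetical stand-ins for illustration, not the client's code; only the call shape mirrors the snippet above.

```java
import java.util.Map;

// Hypothetical result type: carries both outputs of the call, so no holder is needed.
record CopyCountResult(int returnCode, int copyCount) {}

class PrintFilterSketch {
    // Illustrative lookup table; a real implementation would query a data store.
    private static final Map<String, Integer> COPY_COUNTS = Map.of("DOCUMENT_TYPE_A", 2);

    // Returns the status code and the copy count together instead of
    // writing the count back through a ValueHolder parameter.
    static CopyCountResult searchStationCopyCount(String stationPrint, String documentType) {
        Integer count = COPY_COUNTS.get(documentType);
        return count == null
                ? new CopyCountResult(1, 0)      // assumed convention: non-zero means "not found"
                : new CopyCountResult(0, count);
    }

    public static void main(String[] args) {
        CopyCountResult result = searchStationCopyCount("STATION_1", "DOCUMENT_TYPE_A");
        System.out.println(result.returnCode() + " " + result.copyCount());
    }
}
```

The three-line wrap-call-unwrap becomes a single call, and the casts disappear because the record's components are typed.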

Section-global state emulation. COBOL paragraphs share state through working storage, a flat namespace visible to every paragraph. The translator preserved that model by giving each service module its own Context class and turning every former local variable into a context field accessed through a wrapping getter on every term of every expression.

this.getServiceContext().setBrand(this.getServiceContext().getBrandCode());

this.getServiceContext().getInvoice().setBrandCode(this.getServiceContext().getBrand());

The deeper finding came from cross-referencing reads and writes: many context fields were written by exactly one paragraph and read by exactly that same paragraph. They had no business being state at all. They were locals masquerading as state because the translator did not know the difference.
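The cross-referencing step can be sketched roughly as follows, assuming the field accesses have already been extracted into a flat list. `FieldAccess` and `ContextFieldAudit` are hypothetical names for illustration; this is not how the G.Tx workflow is implemented, only the shape of the check.

```java
import java.util.HashMap;
import java.util.HashSet;
import java.util.List;
import java.util.Map;
import java.util.Set;
import java.util.TreeSet;

// One observed access to a context field: which paragraph touched it, and how.
record FieldAccess(String field, String paragraph, boolean isWrite) {}

class ContextFieldAudit {
    // Returns fields written by exactly one paragraph and read only by that
    // same paragraph: candidates for demotion from context state to a local.
    static Set<String> localCandidates(List<FieldAccess> accesses) {
        Map<String, Set<String>> writers = new HashMap<>();
        Map<String, Set<String>> readers = new HashMap<>();
        for (FieldAccess access : accesses) {
            Map<String, Set<String>> target = access.isWrite() ? writers : readers;
            target.computeIfAbsent(access.field(), k -> new HashSet<>()).add(access.paragraph());
        }
        Set<String> candidates = new TreeSet<>();
        for (Map.Entry<String, Set<String>> entry : writers.entrySet()) {
            Set<String> writingParagraphs = entry.getValue();
            // equals(null) is false, so fields that are never read are excluded here.
            if (writingParagraphs.size() == 1
                    && writingParagraphs.equals(readers.get(entry.getKey()))) {
                candidates.add(entry.getKey());
            }
        }
        return candidates;
    }
}
```

Fields that are written but never read fall out of this check on purpose; they belong to the strict dead code category instead.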

DTO bloat. COBOL PIC X(n) working-storage fields default to spaces, not null. The translator preserved the equivalent by initializing every Java string field to `""`. Every COBOL 01-level record became a Java DTO with one field, one getter, one setter, and one empty-string initializer per string field.

The IDE's redundant-initializer inspection only fires when the explicit value matches the JVM default. "" is not the default for String (which is null), so the inspection treated every empty-string initializer as intentional.

A few smaller patterns followed the same logic: identity assignments via UxRuntime.assign for COBOL MOVE statements that needed no coercion, and UxRuntime.memset calls on Java objects that did nothing. Each was invisible to static analysis because each looked like a real method call.

The same translator habits also produced latent correctness bugs, not just overhead. Methods that take a String parameter and reassign it across dozens of lines (a literal translation of COBOL BY REFERENCE) silently lose every write at return, because Java is pass-by-value for object references:

public void formatLetterMessage(Long period, Long invoiceId, String message) {

// 50+ lines of work, repeatedly reassigning `message`

message = StringUtils.replaceCharAt(message, charPos, ' ');

// method ends — every write is lost

}

Elsewhere in the same codebase, the translator used ValueHolder precisely to emulate pass-by-reference correctly. The pattern of forgetting to wrap is the bug. Try/catch blocks that perform conditional database lookups and write a result through a setter, only to be overwritten by an unconditional setter immediately after the block, fall in the same category: dead code at the line level, latent defect at the behaviour level. In a system without automated tests, neither shape had any chance of being noticed.
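The lost-write problem is easy to reproduce in isolation. The sketch below uses made-up method names and a trivial string replacement, not the client's code, to show why reassigning a String parameter never reaches the caller and what the idiomatic fix looks like.

```java
class PassByValueDemo {
    // Mirrors the translated shape: the parameter is reassigned,
    // but only the method's local copy of the reference changes.
    static void formatLost(String message) {
        message = message.replace('_', ' ');
    }

    // Idiomatic fix: return the new value so the caller can keep it.
    static String formatReturned(String message) {
        return message.replace('_', ' ');
    }

    public static void main(String[] args) {
        String message = "PAYMENT_DUE";
        formatLost(message);
        System.out.println(message);                 // prints PAYMENT_DUE: the write was lost
        System.out.println(formatReturned(message)); // prints PAYMENT DUE
    }
}
```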

Aggregate picture of the dead code

In this particular auto-translated codebase, strict dead code accounted for roughly 5–10% of the 275,000-line business-logic layer. Translation overhead accounted for another 35–45%. Together, roughly 45–55% of the auto-translated layer would not exist in a hand-written Java equivalent - between 120,000 and 150,000 lines of code carrying no semantic weight.
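The line range follows directly from the percentages. As a quick check (the class and method names are ours, for illustration only), 45% and 55% of the 275,000-line layer come to 123,750 and 151,250 lines, which we round to the 120,000 to 150,000 range quoted above.

```java
class WasteEstimate {
    // Lines of code implied by a whole-number percentage share of a layer.
    static long linesFor(long layerLines, int percent) {
        return layerLines * percent / 100;
    }

    public static void main(String[] args) {
        long businessLogicLayer = 275_000;
        // prints 123750 to 151250
        System.out.println(linesFor(businessLogicLayer, 45) + " to " + linesFor(businessLogicLayer, 55));
    }
}
```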

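The bulk of that volume came from the small number of patterns described above: ValueHolder marshalling, section-global state emulation through Context classes, and empty-string initializers in DTOs.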

These ratios reflect this specific auto-translated project. Other codebases, especially hand-written legacy systems, distribute their waste very differently. The methodology generalizes; the percentages do not.

In the worst-affected individual methods, 30–50% of the body was dead or boilerplate at the line level. A developer reading those methods was spending up to one line out of every two on mechanical noise before reaching anything that described the actual business behaviour.

Why this changes the modernization conversation

- Migration cost estimation collapses by half when overhead is removed. Quoting "275,000 lines to migrate" anchors the budget. Quoting "approximately 130,000 lines of real logic, plus 145,000 lines of removable overhead" reframes the engagement entirely — both in scope and in sequencing. This is, in concrete terms, the ROI of a feasibility analysis for legacy modernization: half the scope you were about to budget for is not real.

- AI-assisted transformation amplifies whatever you feed it. An agent asked to migrate the ValueHolder dance will faithfully migrate the ValueHolder dance. The Understand phase puts the cleanup before the migration, not after.

- Test generation is more reliable on real code than on boilerplate. Auto-generated tests for dead branches and clobbered setters pass but verify nothing. Understand-phase outputs allow downstream test-generation workflows to skip dead surface area entirely — which matters even more in systems like this one, where no test suite existed and behaviour had to be reconstructed from code rather than from assertions.

- Static analysis alone is insufficient. The IDE saw a small fraction of the problem here. The remainder required semantic analysis aware of the translator's idioms.

- Modernizing in place is a real option. Not every legacy system needs to be rewritten or replatformed. Once dead code and hidden dependencies are mapped, in-place hardening (removing overhead, recovering documentation, restoring testability) is often the higher-ROI path. The decision to migrate, modernize in place, or split the system between the two should follow the analysis, not precede it.

- Human-in-the-loop validation remains mandatory. Several findings were latent correctness bugs hiding behind dead code. Auto-deleting them without engineering review would be reckless. The output of a dead code analysis workflow is a curated, traceable finding set, not a green-light-to-delete list.

How the G.Tx Understand phase operationalizes this

The dead code analysis workflow produces, for each finding, a classification of what is dead, the location in the codebase, and the rationale explaining why it qualifies as dead. Aggregate counts per classification are available as well, so engineering teams can see both the individual evidence and the overall distribution of waste across the codebase. Every classification is traceable back to source locations or runtime evidence.
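To make the shape of that output concrete, here is a rough sketch of a finding record and the per-classification aggregate. The `Finding` type, the classification labels, and the file locations are hypothetical; they illustrate the structure described above and are not the G.Tx data model.

```java
import java.util.List;
import java.util.Map;
import java.util.TreeMap;
import java.util.stream.Collectors;

// Hypothetical finding shape: what is dead, where it is, and why it qualifies.
record Finding(String classification, String location, String rationale) {}

class FindingReport {
    // Aggregate count of findings per classification, sorted by classification name.
    static Map<String, Long> countsByClassification(List<Finding> findings) {
        return findings.stream().collect(
                Collectors.groupingBy(Finding::classification, TreeMap::new, Collectors.counting()));
    }

    public static void main(String[] args) {
        // Made-up sample findings; the locations are illustrative only.
        List<Finding> findings = List.of(
                new Finding("STRICT_DEAD", "InvoiceService.java:120", "branch condition is always false"),
                new Finding("TRANSLATION_OVERHEAD", "PrintService.java:57", "ValueHolder wrap-call-unwrap"),
                new Finding("TRANSLATION_OVERHEAD", "InvoiceDto.java:14", "empty-string initializer"));
        // prints {STRICT_DEAD=1, TRANSLATION_OVERHEAD=2}
        System.out.println(countsByClassification(findings));
    }
}
```

Keeping the rationale on every record is what lets a reviewer accept or reject a finding without re-deriving it from the code.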

And dead code is not only a code-level phenomenon. The same analytical lens applies one layer up: endpoints that no client has called in years, scheduled jobs that nobody remembers writing, service modules whose only consumer was decommissioned long ago, infrastructure quietly burning budget for traffic that no longer exists. Code-level dead code is a maintainability and correctness problem. Functionality-level dead code is a cost and risk problem. Both belong in the Understand phase, because both shape the same decision: what is worth migrating, what is worth hardening in place, and what should simply be turned off.

That last point matters for hallucination control. Models hallucinate when they infer from incomplete context. The artifacts produced during Understand (classified findings, traceable evidence, mapped dependencies) are exactly the grounding downstream agents need during transformation. Hallucination is reduced before any code is touched, because the model has real evidence to work with instead of having to guess at the codebase.

Lessons for legacy modernization

- Quantify before you migrate. The single most valuable artifact in a modernization engagement is a defensible number for real code volume. Without it, every estimate is fiction.

- Auto-translation defers cost; it does not eliminate it. A clean compile is not evidence of a maintainable codebase. In this engagement, half the code was overhead, and the only thing keeping that overhead in production was that nobody was confident enough to touch it. The code was unreadable, undocumented, and unverified. Removing anything felt riskier than leaving everything.

- The IDE is not enough. Roughly 90% of the waste in this codebase was invisible to off-the-shelf static analysis. Semantic, codebase-aware analysis closed the gap.

- Dead code is a correctness signal, not just a hygiene problem. Several of the patterns we found were latent bugs the team did not know they had.

- Modernization is an orchestration problem. No single prompt, agent, or tool produces findings of this kind. It takes reusable workflows, curated context, structured outputs, and disciplined human review.

Modernization decisions made without an Understand phase are decisions made about the wrong codebase. In this engagement, the "wrong codebase" was roughly twice the size of the real one, and the real one was the only one worth migrating.

---

If you suspect your own auto-translated or long-lived legacy system is carrying overhead nobody has measured, the G.Tx Understand phase exists precisely for that conversation. Reach out - we'll start with a focused feasibility analysis for legacy modernization and produce a defensible picture of what you actually have.

Connected car: Challenges and opportunities for the automotive industry

The development of connected car technology accelerated digital disruption in the automotive industry. Verified Market Research valued the connected car market at USD 72.68 billion in 2019 and projected its value to reach USD 215.23 billion by 2027. Along with the rapid growth of this market’s worth, we observe the constant development of new customer-centric services that go far beyond the driving experience.

While the development of connected car technology created a demand for connectivity solutions and driver-assistance systems, companies willing to build their position in this market have to face some significant challenges. This article is the first in a mini-series that guides you through the main obstacles in building software for connected cars. We start with the basics of a connected vehicle, then dive into the details of prototyping and providing production-ready solutions. Finally, we analyze and predict the future of verticals associated with automotive: car rental enterprises, insurers, and mobility providers.

This series provides you with hands-on knowledge based on our experience in developing production-grade and cutting-edge software for the leading automotive and car rental enterprises. We share our insights and pointers to overcome recurring issues that happen to every software development team working with these technologies.

What is a Connected Car?

A Connected Car is a vehicle that can communicate bidirectionally with other systems outside the car, such as infrastructure, other vehicles, or the home or office. Connected cars belong to the expanding environment of devices that comprise the Internet of Things landscape. As with all devices connected to the internet, some functions of a vehicle can be managed remotely.

Along with that, IoT devices are valuable sources of data and information that enable further development of associated services. Most car owners would describe a connected car as a mobile application paired with a car that allows users to check the fuel level, open or close doors, control air conditioning, and, in some cases, start the ignition, but this technology goes much further.

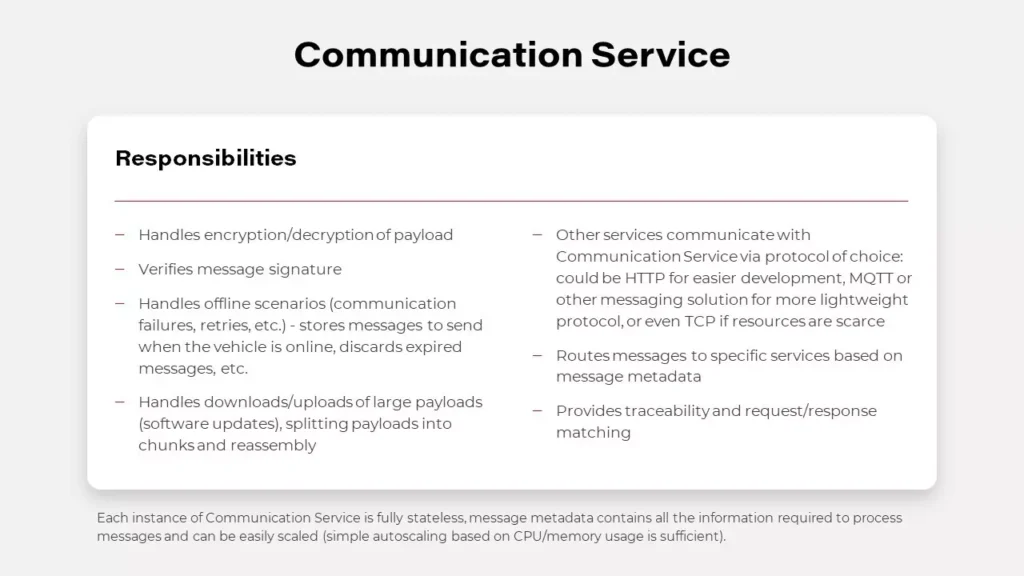

V2C - Vehicle to Cloud

Let’s focus on some real-world scenarios to showcase the capabilities of connected car technology. If a car is connected, it may also have a sat-nav system with a traffic monitoring feature that can alert a driver if there is a traffic jam ahead and suggest an alternative route. Or maybe there is a storm on the upcoming route and the navigation can warn the driver. How does it work?

That is mostly possible thanks to what we call V2C - Vehicle to Cloud communication. Because a connected car is constantly sending and gathering data, a driver can also locate it if it is stolen. Telematics data also helps to understand the reasons behind an accident on the road - we can analyze what happened before the accident and what may have led to the event. The data can also be used for predictive maintenance, even when the rules that determine service dates change dynamically.

While this may seem like just a nice-to-have feature for drivers, it allows car manufacturers to provide an extensive set of subscription-based features and functionalities for end users. The availability of services may depend on the current car state - location, temperature, and technical availability. As an example: during the winter, if the car is equipped with heated seats and the temperature drops below 0 °C, but the subscription for this feature has expired, the infotainment system can offer a renewal - which is more tempting when the user is cold at that moment.

V2I - Vehicle to Infrastructure

A vehicle equipped with connected car technology is not limited to communicating only with the cloud. Such a car is capable of exchanging data and information with road infrastructure, and this functionality is called V2I - Vehicle to Infrastructure communication. A car processes information from infrastructure components - road signs, lane markings, traffic lights - to support the driving experience by suggesting decisions. In the next steps, V2I can provide drivers with information about traffic jams and free parking spots.

Currently, in Stuttgart, Germany, the city’s infrastructure provides live traffic light data to vehicle manufacturers, so drivers can see not just which light is on, but how long they have to wait for the red light to switch to green again. This part of connected car technology can develop rapidly with the utilization of wireless communication and the digitalization of road infrastructure.

V2V - Vehicle to Vehicle

Another highly valuable type of communication provided by connected car technology is V2V - Vehicle to Vehicle. By developing an environment in which numerous cars are able to wirelessly exchange data, the automotive industry offers a new experience - every vehicle can use the information provided by a car belonging to the network, which leads to more effective communication covering traffic, car parking, alternative routes, issues on the road, or even some worth-seeing spots.

It may also significantly increase safety on the road when one car notifies another driving a few hundred meters behind it that it has just braked hard or that the road surface is slippery, using information from the ABS, ESP, or TC systems. This has not just informational value: it is also used by Adaptive Cruise Control or Travel Assist systems to reduce the speed of vehicles automatically, increasing the safety of the travelers. V2V communication makes use of network and scale effects - the more users are connected to the network, the more helpful and complete the information it provides.

The list of use cases for connected car technology is limited only by our imagination, and it is expanding rapidly as many teams join the movement aiming to transform the way we travel and communicate. The Connected Car revolution leads to many changes and impacts both user experience and business models of the associated industries.

How connected car technology impacts business models of the automotive industry

Connected cars bring innovative solutions to the whole environment comprising the automotive landscape. Original Equipment Manufacturers (OEMs) have gained new revenue streams. Now vehicles allow their users to access stores and purchase numerous features and associated services that enhance customer experience, such as infotainment systems. By delivering aftermarket services directly to a car, the automotive industry monetizes new channels. Furthermore, these systems enable automakers to deliver advertisements, which become an increasing source of revenue.

The development of new technology in automotive creates a change similar to the one we observed in the mobile phone market. When smartphones equipped with operating systems became the new normal, the number of apps grew significantly, and these apps now allow users to manage numerous services and tasks from the device.

But this is just an introduction to the numerous business opportunities provided by connected cars. Since data has become a new competitive advantage that fuels the digital economy, collecting and distributing data about user behavior and vehicle performance is seen as highly profitable, especially when taking into account the potential interest of insurance providers.

When used properly, the collected data gives OEMs powerful insights into customer behavior that should lead to the rapid growth of new technologies and products improving customer experiences, such as predictive maintenance or fleet management.

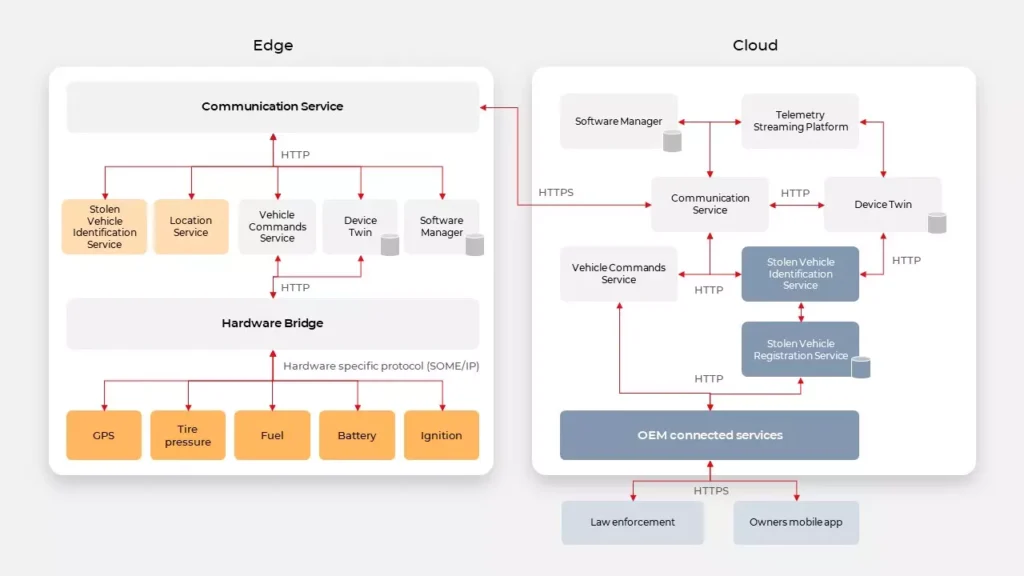

The architecture behind connected car technology

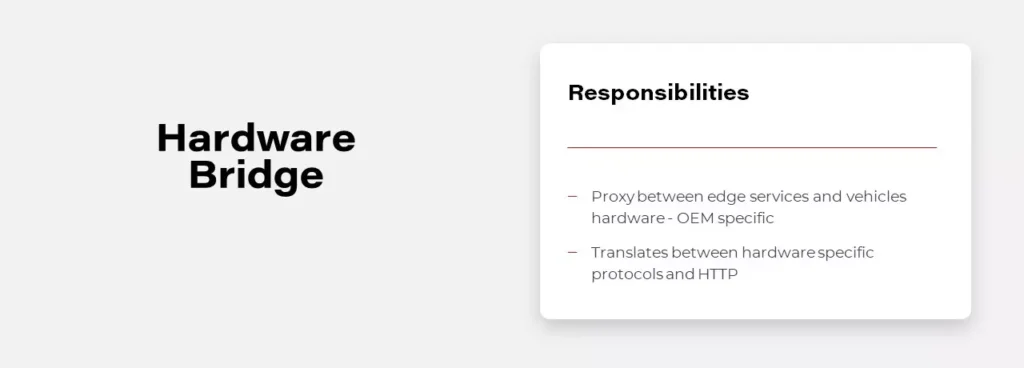

Automotive companies utilize data from vehicle sensors and allow 3rd party providers to access their systems through dedicated API layers. Let’s dive into such architecture.

High-Level Architecture

System components

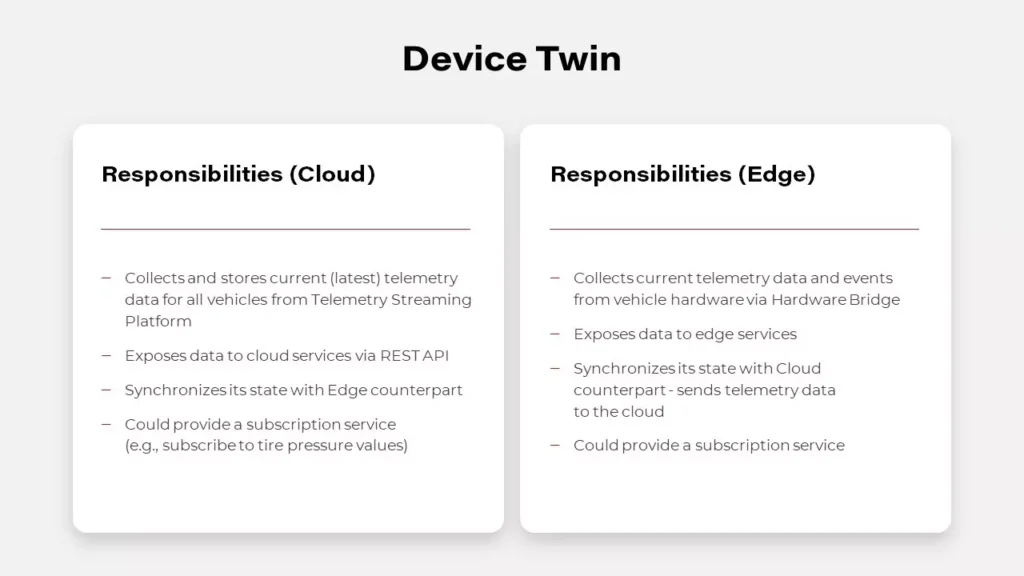

Digital Twin in automotive

A digital twin is a virtual replica and software representation of a product, system, or process. This concept is being adopted and developed in the automotive industry, as carmakers utilize its powerful capabilities to increase customer satisfaction, improve the way they develop vehicles and their systems, and innovate. A digital twin lets automotive companies collect information from numerous sensors and capture the operational and behavioral data generated by a vehicle. Equipped with these insights, the leading automotive enterprises work on enhancing performance and customizing the user experience, but in doing so they have to tackle significant challenges.

First of all, getting data from vehicles is problematic. Hardware built into vehicles has particular limits, which reduces what the software can do. Unlike software, hardware cannot be easily adjusted to changing conditions once shipped, and it stays in service for several years at least. Furthermore, while striving to deliver a customer-centric experience, automakers still have to protect their users from numerous threats. To protect against denial-of-service attacks, vehicles can throttle the number of requests. Overall, it’s a good idea, but it can have a terrible impact when multiple applications are trying to get data from vehicles, e.g., in the rental domain. A Digital Twin solves this problem: by gathering all real-time vehicle data in the cloud, it can expose that data to all applications without them needing to connect to the vehicle.

This pattern can be implemented using NoSQL databases like MongoDB or Cassandra and reliable communication layers; examples are described below. A Digital Twin may be implemented in two ways: unidirectional or bidirectional.

Unidirectional Digital Twin

A Unidirectional Digital Twin saves only values received from the vehicle; in case of conflict, it resolves the situation based on the event timestamp. However, that doesn’t mean the event causing the conflict is discarded and lost: usually every event is sent to the data platform. The data platform is a useful concept for data analysis and comes in handy when implementing complex use cases like analyzing driver habits.
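A minimal in-memory sketch of timestamp-based conflict resolution could look like this. The `VehicleEvent` and `UnidirectionalTwin` names are hypothetical, and a production twin would persist state in a database rather than a map; the point is only the "newest timestamp wins" rule.

```java
import java.time.Instant;
import java.util.HashMap;
import java.util.Map;

// A single reported property value with the time the vehicle produced it.
record VehicleEvent(String property, String value, Instant timestamp) {}

class UnidirectionalTwin {
    private final Map<String, VehicleEvent> state = new HashMap<>();

    // Applies an event only if it is newer than what the twin already holds.
    // Returns true when the twin state changed. In a fuller system every event,
    // applied or not, would still be forwarded to the data platform.
    boolean apply(VehicleEvent event) {
        VehicleEvent current = state.get(event.property());
        if (current != null && !event.timestamp().isAfter(current.timestamp())) {
            return false; // stale or duplicate event: keep the newer value
        }
        state.put(event.property(), event);
        return true;
    }

    // Latest known value for a property, or null if the vehicle never reported it.
    String valueOf(String property) {
        VehicleEvent event = state.get(property);
        return event == null ? null : event.value();
    }
}
```

An event that arrives late, for example after a connectivity gap, is ignored by the twin but is not lost to analytics.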

Bidirectional Digital Twin

The Bidirectional Digital Twin design is based on the concept of the current and desired state. The vehicle reports the current state to the platform, and the platform, in turn, tries to change the state in the vehicle to the desired value. Here, in case of conflict, the timestamp is not the only thing that matters, as some operations from the cloud may not be applicable to the vehicle in every state, e.g., the engine can’t be disabled when the vehicle is moving.
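The current/desired split can be sketched as below, reduced to a single property. `EngineState`, `BidirectionalTwin`, and the command strings are hypothetical names chosen for illustration; the sketch only encodes the rule from the example above, that an engine-disable request waits until the vehicle has stopped.

```java
import java.util.Optional;

// Hypothetical, simplified vehicle state: engine flag plus current speed.
record EngineState(boolean engineEnabled, int speedKmh) {}

class BidirectionalTwin {
    private EngineState current;           // last state reported by the vehicle
    private Boolean desiredEngineEnabled;  // what the platform wants; null if nothing was requested

    BidirectionalTwin(EngineState initial) {
        this.current = initial;
    }

    // Called when the vehicle reports its state.
    void report(EngineState reported) {
        this.current = reported;
    }

    // Called when the platform wants to change the state.
    void requestEngineEnabled(boolean enabled) {
        this.desiredEngineEnabled = enabled;
    }

    // Returns the command to send now, or empty when nothing is pending
    // or the current state does not allow the change yet.
    Optional<String> nextCommand() {
        if (desiredEngineEnabled == null
                || desiredEngineEnabled.booleanValue() == current.engineEnabled()) {
            return Optional.empty(); // nothing requested, or already in the desired state
        }
        if (!desiredEngineEnabled && current.speedKmh() > 0) {
            return Optional.empty(); // never disable the engine while the vehicle is moving
        }
        return Optional.of(desiredEngineEnabled ? "ENABLE_ENGINE" : "DISABLE_ENGINE");
    }
}
```

The desired value stays pending while the vehicle is moving, so the command is issued on a later reconciliation once a report shows the car at a standstill.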

However, developing a Digital Twin may be tricky, as it all depends on the OEM and the API it provides. Sometimes the API doesn’t expose enough properties or doesn’t provide real-time updates. In such cases, it may even be impossible to implement this pattern.

API

At first, designing a Connected Car API isn’t different from designing an API for any other backend system. It should start with an in-depth analysis of the domain, in this case, automotive. Then user stories should be written down, and from those the development team should be able to identify common parts and requirements and determine the most suitable communication protocol. There are a lot of possible solutions to choose from, including reliable, high-traffic-oriented message brokers like Kafka or hosted solutions like AWS Kinesis. However, the simplest solution based on HTTP can also handle the most complex cases when used with Server-Sent Events or WebSockets. When designing an API for mobile applications, we should also consider implementing push notifications for a better user experience.

When designing an API in the IoT ecosystem, you can’t rely too much on your connection with edge devices. There are a lot of connectivity challenges, for example, weak cellular coverage. You can’t guarantee when your command to a car will be delivered, or whether the car will respond in milliseconds or even at all. One of the best patterns here is to provide an asynchronous API. It doesn’t matter on which layer you’re building your software, whether it’s a connector between vehicle and cloud or a system communicating with the vehicle’s API provider. An asynchronous API allows you to limit your resource consumption and avoid timeouts that leave systems in an unknown state. It’s good practice to include a connector: the logic that handles all connection flaws. A well-designed connector should be responsible for retries, throttling, batching, and caching of requests and responses.
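As a rough sketch of the asynchronous pattern with retries, the connector below returns a future immediately and retries the vehicle call in the background. `VehicleConnector`, `sendAsync`, and the `vehicleCall` supplier are hypothetical names; throttling, batching, caching, and back-off are deliberately left out to keep the example short.

```java
import java.util.concurrent.CompletableFuture;
import java.util.function.Supplier;

class VehicleConnector {
    // Runs the call off the caller's thread and retries it on failure.
    // The caller gets a future right away instead of blocking on the vehicle.
    static CompletableFuture<String> sendAsync(Supplier<String> vehicleCall, int maxAttempts) {
        return CompletableFuture.supplyAsync(() -> {
            RuntimeException lastFailure = null;
            for (int attempt = 1; attempt <= maxAttempts; attempt++) {
                try {
                    return vehicleCall.get();
                } catch (RuntimeException e) {
                    lastFailure = e; // a real connector would wait (back off) before retrying
                }
            }
            // All attempts failed: complete the future exceptionally.
            throw new IllegalStateException(
                    "command failed after " + maxAttempts + " attempts", lastFailure);
        });
    }
}
```

A caller can attach a callback with `thenAccept` or `exceptionally` and return to other work, so a car with no signal ties up no request thread on the caller's side.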

OEMs are now implementing a unified API concept that enables their customers to communicate with their cars through the cloud at the same quality level as when they use direct connections (for example, using Wi-Fi). This means that the developer sees no difference between communicating with the car directly and using the cloud. What’s also worth noting: the unified API works well with the Digital Twin concept, which reduces communication with the vehicle, as third-party apps can connect to services in the cloud instead of communicating directly with an in-car software component.

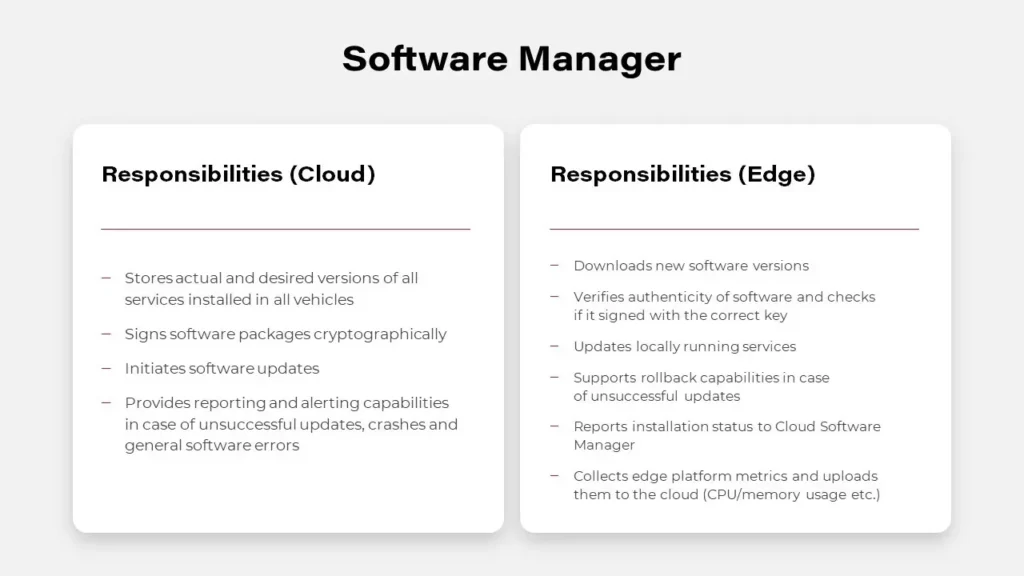

What’s next for connected car technology

Once these challenges are tackled, connected vehicles give automakers and adjacent industries a chance to establish beneficial cooperation, build new revenue streams, or even create completely new business models. Over-the-air (OTA) communication, which makes it possible to send fixes, updates, and upgrades to cars that have already been sold, provides new monetization channels and sustains customer relationships.

As previously mentioned, the global connected car market is projected to reach USD 215.23 billion by 2027. To acquire shares in this market, automotive companies are determined to adjust their processes and operations. Among the key factors that impact the development of connected car technology, a few are crucial. The average lifecycle of a car is about 10 years. Today, automakers make decisions regarding connected cars that will go into production two to four years from now. For the cellular connectivity strategy to remain relevant over 12 to 15 years, significant challenges and assumptions need to be collaboratively addressed by OEMs, telematics control unit suppliers, and service providers.

Automakers must manage software in the field reliably, cost-efficiently, and, most importantly, securely – not just patch fixes, but also continually upgrade and enhance the functionality. The availability of OTA updates reduces the burden on dealerships and certified repair centers but requires better and more extensive testing, as the breakage of critical features is not an option.

Cellular solutions need to be agile enough to stay compatible with emerging network technologies over the vehicle lifetime, e.g., 5G, which is expected to become the industry standard in the next few years. The chosen solution must deliver reliable, seamless, uninterrupted coverage in all countries and markets where the vehicles are sold and driven.

Solution developers must offer scalable, cost-effective ways to develop upgradeable software that can be universally deployed across technologies, hardware, and chipsets. A huge focus must be put on testing the changes automatically on both the cloud platform side and the vehicle side.

As Connected Vehicles proliferate, the auto industry will need to adapt to its growing dependency on technology. OEMs and Tier-1 manufacturers must partner with technology specialists to thrive in an era of software-defined vehicles. As connectivity requires skills and capabilities outside of the OEMs’ domain, automakers will necessarily have to become software developers. An open platform environment will go a long way to encourage external developers to design apps for vehicle connectivity platforms.

Transition towards data-driven organization in the insurance industry: Comparison of data streaming platforms

Insurance has always been an industry that relied heavily on data. But these days, it is even more so than in the past. The constant increase in data sources like wearables, cars, and home sensors, and in the amount of data they generate, presents a new challenge. The struggle is in connecting to all that data, then processing and understanding it to make data-driven decisions.

And the scale is tremendous. Last year the total amount of data created and consumed in the world was 59 zettabytes, which is the equivalent of 59 trillion gigabytes. The predictions are that by 2025 the amount will reach 175 zettabytes.

On the other hand, we’ve got customers who want to consume insurance products similarly to how they consume services from e-tailers like Amazon.

The key to meeting the customer expectations lies in the ability to process the data in near real-time and streamline operations to ensure that customers get the products they need when they want them. And this is where the data streaming platforms come to help.

Traditional data landscape

In the traditional landscape, businesses often struggled with siloed data or data stored in various incompatible formats. Commonly used solutions included:

- Big Data systems like Cassandra that let users store a very large amount of data.

- Document databases such as Elasticsearch that provide a rich interactive query model.

- Relational databases like Oracle and PostgreSQL.

That means there were databases with good query mechanisms, Big Data systems capable of handling huge volumes of data, and messaging systems for near-real-time message processing.

But no single solution could handle it all, so the need for a new type of solution became apparent: one capable of processing massive volumes of data in real time, processing data from a specific time window, scaling out, and handling ordered messages.
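To make "processing the data from a specific time window" concrete, here is a minimal sketch of a tumbling (fixed, non-overlapping) window count. The function name, the event shape `(timestamp_in_seconds, payload)`, and the 60-second window are assumptions chosen for illustration; real streaming platforms offer their own windowing APIs.

```python
from collections import Counter


def tumbling_window_counts(events, window_seconds):
    """Count events per fixed, non-overlapping time window.

    `events` is an iterable of (timestamp_in_seconds, payload) tuples.
    This event shape is a hypothetical one used only for this sketch.
    """
    counts = Counter()
    for timestamp, _payload in events:
        # Align each timestamp to the start of the window it falls into.
        window_start = (timestamp // window_seconds) * window_seconds
        counts[window_start] += 1
    return dict(sorted(counts.items()))


events = [(0, "a"), (12, "b"), (59, "c"), (60, "d"), (125, "e")]
counts = tumbling_window_counts(events, window_seconds=60)
print(counts)
```

With the sample events above, three events fall into the window starting at second 0, and one each into the windows starting at seconds 60 and 120.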

Data streaming platforms: pros & cons and when they should be used

A data stream is a continuous flow of data that can be processed, stored, analyzed, and acted upon in real time as it's generated. Data streams are generated by all types of sources, in various formats and volumes.

But what benefits does deploying data streaming platforms bring exactly?

- First of all, they can process the data in real-time.

- Data in the stream is an ordered, replayable, and fault-tolerant sequence of immutable records.

- In comparison to regular databases, scaling does not require complex synchronization of data access.

- Because the producers and consumers are loosely coupled with each other and act independently, it’s easy to add new consumers or scale down.

- They are resilient, thanks to the replayability of the stream and the decoupling of consumers and producers.
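The properties listed above (ordered, replayable, immutable records and independently acting consumers) can be sketched with a small in-memory model. This is an illustration only, not how any particular platform is implemented; the `Stream`, `Record`, and `Consumer` names are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Record:
    """An immutable record; frozen=True blocks attribute reassignment."""
    offset: int
    payload: str


class Stream:
    """A hypothetical in-memory, append-only log."""

    def __init__(self):
        self._records = []

    def append(self, payload):
        # Offsets grow monotonically, which gives records a total order.
        record = Record(offset=len(self._records), payload=payload)
        self._records.append(record)
        return record

    def read(self, from_offset=0):
        # Reading never removes records, so any consumer can replay them.
        return self._records[from_offset:]


class Consumer:
    """Tracks its own position, independently of producers and other consumers."""

    def __init__(self, name):
        self.name = name
        self.offset = 0

    def poll(self, stream):
        records = stream.read(self.offset)
        self.offset += len(records)
        return [record.payload for record in records]


stream = Stream()
for event in ("policy-created", "claim-filed", "claim-paid"):
    stream.append(event)

billing = Consumer("billing")
first_read = billing.poll(stream)

# A consumer added later replays the full history from offset 0.
analytics = Consumer("analytics")
replayed = analytics.poll(stream)
```

Because each consumer only stores its own offset, adding `analytics` requires no change to the producer or to `billing`.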

But there are also some downsides:

- Event streaming platforms such as Kafka lack features like message prioritization, which means data can’t be processed in a different order based on its importance.

- Error handling is not easy, and it’s necessary to prepare a strategy for it. Examples of such strategies are failing fast, ignoring the message, or sending it to a dead letter queue.

- Retry logic doesn’t come out of the box.

- Schema policy is necessary. Despite being loosely coupled, producers and consumers are still coupled by schema contract. Without this policy in place, it’s really difficult to maintain the working system and handle updates.

- Compared to traditional databases, data streaming platforms require additional tools to query the data in the stream, and querying won't be as efficient as querying a database.
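As an illustration of the error-handling and retry points above, the following sketch retries a failing handler a fixed number of times and then parks the message in a dead letter queue. The function names, the `max_attempts` default, and the choice of `ValueError` as the recoverable error are assumptions; a production version would usually add a backoff delay between attempts.

```python
def process_with_retry(messages, handler, max_attempts=3):
    """Apply `handler` to each message; park messages that keep failing.

    Hypothetical helper for illustration: it retries immediately, without
    the backoff delay a real consumer would normally use.
    """
    processed, dead_letters = [], []
    for message in messages:
        for attempt in range(1, max_attempts + 1):
            try:
                processed.append(handler(message))
                break
            except ValueError as error:
                if attempt == max_attempts:
                    # Retries exhausted: route to the dead letter queue
                    # instead of blocking the rest of the stream.
                    dead_letters.append(
                        {"message": message, "error": str(error), "attempts": attempt}
                    )
    return processed, dead_letters


def parse_premium(message):
    # float() raises ValueError for malformed input such as "n/a".
    return float(message)


processed, dead_letters = process_with_retry(["120.50", "n/a", "89"], parse_premium)
```

Here the malformed message ends up in `dead_letters` after three attempts, while the two valid messages are processed normally.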

Having covered the advantages and disadvantages of streaming technology, it’s important to consider when implementing a streaming platform is a valid decision and when other solutions might be a better choice.

When data streaming platforms can be used:

- Whenever there is a need to process data in real-time, e.g., feeding data to Machine Learning and AI systems.

- When it’s necessary to perform log analysis or check sensor and data metrics.

- For fraud detection and telemetry.

- To do low latency messaging or event sourcing.

When data streaming platforms are not the ideal solution:

- When the volume of events or messages is low, e.g., several thousand a day.

- When there is a need for random access to query the data for specific records.

- When it’s mostly historical data that is used for reporting and visualization.

- When payloads are large, such as big pictures, videos, documents, or binary large objects in general.

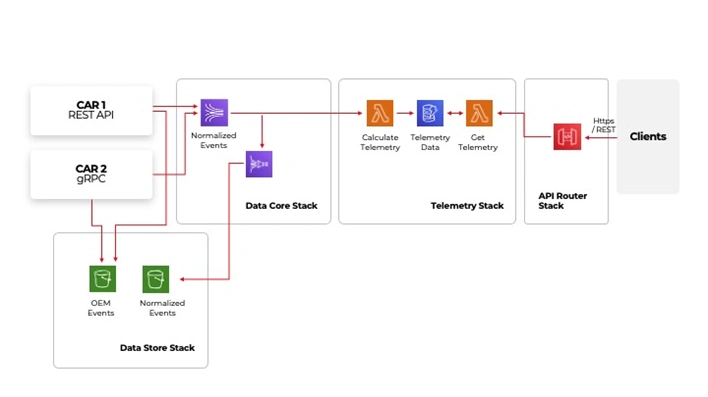

Example architecture deployed on AWS

On the left-hand side, there are integration points with vehicles. The way they are integrated may vary depending on the OEM or the make and model. Whatever protocol they use, however, they deliver data to our platform. The stream can receive the data in various formats, in this case depending on the car manufacturer. The data is processed and then sent to the normalized events stream. From there, it can be sent using a firehose to AWS S3 storage for future needs, e.g., historical data analysis or feeding Machine Learning models. After normalization, it is also sent to the telemetry stack, where the vehicle location and information about acceleration, braking, and cornering speed are extracted and then made available to clients through an API.
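The normalization step described above could look roughly like the following sketch. The two raw payload formats (`oem_a`, `oem_b`), their field names, and the normalized event shape are entirely hypothetical and stand in for whatever each manufacturer actually sends.

```python
MPH_TO_KMH = 1.609344


def normalize(raw):
    """Map a manufacturer-specific payload to one common event shape.

    Both input formats below are invented for illustration.
    """
    if raw["source"] == "oem_a":
        return {
            "vin": raw["vin"],
            "speed_kmh": raw["speed_kmh"],
            "timestamp": raw["ts"],
        }
    if raw["source"] == "oem_b":
        return {
            "vin": raw["vehicleId"],
            # This format reports miles per hour, so convert to km/h.
            "speed_kmh": round(raw["speedMph"] * MPH_TO_KMH, 1),
            "timestamp": raw["time"],
        }
    raise ValueError(f"unsupported source: {raw['source']}")


event_a = normalize({"source": "oem_a", "vin": "VIN-A", "speed_kmh": 72.0, "ts": 1700000000})
event_b = normalize({"source": "oem_b", "vehicleId": "VIN-B", "speedMph": 50, "time": 1700000005})
```

Downstream consumers, such as the storage and telemetry stages, then only need to understand the single normalized shape.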

Tool comparison

There are many tools available that support data streaming. This comparison is divided into three categories: ease of use, stream processing, and ordering & schema registry. It focuses on Apache Kafka as the most popular tool currently in use, and on RocketMQ and Apache Pulsar as more niche but capable alternatives.

It is important to note that these tools are open-source, so having a qualified and experienced team is necessary to perform implementation and maintenance.

Ease of use

- It is worth noting that commonly used tools have the biggest communities of experts. That leads to constant development and makes it easier for businesses to find talent with the right skills and experience. Kafka has the largest community, as RocketMQ and Pulsar are less popular.

- The tools consist of several services. One of them is usually a management tool that can significantly improve user experience. It is built in for Pulsar and RocketMQ, but unfortunately Kafka is missing it.

- Kafka has built-in connectors that help integrate data sources in an easy and quick way.

- Pulsar also has an integration mechanism that can connect to different data sources, but Rocket has none.

- The number of client libraries has to do with the popularity of the tool. And the more libraries there are, the easier the tool is to use. Kafka is widely used, and so it has many client libraries. Rocket and Pulsar are less popular, so the number of libraries available is much smaller.

- It’s possible to use these tools as a managed service. In that scenario, Kafka has the best support, as it is offered by all major public cloud providers: AWS, GCP, and Azure. RocketMQ is offered by Alibaba Cloud, and Pulsar by several niche companies.

- Some tools require extra services to work. Kafka has traditionally required ZooKeeper (newer releases can run without it in KRaft mode), RocketMQ doesn’t require any additional services, and Pulsar requires both ZooKeeper and BookKeeper, which have to be managed additionally.

Stream processing

Kafka is a leader in this category thanks to Kafka Streams, a built-in library that simplifies the implementation of client applications and gives developers a lot of flexibility. RocketMQ, on the other hand, has no built-in libraries, which means there is nothing to simplify the implementation and a lot of custom work is required. Pulsar has Pulsar Functions, a built-in feature that can be helpful but is basic and limited.

Ordering & schema registry

Message ordering is a crucial feature, especially when services process information based on transactions. Kafka offers just a single way of message ordering, and it’s through the use of keys. Messages with the same key are assigned to the same partition, and within the partition, the order is maintained.

Pulsar works similarly, either within a partition with the use of keys or per producer in SinglePartition mode when the key is not provided.
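Key-based ordering can be sketched as follows. This is a simplified model, not either platform's actual partitioner: it uses `zlib.crc32` as a stand-in hash, and the partition count and event data are assumptions made for the example.

```python
import zlib
from collections import defaultdict

NUM_PARTITIONS = 3  # assumed value for this sketch


def partition_for(key, num_partitions=NUM_PARTITIONS):
    # A stable hash of the key picks the partition, so the same key
    # always lands in the same partition. crc32 is a stand-in here.
    return zlib.crc32(key.encode("utf-8")) % num_partitions


partitions = defaultdict(list)
events = [
    ("policy-1", "created"),
    ("policy-2", "created"),
    ("policy-1", "updated"),
    ("policy-1", "cancelled"),
    ("policy-2", "renewed"),
]
for key, event in events:
    # Appending preserves arrival order within each partition.
    partitions[partition_for(key)].append((key, event))


def events_for(key):
    """Read back one key's events from the partition it maps to."""
    return [event for k, event in partitions[partition_for(key)] if k == key]
```

Order is guaranteed per key, because all of a key's messages share one partition. No order is guaranteed between messages that land in different partitions.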

RocketMQ works in a different way, as it ensures that the messages are always ordered. So if a use case requires that 100% of the messages are ordered then this is the tool that should be considered.

Schema registry is mainly used to validate and version the messages.

That’s an important aspect, as with asynchronous messaging, the common problem is that the message content is different from what the client app is expecting, and this can cause the apps to break.

Kafka has multiple implementations of schema registry thanks to its popularity and to being hosted by major cloud providers. RocketMQ is building its schema registry, but it is not known when it will be ready. Pulsar does have its own schema registry, and it works like the one in Kafka.
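To show what validating and versioning messages means in practice, here is a minimal sketch of a schema check. The in-memory `SCHEMAS` dictionary, the message envelope (`type`, `version`, `payload`), and the field names are hypothetical; a real schema registry is a separate service with its own formats and compatibility rules.

```python
# Hypothetical registry: (message type, version) -> required fields and types.
SCHEMAS = {
    ("claim", 1): {"claim_id": str, "amount": float},
    ("claim", 2): {"claim_id": str, "amount": float, "currency": str},
}


def validate(message):
    """Return a list of validation errors; an empty list means valid."""
    schema = SCHEMAS.get((message.get("type"), message.get("version")))
    if schema is None:
        return ["unknown schema"]
    payload = message.get("payload", {})
    errors = []
    for field_name, expected_type in schema.items():
        if field_name not in payload:
            errors.append(f"missing field: {field_name}")
        elif not isinstance(payload[field_name], expected_type):
            errors.append(f"wrong type for {field_name}")
    return errors


valid = {"type": "claim", "version": 1, "payload": {"claim_id": "c-1", "amount": 99.5}}
outdated = {"type": "claim", "version": 2, "payload": {"claim_id": "c-2", "amount": 10.0}}

valid_errors = validate(valid)
outdated_errors = validate(outdated)
```

Rejecting or quarantining a message at this point keeps a malformed payload from reaching the client app and breaking it.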

Things to be aware of when implementing a data streaming platform

- Duplicates. Duplicates can’t be avoided; they will happen at some point due to problems with things like network availability. That’s why exactly-once delivery is a useful feature that ensures messages are delivered only once. However, there are some issues with it. Firstly, only a few of the out-of-the-box tools support exactly-once delivery, and it needs to be set up before streaming starts. Secondly, exactly-once delivery can significantly slow down the stream. And lastly, end-user apps should still recognize the messages they receive so that they don’t process duplicates.

- “Black Fridays”. These are scenarios with a sudden increase in the volume of data to process. To handle these spikes, it is necessary to plan the infrastructure capacity beforehand. Tools with native auto-scaling, like Kinesis from AWS, will handle them out of the box. Custom-built ones, however, will crash without proper tuning.

- Popular deployment strategies are also a thing to consider. Unfortunately, deploying data streaming platforms is not a straightforward operation, and popular deployment strategies like blue/green or canary deployment won’t work.

- Messages should always be treated as a structured entity. As the stream will accept everything that we put in it, it is necessary to determine right from the start what kind of data will be processed. Otherwise, the end-user applications will eventually crash if they receive messages in an unexpected format.
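The advice above that end-user apps should recognize messages they have already received is often implemented as an idempotent consumer. The sketch below assumes every message carries a unique `id` field and keeps seen IDs in memory; both are simplifications, since a real service would persist the IDs and expire old ones.

```python
class IdempotentConsumer:
    """Skips messages whose ID has already been processed.

    Hypothetical helper: seen IDs live in an in-memory set here.
    """

    def __init__(self, handler):
        self._handler = handler
        self._seen_ids = set()

    def consume(self, message):
        message_id = message["id"]
        if message_id in self._seen_ids:
            return False  # duplicate delivery, safely ignored
        self._handler(message)
        # Mark as seen only after the handler succeeded, so a failed
        # message can still be retried later.
        self._seen_ids.add(message_id)
        return True


payments = []
consumer = IdempotentConsumer(lambda message: payments.append(message["amount"]))

deliveries = [
    {"id": "m-1", "amount": 100},
    {"id": "m-2", "amount": 250},
    {"id": "m-1", "amount": 100},  # redelivered after a network hiccup
]
results = [consumer.consume(message) for message in deliveries]
```

Even though `m-1` arrives twice, the payment is recorded once, which is the behavior the application needs regardless of the delivery guarantee the platform offers.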

Best practices while deploying data streaming platforms

- Schema management. This links directly with the previous point about treating the messages as a structured entity. A schema promotes a common data model and ensures backward/forward compatibility.

- Data retention. This is about setting limits on how long the data is stored in the data stream. Storing the data for too long while constantly adding new data to the stream will eventually exhaust the available resources.

- Capacity planning and autoscaling are directly connected to the “Black Fridays” scenario. During the setup, it is necessary to pay close attention to the capacity planning to make sure the environment will cope with sudden spikes in data volume. However, it’s also a good practice to plan for failure scenarios where autoscaling kicks in due to some other issue in the system and spins out of control.

- If the customer data geo-location is important to the specific use case from the regulatory perspective, then it is important to set up separate streams for different locations and make sure they are handled by local data centers.

- When it comes to disaster recovery, it is always wise to be prepared for unexpected downtime, and it’s easier to manage if the right toolset is in place.
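The data retention practice from the list above can be illustrated with a simple time-based cleanup. This is a sketch under assumptions: records are dictionaries with a `timestamp` in seconds, and the seven-day limit is an arbitrary example value. Real platforms apply retention through their own configuration rather than application code.

```python
SEVEN_DAYS = 7 * 24 * 60 * 60  # example retention period in seconds


def apply_retention(records, now, retention_seconds=SEVEN_DAYS):
    """Keep only records that are no older than the retention period."""
    cutoff = now - retention_seconds
    return [record for record in records if record["timestamp"] >= cutoff]


now = 1_700_000_000
records = [
    {"id": "old", "timestamp": now - 10 * 24 * 60 * 60},   # 10 days old
    {"id": "edge", "timestamp": now - SEVEN_DAYS},          # exactly at the limit
    {"id": "fresh", "timestamp": now - 60},                 # 1 minute old
]
retained = apply_retention(records, now)
```

Bounding the stream this way keeps storage use predictable; data that must live longer belongs in long-term storage rather than in the stream itself.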

It used to be that people were responsible for the production of most data, but in the digital era, the exponential growth of IoT has caused the scales to shift, and now machine and sensor data is the majority. That data can help businesses build innovative products and services and make informed decisions.

To unlock the value in data, companies need to have a comprehensive strategy in place. One of the key elements in that strategy is the ability to process data in real-time, so choosing the tool for the streaming platform is extremely important.

The ability to process data as it arrives is becoming essential in the insurance industry. Streaming platforms help companies handle large data volumes efficiently, improving operations and customer service. Choosing the right tools and approach can make a big difference in performance and reliability.