Distributed quality assurance: How to manage QA teams around the world to cooperate successfully on a single project

Ensuring distributed quality assurance and managing a QA team is not always as straightforward as one might think. There is no way to predict exactly how many bugs will be introduced into the code, and therefore no way to calculate precisely when those issues will be fixed. The planning process is very fluid, and the development team often needs the QA team’s attention and help to reproduce an issue. Such challenges arise even in the most common setup, where there is just one team. What about a project so big that three QA teams work together on ensuring quality and writing automated tests?

Distributed Quality Assurance

Scaling a project by introducing more teams gives rise to many different kinds of challenges. Sometimes the teams work in different time zones that overlap only slightly. Sometimes the culture and language differ. Sometimes, if the teams are hired through different vendors, the processes differ. In rare cases, all of these factors combine.

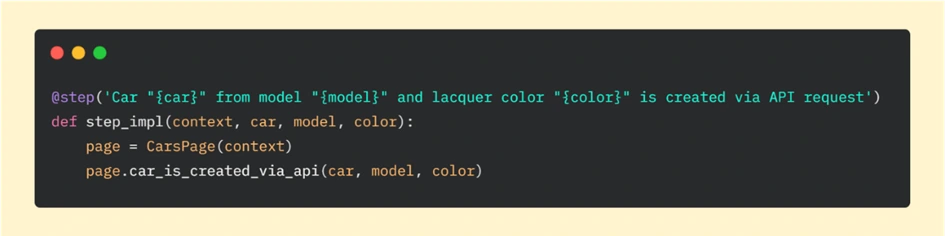

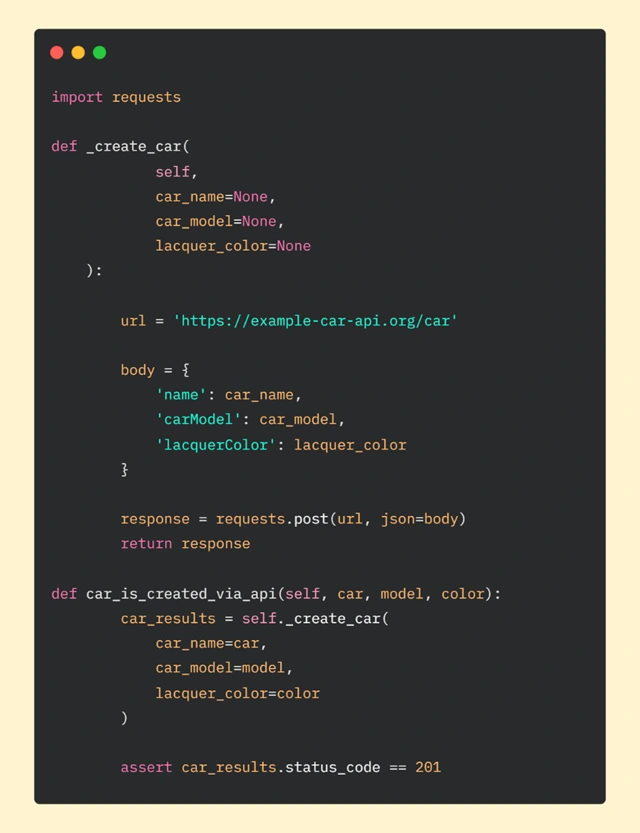

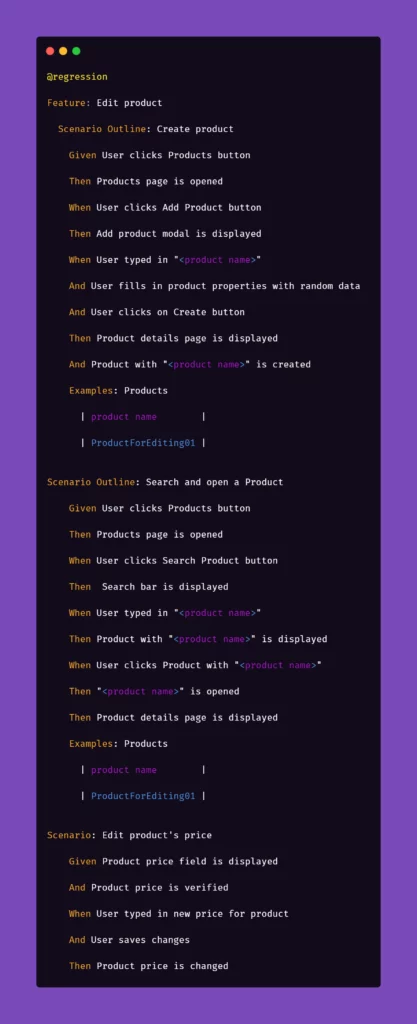

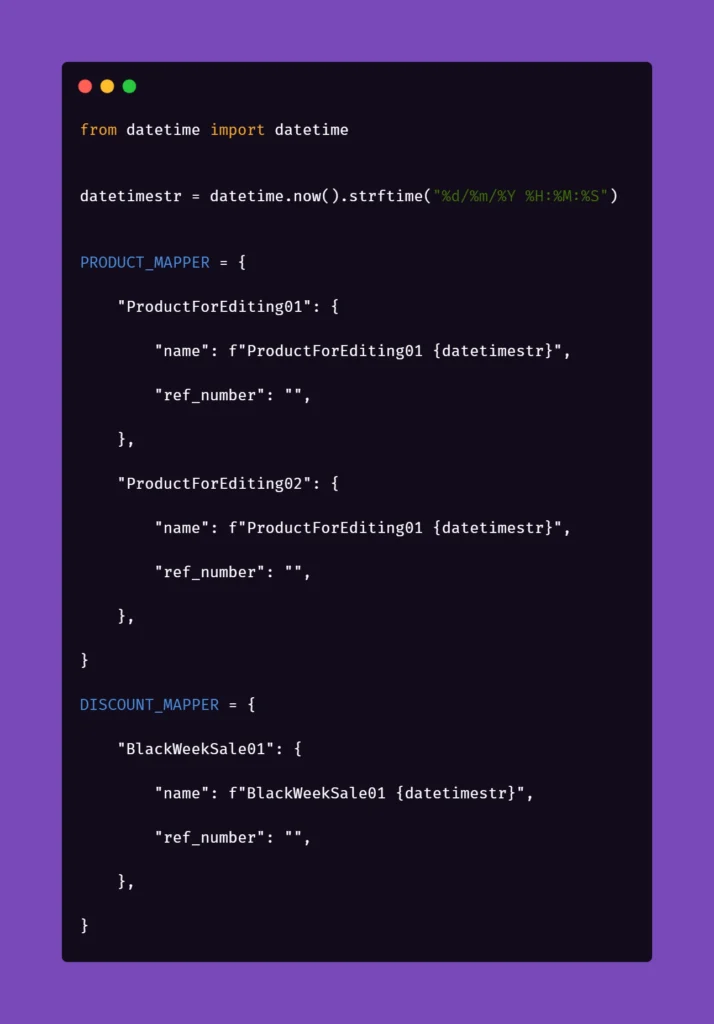

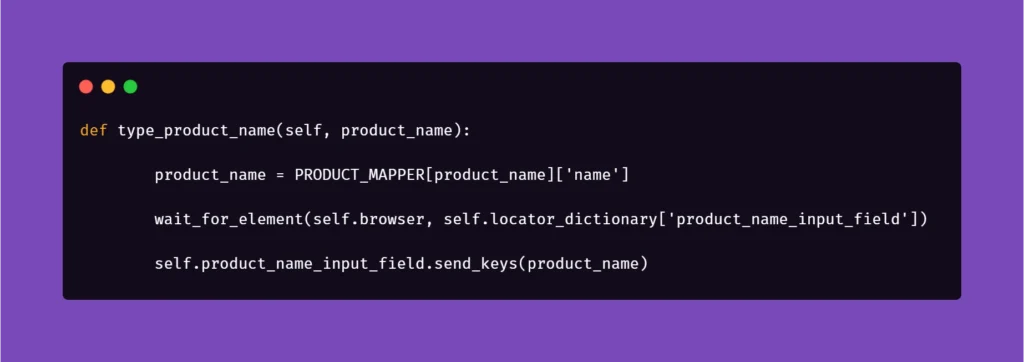

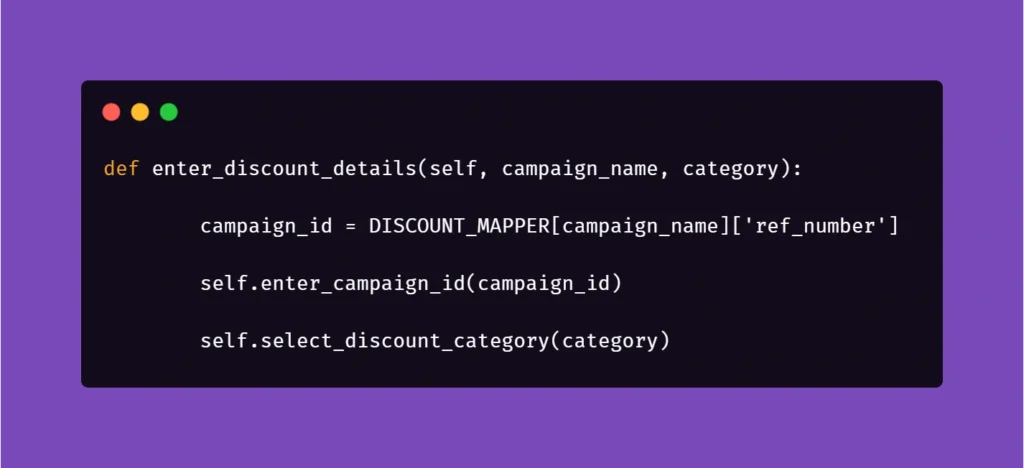

We are going to look at precisely that situation. The project involved multiple teams simultaneously writing automated Selenium tests for a single, large-scale web project. Testers were working from Europe, Asia, and the US. Due to customer policy, all the source code for the automated tests had to be pushed to a single Git repository.

Initially, no cooperation process was defined, as other teams joined the project organically when the parts of the application they were responsible for were integrated into the system. At that point, nobody anticipated the inevitable disaster.

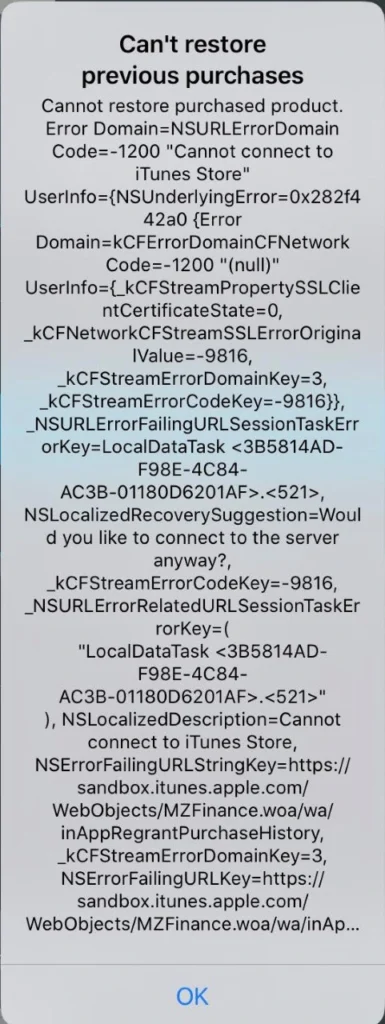

The realization came when a new pull request suddenly arrived. It contained six months of work and thousands of lines of code from multiple developers. It was impossible to review such a big change and its impact on all the other code, and especially to foresee possible invisible conflicts.

At this point, we knew that we had to create a common process for all teams. We couldn’t just discard six months of development, but at the same time, we couldn’t merge it without thorough verification. One idea was to split the work into multiple groups of branches, with each team having its own set of development, integration, master, and so on, but this contradicted the very idea of cooperation between the teams.

First, we asked for the huge PR to be split into feature branches, ideally one branch per test or one branch per feature being tested, and then merged one by one. Going forward, all new tests were to be added the same way: as relatively small pull requests instead of large chunks of code that are impossible to digest during code review.
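A rule like "keep pull requests small" is easier to follow when it is checked automatically. The sketch below is a minimal, hypothetical example of such a check: the `FileChange` shape, the function name, and both limits are assumptions made for illustration, not values used on the project.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FileChange:
    """One changed file in a pull request (hypothetical shape)."""
    path: str
    added: int
    deleted: int


def size_problems(changes, max_lines=400, max_files=15):
    """Return human-readable reasons why a PR is too large to review.

    Both limits are illustrative assumptions, not values from the project.
    An empty list means the PR passes the size check.
    """
    problems = []
    total_lines = sum(c.added + c.deleted for c in changes)
    if total_lines > max_lines:
        problems.append(f"{total_lines} changed lines (limit {max_lines})")
    if len(changes) > max_files:
        problems.append(f"{len(changes)} changed files (limit {max_files})")
    return problems


# A single-test PR versus a months-long accumulation of work.
small_pr = [FileChange("tests/test_login.py", added=60, deleted=5)]
huge_pr = [FileChange(f"tests/test_{i}.py", added=200, deleted=0) for i in range(40)]

print(size_problems(small_pr))
print(size_problems(huge_pr))
```

The right limits depend on the team; the point is only that an oversized PR gets flagged before a reviewer has to open it.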

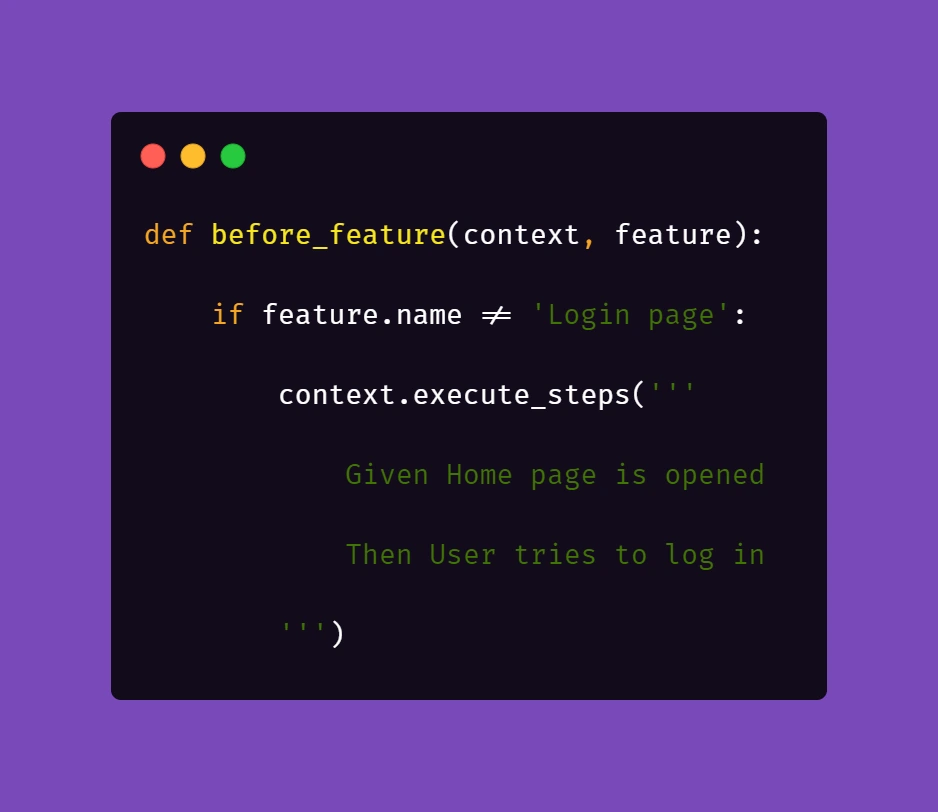

We had to remind the teams that in such a setting, staying in sync with the latest changes saves everyone a ton of work. The process we introduced made it mandatory to pull the latest code from the development branch at least once a day to make sure there are no new conflicts.
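One way to make the daily sync visible is to report how far a local branch has fallen behind the shared branch. The following is a rough sketch rather than the tooling used on the project: it assumes the shared branch is reachable as `origin/development` (the actual remote and branch names are not given here) and relies on `git rev-list --left-right --count`, which prints the number of commits unique to each side of a symmetric difference.

```python
import subprocess


def parse_behind_ahead(rev_list_output):
    """Parse the output of `git rev-list --left-right --count BASE...HEAD`.

    Git prints two numbers: commits only on BASE (how far we are behind)
    and commits only on HEAD (how far we are ahead).
    """
    behind, ahead = rev_list_output.split()
    return int(behind), int(ahead)


def commits_behind(base="origin/development"):
    """Fetch from origin and return how many commits HEAD is behind `base`.

    The default branch name is an assumption made for this sketch.
    """
    subprocess.run(["git", "fetch", "--quiet", "origin"], check=True)
    result = subprocess.run(
        ["git", "rev-list", "--left-right", "--count", f"{base}...HEAD"],
        check=True,
        capture_output=True,
        text=True,
    )
    behind, _ahead = parse_behind_ahead(result.stdout)
    return behind


# Parsing a sample of git's output: 3 commits behind, 1 commit ahead.
sample = parse_behind_ahead("3\t1\n")
print(sample)
```

Calling `commits_behind()` inside a clone at the start of the day, or in a pre-push hook, gives an early warning before conflicts pile up.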

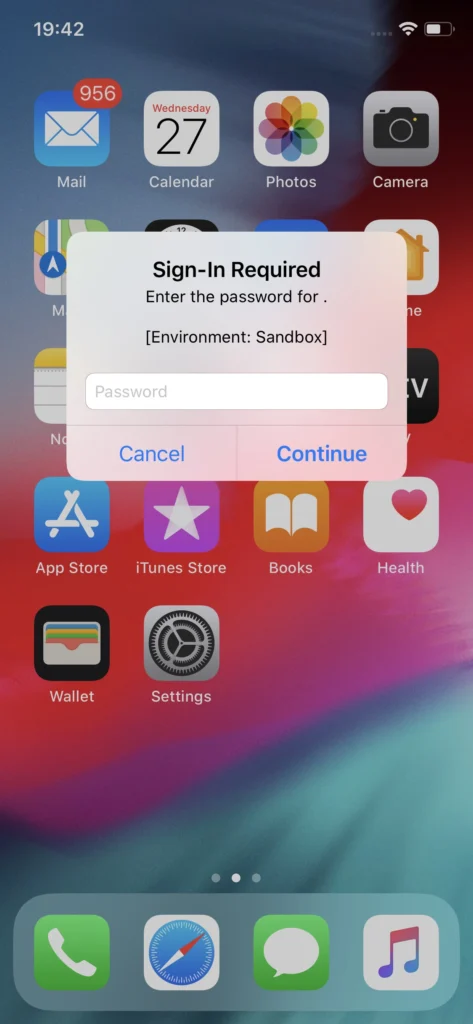

Then we created separate pipelines for the other teams to test their changes, because sharing a pipeline was a blocker even with only a small overlap in working hours.

Additionally, the process requires at least two approvers from different teams before changes can be merged into the development branch. This way, teams not only keep an eye on code quality but also make sure that changes from other teams do not impact their work.
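The approval rule can be expressed as a small predicate. This is an illustrative sketch only: the `Approval` type, the team names, and the function name are hypothetical, and whether the author's own team counts toward the two teams is left open here because the rule as described does not say.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Approval:
    """A single approving review (hypothetical shape)."""
    reviewer: str
    team: str


def has_cross_team_approval(approvals, required_teams=2):
    """True when the approvals come from at least `required_teams` teams.

    Approvals from distinct teams imply distinct reviewers, so counting
    teams also guarantees at least that many approvers.
    """
    teams = {approval.team for approval in approvals}
    return len(teams) >= required_teams


same_team = [Approval("anna", "europe"), Approval("piotr", "europe")]
two_teams = [Approval("anna", "europe"), Approval("ravi", "asia")]

print(has_cross_team_approval(same_team))
print(has_cross_team_approval(two_teams))
```

Two reviewers from the same team do not satisfy the rule, which is what forces every change to be seen by someone outside the team that wrote it.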

It may sound like just a few small steps, but overall, implementing the new approach took almost two months. This included various meetings, agreements, presentations, and writing down the processes. On top of that, the teams had to integrate all PRs, big and small, into a new “version zero” codebase, which was then used as a fresh starting point.

The process was written down on a Confluence page accessible to all members of all teams. It includes not just the rules initially accepted by the teams, but also coding standards, a style guide, and links to the agile delivery process used for that particular project. Afterward, the result was presented to all teams, who agreed to use it from then on. Together, we also decided to hold a weekly sync just for the QA teams.

Key takeaways

The resulting process is working very well for us. It is a scaled-up version of the process we had used internally in our team, so the implementation went smoothly and swiftly. The velocity of automated test development also improved over time, as less time was spent on fixing conflicts and going through PRs.

Of course, this process is not a one-size-fits-all solution, and it does not answer every question you might have if you want to implement something similar in your QA automation development. Such a project includes shared code, such as libraries, which someone has to maintain and take responsibility for. Technical debt reduction also has to be agreed upon and split evenly between the teams. All in all, if development is based on a solid foundation like the process described here, it is easier to agree on smaller things along the way.
