Thinking out loud

Where we share the insights, questions, and observations that shape our approach.

How to run any type of workload anywhere in the cloud with open source technologies?

Nowadays, enterprises have the ability to set up and run a mature and open source cloud environment by bundling platform technologies like Cloud Foundry and Kubernetes with tools like BOSH, Terraform and Concourse. This bundle of open source cloud native technologies results in an enterprise grade and production-ready cloud environment, enabling enterprises to run any type of workload anywhere in an economically efficient way. Combining that with Enterprise Support from an experienced Enterprise IT Cloud solution provider gives enterprises the ideal enabler to achieve their digital transformation goals.

Reference architecture of an open source cloud environment

The reference architecture of an open source and enterprise grade cloud environment should at minimum contain these core elements:

- Infrastructure provisioning

- Release Management & Deployment

- Configuration Management

- Container based Runtime

- Orchestration & Scheduling

- Infrastructure & Application Telemetry software

- Monitoring & Analytics

- Application Deployment Automation

- Back-ups & Disaster Recovery

How to start?

For all the elements listed above, there are multiple open source technologies to choose from that one needs to be familiar with. The ‘Cloud Native Landscape’ from the Cloud Native Computing Foundation (CNCF) is an elaborate and up-to-date overview of the open source cloud native tools available.

Navigating through this landscape and the multitude of tools to choose from is not an easy task. With a dedicated team and an agile approach, every company is able to set up its own open source cloud environment. Nevertheless, bundling these technologies in a correct and seamless way is a complex undertaking. It takes an experienced and skilled partner to help enterprises simplify, automate and speed up the setup process, relying solely on the same open source technologies. This support provides additional security and mitigates risk.

Benefits

Having a single cloud environment is a big enabler for DevOps adoption within enterprises. It provides development teams with a unified platform, giving them space to focus solely on building valuable applications. Ops teams manage a homogeneous environment by using a tool like BOSH for release management and deployment for both Application Runtime and Container Runtime.

Technologies like Cloud Foundry and Kubernetes are independent from the underlying infrastructure, making it possible to use any combination of public and private cloud as your infrastructure layer of choice. At the end of the day, you shouldn’t underestimate the economic value here. Open source technologies that are supported by large user communities and the largest technology companies in the world make having your own Open Cloud Environment a very compelling option.

In-house vs Managed

In light of the growing complexity associated with managing DevOps, enterprises look to partners to provide managed services for their cloud environments. The main reason is that all the different stack components of a mature cloud environment can be complex for many IT organizations to deploy and manage on their own. With frequent required updates to the different components of the bundle, the average IT organization is not well suited to keep pace without help from a partner.

Having a partner provide managed services for the cloud environment creates additional benefits: it reduces complexity and frees up resources for areas that create more direct business value.

Personally, I’m excited to be a close witness and participant of this growing trend and look forward to seeing more enterprises run their production workloads on open source technologies.

Cloud native: What does it mean for your business?

We witness how the world of IT constantly changes. Today, like never before, it is more often defined as “being THE business” rather than just “supporting the business”. In other words, the conventional application architectures and development methods are slowly becoming inadequate in this new world. Grape Up, playing a key role in the cloud migration strategy, helps Fortune 1000 companies make a smooth transition. We build apps that support the business itself, we advocate the agile methodology, and implement DevOps to optimize performance.

To clarify the idea behind cloud native technologies, we’ve put together the most important insights to help you and your team understand the essentials and benefits of Cloud Native Applications:

Microservices architecture

First and foremost, one must come to terms with the fact that the traditional application architecture means complex development, long testing and releasing new features only in a specific period. The microservices approach is nothing like that. It deconstructs an application into many functional components. These components can be updated and deployed separately without having any impact on other parts of the application.

Depending on its functionality, every microservice can be updated as often as needed. For instance, a microservice that contains functionalities of a dynamic business offering will not affect other parts of the app that barely change at all. Thanks to this, an application can be developed without changing its fundamental architecture. Gone are the days when IT teams had to alter most of the application just to change one piece.

Operational design

One of the biggest issues that our customers face before the migration is the burden of moving new code releases into production. With monolithic architectures that combine the whole code into one executable, new code releases require deploying the entire application. Because the production environment isn’t the same as the development environment, it often becomes impossible for developers to detect potential bugs before the release. Also, testing new features without moving the whole environment to the new app version can become tricky. This, in turn, complicates releasing new code. Microservices solve this problem perfectly. Since the environment is divided, any changes in code are confined to separate executables. Thanks to this, updates do not change the rest of the application, which is what clients are initially concerned about.

API

One of the indisputable advantages of microservices over traditional methods is the fact that they communicate by means of APIs. As a result, you can release new features step by step, each alongside a new API version. And if any failures appear, you can shut off access to the new API while the previous version of your app is still operational. In the meantime, you can work on the new function.
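
The rollout-and-rollback idea can be sketched in a few lines. This is only an illustration in Python, not a real API gateway: the `VersionedApi` class, the version labels and the handlers are hypothetical names made up for this example.

```python
from typing import Callable, Dict


class VersionedApi:
    """Routes calls to one handler per API version and lets a version be switched off."""

    def __init__(self) -> None:
        self._handlers: Dict[str, Callable[[str], str]] = {}
        self._enabled: Dict[str, bool] = {}

    def register(self, version: str, handler: Callable[[str], str]) -> None:
        self._handlers[version] = handler
        self._enabled[version] = True

    def disable(self, version: str) -> None:
        # Shut off access to one version; the other versions keep serving traffic.
        self._enabled[version] = False

    def call(self, version: str, payload: str) -> str:
        if not self._enabled.get(version, False):
            raise LookupError(f"API version {version} is not available")
        return self._handlers[version](payload)


api = VersionedApi()
api.register("v1", lambda name: f"Hello, {name}")
api.register("v2", lambda name: f"Hello, {name}! Here is the new feature.")

# A failure is found in v2, so access to it is shut off while v1 stays operational.
api.disable("v2")
print(api.call("v1", "Ada"))
```

In a real system the switch would typically live in a router or gateway configuration rather than in application code, but the principle is the same: old and new versions run side by side, and only the faulty one is taken out of service.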

DevOps

At Grape Up, we often work on on-site projects alongside our clients. On the first day of the project, we are introduced to multiple groups that are in charge of various parts of the app’s lifecycle such as operations, deployment, QA or development. Each of them has its own processes. This creates long gaps between tasks being handed over from one group to another. Such gaps result in ridiculously long deployment time frames which are very harmful to an IT business, especially when frequent releases are more than welcome. To efficiently get rid of these obstacles and improve the whole process, we introduce clients to DevOps.

By and large, DevOps is nothing but an attempt to eliminate the gaps between IT groups. It’s an engineering culture that Grape Up experts teach clients to use. If they follow it properly, clients are able to transform manual processes into automated ones and start getting things done faster and better. The most important thing is to find the pain point in the application’s lifecycle. Let’s say that the QA department doesn’t have enough resources to test software and delays the entire process. A solution to this can be either to migrate testing to a cloud-based environment or to put developers in charge of creating tests that analyze the code. By doing so, the QA stage can take place simultaneously with the development stage, not after it. And this is what it takes to understand DevOps.

The transition to cloud-native software development is no longer an option, it is a necessity. We hope that all the reasons mentioned above prompted you to embark on a journey called “Cloud-Native”, a promising opportunity for your company to grow in the years to come. And even if you’re still feeling hesitant, don’t think twice. Our expertise combined with your vision can be a great start into a brighter future for your enterprise.

A slippery slope from agile to fragile: treat your team with respect

“Great things in business are never done by one person, they’re done by a team of people.”

said Steve Jobs. Now imagine if he didn’t have such an Agile approach. Would the iPhone ever exist?

Agile development is a set of methods and practices where solutions evolve thanks to the collaboration between cross-functional teams. It is also pictured as a framework which helps to ‘get things done’ fast. On top of that, it helps to set a clear division of who is doing what, to spot blockers and to plan ahead. We’ve all heard of this. Most of us even know the definition of Agile by heart. In the last few decades, Agile has become the approach for modern product development. But despite its popularity, it is still misinterpreted quite often and its core values tend to get abused. This misinterpretation has become so common that it has earned a name of its own, frAgile. In other words, it is what your product and team become if you don’t follow the rules.

The thin line between Agile and frAgile

Working by the principles of Agile means you are flexible and you’re able to deliver your product the way the customer wants it and on time. By and large, Agile teaches us how to work smarter and how to eliminate all barriers to working efficiently. However, there are times when the attempt to follow Agile isn’t taken with enough care and the whole plan fails. Just like trying to keep balance when it’s your first time on ice skates.

With that said, I will walk step by step through a few examples of how Agile can quickly and irreversibly become something it should never be in the first place. Later on, I will list tips on how to avoid stepping onto that slippery slope. So let’s take a look at the examples:

Your technical debt is going through the roof

Just like projects, sprints are used to accomplish a goal. Quite often though, when the sprint is already running, new decisions and changes keep flowing in. As a result, your team keeps restarting work over and over again and works all the time. Does it sound familiar?

Unfortunately, if this situation continues, everyone gets used to it and it becomes the norm. It usually leads to the biggest pile of technical debt you could ever imagine. Combine it with an endless list of defects caused by the lack of stability needed to nurture the code and you are doomed to failure.

You should always respond to change wisely. Despite the fact that the Agile methodology embraces changes and advocates flexibility, you shouldn’t overdo it. Especially not the changes that impact your sprint on a daily basis. Every bigger change ought to be consulted between sprints, and be based on the feedback received from users.

A big fish leaves the team and the project falls apart

Another not so fortunate thing that can happen is a team member leaving your team in the middle of a long-term, complex project that consists of more unstructured processes than meets the eye. With the job rotation in the contemporary world of IT, it happens all the time.

Once a Product Owner or a Team Leader is gone, none of the team members will be able to properly describe the system behavior and what should be delivered. As a result, deadlines will be missed and the quality of your product will remain a dream.

Find the balance between individuals and processes. And most importantly, never underestimate how your scope of work is documented and how the team is managed. Otherwise, after an important team member is gone, the rest will be left in a difficult position. So prepare them for such events. Take your time to estimate what might hold back your team and what is absolutely necessary for the fast pace of world-class engineering.

The project is nearly finished but your customer is nowhere near Agile

You would be surprised at how many companies out there are Agile… in their dreams. While appreciating the flexibility that Agile gives, they often confuse Agile working with flexible working and still work with the waterfall methodology in their minds. This can be spotted especially when someone overuses terms like sprint or scrum. In reality, actions speak louder than words and one doesn’t have to show off their “rich” vocabulary.

Therefore, if you agree on a strict scope of delivery in your contract, you might regret it later on. We all know that IT is all about Agile because plans tend to change. The only problem is that the list of features in the contract doesn’t. If the customer doesn’t fully understand the values of Agile, the business relationship can be put at risk.

Prioritize the collaboration with your client over contract negotiations. Focus on clear communication from the beginning and make sure that your client grasps the principles of Agile. Also, if along the way any unexpected changes to the established scope of work appear, make sure to carry them out in front of your client’s eyes.

Save me from drowning in frAgility

With all the above, here is how you can avoid messing up your work environment:

- Prepare user stories before planning the sprint. You will thank yourself later. If they are written collaboratively as part of the product backlog grooming process, they will leverage creativity and the team’s knowledge. Not only will this reduce overhead, but it will also accelerate the product delivery.

- Be careful with changes during the sprint. Or simply - avoid abrupt changes. Thanks to this, your code base will have its necessary stability for performance.

- Turn yourself into a true Agile evangelist. Face reality that not everyone understands the core values of the world’s most beloved methodology – not even your customers. So even if someone tells you that they use Agile, take it with a pinch of salt. Strong business partnerships are built upon expectations that are clear to both sides.

At Grape Up, we follow the principles behind the Agile Manifesto for software development. We believe that business people and developers must work together daily throughout the project. It helps us and our clients achieve mutual success on their way to becoming cloud-native enterprises.

Building an easy to maintain, reusable user interface on macOS

The value of the user interface

What you see inside an app is called the user interface (UI). Undoubtedly, it is an important part of every piece of software, and it’s no piece of cake to wrap the business logic in a fancy, self-explanatory and efficient visual representation.

How many times in your life have you heard not to judge a book by its cover? Probably quite a few. And how many times have you left an application and never come back just because you didn’t like the design at first sight? Probably more than just a few times.

At Grape Up, we achieve a successful UI through close cooperation between our software engineers (SE) and designers. And this article is about the software engineer’s part – programming UI for the macOS platform.

Programming user interface in Cocoa

Cocoa is the name given to the Application Programming Interface (API) used for building Mac apps. It is fully responsible for the appearance of apps, their distinctive feel and their responsiveness to user actions. It automates and simplifies many aspects of UI programming to comply with Apple's human interface guidelines (AHIG). It provides software engineers with several tools for UI development:

- Storyboards

- XIBs

- Custom code

Each of them gives the possibility to implement not only a fully functional, but also an incredibly useful and captivating UI. Having used all three of them at Grape Up, we could give you a comparative analysis of each tool, but we will not be discussing it here.

Why is it better to use XIBs and Storyboards?

Of course, it would be wrong to state that XIBs or Storyboards are simply better than the Custom code. Like always, there is no “silver bullet” – no universal approach or solution for every software development problem. On some occasions the Interface Builder (IB) won’t be helpful to achieve the desired appearance. However, years of hands-on experience backed up by numerous projects have allowed us to draw the following conclusions as to why it’s better to use the Interface Builder on a regular basis:

It’s easy to understand and update

Let’s say that the software engineer needs to deliver an update to an already existing container view with a number of subviews. In what case will he spend less time getting familiar with the existing solution and applying the update? When using XIB with visualized UI elements and layout constraints or when the UI is scripted in several hundred lines of code?

As another example, let’s imagine a set of very similar but still different views. The software engineer needs to extend this set with another view. In what case will he reuse the already existing solution instead of duplicating it? These are a few examples of regular SE tasks.

In practice, most of the time you would expect the UI to be available in a XIB/Storyboard, and not in the code. We can consider XIBs/Storyboards as a public high-level API which encapsulates all the details underneath. An IB design will provide a better general overview as well as decrease the chance of missing something.

One of the biggest arguments against XIBs is merge conflicts. Usually, they are caused by not paying enough attention to decomposing tasks during the project. This is exactly why we practice task decomposition, a project technique that breaks the workload down into smaller tasks before the actual work structure is created. Thanks to this, we are able to save a lot of time in the long run. By using IB, you can create as many granular UI elements as you want. Moreover, this is a proper way of targeting a reusable and easy to update User Interface.

It helps avoid mistakes and saves time

Another great advantage of the Interface Builder is the fact that it spares software engineers plenty of the so-called “dirty work”, such as object initialization, configuration, basic constraints management, etc. The UI can be implemented faster and with less code. By using IB, the engineer simply follows the rule – “Less code produces fewer bugs”.

It generates warnings and errors

The Interface Builder generates warnings and errors when working with the Auto Layout engine: unsatisfiable layouts, ambiguous layouts and logical errors. The raised issues are quite descriptive and self-explanatory. In many cases, the IB even suggests a solution. Thanks to these issues, it is a lot easier to prepare a proper and functional UI before even building the application, which would be nearly impossible to achieve when programming the UI in code. With that said, the IB is a great tool for both creating and testing the UI.

It accelerates the development cycle

The next useful feature that is available in IB is Previews. Thanks to it, the application interface can be previewed using the assistant editor, which displays a secondary editor side-by-side with the main one. It gives the possibility to check the designed Auto Layout on the fly and also significantly reduces development cycle time. Such a feature is especially helpful when supporting multiple localizations.

It helps educate new team members

As we all know, during the project lifetime engineers leave and join the team. To make the onboarding process smooth and effective, we introduce new team members to a UI which is organized in XIB files or in Storyboards. As a result, they are given a complete overview of the application’s functionalities. After all, we all know how the saying goes – “Human brains prefer visual content over textual content”.

XIBs and Storyboards extended use cases

Is there a better way of organizing your User Interface designs than just keeping them in your Resources folder? Of course. Why not organize them in a library or a framework? Having a UI framework with all the custom views (XIBs) along with controllers to manage them can be quite convenient. After all, having UI elements in visual representation and keeping them atomic enough supports extensibility, manageability and reusability. For those that work on large, long-running projects it might be really useful.

Conclusion - providing an easy to maintain user interface

The modern world is all about information and the ways of transferring, processing as well as selling it. Why bother yourself with some boring symbols if there already are more fascinating ways of dealing with it right around the corner? The Interface Builder delivers a quick, convenient and reliable way of programming your UI. So let’s save ourselves some time for a mutual discussion.

Steps to successful application troubleshooting in a distributed cloud environment

At Grape Up, when we execute digital transformation, we need to take care of a lot of things. First of all, we need to pick a proper IaaS that meets our needs, such as AWS or GCP. Then, we need to choose a suitable platform that will run on top of this infrastructure. In our case, it is either Cloud Foundry or Kubernetes. Next, we need to automate this whole setup and provide an easy way to reconfigure it in the future. Once we have the cloud infrastructure ready, we should plan how and what kind of applications we want to migrate to the new environment. This step requires analyzing the current state of the application portfolio and answering the following:

- What is the technology stack?

- Which apps are critical for the business?

- What kind of effort is required for replatforming a particular app?

Any components that are particularly troublesome or carry serious technical debt should be considered for modernization. This process is called “breaking the monolith”: we try to iteratively decompose the app into smaller parts, where each new part can be a new separate microservice. As a result, we end up with dozens of new or updated microservices running in the cloud.

So let’s assume that all the heavy lifting has been done. We have our new production-ready cloud platform up and running, we replatformed and/or modernized the apps and we have everything automated with the CI/CD pipelines. From now on, everything works as expected, can be easily scaled and the system is both highly available and resilient.

Application troubleshooting in a cloud environment

Unfortunately, quite often and soon enough we receive a report that some requests behave unusually in some scenarios. Of course, these kinds of problems are not unusual no matter what kind of infrastructure, frameworks or languages we use. This is a standard maintenance or monitoring process that each computer system needs to take into account after it has been released to production.

Despite the fact that cloud environments and cloud-native apps improve a lot of things, application troubleshooting might be more complex in the new infrastructure compared to what the ‘old world’ represented.

Therefore, I would like to show you a few techniques that will help you with troubleshooting microservices problems in a distributed cloud environment. To exemplify everything, I will use Cloud Foundry as our cloud-native platform and Java/Spring microservices deployed on it. Some tips might be more general and can be applied in different scenarios.

Check if your app is running properly

There are two basic commands in the CF CLI to check if your app is running:

- `cf apps` – this will list all applications deployed to the current space with their state and the number of instances currently running. Find your app and check if its state says “started”.

- `cf app <app_name>` – this command is similar to the one above, but will also show you more detailed information about a particular app. Additionally, since the app is running, you can also check the current CPU usage, memory usage and disk utilization.

This step should be first since it’s the fastest way to check if the application is running on the Cloud Foundry Platform.

Check logs & events

If your app is running, you can check its lifecycle events with:

`cf events <app_name>`

This will help you diagnose what was happening with the app. Cloud Foundry could have been reporting some errors before the app finally started. This might be a sign of a potential issue. Another example might be when events show that our app is being restarted repeatedly. This could indicate a shortage of memory which in turn causes the Cloud Foundry platform to destroy the app container.

Events give you just a broad view of what has happened with the app, but if you want more details you need to check your logs. Cloud Foundry helps a lot with handling your logs. There are three ways to check them:

- `cf logs <app_name> --recent` - dumps only recent logs. It will output them to your console so you can use Linux commands to filter them.

- `cf logs <app_name>` - returns a real-time stream of the application logs.

- Configure a syslog drain which will stream logs to your external log management tool (e.g. Papertrail or Splunk) - https://docs.cloudfoundry.org/devguide/services/log-management.html.

This method is only as good as the maturity and consistency of your logs, but the Cloud Foundry platform also helps by adding some standardization to them. Each log line will have the following info:

- Timestamp

- Log type – the CF component that is the origin of the log line

- Channel – either OUT (logs emitted on stdout) or ERR (logs emitted on stderr)

- Message
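
The four fields listed above make the output of `cf logs` easy to filter programmatically. The sketch below is a minimal Python illustration, assuming the common `<timestamp> [<log type>] <OUT|ERR> <message>` layout; the sample lines are invented, and `parse_log_line` is a hypothetical helper, not part of any Cloud Foundry tooling.

```python
import re
from typing import NamedTuple, Optional

# Assumed layout: "<timestamp> [<log type>] <OUT|ERR> <message>"
LOG_LINE = re.compile(
    r"^(?P<timestamp>\S+)\s+\[(?P<log_type>[^\]]+)\]\s+"
    r"(?P<channel>OUT|ERR)\s?(?P<message>.*)$"
)


class LogLine(NamedTuple):
    timestamp: str
    log_type: str
    channel: str
    message: str


def parse_log_line(line: str) -> Optional[LogLine]:
    """Split one log line into its four fields, or return None if it does not match."""
    match = LOG_LINE.match(line.strip())
    if match is None:
        return None
    return LogLine(**match.groupdict())


# Invented sample lines, shaped like typical `cf logs <app_name> --recent` output.
sample = [
    "2024-01-15T10:12:01.12+0000 [APP/PROC/WEB/0] OUT Started OrdersApplication",
    "2024-01-15T10:12:03.48+0000 [APP/PROC/WEB/0] ERR Connection refused: orders-db",
    "2024-01-15T10:12:03.90+0000 [RTR/1] OUT orders.example.com - GET /orders 502",
]

parsed = [parse_log_line(line) for line in sample]
errors = [entry for entry in parsed if entry is not None and entry.channel == "ERR"]
for entry in errors:
    print(entry.log_type, entry.message)
```

Filtering on the channel or the log type this way is roughly what a `grep` over the console output does, but it keeps the fields separate for further processing.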

Check your configuration

If you have investigated your logs and found out that the connection to some external service is failing, you must check the configuration that your app uses in its cloud environment. There are a few places you should look into:

- Examine your environment variables with the `cf env <app_name>` command. This will list all environment variables (container variables) and the details of each bound service.

- `cf ssh <app_name> -i 0` enables you to SSH into the container hosting your app. With the `-i` parameter you can point to a particular instance. Now, it is possible to check the files you are interested in to see if the configuration is set up properly.

- If you use any configuration server (like Spring Cloud Config), check if the connection to this server works. Make sure that the spring profiles are set up correctly and double-check the content of your configuration files.
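
The bound-service details printed by `cf env` are also available inside the container as the `VCAP_SERVICES` environment variable, which holds a JSON document. The following Python sketch lists which service instances are bound and which credential keys each one exposes, without printing the secret values. The `bound_services` helper and the example payload (service label, instance name, credentials) are assumptions made for illustration.

```python
import json
import os
from typing import Dict, List, Mapping


def bound_services(environ: Mapping[str, str] = os.environ) -> Dict[str, List[str]]:
    """Map each bound service instance name to the credential keys it exposes."""
    services = json.loads(environ.get("VCAP_SERVICES", "{}"))
    result: Dict[str, List[str]] = {}
    # Top-level keys are service labels; each label maps to a list of instances.
    for instances in services.values():
        for instance in instances:
            credentials = instance.get("credentials") or {}
            result[instance["name"]] = sorted(credentials)
    return result


# Invented example payload, shaped like a single bound MySQL service.
example_env = {
    "VCAP_SERVICES": json.dumps(
        {
            "p-mysql": [
                {
                    "name": "orders-db",
                    "label": "p-mysql",
                    "credentials": {"hostname": "10.0.0.5", "port": 3306, "username": "app"},
                }
            ]
        }
    )
}

print(bound_services(example_env))
```

If an expected service name or credential key is missing from the result, the binding itself is the first thing to check before looking at application-level configuration.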

Diagnose network traffic

There are cases in which your application runs properly, the entire configuration is correct, you don’t see anything extraordinary in your events and your logs don’t really show anything. This could be why:

- You don’t log enough information in your app

- There is a network related issue

- Request processing is blocked at some point in your web server

With the first one, you can’t really do much if your app is already in production. You can only prevent such situations in the future by putting more effort into implementing proper logging. To check if the second issue applies to you:

- SSH to the container/VM hosting your app and use the Linux `tcpdump` command. Tcpdump is a network packet analyzer which can help you check if the traffic on an expected port is flowing.

- Using `netstat -tlnp | grep <your_port>` you can check if there is a process that listens on your expected port (`-n` keeps port numbers numeric and `-p` shows the owning process). If it exists, you can verify if this is the proper one (e.g. a Tomcat server).

- If your server listens on the proper port but you still don’t see the expected traffic with tcpdump, then you might check firewalls, security groups and ACLs. You can use Linux netcat (the `nc` command) to verify if TCP connections can be established between the container hosting your app and the target server.
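
When `nc` is not installed in the container, the same reachability check can be approximated with a few lines of code. This is a minimal Python sketch of a TCP connect test; `can_connect` is a hypothetical helper, and the demonstration uses a throwaway listener on the loopback interface rather than a real target server.

```python
import socket


def can_connect(host: str, port: int, timeout: float = 3.0) -> bool:
    """Return True if a TCP connection to host:port can be established."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts and unresolvable host names.
        return False


# Demonstration against a throwaway listener on the loopback interface.
listener = socket.socket(socket.AF_INET, socket.SOCK_STREAM)
listener.bind(("127.0.0.1", 0))  # port 0 lets the OS pick a free port
listener.listen(1)
demo_port = listener.getsockname()[1]

reachable_while_listening = can_connect("127.0.0.1", demo_port)
listener.close()
reachable_after_close = can_connect("127.0.0.1", demo_port)

print(reachable_while_listening, reachable_after_close)
```

A failed connect only tells you that the path is blocked somewhere; whether the cause is a firewall, a security group or an ACL still has to be established separately.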

Print your thread stack

Your app is running and listening on the proper port, the TCP traffic is flowing correctly and your logging is well designed. But still there are no new logs for a particular request and you cannot diagnose where exactly the processing in your app has stopped.

In this scenario it might be useful to print the current thread stack with a Java tool called jstack. It’s a very simple and handy tool recommended for diagnosing what is currently happening on your Java server.

Once you have executed `jstack <pid>` (older JDKs also accept `-F` to force a dump when the process does not respond), you will see the stack traces of all Java threads that run within the target JVM. This way you can check if some threads are blocked and at what execution point they’ve stopped.

Implement /health endpoints in your apps

A good practice in microservice architecture is to implement the ‘/health’ endpoint in each application. Basically, the responsibility of this endpoint is to return the application health information in a short and concise way. For example, you can return a list of app external services with a status for each one: UP or DOWN. If the status is DOWN, you can tell what caused the error. For example, ‘timeout when connecting to MySQL’.

From the security perspective, we can return the global UP/DOWN information for all unauthenticated users. It will be used to quickly determine if something is wrong. The list of all services with error details will be accessible only for authenticated users with proper roles.

In Spring Boot apps, if you add a dependency on `spring-boot-starter-actuator`, you get extra `/health` endpoints. There is also a simple way to extend the default behavior: all you need to do is write custom health indicator classes that implement the `HealthIndicator` interface.
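
The behavior described in this section (a global UP/DOWN for everyone, per-service details only for authenticated users) can be sketched independently of any framework. The Python below is an illustration of the idea, not Spring Boot’s `HealthIndicator` API; `check_health` and the two check functions are hypothetical.

```python
from typing import Callable, Dict


def check_health(checks: Dict[str, Callable[[], None]], authenticated: bool) -> dict:
    """Run every check and build the /health response body."""
    details = {}
    for name, check in checks.items():
        try:
            check()  # a check signals failure by raising an exception
            details[name] = {"status": "UP"}
        except Exception as error:
            details[name] = {"status": "DOWN", "error": str(error)}

    overall = "UP" if all(d["status"] == "UP" for d in details.values()) else "DOWN"
    response = {"status": overall}
    if authenticated:
        # Error details are only exposed to authenticated callers.
        response["services"] = details
    return response


def mysql_check() -> None:
    raise TimeoutError("timeout when connecting to MySQL")


def queue_check() -> None:
    return None


checks = {"mysql": mysql_check, "queue": queue_check}
print(check_health(checks, authenticated=False))
print(check_health(checks, authenticated=True))
```

Keeping each dependency behind its own small check function is what makes the endpoint easy to extend: adding a new external service means adding one more entry to the dictionary.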

Use distributed HTTP tracing systems

If your system is composed of dozens of microservices and the interactions between them are getting more complex, then you might come across difficulties without any distributed tracing system. Fortunately, there are open source tools that solve such problems.

You can choose from HTrace, Zipkin or the Spring Cloud Sleuth library, which abstracts away many of the concepts behind distributed tracing. All these tools are based on the same concept of adding trace information to HTTP headers.

Certainly, a big advantage of using Spring Cloud Sleuth is that it is almost invisible for most users. Your interactions with external systems should be instrumented automatically by the framework. Trace information can be logged to standard output or sent to a remote collector service where you can visualize your requests better.
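
To make the header-based concept concrete, here is a minimal Python sketch of what a tracing library does on each hop. It assumes the B3 header names used by Zipkin (`X-B3-TraceId`, `X-B3-SpanId`, `X-B3-ParentSpanId`); the `outgoing_headers` helper is hypothetical and, for simplicity, treats header names as case-sensitive, which real HTTP headers are not.

```python
import secrets
from typing import Dict, Mapping


def new_id() -> str:
    """Generate a random 64-bit identifier as 16 lowercase hex characters."""
    return secrets.token_hex(8)


def outgoing_headers(incoming: Mapping[str, str]) -> Dict[str, str]:
    """Build trace headers for a downstream call made while handling `incoming`."""
    # Reuse the caller's trace ID so every hop belongs to the same trace,
    # or start a new trace if this service is the entry point.
    trace_id = incoming.get("X-B3-TraceId") or new_id()
    headers = {"X-B3-TraceId": trace_id, "X-B3-SpanId": new_id()}
    parent_span = incoming.get("X-B3-SpanId")
    if parent_span:
        headers["X-B3-ParentSpanId"] = parent_span
    return headers


# Service A receives an untraced request and calls service B, which calls service C.
a_to_b = outgoing_headers({})
b_to_c = outgoing_headers(a_to_b)
print(a_to_b)
print(b_to_c)
```

Because the trace ID stays constant across hops while each hop gets its own span ID, a collector can later stitch the individual calls back into one request tree.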

Think about integrating APM tools

APM stands for Application Performance Management. These tools are often external services that help you to monitor your whole system’s health and diagnose potential problems. In most cases, you will need to integrate them with your applications. For example, you might need to run an agent in parallel with your app which will report your app diagnostics to an external APM server in the background.

Additionally, you will have rich dashboards for visualizing your system’s state and its health. You have many ways to adjust and customize those dashboards according to your needs.

APM examples: New Relic, Dynatrace, AppDynamics

These tools are must-haves for a highly available distributed environment.

Remote debugging in Cloud Foundry

Every developer is familiar with the concept of debugging, but most of the time we are talking about local debugging, where you run the code on your own machine. Sometimes, you receive a report that something doesn’t behave the way it should on one of your testing environments. Of course, you could deploy that particular version to your local environment, but it is hard to simulate all aspects of the target environment. In this case, it might be best to debug the application in the place where it is actually running. To perform a remote debug procedure on Cloud Foundry, see the below:

Please note that you must have the same source code version opened in your IDE. This method is very useful for development or testing environments. However, it shouldn’t be used on production environments.

Summary of application troubleshooting in cloud native

To sum up, I hope that all of the above will help you troubleshoot microservice applications in a distributed cloud environment, so that everything works as expected, scales easily and the system is both highly available and resilient.

Kubernetes as a solution to container orchestration

Containerization

Kubernetes has become a synonym for containerization. Containerization, also known as operating-system-level virtualization, provides the ability to run multiple isolated containers on the same kernel, that is, on the same operating system core that controls everything inside the system. It brings a lot of flexibility in terms of managing application deployment.

Deploying a few containers is not a difficult task. It can be done by means of a simple tool for defining and running multi-container Docker applications like Docker Compose. Doing it manually via command line interface is also a solution.

Challenges in the container environment

Since the container ecosystem moves fast, it is challenging for developers to stay up-to-date with what is possible in the container environment. It’s usually in the production system where things get more complicated, as a mature architecture can consist of hundreds or thousands of containers. But then again, it’s not the deployment of such a swarm that’s the biggest challenge.

What’s even more demanding is the system quality known as High Availability: multiple instances of the same container must be distributed across the nodes available in the cluster. The type of application that lives in a particular container dictates the distribution algorithm that should be applied. Once the containers are deployed and distributed across the cluster, we encounter another problem: the system behavior in the presence of node failure.

Luckily enough, modern solutions provide a self-healing mechanism. If a node hits its capacity limits or goes down, the container will be redeployed on a different node to ensure stability. With that said, managing multiple containers without a sophisticated tool is almost impossible. This sophisticated tool is known as a container orchestrator. Companies have many options when it comes to platforms for running containers, and deciding which one is the best for a particular organization can be a challenging task in itself. There are plenty of solutions on the market, among which the most popular one is Kubernetes [1].
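The two problems described above, spreading instances across nodes and recovering from a node failure, can be illustrated with a toy model. The `ToyCluster` class below is purely hypothetical and assumes the simplest possible strategy (place each container on the least-loaded node); a real orchestrator takes far more into account, such as resource requests and affinity rules.

```java
import java.util.ArrayList;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;

/** Toy scheduler for illustration only; not how a real orchestrator works. */
final class ToyCluster {
    // Node name -> names of the containers currently placed on that node.
    private final Map<String, List<String>> nodes = new LinkedHashMap<>();

    void addNode(String node) {
        nodes.put(node, new ArrayList<>());
    }

    /** Places a container on the node that currently runs the fewest containers. */
    String place(String container) {
        String target = null;
        for (Map.Entry<String, List<String>> e : nodes.entrySet()) {
            // Strict comparison: on a tie, the node that was added first wins.
            if (target == null || e.getValue().size() < nodes.get(target).size()) {
                target = e.getKey();
            }
        }
        if (target == null) {
            throw new IllegalStateException("no nodes available");
        }
        nodes.get(target).add(container);
        return target;
    }

    /** Simulates a node failure: removes the node and re-places its containers. */
    void failNode(String node) {
        List<String> orphaned = nodes.remove(node);
        if (orphaned == null) {
            return; // unknown node, nothing to heal
        }
        for (String container : orphaned) {
            place(container);
        }
    }

    /** Returns a copy of the containers on a node (empty if the node is gone). */
    List<String> containersOn(String node) {
        List<String> placed = nodes.get(node);
        return placed == null ? new ArrayList<>() : new ArrayList<>(placed);
    }
}
```

With three nodes and three instances of the same container, each node receives one instance; when one node fails, its instance is moved to a surviving node, so the number of running instances stays the same.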

Kubernetes

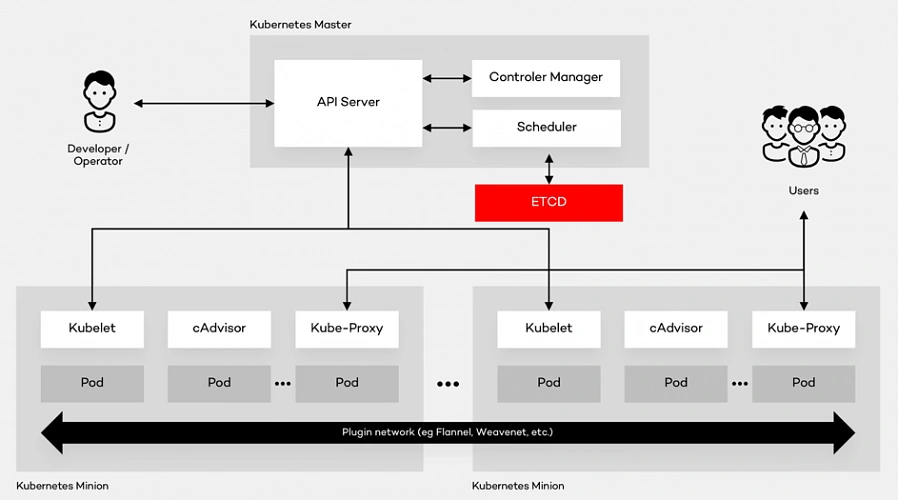

Kubernetes is the open source container platform first released by Google in 2014. The name Kubernetes comes from Ancient Greek and means “Helmsman”. The whole idea behind this open-source project was based on Google’s experience of running containers at an enormous scale. The company uses Kubernetes for Google Kubernetes Engine (GKE, formerly Google Container Engine), its own Container as a Service (CaaS). It shouldn’t be a surprise to anyone that numerous other platforms, such as IBM Cloud, AWS or Microsoft Azure, support Kubernetes. The tool can manage the two most popular types of containers – Docker and Rocket (rkt). Moreover, it helps organize networking, computing and storage – three nightmares of the microservice world. Its architecture is based on two types of nodes – Master and Minion, as shown below:

Architecture glossary

- API Server – entry point for REST commands. It processes and validates the requests and executes the logic.

- Scheduler – it supports the deployment of configured pods and services onto the nodes.

- Controller Manager – uses the API server to watch the shared state of the cluster and makes changes if necessary.

- ETCD Storage – key-value store used mainly for shared configuration and service discovery.

- Kubelet – receives the configuration of a pod from the apiserver and makes sure that the right containers are running. It also communicates with the master node.

- cAdvisor – (Container Advisor) it collects and processes information about each running container. Most importantly, it helps container users understand the resource usage and performance characteristics of their containers.

- Kube-Proxy – runs on each node. It manages the network routing for TCP (Transmission Control Protocol) and UDP (User Datagram Protocol) packets, which are used for sending bits of data.

- Pod – the fundamental element of the architecture, a group of containers that, in a non-containerized setup, would all run on a single server.
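The Controller Manager entry in the glossary above boils down to comparing a desired state with the observed one and acting on the difference. A minimal sketch of such a reconcile step might look as follows. The `ReplicaReconciler` class and its action strings are hypothetical; the sketch does not talk to a real API server and is not Kubernetes code.

```java
import java.util.ArrayList;
import java.util.List;

/** Hypothetical reconcile step, loosely modelled on a controller's control loop. */
final class ReplicaReconciler {
    /**
     * Compares the desired replica count with the pods currently observed
     * and returns the actions needed to converge on the desired state.
     */
    static List<String> reconcile(String app, int desired, List<String> observedPods) {
        List<String> actions = new ArrayList<>();
        // Too few pods: request one new pod per missing replica.
        for (int i = observedPods.size(); i < desired; i++) {
            actions.add("create pod for " + app);
        }
        // Too many pods: delete the surplus, starting after the first `desired` pods.
        for (int i = desired; i < observedPods.size(); i++) {
            actions.add("delete " + observedPods.get(i));
        }
        return actions;
    }
}
```

Calling `reconcile` repeatedly with fresh observations is what keeps the cluster converging: when the observed state already matches the desired one, the list of actions is simply empty.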

Architecture description

A Pod provides an abstraction over containers and makes it possible to group them and deploy them on the same host. The containers in the same Pod share a network, storage and a run specification. Every Minion Node runs a kubelet agent process, which connects it to the Master Node, as well as a kube-proxy, which can do simple TCP and UDP stream forwarding. The Kubernetes architecture model assumes that Pods can communicate with other Pods, regardless of which host they land on. Besides, Pods have a short lifetime: they’re created, destroyed and then created again depending on the server. Connectivity can be implemented using various methods (kube-router, L2 network etc.). In many cases, a simple overlay network based on Flannel is a sufficient solution.

Summary

As a company with years of experience in the cloud evolution, we advise enterprises to think even up to ten years into the future when choosing the right platform. It all depends on where they see technology heading. Hopefully, this summary will help you understand the fundamentals of containerization and the Kubernetes architecture and, in the end, make the right decision.