Enterprise AI voice agents

Custom AI voice agents that go beyond keyword recognition - they reason over your enterprise data, execute tasks, and adapt in real time. Deployed in the cloud or embedded on-device, built for security, low latency, and seamless integration with your existing systems.

Replace scripted interactions with intelligent voice agents

Automate complex interactions at scale

Handle Tier-1 and Tier-2 customer inquiries that previously required human agents. AI voice agents resolve issues end-to-end - not just route calls - reducing cost-per-contact while maintaining high resolution rates.

Scale to demand without quality trade-offs

Cloud-native architecture handles massive spikes in call volume during peak events without degradation. No seasonal staffing, no hold times, no service gaps.

Own a voice experience built for your business

Unlike off-the-shelf assistants, our voice agents are engineered around your brand guidelines, domain terminology, and compliance requirements - not adapted from a generic template.

Turn conversations into structured insights

Analyze thousands of hours of voice interactions to extract actionable data on customer pain points, product feedback, and operational patterns - insights that stay locked in traditional call center recordings.

Custom AI voice agents designed for mission-critical operations

We combine deep expertise in cloud-native infrastructure with advanced AI engineering to build voice solutions tailored to specific industry contexts. From architecture design through deployment and optimization, every component is engineered for your operational requirements.

LLM-powered conversational intelligence

Voice agents powered by Large Language Models that understand context across conversation turns and reference user history for personalized, coherent interactions.

Enterprise data access via RAG

Agents retrieve answers from your enterprise knowledge base in real time using Retrieval-Augmented Generation, respecting user permissions and data access policies.

Flexible deployment - cloud, edge, or hybrid

Run voice agents in the cloud for global scale, fully embedded on-device for offline capability (e.g., in-car infotainment, aviation systems), or in a hybrid setup combining both.

Autonomous task execution

Beyond conversation, agents trigger APIs to perform actions - booking flights, processing claims, adjusting vehicle settings, updating records - without human handoff.

Key features

Low-latency response

Optimized inference pipelines and speech-to-speech models deliver near-instant responses, making interactions feel natural rather than transactional.

Contextual memory

The agent retains context across conversation turns and references past user history to deliver personalized, coherent interactions.

Dynamic tone adaptation

The system adjusts vocal tone and delivery style based on the situation - a capability unique to voice that text-based LLMs cannot replicate.

Complex tool use

Agents autonomously call APIs and execute multi-step workflows: booking, claims processing, system adjustments, data retrieval - all within the conversation flow.

Enterprise-grade security

Built with strict adherence to data privacy standards (GDPR, SOC2), ensuring sensitive corporate data remains protected throughout every interaction.

Hybrid and edge deployment

Flexible architecture supports cloud, on-device, or hybrid deployment - enabling offline capability for environments where connectivity is limited or latency-critical.

Every conversation is a transaction

In-vehicle voice assistant

Voice agents embedded in vehicle infotainment systems that control settings, provide navigation assistance, and access vehicle diagnostics - running on-device for low latency and offline reliability.

Crew and passenger support

Voice-enabled systems for crew task management, passenger service inquiries, and real-time operational updates — deployed on edge devices where connectivity is limited.

Customer service automation

AI voice agents handling account inquiries, claims processing, fraud alerts, and transaction support — integrated with core banking systems and compliant with financial regulations.

Intelligent contact center

Enterprise-wide voice automation replacing traditional IVR systems with context-aware agents that resolve issues end-to-end, escalate intelligently, and generate structured interaction data.


Insights on AI-driven legacy modernization

Learn how we found a way to migrate smarter.

Sybase migration to Azure: What nobody tells you before you start

If you’re running Sybase in 2026, you already know the uncomfortable truth: the platform still works, but the ecosystem around it is quietly contracting. SAP patches ASE, yet engineering investment, tooling innovation, and community momentum are all flowing toward HANA and the cloud. ASE gets maintenance. Everything else moves on.

For most IT managers and DBA teams, the question is no longer whether to migrate. It’s how to do it without breaking things that have run reliably for 20 years.

The migration is bigger than “move the database”

When leadership hears “database migration”, they picture moving tables. What’s actually in a Sybase estate looks more like this:


- Database logic - Business logic written in T-SQL or Watcom SQL (SQL Anywhere), deeply embedded across stored procedures, functions, and other database structures, is the largest workstream.

- Connected applications - Every app that queries the data, plus other Sybase products such as IQ and PowerBuilder. Each carries a distinct risk profile and should be scoped independently.

- Integration layer - CIS federation, proxy tables, driver stacks, ETL pipelines, BI connections: the glue between systems, frequently undocumented.

- Sync and replication - Replication Server is its own infrastructure layer; SQL Anywhere adds niche sync tools like SQL Remote and MobiLink. None of it has a modern equivalent - this requires redesign, not conversion.

- Operations and monitoring - Scheduled jobs, alert rules, maintenance, performance monitoring, and backup strategies built on sp_sysmon, mon* tables, Backup Server, and dbcc. The knowledge transfers; the implementation doesn't.

- In-database services - Extended stored procedures, encryption, LOB handling, and in some estates SOAP endpoints or Java-in-DB. Most need external replacements on the target platform.

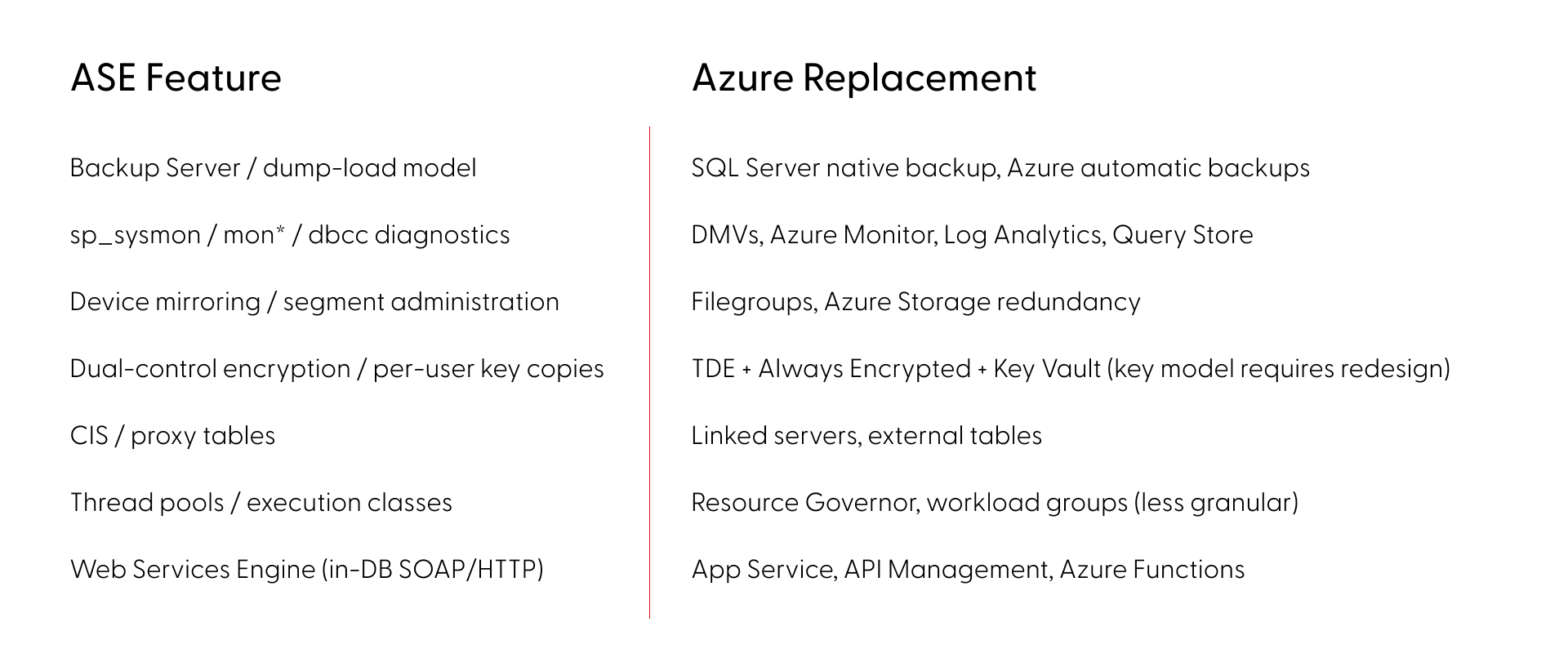

Where Sybase ASE migrations to Azure break

The most dangerous category isn’t missing features - it’s features that look identical but behave differently across platforms. ASE and SQL Server share a T-SQL lineage, which creates a false sense of safety. The syntax compiles - but the runtime behavior diverges in ways that pass testing and surface under production load. Here are some of the most common examples:

- Transactions: ASE's chained mode and nested transaction semantics differ from both SQL Server and Oracle. Error handling, rollback behavior, and transaction scoping each work differently across all three platforms.

- Identity: ASE isolates @@identity inside stored procedures and triggers. SQL Server's @@identity leaks across scopes, potentially returning wrong values from nested calls.

- Locking: ASE's per-table locking schemes (allpages, datapages, datarows) and lock promotion thresholds have no equivalent on SQL Server or Oracle. Concurrency patterns tuned for ASE risk deadlocks post-cutover.

- LOB access: Data types map cleanly (unitext to nvarchar(max) or NCLOB). The pointer-based access model (readtext, writetext, textptr) does not and must be rewritten.

- NULLs: In Oracle, an empty string is NULL. Code checking for empty strings silently returns wrong results without any error.

- Collation: ASE sets sort order at server level only and defaults to case-sensitive binary. SQL Server and Oracle support multiple collation levels with different defaults, changing JOIN cardinality, GROUP BY grouping, and UNIQUE constraint behavior.
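The collation point is easy to reproduce in miniature. The sketch below uses SQLite from Python's standard library purely as a stand-in (it is not ASE, SQL Server, or Oracle): its `BINARY` collation plays the role of a case-sensitive binary sort order, and `NOCASE` the role of a case-insensitive default. The `customers` table and its values are made up for illustration.

```python
import sqlite3

def count_groups(collation: str) -> int:
    """Count GROUP BY groups for the same rows under a given SQLite collation."""
    if collation not in ("BINARY", "NOCASE"):  # whitelist: the name is interpolated into SQL
        raise ValueError(f"unsupported collation: {collation}")
    conn = sqlite3.connect(":memory:")
    try:
        conn.execute("CREATE TABLE customers (name TEXT)")
        conn.executemany(
            "INSERT INTO customers (name) VALUES (?)",
            [("Smith",), ("SMITH",), ("smith",), ("Jones",)],
        )
        # One result row per group, so the number of rows is the number of groups
        rows = conn.execute(
            f"SELECT COUNT(*) FROM customers GROUP BY name COLLATE {collation}"
        ).fetchall()
        return len(rows)
    finally:
        conn.close()

binary_groups = count_groups("BINARY")  # case-sensitive: each spelling is its own group
nocase_groups = count_groups("NOCASE")  # case-insensitive: the three spellings merge
print(binary_groups, nocase_groups)
```

The same rows and the same query produce a different number of groups, and nothing fails or warns. A report that relied on case-sensitive grouping would simply return different totals after cutover.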

Beyond syntax, cross-database patterns compound the problem: USE, db..object references, and CIS passthrough are everywhere in ASE estates and break the moment the engine changes. Migration tools like Microsoft's SSMA handle syntax conversion, but they don't detect behavioral divergence. The dangerous gaps aren't in what fails to convert - they're in what converts cleanly but runs differently.
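One way to make such constructs visible early is a simple static scan of the procedure source. The following is a minimal sketch, not a description of how SSMA or any other tool works: the pattern list is a hypothetical starter set drawn only from the constructs named above, and plain regex matching will produce false positives (for example inside comments or string literals).

```python
import re
from collections import Counter

# Hypothetical starter patterns, based only on constructs named in this article.
RISK_PATTERNS = {
    "identity_scope": re.compile(r"@@identity\b", re.IGNORECASE),
    "lob_pointer_access": re.compile(r"\b(?:readtext|writetext|textptr)\b", re.IGNORECASE),
    "chained_mode": re.compile(r"\bset\s+chained\b", re.IGNORECASE),
    "cross_db_reference": re.compile(r"\b\w+\.\.\w+"),
    "use_statement": re.compile(r"^[ \t]*use\s+\w+", re.IGNORECASE | re.MULTILINE),
}

def scan_sql(source: str) -> Counter:
    """Count occurrences of each behavior-sensitive construct in a SQL source string."""
    hits = Counter()
    for label, pattern in RISK_PATTERNS.items():
        count = len(pattern.findall(source))
        if count:
            hits[label] = count
    return hits

# Made-up procedure text used only to exercise the scanner
sample = """
use sales
create procedure add_order @cust int as
begin
    set chained off
    insert into orders (cust_id) values (@cust)
    select @@identity
    select name from archive..customers where id = @cust
end
"""

report = scan_sql(sample)
for label, count in sorted(report.items()):
    print(f"{label}: {count}")
```

A scan like this only locates candidates for review. Deciding whether a given `@@identity` or `set chained` actually changes behavior on the target still requires analysis of the surrounding code.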

The actual risk picture (by the numbers)

A full feature mapping of ASE (versions 15 and 16) against SQL Server and Oracle on Azure across all deployment tiers gives a clearer picture (based on Grape Up G.Tx internal analysis across enterprise migration assessments):

- ~90% of ASE features have a clear migration path - low or medium risk

- SQL Server carries ~20% fewer high-risk items than Oracle across every ASE version - the shared T-SQL lineage makes a measurable difference

- PowerBuilder pushes the high-risk share to ~19% and should be scoped and phased independently

In ASE alone, roughly 30 features have no direct equivalent or workaround. Every one of them has a modern replacement approach on Azure, although some require significant architectural redesign rather than direct substitution.

SQL Server or Oracle on Azure - how to choose

The right target depends on what’s actually in your codebase.

SQL Server has the shorter path for most ASE estates. The T-SQL lineage reduces rewrite volume, and the platform carries fewer high-risk items across the board. Thirty years of divergence still mean real work, particularly around transaction semantics and locking behavior.

Oracle carries higher effort by default - PL/SQL vs. T-SQL is a language rewrite, NULL handling differs, and there is no direct replacement for ASE’s nested transaction rollback semantics.

Deployment tier matters too. Azure VM, Managed Instance, and SQL Database each involve different trade-offs on compatibility, operational overhead, and cost. The right answer depends on your specific feature usage.

The operational risk of waiting

The engineers who know the platform deeply - who understand the undocumented behaviors, the operational quirks, the edge cases in the locking model - are retiring. Every year that institutional knowledge walks out the door, the migration effort grows.

Starting your Sybase migration to Azure: assessment before assumptions

A reliable migration starts with knowing exactly what you have. That means automated discovery across your live codebase - versions, features, dependencies, behavioral edge cases - not a manual audit based on what the team remembers.

This is what Grape Up’s G.Tx platform does in the assessment phase. G.Tx runs automated inventory against your environment, maps features against the target platform, identifies behavioral differences that won’t surface in standard testing, and produces a high-level risk report. The same platform then powers execution - code conversion, schema migration, test generation, and validation - so the assessment and the migration run on a single consistent picture of your estate, not on handover documents.

The engagement runs in four phases. Each ends with a deliverable and a client sign-off before the next begins:

- Assessment (1–2 weeks) - free, no commitment. Automated discovery of your system, delivered as a risk report with a next steps recommendation.

- Feasibility (2–4 weeks) - full migration plan with identified blockers, mitigations, and timeline.

- Proof of Concept - a representative subset of your codebase migrated on real data, against agreed acceptance criteria.

- Scale - full migration, AI-accelerated, with phased cutover and operational handover.

You control the pace. Nothing moves to the next phase without your approval.

Frequently Asked Questions: Sybase Migration to Azure

Is migration to another SQL database the only option?

For most estates, a like-for-like migration is the most pragmatic path — it preserves existing logic and minimizes rewrite scope. But depending on your goals and architectural dependencies, a full redesign may be the better long-term investment. This means decomposing the monolithic database into independent components that communicate with each other rather than relying on shared database logic. The result is a more flexible, extensible, and maintainable architecture that is no longer constrained by the boundaries of a single database engine. The best approach can be decided during the Feasibility phase.

What is the best migration target for Sybase ASE - SQL Server or Oracle on Azure?

SQL Server on Azure is the recommended target for most Sybase ASE estates. The shared T-SQL lineage reduces rewrite volume and lowers the share of high-risk migration items by approximately 20% compared to Oracle. Oracle remains viable for estates where PL/SQL integration or Oracle-specific features are already part of the architecture, but it carries higher baseline effort. The final choice depends on your codebase - a G.Tx assessment will map your specific feature usage against both targets before you commit.

How long does a Sybase migration to Azure take?

Timeline depends on estate size and complexity, but the structured phases give reliable checkpoints: Assessment runs 1–2 weeks, Feasibility 2–4 weeks, Proof of Concept varies by scope, and the full-scale migration is planned during Feasibility. The phased approach is designed to migrate and validate the most business-critical functionality first, progressively offloading the original system only as each phase proves stable on the target platform. Feasibility analysis is the most reliable way to get an accurate estimate for your specific environment.

What are the biggest risks in a Sybase ASE migration?

The highest-risk category is not missing features - it's behavioral divergence: code that converts cleanly but runs differently in production. Transaction semantics, identity scoping, locking behavior, NULL handling in Oracle, and collation defaults are examples of gaps that may not be caught in testing and only surface under real load. Standard migration tools like SSMA automate schema conversion but are not designed to detect behavioral differences. Automated analysis of your codebase can surface these discrepancies early, making sure they never reach production.

Is Sybase ASE still supported in 2026?

SAP ASE 16.0 reached End of Mainstream Maintenance on December 31, 2025, meaning SAP no longer provides new security patches or fixes for this version. ASE 16.1 retains mainstream support until December 31, 2030, giving organizations on that version more runway, but there are no new ASE versions on SAP's roadmap. New capabilities, cloud-native features, and tooling investment are being directed at HANA and cloud products. The surrounding ecosystem is contracting in measurable ways. The practical risk is not that ASE will stop working, but that maintaining it becomes increasingly expensive as the specialist talent pool shrinks, integration tooling is deprecated, and the burden of filling those gaps falls on internal teams — as unpatched vulnerabilities quietly accumulate.

How do Sybase ASE stored procedures migrate to SQL Server?

ASE stored procedures are written in T-SQL, which shares a lineage with SQL Server T-SQL - but decades of platform divergence mean direct conversion is rarely clean. Syntax differences are mostly handled by automated tools. The harder problems are behavioral: code that converts cleanly can still run differently in production, and standard conversion tools are not designed to catch them. Stored procedures rarely exist in isolation — understanding their true migration scope requires analysis in the context of the full system.

What does a Sybase migration assessment include?

An initial assessment covers automated discovery of a representative part of your system — architectural overview, feature usage, stored procedures, connected applications mapped against your target platform. The result is a report covering system health (including security findings), AI transformation feasibility, and top risks ranked by severity and impact — identifying behavioral differences that standard tools miss, with concrete next steps and PoC proposals. Grape Up’s G.Tx Assessment runs in 1–2 weeks, is free with no commitment, and becomes the foundation for all subsequent migration phases.

Challenges of the legacy migration process and best practices to mitigate them

Legacy software is the backbone of many organizations, but as technology advances, these systems can become more of a burden than a benefit. Migrating from a legacy system to a modern solution is a daunting task fraught with challenges, from grappling with outdated code and conflicting stakeholder interests to managing dependencies on third-party vendors and ensuring compliance with stringent regulatory standards.

However, with the right strategies and leveraging advanced technologies like Generative AI, these challenges can be effectively mitigated.

Challenge #1: Limited knowledge of the legacy solution

The average lifespan of business software can vary widely depending on several factors, such as the type of software or the industry it serves. Nevertheless, whether the software is 5 or 25 years old, it is quite possible that its creators and subject matter experts are no longer accessible (or barely remember what they built and how it really works), the documentation is incomplete, the code is messy, and the technology was forgotten a long time ago.

Lack of knowledge of the legacy solution not only blocks its further development and maintenance but also negatively affects its migration – it significantly slows down the analysis and replacement process.

Mitigation:

The only way to understand what kind of functionality, processes and dependencies are covered by the legacy software and what really needs to get migrated is in-depth analysis. An extensive discovery phase initiating every migration project should cover:

- interviews with the key users and knowledge keepers,

- observations of the employees and daily operations performed within the system,

- study of all the available documentation and resources,

- source code examination.

The discovery phase, although long (and boring!), demanding, and very costly, is crucial for the migration project’s success. Therefore, it is not recommended to give in to the temptation to take any shortcuts there.

At Grape Up, we do not. We make sure we learn the legacy software in detail, optimizing the analytical efforts at the same time. We support the discovery process by leveraging Generative AI tools. They help us to understand the legacy spaghetti code, forgotten purpose, dependencies, and limitations. GenAI enables us to make use of existing incomplete documentation or to go through technologies that nobody has expertise in anymore. This approach significantly speeds the discovery phase up, making it smoother and more efficient.

Challenge #2: Blurry idea of the target solution & conflicting interests

Unfortunately, understanding the legacy software and having a complete idea of the target replacement are two separate things. A decision to build a new solution, especially in a corporate environment, usually encourages multiple stakeholders (representing different groups of interests) to promote their visions and ideas. Often conflicting, to be precise.

This nonlinear stream of contradicting requirements leads to an uncontrollable growth of the product backlog, which becomes extremely difficult to manage and prioritize. In consequence, efficient decision-making (essential for the product’s success) is barely possible.

Mitigation:

A strong Product Management community with a single product leader - empowered to make decisions and respected by the entire organization – is the key factor here. If combined with a matching delivery model (which may vary depending on a product & project specifics), it sets the goals and frames for the mission and guides its crew.

For huge legacy migration projects with a blurry scope, requiring constant validation and prioritization, an Agile-based, continuous discovery & delivery process is the only possible way to go. With a flexible product roadmap (adjusted on the fly), both creative and development teams work simultaneously, and regular feedback loops are established.

High pressure from the stakeholders always makes the Product Leader’s job difficult. Bold scope decisions become easier when an MVP/MDP (Minimum Viable / Desirable Product) approach and the MoSCoW prioritization technique (must-have, should-have, could-have, and won't-have, or will not have right now) are in place.
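As a rough illustration of how MoSCoW ordering can drive an MVP cut, the sketch below sorts a backlog by category and fills a fixed capacity. The backlog items, effort points, and the greedy selection rule are all hypothetical; real prioritization also has to account for dependencies and stakeholder judgment.

```python
from dataclasses import dataclass

# MoSCoW categories in priority order; "wont" items are never selected.
MOSCOW_ORDER = {"must": 0, "should": 1, "could": 2, "wont": 3}

@dataclass(frozen=True)
class BacklogItem:
    name: str
    category: str  # one of the MOSCOW_ORDER keys
    effort: int    # hypothetical effort points

def select_scope(backlog: list[BacklogItem], capacity: int) -> list[BacklogItem]:
    """Greedily pick items in MoSCoW order until the capacity is used up."""
    selected, remaining = [], capacity
    # sorted() is stable, so items keep their backlog order within a category
    for item in sorted(backlog, key=lambda i: MOSCOW_ORDER[i.category]):
        if item.category == "wont":
            break  # everything after this point is explicitly out of scope
        if item.effort <= remaining:
            selected.append(item)
            remaining -= item.effort
    return selected

backlog = [
    BacklogItem("Export to PDF", "could", 3),
    BacklogItem("User login", "must", 5),
    BacklogItem("Audit trail", "should", 8),
    BacklogItem("Order entry", "must", 8),
    BacklogItem("Legacy fax gateway", "wont", 13),
]

mvp = select_scope(backlog, capacity=20)
print([item.name for item in mvp])
```

With a capacity of 20 points, both must-haves fit, the should-have no longer does, and a small could-have fills the remaining room. Whether to allow that kind of backfilling is itself a governance decision.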

At Grape Up, we assist our clients with establishing and maintaining efficient product & project governance, supporting the in-house management team with our experienced consultants such as Business Analysts, Scrum Masters, Project Managers, or Proxy Product Owners.

Challenge #3: Strategic decisions impacting the future

Migrating the legacy software gives the organization a unique opportunity to sunset outdated technologies, remove all the infrastructural pain points, reach out for modern solutions, and sketch a completely new architecture.

However, these are very heavy decisions. They must not only address the current needs but also be adaptable to future growth. Wrong choices can result in technical debt, forcing another costly migration – much sooner than planned.

Mitigation:

A careful evaluation of the current and future needs is a good starting point for drafting the first technical roadmap and architecture. Conducting a SWOT analysis (Strengths, Weaknesses, Opportunities, Threats) for potential technologies and infrastructural choices provides a balanced view, helping to identify the most suitable options that align with the organization's long-term plan. For Grape Up, one of the key aspects of such an analysis is always industry trends.

Another crucial factor that supports this difficult decision-making process is maintaining technical documentation through Architectural Decision Records (ADRs). ADRs capture the rationale behind key decisions, ensuring that all stakeholders understand the choices made regarding technologies, frameworks, or architectures. This documentation serves as a valuable reference for future decisions and discussions, helping to avoid repeating past mistakes or unnecessary changes (e.g. when a new architect joins the team and pushes for their own technical preferences).

Challenge #4: Dependencies and legacy 3rd parties

When migrating from a legacy system, one of the significant challenges is managing dependencies on the numerous other applications and services that are integrated with the old solution and need to remain connected to the new one. Many of these are provided by third-party vendors that may not be willing or able to respond quickly to the project’s needs and adapt to changes, posing a significant risk to the migration process. Unfortunately, some of the dependencies are likely to be hidden and spotted too late, affecting the project’s budget and timeline.

Mitigation:

To mitigate this risk, it's essential to establish strong governance over third-party relationships before the project really begins. This includes forming solid partnerships and ensuring that clear contracts are in place, detailing the rules of cooperation and responsibilities. Prioritizing demands related to third-party integrations (such as API modifications, providing test environments, SLA, etc.), testing the connections early, and building time buffers into the migration plan are also crucial steps to reduce the impact of potential delays or issues.

Furthermore, leveraging Generative AI, which Grape Up does when migrating the legacy solution, can be a powerful tool in identifying and analyzing the complexities of these dependencies. Our consultants can also help to spot potential risks and suggest strategies to minimize disruptions, ensuring that third-party systems continue to function seamlessly during and after the migration.

Challenge #5: Lack of experience and sufficient resources

A legacy migration requires expertise and resources that most organizations lack internally. It is 100% natural. These kinds of tasks occur rarely; therefore, in most cases, owning a huge in-house IT department would be irrational.

Without prior experience in legacy migrations, internal teams may struggle with project initiation; for that reason, external support becomes necessary. Unfortunately, quite often, the involvement of vendors and contractors results in new challenges for the company by increasing its vulnerability (e.g., becoming dependent on externals, having data protection issues, etc.).

Mitigation:

To boost insufficient internal capabilities, it's essential to partner with experienced and trusted vendors who have a proven track record in legacy migrations. Their expertise can help navigate the complexities of the process while ensuring best practices are followed.

However, it's recommended to maintain a balance between internal and external resources to keep control over the project and avoid over-reliance on external parties. Involving multiple vendors can diversify the risk and prevent dependency on a single provider.

By leveraging Generative AI, Grape Up manages to optimize resource use, reducing the amount of manual work that consultants and developers do when migrating the legacy software. With a smaller external headcount involved, it is much easier for organizations to manage their projects and keep a healthy balance between their own resources and their partners.

Challenge #6: Budget and time pressure

Because of their size, complexity, and importance to the business, legacy migration projects almost always face budget constraints and time pressure. Resources are typically insufficient to cover all the requirements (that keep on growing), unexpected expenses (that always pop up), and the need to meet hard deadlines. These pressures can result in compromised quality, incomplete migrations, or even the entire project’s failure if not managed effectively.

Mitigation:

These are further challenges where strong governance and effective product ownership help. Implementing an iterative approach with a focus on delivering an MVP (Minimum Viable Product) or MDP (Minimum Desirable Product) can help prioritize essential features and manage scope within the available budget and time.

For tracking convenience, it is useful to budget each feature or part of the system separately. It’s also important to build realistic time and financial buffers and continuously update estimates as the project progresses to account for unforeseen issues. Several quick, good-enough estimation methods (often called “magic estimation”), such as silent grouping, can serve that purpose.

As stated before, at Grape Up, we use Generative AI to reduce the workload on teams by analyzing the old solution and generating significant parts of the new one automatically. This helps to keep the project on track, even under tight budget and time constraints.

Challenge #7: Demanding validation process

A critical but often overlooked aspect of legacy migration is ensuring the new system meets not only all the business demands but also compliance, security, performance, and accessibility requirements. What if some of the implemented features turn out to be illegal? Or the new system lets only a few concurrent users log in?

Without proper planning and continuous validation, these non-functional requirements can become major issues shortly before or after the release, putting the entire project at risk.

Mitigation:

Implementation of comprehensive validation, monitoring, and testing strategies from the project's early stages is a must. This should encompass both functional and non-functional requirements to ensure all aspects of the system are covered.

Efficient validation processes must not be a one-time activity but rather a regular occurrence. It also needs to involve a broad range of stakeholders and experts, such as:

- representatives of different user groups (to verify if the system covers all the critical business functions and is adjusted to their specific needs – e.g. accessibility-related),

- the legal department (to examine whether all the planned features are legally compliant),

- quality assurance experts (to continuously perform all the necessary tests, including security and performance testing).

Prioritizing non-functional requirements, such as performance and security, is essential to prevent potential issues from undermining the project’s success. For each legacy migration, there are also individual, very project-specific dimensions of validation. At Grape Up, during the discovery phase our analysts empowered by GenAI take their time to recognize all the critical aspects of the new solution’s quality, proposing the right thresholds, testing tools, and validation methods.

Challenge #8: Data migration & rollout strategy

Migrating data from a legacy system is one of the most challenging tasks of a migration project, particularly when dealing with vast amounts of historical data accumulated over many years. It is complex and costly, requiring meticulous planning to avoid data loss, corruption, or inconsistency.

Additionally, the release of the new system can have a significant impact on customers, especially if not handled smoothly. The risk of encountering unforeseen issues during the rollout phase is high, which can lead to extended downtime, customer dissatisfaction, and a prolonged stabilization period.

Mitigation:

Firstly, it is essential to establish comprehensive data migration and rollout strategies early in the project. Perhaps migrating all historical data is not necessary? Selective migration can significantly reduce the complexity, cost, and time involved.
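A selective migration can be as simple as a cutoff rule applied during the copy, followed by a count check. The sketch below uses two in-memory SQLite databases as stand-ins for the legacy and target systems; the `orders` table, its columns, and the cutoff date are hypothetical.

```python
import sqlite3

def migrate_recent_orders(source: sqlite3.Connection,
                          target: sqlite3.Connection,
                          cutoff: str) -> int:
    """Copy orders created on or after `cutoff` (ISO date) and return the row count."""
    rows = source.execute(
        "SELECT id, created_on, amount FROM orders WHERE created_on >= ?",
        (cutoff,),
    ).fetchall()
    with target:  # commits on success, rolls back if an insert fails
        target.executemany(
            "INSERT INTO orders (id, created_on, amount) VALUES (?, ?, ?)", rows
        )
    # Verify the copy; this check assumes the target table was empty beforehand
    copied = target.execute("SELECT COUNT(*) FROM orders").fetchone()[0]
    if copied != len(rows):
        raise RuntimeError(f"expected {len(rows)} rows, found {copied}")
    return copied

SCHEMA = "CREATE TABLE orders (id INTEGER PRIMARY KEY, created_on TEXT, amount REAL)"
legacy, modern = sqlite3.connect(":memory:"), sqlite3.connect(":memory:")
legacy.execute(SCHEMA)
modern.execute(SCHEMA)
legacy.executemany(
    "INSERT INTO orders VALUES (?, ?, ?)",
    [(1, "2009-03-14", 120.0), (2, "2018-07-01", 80.5), (3, "2023-11-30", 42.0)],
)

migrated = migrate_recent_orders(legacy, modern, cutoff="2018-01-01")
print(migrated)
```

In practice the cutoff is a business and legal decision (retention rules, audit requirements), and the rows left behind usually need an archive rather than deletion.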

A base plan for the rollout is equally important to minimize customer impact. This includes careful scheduling of releases, thorough testing in staging environments that closely mimic production, and phased rollouts that allow for gradual transition rather than a big-bang approach.
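One common way to implement a phased rollout is deterministic percentage-based routing. The sketch below is an assumption about how such routing could look, not a description of a specific product; the function name and user IDs are hypothetical. Hashing the user ID keeps each user's assignment stable, so raising the percentage only ever adds users to the new system.

```python
import hashlib

def routes_to_new_system(user_id: str, rollout_percent: int) -> bool:
    """Return True if this user falls inside the current rollout percentage."""
    if not 0 <= rollout_percent <= 100:
        raise ValueError("rollout_percent must be between 0 and 100")
    digest = hashlib.sha256(user_id.encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:4], "big") % 100  # stable bucket in 0..99
    return bucket < rollout_percent

users = [f"user-{n}" for n in range(1000)]
at_10 = {u for u in users if routes_to_new_system(u, 10)}
at_50 = {u for u in users if routes_to_new_system(u, 50)}
print(len(at_10), len(at_50))
```

Because the bucket depends only on the user ID, everyone routed at 10% is still routed at 50%, and setting the percentage back to 0 acts as an immediate rollback switch.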

At Grape Up, we strongly recommend investing in Continuous Integration and Continuous Delivery (CI/CD) pipelines that can streamline the release process, enabling automated testing, deployment, and quick iterations. Test automation ensures that any changes or fixes (that are always numerous when rolling out) are rapidly validated, reducing the risk of introducing new issues during subsequent releases.

Post-release, a hypercare phase is crucial to provide dedicated support and rapid response to any problems that arise. It involves close monitoring of the system’s performance, user feedback, and quick deployment of fixes as needed. By having a hypercare plan in place, the organization can ensure that any issues are addressed promptly, reducing the overall impact on customers and business operations.

Summary

Legacy migration is undoubtedly a complex and challenging process, but with careful planning, strong governance, and the right blend of internal and external expertise, it can be navigated successfully. By prioritizing critical aspects such as in-depth analysis, strategic decision-making, and robust validation processes, organizations can mitigate the risks involved and avoid common pitfalls.

Managing budgets and expenses effectively is crucial, as unforeseen costs can quickly escalate. Leveraging advanced technologies like Generative AI not only enhances the efficiency and accuracy of the migration process but also helps control costs by streamlining tasks and reducing the overall burden on resources.

At Grape Up, we understand the intricacies of legacy migration and are committed to helping our clients transition smoothly to modern solutions that support future growth and innovation. With the right strategies in place, your organization can move beyond the limitations of legacy systems, achieving a successful migration within budget while embracing a future of improved performance, scalability, and flexibility.

Modernizing legacy applications with generative AI: Lessons from R&D Projects

As digital transformation accelerates, modernizing legacy applications has become essential for businesses to stay competitive. The application modernization market size, valued at USD 21.32 billion in 2023, is projected to reach USD 74.63 billion by 2031 (1), reflecting the growing importance of updating outdated systems.

With 94% of business executives viewing AI as key to future success and 76% increasing their investments in Generative AI due to its proven value (2), it's clear that AI is becoming a critical driver of innovation. One key area where AI is making a significant impact is application modernization - an essential step for businesses aiming to improve scalability, performance, and efficiency.

Based on two projects conducted by our R&D team, we've seen firsthand how Generative AI can streamline the process of rewriting legacy systems.

Let’s start by discussing the importance of rewriting legacy systems and how GenAI-driven solutions are transforming this process.

Why re-write applications?

In the rapidly evolving software development landscape, keeping applications up-to-date with the latest programming languages and technologies is crucial. Rewriting applications to new languages and frameworks can significantly enhance performance, security, and maintainability. However, this process is often labor-intensive and prone to human error.

Generative AI offers a transformative approach to code translation by:

- leveraging advanced machine learning models to automate the rewriting process

- ensuring consistency and efficiency

- accelerating modernization of legacy systems

- facilitating cross-platform development and code refactoring

As businesses strive to stay competitive, adopting Generative AI for code translation becomes increasingly important. It enables them to harness the full potential of modern technologies while minimizing risks associated with manual rewrites.

Legacy systems, often built on outdated technologies, pose significant challenges in terms of maintenance and scalability. Modernizing legacy applications with Generative AI provides a viable solution for rewriting these systems into modern programming languages, thereby extending their lifespan and improving their integration with contemporary software ecosystems.

This automated approach not only preserves core functionality but also enhances performance and security, making it easier for organizations to adapt to changing technological landscapes without the need for extensive manual intervention.

Why Generative AI?

Generative AI offers a powerful solution for rewriting applications, providing several key benefits that streamline the modernization process.

Modernizing legacy applications with Generative AI proves especially beneficial in this context for the following reasons:

- Identifying relationships and business rules: Generative AI can analyze legacy code to uncover complex dependencies and embedded business rules, ensuring critical functionalities are preserved and enhanced in the new system.

- Enhanced accuracy: Automating tasks like code analysis and documentation, Generative AI reduces human errors and ensures precise translation of legacy functionalities, resulting in a more reliable application.

- Reduced development time and cost: Automation significantly cuts down the time and resources needed for rewriting systems. Faster development cycles and fewer human hours required for coding and testing lower the overall project cost.

- Improved security: Generative AI aids in implementing advanced security measures in the new system, reducing the risk of threats and identifying vulnerabilities, which is crucial for modern applications.

- Performance optimization: Generative AI enables the creation of optimized code from the start, integrating advanced algorithms that improve efficiency and adaptability, often missing in older systems.

By leveraging Generative AI, organizations can achieve a smooth transition to modern system architectures, ensuring substantial returns in performance, scalability, and maintenance costs.

In this article, we will explore:

- the use of Generative AI for rewriting a simple CRUD application

- the use of Generative AI for rewriting a microservice-based application

- the challenges associated with using Generative AI

For these case studies, we used OpenAI's ChatGPT-4 with a context of 32k tokens to automate the rewriting process, demonstrating its advanced capabilities in understanding and generating code across different application architectures.

We'll also present the benefits of using a data analytics platform designed by Grape Up's experts. The platform utilizes Generative AI and neural graphs to enhance its data analysis capabilities, particularly in data integration, analytics, visualization, and insights automation.

Project 1: Simple CRUD application

The source project was a simple CRUD application written with .NET Core as the framework, Entity Framework Core as the ORM, and SQL Server as the relational database. The target project contains a backend application created using Java 17 and Spring Boot 3.

Steps taken to conclude the project

Rewriting a simple CRUD application using Generative AI involves a series of methodical steps to ensure a smooth transition from the old codebase to the new one. Below are the key actions undertaken during this process:

- initial architecture and data flow investigation - conducting a thorough analysis of the existing application's architecture and data flow.

- generating target application skeleton - creating the initial skeleton of the new application in the target language and framework.

- converting components - translating individual components from the original codebase to the new environment, ensuring that all CRUD operations were accurately replicated.

- generating tests - creating automated tests for the backend to ensure functionality and reliability.

Throughout each step, some manual intervention by developers was required to address code errors, compilation issues, and other problems encountered after using OpenAI's tools.

Initial architecture and data flow investigation

The first stage in rewriting a simple CRUD application using Generative AI is to conduct a thorough investigation of the existing architecture and data flow. This foundational step is crucial for understanding the current system's structure, dependencies, and business logic.

This involved:

- codebase analysis

- data flow mapping – from user inputs to database operations and back

- dependency identification

- business logic extraction – documenting the core business logic embedded within the application
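
Part of the dependency identification step can be scripted before any code is sent to a model. The sketch below lists the namespaces imported by a C# source file by matching plain `using` directives. It is an illustration only: the class name `UsingDirectiveScanner` is hypothetical, and the regular expression deliberately ignores aliases, `using static`, and `using` statements.

```java
import java.util.Set;
import java.util.TreeSet;
import java.util.regex.Matcher;
import java.util.regex.Pattern;

/** Hypothetical helper that lists the namespaces a C# source file imports. */
final class UsingDirectiveScanner {

    // Matches plain directives such as "using System.Linq;".
    // Aliases ("using X = Y;"), "using static" and using statements do not match.
    private static final Pattern USING_DIRECTIVE =
            Pattern.compile("^\\s*using\\s+([A-Za-z_][A-Za-z0-9_.]*)\\s*;", Pattern.MULTILINE);

    private UsingDirectiveScanner() {}

    /** Returns the imported namespaces in alphabetical order. */
    static Set<String> importedNamespaces(String csharpSource) {
        Set<String> namespaces = new TreeSet<>();
        Matcher matcher = USING_DIRECTIVE.matcher(csharpSource);
        while (matcher.find()) {
            namespaces.add(matcher.group(1));
        }
        return namespaces;
    }
}
```

Running this over every file in the source project gives a quick inventory of the libraries whose Java equivalents need to be researched.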

While OpenAI's ChatGPT-4 is powerful, it has some limitations when dealing with large inputs or generating comprehensive explanations of entire projects. For example:

- OpenAI couldn’t read files directly from the file system

- Inputting several project files at once often resulted in unclear or overly general outputs

However, OpenAI excels at explaining large pieces of code or individual components. This capability aids in understanding the responsibilities of different components and their data flows. Despite this, developers had to conduct detailed investigations and analyses manually to ensure a complete and accurate understanding of the existing system.
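
Since sending many files at once produced vague answers, one workaround is to split the project into smaller prompts that stay within a chosen token budget. The sketch below shows a greedy batching approach under loud assumptions: the class name `PromptBatcher` is hypothetical, and the estimate of roughly four characters per token is a crude heuristic, not a real tokenizer.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Map;

/** Hypothetical helper that groups source files into prompts that fit a token budget. */
final class PromptBatcher {

    // Rough heuristic, not a real tokenizer: about four characters per token.
    private static final int CHARS_PER_TOKEN = 4;

    private PromptBatcher() {}

    static int estimateTokens(String text) {
        return (text.length() + CHARS_PER_TOKEN - 1) / CHARS_PER_TOKEN;
    }

    /**
     * Greedily packs files, in the map's iteration order, into batches whose
     * estimated size stays within the budget. A file that exceeds the budget
     * on its own ends up in a batch by itself.
     */
    static List<List<String>> batchFileNames(Map<String, String> filesByName, int tokenBudget) {
        List<List<String>> batches = new ArrayList<>();
        List<String> current = new ArrayList<>();
        int used = 0;
        for (Map.Entry<String, String> file : filesByName.entrySet()) {
            int cost = estimateTokens(file.getValue());
            if (!current.isEmpty() && used + cost > tokenBudget) {
                batches.add(current);
                current = new ArrayList<>();
                used = 0;
            }
            current.add(file.getKey());
            used += cost;
        }
        if (!current.isEmpty()) {
            batches.add(current);
        }
        return batches;
    }
}
```

Passing an ordered map (for example a `LinkedHashMap` with a controller, its service, and its repository next to each other) keeps related components in the same prompt.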

This is the point at which we used our data analytics platform. In comparison to OpenAI, it focuses on data analysis. It's especially useful for analyzing data flows and project architecture, particularly thanks to its ability to process and visualize complex datasets. While it does not directly analyze source code, it can provide valuable insights into how data moves through a system and how different components interact.

Moreover, the platform excels at visualizing and analyzing data flows within your application. This can help identify inefficiencies, bottlenecks, and opportunities for optimization in the architecture.

Generating target application skeleton

Just as OpenAI could not analyze the entire project, it also failed to generate the skeleton of the target application, so the developer had to create it manually. To facilitate this, Spring Initializr was used with the following configuration:

- Java: 17

- Spring Boot: 3.2.2

- Gradle: 8.5

Attempts to query OpenAI for the necessary Spring dependencies faced challenges due to significant differences between dependencies for C# and Java projects. Consequently, all required dependencies were added manually.

Additionally, the project included a database setup. While OpenAI provided a series of steps for adding database configuration to a Spring Boot application, these steps needed to be verified and implemented manually.

Converting components

After setting up the backend, the next step involved converting all project files - Controllers, Services, and Data Access layers - from C# to Java Spring Boot using OpenAI.

The AI proved effective in converting endpoints and data access layers, producing accurate translations with only minor errors, such as misspelled function names or calls to non-existent functions.

In cases where non-existent functions were generated, OpenAI was able to create the function bodies based on prompts describing their intended functionality. Additionally, OpenAI efficiently generated documentation for classes and functions.

However, it faced challenges when converting components with extensive framework-specific code. Due to differences between frameworks in various languages, the AI sometimes lost context and produced unusable code.

Overall, OpenAI excelled at:

- converting data access components

- generating REST APIs

However, it struggled with:

- service-layer components

- framework-specific code where direct mapping between programming languages was not possible

Despite these limitations, OpenAI significantly accelerated the conversion process, although manual intervention was required to address specific issues and ensure high-quality code.
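
To make the shape of a converted data access component concrete, here is a minimal, framework-free sketch. It is not the project's code and not Spring Data: the `Product` record and `InMemoryProductRepository` class are hypothetical, and an in-memory map stands in for the database so the example runs on its own. It illustrates one idiom that translates cleanly between the two ecosystems: a C# lookup that may return null becomes a Java `Optional`.

```java
import java.util.ArrayList;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;
import java.util.Optional;

/** Hypothetical entity standing in for a class mapped by Entity Framework Core. */
record Product(long id, String name) {}

/** In-memory stand-in for a converted data access component; not Spring Data. */
final class InMemoryProductRepository {

    private final Map<Long, Product> rows = new LinkedHashMap<>();

    /** Inserts or updates a row, keyed by the product id. */
    Product save(Product product) {
        rows.put(product.id(), product);
        return product;
    }

    /** A lookup that may return null in C# is expressed as an Optional in Java. */
    Optional<Product> findById(long id) {
        return Optional.ofNullable(rows.get(id));
    }

    /** Returns a copy so callers cannot modify the stored rows. */
    List<Product> findAll() {
        return new ArrayList<>(rows.values());
    }

    /** Returns true when a row was actually removed. */
    boolean deleteById(long id) {
        return rows.remove(id) != null;
    }
}
```

In a real Spring Boot project the same four operations would typically come from a repository interface provided by the framework, which is exactly the kind of framework-specific mapping that needed manual review.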

Generating tests

Generating tests for the new code is a crucial step in ensuring the reliability and correctness of the rewritten application. This involves creating both unit tests and integration tests to validate individual components and their interactions within the system.

To create a new test, the entire component code was passed to OpenAI with the query: "Write Spring Boot test class for selected code."

OpenAI performed well at generating both integration tests and unit tests; however, there were some distinctions:

- For unit tests, OpenAI generated a new test for each if-clause in the method under test by default.

- For integration tests, only happy-path scenarios were generated with the given query.

- Error scenarios could also be generated by OpenAI, but these required more manual fixes due to a higher number of code issues.

When the test name was self-descriptive, OpenAI generated unit tests with fewer errors.
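
The sketch below illustrates what "one test per if-clause" with self-descriptive names looks like. It is written without a test framework so that it runs on its own, and everything in it is hypothetical: `ShippingCostCalculator`, its fee values, and the check names are invented for illustration and do not come from the project.

```java
/** Hypothetical method under test with two if-clauses and a fall-through case. */
final class ShippingCostCalculator {

    private ShippingCostCalculator() {}

    static int costInCents(int orderTotalInCents, boolean express) {
        if (orderTotalInCents < 0) {
            throw new IllegalArgumentException("orderTotalInCents must not be negative");
        }
        if (express) {
            return 1500;
        }
        return 500;
    }
}

/** One self-descriptively named check per branch of the method above. */
final class ShippingCostCalculatorChecks {

    private ShippingCostCalculatorChecks() {}

    static void rejectsNegativeOrderTotal() {
        try {
            ShippingCostCalculator.costInCents(-1, false);
        } catch (IllegalArgumentException expected) {
            return;
        }
        throw new AssertionError("expected IllegalArgumentException");
    }

    static void chargesExpressFeeForExpressDelivery() {
        expectEquals(1500, ShippingCostCalculator.costInCents(10_000, true));
    }

    static void chargesStandardFeeForRegularDelivery() {
        expectEquals(500, ShippingCostCalculator.costInCents(10_000, false));
    }

    /** Runs every check; throws AssertionError on the first failure. */
    static void runAll() {
        rejectsNegativeOrderTotal();
        chargesExpressFeeForExpressDelivery();
        chargesStandardFeeForRegularDelivery();
    }

    private static void expectEquals(int expected, int actual) {
        if (expected != actual) {
            throw new AssertionError("expected " + expected + " but was " + actual);
        }
    }
}
```

In the actual project the equivalent checks would be test methods in a Spring Boot test class; the naming pattern is the part that carries over.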

Project 2: Microservice-based application

As an example of a microservice-based application, we used a source project built with .NET Core as the framework, Entity Framework Core as the ORM, and a Command Query Responsibility Segregation (CQRS) approach for managing and querying entities. RabbitMQ was used as the message broker for the CQRS implementation, and EventStore was used to store events and entity objects. Each microservice could be built using Docker, with docker-compose managing the dependencies between microservices and running them together.

The target project includes:

- a microservice-based backend application created with Java 17 and Spring Boot 3

- a frontend application using the React framework

- Docker support for each microservice

- docker-compose to run all microservices at once

Project stages

Similarly to the CRUD application rewriting project, converting a microservice-based application using Generative AI requires a series of steps to ensure a seamless transition from the old codebase to the new one. Below are the key steps undertaken during this process:

- initial architecture and data flow investigation - conducting a thorough analysis of the existing application's architecture and data flow.

- rewriting backend microservices - selecting an appropriate framework for implementing CQRS in Java, setting up a microservice skeleton, and translating the core business logic from the original language to Java Spring Boot.

- generating a new frontend application - developing a new frontend application using React to communicate with the backend microservices via REST APIs.

- generating tests for the frontend application - creating unit tests and integration tests to validate its functionality and interactions with the backend.

- containerizing new applications - generating Docker files for each microservice and a docker-compose file to manage the deployment and orchestration of the entire application stack.

Throughout each step, developers were required to intervene manually to address code errors, compilation issues, and other problems encountered after using OpenAI's tools. This approach ensured that the new application retains the functionality and reliability of the original system while leveraging modern technologies and best practices.

Initial architecture and data flow investigation

The first step in converting a microservice-based application using Generative AI is to conduct a thorough investigation of the existing architecture and data flows. This foundational step is crucial for understanding:

- the system’s structure

- its dependencies

- interactions between microservices

Challenges with OpenAI

Similar to the process for a simple CRUD application, at the time, OpenAI struggled with larger inputs and failed to generate a comprehensive explanation of the entire project. Attempts to describe the project or its data flows were unsuccessful because inputting several project files at once often resulted in unclear and overly general outputs.

OpenAI’s strengths

Despite these limitations, OpenAI proved effective in explaining large pieces of code or individual components. This capability helped in understanding:

- the responsibilities of different components

- their respective data flows

Developers can create a comprehensive blueprint for the new application by thoroughly investigating the initial architecture and data flows. This step ensures that all critical aspects of the existing system are understood and accounted for, paving the way for a successful transition to a modern microservice-based architecture using Generative AI.

Again, our data analytics platform was used in project architecture analysis. By identifying integration points between different application components, the platform helps ensure that the new application maintains necessary connections and data exchanges.

It can also provide a comprehensive view of your current architecture, highlighting interactions between different modules and services. This aids in planning the new architecture for efficiency and scalability. Furthermore, the platform's analytics capabilities support identifying potential risks in the rewriting process.

Rewriting backend microservices

Rewriting the backend of a microservice-based application involves several intricate steps, especially when working with specific architectural patterns like CQRS (Command Query Responsibility Segregation) and event sourcing. The source C# project uses the CQRS approach, implemented with frameworks such as NServiceBus and Aggregates, which facilitate message handling and event sourcing in the .NET ecosystem.

Challenges with OpenAI

Unfortunately, OpenAI struggled with converting framework-specific logic from C# to Java. When asked to convert components using NServiceBus, OpenAI responded:

"The provided C# code is using NServiceBus, a service bus for .NET, to handle messages. In Java Spring Boot, we don't have an exact equivalent of NServiceBus, but here's how you might convert the given C# code to Java Spring Boot..."

However, the generated code did not adequately cover the CQRS approach or event-sourcing mechanisms.

Choosing Axon framework

Due to these limitations, developers needed to investigate suitable Java frameworks. After thorough research, the Axon Framework was selected, as it offers comprehensive support for:

- domain-driven design

- CQRS

- event sourcing

Moreover, Axon provides out-of-the-box solutions for message brokering and event handling and has a Spring Boot integration library, making it a popular choice for building Java microservices based on CQRS.

Converting microservices

Each microservice from the source project could be converted to Java Spring Boot using a systematic approach, similar to converting a simple CRUD application. The process included:

- analyzing the data flow within each microservice to understand interactions and dependencies

- using Spring Initializr to create the initial skeleton for each microservice

- translating the core business logic, API endpoints, and data access layers from C# to Java

- creating unit and integration tests to validate each microservice’s functionality

- setting up the event sourcing mechanism and CQRS using the Axon Framework, including configuring Axon components and repositories for event sourcing
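
For readers unfamiliar with the pattern, the sketch below shows the core mechanics of CQRS and event sourcing in plain Java. It does not use the Axon Framework API and is not the project's code: the account domain, the event names, and the classes `AccountAggregate` and `BalanceView` are all hypothetical. The write side validates commands and records events; the read side builds a separate view from those same events.

```java
import java.util.ArrayList;
import java.util.HashMap;
import java.util.List;
import java.util.Map;

/** Hypothetical domain events; the names are illustrative only. */
sealed interface AccountEvent permits AccountOpened, MoneyDeposited {}
record AccountOpened(String accountId) implements AccountEvent {}
record MoneyDeposited(String accountId, long amountInCents) implements AccountEvent {}

/** Write side: validates commands and records events instead of overwriting stored state. */
final class AccountAggregate {

    private final List<AccountEvent> uncommitted = new ArrayList<>();
    private String accountId;
    private long balanceInCents;

    /** Rebuilds an aggregate by replaying its event history. */
    static AccountAggregate fromHistory(List<AccountEvent> history) {
        AccountAggregate aggregate = new AccountAggregate();
        history.forEach(aggregate::apply);
        return aggregate;
    }

    void open(String id) {
        if (accountId != null) throw new IllegalStateException("account already opened");
        raise(new AccountOpened(id));
    }

    void deposit(long amountInCents) {
        if (accountId == null) throw new IllegalStateException("account not opened");
        if (amountInCents <= 0) throw new IllegalArgumentException("amount must be positive");
        raise(new MoneyDeposited(accountId, amountInCents));
    }

    long balanceInCents() { return balanceInCents; }

    List<AccountEvent> uncommittedEvents() { return List.copyOf(uncommitted); }

    private void raise(AccountEvent event) {
        apply(event);
        uncommitted.add(event);
    }

    private void apply(AccountEvent event) {
        if (event instanceof AccountOpened opened) {
            accountId = opened.accountId();
        } else if (event instanceof MoneyDeposited deposited) {
            balanceInCents += deposited.amountInCents();
        }
    }
}

/** Read side: a projection updated from events and queried independently of the aggregate. */
final class BalanceView {

    private final Map<String, Long> balances = new HashMap<>();

    void on(AccountEvent event) {
        if (event instanceof AccountOpened opened) {
            balances.put(opened.accountId(), 0L);
        } else if (event instanceof MoneyDeposited deposited) {
            balances.merge(deposited.accountId(), deposited.amountInCents(), Long::sum);
        }
    }

    long balanceOf(String accountId) {
        return balances.getOrDefault(accountId, 0L);
    }
}
```

A framework such as Axon supplies the parts this sketch leaves out, including command routing, event storage, and delivery of events to projections, which is why the framework choice had to be made before the business logic could be translated.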

Manual intervention

Due to the lack of direct mapping between the source project's CQRS framework and the Axon Framework, manual intervention was necessary. Developers had to implement framework-specific logic manually to ensure the new system retained the original's functionality and reliability.

Generating a new frontend application

The source project included a frontend component written using aspnetcore-https and aspnetcore-react libraries, allowing for the development of frontend components in both C# and React.

However, OpenAI struggled to convert this mixed codebase into a React-only application due to the extensive use of C#.

Consequently, it proved faster and more efficient to generate a new frontend application from scratch, leveraging the existing REST endpoints on the backend.

Similar to the process for a simple CRUD application, when prompted with “Generate React application which is calling a given endpoint”, OpenAI provided a series of steps to create a React application from a template and offered sample code for the frontend.

- OpenAI successfully generated React components for each endpoint.

- The CSS files from the source project were reusable in the new frontend to maintain the same styling of the web application.

- However, the overall structure and architecture of the frontend application remained the developer's responsibility.

Despite its capabilities, OpenAI-generated components often exhibited issues such as:

- mixing up code from different React versions, leading to code failures.

- infinite rendering loops.

Additionally, there were challenges related to CORS policy and web security:

- OpenAI could not resolve CORS issues autonomously but provided explanations and possible steps for configuring CORS policies on both the backend and frontend.

- It was unable to configure web security correctly.

- Moreover, since web security involves configurations on the frontend and multiple backend services, OpenAI could only suggest common patterns and approaches for handling these cases, which ultimately required manual intervention.
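
The decision a backend makes when applying a CORS policy can be sketched independently of any framework. The example below is an illustration under assumptions, not the project's configuration: the class name `CorsPolicy`, the allowed methods and headers, and any origin passed to it (such as a local React development server) are hypothetical. Only the response header names are standard.

```java
import java.util.LinkedHashMap;
import java.util.Map;
import java.util.Set;

/** Hypothetical helper that computes CORS response headers for a request origin. */
final class CorsPolicy {

    private final Set<String> allowedOrigins;

    CorsPolicy(Set<String> allowedOrigins) {
        this.allowedOrigins = Set.copyOf(allowedOrigins);
    }

    /** Returns the headers to add to the response; empty when the origin is not allowed. */
    Map<String, String> headersFor(String requestOrigin) {
        Map<String, String> headers = new LinkedHashMap<>();
        if (requestOrigin == null || !allowedOrigins.contains(requestOrigin)) {
            return headers;
        }
        // Echo the specific origin instead of "*" so the policy stays restrictive.
        headers.put("Access-Control-Allow-Origin", requestOrigin);
        headers.put("Access-Control-Allow-Methods", "GET, POST, PUT, DELETE, OPTIONS");
        headers.put("Access-Control-Allow-Headers", "Content-Type, Authorization");
        // Tells caches that the response differs per requesting origin.
        headers.put("Vary", "Origin");
        return headers;
    }
}
```

In a microservice setup every backend service that the browser calls directly needs a consistent policy, which is one reason this configuration could not be generated for a single file in isolation.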

Generating tests for the frontend application

Once the frontend components were completed, the next task was to generate tests for these components. OpenAI proved to be quite effective in this area. When provided with the component code, OpenAI could generate simple unit tests using the Jest library.

OpenAI was also capable of generating integration tests for the frontend application, which are crucial for verifying that different components work together as expected and that the application interacts correctly with backend services.

However, some manual intervention was required to fix issues in the generated test code. The common problems encountered included:

- mixing up code from different React versions, leading to code failures.

- dependency management conflicts, such as mixing up code from different test libraries.

Containerizing the new application

The source application contained Dockerfiles that built images for C# applications. OpenAI successfully converted these Dockerfiles to a new approach using Java 17, Spring Boot, and Gradle build tools by responding to the query:

"Could you convert selected code to run the same application but written in Java 17 Spring Boot with Gradle and Docker?"

Some manual updates, however, were needed to fix the actual jar name and file paths.

Once the React frontend application was implemented, OpenAI was able to generate a Dockerfile by responding to the query:

"How to dockerize a React application?"

Still, manual fixes were required to:

- replace paths to files and folders

- correct mistakes that emerged when generating multi-stage Dockerfiles

While OpenAI was effective in converting individual Dockerfiles, it struggled with writing docker-compose files due to a lack of context regarding all services and their dependencies.

For instance, some microservices depend on database services, and OpenAI could not fully understand these relationships. As a result, the docker-compose file required significant manual intervention.
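
The missing context is essentially a dependency graph between services. The sketch below shows how such a graph determines a valid start-up order, using a standard topological sort (Kahn's algorithm). It is illustrative only: the class name `ServiceStartOrder` and any service names passed to it are hypothetical, and this is not how docker-compose itself is implemented.

```java
import java.util.ArrayDeque;
import java.util.ArrayList;
import java.util.Deque;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;

/** Hypothetical helper that orders services so that every dependency starts first. */
final class ServiceStartOrder {

    private ServiceStartOrder() {}

    /** dependsOn maps each service to the services it requires; every service must be a key. */
    static List<String> resolve(Map<String, List<String>> dependsOn) {
        Map<String, Integer> remaining = new LinkedHashMap<>();
        Map<String, List<String>> dependents = new LinkedHashMap<>();
        for (String service : dependsOn.keySet()) {
            remaining.put(service, 0);
            dependents.put(service, new ArrayList<>());
        }
        for (Map.Entry<String, List<String>> entry : dependsOn.entrySet()) {
            for (String dependency : entry.getValue()) {
                if (!dependents.containsKey(dependency)) {
                    throw new IllegalArgumentException("Unknown service: " + dependency);
                }
                dependents.get(dependency).add(entry.getKey());
                remaining.merge(entry.getKey(), 1, Integer::sum);
            }
        }
        // Services with no unmet dependencies can start immediately.
        Deque<String> ready = new ArrayDeque<>();
        remaining.forEach((service, count) -> {
            if (count == 0) ready.add(service);
        });
        List<String> order = new ArrayList<>();
        while (!ready.isEmpty()) {
            String service = ready.remove();
            order.add(service);
            for (String dependent : dependents.get(service)) {
                if (remaining.merge(dependent, -1, Integer::sum) == 0) {
                    ready.add(dependent);
                }
            }
        }
        if (order.size() != dependsOn.size()) {
            throw new IllegalStateException("Circular dependency between services");
        }
        return order;
    }
}
```

Writing the dependencies down in this explicit form, for example which microservice needs which database or message broker, is the information a developer had to supply by hand when completing the docker-compose file.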

Conclusion

Modern tools like OpenAI's ChatGPT can significantly enhance software development productivity by automating various aspects of code writing and problem-solving. Large language models, such as those behind ChatGPT, can help generate large pieces of code, solve problems, and streamline certain tasks.

However, for complex projects based on microservices and specialized frameworks, developers still need to do considerable work manually, particularly in areas related to architecture, framework selection, and framework-specific code writing.

What Generative AI is good at:

- converting pieces of code from one language to another - Generative AI excels at translating individual code snippets between different programming languages, making it easier to migrate specific functionalities.

- generating large pieces of new code from scratch - OpenAI can generate substantial portions of new code, providing a solid foundation for further development.

- generating unit and integration tests - OpenAI is proficient in creating unit tests and integration tests, which are essential for validating the application's functionality and reliability.

- describing what code does - Generative AI can effectively explain the purpose and functionality of given code snippets, aiding in understanding and documentation.

- investigating code issues and proposing possible solutions - Generative AI can quickly analyze code issues and suggest potential fixes, speeding up the debugging process.

- containerizing applications - OpenAI can create Dockerfiles for containerizing applications, facilitating consistent deployment environments.

At the time of project implementation, Generative AI still had several limitations:

- OpenAI struggled to provide comprehensive descriptions of an application's overall architecture and data flow, which are crucial for understanding complex systems.

- It also had difficulty identifying equivalent frameworks when migrating applications, requiring developers to conduct manual research.

- Setting up the foundational structure for microservices and configuring databases were tasks that still required significant developer intervention.

- Additionally, OpenAI struggled with managing dependencies, configuring web security (including CORS policies), and establishing a proper project structure, often needing manual adjustments to ensure functionality.

Benefits of using the data analytics platform:

- data flow visualization: It provides detailed visualizations of data movement within applications, helping to map out critical pathways and dependencies that need attention during rewriting.

- architectural insights: The platform offers a comprehensive analysis of system architecture, identifying interactions between components to aid in designing an efficient new structure.

- integration mapping: It highlights integration points with other systems or components, ensuring that necessary integrations are maintained in the rewritten application.

- risk assessment: The platform's analytics capabilities help identify potential risks in the transition process, allowing for proactive management and mitigation.

By leveraging Generative AI's strengths and addressing its limitations through manual intervention, developers can achieve a more efficient and accurate transition to modern programming languages and technologies. For now, this hybrid approach to modernizing legacy applications with Generative AI is what ensures that the new application retains the functionality and reliability of the original system while benefiting from the advancements in modern software development practices.

It's worth remembering that Generative AI technologies are rapidly advancing, with improvements in processing capabilities. As Generative AI becomes more powerful, it is increasingly able to understand and manage complex project architectures and data flows. This evolution suggests that in the future, it will play a pivotal role in rewriting projects.


Sources:

- (1) https://www.verifiedmarketresearch.com/product/application-modernization-market/

- (2) https://www2.deloitte.com/content/dam/Deloitte/us/Documents/deloitte-analytics/us-ai-institute-state-of-ai-fifth-edition.pdf
