
Kubernetes as a solution to container orchestration

Containerization

Kubernetes has become almost synonymous with containerization. Containerization, also known as operating-system-level virtualization, provides the ability to run multiple isolated containers on the same kernel, that is, on the same operating system core that controls everything inside the system. It brings a lot of flexibility in managing application deployment.

Deploying a few containers is not a difficult task. It can be done with a simple tool for defining and running multi-container Docker applications, such as Docker Compose, or even manually via the command-line interface.

Challenges in the container environment

Since the container ecosystem moves fast, it is challenging for developers to stay up to date with what is possible in the container environment. Things usually get more complicated in the production system, as a mature architecture can consist of hundreds or thousands of containers. But then again, deploying such a swarm is not the biggest challenge.

What is even more demanding is the system quality called High Availability: multiple instances of the same container must be distributed across the nodes available in the cluster. It is the type of application living in a particular container that dictates which distribution algorithm should be applied. Once the containers are deployed and distributed across the cluster, we encounter another problem: the behavior of the system when a node fails.
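As a rough illustration of what distributing replicas across nodes means, the sketch below spreads the replicas of one container by always choosing the node that currently hosts the fewest of them. `SpreadScheduler` and `place` are hypothetical names; the real Kubernetes scheduler filters and scores nodes using many more criteria (free resources, affinity rules, taints), so treat this only as a sketch of the idea.

```java
import java.util.ArrayList;
import java.util.Comparator;
import java.util.HashMap;
import java.util.List;
import java.util.Map;

/**
 * Hypothetical spreading strategy, not the Kubernetes scheduler: each replica
 * goes to the node that currently hosts the fewest replicas of the container,
 * so losing one node takes down as few replicas as possible.
 */
final class SpreadScheduler {

    /** Returns the node chosen for each replica, in replica order. */
    static List<String> place(int replicas, List<String> nodes) {
        if (nodes.isEmpty()) {
            throw new IllegalArgumentException("no nodes available");
        }
        Map<String, Integer> replicasPerNode = new HashMap<>();
        nodes.forEach(node -> replicasPerNode.put(node, 0));

        List<String> placement = new ArrayList<>();
        for (int i = 0; i < replicas; i++) {
            // Least-loaded node wins; ties go to the node listed first.
            String target = nodes.stream()
                    .min(Comparator.comparingInt(replicasPerNode::get))
                    .get(); // safe: nodes is not empty
            replicasPerNode.merge(target, 1, Integer::sum);
            placement.add(target);
        }
        return placement;
    }
}
```

For example, five replicas on three nodes end up as node-1, node-2, node-3, node-1, node-2, so no node holds more than two of them.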

Luckily, modern solutions provide a self-healing mechanism: if a node hits its capacity limits or goes down, its containers are redeployed on a different node to ensure stability. That said, managing multiple containers without a sophisticated tool, known as a container orchestrator, is almost impossible. Companies have many options when it comes to platforms for running containers, and deciding which one is best for a particular organization can be a challenging task in itself. Of the many solutions on the market, the most popular one is Kubernetes [1].
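The self-healing behavior can be pictured as a reconciliation loop: compare the desired number of replicas with what is actually running, and start the missing ones on healthy nodes that still have room. Kubernetes controllers follow this desired-state pattern, but the class below (`ReconcileLoop` and its simple per-node capacity model) is a hypothetical, heavily simplified sketch, not Kubernetes code.

```java
import java.util.ArrayList;
import java.util.HashMap;
import java.util.List;
import java.util.Map;

/**
 * Hypothetical, simplified reconciliation loop: keep the desired number of
 * replicas running on healthy nodes that still have free capacity.
 */
final class ReconcileLoop {

    private final Map<String, Integer> capacity = new HashMap<>();      // node -> max containers
    private final Map<String, List<String>> running = new HashMap<>();  // node -> containers on it

    void addNode(String node, int maxContainers) {
        capacity.put(node, maxContainers);
        running.put(node, new ArrayList<>());
    }

    /** Simulates a node failure: the node and everything on it disappear. */
    void failNode(String node) {
        capacity.remove(node);
        running.remove(node);
    }

    int runningCount(String container) {
        return (int) running.values().stream()
                .flatMap(List::stream)
                .filter(container::equals)
                .count();
    }

    /** One pass: start missing replicas on the least-loaded nodes with room (scale-down omitted). */
    void reconcile(String container, int desiredReplicas) {
        int missing = desiredReplicas - runningCount(container);
        for (int i = 0; i < missing; i++) {
            String target = null;
            for (Map.Entry<String, List<String>> e : running.entrySet()) {
                boolean hasRoom = e.getValue().size() < capacity.get(e.getKey());
                if (hasRoom && (target == null || e.getValue().size() < running.get(target).size())) {
                    target = e.getKey();
                }
            }
            if (target == null) {
                return; // out of capacity; a real controller would retry on the next pass
            }
            running.get(target).add(container);
        }
    }
}
```

After a node failure, a single call to `reconcile` brings the replica count back to the desired value, as long as the remaining nodes have enough free capacity.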

Kubernetes

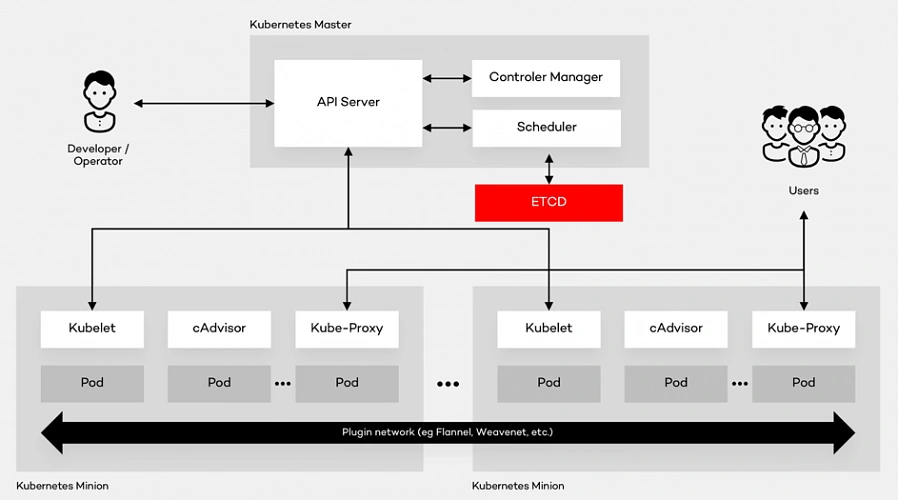

Kubernetes is an open-source container orchestration platform first released by Google in 2014. The name Kubernetes comes from Ancient Greek and means “helmsman”. The project was built on Google’s experience of running containers at an enormous scale. The company uses Kubernetes for Google Kubernetes Engine (GKE, originally named Google Container Engine), its own Container as a Service (CaaS) offering. It should come as no surprise that numerous other platforms, such as IBM Cloud, AWS, and Microsoft Azure, support Kubernetes as well. The tool can manage the two most popular types of containers, Docker and rkt (Rocket). Moreover, it helps organize networking, computing, and storage, the three nightmares of the microservice world. Its architecture is based on two types of nodes, Master and Minion (today usually called worker nodes), as shown below:

Architecture glossary

- API Server – entry point for REST commands. It processes and validates requests and executes the logic.

- Scheduler – assigns newly created pods to suitable nodes.

- Controller Manager – watches the shared state of the cluster through the API server and makes changes when necessary to move it toward the desired state.

- etcd Storage – key-value store used mainly for shared configuration and service discovery.

- Kubelet – receives the configuration of a pod from the API server and makes sure that the right containers are running. It also communicates with the master node.

- cAdvisor (Container Advisor) – collects and processes information about each running container. Most importantly, it helps container users understand the resource usage and performance characteristics of their containers.

- kube-proxy – runs on each node and manages network routing for TCP (Transmission Control Protocol) and UDP (User Datagram Protocol) packets.

- Pod – the fundamental element of the architecture, a group of containers that, in a non-containerized setup, would all run on a single server.

Architecture description

A Pod is an abstraction over containers that makes it possible to group them and deploy them on the same host. Containers in the same Pod share a network, storage, and a specification of how to run them. Every Minion Node runs a kubelet agent process, which connects it to the Master Node, as well as kube-proxy, which can do simple TCP and UDP stream forwarding. The Kubernetes architecture model assumes that Pods can communicate with other Pods regardless of which host they land on. Pods are also short-lived: they are created, destroyed, and created again as the state of the cluster changes. Connectivity can be implemented in various ways (kube-router, an L2 network, etc.). In many cases, a simple overlay network based on Flannel is sufficient.

Summary

As a company with years of experience in the cloud evolution, we advise enterprises to think as far as ten years ahead when choosing the right platform. It all depends on where they see technology heading. Hopefully, this summary will help you understand the fundamentals of containerization and the Kubernetes architecture and, in the end, make the right decision.

The path towards enterprise level AWS infrastructure – EC2, AMI, Bastion Host, RDS

Let’s pick up the thread of our journey into the AWS Cloud and keep discovering the intricacies of the cloud computing universe while building a highly available, secure, and fault-tolerant cloud system on the AWS platform. This article is the second in a mini-series that walks you through the process of creating an enterprise-level AWS infrastructure and explains the concepts and components of the Amazon Web Services platform. In the previous part, we scaffolded our infrastructure; specifically, we created the VPC, subnets, and NAT gateways, and configured network routing. If you have missed it, we strongly encourage you to read it first. In this article, we build on top of that work and focus on the configuration of EC2 instances, the creation of AMI images, and setting up Bastion Hosts and an RDS database.

The whole series comprises:

- Part 1 - Architecture Scaffolding (VPC, Subnets, Elastic IP, NAT).

- Part 2 - The Path Towards Enterprise Level AWS Infrastructure – EC2, AMI, Bastion Host, RDS.

- Part 3 - Load Balancing and Application Deployment (Elastic Load Balancer).

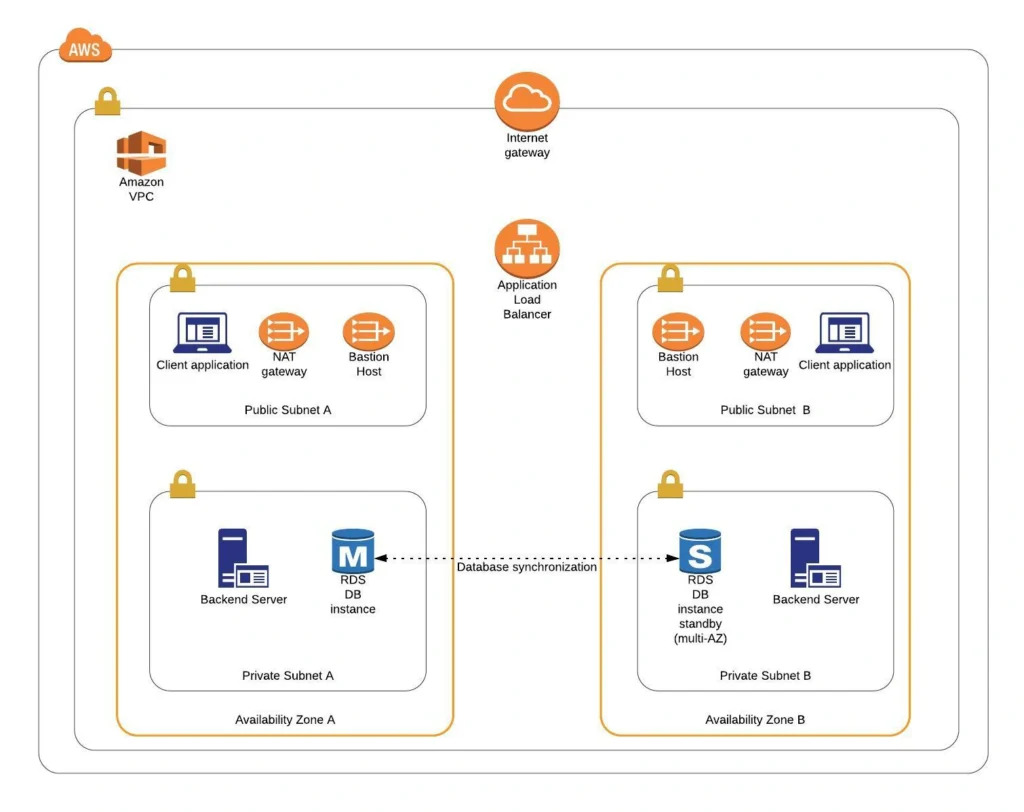

Infrastructure overview

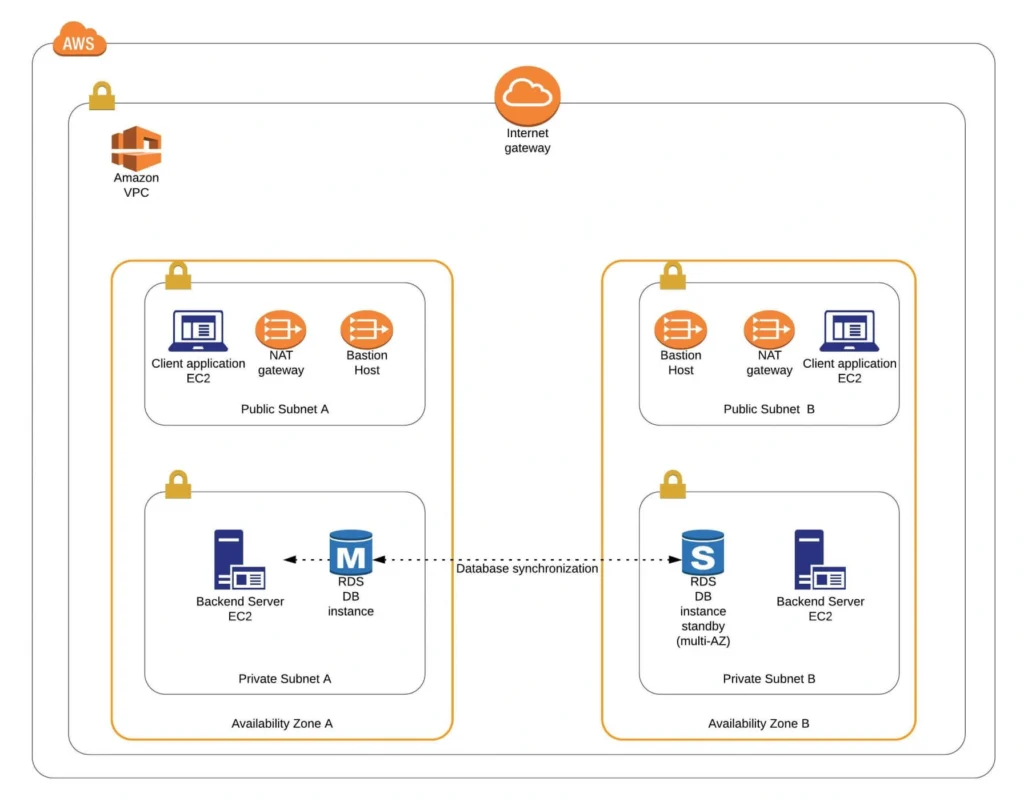

The diagram above presents the infrastructure we designed. If you would like to learn more about the design choices behind it, please read Part 1 - Architecture Scaffolding (VPC, Subnets, Elastic IP, NAT). We have already created a VPC, subnets, and NAT Gateways, and configured network routing. In this part of the series, we focus on the configuration of the required EC2 instances, the creation of AMI images, and setting up Bastion Hosts and the RDS database.

AWS theory

1. Amazon Elastic Compute Cloud (EC2)

Amazon Elastic Compute Cloud (EC2) is an Amazon service that allows you to manage virtual computing environments, known as EC2 instances, on AWS. An EC2 instance is simply a virtual machine provisioned with a certain amount of resources, such as CPU, memory, storage, and network capacity, and launched in a selected AWS region and availability zone. The elasticity of EC2 means that you can easily scale resources up or down, depending on your needs and requirements. You can manage the network security of your instances with security groups, which define the protocols, ports, and IP addresses your instances can communicate with.

There are five basic types of EC2 instances, which you can use based on your system requirements.

- General Purpose,

- Compute Optimized,

- Memory Optimized,

- Accelerated Computing,

- Storage Optimized.

In our infrastructure, we will use only general-purpose instances, but if you would like to learn more about different features of instance types, see the AWS documentation.

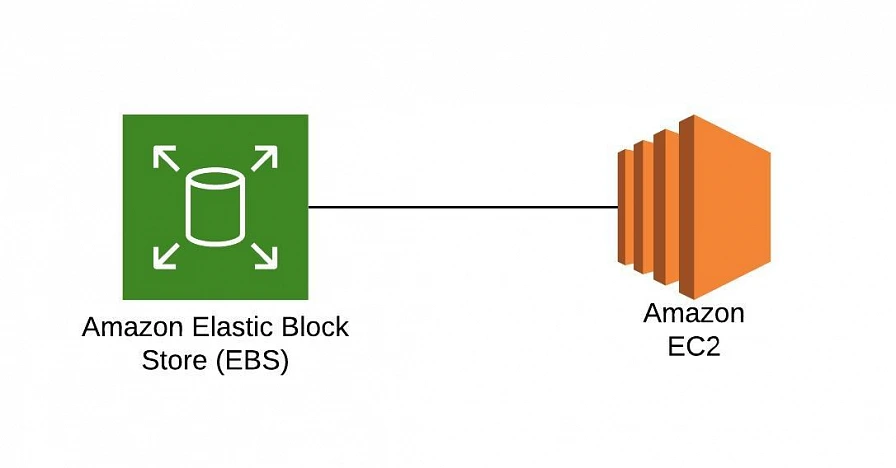

Depending on the instance type, EC2 instances can come with instance store volumes for temporary data, which is deleted whenever the instance is stopped or terminated. For persistent storage, they use Elastic Block Store (EBS) volumes, which work independently of the EC2 instance itself.

2. Amazon Machine Images (AMI)

Amazon utilizes templates of software configurations, known as Amazon Machine Images (AMI), to facilitate the creation of custom EC2 instances. AMIs are image templates that contain software such as operating systems, runtime environments, and actual applications that are used to launch EC2 instances. This allows us to preconfigure our AMIs and dynamically launch new instances from them instead of always setting up VM environments from scratch. Amazon provides some ready-to-use AMIs on the AWS Marketplace, which you can extend, customize, and save as your own (which we will do soon).

3. Key pair

Amazon provides a secure EC2 login mechanism based on public-key cryptography. When the instance boots, the public key is added as an entry in ~/.ssh/authorized_keys, and you can then securely access your instance through SSH using a private key instead of a password. Together, the public and private keys are known as a key pair.
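The principle behind key-pair login can be shown with the standard `java.security` API: the server keeps only the public key, sends a random challenge, and accepts the client if the challenge was signed with the matching private key. This is a hypothetical illustration (`KeyPairLogin` and its methods are invented names), not the SSH wire protocol, which negotiates sessions and algorithms in far more detail.

```java
import java.security.GeneralSecurityException;
import java.security.KeyPair;
import java.security.KeyPairGenerator;
import java.security.PublicKey;
import java.security.SecureRandom;
import java.security.Signature;
import java.security.SignatureException;

/** Hypothetical challenge-response login based on an RSA key pair. */
final class KeyPairLogin {

    static KeyPair generate() {
        try {
            KeyPairGenerator generator = KeyPairGenerator.getInstance("RSA");
            generator.initialize(2048);
            return generator.generateKeyPair();
        } catch (GeneralSecurityException e) {
            throw new IllegalStateException(e);
        }
    }

    /** Server side: a fresh random challenge for every login attempt. */
    static byte[] newChallenge() {
        byte[] challenge = new byte[32];
        new SecureRandom().nextBytes(challenge);
        return challenge;
    }

    /** Client side: sign the challenge with the private key (the role of the *.pem file). */
    static byte[] sign(byte[] challenge, KeyPair keyPair) {
        try {
            Signature signer = Signature.getInstance("SHA256withRSA");
            signer.initSign(keyPair.getPrivate());
            signer.update(challenge);
            return signer.sign();
        } catch (GeneralSecurityException e) {
            throw new IllegalStateException(e);
        }
    }

    /** Server side: verify with the stored public key (the role of authorized_keys). */
    static boolean verify(byte[] challenge, byte[] signature, PublicKey authorizedKey) {
        try {
            Signature verifier = Signature.getInstance("SHA256withRSA");
            verifier.initVerify(authorizedKey);
            verifier.update(challenge);
            return verifier.verify(signature);
        } catch (SignatureException e) {
            return false; // a malformed signature counts as a failed login
        } catch (GeneralSecurityException e) {
            throw new IllegalStateException(e);
        }
    }
}
```

The private key never leaves the client; the server only needs the public half, which is why losing the *.pem file means losing access to the instance.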

4. IAM role

IAM stands for Identity and Access Management, and it defines authentication and authorization rules for your system. IAM roles are IAM identities with a set of permissions that control access to AWS services; a role can be assumed by users, applications, or AWS services. For example, if your application needs access to a specific AWS service, such as an S3 bucket, its EC2 instance needs to have a role with the appropriate permissions assigned.
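How the permissions attached to a role decide a request can be sketched with the core evaluation rules: every request is implicitly denied, a matching Allow grants access, and a matching explicit Deny overrides any Allow. The model below is hypothetical and deliberately small (`IamEvaluation`, the `user-manager-bucket` name, and trailing-`*`-only wildcards are assumptions); real IAM also evaluates conditions, principals, permission boundaries, and more.

```java
import java.util.List;

/** Simplified, hypothetical model of IAM policy evaluation. */
final class IamEvaluation {

    enum Effect { ALLOW, DENY }

    static final class Statement {
        final Effect effect;
        final String action;   // e.g. "s3:GetObject" or "s3:*"
        final String resource; // e.g. "arn:aws:s3:::user-manager-bucket/*"

        Statement(Effect effect, String action, String resource) {
            this.effect = effect;
            this.action = action;
            this.resource = resource;
        }
    }

    /** Supports only a single trailing '*' wildcard (a simplification of IAM matching). */
    static boolean matches(String pattern, String value) {
        return pattern.endsWith("*")
                ? value.startsWith(pattern.substring(0, pattern.length() - 1))
                : pattern.equals(value);
    }

    static boolean isAllowed(List<Statement> statements, String action, String resource) {
        boolean allowed = false;
        for (Statement s : statements) {
            if (matches(s.action, action) && matches(s.resource, resource)) {
                if (s.effect == Effect.DENY) {
                    return false; // an explicit deny always wins
                }
                allowed = true;
            }
        }
        return allowed; // no matching Allow means an implicit deny
    }
}
```

With an Allow on `s3:*` and a Deny on `s3:DeleteObject` for the same bucket, an application could read objects but never delete them, and it could not touch any other bucket at all.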

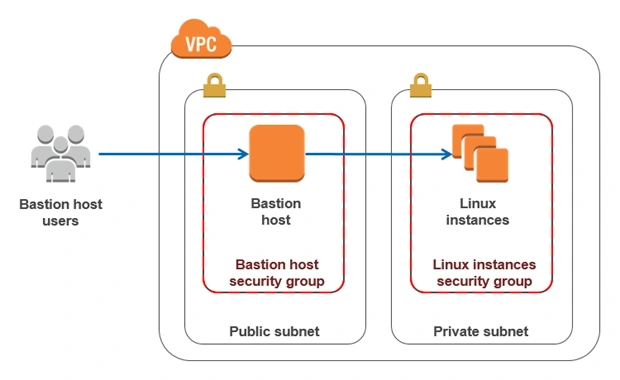

5. Bastion Host

A Bastion Host is a special-purpose instance placed in a public subnet and used to allow access to instances located in private subnets while providing an increased level of security. It acts as a bridge between users and private instances, and because it is exposed to potential attacks, it is hardened to withstand penetration attempts. The private instances expose their SSH ports only to the bastion host and do not allow any direct connections. What is more, bastion hosts can be configured to log all activity, providing additional security auditing.

6. Amazon Relational Database Service (RDS)

6.1. RDS

RDS is an Amazon service for managing relational databases in the cloud. As of 23.04.2020, it supports six database engines: Amazon Aurora, PostgreSQL, MySQL, MariaDB, Oracle Database, and SQL Server. It is easy to configure and scale, and it provides high availability and reliability through the Read Replicas and Multi-AZ Deployment features.

6.2. Read replicas

RDS Read Replicas are asynchronously updated, read-only copies of a primary “master” DB instance. They can handle queries that do not change any data, thus relieving the master node of part of its workload.
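Each RDS read replica gets its own endpoint, so it is up to the application to decide which queries go where. A minimal, hypothetical router is sketched below: plain `SELECT` statements are spread round-robin over the replicas, and everything else goes to the primary. `ReadWriteRouter` and the endpoint names are invented for illustration, and the statement classification is intentionally naive. Because replication is asynchronous, a replica may briefly return stale data, so reads that must see the latest write should also go to the primary.

```java
import java.util.List;
import java.util.Locale;
import java.util.concurrent.atomic.AtomicInteger;

/** Hypothetical application-side read/write splitting across RDS endpoints. */
final class ReadWriteRouter {

    private final String primaryEndpoint;
    private final List<String> replicaEndpoints;
    private final AtomicInteger next = new AtomicInteger();

    ReadWriteRouter(String primaryEndpoint, List<String> replicaEndpoints) {
        this.primaryEndpoint = primaryEndpoint;
        this.replicaEndpoints = List.copyOf(replicaEndpoints);
    }

    String endpointFor(String sql) {
        // Naive classification: only statements starting with SELECT count as reads
        boolean readOnly = sql.trim().toUpperCase(Locale.ROOT).startsWith("SELECT");
        if (!readOnly || replicaEndpoints.isEmpty()) {
            return primaryEndpoint;
        }
        // Round-robin over the replicas; floorMod keeps the index valid after overflow
        int index = Math.floorMod(next.getAndIncrement(), replicaEndpoints.size());
        return replicaEndpoints.get(index);
    }
}
```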

6.3. Multi-AZ deployment

AWS Multi-AZ Deployment is an option that lets RDS create a secondary, standby instance in a different AZ and keep it synchronously replicated with the master node. The master and standby instances each run on their own physically independent infrastructure, and only the primary instance can be accessed directly. The standby replica is used for failover if the master fails, without changing the endpoint of your DB.

This reduces the downtime of your system and makes it easier to perform version upgrades or create backup snapshots, as they can be done on the spare instance. Multi-AZ is usually used only for the master instance. However, it is also possible to create read replicas with Multi-AZ deployment, which results in a resilient disaster recovery infrastructure.
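The key property for the application is that the endpoint stays the same while the instance behind it changes (RDS achieves this by updating the DNS record of the endpoint during failover). The toy model below captures only that property; `MultiAzEndpoint` and the host names are hypothetical, and the way the old primary comes back as the new standby is a simplification.

```java
/**
 * Toy model of a Multi-AZ endpoint: the application always connects to the
 * same endpoint, and a failover only changes which instance it points to.
 */
final class MultiAzEndpoint {

    private final String endpoint;
    private String primaryHost; // serves all traffic
    private String standbyHost; // synchronously replicated copy in another AZ

    MultiAzEndpoint(String endpoint, String primaryHost, String standbyHost) {
        this.endpoint = endpoint;
        this.primaryHost = primaryHost;
        this.standbyHost = standbyHost;
    }

    /** The address configured in the application; it never changes. */
    String endpoint() {
        return endpoint;
    }

    /** The instance the endpoint currently resolves to. */
    synchronized String resolve() {
        return primaryHost;
    }

    /** Primary failure: promote the standby behind the unchanged endpoint. */
    synchronized void failover() {
        String failed = primaryHost;
        primaryHost = standbyHost;
        // Simplification: the recovered old primary becomes the new standby
        standbyHost = failed;
    }
}
```

Since the connection string never changes, the application only needs to reconnect after a failover; no configuration has to be redeployed.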

Practice

We have two applications that we would like to run on our AWS infrastructure. One is a Java 11 Spring Boot application, so the EC2 instance hosting it must have Java 11 installed. The second one is a React.js frontend application, which requires a virtual machine with a Node.js environment. As the first step, we are going to set up a Bastion Host, which will allow us to ssh into our instances. Then, we will launch and configure those two EC2 instances manually in the first availability zone. Later on, we will create AMIs based on those instances and use them to create the EC2 instances in the second availability zone.

1. Availability Zone A

1.1. Bastion Host

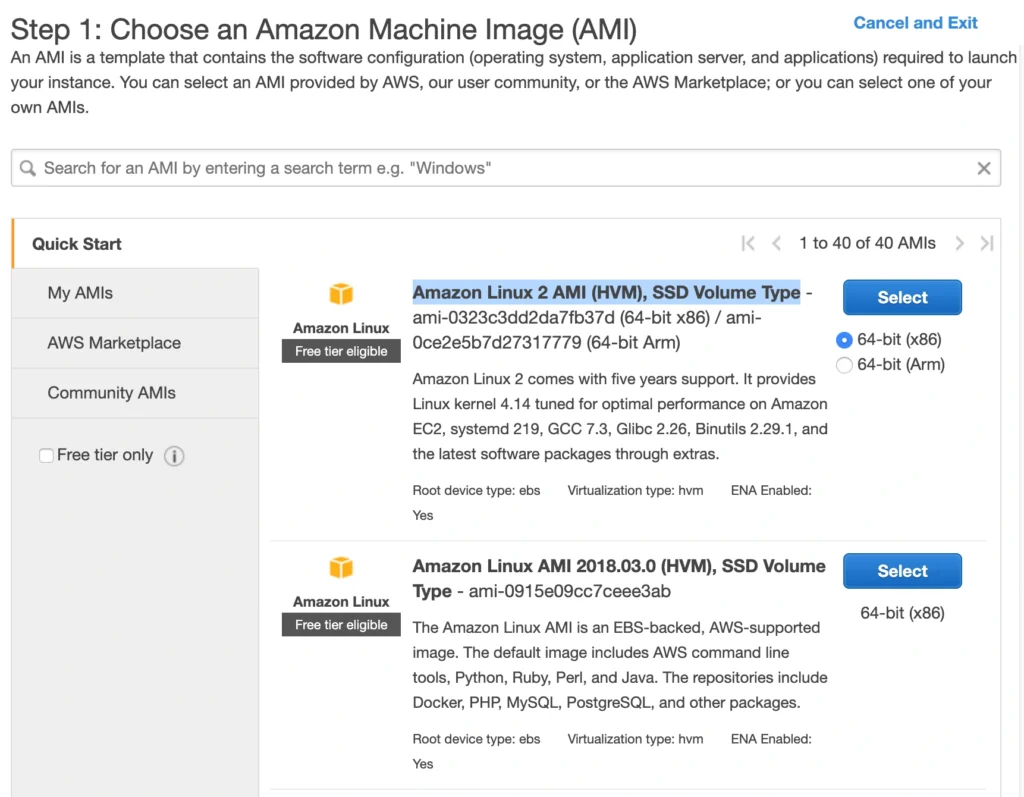

A Bastion Host is nothing more than a special-purpose EC2 instance. Hence, to create a Bastion Host, go to the AWS Management Console and search for the EC2 service. Then click the Launch Instance button, and the EC2 launch wizard will open. The first step is selecting an AMI image for your instance. You can filter AMIs and select one based on your preferences. In this article, we will use the Amazon Linux 2 AMI (HVM), SSD Volume Type image.

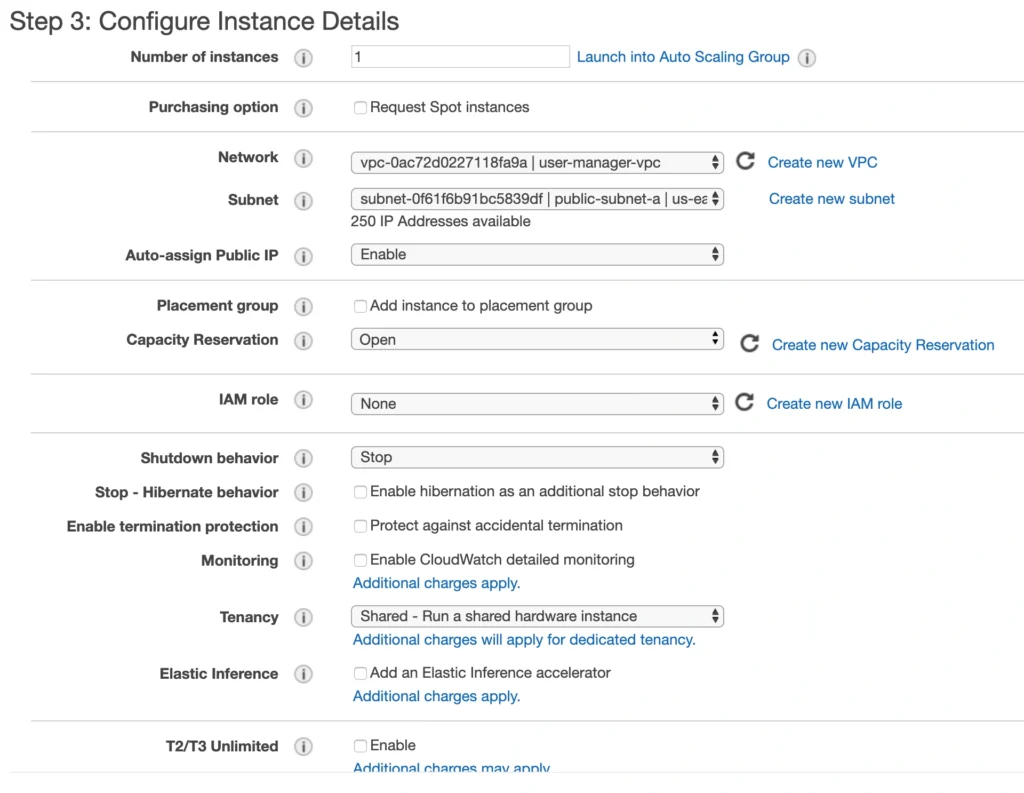

On the next screen, we need to choose an instance type. Here, I am sticking with the AWS free tier program, so I will go with the general-purpose t2.micro type. Click Next: Configure Instance Details. Here, we can define the number of instances, network settings, IAM configuration, etc. For now, let’s start with one instance; we will work on the scalability of our infrastructure later. In the Network section, choose your previously created VPC and public-subnet-a, and enable Public IP auto-assignment. We do not need to specify any IAM role, as this instance is not going to use any other AWS services.

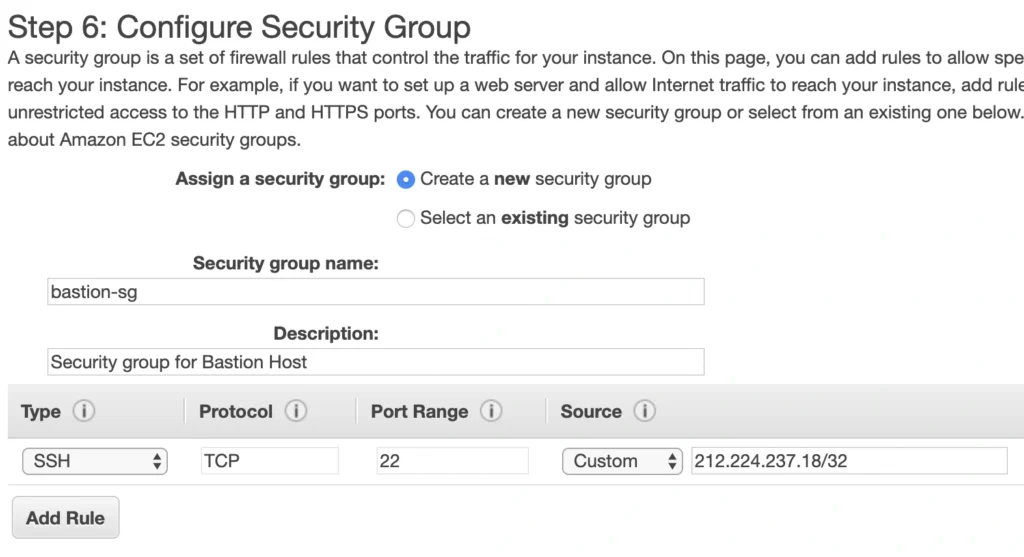

Click Next. Here you can see that the wizard automatically configures your instance with 8 GB of EBS storage, which is enough for us. Click Next again. Now, we can add tags to make our instance easier to recognize. Let’s add a Name tag bastion-a-ec2. On the next screen, we can configure a security group for our instance. Create a new security group and name it bastion-sg.

You can see that there is already one predefined rule exposing our instance to SSH sessions from 0.0.0.0/0 (anywhere). Change it here to allow connections only from your IP address. Note that in a production environment you would never expose your instances to the whole world; instead, you would allow only the IP addresses of employees permitted to connect to your instance.
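Allowing SSH only from your own address means the rule's source is a single-address CIDR block (a /32), while 0.0.0.0/0 matches every IPv4 address. The sketch below models how such inbound rules are evaluated: a security group contains only allow rules, so a connection is accepted only if some rule matches its protocol, port, and source address. `SecurityGroupCheck` and its methods are hypothetical names (not the AWS SDK), the model covers IPv4 only, and 203.0.113.10 is a documentation address standing in for your IP.

```java
import java.util.List;

/** Simplified, hypothetical model of inbound security-group evaluation (IPv4 only). */
final class SecurityGroupCheck {

    static final class Rule {
        final String protocol; // e.g. "tcp"
        final int fromPort;
        final int toPort;
        final String cidr;     // e.g. "203.0.113.10/32" for a single address

        Rule(String protocol, int fromPort, int toPort, String cidr) {
            this.protocol = protocol;
            this.fromPort = fromPort;
            this.toPort = toPort;
            this.cidr = cidr;
        }
    }

    /** Dotted-quad IPv4 address -> unsigned 32-bit value stored in a long. */
    static long ipv4(String address) {
        String[] octets = address.split("\\.");
        if (octets.length != 4) {
            throw new IllegalArgumentException("not an IPv4 address: " + address);
        }
        long value = 0;
        for (String octet : octets) {
            int part = Integer.parseInt(octet);
            if (part < 0 || part > 255) {
                throw new IllegalArgumentException("not an IPv4 address: " + address);
            }
            value = (value << 8) | part;
        }
        return value;
    }

    /** True if the address lies in the CIDR block; a /0 prefix yields mask 0 and matches everything. */
    static boolean inCidr(String address, String cidr) {
        String[] parts = cidr.split("/");
        int prefix = Integer.parseInt(parts[1]);
        long mask = (0xFFFFFFFFL << (32 - prefix)) & 0xFFFFFFFFL;
        return (ipv4(address) & mask) == (ipv4(parts[0]) & mask);
    }

    /** Allow-only semantics: traffic that matches no rule is denied. */
    static boolean allowsInbound(List<Rule> rules, String protocol, int port, String sourceIp) {
        return rules.stream().anyMatch(r -> r.protocol.equals(protocol)
                && port >= r.fromPort && port <= r.toPort
                && inCidr(sourceIp, r.cidr));
    }
}
```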

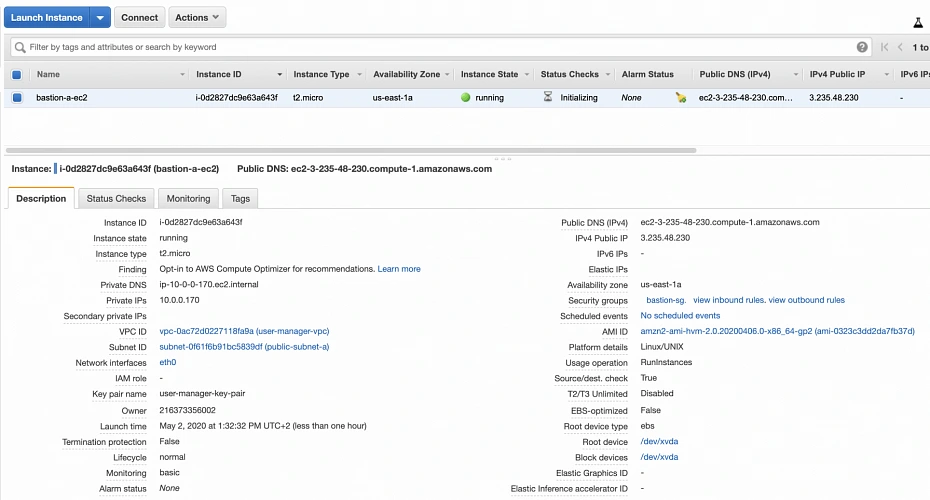

In the next step, you can review your EC2 configuration and launch it. The last action is the creation of a key pair. This is important because we need this key pair to ssh to our instance. Name the key pair, e.g., user-manager-key-pair, download the private key, and store it locally on your machine. That is it: AWS will take some time, but in the end, your EC2 instance will be launched.

In the instance description section, you can find the public IP address of your instance. We can use it to ssh to the EC2 instance. This is where we need the previously generated, and hopefully locally saved, private key (*.pem file). That’s it; our instance is ready for now. However, in production, it would be a good idea to harden the security of the Bastion Host even more. If you would like to learn more about that, we recommend this article.

1.2. Backend server EC2

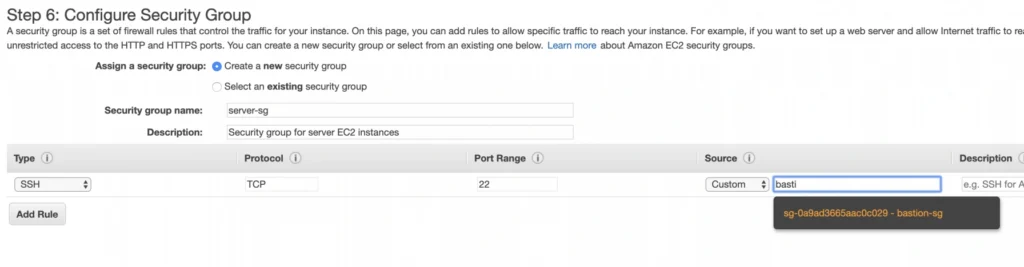

Now, let’s create an instance for the backend server. Click Launch Instance again, choose the same AMI image as before, place it in your user-manager-vpc and private-subnet-a, and do not enable public IP auto-assignment this time. Move through the next steps as before and add a server-a-ec2 name tag. In the security group configuration, create a new security group named server-sg and modify its settings to allow incoming SSH communication only from bastion-sg.

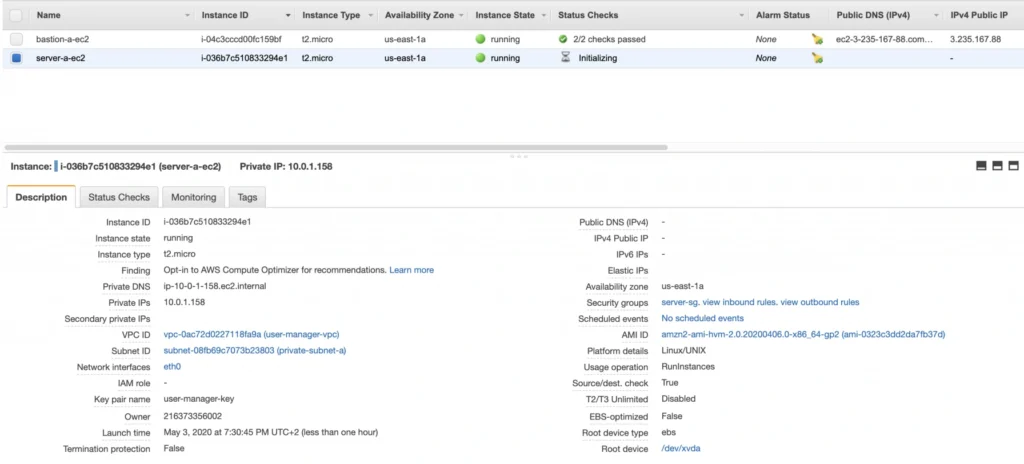

Launch the instance. You can create a new key pair or use the previously created one (for simplicity I recommend using the same key pair for all instances). In the end, you should have your second instance up and running.

You can see that server-a-ec2 does not have a public IP address. However, we can access it through the bastion host. First, we need to add our private key to the SSH agent, and then we can ssh to our bastion host instance, adding the -A flag to the ssh command. This flag enables agent forwarding, which lets you ssh into your private instance without specifying the private key again. This is the recommended approach, as it lets you avoid storing the private key on the bastion host instance, which could lead to a security breach.

```shell
ssh-add path-to-your-pem-file
ssh -A -i path-to-your-pem-file ec2-user@bastion-a-ec2-instance-public-ip
```

Then, inside your bastion host, execute the command:

```shell
ssh ec2-user@server-a-ec2-instance-private-ip
```

Now, you should be inside your server-a-ec2 private instance. Let’s install the required software on the machine by executing these commands:

```shell
sudo yum update -y &&
sudo amazon-linux-extras enable corretto8 &&
sudo yum clean metadata &&
sudo yum install -y java-11-amazon-corretto &&
java --version
```

As a result, you should have Java 11 installed on your server-a-ec2 instance. You can go back to the local command prompt by executing the exit command twice.

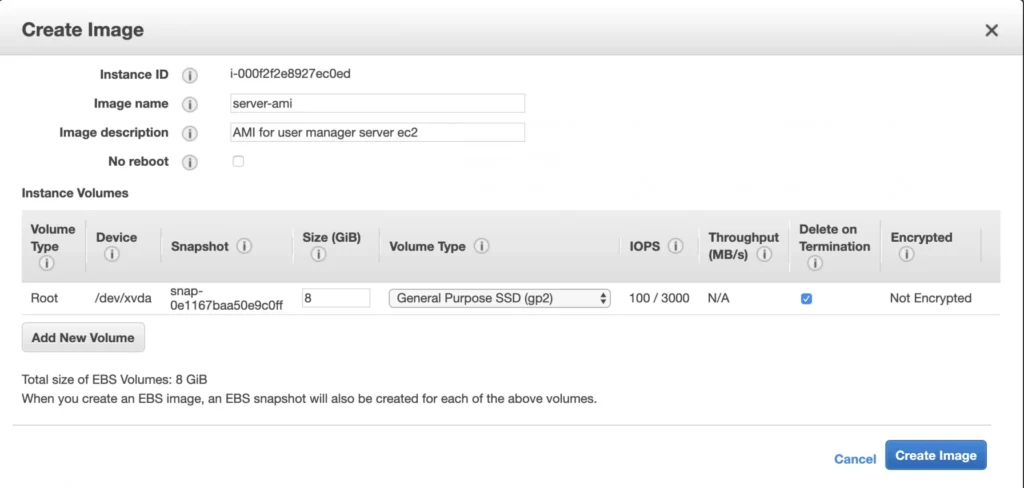

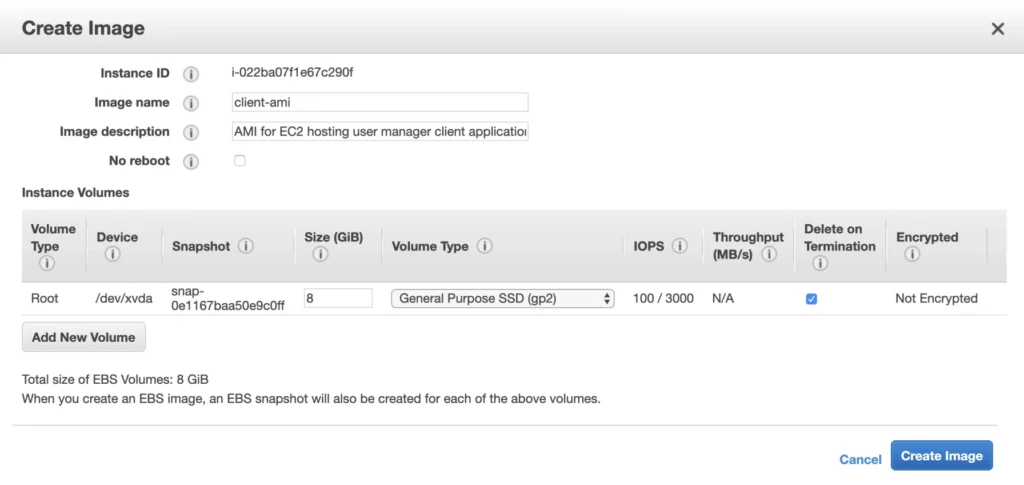

AMI

The EC2 instance for the backend server is ready for deployment. In the second availability zone, we could follow exactly the same steps. However, there is an easier way: we can create an AMI image based on our pre-configured instance and use it later to create the corresponding instance in availability zone b. To do that, go to the Instances menu again, select your instance, and click Actions -> Image -> Create image. Name the image server-ami, as we will refer to it later. Your AMI image will be created, and you will be able to find it in the Images/AMIs section.

1.3. Client application EC2

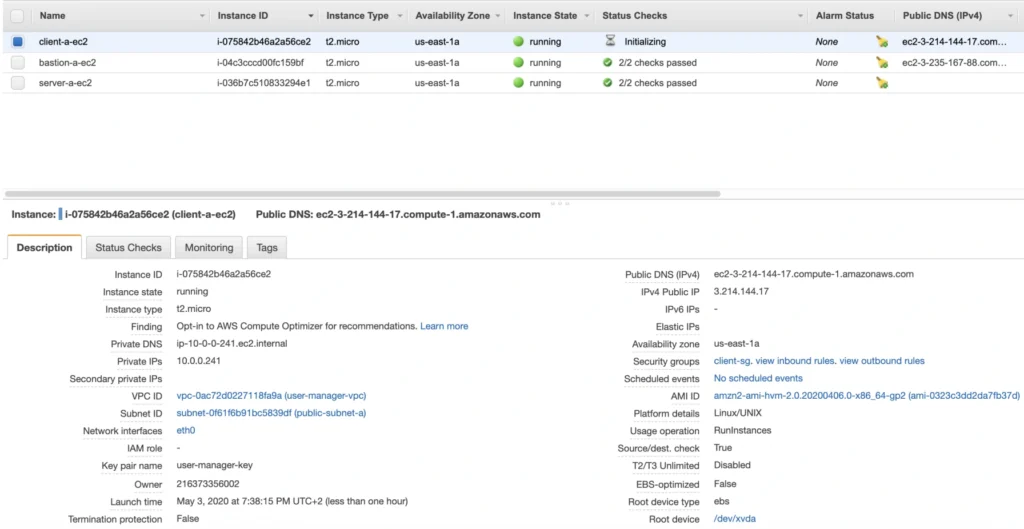

The last EC2 instance we need in Availability Zone A will host the client application. So, let’s go through the EC2 creation process once again. Launch an instance, select the same base AMI as before, select your VPC, place the instance in public-subnet-a, and enable public IP assignment. Then, add a client-a-ec2 Name tag and create a new security group, client-sg, allowing incoming SSH connections from the bastion-sg security group. That’s it; launch it.

Now, SSH to the instance through the bastion host and install the required software.

```shell
ssh -A -i path-to-your-pem-file ec2-user@bastion-a-ec2-instance-public-ip
```

Then, inside your bastion host, execute the command:

```shell
ssh ec2-user@client-a-ec2-instance-private-ip
```

Inside the client-a-ec2 command prompt, execute:

```shell
sudo yum update -y &&
curl -sL https://rpm.nodesource.com/setup_12.x | sudo bash - &&
sudo yum install -y nodejs &&
node -v &&
npm -v
```

Exit the EC2 command prompt and create a new AMI image based on this instance, naming it client-ami.

2. Availability Zone B

2.1. Bastion Host

Create the second bastion host instance following the same steps as in availability zone a, but this time place it in public-subnet-b, add the Name tag bastion-b-ec2, and assign it the previously created bastion-sg security group.

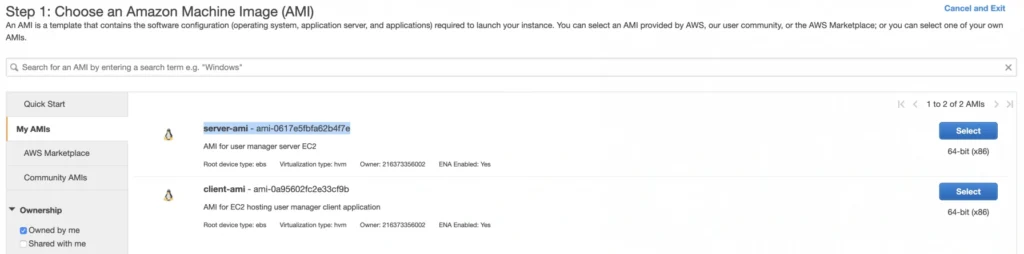

2.2. Backend server EC2

For the backend server EC2, go to the Launch Instance menu again, and this time, instead of using Amazon’s AMI, switch to the My AMIs tab and select the previously created server-ami image. Place the instance in private-subnet-b, add the name tag server-b-ec2, and assign it the server-sg security group.

2.3. Client application EC2

Just as for the backend server instance, launch client-b-ec2 using your custom AMI image. This time, select the client-ami image, place the EC2 instance in public-subnet-b, enable automatic public IP assignment, and choose the client-sg security group.

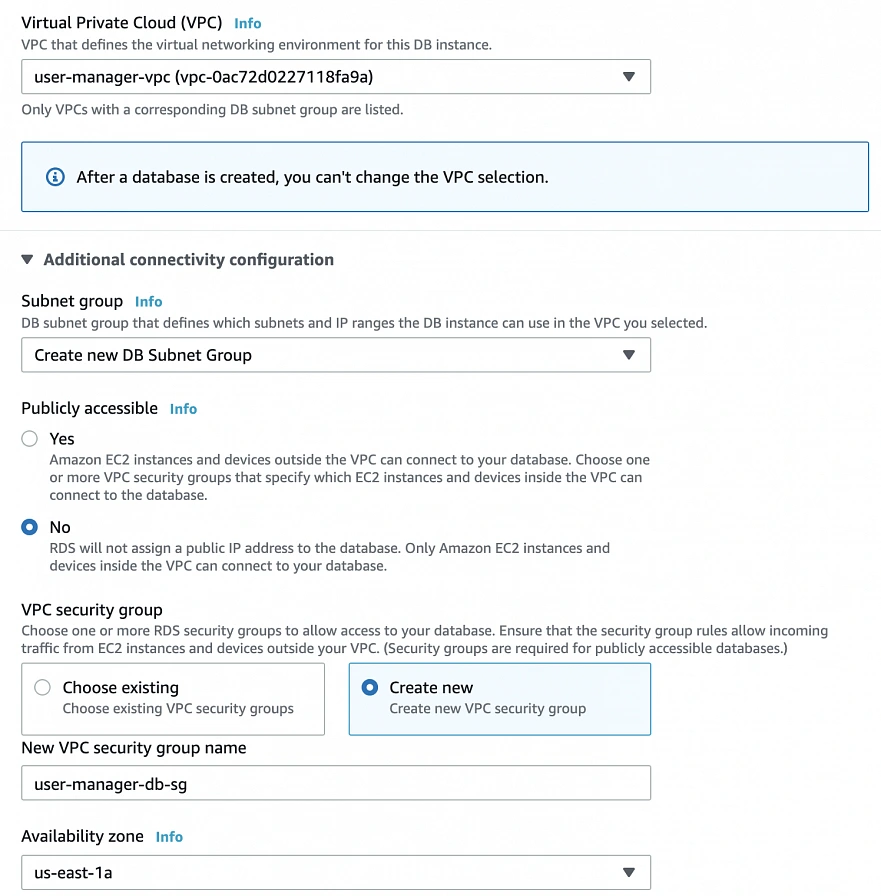

3. RDS

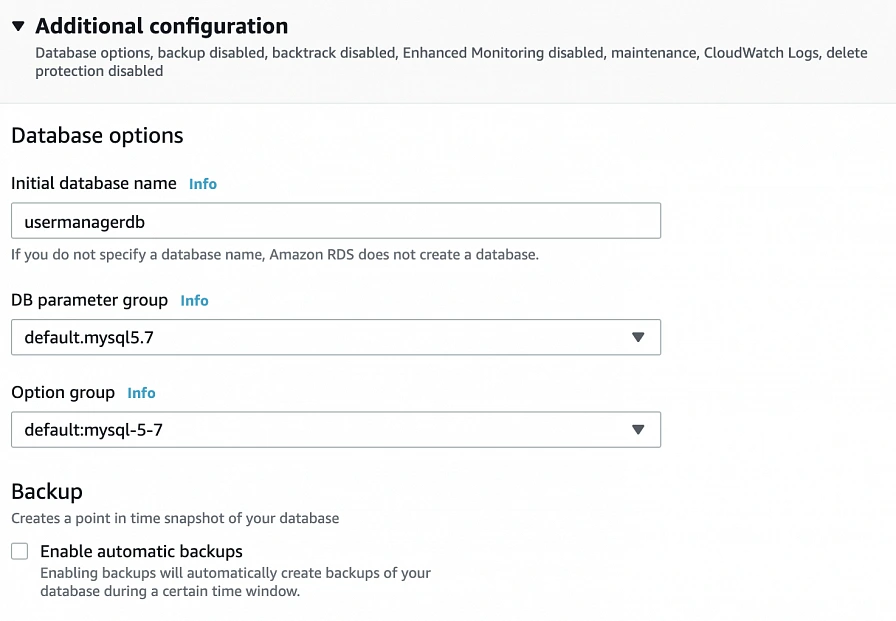

We have all our EC2 instances ready. The last part we will cover in this article is the configuration of RDS. For that, go to the RDS service in the AWS Management Console and click Create database. In the database configuration window, follow the standard configuration path. Select the MySQL engine and the Free tier template. Set your DB instance identifier to user-manager-db, specify the master username and password, select your user-manager-vpc and availability zone a, and make the database not publicly accessible. Also create a new user-manager-db-sg security group.

In the Additional configuration section, specify the initial db name, and finally create a database.

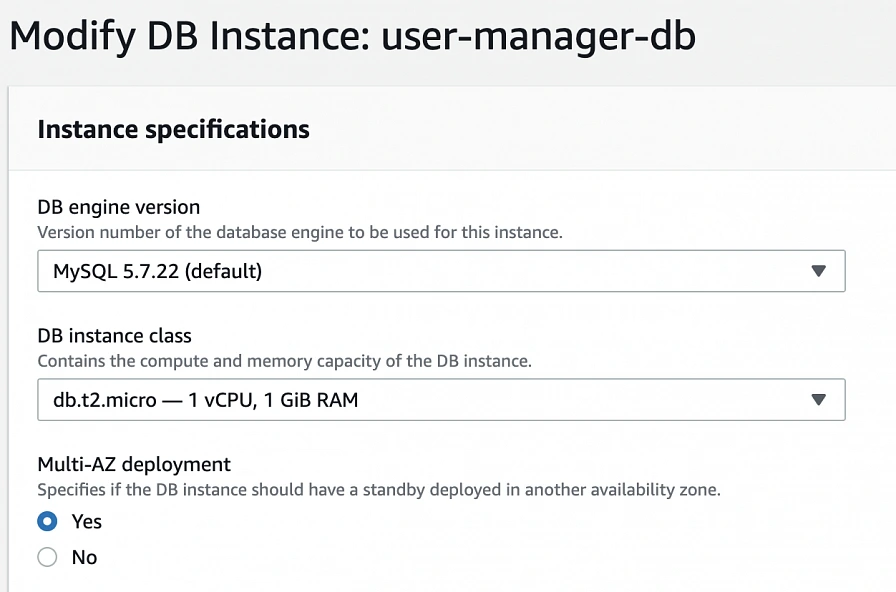

After AWS finishes the creation process, you will be able to get the database endpoint, which we will use later to connect to the database from our application. Now, to provide high availability of the database, click the Modify button on the created database screen and enable Multi-AZ deployment. Please bear in mind that Multi-AZ deployment is not included in the free tier program, so if you would like to avoid any charges, skip this step.

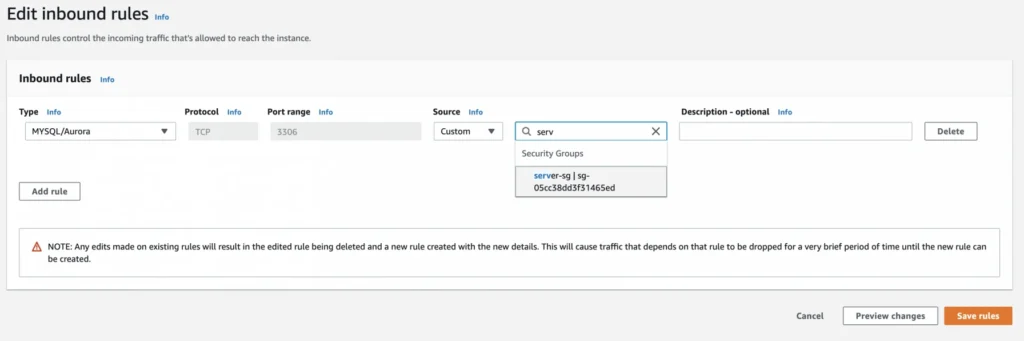

As the last step, we need to add a rule to user-manager-db-sg that allows incoming connections from server-sg on port 3306, the default MySQL port, so that our server can communicate with the database.
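The source of this rule, like the SSH rules pointing at bastion-sg, is a security group rather than an IP range. Any instance that carries server-sg, including server-b-ec2 in availability zone b, can therefore reach the database, and the rule keeps working when instances are replaced and their private IPs change. A minimal, hypothetical model of that check follows (`GroupReferenceRules` is an invented name, and the protocol is assumed to be TCP).

```java
import java.util.List;
import java.util.Set;

/** Hypothetical model of inbound rules whose source is another security group. */
final class GroupReferenceRules {

    static final class Rule {
        final int port;           // TCP assumed
        final String sourceGroup; // e.g. "server-sg"

        Rule(int port, String sourceGroup) {
            this.port = port;
            this.sourceGroup = sourceGroup;
        }
    }

    /**
     * @param sourceGroups the security groups attached to the connecting instance
     */
    static boolean allowsInbound(List<Rule> rules, int port, Set<String> sourceGroups) {
        return rules.stream()
                .anyMatch(r -> r.port == port && sourceGroups.contains(r.sourceGroup));
    }
}
```

For user-manager-db-sg, a single `new Rule(3306, "server-sg")` admits both backend servers while still rejecting client-sg instances and any port other than 3306.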

EC2, AMI, Bastion Host, RDS - Summary

Congratulations, our infrastructure is almost ready for deployment. As you can see in our final diagram, the only thing missing is the load balancer. In the next part of the series, we will take care of that and deploy our applications to have a fully functioning system running on AWS infrastructure!

Sources:

- https://cloudacademy.com/blog/aws-bastion-host-nat-instances-vpc-peering-security/

- https://aws.amazon.com/quickstart/architecture/linux-bastion/

- https://aws.amazon.com/blogs/security/securely-connect-to-linux-instances-running-in-a-private-amazon-vpc/

- https://app.pluralsight.com/library/courses/aws-developer-getting-started/table-of-contents

- https://app.pluralsight.com/library/courses/aws-developer-designing-developing/table-of-contents

- https://app.pluralsight.com/library/courses/aws-networking-deep-dive-vpc/table-of-contents

- https://www.techradar.com/news/what-is-amazon-rds

- https://medium.com/kaodim-engineering/hardening-ssh-using-aws-bastion-and-mfa-45d491288872


- https://docs.aws.amazon.com/IAM/latest/UserGuide/id_roles.html

- https://docs.aws.amazon.com/AWSEC2/latest/UserGuide/ec2-key-pairs.html

- https://aws.amazon.com/ec2/instance-types/

- https://docs.aws.amazon.com/AWSEC2/latest/UserGuide/concepts.html

Reactive service to service communication with RSocket – abstraction over RSocket

If you are familiar with the previous articles of this series (Introduction, Load balancing & Resumability), you have probably noticed that RSocket provides a low-level API. We can operate directly on the methods from the interaction model and send frames back and forth without any constraints. This gives us a lot of freedom and control, but it may introduce extra issues, especially related to the contract between microservices. To solve these problems, we can use RSocket through a generic abstraction layer. There are two solutions available: the RSocket RPC module and the integration with Spring Framework. In the following sections, we will discuss them briefly.

RPC over RSocket

Keeping the contract between microservices clean and well-defined is one of the crucial concerns of distributed systems. To ensure that applications can exchange data, we can leverage Remote Procedure Calls. Fortunately, RSocket has a dedicated RPC module that uses Protobuf as its serialization mechanism, so we can benefit from RSocket’s performance and keep the contract in check at the same time. By combining the generated services and objects with RSocket acceptors, we can spin up a fully operational RPC server and just as easily consume it using an RPC client.

First, we need the definition of the service and the messages. In the example below, we create a simple CustomerService with four endpoints, each of which represents a different method from the interaction model.

syntax = "proto3";

option java_multiple_files = true;

option java_outer_classname = "ServiceProto";

package com.rsocket.rpc;

import "google/protobuf/empty.proto";

message SingleCustomerRequest {

string id = 1;

}

message MultipleCustomersRequest {

repeated string ids = 1;

}

message CustomerResponse {

string id = 1;

string name = 2;

}

service CustomerService {

rpc getCustomer(SingleCustomerRequest) returns (CustomerResponse) {} //request-response

rpc getCustomers(MultipleCustomersRequest) returns (stream CustomerResponse) {} //request-stream

rpc deleteCustomer(SingleCustomerRequest) returns (google.protobuf.Empty) {} //fire'n'forget

rpc customerChannel(stream MultipleCustomersRequest) returns (stream CustomerResponse) {} //request-channel

}

In the second step, we have to generate classes from the proto file presented above. To do that, we can configure the protobuf Gradle plugin with the RSocket RPC code generator as follows:

```groovy
protobuf {
    protoc {
        artifact = 'com.google.protobuf:protoc:3.6.1'
    }
    generatedFilesBaseDir = "${projectDir}/build/generated-sources/"
    plugins {
        rsocketRpc {
            artifact = 'io.rsocket.rpc:rsocket-rpc-protobuf:0.2.17'
        }
    }
    generateProtoTasks {
        all()*.plugins {
            rsocketRpc {}
        }
    }
}
```

As a result of the generateProto task, we should obtain the service interface, service client, and service server classes, in this case CustomerService, CustomerServiceClient, and CustomerServiceServer, respectively. Next, we have to implement the business logic of the generated service (CustomerService):

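```java
import com.google.protobuf.Empty;
import com.rsocket.rpc.CustomerResponse;
import com.rsocket.rpc.CustomerService;
import com.rsocket.rpc.MultipleCustomersRequest;
import com.rsocket.rpc.SingleCustomerRequest;
import io.netty.buffer.ByteBuf;
import lombok.extern.slf4j.Slf4j;
import org.reactivestreams.Publisher;
import reactor.core.publisher.Flux;
import reactor.core.publisher.Mono;

import java.time.Duration;
import java.util.Arrays;
import java.util.List;
import java.util.UUID;
import java.util.concurrent.ThreadLocalRandom;

@Slf4j
public class DefaultCustomerService implements CustomerService {

    private static final List<String> RANDOM_NAMES = Arrays.asList("Andrew", "Joe", "Matt", "Rachel", "Robin", "Jack");

    @Override
    public Mono<CustomerResponse> getCustomer(SingleCustomerRequest message, ByteBuf metadata) {
        log.info("Received 'getCustomer' request [{}]", message);
        return Mono.just(CustomerResponse.newBuilder()
                .setId(message.getId())
                .setName(getRandomName())
                .build());
    }

    @Override
    public Flux<CustomerResponse> getCustomers(MultipleCustomersRequest message, ByteBuf metadata) {
        // Emits a new random customer every second until the requester cancels
        return Flux.interval(Duration.ofMillis(1000))
                .map(time -> CustomerResponse.newBuilder()
                        .setId(UUID.randomUUID().toString())
                        .setName(getRandomName())
                        .build());
    }

    @Override
    public Mono<Empty> deleteCustomer(SingleCustomerRequest message, ByteBuf metadata) {
        log.info("Received 'deleteCustomer' request [{}]", message);
        return Mono.just(Empty.newBuilder().build());
    }

    @Override
    public Flux<CustomerResponse> customerChannel(Publisher<MultipleCustomersRequest> messages, ByteBuf metadata) {
        return Flux.from(messages)
                .doOnNext(message -> log.info("Received 'customerChannel' request [{}]", message))
                .map(message -> CustomerResponse.newBuilder()
                        .setId(UUID.randomUUID().toString())
                        .setName(getRandomName())
                        .build());
    }

    private String getRandomName() {
        // nextInt(bound) excludes the bound, so passing size() makes every name reachable
        return RANDOM_NAMES.get(ThreadLocalRandom.current().nextInt(RANDOM_NAMES.size()));
    }
}
```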

Finally, we can expose the service via RSocket. To achieve that, we have to create an instance of the service server (CustomerServiceServer) and inject our service implementation (DefaultCustomerService) into it. Then, we are ready to create an RSocket acceptor. The API provides RequestHandlingRSocket, which wraps the service server instance and translates the endpoints defined in the contract into methods available in the RSocket interaction model.

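```java
import com.rsocket.rpc.CustomerServiceServer;
import io.rsocket.RSocketFactory;
import io.rsocket.rpc.rsocket.RequestHandlingRSocket;
import io.rsocket.transport.netty.server.TcpServerTransport;
import reactor.core.publisher.Mono;

import java.util.Optional;

public class Server {

    public static void main(String[] args) throws InterruptedException {
        // No metrics registry and no tracer: both optional dependencies are left empty
        CustomerServiceServer serviceServer = new CustomerServiceServer(new DefaultCustomerService(), Optional.empty(), Optional.empty());

        RSocketFactory
                .receive()
                // Every incoming connection is handled by a socket that dispatches to serviceServer
                .acceptor((setup, sendingSocket) -> Mono.just(
                        new RequestHandlingRSocket(serviceServer)
                ))
                .transport(TcpServerTransport.create(7000))
                .start()
                .block();

        // Keep the main thread alive while the server runs on Netty threads
        Thread.currentThread().join();
    }
}
```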

On the client side, the implementation is pretty straightforward. All we need to do is create the RSocket instance and inject it into the service client via the constructor; then we are ready to go.

```java
import com.rsocket.rpc.CustomerServiceClient;
import com.rsocket.rpc.MultipleCustomersRequest;
import com.rsocket.rpc.SingleCustomerRequest;
import io.rsocket.RSocket;
import io.rsocket.RSocketFactory;
import io.rsocket.transport.netty.client.TcpClientTransport;
import lombok.extern.slf4j.Slf4j;
import reactor.core.publisher.Flux;

import java.util.UUID;

@Slf4j
public class Client {

    public static void main(String[] args) {
        RSocket rSocket = RSocketFactory
                .connect()
                .transport(TcpClientTransport.create(7000))
                .start()
                .block();

        CustomerServiceClient customerServiceClient = new CustomerServiceClient(rSocket);

        customerServiceClient.deleteCustomer(SingleCustomerRequest.newBuilder()
                .setId(UUID.randomUUID().toString()).build())
                .block();

        customerServiceClient.getCustomer(SingleCustomerRequest.newBuilder()
                .setId(UUID.randomUUID().toString()).build())
                .doOnNext(response -> log.info("Received response for 'getCustomer': [{}]", response))
                .block();

        // Non-blocking: responses are logged in the background while main continues
        customerServiceClient.getCustomers(MultipleCustomersRequest.newBuilder()
                .addIds(UUID.randomUUID().toString()).build())
                .doOnNext(response -> log.info("Received response for 'getCustomers': [{}]", response))
                .subscribe();

        // Flux.just emits one request and completes, so the channel completes and blockLast() returns
        customerServiceClient.customerChannel(Flux.just(MultipleCustomersRequest.newBuilder()
                .addIds(UUID.randomUUID().toString())
                .build()))
                .doOnNext(customerResponse -> log.info("Received response for 'customerChannel' [{}]", customerResponse))
                .blockLast();
    }
}
```

Combining RSocket with the RPC approach helps maintain the contract between microservices and improves the day-to-day developer experience. It is suitable for typical scenarios where we do not need full control over the frames, and it does not limit the protocol’s flexibility. We can still expose RPC endpoints as well as plain RSocket acceptors in the same application, so we can easily choose the best communication pattern for a given use case.

In the context of RPC over RSocket, one more fundamental question may arise: is it better than gRPC? There is no easy answer. RSocket is a new technology, and it needs some time to reach the maturity level of gRPC. On the other hand, it surpasses gRPC in two areas: performance (benchmarks available here) and flexibility, as it can be used as a transport layer for RPC or as a plain messaging solution. Before deciding which one to use in a production environment, you should determine whether RSocket aligns with your early adoption strategy and does not put your software at risk. Personally, I would recommend introducing RSocket in less critical areas first and then extending its usage to the rest of the system.

Spring Boot integration

The second available solution that provides an abstraction over RSocket is the integration with Spring Boot. Here, we use RSocket as a reactive messaging solution and leverage Spring annotations to link methods to routes with ease. In the following example, we implement two Spring Boot applications: the requester and the responder. The responder exposes RSocket endpoints through CustomerController and maps three routes: customer, customer-stream, and customer-channel. Each of these mappings reflects a different method from the RSocket interaction model (request-response, request-stream, and channel, respectively). CustomerController implements simple business logic and returns a CustomerResponse object with a random name, as shown in the example below:

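import java.time.Duration;
import java.util.Arrays;
import java.util.List;
import java.util.concurrent.ThreadLocalRandom;

import lombok.extern.slf4j.Slf4j;
import org.springframework.boot.SpringApplication;
import org.springframework.boot.autoconfigure.SpringBootApplication;
import org.springframework.messaging.handler.annotation.MessageMapping;
import org.springframework.stereotype.Controller;
import reactor.core.publisher.Flux;

@Slf4j
@SpringBootApplication
public class RSocketResponderApplication {

    public static void main(String[] args) {
        SpringApplication.run(RSocketResponderApplication.class, args);
    }

    // static: component scanning only picks up top-level or static nested classes
    @Controller
    public static class CustomerController {

        private static final List<String> RANDOM_NAMES =
                Arrays.asList("Andrew", "Joe", "Matt", "Rachel", "Robin", "Jack");

        // request-response: one request in, one response out
        @MessageMapping("customer")
        CustomerResponse getCustomer(CustomerRequest customerRequest) {
            return new CustomerResponse(customerRequest.getId(), getRandomName());
        }

        // request-stream: one request in, one response per requested id, emitted every 500 ms
        @MessageMapping("customer-stream")
        Flux<CustomerResponse> getCustomers(MultipleCustomersRequest multipleCustomersRequest) {
            return Flux.range(0, multipleCustomersRequest.getIds().size())
                    .delayElements(Duration.ofMillis(500))
                    .map(i -> new CustomerResponse(multipleCustomersRequest.getIds().get(i), getRandomName()));
        }

        // channel: a stream of requests in, one response emitted for each incoming request
        @MessageMapping("customer-channel")
        Flux<CustomerResponse> getCustomersChannel(Flux<CustomerRequest> requests) {
            return Flux.from(requests)
                    .doOnNext(message -> log.info("Received 'customerChannel' request [{}]", message))
                    .map(message -> new CustomerResponse(message.getId(), getRandomName()));
        }

        private String getRandomName() {
            // nextInt(bound) excludes the bound, so passing size() makes every name reachable
            return RANDOM_NAMES.get(ThreadLocalRandom.current().nextInt(RANDOM_NAMES.size()));
        }
    }
}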
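Conceptually, each @MessageMapping annotation associates a route name with a handler method, and the shape of the handler's result (a single value or a stream) reflects the interaction model. The JDK-only sketch below illustrates just that lookup idea under simplifying assumptions: the RouteTable and RoutingDemo classes are hypothetical, the DTOs are simplified stand-ins for the article's classes, and a List replaces Reactor's Flux. It is not how Spring implements message mapping internally.

```java
import java.util.HashMap;
import java.util.List;
import java.util.Map;
import java.util.function.Function;
import java.util.stream.Collectors;

// Hypothetical, simplified stand-ins for the article's request/response DTOs.
final class CustomerRequest {
    final String id;
    CustomerRequest(String id) { this.id = id; }
}

final class MultipleCustomersRequest {
    final List<String> ids;
    MultipleCustomersRequest(List<String> ids) { this.ids = ids; }
}

final class CustomerResponse {
    final String id;
    final String name;
    CustomerResponse(String id, String name) { this.id = id; this.name = name; }
}

// Hypothetical route table: associates a route name with a handler, similar in
// spirit to @MessageMapping("customer"). This is NOT Spring's implementation.
final class RouteTable {
    private final Map<String, Function<Object, Object>> handlers = new HashMap<>();

    <T> void register(String route, Class<T> payloadType, Function<T, ?> handler) {
        // Cast the payload to the declared type, loosely mirroring how a framework
        // converts the incoming payload into the handler's argument type.
        handlers.put(route, payload -> handler.apply(payloadType.cast(payload)));
    }

    Object dispatch(String route, Object payload) {
        Function<Object, Object> handler = handlers.get(route);
        if (handler == null) {
            throw new IllegalArgumentException("No handler for route: " + route);
        }
        return handler.apply(payload);
    }
}

class RoutingDemo {
    static RouteTable customerRoutes() {
        RouteTable routes = new RouteTable();
        // Request-response: one request in, exactly one response out.
        routes.register("customer", CustomerRequest.class,
                req -> new CustomerResponse(req.id, "Andrew"));
        // Request-stream stand-in: one request in, many responses out
        // (a List is used here instead of a Reactor Flux).
        routes.register("customer-stream", MultipleCustomersRequest.class,
                req -> req.ids.stream()
                        .map(id -> new CustomerResponse(id, "Joe"))
                        .collect(Collectors.toList()));
        return routes;
    }

    public static void main(String[] args) {
        RouteTable routes = customerRoutes();
        CustomerResponse single =
                (CustomerResponse) routes.dispatch("customer", new CustomerRequest("1"));
        System.out.println(single.id + " -> " + single.name); // prints "1 -> Andrew"
    }
}
```

Unlike this eager sketch, the real request-stream handler returns a Flux, so the responses are emitted over time (every 500 ms in the example above) rather than all at once.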

Please note that the examples in this section are based on the Spring Boot RSocket starter 2.2.0.M4, which is not an official release yet, so the API may change.

It is worth noting that Spring Boot automatically detects the RSocket library on the classpath and spins up the server. All we need to do is specify the port:

spring:
  rsocket:
    server:
      port: 7000

These few lines of code and configuration set up a fully operational responder with message mapping (the code is available here).
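Spring Boot's auto-configuration decides whether to start the RSocket server based on, among other conditions, whether the RSocket classes are present on the classpath, which it declares with annotations such as @ConditionalOnClass. The JDK-only sketch below illustrates only the class-presence check itself; the ClasspathCheck class is hypothetical and is not Spring Boot code.

```java
// Hypothetical helper illustrating a class-presence check. Spring Boot's real
// auto-configuration expresses this declaratively (e.g. with @ConditionalOnClass).
final class ClasspathCheck {

    static boolean isPresent(String className) {
        try {
            // initialize = false: only check that the class can be loaded,
            // without running its static initializers.
            Class.forName(className, false, ClasspathCheck.class.getClassLoader());
            return true;
        } catch (ClassNotFoundException | LinkageError e) {
            return false;
        }
    }

    public static void main(String[] args) {
        // "io.rsocket.RSocket" is the core interface of RSocket Java. In this sketch,
        // a missing class simply means "do not start a server".
        boolean startServer = isPresent("io.rsocket.RSocket");
        System.out.println("Start RSocket server? " + startServer);
    }
}
```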

Let’s take a look at the requester side. Here we implement CustomerServiceAdapter, which is responsible for communication with the responder. It uses an RSocketRequester bean that wraps the RSocket instance, the data MIME type, and the encoding/decoding details encapsulated in the RSocketStrategies object. RSocketRequester routes the messages and handles serialization and deserialization of the data in a reactive manner. All we need to provide is the route, the data, and how we would like to consume the messages from the responder: as a single object (Mono) or as a stream (Flux).

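import java.util.List;

import io.rsocket.RSocket;
import io.rsocket.RSocketFactory;
import io.rsocket.frame.decoder.PayloadDecoder;
import io.rsocket.transport.netty.client.TcpClientTransport;
import lombok.extern.slf4j.Slf4j;
import org.springframework.boot.SpringApplication;
import org.springframework.boot.autoconfigure.SpringBootApplication;
import org.springframework.context.annotation.Bean;
import org.springframework.messaging.rsocket.RSocketRequester;
import org.springframework.messaging.rsocket.RSocketStrategies;
import org.springframework.stereotype.Component;
import org.springframework.util.MimeTypeUtils;
import reactor.core.publisher.Flux;
import reactor.core.publisher.Mono;

@Slf4j
@SpringBootApplication
public class RSocketRequesterApplication {

    public static void main(String[] args) {
        SpringApplication.run(RSocketRequesterApplication.class, args);
    }

    // Connects to the responder on localhost:7000 (JSON data) and blocks until the connection is ready
    @Bean
    RSocket rSocket() {
        return RSocketFactory
                .connect()
                .frameDecoder(PayloadDecoder.ZERO_COPY)
                .dataMimeType(MimeTypeUtils.APPLICATION_JSON_VALUE)
                .transport(TcpClientTransport.create(7000))
                .start()
                .block();
    }

    // Adds routing and JSON encoding/decoding (via RSocketStrategies) on top of the raw RSocket
    @Bean
    RSocketRequester rSocketRequester(RSocket rSocket, RSocketStrategies rSocketStrategies) {
        return RSocketRequester.wrap(rSocket, MimeTypeUtils.APPLICATION_JSON, rSocketStrategies);
    }

    // static: component scanning only picks up top-level or static nested classes
    @Component
    static class CustomerServiceAdapter {

        private final RSocketRequester rSocketRequester;

        CustomerServiceAdapter(RSocketRequester rSocketRequester) {
            this.rSocketRequester = rSocketRequester;
        }

        // request-response: a single CustomerResponse
        Mono<CustomerResponse> getCustomer(String id) {
            return rSocketRequester
                    .route("customer")
                    .data(new CustomerRequest(id))
                    .retrieveMono(CustomerResponse.class)
                    .doOnNext(customerResponse -> log.info("Received customer as mono [{}]", customerResponse));
        }

        // request-stream: one request, a stream of responses
        Flux<CustomerResponse> getCustomers(List<String> ids) {
            return rSocketRequester
                    .route("customer-stream")
                    .data(new MultipleCustomersRequest(ids))
                    .retrieveFlux(CustomerResponse.class)
                    .doOnNext(customerResponse -> log.info("Received customer as flux [{}]", customerResponse));
        }

        // channel: a stream of requests, a stream of responses
        Flux<CustomerResponse> getCustomerChannel(Flux<CustomerRequest> customerRequestFlux) {
            return rSocketRequester
                    .route("customer-channel")
                    .data(customerRequestFlux, CustomerRequest.class)
                    .retrieveFlux(CustomerResponse.class)
                    .doOnNext(customerResponse -> log.info("Received customer as flux [{}]", customerResponse));
        }
    }
}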
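The channel interaction differs from request-stream in that the requester also sends a stream: each request that reaches the responder's customer-channel handler produces one response as it arrives. The sketch below mimics only that per-request mapping, using the JDK's java.util.concurrent.SubmissionPublisher in place of Reactor's Flux; it has no networking or RSocket framing, and the ChannelSketch class and its handle method are hypothetical.

```java
import java.util.List;
import java.util.concurrent.CompletableFuture;
import java.util.concurrent.CopyOnWriteArrayList;
import java.util.concurrent.SubmissionPublisher;

// Hypothetical JDK-only sketch of the 'customer-channel' mapping: requests flow through a
// SubmissionPublisher (java.util.concurrent.Flow) instead of a Reactor Flux over RSocket.
final class ChannelSketch {

    // Stand-in for the responder's per-message logic: one response for each inbound request id.
    static String handle(String requestId) {
        return "customer-" + requestId;
    }

    // Publishes request ids one at a time and collects the response produced for each of them.
    static List<String> runChannel(List<String> requestIds) {
        List<String> responses = new CopyOnWriteArrayList<>();
        SubmissionPublisher<String> requests = new SubmissionPublisher<>();
        // consume() subscribes right away; the returned future completes after the publisher
        // is closed and every submitted item has been handled.
        CompletableFuture<Void> done = requests.consume(id -> responses.add(handle(id)));
        requestIds.forEach(requests::submit);
        // Closing the publisher signals completion, like the requester ending its request stream.
        requests.close();
        done.join();
        return responses;
    }

    public static void main(String[] args) {
        System.out.println(runChannel(List.of("1", "2", "3"))); // prints [customer-1, customer-2, customer-3]
    }
}
```

In the Spring example, the request stream never completes on its own (it is driven by Flux.interval), so the channel stays open and keeps producing responses until the client disconnects.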

Besides communicating with the responder, the requester exposes a RESTful API with three mappings: /customers/{id}, /customers, and /customers-channel. Here we use Spring WebFlux on top of the HTTP/2 protocol. Please note that the last two mappings produce a text event stream, which means that each value is streamed to the web browser as soon as it becomes available.

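import static java.util.stream.Collectors.toList;

import java.time.Duration;
import java.util.List;
import java.util.UUID;
import java.util.stream.IntStream;

import org.reactivestreams.Publisher;
import org.springframework.http.MediaType;
import org.springframework.web.bind.annotation.GetMapping;
import org.springframework.web.bind.annotation.PathVariable;
import org.springframework.web.bind.annotation.RestController;
import reactor.core.publisher.Flux;
import reactor.core.publisher.Mono;

@RestController
class CustomerController {

    private final CustomerServiceAdapter customerServiceAdapter;

    CustomerController(CustomerServiceAdapter customerServiceAdapter) {
        this.customerServiceAdapter = customerServiceAdapter;
    }

    // Single customer (request-response), returned as JSON
    @GetMapping("/customers/{id}")
    Mono<CustomerResponse> getCustomer(@PathVariable String id) {
        return customerServiceAdapter.getCustomer(id);
    }

    // Ten customers for random ids (request-stream), pushed to the client as server-sent events
    @GetMapping(value = "/customers", produces = MediaType.TEXT_EVENT_STREAM_VALUE)
    Publisher<CustomerResponse> getCustomers() {
        return customerServiceAdapter.getCustomers(getRandomIds(10));
    }

    // A new request every second over the channel; responses are pushed as server-sent events
    @GetMapping(value = "/customers-channel", produces = MediaType.TEXT_EVENT_STREAM_VALUE)
    Publisher<CustomerResponse> getCustomersChannel() {
        return customerServiceAdapter.getCustomerChannel(Flux.interval(Duration.ofMillis(1000))
                .map(tick -> new CustomerRequest(UUID.randomUUID().toString())));
    }

    private List<String> getRandomIds(int amount) {
        return IntStream.range(0, amount)
                .mapToObj(n -> UUID.randomUUID().toString())
                .collect(toList());
    }
}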

To try out the REST endpoints mentioned above, you can use the following curl commands:

curl http://localhost:8080/customers/1

curl http://localhost:8080/customers

curl http://localhost:8080/customers-channel
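The streaming endpoints can also be consumed from Java. The sketch below assumes Java 11+ and the requester running on localhost:8080 as in the curl commands above; the CustomersStreamClient class and its dataPayload helper are hypothetical. It relies on the server-sent events format, in which each event arrives as one or more `data:` lines followed by a blank line.

```java
import java.net.URI;
import java.net.http.HttpClient;
import java.net.http.HttpRequest;
import java.net.http.HttpResponse;
import java.util.Optional;
import java.util.stream.Stream;

// Hypothetical Java 11+ client for the requester's server-sent events endpoint.
final class CustomersStreamClient {

    // Returns the payload of an SSE "data:" line, or empty for any other line
    // (blank event separators, comments, "id:" or "event:" fields).
    static Optional<String> dataPayload(String line) {
        if (!line.startsWith("data:")) {
            return Optional.empty();
        }
        String payload = line.substring("data:".length());
        // The SSE format allows a single optional space after the colon.
        return Optional.of(payload.startsWith(" ") ? payload.substring(1) : payload);
    }

    public static void main(String[] args) throws Exception {
        HttpClient client = HttpClient.newHttpClient();
        HttpRequest request = HttpRequest.newBuilder(URI.create("http://localhost:8080/customers"))
                .header("Accept", "text/event-stream")
                .build();
        // BodyHandlers.ofLines() exposes the body as a Stream<String>, so events can be
        // processed as they arrive instead of after the whole response has been read.
        HttpResponse<Stream<String>> response = client.send(request, HttpResponse.BodyHandlers.ofLines());
        try (Stream<String> lines = response.body()) {
            lines.map(CustomersStreamClient::dataPayload)
                    .flatMap(Optional::stream)
                    .forEach(System.out::println);
        }
    }
}
```

For /customers-channel the same client keeps printing until it is stopped, because that stream has no natural end.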

The requester application code is available here.

The Spring Boot integration and the RPC module are complementary solutions built on top of RSocket. The former is messaging-oriented and provides a convenient message-routing API, whereas the RPC module lets the developer easily control the exposed endpoints and maintain the contract between microservices. Both solutions have their applications and can easily be combined with the low-level RSocket API to fulfill even the most sophisticated requirements in a consistent manner, using a single protocol.

Series summary

This article is the last one in the mini-series about RSocket, the new binary protocol that may revolutionize service-to-service communication in the cloud. Its rich interaction model, performance, and extra features like client load balancing and resumability make it a perfect candidate for almost all business cases. Using the protocol can be simplified with the available abstraction layers, the Spring Boot integration and the RPC module, which address the most typical day-to-day scenarios.

Please note that the protocol is still at a release candidate version (1.0.0-RC2), so it is not recommended for use in a production environment. Still, you should keep an eye on it, as the growing community and the support of big tech companies (e.g. Netflix, Facebook, Alibaba, Netifi) may turn RSocket into the primary communication protocol in the cloud.